In this quick start guide we will walk through the steps of modifying data in a table in the Project Sandbox using Update tasks. These changes can either be made to all records in a table or a subset based on a filtering condition. Any PostgreSQL function can be used when configuring the update statements and conditions of Update tasks.

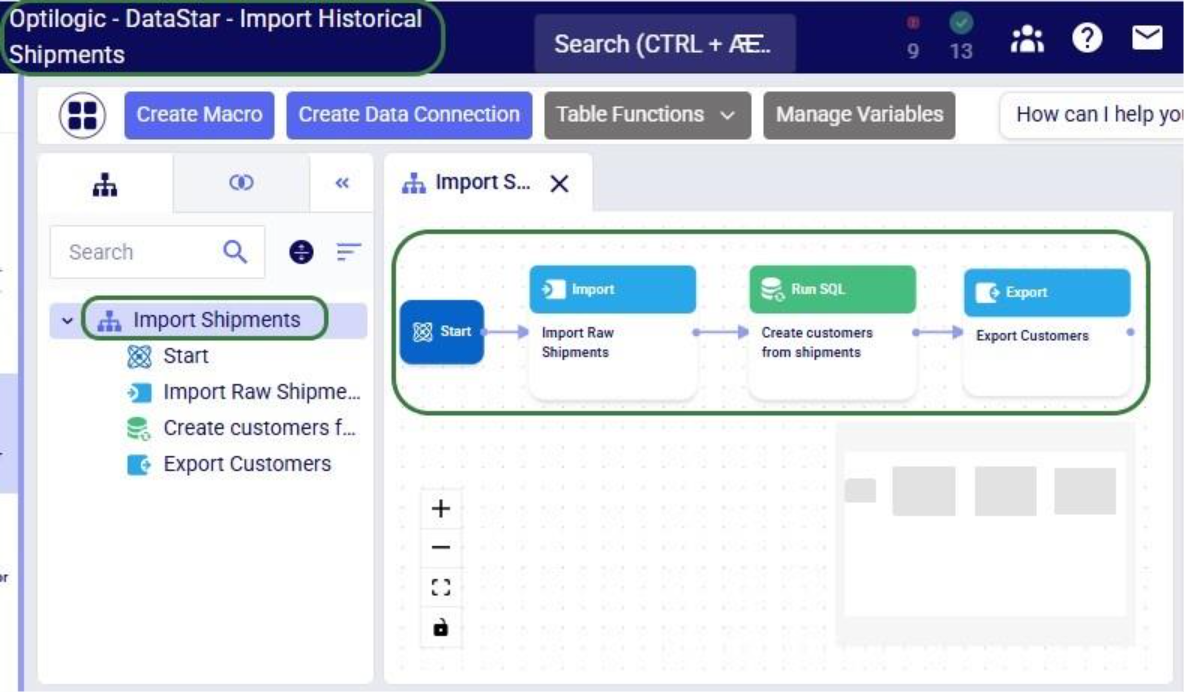

This quick start guide builds upon a previous one where unique customers were created from historical shipments using a Leapfrog-generated Run SQL task. Please follow the steps in that quick start guide first if you want to follow along with the steps in this one. The starting point for this quick start is therefore a project named “Import Historical Shipments”, which contains a macro called Import Shipments. This macro has an Import task and a Run SQL task. The project has a Historical Shipments data connection of type = CSV, and the Project Sandbox contains 2 tables named rawshipments (42,656 records) and customers (1,333 records). Note that if you also followed one of the other quick start guides on exporting data to a Cosmic Frog model (see here), your project will also contain an Export task, and a Cosmic Frog data connection; you can still follow along with this quick start guide too.

The steps we will walk through in this quick start guide are:

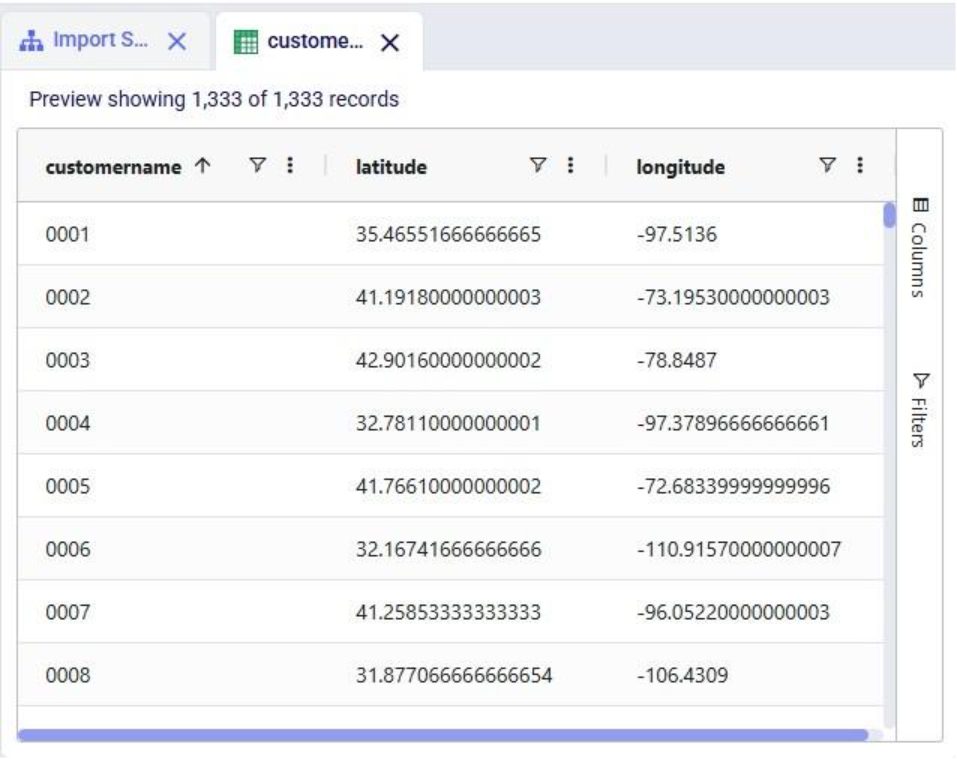

We have a look at the customers table, which was created from the historical shipment data in the previous quick start guides; see the screenshot below. Sorting on the customername column orders the values alphabetically. Because the customer names start with the string “CZ”, the column is of type text, and text values are compared character by character. As a result, the names are not ordered by the number part that follows the “CZ” prefix: a name like CZ10, for example, sorts before CZ2.
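The sorting behavior described above can be reproduced outside DataStar. The following is a minimal Python sketch; the sample names are hypothetical values in the “CZ” plus number format, not records taken from the customers table.

```python
# Hypothetical sample names in the "CZ<number>" format described above.
names = ["CZ2", "CZ10", "CZ1", "CZ103"]

# Text sort: strings are compared character by character,
# so "CZ10" and "CZ103" both come before "CZ2".
text_order = sorted(names)

# Numeric sort: order by the integer that follows the 2-character prefix.
numeric_order = sorted(names, key=lambda name: int(name[2:]))

print(text_order)     # ['CZ1', 'CZ10', 'CZ103', 'CZ2']
print(numeric_order)  # ['CZ1', 'CZ2', 'CZ10', 'CZ103']
```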

If we want sorting customer names alphabetically to give the same order as sorting by the number part of the customer name, each customer name needs to have the same number of digits. We will use Update tasks to change the format of the number part of the customer names so that they are all 4 digits, by adding leading zeroes to those that have fewer than 4 digits. While we are at it, we will also replace the “CZ” prefix with “Cust_” to make the data consistent with other data sources that contain customer names. We will initially break the updates to the customername column up into 3 steps using 3 Update tasks. At the end, we will see how they can be combined into a single Update task. The 3 steps are: (1) remove the “CZ” prefix, (2) add leading zeroes so the number part has 4 digits, and (3) add the “Cust_” prefix.

Let us add the first Update task to our Import Shipments macro:

After dropping the Update task onto the macro canvas, its configuration tab will be opened automatically on the right-hand side:

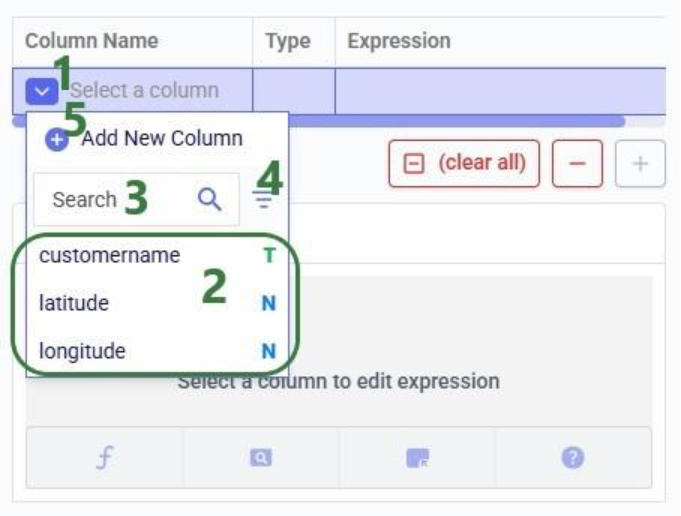

If you have not already, click on the plus button to add your first update statement:

Next, we will write the expression for which we can use the Expression Builder area just below the update statements table. What we type there will also be added to the Expression column of the selected Update Statement. These expressions can use any PostgreSQL function, also those which may not be pre-populated in the helper lists. Please see the PostgreSQL documentation for all available functions.

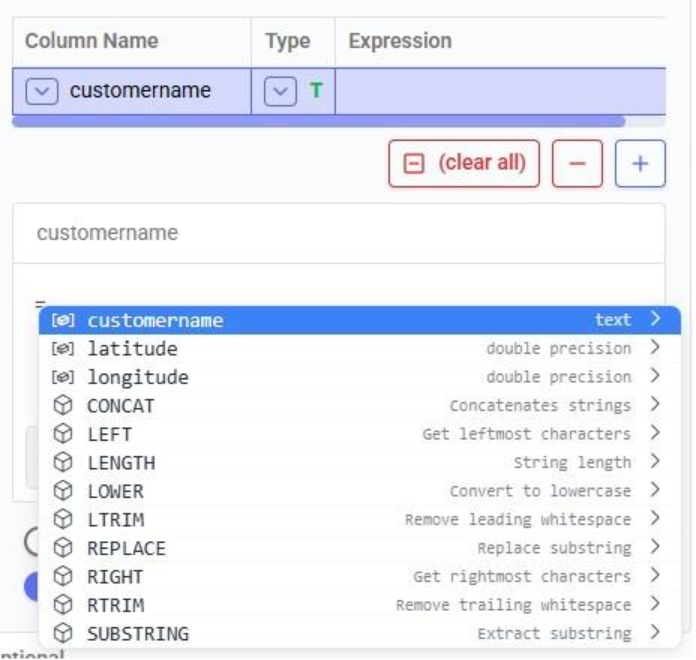

When clicking in the Expression Builder, an equal sign is already there, and a list of items comes up. At the top are the columns that are present in the target table, and below those is a list of functions which we can select to use. Here, the functions shown are string functions, since we are working on a text type column. When working on a column with a different data type, the functions relevant to that data type will be shown instead. We will select the last option shown in the screenshot, the substring function, since we want to first remove the “CZ” from the start of the customer names:

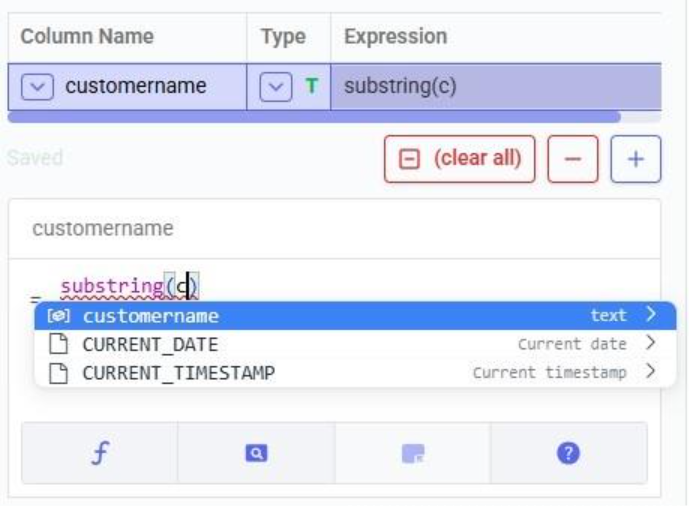

The substring function needs at least 2 arguments, which are specified in the parentheses. The first argument needs to be the customername column in our case, since that is the column containing the string values we want to manipulate. After typing a “c”, the customername column and 2 functions starting with “c” are suggested in the pop-up list. We choose the customername column. The second argument specifies the position at which the substring starts. Since we want to remove the “CZ”, we specify 3 as the start position, leaving characters number 1 and 2 off. The third argument is optional; in PostgreSQL it specifies the number of characters to include in the substring. We do not specify it, meaning we want to keep all characters starting from character number 3:

We can run this task now without specifying a Condition (see section further below) in which case the expression will be applied to all records in the customers table. After running the task, we open the customers table to see the result:

We see that our intended change was made. The “CZ” is removed from the customer names. Sorted alphabetically, they still are not in increasing order of the number part of their name. Next, we use the lpad (left pad) function to add leading zeroes so all customer names consist of 4 digits. This function has 3 arguments: the string to apply the left padding to (the customername column), the number of characters the final string needs to have (4), and the padding character (‘0’).

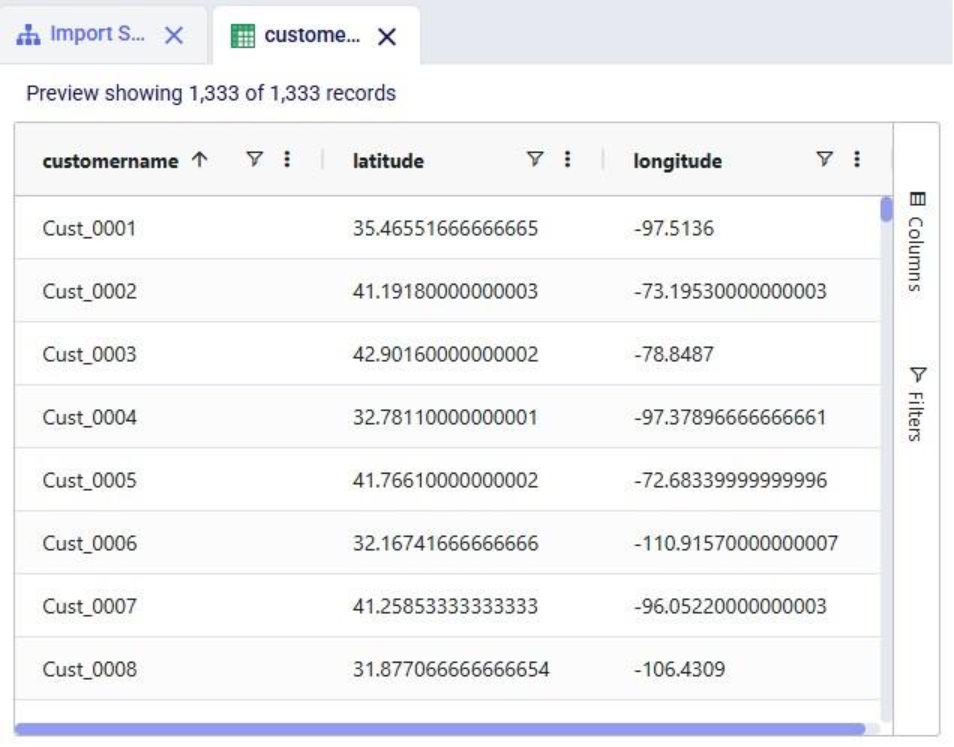

After running this task, the customername column values are as follows:

Now with the leading zeroes and all customer names being 4 characters long, sorting alphabetically results in the same order as sorting by the number part of the customer name.

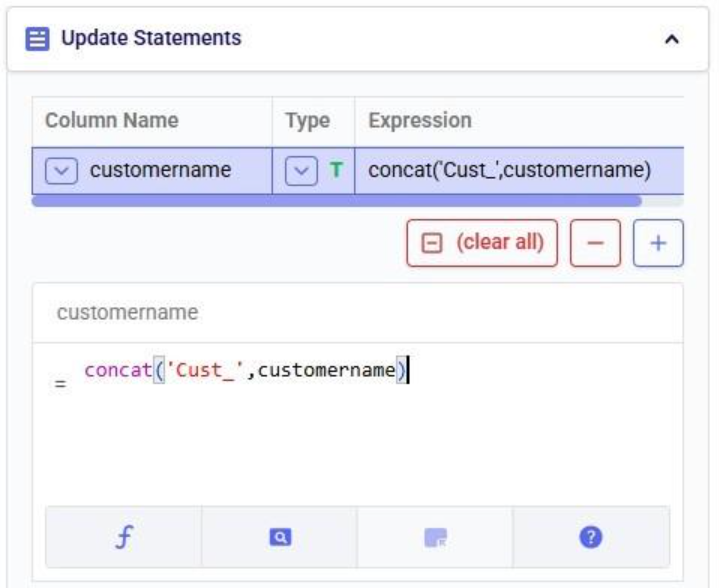

Finally, we want to add the prefix “Cust_”. We use the concat (concatenation) function for this. At first, we type Cust_ with double quotes around it, but the squiggly red line below the expression in the expression builder indicates this is not the right syntax. Hovering over the expression in the expression builder explains the problem:

The correct syntax for using strings in these functions is to use single quotes:

Instead of concat we can also use “= ‘Cust_’ || customername” as the expression. The double pipe symbol is used in PostgreSQL as the concatenation operator.

Running this third update task results in the following customer names in the customers table:

Our goal of how we wanted to update the customername column has been achieved. Our macro now looks as follows with the 3 Update tasks added:

The 3 tasks described above can be combined into 1 Update task by nesting the expressions as follows:

Running this task instead of the 3 above will result in the same changes to the customername column in the customers table.
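To make the nesting concrete, here is a minimal Python sketch that mimics the three PostgreSQL functions used above and chains them in the same order. One nesting that is consistent with the three steps is `concat('Cust_', lpad(substring(customername, 3), 4, '0'))`; this is an assumption, and the exact expression in the original screenshot may be written differently. The helper functions below are illustrative stand-ins, not part of DataStar or PostgreSQL.

```python
def substring(text: str, start: int) -> str:
    """Mimic substring(text, start): keep everything from 1-based position `start`."""
    return text[start - 1:]


def lpad(text: str, length: int, fill: str) -> str:
    """Mimic lpad(text, length, fill) for a single-character fill.

    Like the PostgreSQL function, the result is truncated to `length`
    when the input is already longer than that.
    """
    if len(text) >= length:
        return text[:length]
    return text.rjust(length, fill)


def concat(*parts: str) -> str:
    """Mimic concat(...): join all arguments into one string."""
    return "".join(parts)


def rename_customer(customername: str) -> str:
    """Steps 1-3 combined: strip 'CZ', pad to 4 digits, add the 'Cust_' prefix."""
    return concat("Cust_", lpad(substring(customername, 3), 4, "0"))


# Hypothetical sample names; after renaming, text order equals numeric order.
renamed = sorted(rename_customer(n) for n in ["CZ2", "CZ10", "CZ1", "CZ103"])
print(renamed)  # ['Cust_0001', 'Cust_0002', 'Cust_0010', 'Cust_0103']
```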

Please note that in the above we only specified one update statement in each Update task. You can add more than one update statement per update task, in which case:

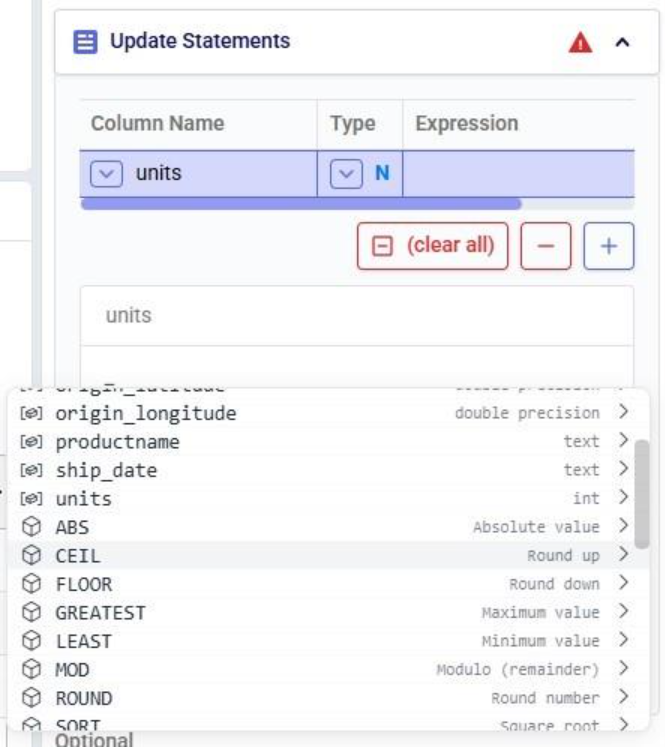

As mentioned above, the list of suggested functions is different depending on the data type of the column being updated. This screenshot shows part of the suggested functions for a number column:

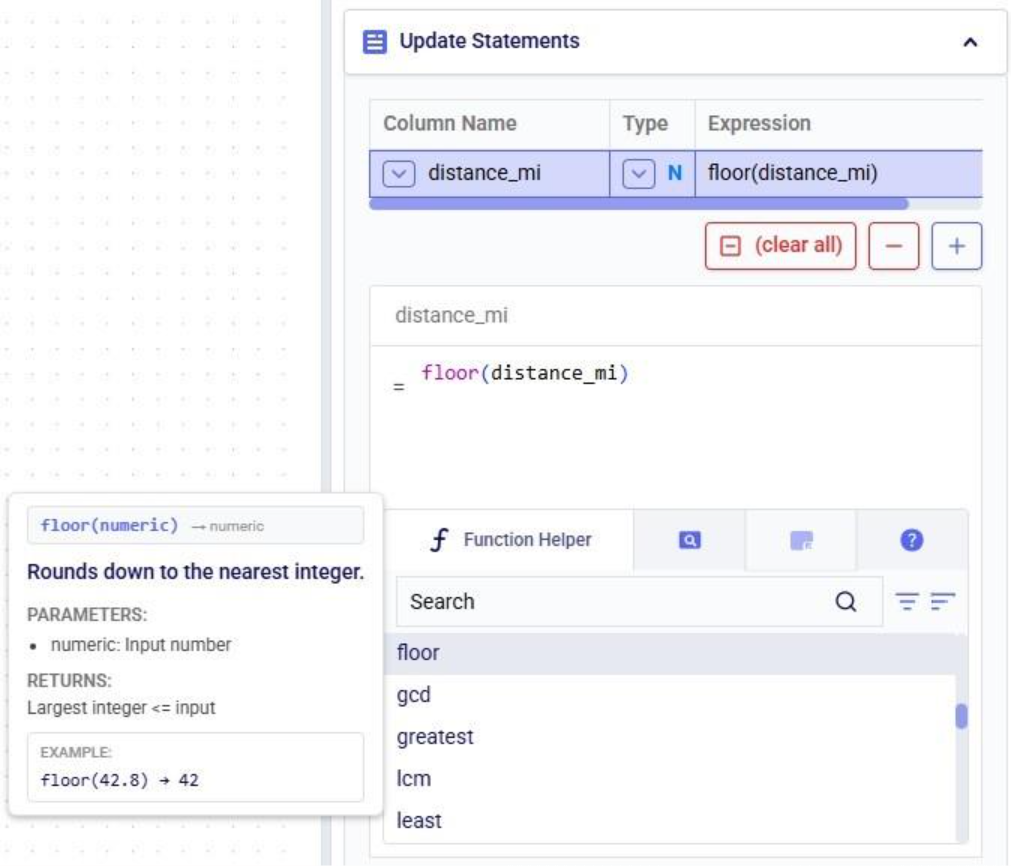

At the bottom of the Expression Builder are multiple helper tabs to facilitate quickly building your desired expressions. The first one is the Function Helper, which lists the available functions. The functions are listed by category: string, numeric, date, aggregate, and conditional. At the top of the list, users have search, filter, and sort options available to quickly find a function of interest. Hovering over a function in the list will bring up details of the function, from top to bottom: a summary of the format and input and output data types of the function, a description of what the function does, its input parameter(s), what it returns, and an example:

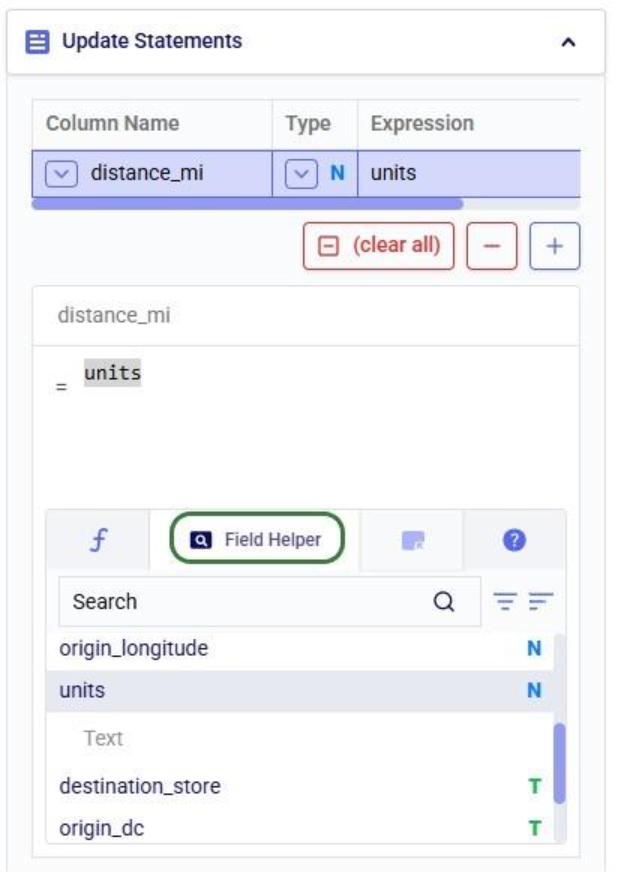

The next helper tab contains the Field Helper. This lists all the columns of the target table, sorted by their data type. Again, to quickly find the desired field, users can search, filter, and sort the list using the options at the top of the list:

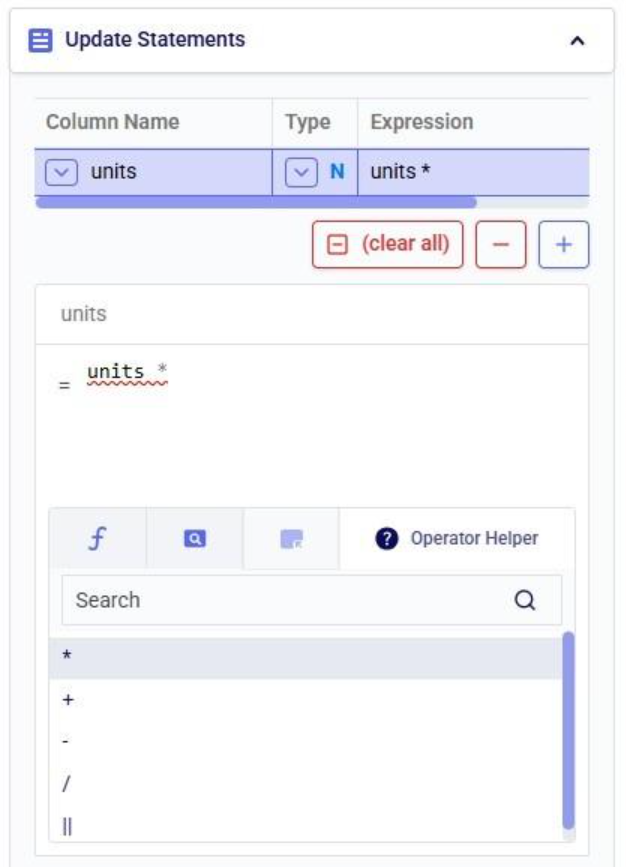

The fourth tab is the Operator Helper, which lists several helpful numerical and string operators. This list can be searched too using the Search box at the top of the list:

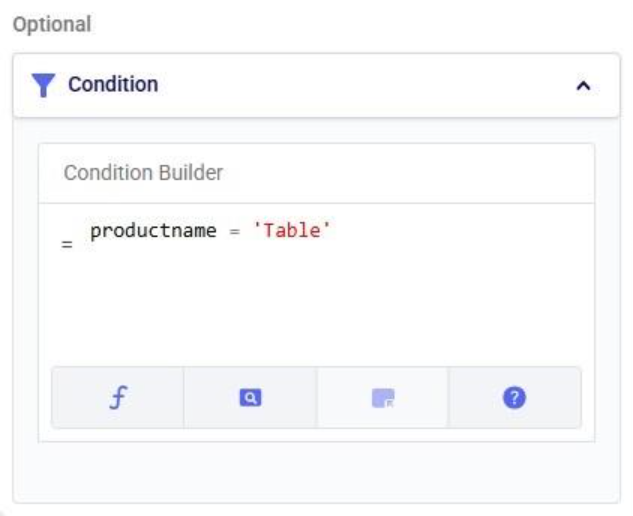

There is another optional configuration section for Update tasks, the Condition section. In here, users can specify an expression to filter the target table on before applying the update(s) specified in the Update Statements section. This way, the updates are only applied to the subset of records that match the condition.

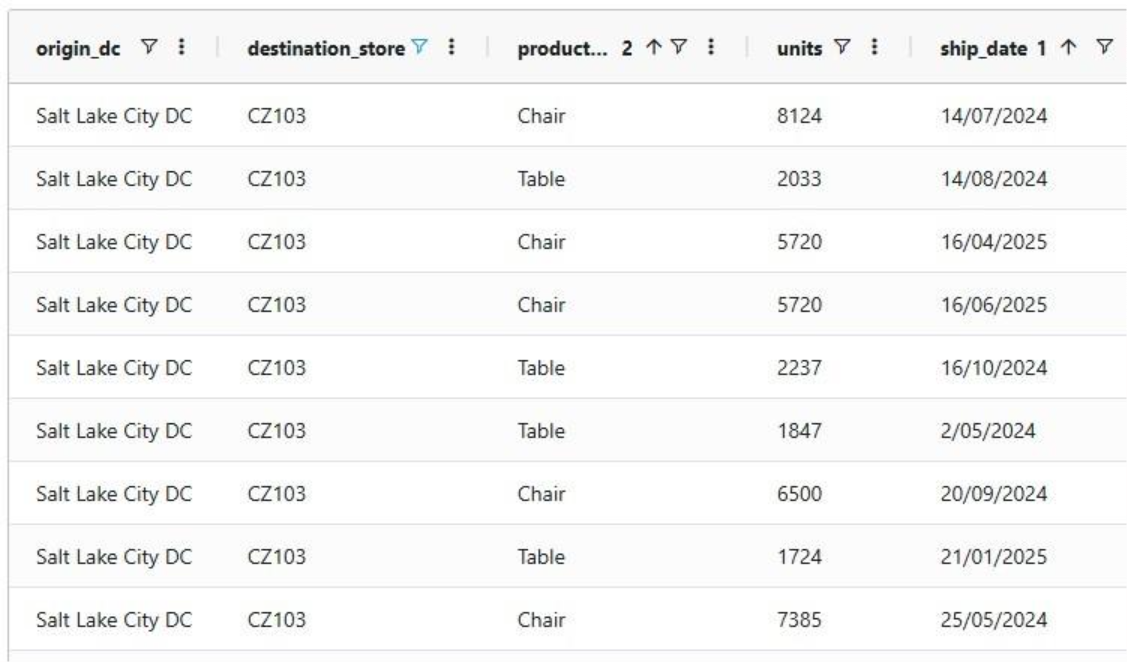

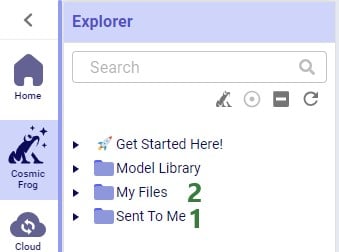

In this example, we will look at some records of the rawshipments table in the Project Sandbox of the same project (“Import Historical Shipments”). We have opened this table in a grid and filtered for origin_dc Salt Lake City DC and destination_store CZ103.

What we want to do is update the “units” column and increase the values by 50% for the Table product. The Update Statements section shows that we set the units field to its current value multiplied by 1.5, which will achieve the 50% increase:

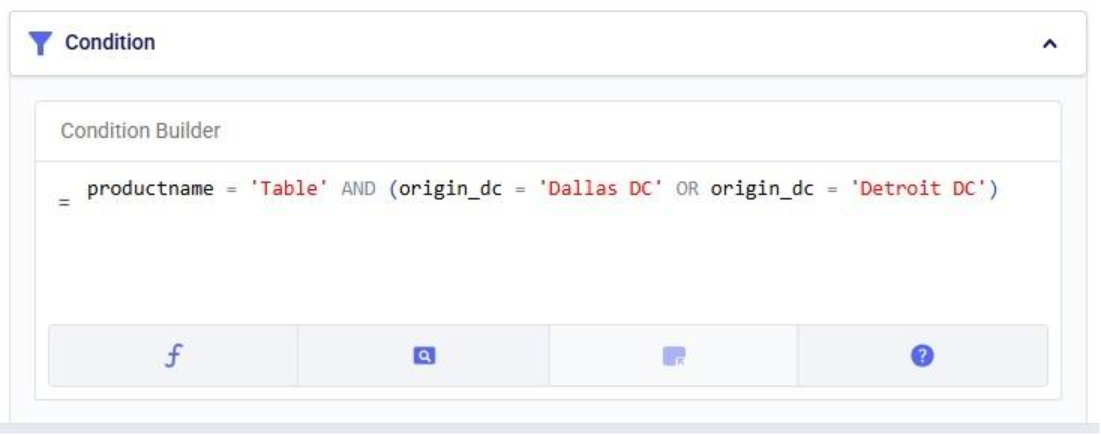

However, if we run the Update task as is, all values in the units field will be increased by 50%, for both the Table and the Chair product. To make sure we only apply this increase to the Table product, we configure the Condition section as follows:

The condition builder has the same function, field, and operator helper tabs at the bottom as the expression builder in the update statements section to enable users to quickly build their conditions. Building conditions works in the same way as building expressions.

Running the task and checking the updated rawshipments table for the same subset of records as we saw above, we can check that it worked as intended. The values in the units column for the Table records are indeed 1.5 times their original value, while the Chair units are unchanged.
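The effect of combining an update statement with a condition can be sketched with a few toy rows. The example below uses Python's built-in sqlite3 module instead of the PostgreSQL-based Project Sandbox, since this particular UPDATE with a WHERE clause reads the same in both. The column name product and the sample values are assumptions for illustration; the units, origin_dc, and destination_store names come from the text above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE rawshipments "
    "(origin_dc TEXT, destination_store TEXT, product TEXT, units REAL)"
)
# Toy records; 'product' is an assumed column name.
conn.executemany(
    "INSERT INTO rawshipments VALUES (?, ?, ?, ?)",
    [
        ("Salt Lake City DC", "CZ103", "Table", 10.0),
        ("Salt Lake City DC", "CZ103", "Chair", 20.0),
        ("Salt Lake City DC", "CZ103", "Table", 4.0),
    ],
)

# Update statement: units = units * 1.5
# Condition:        product = 'Table'
conn.execute("UPDATE rawshipments SET units = units * 1.5 WHERE product = 'Table'")

result = conn.execute(
    "SELECT product, units FROM rawshipments ORDER BY rowid"
).fetchall()
print(result)  # [('Table', 15.0), ('Chair', 20.0), ('Table', 6.0)]
conn.close()
```

Leaving out the WHERE clause corresponds to running the Update task without a Condition, in which case the Chair row would be multiplied by 1.5 as well.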

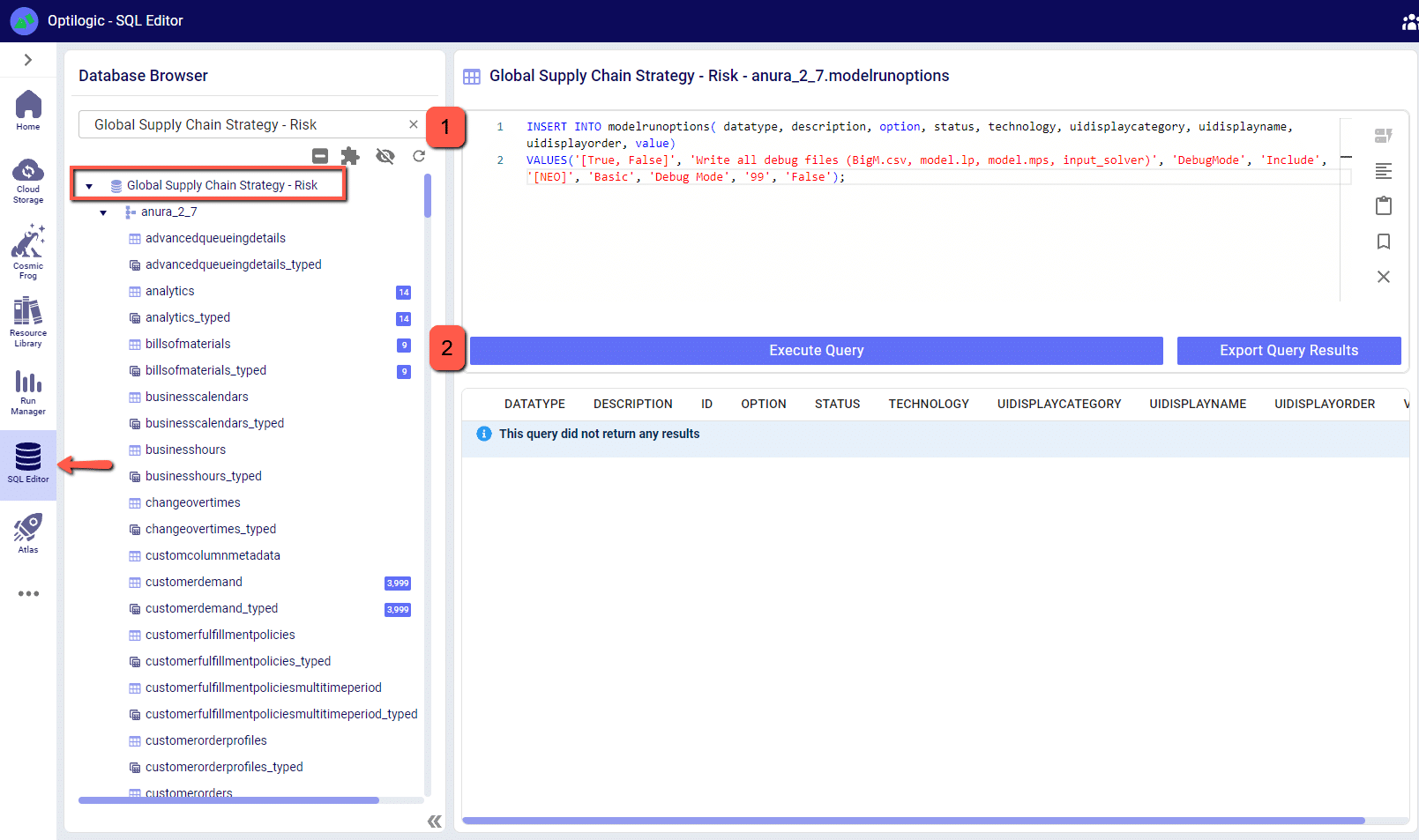

It is important to note that opening tables in DataStar currently shows a preview of 10,000 records. When filtering a table by clicking on the filter icons to the right of a column name, only the resulting subset of records from those first 10,000 records will be included. While an Update task will be applied to all records in a table, due to this limit on the number of records in the preview you may not always be able to see (all) results of your Update task in the grid. In addition, an Update task can also change the order of the records in the table. This can lead to a filter showing a different set of records after running an update task as compared to the filtered subset that was shown prior to running it. Users can use the SQL Editor application on the Optilogic platform to see the full set of records for any tables.
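The preview limit can be illustrated with a small Python sketch. It assumes the preview is simply the first 10,000 records in the table's current order, as described above; the table contents are made up.

```python
PREVIEW_LIMIT = 10_000  # preview size stated above

# Hypothetical table of 25,000 rows; every 5th row is a "Table" record.
table = [
    {"id": i, "product": "Table" if i % 5 == 0 else "Chair"}
    for i in range(25_000)
]

# The grid only holds the first PREVIEW_LIMIT rows, so a grid filter
# can only ever return matches from that slice.
preview = table[:PREVIEW_LIMIT]
matches_in_preview = [row for row in preview if row["product"] == "Table"]
matches_in_table = [row for row in table if row["product"] == "Table"]

print(len(matches_in_preview), len(matches_in_table))  # 2000 5000
```

In this toy case the grid filter would show 2,000 matching records even though an update on the full table touches 5,000.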

Finally, if you want to apply multiple conditions you can use logical AND and OR statements to combine them in the Expression Builder. You would for example specify the condition as follows if you want to increase the units for the Table product by 50% only for the records where the origin_dc value is either “Dallas DC” or “Detroit DC”:
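One way to write such a combined condition is `product = 'Table' AND (origin_dc = 'Dallas DC' OR origin_dc = 'Detroit DC')`. This is a sketch rather than the exact text of the original screenshot, and product is an assumed column name. The parentheses matter because AND binds more tightly than OR. The Python example below uses the built-in sqlite3 module on toy data to show the difference; the operator precedence is the same in PostgreSQL.

```python
import sqlite3

# Toy records: (origin_dc, product, units). 'product' is an assumed column name.
ROWS = [
    ("Dallas DC", "Table", 10.0),
    ("Detroit DC", "Table", 8.0),
    ("Detroit DC", "Chair", 4.0),
    ("Salt Lake City DC", "Table", 6.0),
]


def units_after_update(condition: str) -> list[float]:
    """Apply units = units * 1.5 to rows matching `condition`; return all units."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE rawshipments (origin_dc TEXT, product TEXT, units REAL)")
    conn.executemany("INSERT INTO rawshipments VALUES (?, ?, ?)", ROWS)
    conn.execute(f"UPDATE rawshipments SET units = units * 1.5 WHERE {condition}")
    units = [row[0] for row in conn.execute("SELECT units FROM rawshipments ORDER BY rowid")]
    conn.close()
    return units


# With parentheses: only Table rows from Dallas DC or Detroit DC change.
grouped = units_after_update(
    "product = 'Table' AND (origin_dc = 'Dallas DC' OR origin_dc = 'Detroit DC')"
)
# Without parentheses: every Detroit DC row matches, including the Chair row.
ungrouped = units_after_update(
    "product = 'Table' AND origin_dc = 'Dallas DC' OR origin_dc = 'Detroit DC'"
)

print(grouped)    # [15.0, 12.0, 4.0, 6.0]
print(ungrouped)  # [15.0, 12.0, 6.0, 6.0]
```

An equivalent and often more readable form of the grouped condition is `product = 'Table' AND origin_dc IN ('Dallas DC', 'Detroit DC')`.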

In this quick start guide we will show how users can seamlessly go from using the Resource Library, Cosmic Frog and DataStar applications on the Optilogic platform to creating visualizations in Power BI. The example covers cost to serve analysis using a global sourcing model. We will run 2 scenarios in this Cosmic Frog model with the goal to visualize the total cost difference between the scenarios by customer on a map. We do this by coloring the customers based on the cost difference.

The steps we will walk through are:

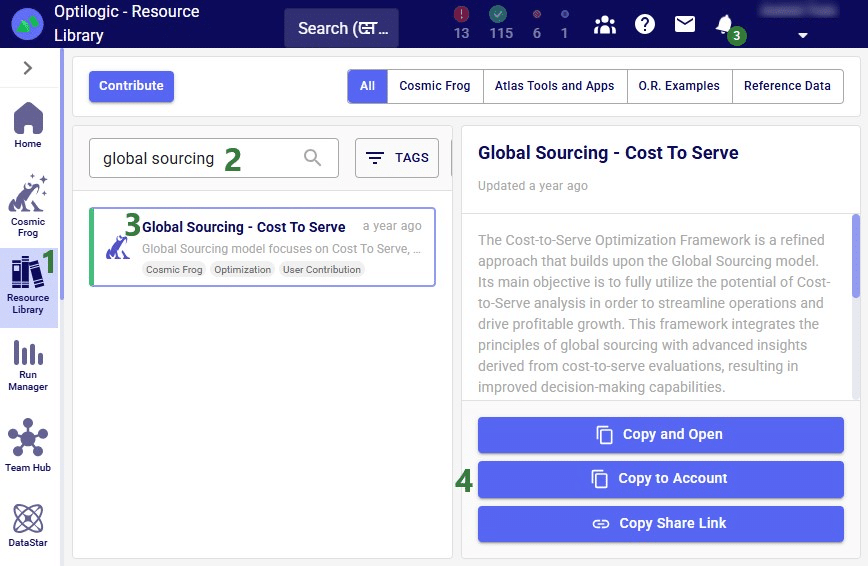

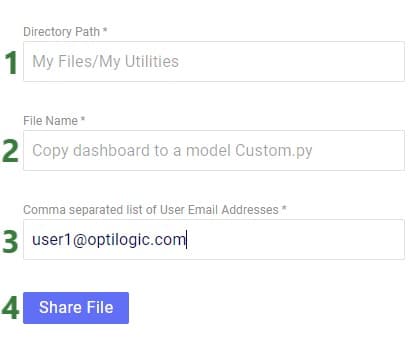

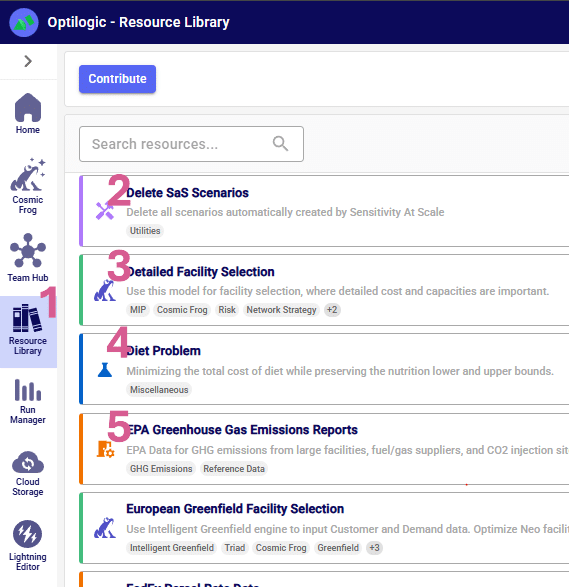

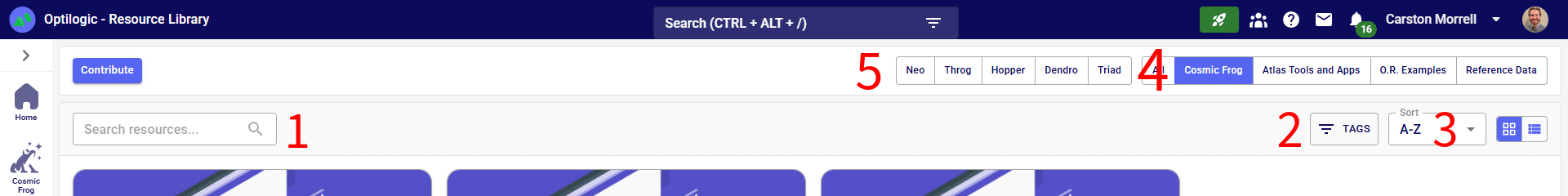

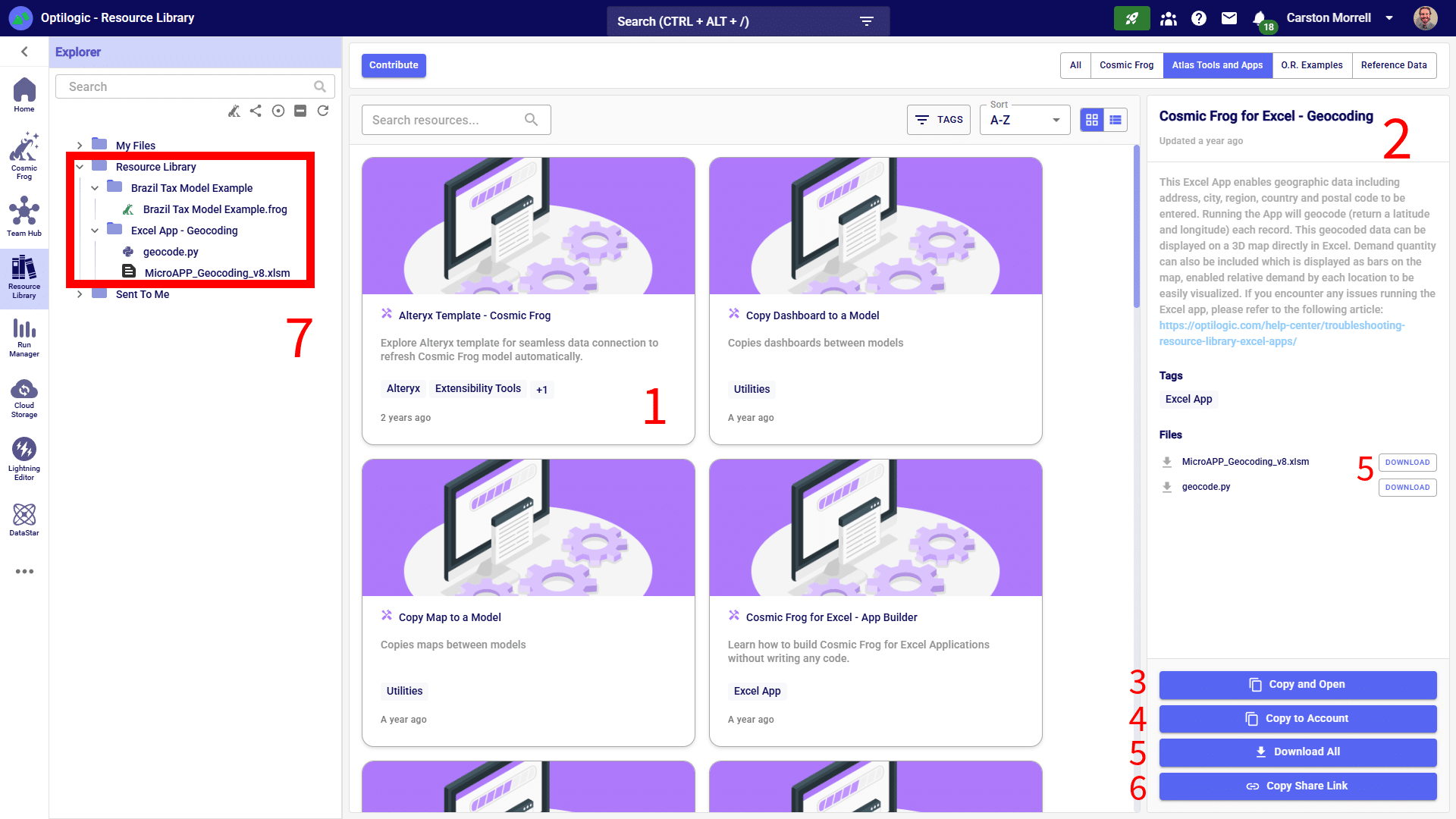

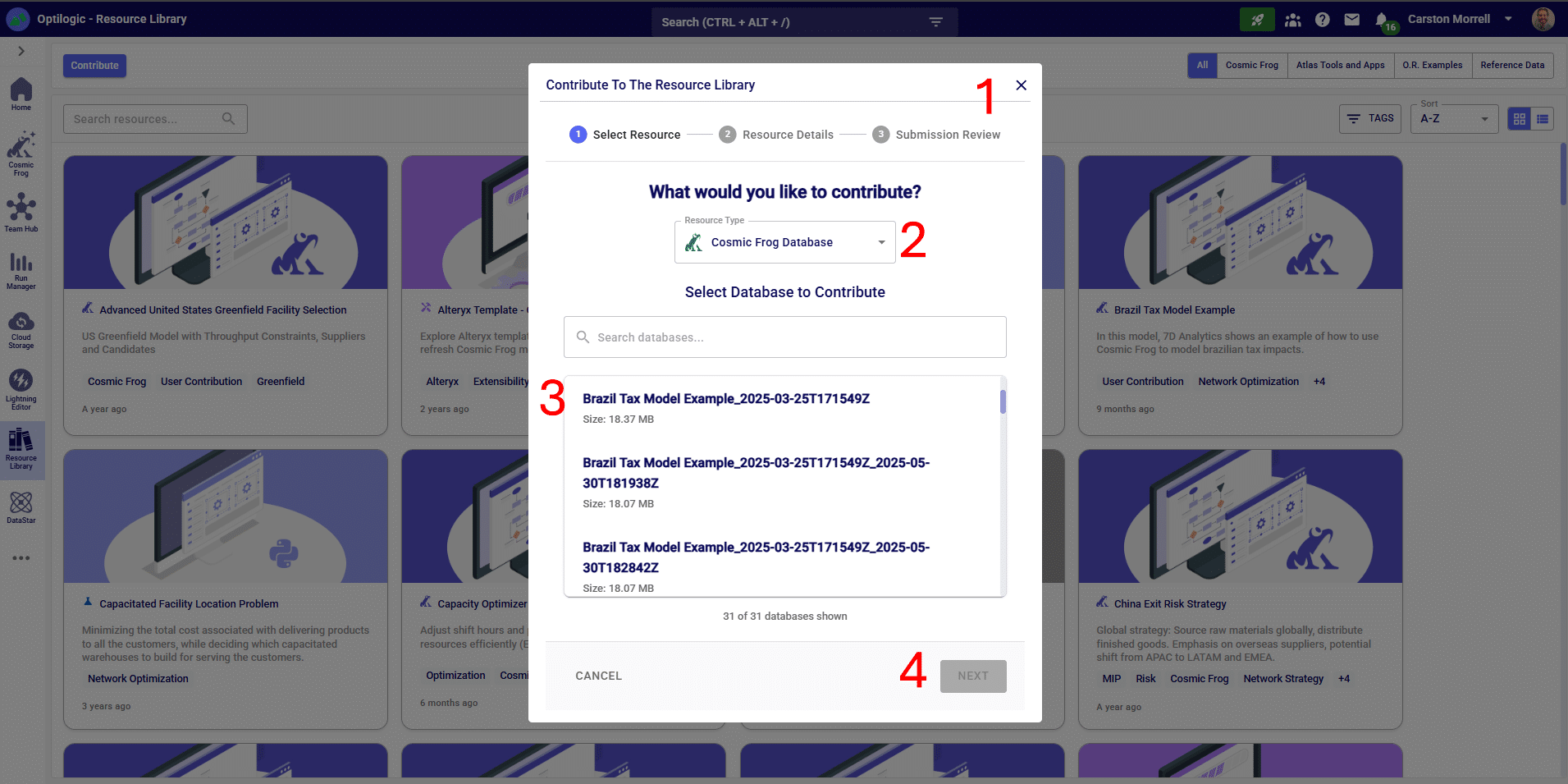

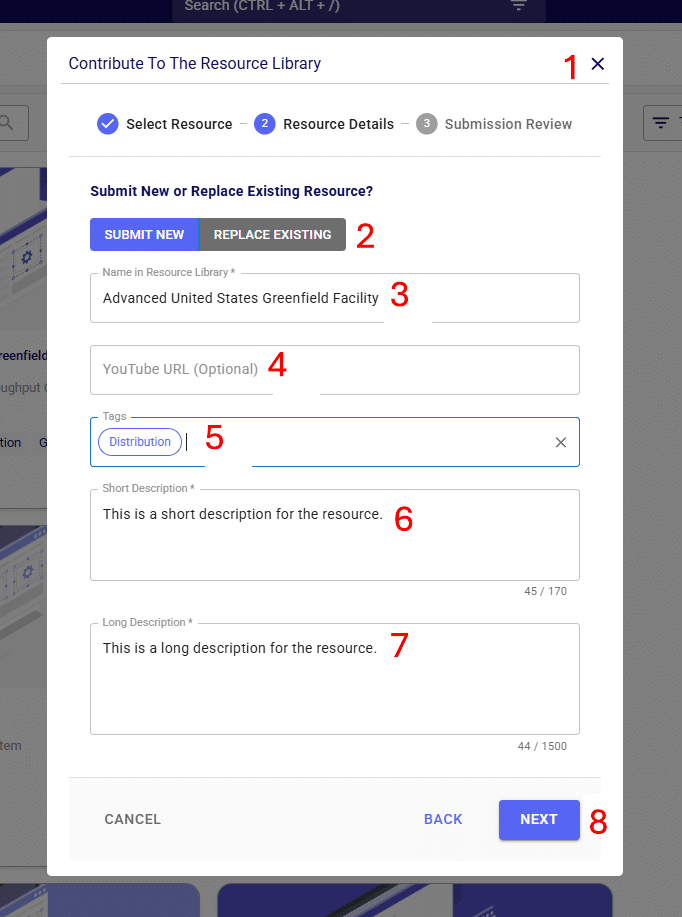

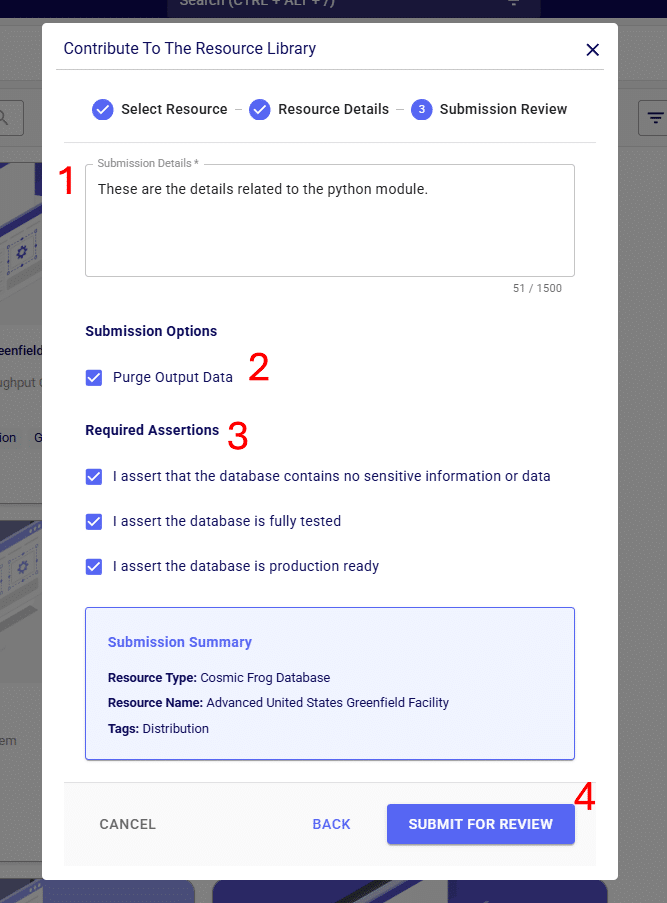

We will first copy the model named “Global Sourcing – Cost to Serve” from the Resource Library to our Optilogic account (learn more about the Resource Library in this help center article):

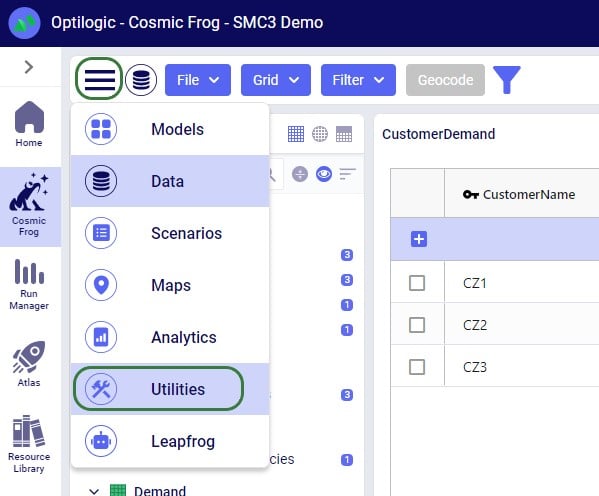

On the Optilogic platform, go to the Resource Library application by clicking on its icon in the list of applications on the left-hand side; note that you may need to scroll down. Should you not see the Resource Library icon here, then click on the icon with 3 horizontal dots which will then show all applications that were previously hidden too.

Now that the model is in the user’s account, it can be opened in the Cosmic Frog application:

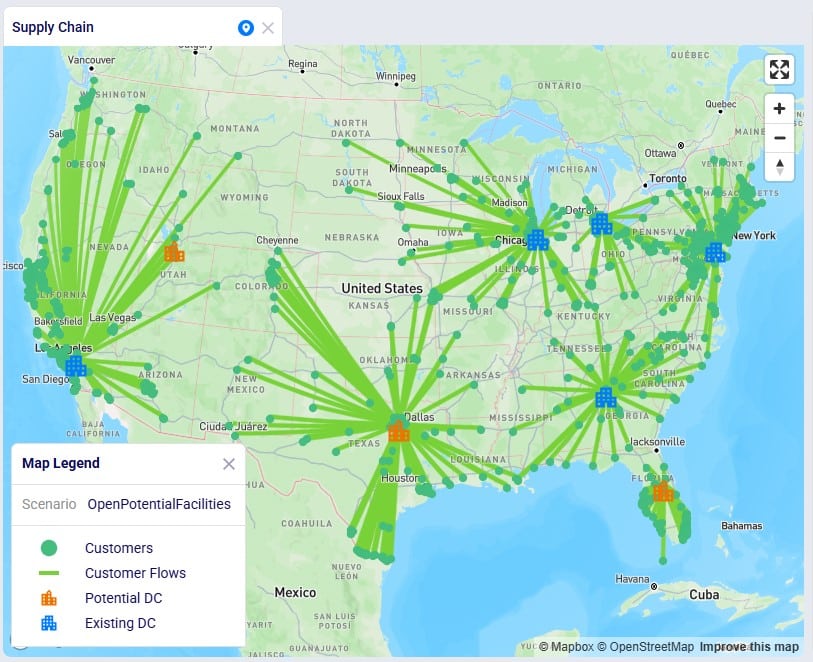

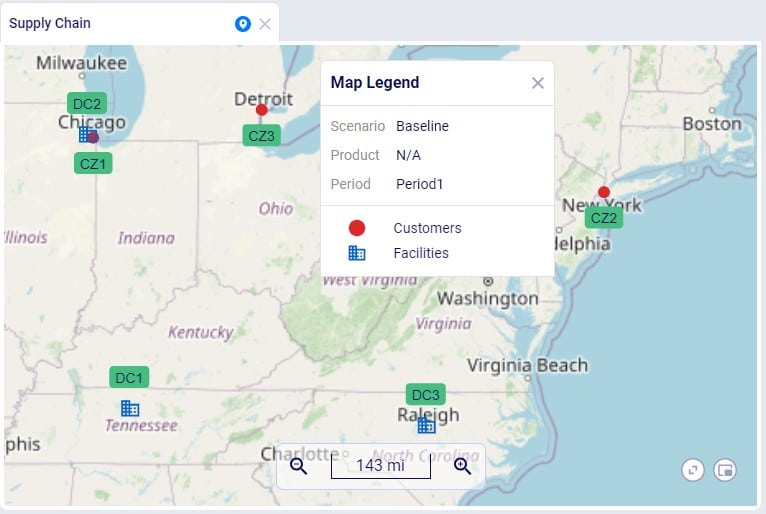

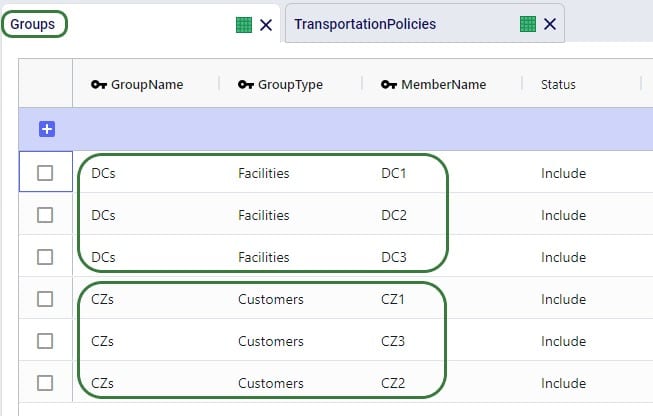

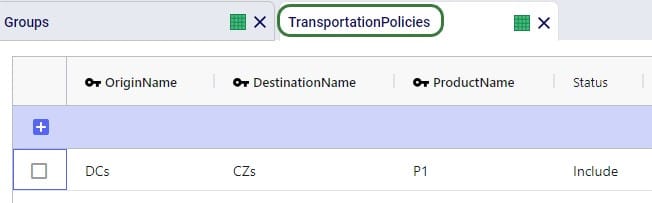

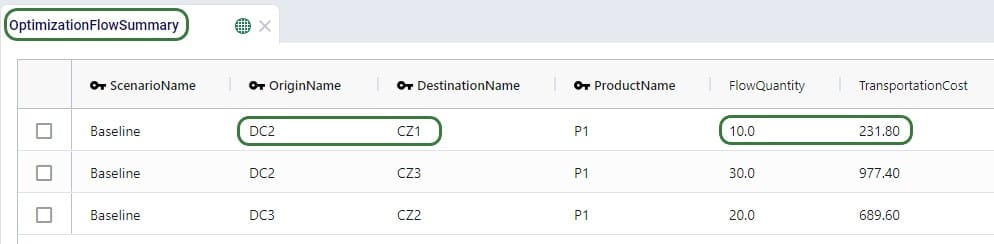

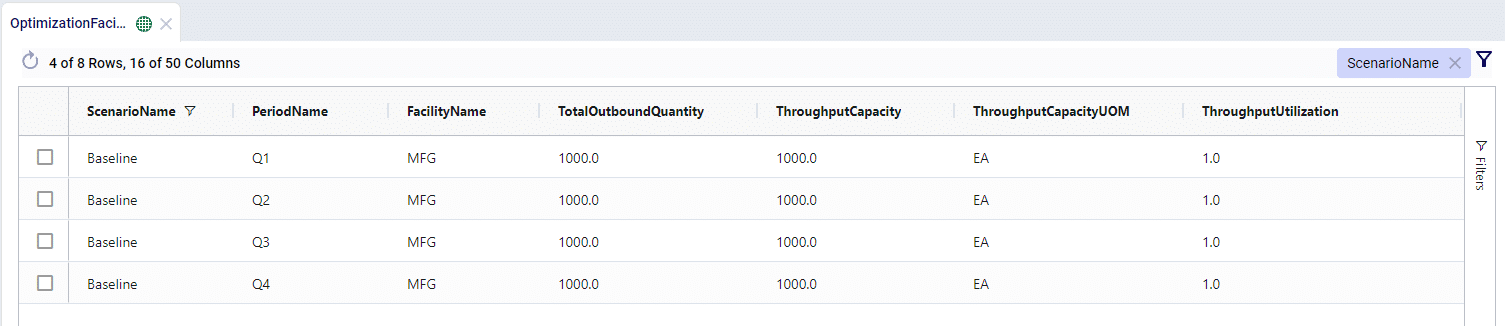

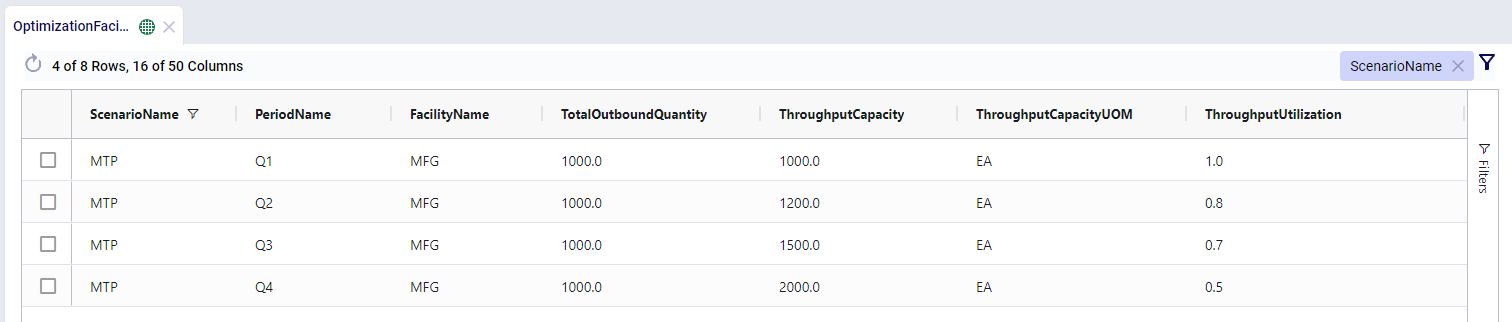

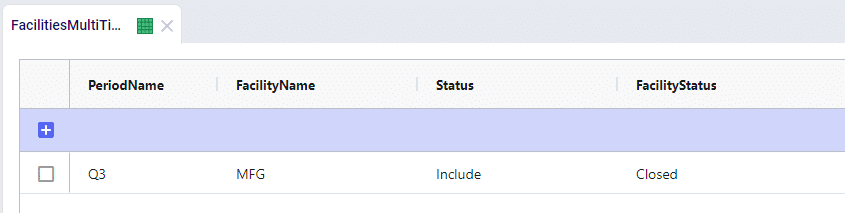

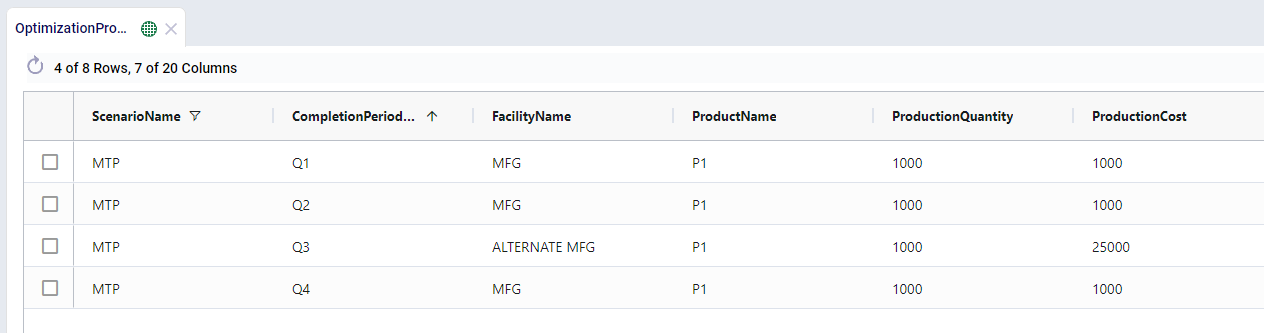

We will only have a brief look at some high-level outputs in Cosmic Frog in this quick start guide, but feel free to explore additional outputs. You can learn more about Cosmic Frog through these help center articles. Let us have a quick look at the Optimization Network Summary output table and the map:

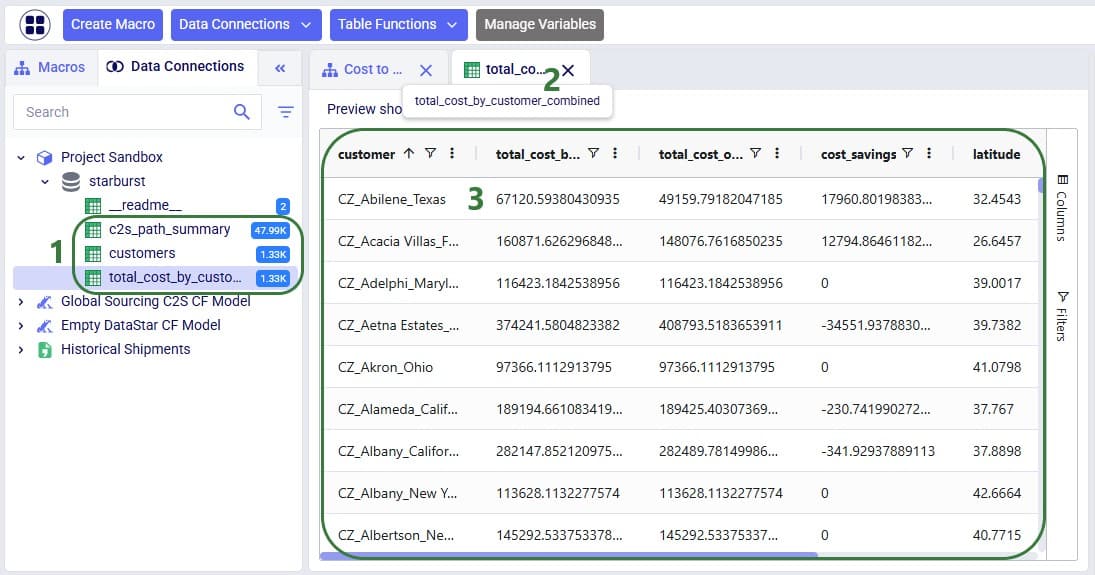

Our next step is to import the needed input table and output table of the Global Sourcing – Cost to Serve model into DataStar. Open the DataStar application on the Optilogic platform by clicking on its icon in the applications list on the left-hand side. In DataStar, we first create a new project named “Cost to Serve Analysis” and set up a data connection to the Global Sourcing – Cost to Serve model, which we will call “Global Sourcing C2S CF Model”. See the Creating Projects & Data Connections section in the Getting Started with DataStar help center article on how to create projects and data connections. Then, we want to create a macro which will calculate the increase/decrease in total cost by customer between the 2 scenarios. We build this macro as follows:

The configuration of the first import task, C2S Path Summary, is shown in this screenshot:

The configuration of the other import task, Customers, uses the same Source Data Connection, but instead of the optimizationcosttoservepathsummary table, we choose the customers table as the table to import. Again, the Project Sandbox is the Destination Data Connection, and the new table is simply called customers.

Instead of writing SQL queries ourselves to pivot the data in the cost to serve path summary table to create a new table where for each customer there is a row which has the customer name and the total cost for each scenario, we can use Leapfrog to do it for us. See the Leapfrog section in the Getting Started with DataStar help center article and this quick start guide on using natural language to create DataStar tasks to learn more about using Leapfrog in DataStar effectively. For the Pivot Total Cost by Scenario by Customer task, the 2 Leapfrog prompts that were used to create the task are shown in the following screenshot:

The SQL Script reads:

```sql
DROP TABLE IF EXISTS total_cost_by_customer_combined;

CREATE TABLE total_cost_by_customer_combined AS
SELECT
    pathdestination AS customer,
    SUM(CASE WHEN scenarioname = 'Baseline' THEN pathcost ELSE 0 END)
        AS total_cost_baseline,
    SUM(CASE WHEN scenarioname = 'OpenPotentialFacilities' THEN pathcost ELSE 0 END)
        AS total_cost_openpotentialfacilities
FROM c2s_path_summary
WHERE scenarioname IN ('Baseline', 'OpenPotentialFacilities')
GROUP BY pathdestination
ORDER BY pathdestination;
```
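To see what this conditional aggregation produces, the script above can be tried on a handful of toy rows. The sketch below runs it in an in-memory SQLite database through Python's built-in sqlite3 module, which accepts this particular script unchanged; the Project Sandbox itself is PostgreSQL. The customer names and costs are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE c2s_path_summary "
    "(scenarioname TEXT, pathdestination TEXT, pathcost REAL)"
)
# Made-up path costs: several rows per customer and scenario.
conn.executemany(
    "INSERT INTO c2s_path_summary VALUES (?, ?, ?)",
    [
        ("Baseline", "CZ_A", 100.0),
        ("Baseline", "CZ_A", 50.0),
        ("OpenPotentialFacilities", "CZ_A", 120.0),
        ("Baseline", "CZ_B", 80.0),
        ("OpenPotentialFacilities", "CZ_B", 90.0),
    ],
)

# The pivot script from the text: one row per customer, one column per scenario.
conn.executescript(
    """
    DROP TABLE IF EXISTS total_cost_by_customer_combined;
    CREATE TABLE total_cost_by_customer_combined AS
    SELECT
        pathdestination AS customer,
        SUM(CASE WHEN scenarioname = 'Baseline' THEN pathcost ELSE 0 END)
            AS total_cost_baseline,
        SUM(CASE WHEN scenarioname = 'OpenPotentialFacilities' THEN pathcost ELSE 0 END)
            AS total_cost_openpotentialfacilities
    FROM c2s_path_summary
    WHERE scenarioname IN ('Baseline', 'OpenPotentialFacilities')
    GROUP BY pathdestination
    ORDER BY pathdestination;
    """
)

pivot = conn.execute(
    "SELECT * FROM total_cost_by_customer_combined ORDER BY customer"
).fetchall()
print(pivot)  # [('CZ_A', 150.0, 120.0), ('CZ_B', 80.0, 90.0)]
conn.close()
```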

To create the Calculate Cost Savings by Customer task, we gave Leapfrog the following prompt: “Use the total cost by customer table and add a column to calculate cost savings as the baseline cost minus the openpotentalfacilities cost”. The resulting SQL Script reads as follows:

```sql
ALTER TABLE total_cost_by_customer_combined
ADD COLUMN cost_savings DOUBLE PRECISION;

UPDATE total_cost_by_customer_combined
SET
    cost_savings = total_cost_baseline - total_cost_openpotentialfacilities;
```

This task is also added to the macro; its name is "Calculate Cost Savings by Customer".
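The sign convention of the cost_savings column matters for the visualization later on: a positive value means the customer is cheaper to serve in the OpenPotentialFacilities scenario, and a negative value means the cost went up. The following sketch applies the same two statements to made-up totals, using Python's built-in sqlite3 module as a stand-in for the PostgreSQL-based Project Sandbox.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE total_cost_by_customer_combined "
    "(customer TEXT, total_cost_baseline REAL, total_cost_openpotentialfacilities REAL)"
)
# Made-up totals for two hypothetical customers.
conn.executemany(
    "INSERT INTO total_cost_by_customer_combined VALUES (?, ?, ?)",
    [("CZ_A", 150.0, 120.0), ("CZ_B", 80.0, 90.0)],
)

# Same statements as in the Calculate Cost Savings by Customer task.
conn.executescript(
    """
    ALTER TABLE total_cost_by_customer_combined
    ADD COLUMN cost_savings DOUBLE PRECISION;

    UPDATE total_cost_by_customer_combined
    SET cost_savings = total_cost_baseline - total_cost_openpotentialfacilities;
    """
)

savings = conn.execute(
    "SELECT customer, cost_savings FROM total_cost_by_customer_combined ORDER BY customer"
).fetchall()
print(savings)  # [('CZ_A', 30.0), ('CZ_B', -10.0)]
conn.close()
```

Here CZ_A shows savings of 30, while CZ_B shows a cost increase of 10.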

Lastly, we give Leapfrog the following prompt to join the table with cost savings (total_cost_by_customer_combined) and the customers table to add the coordinates from the customers table to the cost savings table: “Join the customers and total_cost_by_customer_combined tables on customer and add the latitude and longitude columns from the customers table to the total_cost_by_customer_combined table. Use an inner join and do not create a new table, add the columns to the existing total_cost_by_customer_combined table”. This is the resulting SQL Script, which was added to the macro as the "Add Coordinates to Cost Savings" task:

```sql
ALTER TABLE total_cost_by_customer_combined ADD COLUMN latitude VARCHAR;

ALTER TABLE total_cost_by_customer_combined ADD COLUMN longitude VARCHAR;

UPDATE total_cost_by_customer_combined SET latitude = c.latitude
FROM customers AS c
WHERE total_cost_by_customer_combined.customer = c.customername;

UPDATE total_cost_by_customer_combined SET longitude = c.longitude
FROM customers AS c
WHERE total_cost_by_customer_combined.customer = c.customername;
```

We can now run the macro, and once it is completed, we take a look at the tables present in the Project Sandbox:

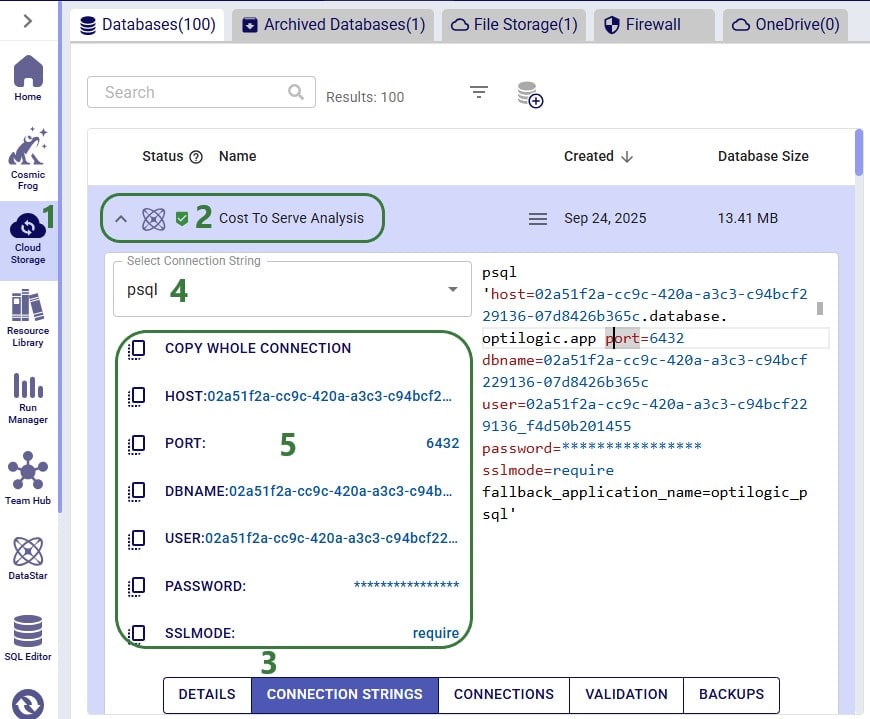

We will use Microsoft Power BI to visualize the change in total cost between the 2 scenarios by customer on a map. To do so, we first need to set up a connection to the DataStar project sandbox from within Power BI. Please follow the steps in the “Connecting to Optilogic with Microsoft Power BI” help center article to create this connection. Here we will just show the step to get the connection information for the DataStar Project Sandbox, which underneath is a PostgreSQL database (next screenshot) and selecting the table(s) to use in Power BI on the Navigator screen (screenshot after this one):

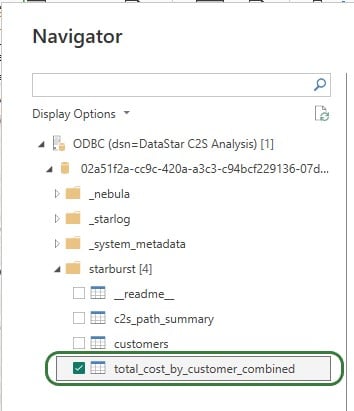

After selecting the connection within Power BI and providing the credentials again, on the Navigator screen, choose to use just the total_cost_by_customer_combined table as this one has all the information needed for the visualization:

We will set up the visualization on a map using the total_cost_by_customer_combined table that we have just selected for use in Power BI using the following steps:

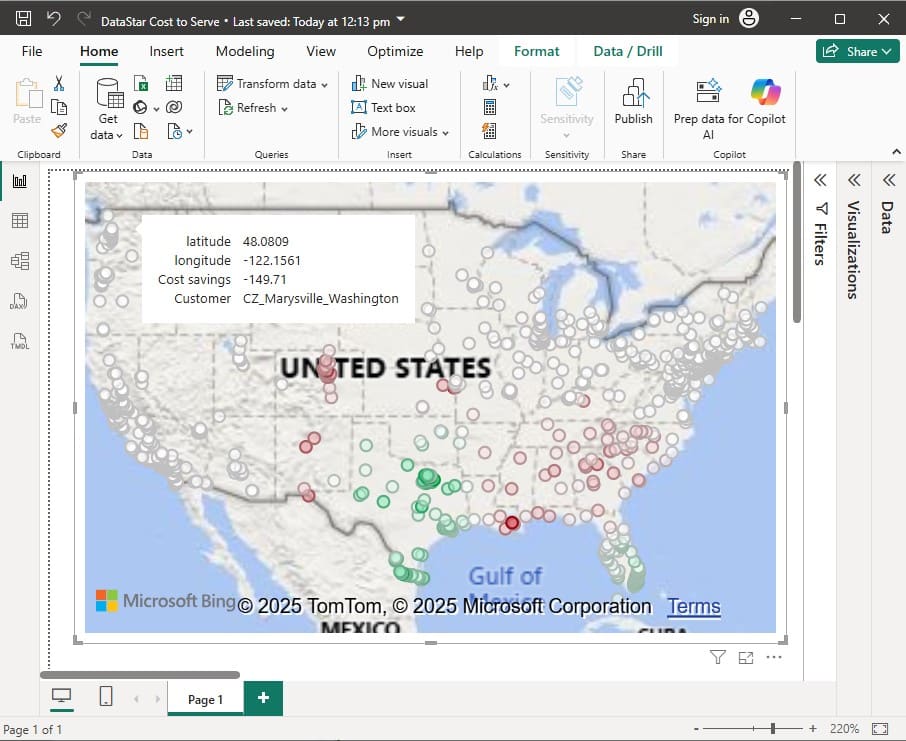

With the above configuration, the map will look as follows:

Green customers are those where the total cost went down in the OpenPotentialFacilities scenario, i.e. there are savings for this customer. The darker the green, the higher the savings. White customers did not see a lot of difference in their total costs between the 2 scenarios. The one that is hovered over, in Marysville in Washington state, has a small increase of $149.71 in total costs in the OpenPotentialFacilities scenario as compared to the Baseline scenario. Red customers are those where the total cost went up in the OpenPotentialFacilities scenario (i.e. the cost savings are a negative number); the darker the red, the higher the increase in total costs. As expected, the customers with the highest cost savings (darkest green) are those located in Texas and Florida, as they are now being served from DCs closer to them.

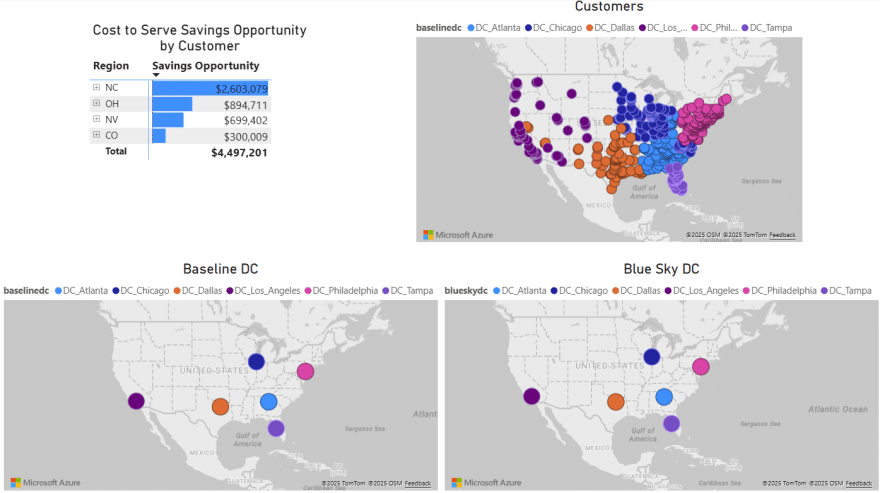

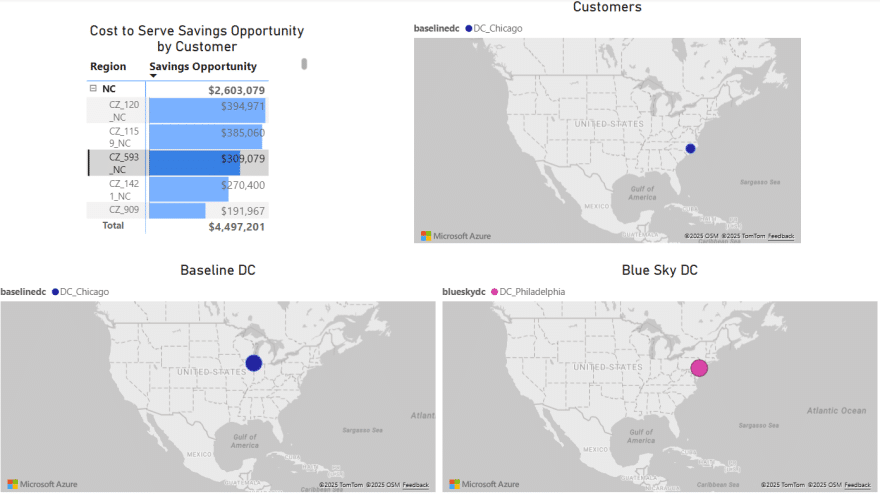

To give users an idea of what type of visualization and interactivity is possible within Power BI, we will briefly cover the 2 following screenshots. These are of a different Cosmic Frog model for which a cost to serve analysis is performed too. Two scenarios were run in this model: Baseline DC and Blue Sky DC. In the Baseline scenario, customers are assigned to their current DCs and in the Blue Sky scenario, they can be re-assigned to other DCs. The chart on the top left shows the cost savings by region (= US state) that are identified in the Blue Sky DC scenario. The other visualizations on the dashboard are all on maps: the top right map shows the customers, which are colored based on which DC serves them in the Baseline scenario, and the bottom 2 maps show the DCs used in the Baseline scenario (left) and the DCs used in the Blue Sky scenario (right).

To drill into the differences between the 2 scenarios, users can expand the regions in the top left chart and select 1 or multiple individual customers. This is an interactive chart, and the 3 maps are then automatically filtered for the selected location(s). In the below screenshot, the user has expanded the NC region and then selected customer CZ_593_NC in the top left chart. In this chart, we see that the cost savings for this customer in the Blue Sky DC scenario as compared to the Baseline scenario amount to $309k. From the Customers map (top right) and Baseline DC map (bottom left) we see that this customer was served from the Chicago DC in the Baseline. We can tell from the Blue Sky DC map (bottom right) that this customer is re-assigned to be served from the Philadelphia DC in the Blue Sky DC scenario.

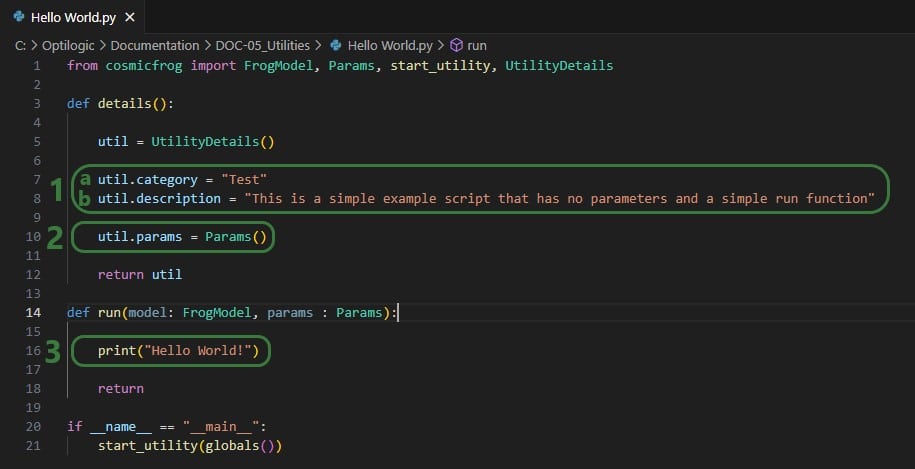

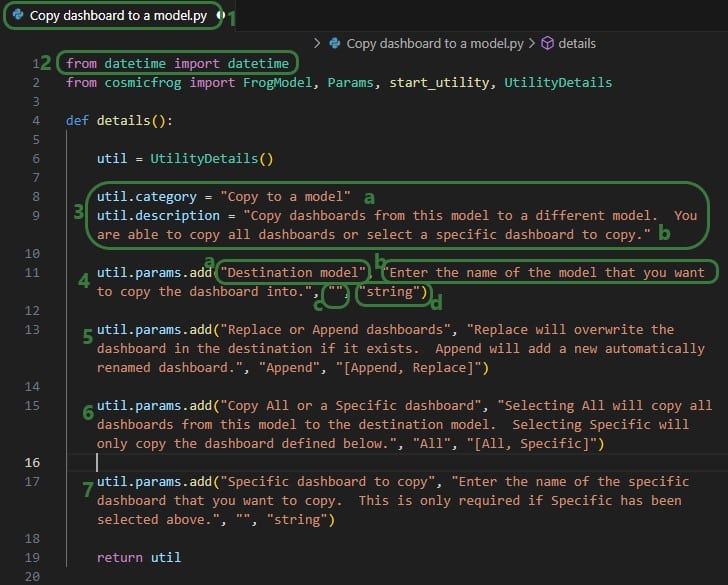

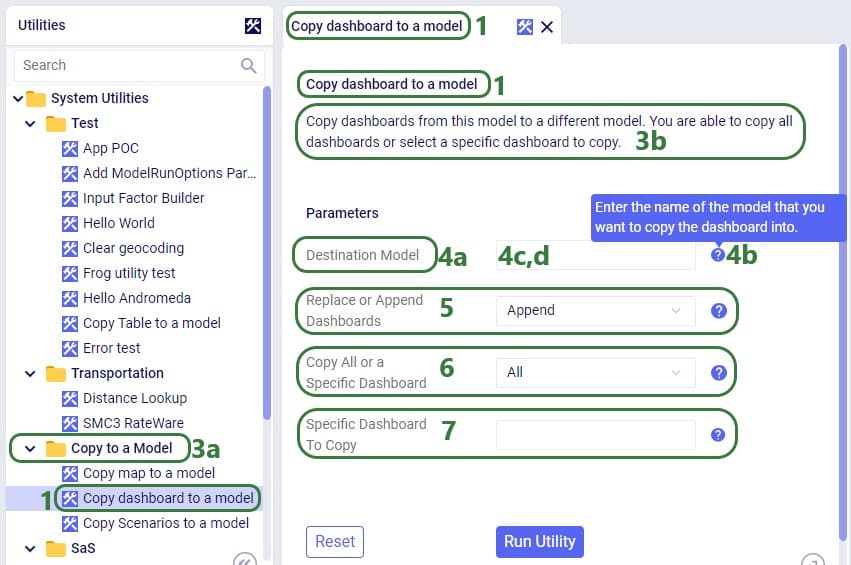

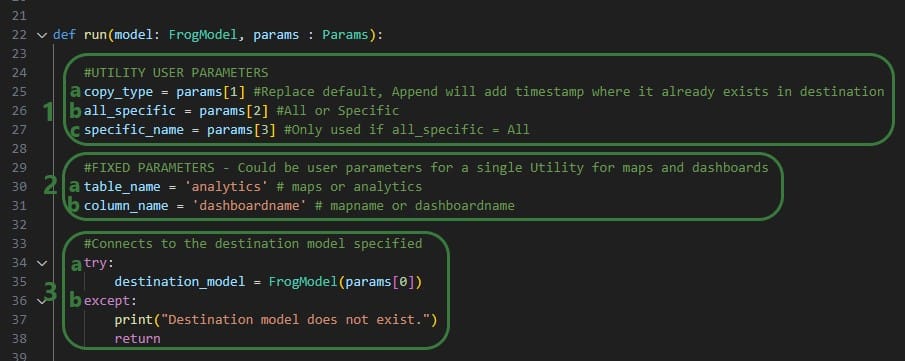

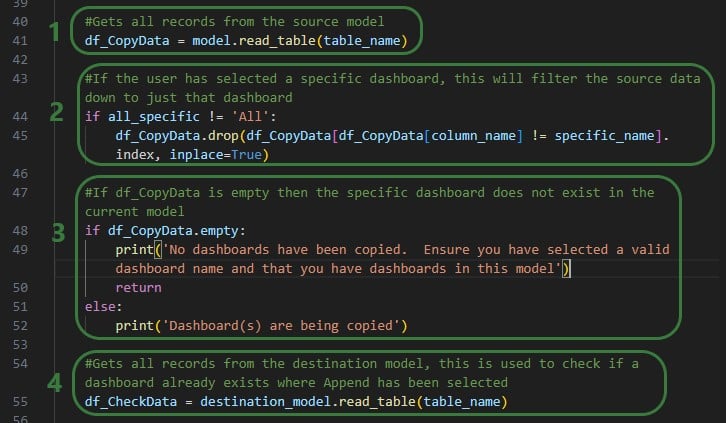

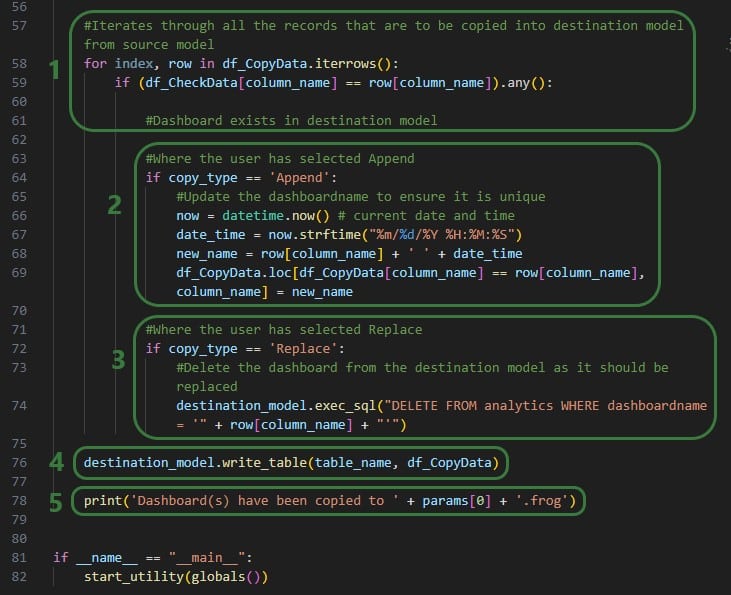

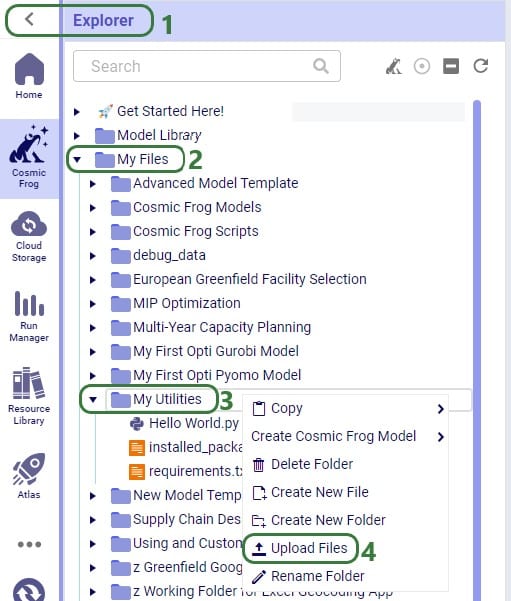

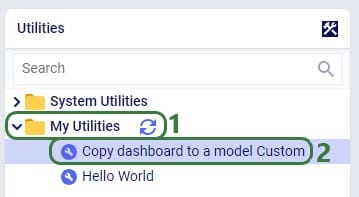

Optilogic has developed Python libraries to facilitate scripting for 2 of its flagship applications: Cosmic Frog, the most powerful supply chain design tool on the market, and DataStar, its just-released AI-powered data product where users can create flexible, accessible, and repeatable workflows with zero learning curve.

Instead of going into the applications themselves to build and run supply chain models and data workflows, these libraries enable users to programmatically access their functionality and underlying data. Example use cases for such scripts are:

In this documentation we cover the basics of getting yourself set up so you can take advantage of these Python scripting libraries, both on a local computer and on the Optilogic platform leveraging the Lightning Editor application. More specific details for the cosmicfrog and datastar libraries, including examples and end-to-end scripts, are detailed in the following Help Center articles and library specifications:

Working locally with Python scripts has the advantage that you can make use of code completion features, which may include text auto-completion, showing what arguments functions need, catching incorrect syntax/names, etc. One example setup that achieves this is one where Python, Visual Studio Code, and an IntelliSense extension package for Python for Visual Studio Code are installed locally:

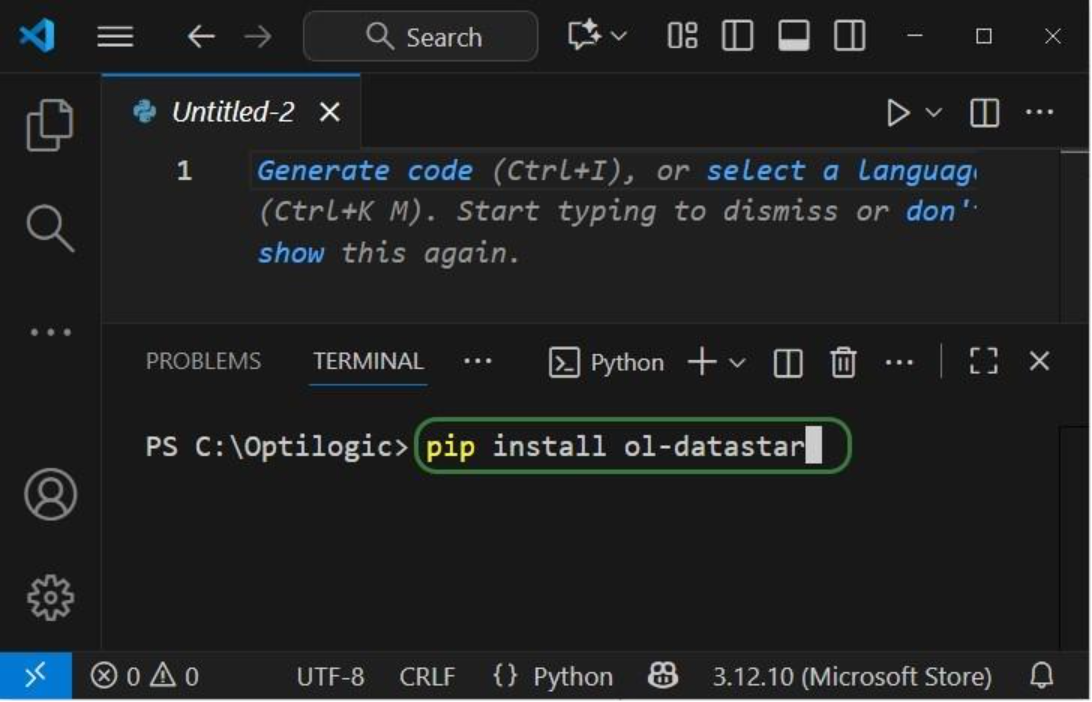

Once you are set up locally and are starting to work with Python scripts in Visual Studio Code, you will need to install the Python libraries you want to use to have access to their functionality. You do this by running “pip install cosmicfrog” and/or “pip install ol-datastar” in a terminal in Visual Studio Code (if no terminal is open yet: click on the View menu at the top and select Terminal, or use the keyboard shortcut Ctrl + `).

When installing these libraries, multiple external libraries (dependencies) are installed too. These are required to run the packages successfully and/or make working with them easier. These include the optilogic, pandas, and SQLAlchemy packages (among others) for both libraries. You can find out which packages are installed with the cosmicfrog / ol-datastar libraries by typing “pip show cosmicfrog” or “pip show ol-datastar” in a terminal.

To use other Python libraries in addition, you will usually need to install them using “pip install” too before you can leverage them.

If you want to access certain items on the Optilogic platform (like Cosmic Frog models, DataStar project sandboxes) while working locally, you will need to whitelist your IP address on the platform, so the connections are not blocked by a firewall. You can do this yourself on the Optilogic platform:

Please note that for working with DataStar, the whitelisting of your IP address is only necessary if you want to access the Project Sandbox of projects directly through scripts. You do not need to whitelist your IP address to leverage other functions while scripting, like creating projects, adding macros and their tasks, and running macros.

App Keys are used to authenticate the user from the local environment on the Optilogic platform. To create an App Key, see this Help Center Article on Generating App and API Keys. Copy the generated App Key and paste it into an empty Notepad window. Save this file as app.key and place it in the same folder as your local Python script.

It is important to emphasize that App Keys and app.key files should not be shared with others, e.g. remove them from folders / zip-files before sharing. Individual users need to authenticate with their own App Key.
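One way to reduce the risk of accidentally sharing an App Key is to build the zip-file with a small script that skips every file named app.key. The following is a minimal sketch using only the Python standard library; the function name and the folder layout are made up for illustration.

```python
import zipfile
from pathlib import Path


def zip_without_app_keys(folder: Path, archive: Path) -> list[str]:
    """Zip all files under `folder` except app.key files.

    Returns the relative names of the files that were added.
    """
    added = []
    with zipfile.ZipFile(archive, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(folder.rglob("*")):
            if not path.is_file() or path.name == "app.key":
                continue  # skip directories and any App Key file
            if path.resolve() == archive.resolve():
                continue  # do not add the archive to itself
            relative = path.relative_to(folder).as_posix()
            zf.write(path, relative)
            added.append(relative)
    return added
```

For example, `zip_without_app_keys(Path("my_scripts"), Path("my_scripts_share.zip"))` would archive a hypothetical my_scripts folder while leaving out the app.key file stored next to the scripts.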

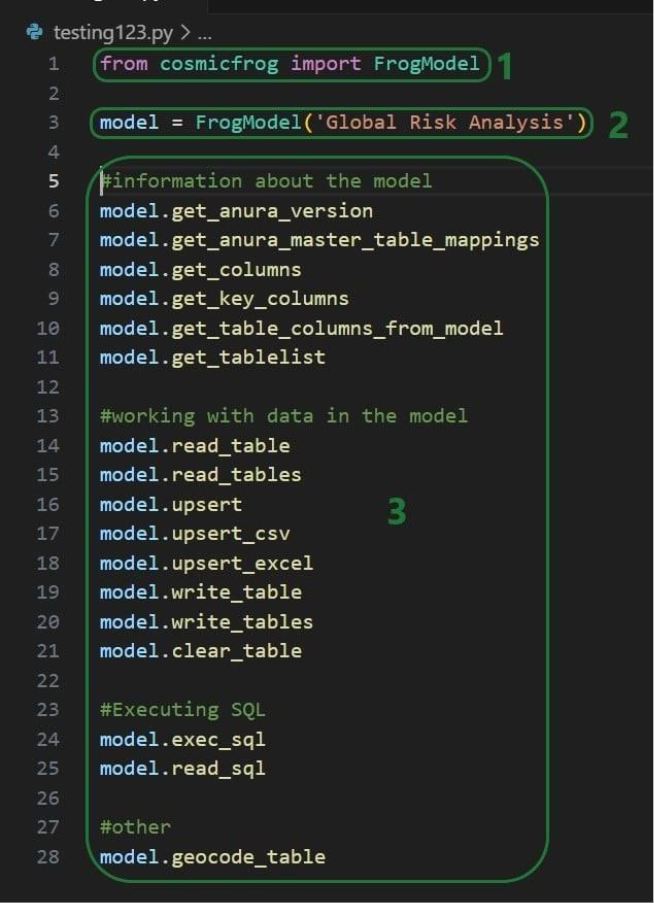

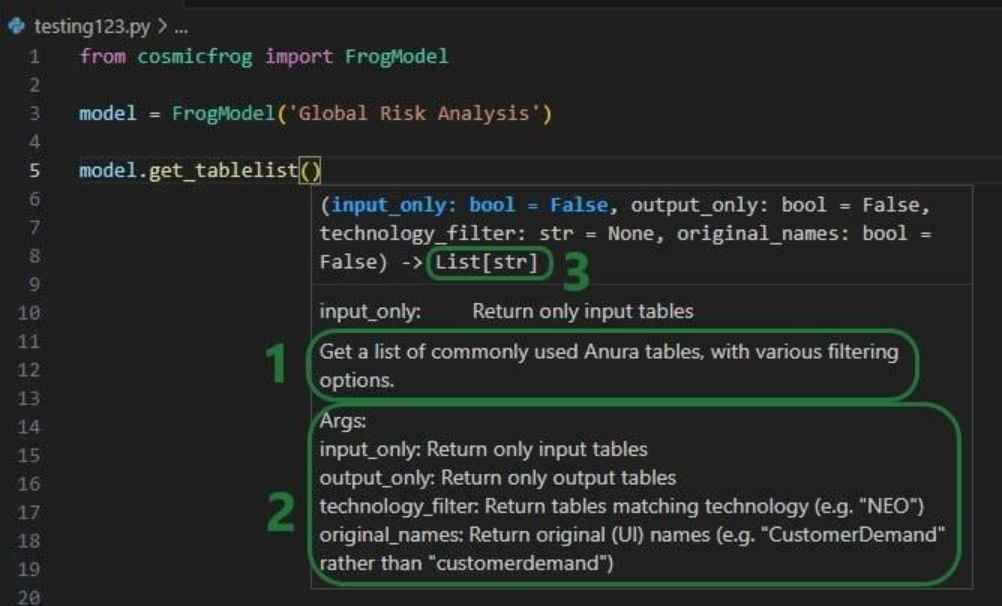

The next set of screenshots will show an example Python script named testing123.py on our local set-up. Here it uses the cosmicfrog library; using the ol-datastar library works similarly. The first screenshot shows a list of functions available from the cosmicfrog Python library:

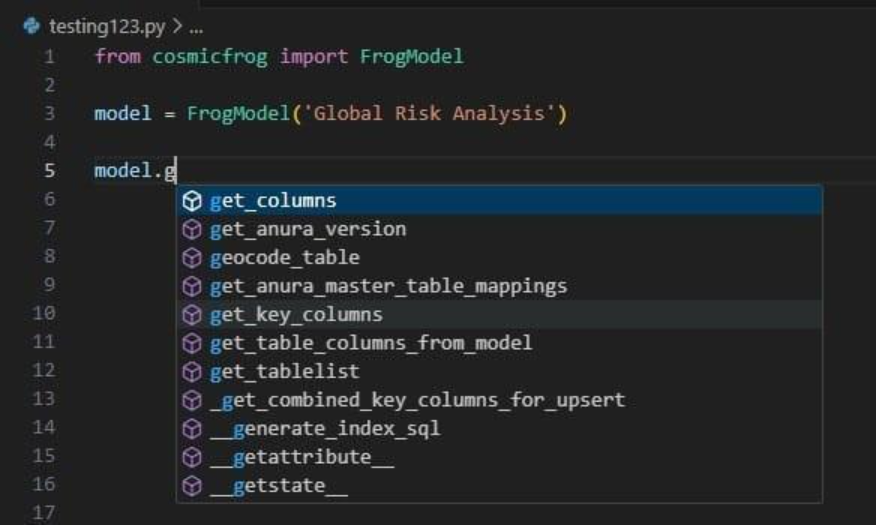

When you continue typing after you have typed “model.”, the code completion feature will auto-generate a list of functions you may be looking for. In the next screenshot, the list shows functions that start with or contain a “g”, since only a “g” has been typed so far. This list will auto-update the more you type. You can select from the list with your cursor or the arrow up/down keys, and hit the Tab key to auto-complete the selected function:

When you have completed typing the function name and next type a parenthesis ‘(‘ to start entering arguments, a pop-up will come up which contains information about the function and its arguments:

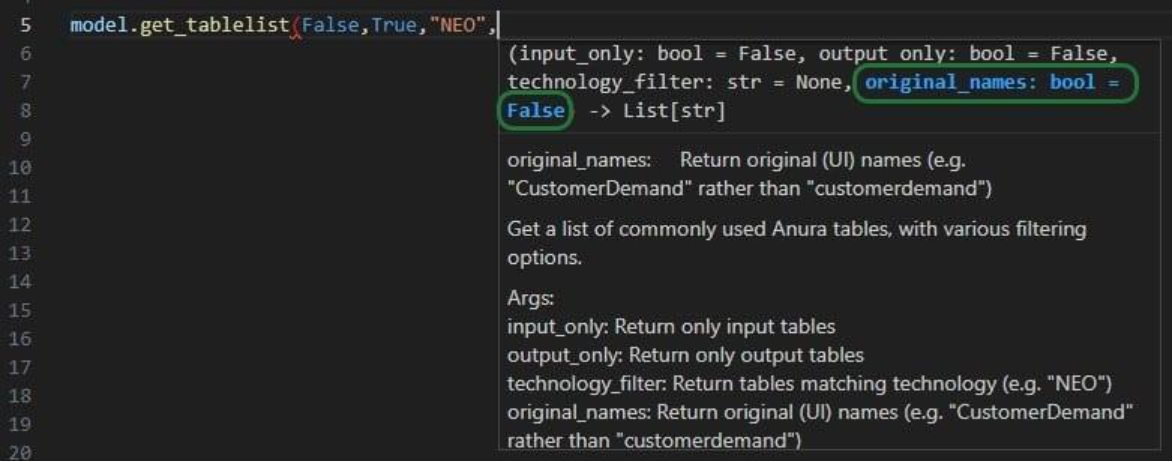

As you type the arguments for the function, the argument that you are on and the expected format (e.g. bool for a Boolean, str for string, etc.) will be in blue font and a description of this specific argument appears above the function description (e.g. above box 1 in the above screenshot). In the screenshot above we are on the first argument input_only which requires a Boolean as input and will be set to False by default if the argument is not specified. In the screenshot below we are on the fourth argument (original_names) which is now in blue font; its default is also False, and the argument description above the function description has changed now to reflect the fourth argument:

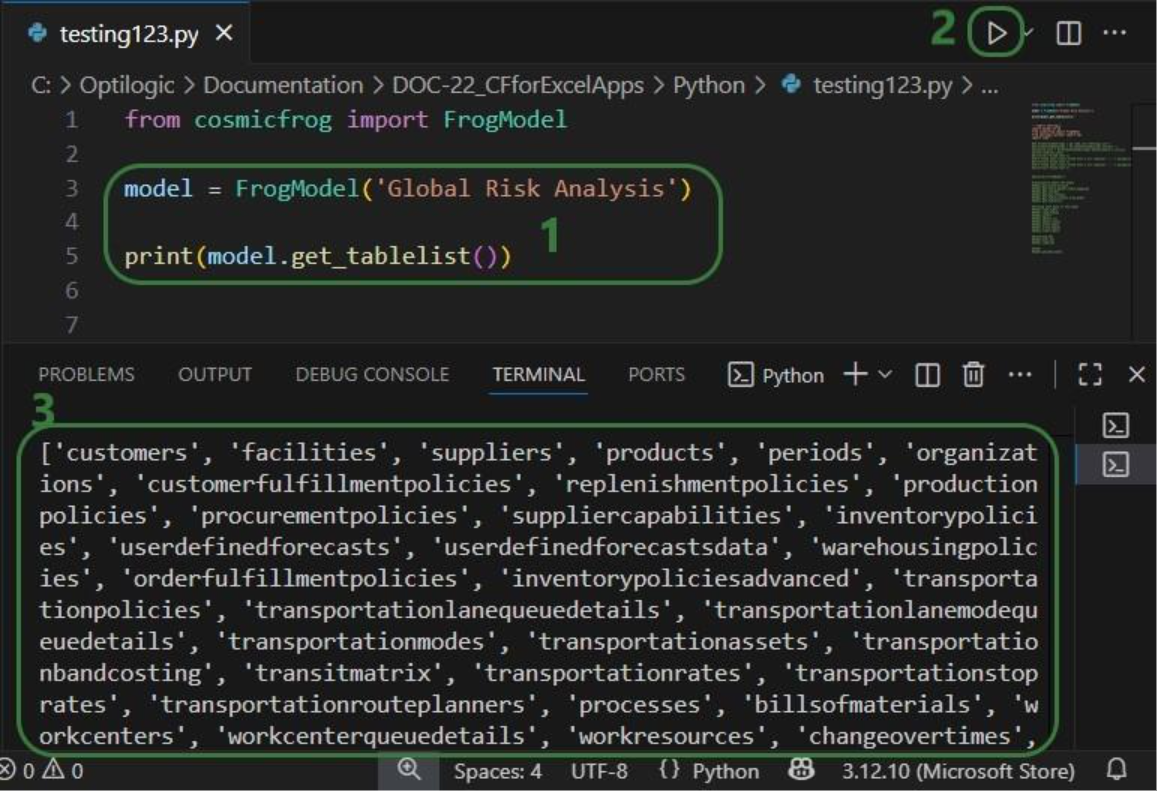

Once you are ready to run a script, you can click on the play button at the top right of the screen:

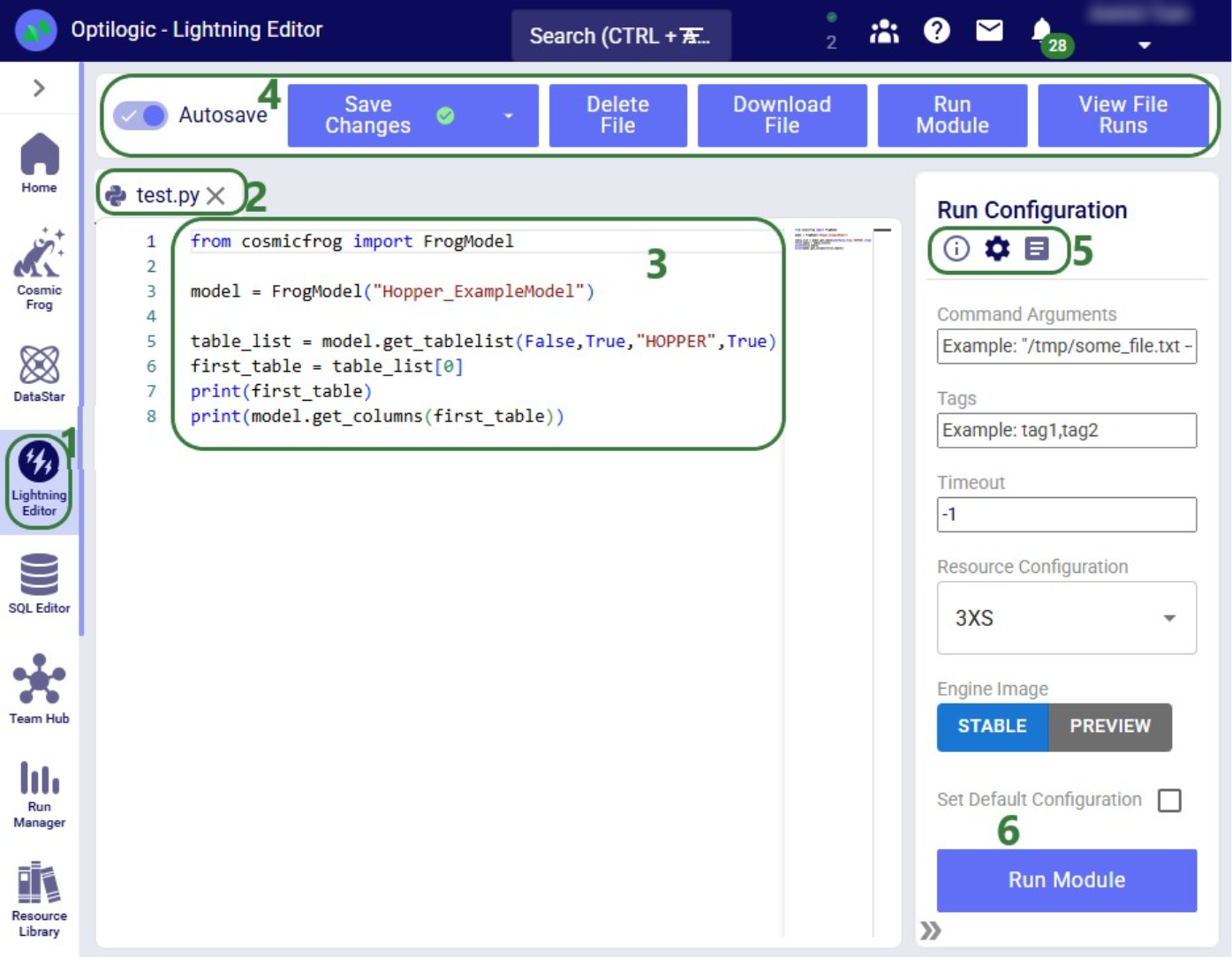

As mentioned above, you can also use the Lightning Editor application on the Optilogic platform to create and run Python scripts. Lightning Editor is an Integrated Development Environment (IDE) which has some code completion features, but these are not as extensive and complete as those in Visual Studio Code when used with an IntelliSense extension package.

When working on the Optilogic platform, you are already authenticated as a user, and you do not need to generate / provide an App Key or app.key file nor whitelist your IP address.

When using the datastar library in scripts, users need to place a requirements.txt file in the same folder on the Optilogic platform as the script. This file should only contain the text “ol-datastar” (without the quotes). No requirements.txt file is required when using the cosmicfrog library.

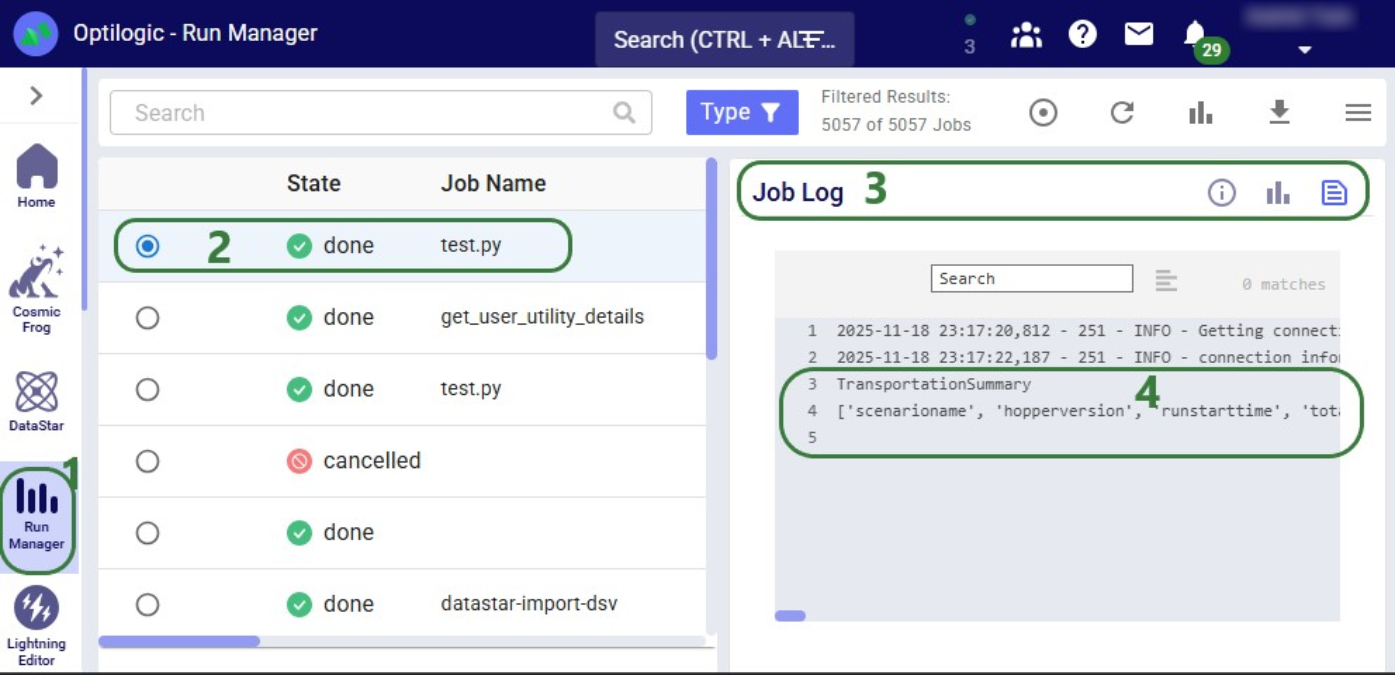

The following simple test.py Python script on Lightning Editor will print the first Hopper output table name and its column names:

Please feel free to download the Cosmic Frog Python Library PDF file. Please note that this library requires Python 3.11.

You can also reference the video shown below that covers an overview on scripting within Cosmic Frog.

DataStar users can take advantage of the datastar Python library, which gives users access to DataStar projects, macros, tasks, and connections through Python scripts. This way users can build, access, and run their DataStar workflows programmatically. The library can be used in a user’s own Python environment (local or on the Optilogic platform), and it can also be used in Run Python tasks in a DataStar macro.

In this documentation we will cover how to use the library through multiple examples. At the end, we will step through an end-to-end script that creates a new project, adds a macro to the project, and creates multiple tasks that are added to the macro. The script then runs the macro while giving regular updates on its progress.

Before diving into the details of this article, it is recommended to read this “Setup: Python Scripts for Cosmic Frog and DataStar” article first; it explains what users need to do in terms of setup before they can run Python scripts using the datastar library. To learn more about the DataStar application itself, please see these articles on Optilogic’s Help Center.

Succinct documentation in PDF format of all datastar library functionality can be downloaded here (please note that the long character string at the beginning of the filename is expected). This includes a list of all available properties and methods for the Project, Macro, Task, and Connection classes at the end of the document.

All Python code that is shown in the screenshots throughout this documentation is available in the Appendix, so that you can copy-paste from there if you want to run the exact same code in your own Python environment and/or use these as jumping off points for your own scripts.

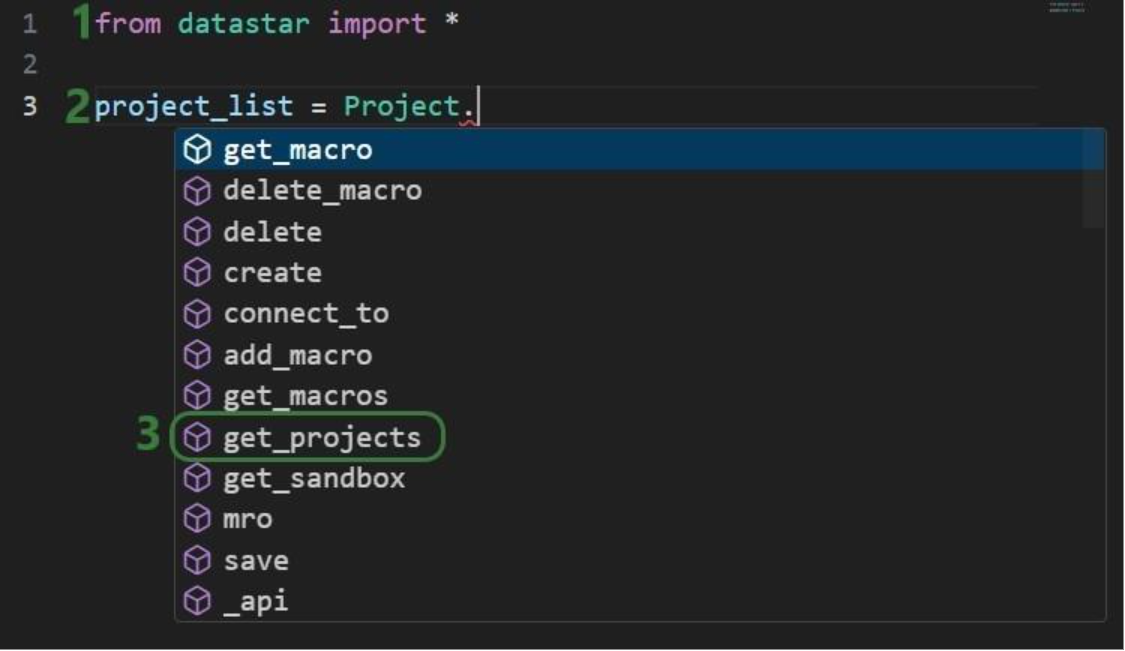

If you have reviewed the “Setup: Python Scripts for Cosmic Frog and DataStar” article and are set up with your local or online Python environment, we are ready to dive in! First, we will see how we can interrogate existing projects and macros using Python and the datastar library. We want to find out which DataStar projects are already present in the user’s Optilogic account.

Once the parentheses are typed, hover text comes up with information about this function. It tells us that the outcome of this method will be a list of strings, and the description of the method reads “Retrieve all project names visible to the authenticated user”. Most methods will have similar hover text describing the method, the arguments it takes and their default values, and the output format.

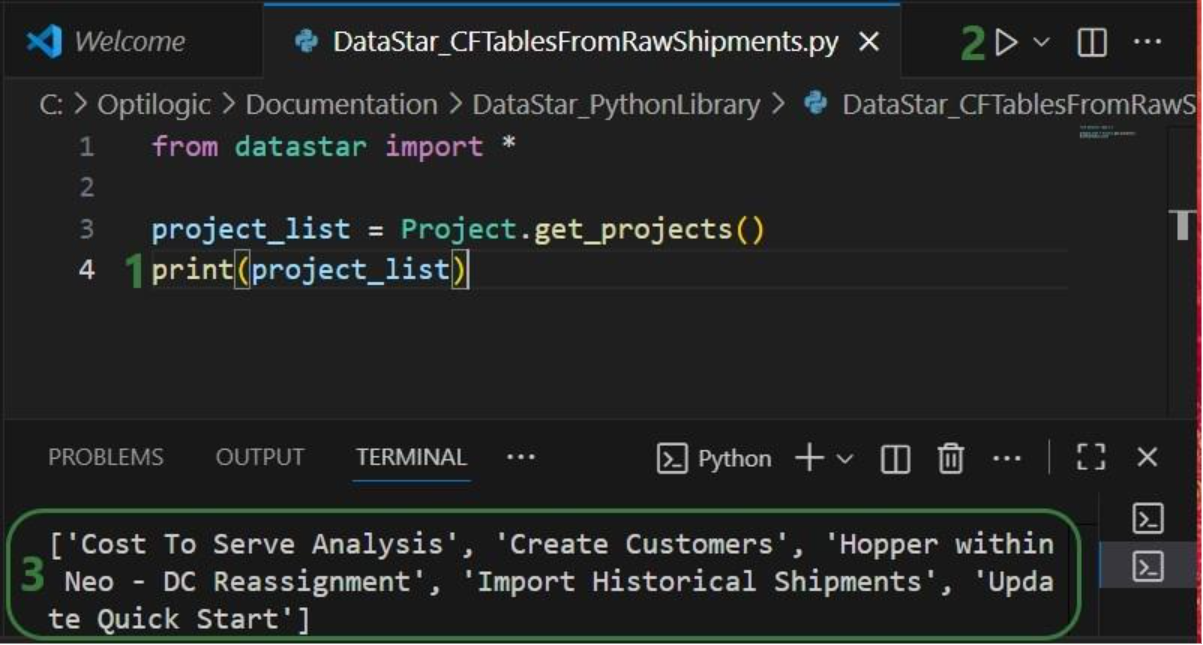

Now that we have a variable that contains the list of DataStar projects in the user account, we want to view the value of this variable:

Next, we want to dig one level deeper and for the “Import Historical Shipments” project find out what macros it contains:

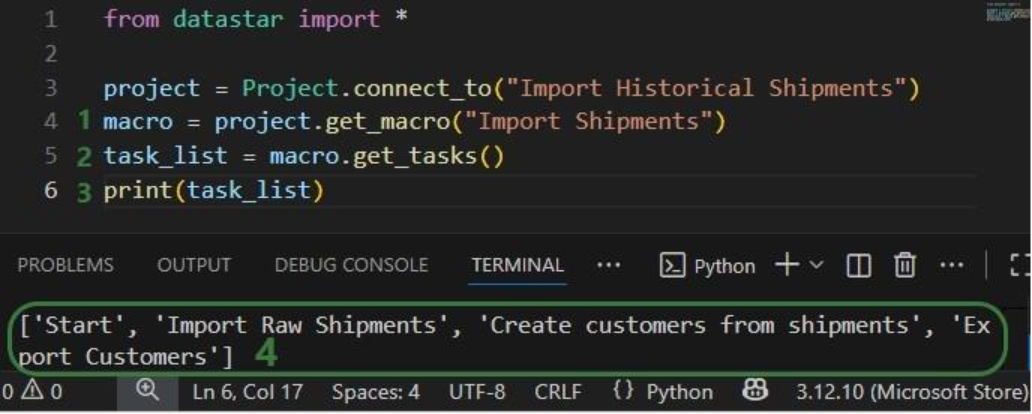

Finally, we will retrieve the tasks this “Import Shipments” macro contains in a similar fashion:

In addition, we can have a quick look in the DataStar application to see that the information we are getting from the small scripts above matches what we have in our account in terms of projects (first screenshot below), and the “Import Shipments” macro plus its tasks in the “Import Historical Shipments” project (second screenshot below):

Besides getting information about projects and macros, other useful methods for projects and macros include:

Note that when creating new objects (projects, macros, tasks or connections) these are automatically saved. If existing objects are modified, their changes need to be committed by using the save method.

Macros can be copied, either within the same project or into a different project. Tasks can also be copied, either within the same macro, between macros in the same project, or between macros of different projects. If a task is copied within the same macro, its name will automatically be suffixed with (Copy).

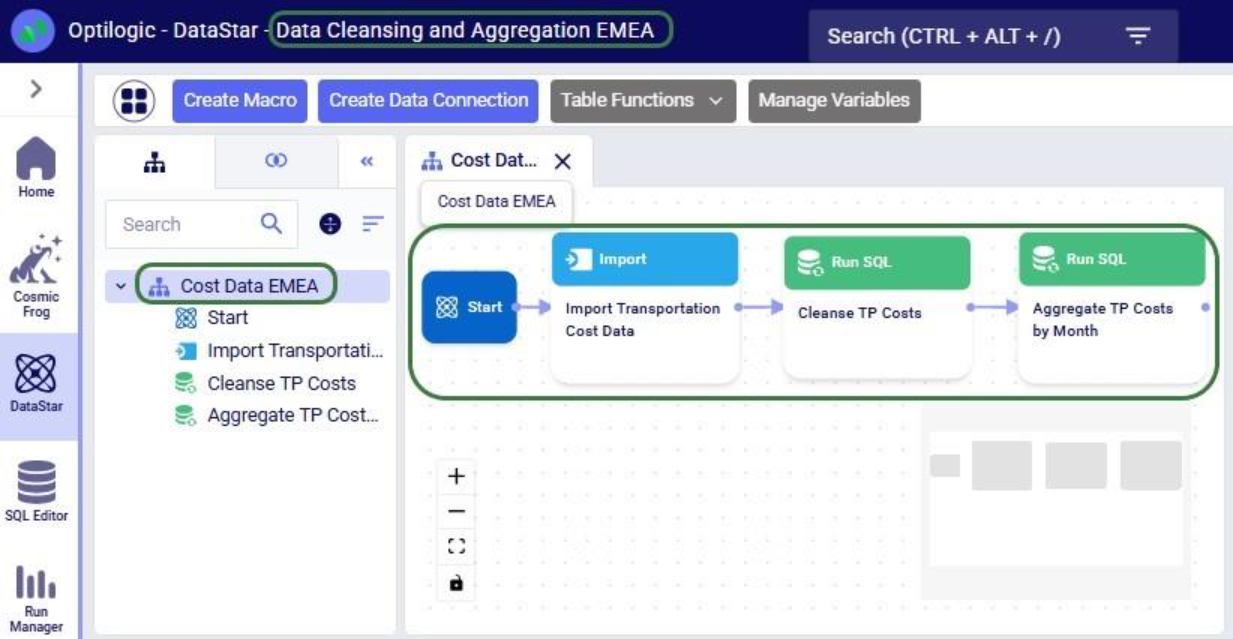

As an example, we will consider a macro called “Cost Data” in a project named “Data Cleansing and Aggregation NA Model”, which is configured as follows:

The North America team shows this macro to their EMEA counterparts who realize that they could use part of this for their purposes, as their transportation cost data has the same format as that of the NA team. Instead of manually creating a new macro with new tasks that duplicate the 3 transportation cost related ones, they decide to use a script where first the whole macro is copied to a new project, and then the 4 tasks which are not relevant for the EMEA team are deleted:

After running the script, we see in DataStar that there is indeed a new project named “Data Cleansing and Aggregation EMEA” which has a “Cost Data EMEA” macro that contains the 3 transportation cost related tasks that we wanted to keep:

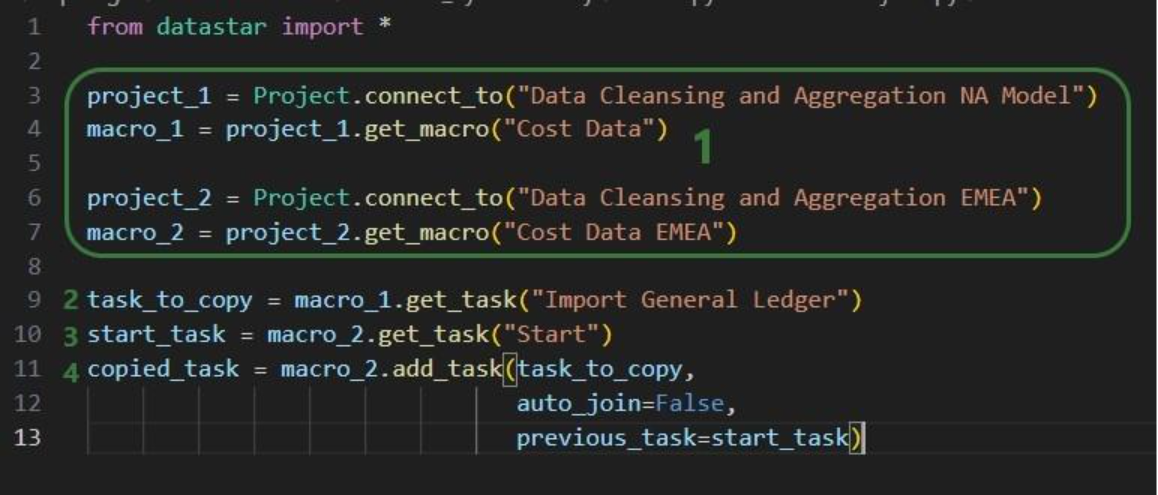

Note that another way we could have achieved this would have been to copy the 3 tasks from the macro in the NA project to the new macro in the EMEA project. The next example shows this for one task. Say that after the Cost Data EMEA macro was created, the team finds they also have a use for the “Import General Ledger” task that was deleted as it was not on the list of “tasks to keep”. In an extension of the previous script or a new one, we can leverage the add_task method of the Macro class to copy the “Import General Ledger” task from the NA project to the EMEA one:

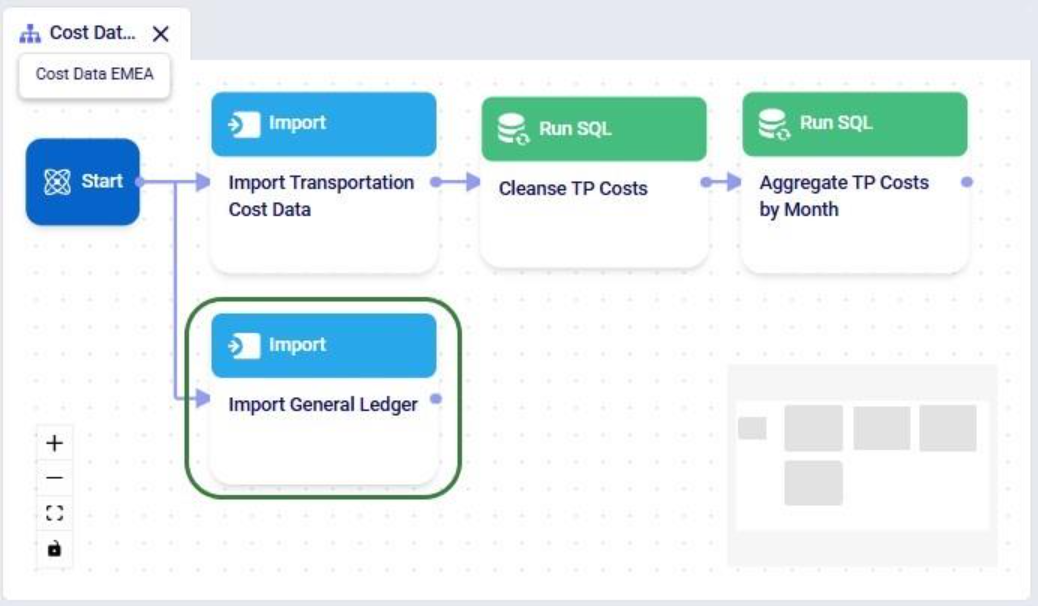

After running the script, we see that the “Import General Ledger” task is now part of the “Cost Data EMEA” macro and is connected to the Start task:

Several additional helpful features on chaining tasks together in a macro are:

DataStar connections allow users to connect to different types of data sources, including CSV-files, Excel files, Cosmic Frog models, and Postgres databases. These data sources need to be present on the Optilogic platform (i.e. visible in the Explorer application). They can then be used as sources / destinations / targets for tasks within DataStar.

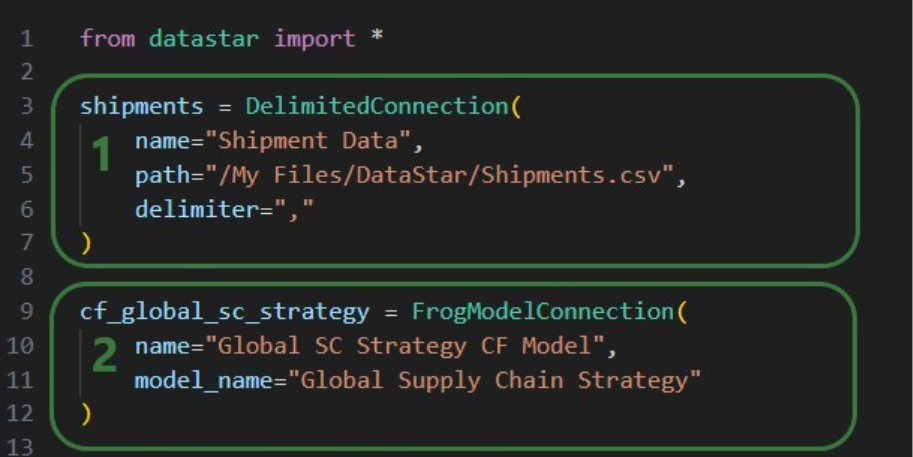

We can use scripts to create data connections:

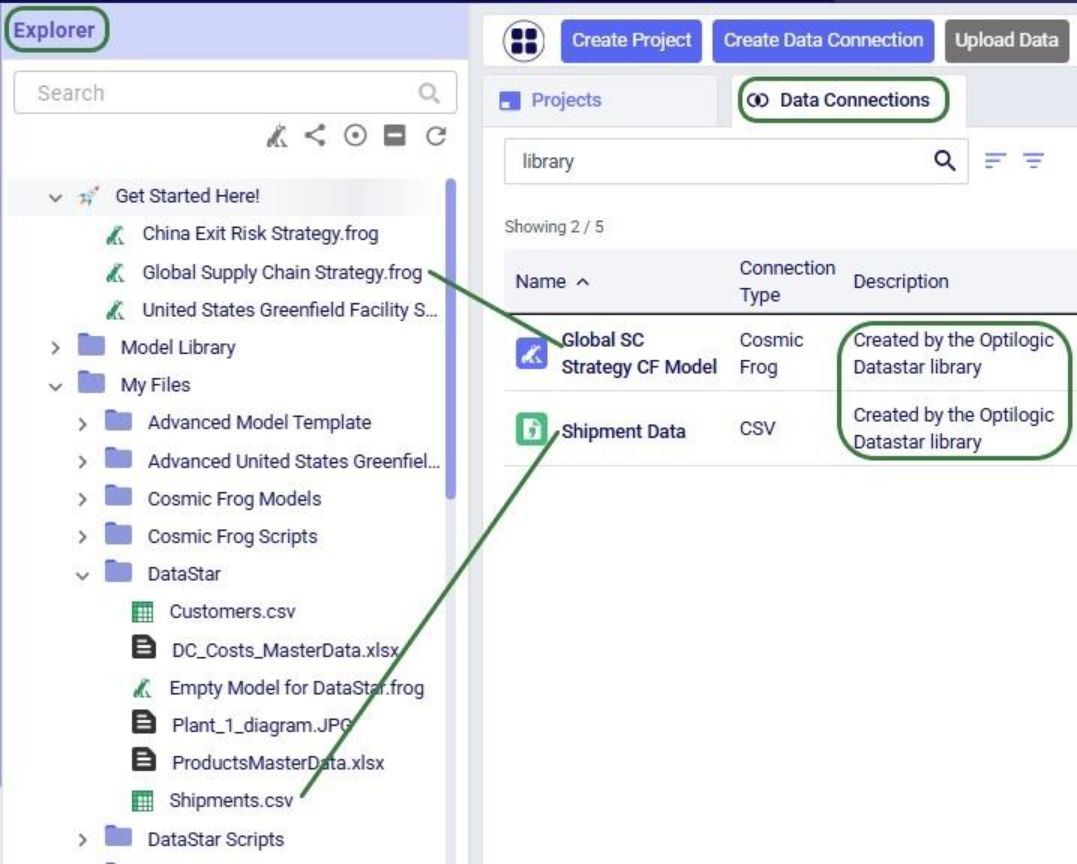

After running this script, we see the connections have been created. In the following screenshot, the Explorer is on the left, and it shows the Cosmic Frog model “Global Supply Chain Strategy.frog” and the Shipments.csv file. The connections using these are listed in the Data Connections tab of DataStar. Since we did not specify any description, an auto-generated description “Created by the Optilogic Datastar library” was added to each of these 2 connections:

In addition to the connections shown above, data connections to Excel files (.xls and .xlsx) and PostgreSQL databases which are stored on the Optilogic platform can be created too. Use the ExcelConnection and OptiConnection classes to set up these types of connections.

Each DataStar project has its own internal data connection, the project sandbox. This is where users perform most of the data transformations after importing data into the sandbox. Using scripts, we can access and modify data in this sandbox directly instead of using tasks in macros to do so. Note that if you have a repeatable data workflow in DataStar which is used periodically to refresh a Cosmic Frog model, where you update your data sources and re-run your macros, you need to be careful when making one-off changes to the project sandbox through a script. When you change data in the sandbox through a script, macros and tasks are not updated to reflect these modifications. The next time the data workflow is run, the results may differ if the change made by the script is not applied again. If you want to include such changes in your macro, you can add a Run Python task to your macro within DataStar.

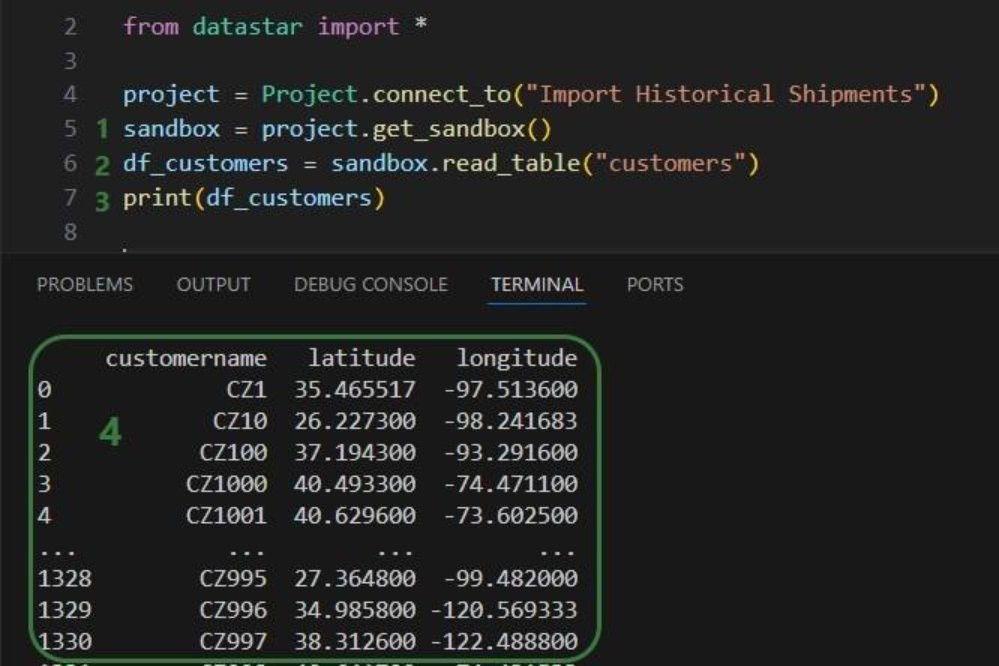

Our “Import Historical Shipments” project has a table named customers in its project sandbox:

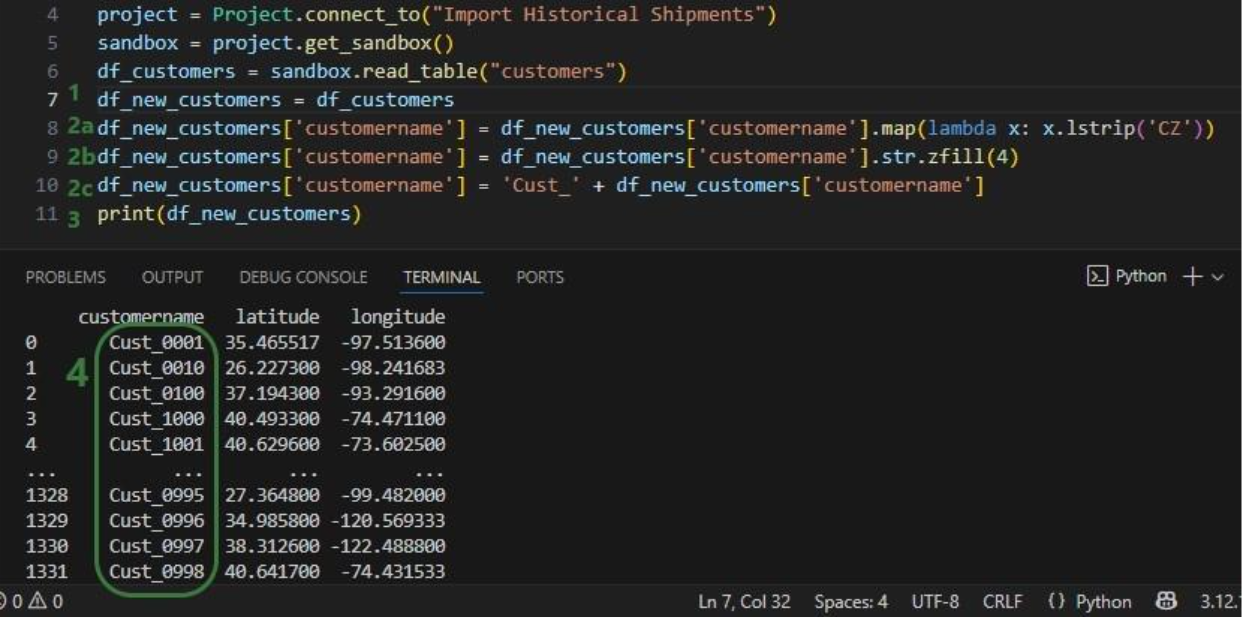

To make the customers sort in numerical order of their customer number, our goal in the next script is to update the number part of the customer names with left padded 0’s so all numbers consist of 4 digits. And while we are at it, we are also going to replace the “CZ” prefix with a “Cust_” prefix.
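The target format can be sketched in plain Python, independent of pandas or the datastar library. The helper name `format_customer_name` is hypothetical, and the sketch assumes every customer name consists of the “CZ” prefix followed by digits only:

```python
def format_customer_name(name, width=4):
    """Convert names like "CZ1" or "CZ20" to "Cust_0001" or "Cust_0020"."""
    prefix = "CZ"
    if not name.startswith(prefix):
        raise ValueError(f"unexpected customer name: {name!r}")
    number_part = name[len(prefix):]
    # zfill pads the number part with leading zeros up to the requested width
    return "Cust_" + number_part.zfill(width)


names = ["CZ1", "CZ1333", "CZ20", "CZ3"]
formatted = sorted(format_customer_name(n) for n in names)
print(formatted)
```

Once all number parts have the same length, sorting the names alphabetically gives the same order as sorting them by customer number.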

First, we will show how to access data in the project sandbox:

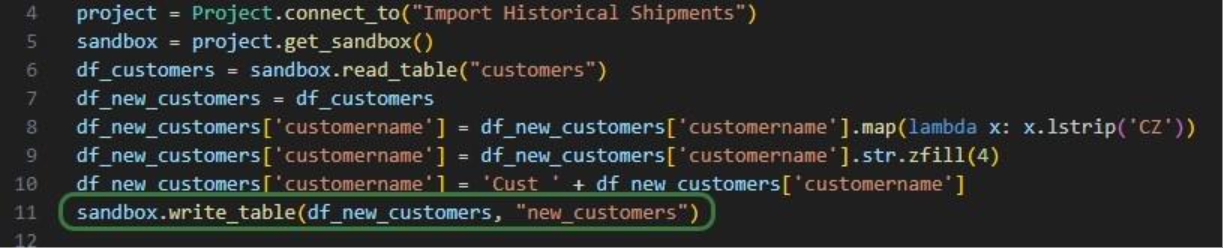

Next, we will use functionality of the pandas Python library (installed as a dependency when installing the datastar library) to transform the customer names to our desired Cust_xxxx format:

As a last step, we can now write the updated customer names back into the customers table in the sandbox. Or, if we want to preserve the data in the sandbox, we can also write to a new table as is done in the next screenshot:

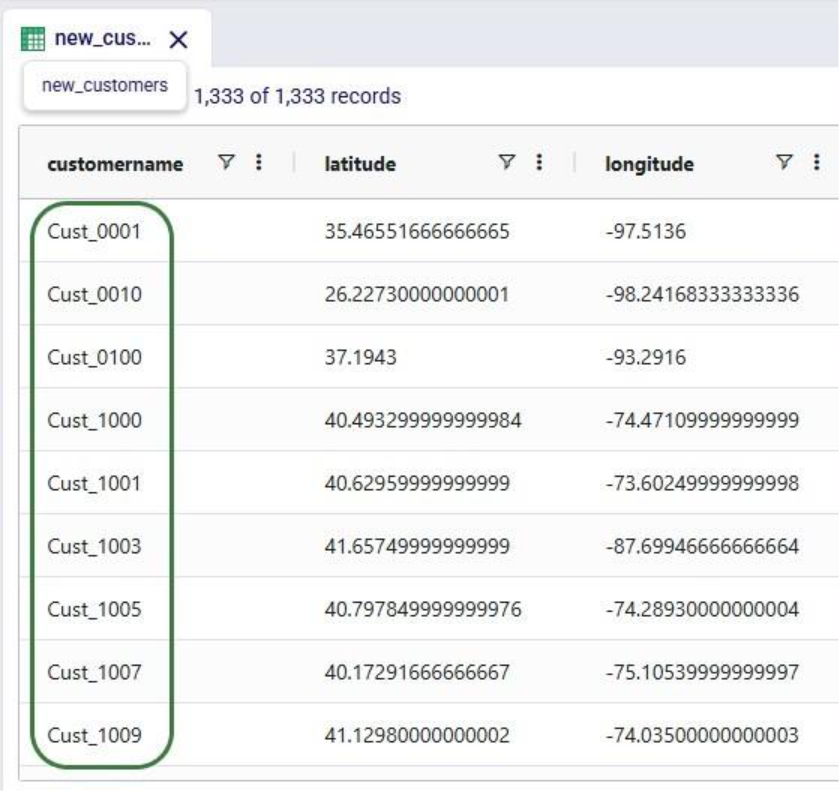

We use the write_table method to write the dataframe with the updated customer names into a new table called “new_customers” in the project sandbox. After running the script, opening this new table in DataStar shows us that the updates worked:

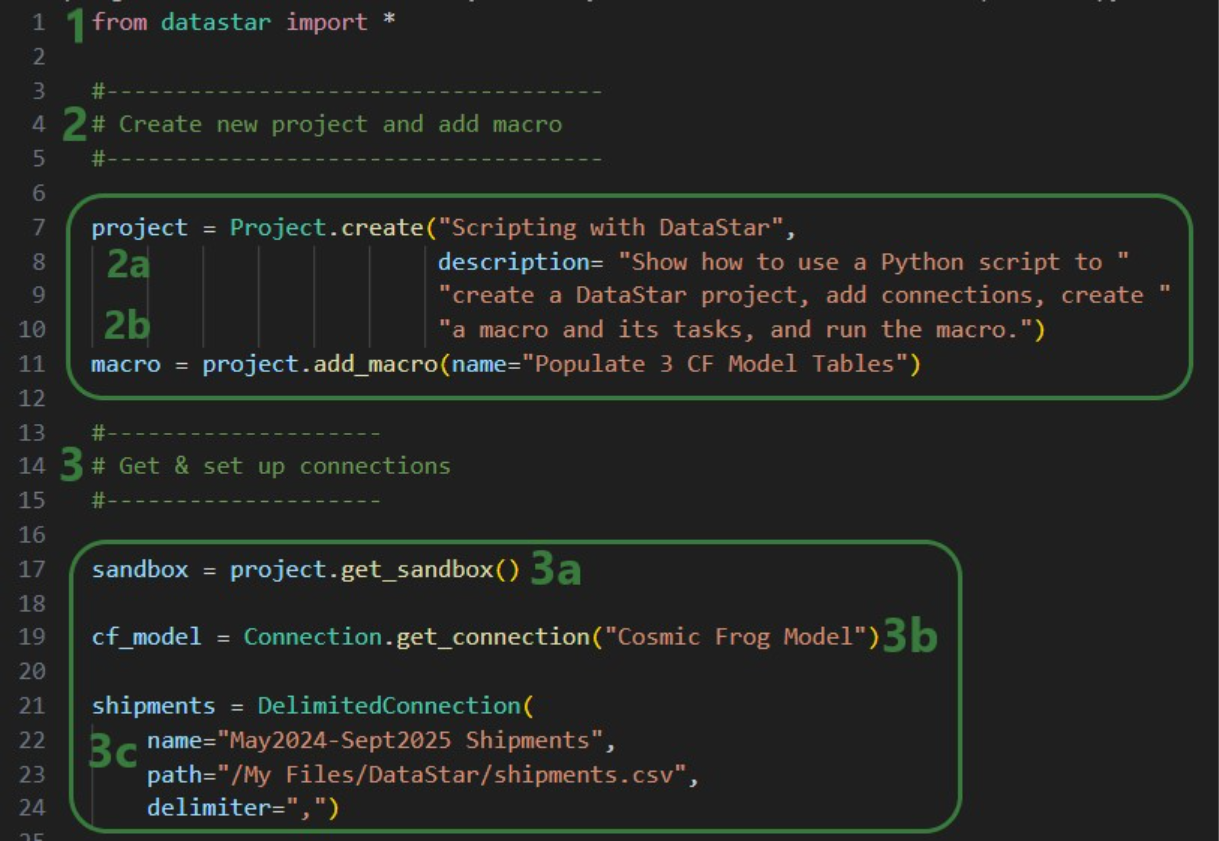

Finally, we will put everything we have covered above together in one script which will:

We will look at this script through the next set of screenshots. For those who would like to run this script themselves, and possibly use it as a starting point to modify into their own script:

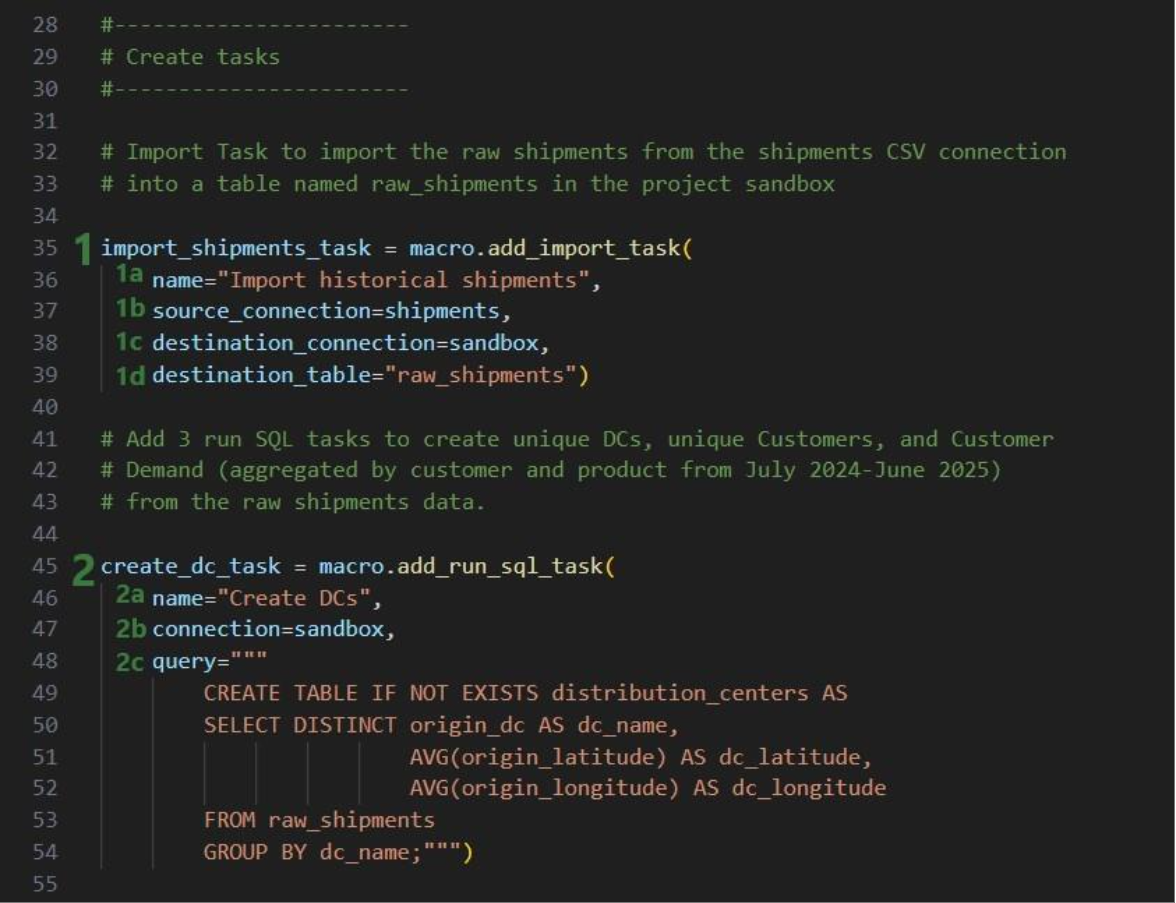

Next, we will create 7 tasks to add to the “Populate 3 CF Model Tables” macro, starting with an Import task:

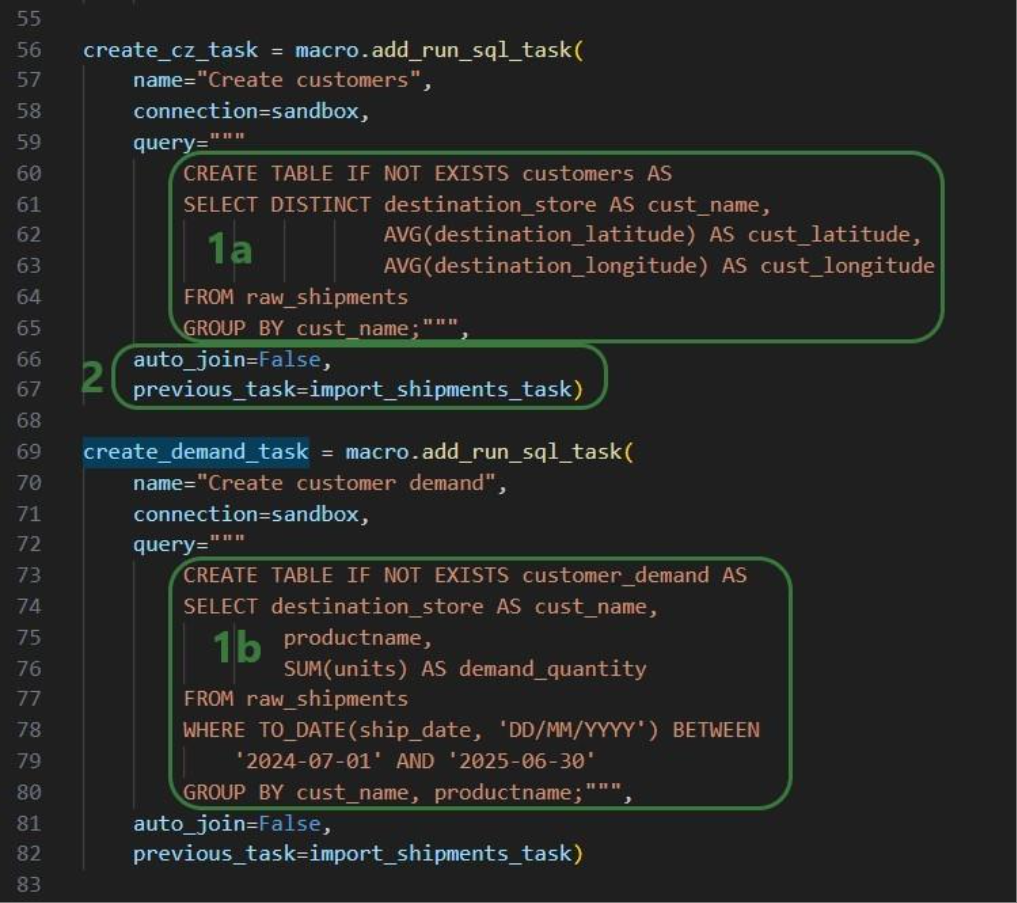

Similar to the “create_dc_task” Run SQL task, 2 more Run SQL tasks are created to create unique customers and aggregated customer demand from the raw_shipments table:

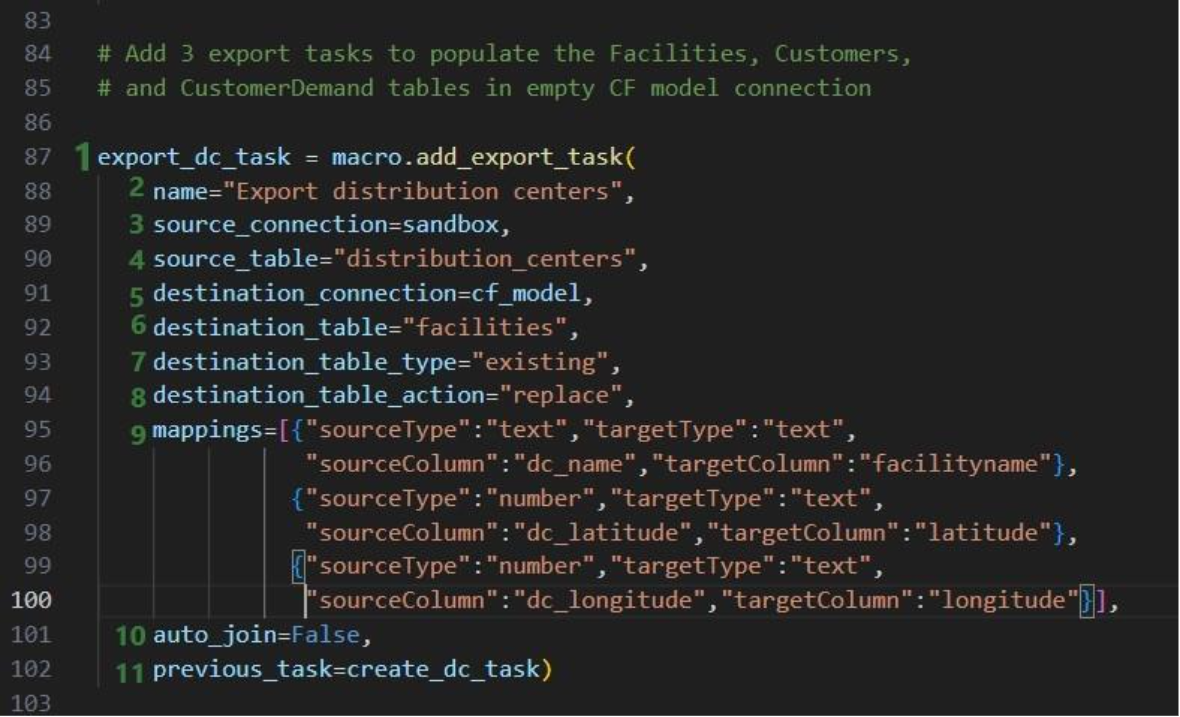

Now that we have generated the distribution_centers, customers, and customer_demand tables in the project sandbox using the 3 Run SQL tasks, we want to export these tables into their corresponding Cosmic Frog tables (facilities, customers, and customerdemand) in the empty Cosmic Frog model:

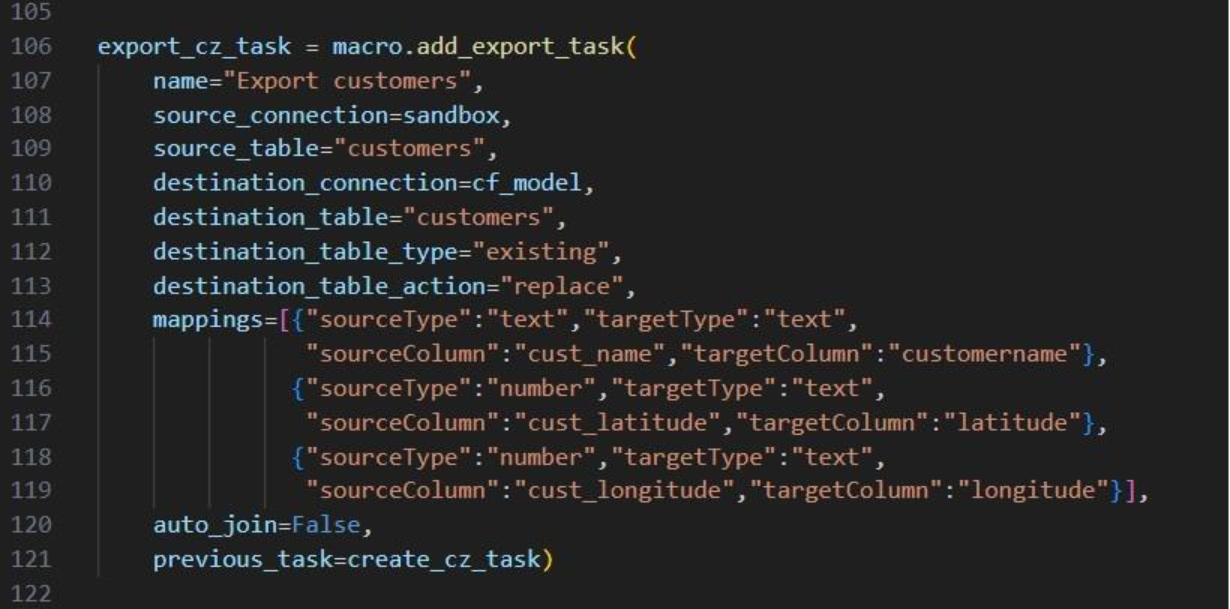

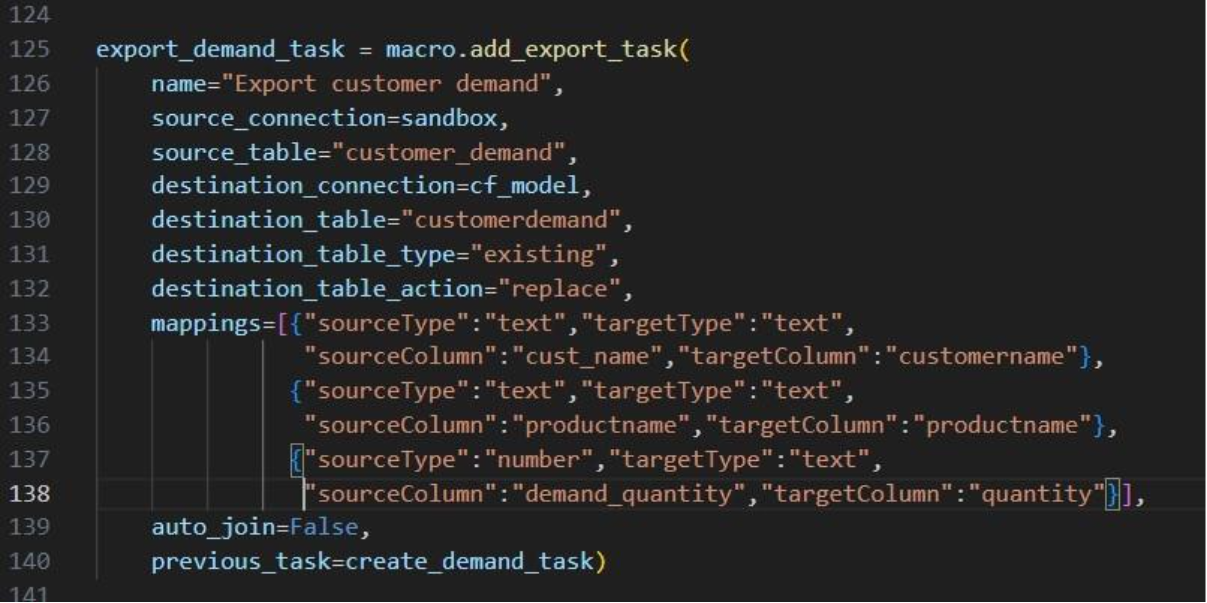
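The column mappings of an Export task are a list of dictionaries with the keys sourceType, targetType, sourceColumn, and targetColumn, as can be seen in the complete script further below. Writing these by hand is repetitive, so a small helper can build them. The following is a minimal sketch; `make_mapping` is a hypothetical convenience function and not part of the datastar library:

```python
def make_mapping(source_column, target_column,
                 source_type="text", target_type="text"):
    """Build one column-mapping dictionary in the shape used by the Export tasks."""
    return {
        "sourceType": source_type,
        "targetType": target_type,
        "sourceColumn": source_column,
        "targetColumn": target_column,
    }


# Mappings for exporting distribution_centers to the facilities table
dc_mappings = [
    make_mapping("dc_name", "facilityname"),
    make_mapping("dc_latitude", "latitude", source_type="number"),
    make_mapping("dc_longitude", "longitude", source_type="number"),
]
print(dc_mappings[1])
```

The resulting list could then be passed as the mappings argument when adding the Export task.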

The following 2 Export tasks are created in a very similar way:

This completes the build of the macro and its tasks.

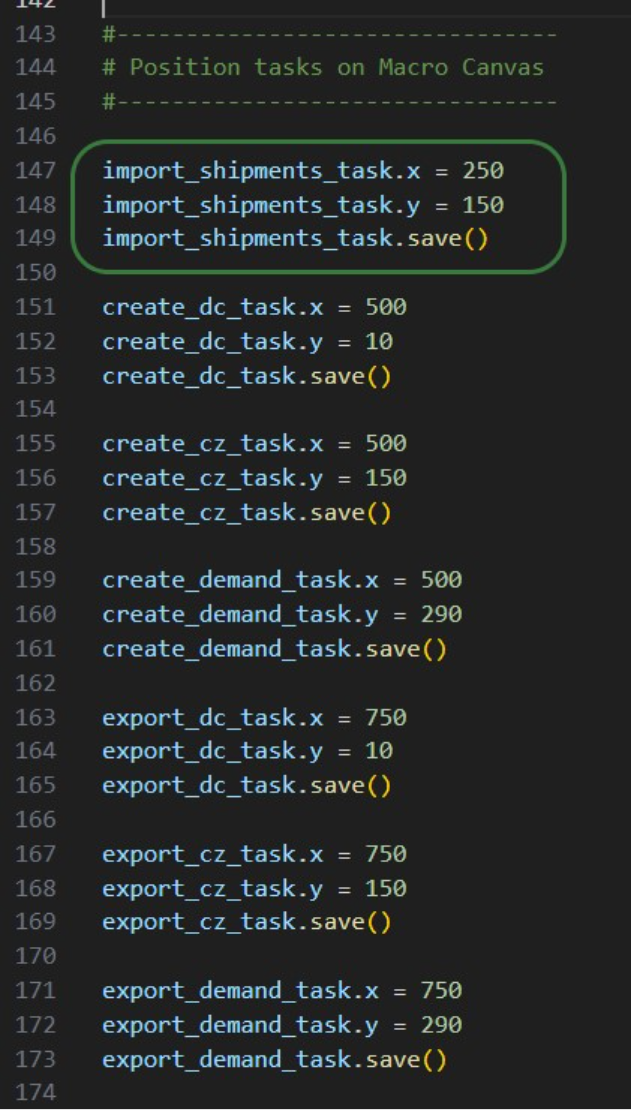

If we run it like this, the tasks will be chained in the correct way, but they will be displayed on top of each other on the Macro Canvas in DataStar. To arrange them nicely and prevent having to reposition them manually in the DataStar UI, we can use the “x” and “y” properties of tasks. Note that since we are now changing existing objects, we need to use the save method to commit the changes:

In the green outlined box, we see that the x-coordinate on the Macro Canvas for the import_shipments_task is set to 250 (line 147) and its y-coordinate to 150 (line 148). In line 149 we use the save method to persist these values.
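The coordinates used in this script follow a regular grid: columns are 250 apart, and rows are 140 apart starting at y = 10. As a sketch of how such positions could be computed rather than typed in by hand, the hypothetical helper `grid_position` below reproduces the values used for the 7 tasks. The spacing constants are simply read off from the script and are not prescribed by DataStar:

```python
COLUMN_WIDTH = 250   # horizontal spacing between task columns
ROW_HEIGHT = 140     # vertical spacing between task rows
TOP_MARGIN = 10      # y-coordinate of the first row


def grid_position(column, row):
    """Return the (x, y) canvas position for a zero-based column and row."""
    x = COLUMN_WIDTH * (column + 1)
    y = TOP_MARGIN + ROW_HEIGHT * row
    return x, y


layout = {
    "import_shipments_task": grid_position(0, 1),
    "create_dc_task": grid_position(1, 0),
    "create_cz_task": grid_position(1, 1),
    "create_demand_task": grid_position(1, 2),
    "export_dc_task": grid_position(2, 0),
    "export_cz_task": grid_position(2, 1),
    "export_demand_task": grid_position(2, 2),
}
for task_name, (x, y) in layout.items():
    print(task_name, x, y)
```

Each (x, y) pair would then be assigned to the x and y properties of the corresponding task, followed by a call to its save method.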

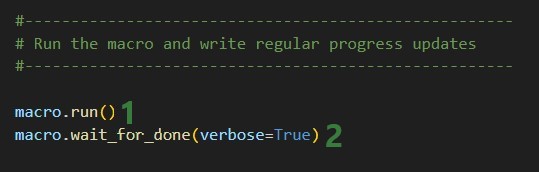

Now we can kick off the macro run and monitor its progress:

While the macro is running, messages written to the terminal by the wait_for_done method will look similar to the following:

We see 4 messages where the status was “processing” and then a final fifth one stating the macro run has completed. Other statuses one might see are “pending” when the macro has not yet started and “errored” in case the macro could not finish successfully.
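The general polling pattern behind such progress messages can be illustrated with a generic loop. This is only a sketch and not the datastar implementation of wait_for_done: the `poll_until_done` function, the `get_status` callable, and the status string "completed" are assumptions made for illustration, while “processing” and “errored” are the statuses mentioned above.

```python
import time


def poll_until_done(get_status, poll_seconds=0.0, max_polls=100, verbose=True):
    """Call get_status() repeatedly until a final status is reached."""
    final_statuses = {"completed", "errored"}  # "completed" is an assumed name
    for attempt in range(1, max_polls + 1):
        status = get_status()
        if verbose:
            print(f"poll {attempt}: status = {status}")
        if status in final_statuses:
            return status
        time.sleep(poll_seconds)
    raise TimeoutError("run did not reach a final status within max_polls")


# Stand-in for a real status lookup: 4 "processing" results, then "completed"
fake_statuses = iter(["processing"] * 4 + ["completed"])
result = poll_until_done(lambda: next(fake_statuses))
print(result)
```

In a real script the waiting is handled by wait_for_done itself; the sketch only shows why several “processing” messages can appear before the final one.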

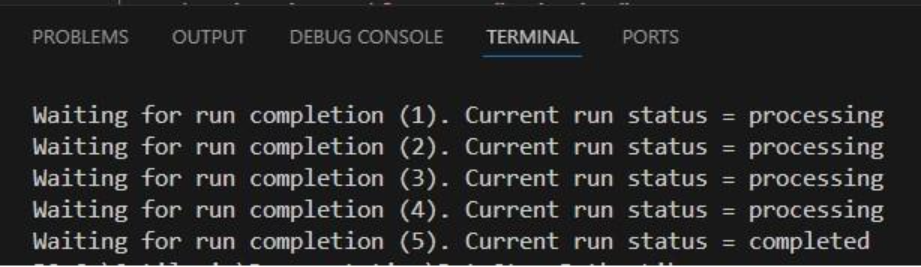

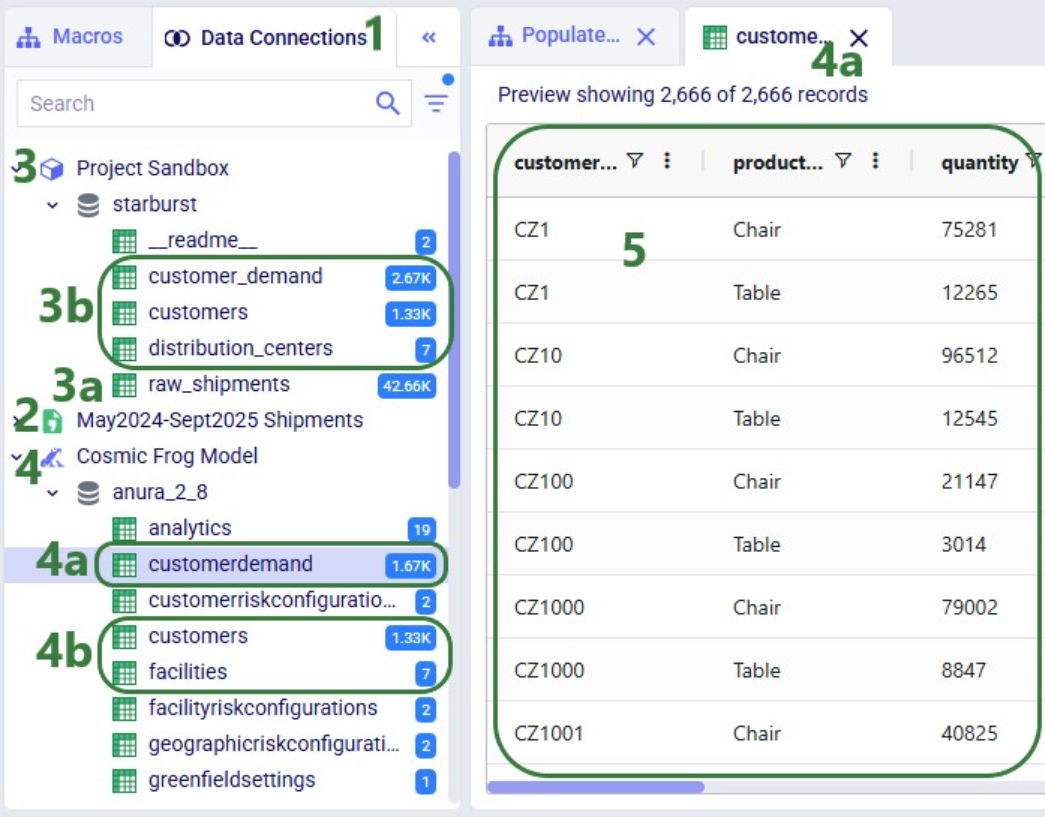

Opening the DataStar application, we can check the project and CSV connection were created on the DataStar startpage. They are indeed there, and we can open the “Scripting with DataStar” project to check the “Populate 3 CF Model Tables” macro and the results of its run:

The macro contains the 7 tasks we expect, and checking their configurations shows they are set up the way we intended.

Next, we have a look at the Data Connections tab to see the results of running the macro:

Here follows the code of each of the above examples. You can copy and paste this into your own scripts and modify them to your needs. Note that whenever names and paths are used, you may need to update these to match your own environment.

Get list of DataStar projects in user's Optilogic account and print list to terminal:

from datastar import *

project_list = Project.get_projects()

print(project_list)

Connect to the project named "Import Historical Shipments" and get the list of macros within this project. Print this list to the terminal:

from datastar import *

project = Project.connect_to("Import Historical Shipments")

macro_list = project.get_macros()

print(macro_list)

In the same "Import Historical Shipments" project, get the macro named "Import Shipments", and get the list of tasks within this macro. Print the list with task names to the terminal:

from datastar import *

project = Project.connect_to("Import Historical Shipments")

macro = project.get_macro("Import Shipments")

task_list = macro.get_tasks()

print(task_list)

Copy 3 of the 7 tasks in the "Cost Data" macro in the "Data Cleansing and Aggregation NA Model" project to a new macro "Cost Data EMEA" in a new project "Data Cleansing and Aggregation EMEA". Do this by first copying the whole macro and then removing the tasks that are not required in this new macro:

from datastar import *

# connect to project and get macro to be copied into new project

project = Project.connect_to("Data Cleansing and Aggregation NA Model")

macro = project.get_macro("Cost Data")

# create new project and clone macro into it

new_project = Project.create("Data Cleansing and Aggregation EMEA")

new_macro = macro.clone(new_project, name="Cost Data EMEA",

    description="Cloned from NA project; "

    "keep 3 transportation tasks")

# list the transportation cost related tasks to be kept and get a list

# of tasks present in the copied macro in the new project, so that we

# can determine which tasks to delete

tasks_to_keep = ["Start",

"Import Transportation Cost Data",

"Cleanse TP Costs",

"Aggregate TP Costs by Month"]

tasks_present = new_macro.get_tasks()

# go through tasks present in the new macro and

# delete if the task name is not in the "to keep" list

for task in tasks_present:

    if task not in tasks_to_keep:

        new_macro.delete_task(task)
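The keep/delete decision made in the loop above can be checked in isolation before running it against a real project. In the sketch below, the list assigned to tasks_present is a stand-in for the result of new_macro.get_tasks(), and “Example Other Task” is a made-up task name used only for illustration:

```python
tasks_to_keep = ["Start",
                 "Import Transportation Cost Data",
                 "Cleanse TP Costs",
                 "Aggregate TP Costs by Month"]

# Stand-in for new_macro.get_tasks(); "Example Other Task" is a made-up name
tasks_present = ["Start",
                 "Import Transportation Cost Data",
                 "Import General Ledger",
                 "Cleanse TP Costs",
                 "Example Other Task",
                 "Aggregate TP Costs by Month"]

# Everything that is present but not on the "to keep" list would be deleted
tasks_to_delete = [task for task in tasks_present if task not in tasks_to_keep]
print(tasks_to_delete)
```

Printing the list of tasks to delete first is a cheap way to confirm that the names in tasks_to_keep match the task names in the macro exactly.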

Copy specific task "Import General Ledger" from the "Cost Data" macro in the "Data Cleansing and Aggregation NA Model" project to the "Cost Data EMEA" macro in the "Data Cleansing and Aggregation EMEA" project. Chain this copied task to the Start task:

from datastar import *

project_1 = Project.connect_to("Data Cleansing and Aggregation NA Model")

macro_1 = project_1.get_macro("Cost Data")

project_2 = Project.connect_to("Data Cleansing and Aggregation EMEA")

macro_2 = project_2.get_macro("Cost Data EMEA")

task_to_copy = macro_1.get_task("Import General Ledger")

start_task = macro_2.get_task("Start")

copied_task = macro_2.add_task(task_to_copy,

auto_join=False,

previous_task=start_task)

Creating a CSV file connection and a Cosmic Frog Model connection:

from datastar import *

shipments = DelimitedConnection(

name="Shipment Data",

path="/My Files/DataStar/Shipments.csv",

delimiter=","

)

cf_global_sc_strategy = FrogModelConnection(

name="Global SC Strategy CF Model",

model_name="Global Supply Chain Strategy"

)

Connect directly to a project's sandbox, read data into a pandas dataframe, transform it, and write the new dataframe into a new table "new_customers":

from datastar import *

# connect to project and get its sandbox

project = Project.connect_to("Import Historical Shipments")

sandbox = project.get_sandbox()

# read the "customers" table into a pandas dataframe

df_customers = sandbox.read_table("customers")

# copy the dataframe into a new dataframe (an explicit copy, so that

# df_customers itself is not modified by the steps below)

df_new_customers = df_customers.copy()

# use pandas to change the customername column values format

# from CZ1, CZ20, etc to Cust_0001, Cust_0020, etc

df_new_customers['customername'] = df_new_customers['customername'].map(lambda x: x.lstrip('CZ'))

df_new_customers['customername'] = df_new_customers['customername'].str.zfill(4)

df_new_customers['customername'] = 'Cust_' + df_new_customers['customername']

# write the updated customers table with the new customername

# values to a new table "new_customers"

sandbox.write_table(df_new_customers, "new_customers")
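One detail worth knowing about the script above: str.lstrip('CZ') removes any leading run of the characters “C” and “Z”, not the literal prefix “CZ”. For names that consist of “CZ” followed by digits the result is the same, but str.removeprefix (available from Python 3.9) is stricter. The name "CZZ9" below is a made-up example to show the difference:

```python
name = "CZ20"
# For the regular name format both approaches give the same number part
regular_lstrip = name.lstrip("CZ")
regular_removeprefix = name.removeprefix("CZ")
print(regular_lstrip, regular_removeprefix)

odd_name = "CZZ9"
# lstrip removes every leading "C" or "Z" character
odd_lstrip = odd_name.lstrip("CZ")
# removeprefix removes only the exact prefix "CZ"
odd_removeprefix = odd_name.removeprefix("CZ")
print(odd_lstrip, odd_removeprefix)
```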

End-to-end script - create a new project and add a new macro to it; add 7 tasks to the macro to import shipments data; create unique customers, unique distribution centers, and demand aggregated by customer and product from it. Then export these 3 tables to a Cosmic Frog model:

from datastar import *

#------------------------------------

# Create new project and add macro

#------------------------------------

project = Project.create("Scripting with DataStar",

description= "Show how to use a Python script to "

"create a DataStar project, add connections, create "

"a macro and its tasks, and run the macro.")

macro = project.add_macro(name="Populate 3 CF Model Tables")

#--------------------

# Get & set up connections

#--------------------

sandbox = project.get_sandbox()

cf_model = Connection.get_connection("Cosmic Frog Model")

shipments = DelimitedConnection(

name="May2024-Sept2025 Shipments",

path="/My Files/DataStar/shipments.csv",

delimiter=",")

#-----------------------

# Create tasks

#-----------------------

# Import Task to import the raw shipments from the shipments CSV connection

# into a table named raw_shipments in the project sandbox

import_shipments_task = macro.add_import_task(

name="Import historical shipments",

source_connection=shipments,

destination_connection=sandbox,

destination_table="raw_shipments")

# Add 3 run SQL tasks to create unique DCs, unique Customers, and Customer

# Demand (aggregated by customer and product from July 2024-June 2025)

# from the raw shipments data.

create_dc_task = macro.add_run_sql_task(

name="Create DCs",

connection=sandbox,

query="""

CREATE TABLE IF NOT EXISTS distribution_centers AS

SELECT DISTINCT origin_dc AS dc_name,

AVG(origin_latitude) AS dc_latitude,

AVG(origin_longitude) AS dc_longitude

FROM raw_shipments

GROUP BY dc_name;""")

create_cz_task = macro.add_run_sql_task(

name="Create customers",

connection=sandbox,

query="""

CREATE TABLE IF NOT EXISTS customers AS

SELECT DISTINCT destination_store AS cust_name,

AVG(destination_latitude) AS cust_latitude,

AVG(destination_longitude) AS cust_longitude

FROM raw_shipments

GROUP BY cust_name;""",

auto_join=False,

previous_task=import_shipments_task)

create_demand_task = macro.add_run_sql_task(

name="Create customer demand",

connection=sandbox,

query="""

CREATE TABLE IF NOT EXISTS customer_demand AS

SELECT destination_store AS cust_name,

productname,

SUM(units) AS demand_quantity

FROM raw_shipments

WHERE TO_DATE(ship_date, 'DD/MM/YYYY') BETWEEN

'2024-07-01' AND '2025-06-30'

GROUP BY cust_name, productname;""",

auto_join=False,

previous_task=import_shipments_task)

# Add 3 export tasks to populate the Facilities, Customers,

# and CustomerDemand tables in empty CF model connection

export_dc_task = macro.add_export_task(

name="Export distribution centers",

source_connection=sandbox,

source_table="distribution_centers",

destination_connection=cf_model,

destination_table="facilities",

destination_table_type="existing",

destination_table_action="replace",

mappings=[{"sourceType":"text","targetType":"text",

"sourceColumn":"dc_name","targetColumn":"facilityname"},

{"sourceType":"number","targetType":"text",

"sourceColumn":"dc_latitude","targetColumn":"latitude"},

{"sourceType":"number","targetType":"text",

"sourceColumn":"dc_longitude","targetColumn":"longitude"}],

auto_join=False,

previous_task=create_dc_task)

export_cz_task = macro.add_export_task(

name="Export customers",

source_connection=sandbox,

source_table="customers",

destination_connection=cf_model,

destination_table="customers",

destination_table_type="existing",

destination_table_action="replace",

mappings=[{"sourceType":"text","targetType":"text",

"sourceColumn":"cust_name","targetColumn":"customername"},

{"sourceType":"number","targetType":"text",

"sourceColumn":"cust_latitude","targetColumn":"latitude"},

{"sourceType":"number","targetType":"text",

"sourceColumn":"cust_longitude","targetColumn":"longitude"}],

auto_join=False,

previous_task=create_cz_task)

export_demand_task = macro.add_export_task(

name="Export customer demand",

source_connection=sandbox,

source_table="customer_demand",

destination_connection=cf_model,

destination_table="customerdemand",

destination_table_type="existing",

destination_table_action="replace",

mappings=[{"sourceType":"text","targetType":"text",

"sourceColumn":"cust_name","targetColumn":"customername"},

{"sourceType":"text","targetType":"text",

"sourceColumn":"productname","targetColumn":"productname"},

{"sourceType":"number","targetType":"text",

"sourceColumn":"demand_quantity","targetColumn":"quantity"}],

auto_join=False,

previous_task=create_demand_task)

#--------------------------------

# Position tasks on Macro Canvas

#--------------------------------

import_shipments_task.x = 250

import_shipments_task.y = 150

import_shipments_task.save()

create_dc_task.x = 500

create_dc_task.y = 10

create_dc_task.save()

create_cz_task.x = 500

create_cz_task.y = 150

create_cz_task.save()

create_demand_task.x = 500

create_demand_task.y = 290

create_demand_task.save()

export_dc_task.x = 750

export_dc_task.y = 10

export_dc_task.save()

export_cz_task.x = 750

export_cz_task.y = 150

export_cz_task.save()

export_demand_task.x = 750

export_demand_task.y = 290

export_demand_task.save()

#-----------------------------------------------------

# Run the macro and write regular progress updates

#-----------------------------------------------------

macro.run()

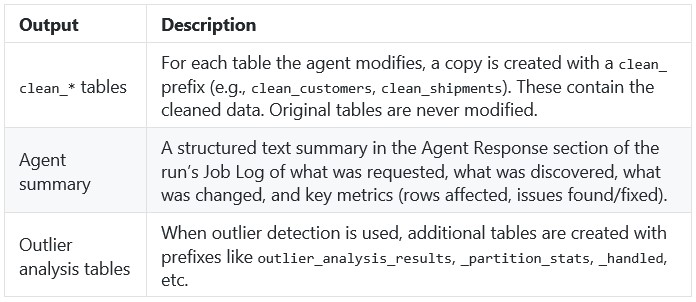

macro.wait_for_done(verbose=True)The Data Cleansing Agent is an AI-powered assistant that helps users profile, clean, and standardize their database data without writing code. Users describe what they want in plain English -- such as "find and fix postal code issues in the customers table" or "standardize date formats in the orders table to ISO" -- and the agent autonomously discovers issues, creates safe working copies of the data, applies the appropriate fixes, and verifies the results. It handles common supply chain data problems including mixed date formats, inconsistent country codes, Excel-corrupted postal codes, missing values, outliers, and messy text fields. It expects a connected database with one or more tables as input. The output is a set of cleaned copies of their tables in the database which users can immediately use for Cosmic Frog model building, reporting, or further analysis, while the original data is preserved untouched for comparison or rollback.

This documentation describes how this specific agent works and can be configured, including walking through multiple examples. Please see the “AI Agents: Architecture and Components” Help Center article if you are interested in understanding how the Optilogic AI Agents work at a detailed level.

Cleaning and standardizing data for supply chain modeling typically requires significant manual effort -- writing SQL queries, inspecting column values, fixing formatting issues one at a time, and verifying results. The Data Cleansing Agent streamlines this process by turning a single natural language prompt into a full profiling, cleaning, and verification workflow.

Key Capabilities:

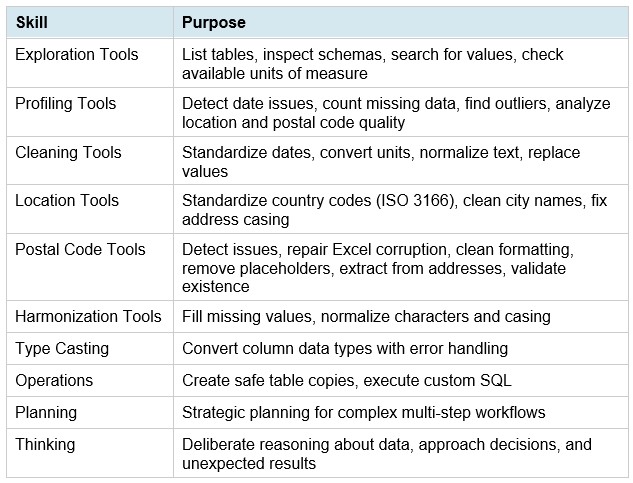

Skills:

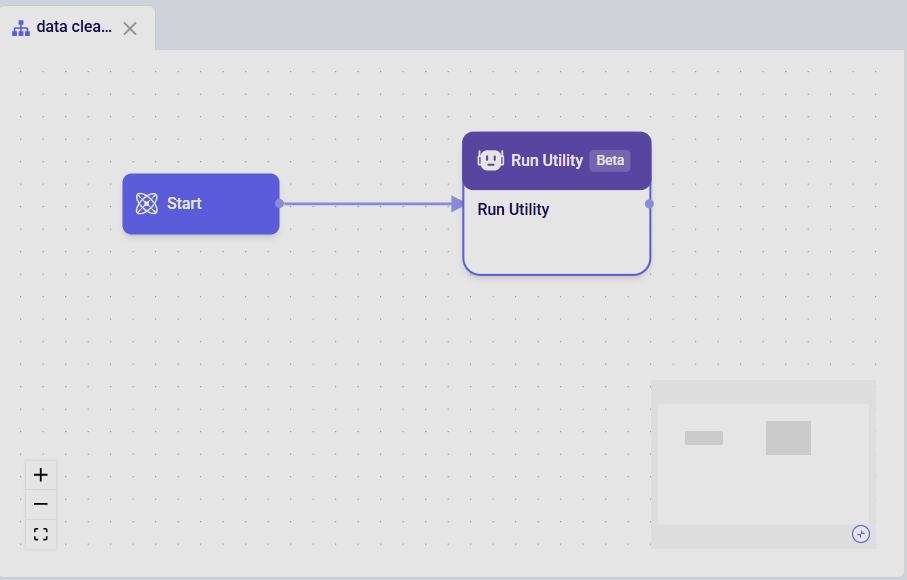

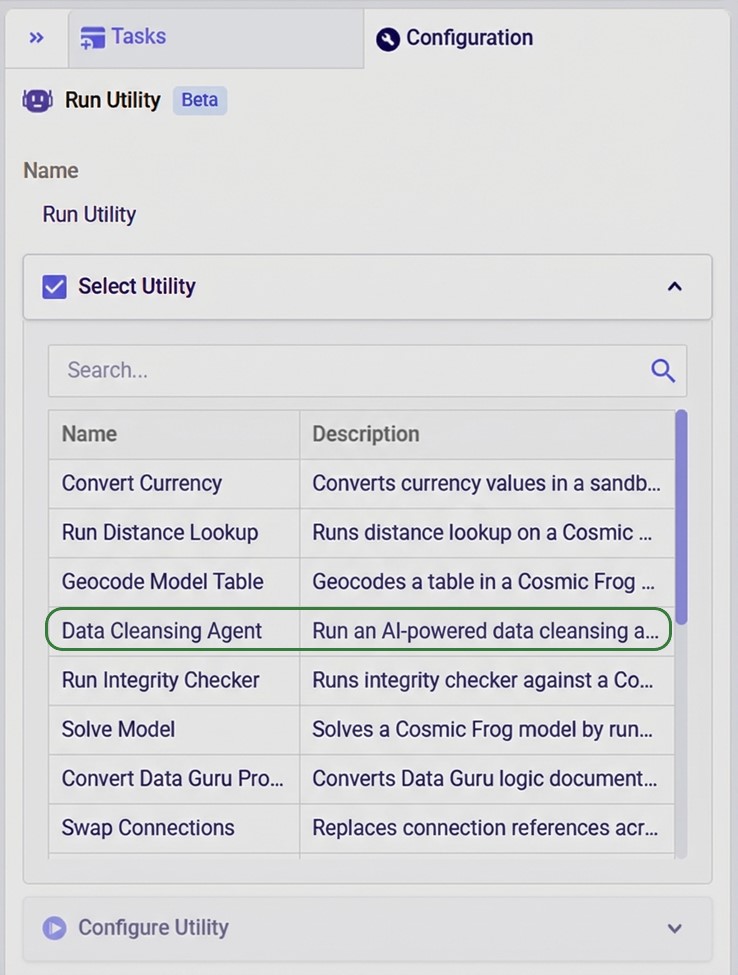

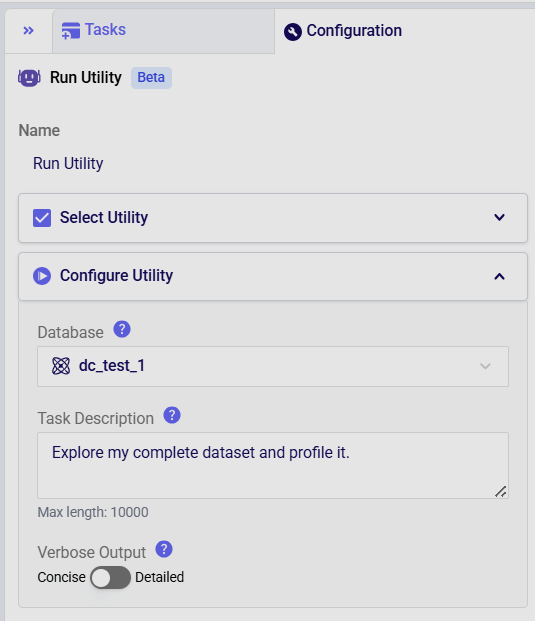

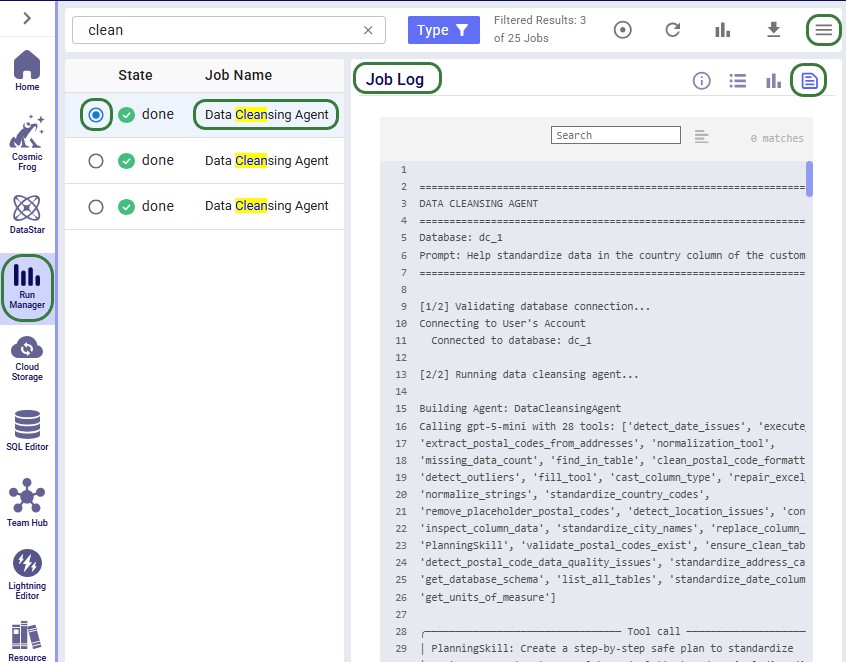

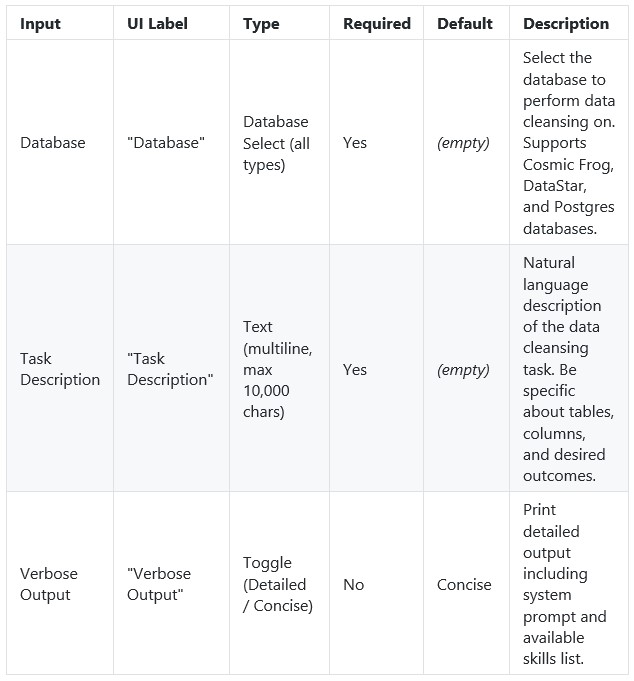

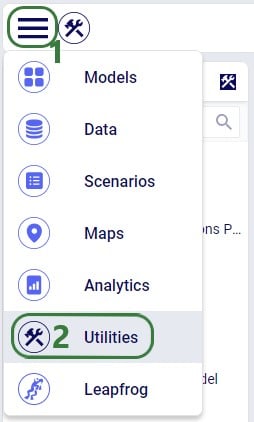

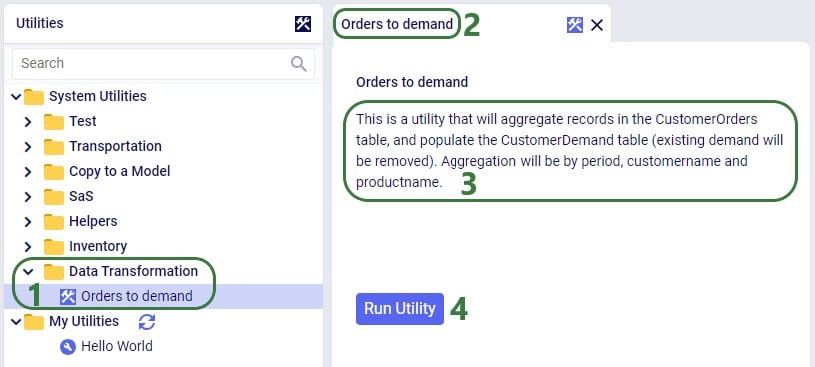

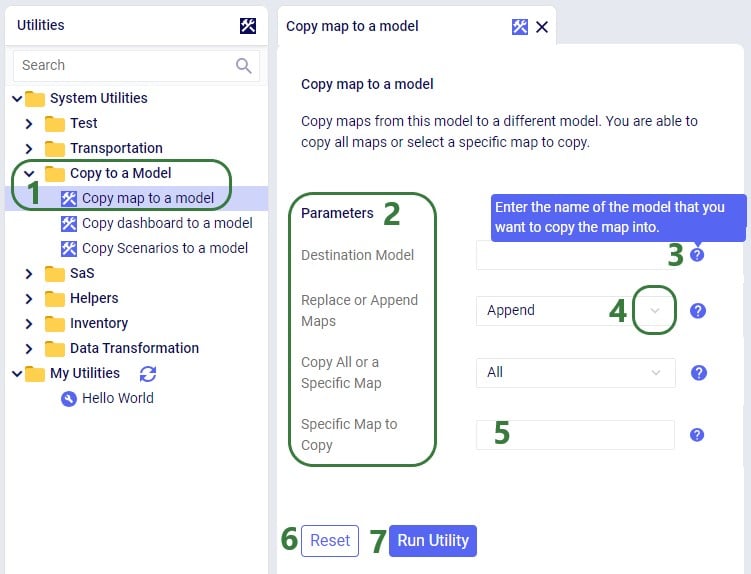

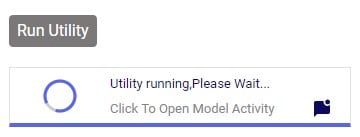

The agent can be accessed through the Run Utility task in DataStar, see also the screenshots below. The key inputs are:

The Task Description field includes placeholder examples to help you get started:

Optionally, users can:

Suggested workflow:

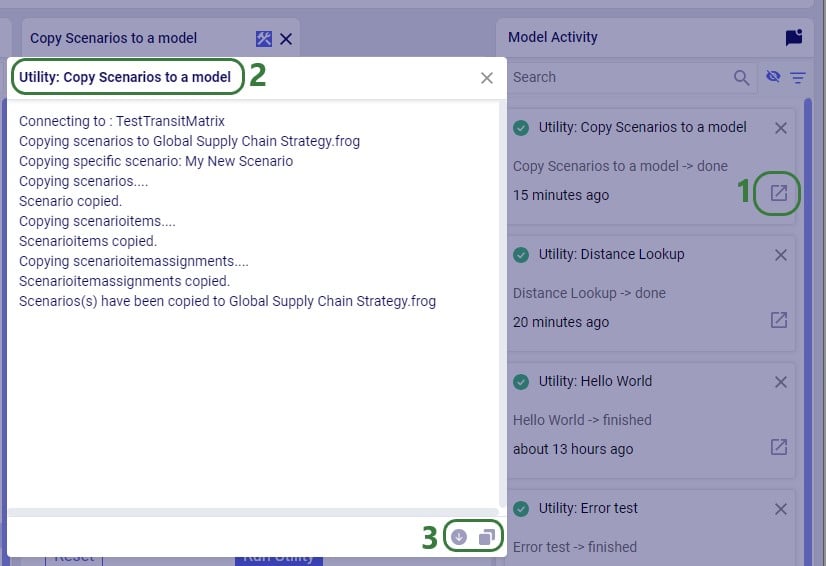

After the run, the agent produces a structured summary of everything it did, including metrics on rows affected, issues found, and issues fixed; see the next section where this Job Log is described in more detail. The cleaned data is persisted as clean_* tables in the database (e.g., clean_customers, clean_shipments).

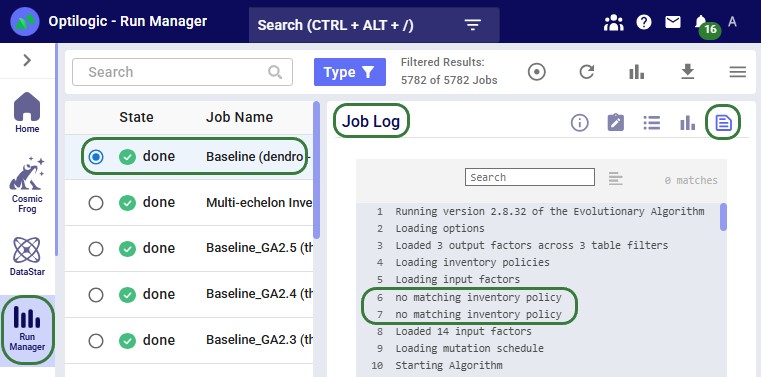

After a run completes, the Job Log in Run Manager provides a detailed trace of every step the agent took. Understanding the log structure helps users verify what happened and troubleshoot if needed. The log follows a consistent structure from start to finish.

Header

Every log begins with a banner showing the database name and the exact prompt that was submitted.

Connection & Setup

The agent validates the database connection and initializes itself with its full set of tools. If Verbose Output is set to "Detailed", the log also prints the system prompt and tool list at this stage.

Planning Phase

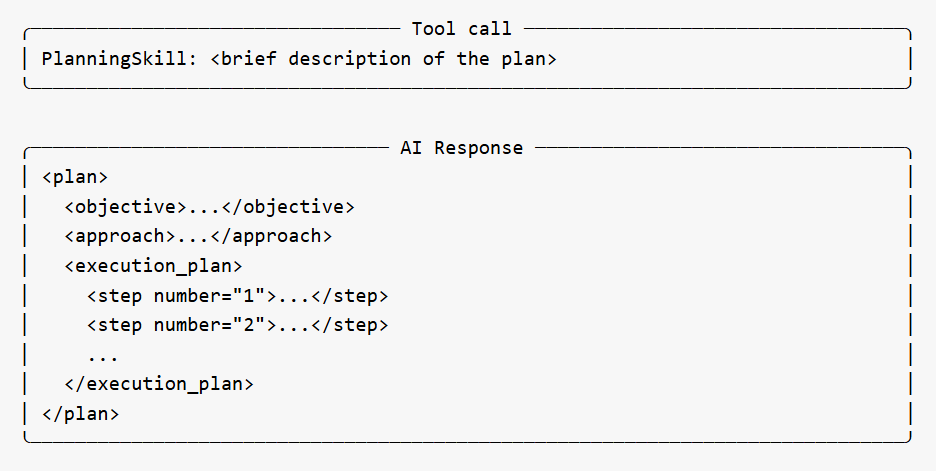

For non-trivial tasks, the agent creates a strategic execution plan before taking action. This appears as a PlanningSkill tool call, followed by an AI Response box containing a structured plan with numbered steps, an objective, approach, and skill mapping. The plan gives users visibility into the agent's intended approach before it begins working.

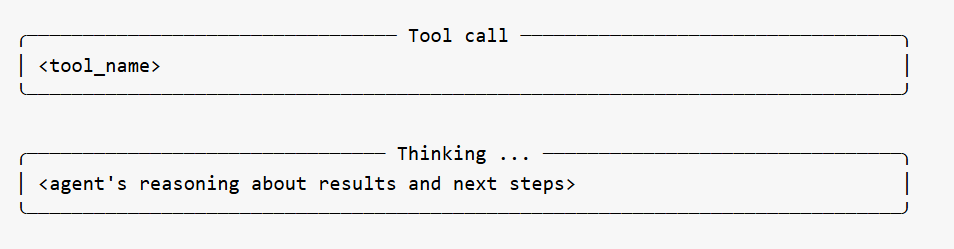

Tool Calls and Thinking

The bulk of the log shows the agent calling its specialized tools one at a time. Each tool call appears in a bordered box showing the tool name. Between tool calls, the agent's reasoning is shown in Thinking boxes -- explaining what it learned from the previous tool, what it plans to do next, and why. These thinking sections are among the most useful parts of the log for understanding the agent's decision-making.

The agent may call many tools in sequence depending on the complexity of the task. Profiling-only prompts typically involve discovery tools (schema, missing data, date issues, location issues, outliers). Cleanup prompts add transformation tools (ensure_clean_table, standardize_country_codes, standardize_date_column, etc.).

Occasionally a Memory Action Applied entry appears between steps -- this is the agent recording context for its own use and can be ignored.

Error Recovery

If the agent encounters a validation error on a tool call (e.g., a column stored as TEXT when a numeric type was expected, or a missing parameter), the log shows the error and the agent's automatic adjustment. The agent reasons about the failure in a Thinking block and retries with corrected parameters. Users do not need to intervene.

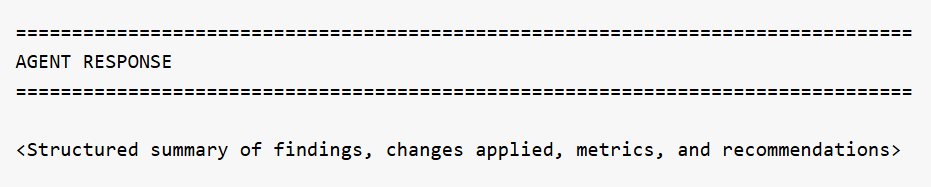

Agent Response

At the end of the run, the agent produces a structured summary of everything it discovered or changed. This is the most important section of the log for understanding outcomes:

For profiling prompts, this section reports what was found across all tables -- schema details, missing data percentages, date format inconsistencies, location quality issues, numeric anomalies, and recommendations for next steps. For cleanup prompts, it reports which tables were modified, what transformations were applied, how many rows were affected, and confirmation that originals are preserved.

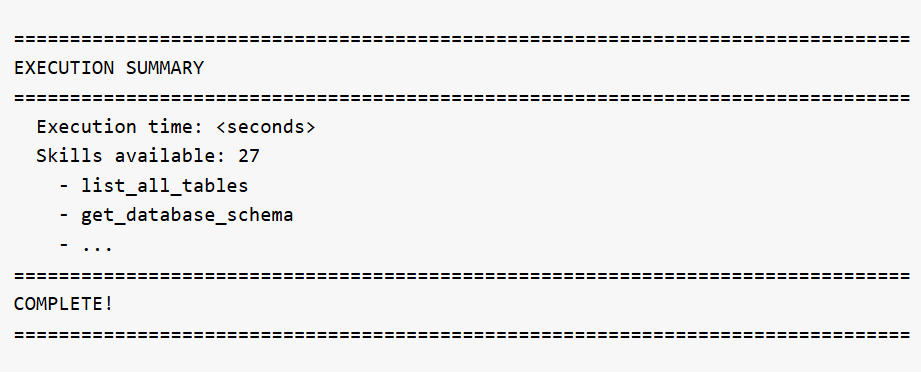

Execution Summary

The log ends with runtime statistics and the full list of skills that were available to the agent:

What the agent expects in your database:

The agent works with any tables in the selected database. There are no fixed column name requirements -- the agent discovers the schema automatically. However, for best results:

Tips & Notes

A user wants to understand what data is in their database before deciding what to clean.

Database: Supply Chain Dataset

Task Description: List all tables in the database and show their schemas

What happens: The agent calls get_database_schema for all tables and exits with a structured report.

Output:

Requested: List all tables and show schemas.

Discovered (schema 'starburst'):

...

Total: 12 tables, 405 rows, 112 columns

A user needs to clean up customer location data before using it in a Cosmic Frog network optimization model.

Database: Supply Chain Dataset

Task Description: Clean the customers table completely: standardize dates to ISO, fix postal codes (Excel corruption + placeholders), standardize country codes to alpha-2, clean city names, and normalize emails to lowercase

What the agent does:

Output:

Completed data cleansing of clean_customers table:

All changes applied to clean_customers (original customers table preserved).

The cleaned data is available in the clean_customers table in the database. The original customers table remains untouched.

A user with a 14-table enterprise supply chain database needs to clean and standardize all data before building Cosmic Frog models for network optimization and simulation.

Database: Enterprise Supply Chain

Task Description: Perform a complete data cleanup across all tables: standardize all dates to ISO, standardize all country codes to alpha-2, clean all city names, fix all postal codes, and normalize all email addresses to lowercase. Work systematically through each table.

What the agent does: The agent works systematically through all tables -- standardizing dates across 12+ tables, fixing country codes, cleaning city names, repairing postal codes, normalizing emails and status fields, detecting and handling negative values, converting mixed units to metric, validating calculated fields like order totals, and reporting any remaining referential integrity issues. This is the most comprehensive operation the agent can perform.

Output: A detailed summary covering every table touched, every transformation applied, and a final quality scorecard showing the before/after improvement.

Below are example prompts users can try, organized by category.

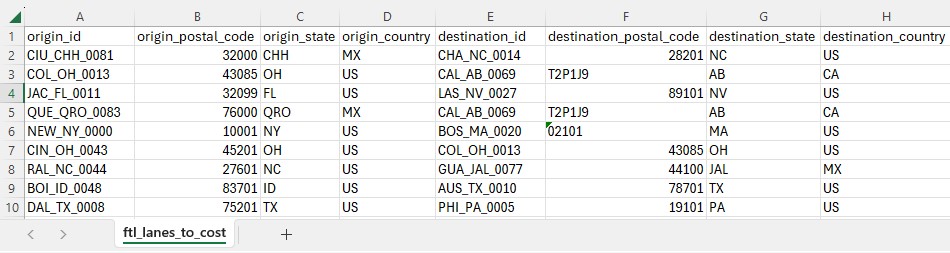

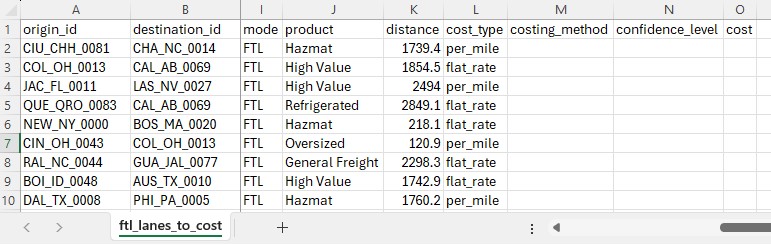

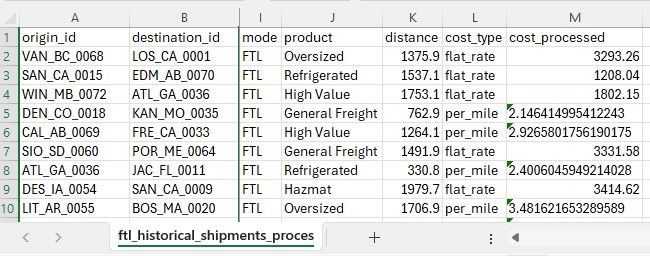

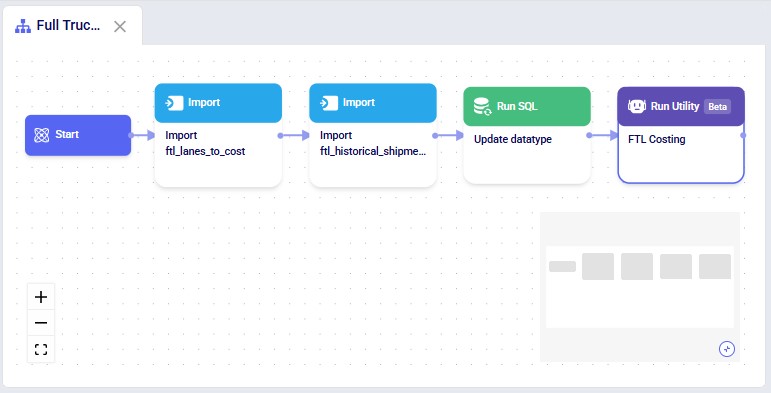

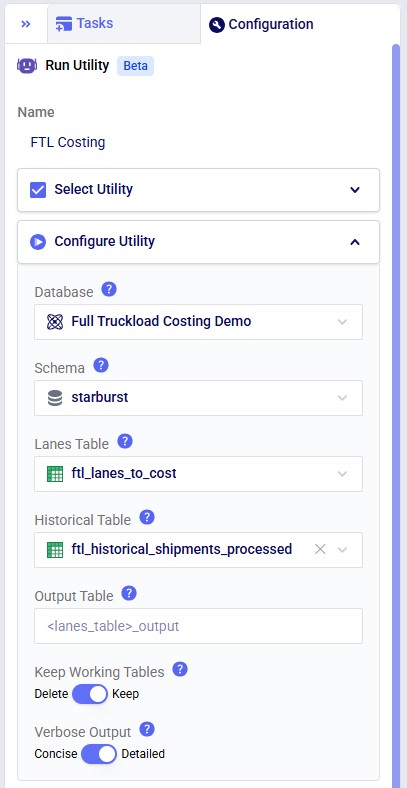

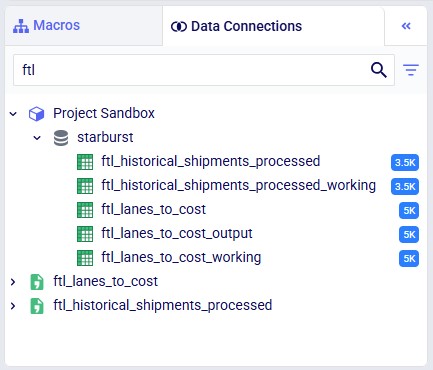

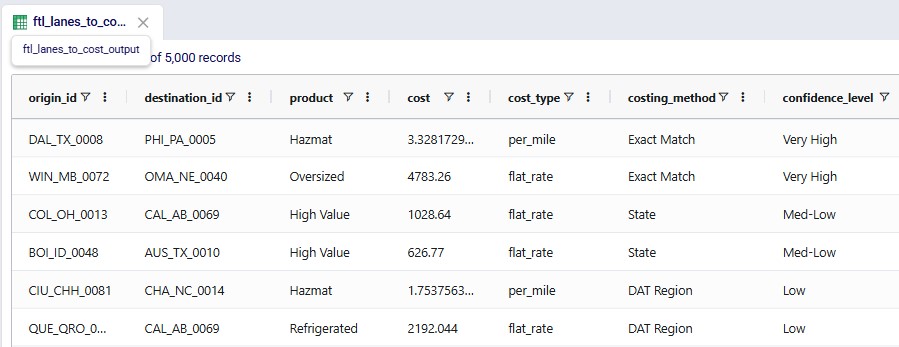

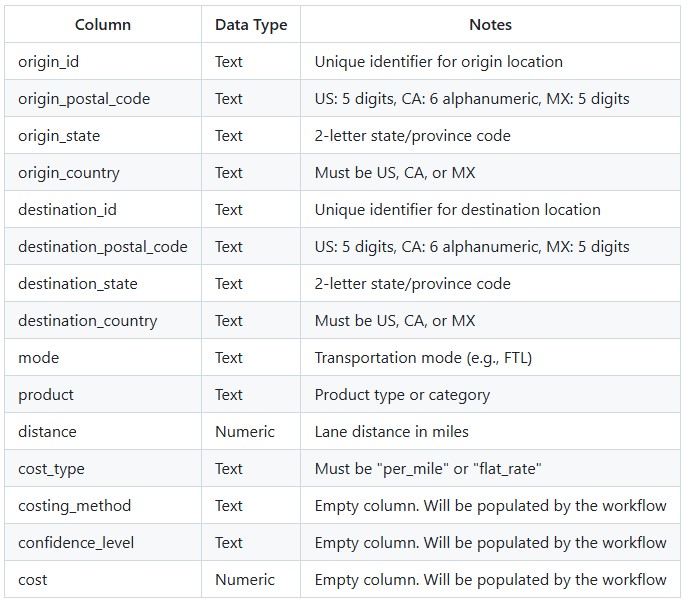

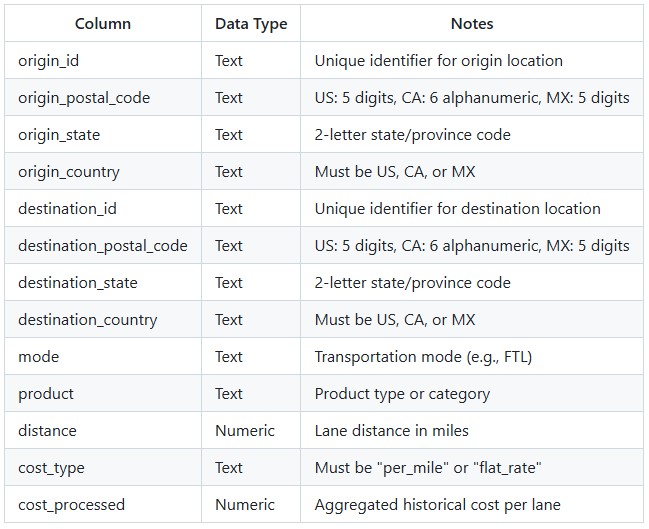

The Full Truckload Costing utility solves the common problem of missing transportation cost data when building supply chain models. Rather than requiring users to manually research rates for every lane, this workflow automatically derives costs from a company's existing shipment history. The utility expects two input tables: a lanes-to-cost table containing the origin-destination pairs that need pricing, and an optional historical shipments table containing preprocessed cost data. After running the utility, users receive a fully costed lanes table with confidence levels for each estimate.

The Full Truckload Costing Utility is available on the Resource Library, from which you can download it or copy it to your Optilogic account. Learn more about the Resource Library in the “How to use the Resource Library” Help Center article.

Sample Data

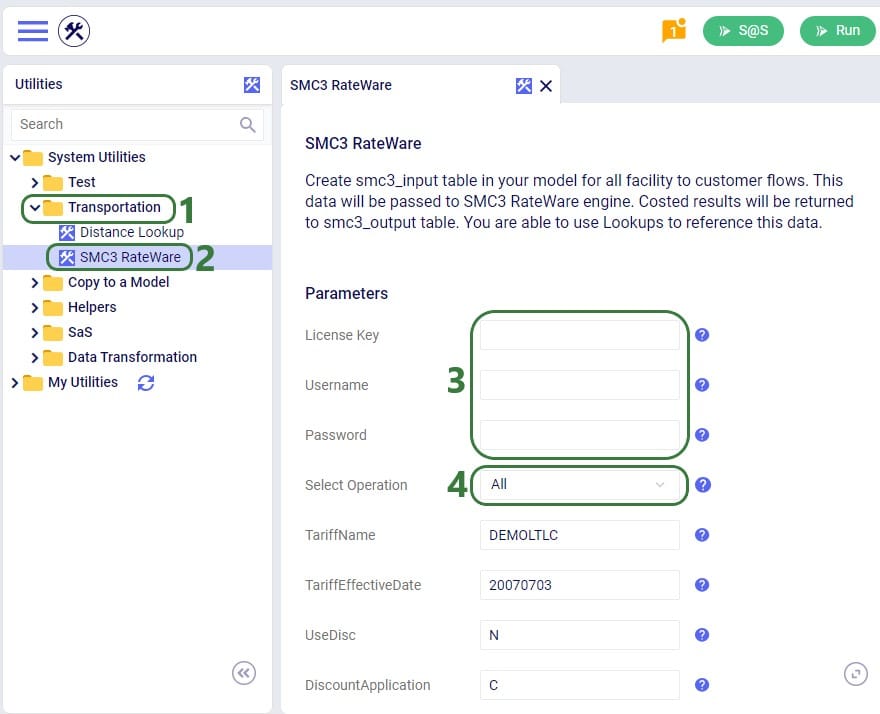

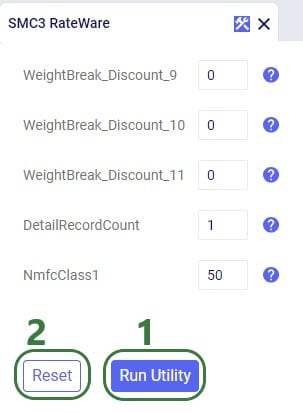

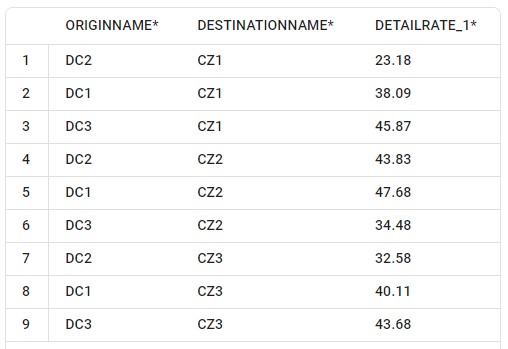

System Utility

The steps to use this utility are as follows. These are illustrated with screenshots below.

Screenshots of the steps:

Key Constraints:

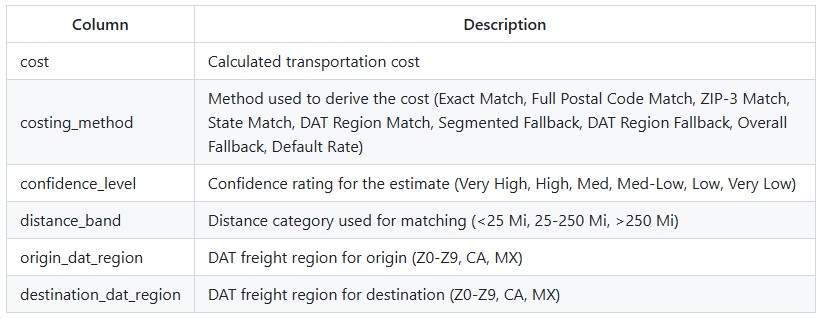

The utility produces an output table containing all lanes from the input with the following additional columns populated:

The utility processes lanes through a sequential pipeline, with each step only processing lanes that still have NULL costs:
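The idea of a sequential pipeline in which each step only touches lanes that are still uncosted can be sketched generically. This is not the utility's actual logic: the function `run_costing_pipeline`, the step names, the lane fields, and the rate values below are all hypothetical and serve only to illustrate the pattern.

```python
def run_costing_pipeline(lanes, steps):
    """Apply each (name, estimator) step in order to lanes whose cost is still None."""
    for step_name, estimator in steps:
        for lane in lanes:
            if lane["cost"] is not None:
                continue  # already costed by an earlier step
            estimate = estimator(lane)
            if estimate is not None:
                lane["cost"] = estimate
                lane["costed_by"] = step_name
    return lanes


# Hypothetical shipment history and fallback rate, for illustration only
history = {("Chicago", "Dallas"): 1850.0}
fallback_rate_per_mile = 2.0

steps = [
    ("exact lane match",
     lambda lane: history.get((lane["origin"], lane["destination"]))),
    ("distance fallback",
     lambda lane: lane["distance"] * fallback_rate_per_mile),
]

lanes = [
    {"origin": "Chicago", "destination": "Dallas", "distance": 925, "cost": None},
    {"origin": "Denver", "destination": "Boise", "distance": 800, "cost": None},
]
costed = run_costing_pipeline(lanes, steps)
for lane in costed:
    print(lane["origin"], lane["destination"], lane["cost"], lane["costed_by"])
```

Recording which step produced each cost makes it possible to attach a confidence level to every estimate afterwards.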

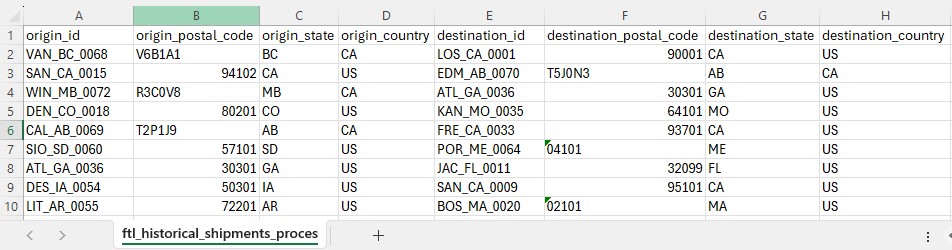

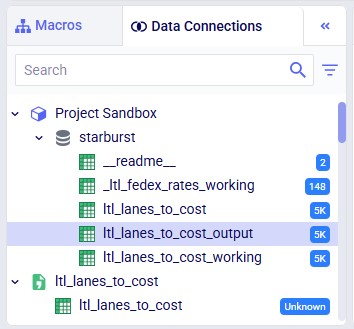

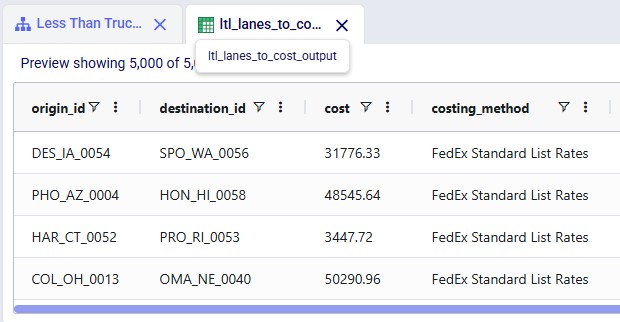

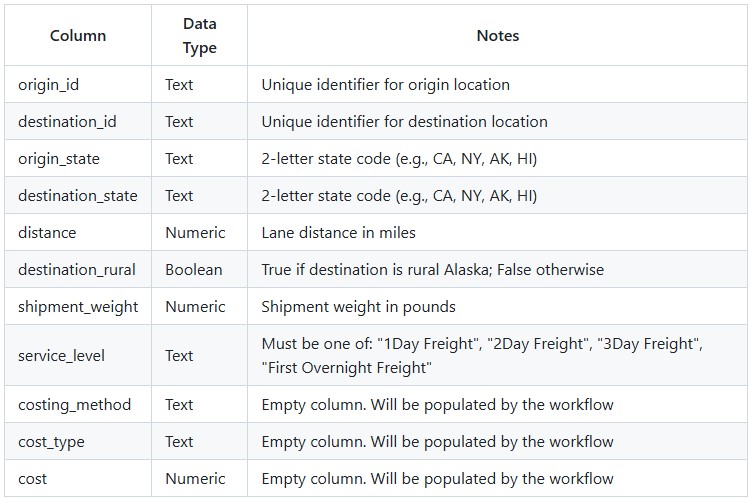

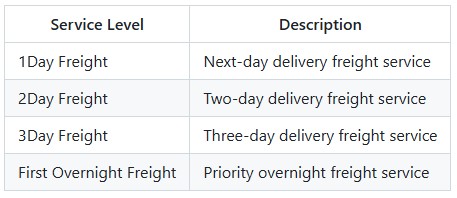

The Less Than Truckload Costing utility solves the challenge of pricing less-than-truckload shipments when carrier rate data is complex and varies by service level, distance, and weight. Rather than manually looking up rates in carrier tariff tables, this workflow automates the entire process using FedEx Express Freight standard list rates. The utility expects a lanes-to-cost table containing shipment details including origin, destination, distance, weight, and desired service level. After running the utility, users receive a fully costed table with calculated transportation costs.

The Less Than Truckload Costing Utility is available on the Resource Library, from which you can download it or copy it to your Optilogic account. Learn more about the Resource Library in the “How to use the Resource Library” Help Center article.

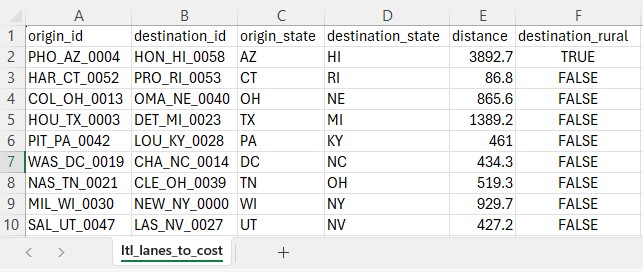

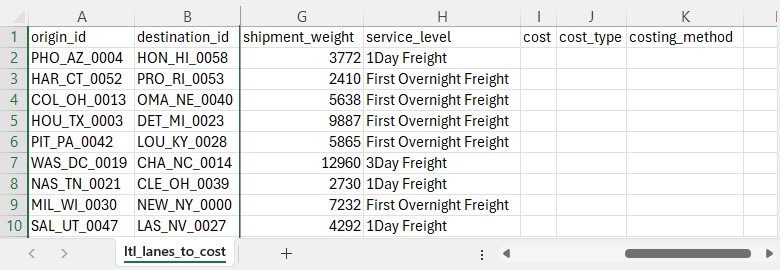

Sample Data

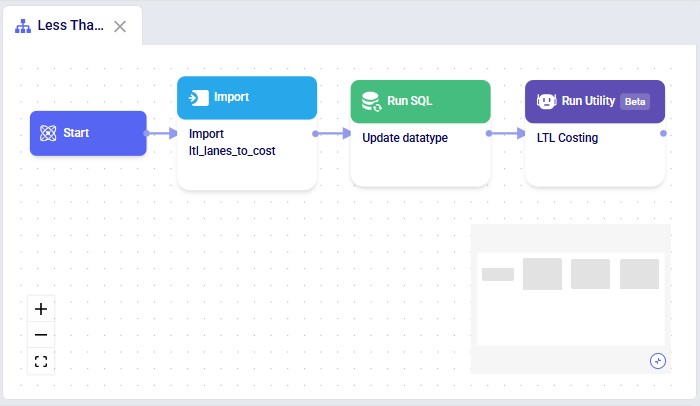

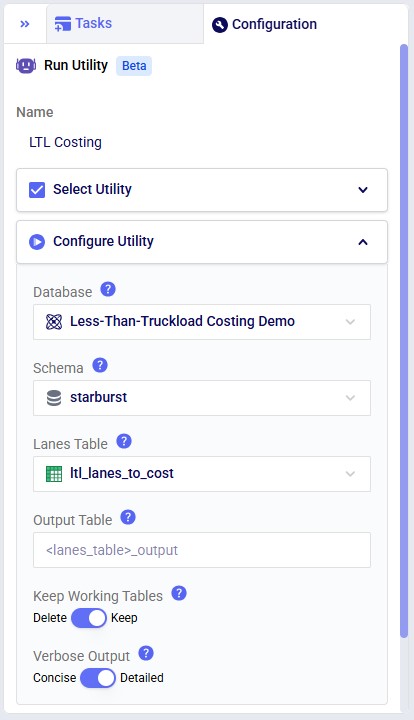

System Utility

The steps to use this utility are as follows. These are illustrated with screenshots below.

Screenshots of the steps:

Key Constraints:

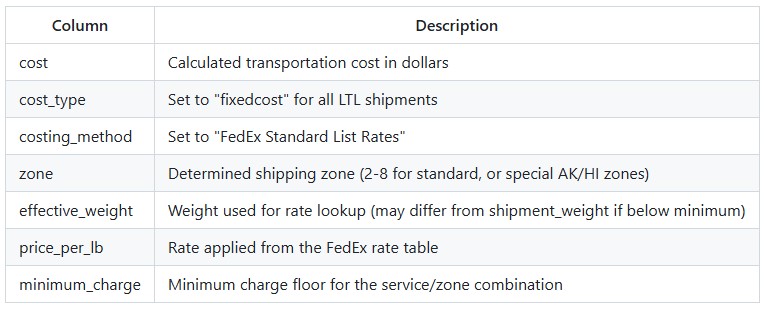

The utility produces an output table containing all lanes from the input with the following columns populated:

Zones are determined automatically based on the following priority:

Special Zones (for Alaska/Hawaii):

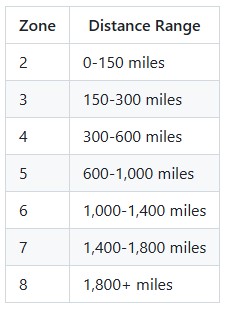

Standard Distance-Based Zones:

Costs are calculated using the following formula:

base_charge = shipment_weight x price_per_lb

final_cost = MAX(base_charge, minimum_charge)

Effective Weight: If the shipment weight is below the minimum weight for a service/zone combination, the utility uses the minimum weight band's rate but calculates the charge based on the actual shipment weight.
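The cost formula and the effective weight rule can be worked through in a few lines of Python. This is a minimal sketch: the weight bands, rates, and minimum charge below are made-up numbers and not actual carrier rates, and the function names are hypothetical.

```python
def price_for_weight(shipment_weight, bands):
    """Pick the price per lb from (min_weight, price_per_lb) bands sorted by min_weight.

    A shipment lighter than the lowest band still gets the lowest band's rate.
    """
    rate = bands[0][1]
    for min_weight, price_per_lb in bands:
        if shipment_weight >= min_weight:
            rate = price_per_lb
    return rate


def ltl_cost(shipment_weight, bands, minimum_charge):
    """final_cost = MAX(shipment_weight x price_per_lb, minimum_charge)."""
    # The charge is always based on the actual shipment weight
    base_charge = shipment_weight * price_for_weight(shipment_weight, bands)
    return max(base_charge, minimum_charge)


# Made-up bands for one service/zone combination: (min_weight, price_per_lb)
example_bands = [(150, 0.75), (500, 0.5), (1000, 0.25)]
example_minimum_charge = 120.0

light = ltl_cost(100, example_bands, example_minimum_charge)
medium = ltl_cost(600, example_bands, example_minimum_charge)
heavy = ltl_cost(2000, example_bands, example_minimum_charge)
print(light, medium, heavy)
```

In this example the 100 lb shipment is below the lowest band, so it is priced at the lowest band's rate on its actual weight (75.0), and the minimum charge of 120.0 then applies.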

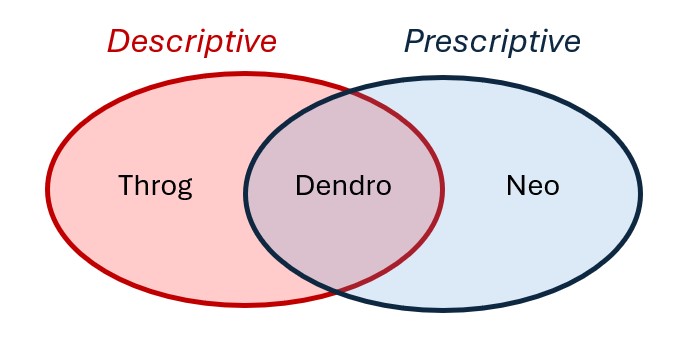

Dendro is Optilogic's simulation-optimization engine. A prime use case for Dendro is inventory policy optimization.

Simulation-optimization is a method in which simulation is leveraged to intelligently explore alternative configurations of a system. Dendro accomplishes this data-driven search by layering a genetic algorithm on top of simulation; simulation is the core of a Dendro model. Before a Dendro study can begin, a simulation model (run with the Throg engine) must be built, verified, and validated.

Simulation-optimization enables us to ask and answer questions that we cannot address in traditional network optimization or simulation alone.

In this article, we will explore:

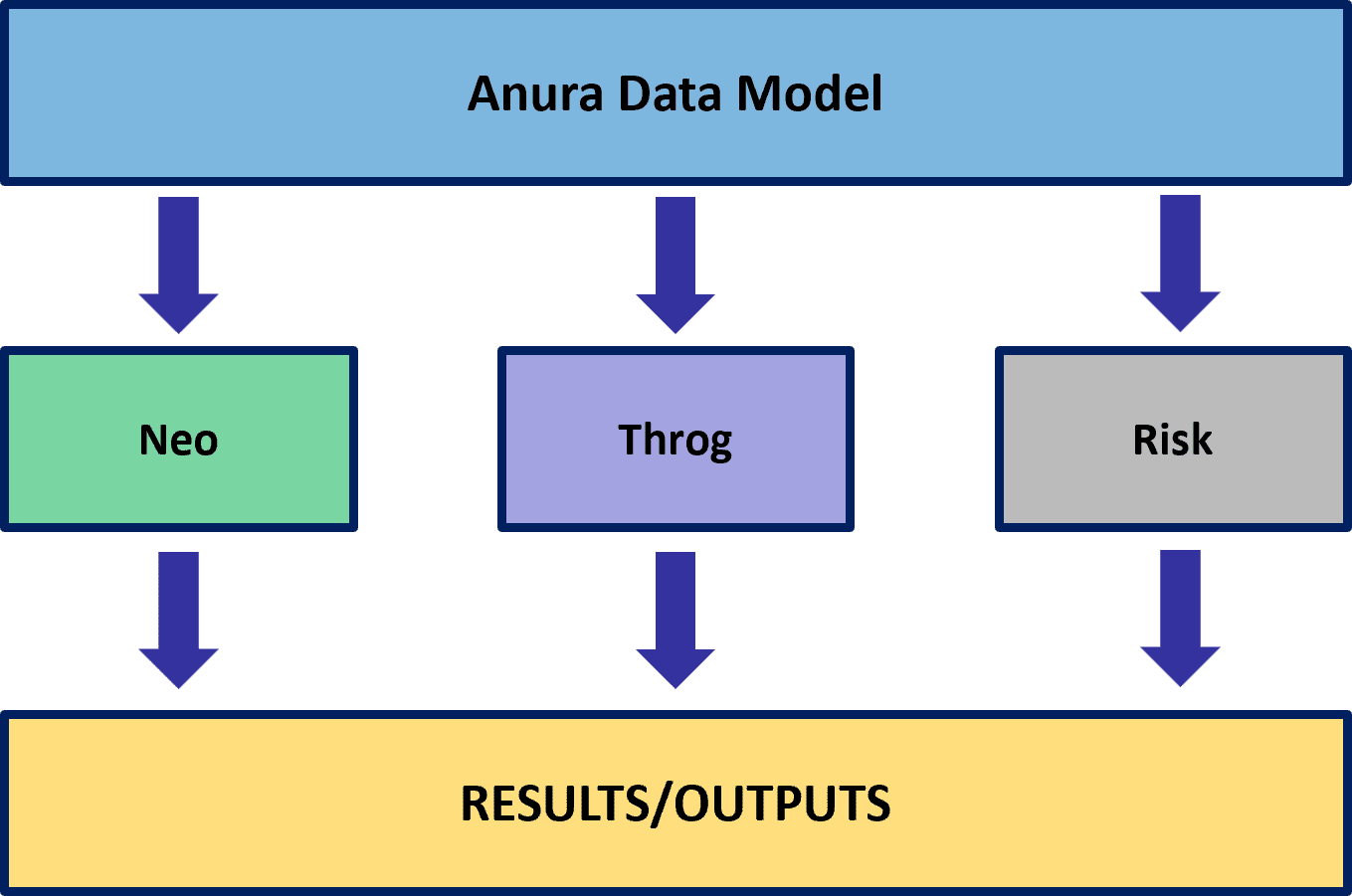

The modeling methods of network optimization (Neo), discrete event simulation (Throg), and simulation-optimization (Dendro) address different supply chain design use cases.

A prime use case for Dendro is inventory policy optimization: right-sizing inventory levels by changing inventory policy parameters (reorder points, reorder quantities, etc.) with the goal of balancing cost and service. Dendro's foundation in simulation minimizes the abstraction of cost accounting, service metrics, and the business rules surrounding inventory management. Dendro provides actionable inventory policy recommendations and data-driven evidence to support those changes.

Primary Focus: Determining where inventory should be positioned across the network.

Use Case: Network design decisions that include high-level inventory considerations -- such as stocking locations, target turns, and working capital trade-offs.

How It Handles Inventory:

Primary Focus: Testing and observing how specific inventory policies perform under realistic operational dynamics.

Use Case: Evaluate inventory control logic (e.g., reorder point, order quantity, order-up-to level) in a time-based simulation environment.

How It Handles Inventory:

Primary Focus: Finding better inventory policies that balance cost and service, combining Throg's simulation accuracy with an optimization engine.

Use Case: Adjust inventory policy parameters (e.g., reorder point, reorder quantity, policy type) to find configurations that deliver the best cost-service trade-offs.

How It Handles Inventory:

While this comparison highlights how each engine contributes to inventory management decisions, the specific use case covered in this article is inventory policy optimization -- using Dendro to balance total inventory carrying cost and network-level customer service (measured as quantity fill rate).

That said, Dendro's capabilities extend far beyond inventory. The same framework of input factors, output factors, and utility functions can be applied to a wide range of optimization problems -- and its utility components are not limited to just cost and service. Dendro can optimize for any measurable performance metric that matters to your business.

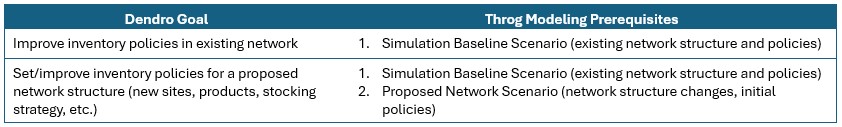

As stated above, a Dendro study cannot be initiated without first establishing a Throg simulation model; a Dendro project should be thought of as a simulation project with an added layer of analysis. Careful Throg model verification and validation are part of a simulation project and are therefore a prerequisite for a Dendro study. To learn how to set up a Throg simulation model, we recommend reviewing the following on-demand training content:

In addition, Throg-specific articles can be found here on the Help Center.

Throg scenario results serve as one piece of input for a Dendro run. Which Throg scenario to apply Dendro to depends on the goal of the Dendro study: the network structure under which the modeler wants to optimize inventory policies must be represented in a Throg scenario.

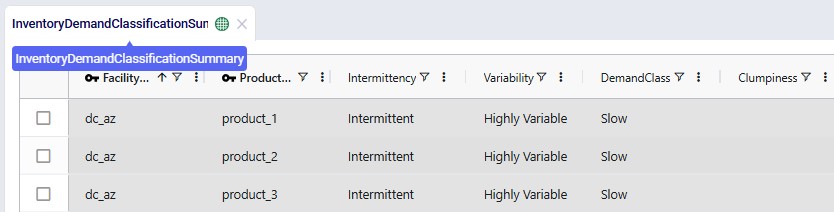

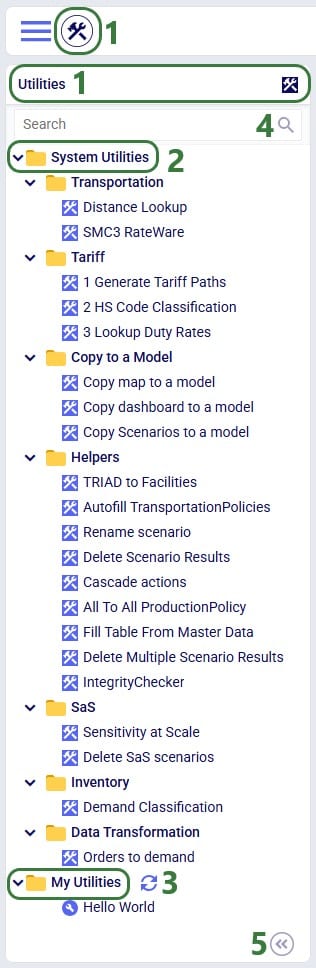

Inventory policies are required to run a simulation scenario. In some cases, a modeler may not have existing inventory policies to utilize in a baseline scenario. Similarly, simulating a proposed network structure requires first setting inventory policies for new site-product combinations. To set policies, we recommend utilizing the Demand Classification utility in Cosmic Frog's Utilities module.

Suggested inventory policies from the Inventory Policy Data Summary output table can then be employed in the Inventory Policies input table. For the sake of testing while the Throg model is being built and verified, users may find it helpful to initially leverage simple placeholder inventory policies where policies are unknown or not yet in existence (e.g., (s,S) = (0,0)).

Note: if the modeler's goal is to set policies for a proposed network structure, it is recommended to first optimize Baseline inventory policies. This enables the modeler to compare potential performance of the existing network structure (i.e., performance under optimized policies) with performance of the proposed network structure. This is especially encouraged if the Demand Classification utility was used to set Baseline inventory policies.

Before running Dendro to optimize inventory policies, the modeler must consider:

The answers to these questions will inform the design of Dendro model inputs.

Every Dendro optimization begins with a well-defined model foundation and input configuration.

This configuration tells Dendro three essential things:

Together, these define the search space and fitness criteria that drive optimization.

Dendro builds directly on your validated Throg simulation model, which serves as the environment for its optimization runs.

All Throg input tables -- facilities, customers, products, and policies -- are carried into Dendro.

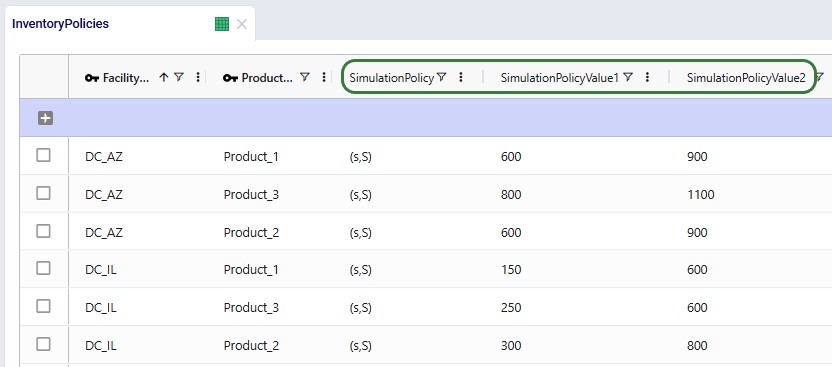

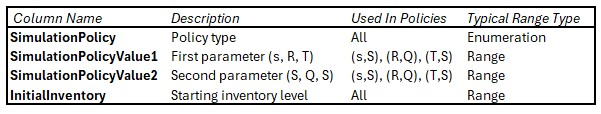

For inventory-focused optimizations, the Inventory Policies input table is most critical.

It defines policy types and parameters such as reorder points and order-up-to levels. These become natural candidates for Dendro input factors.

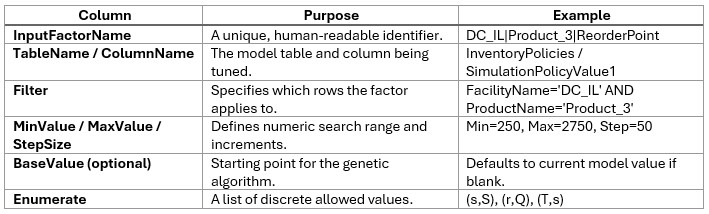

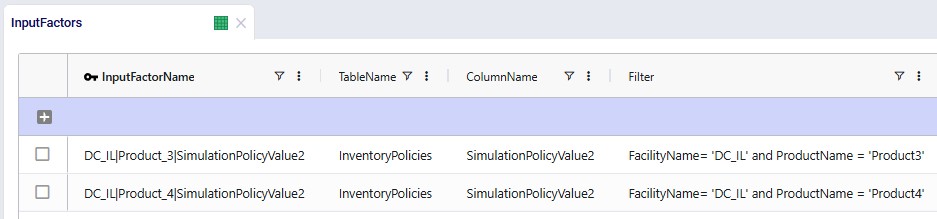

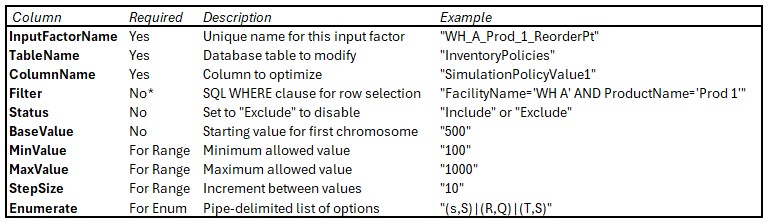

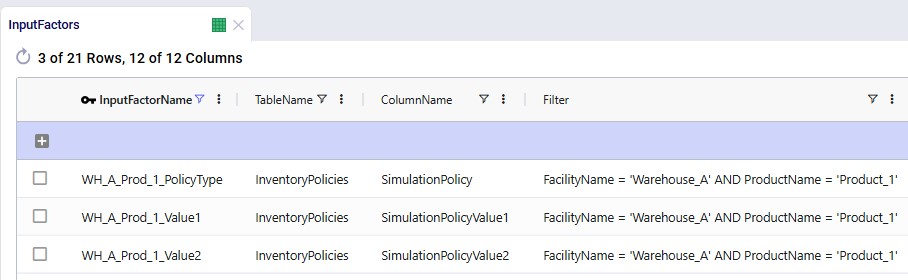

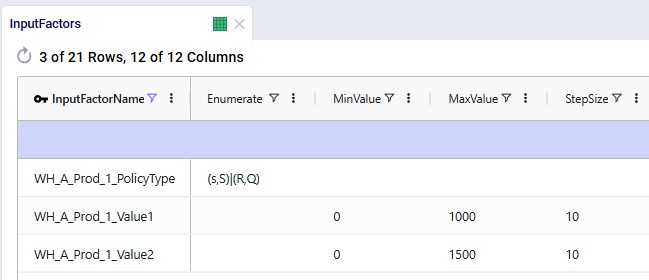

The Input Factors input table tells Dendro which parameters it can adjust during optimization. Each input factor represents a decision lever -- for instance, a reorder point or a policy type -- that Dendro will tune to seek better outcomes.

The search space defines both the breadth (how wide Dendro can explore) and the granularity (how detailed the exploration is).

The goal is a "computationally tractable search space" -- broad enough for Dendro to discover impactful alternatives but focused enough to identify patterns efficiently.
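To get a feel for how breadth and granularity together drive the size of the search space, the following minimal sketch counts the candidate combinations for a few input factors. The factor names, ranges, and step sizes are made up for illustration; they are not Dendro column names or defaults.

```python
from math import prod


def candidate_count(minimum: int, maximum: int, step: int) -> int:
    """Number of values in [minimum, maximum] when moving in increments of step."""
    if step <= 0 or maximum < minimum:
        raise ValueError("step must be positive and maximum must be >= minimum")
    return (maximum - minimum) // step + 1


# Hypothetical input factors: (name, minimum, maximum, step).
factors = [
    ("DC_1 reorder point", 100, 500, 50),        # 9 candidate values
    ("DC_1 order-up-to level", 500, 1500, 100),  # 11 candidate values
    ("DC_2 reorder point", 100, 500, 50),        # 9 candidate values
]

# Breadth (min/max) and granularity (step) multiply across factors.
search_space_size = prod(
    candidate_count(minimum, maximum, step) for _, minimum, maximum, step in factors
)
print(search_space_size)  # 9 * 11 * 9 = 891
```

Halving every step size in this example grows the count to 17 × 21 × 17 = 6,069 combinations, which shows how quickly finer granularity enlarges the space Dendro has to explore.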

Learn all about input factors in the "Dendro: Input Factors Guide".

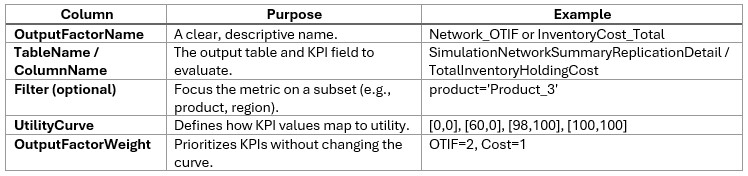

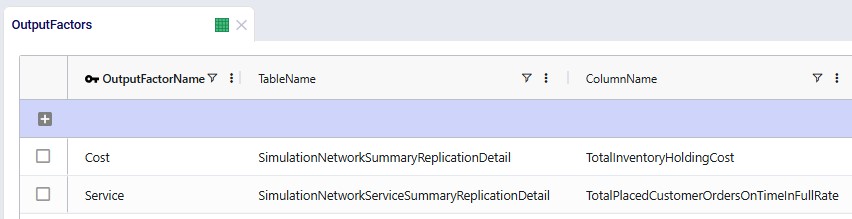

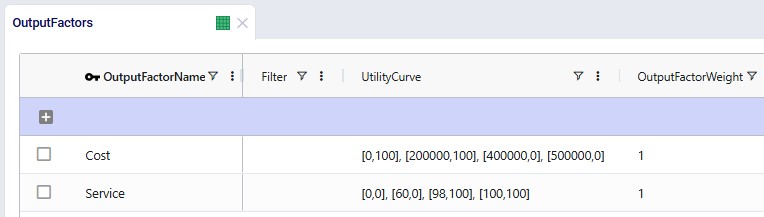

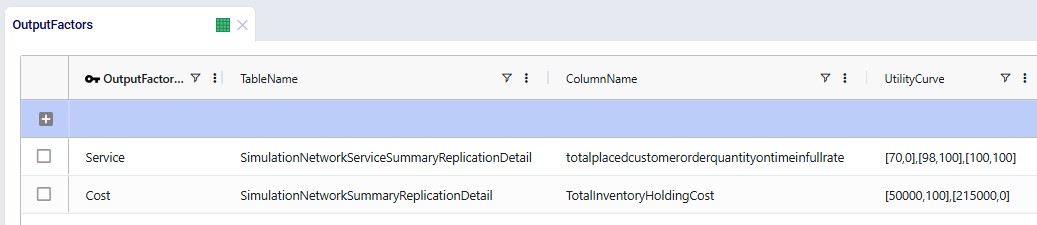

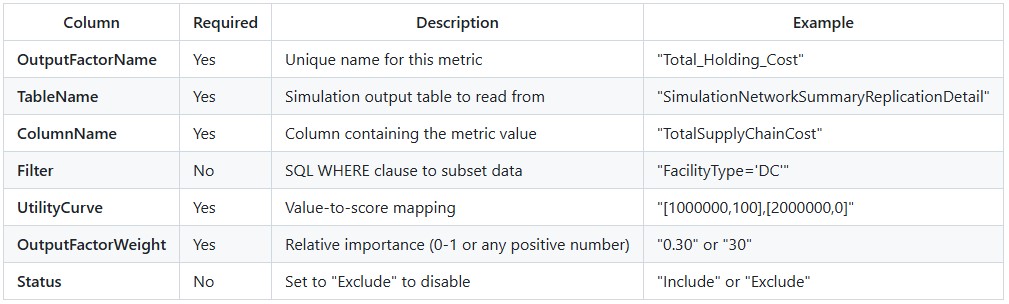

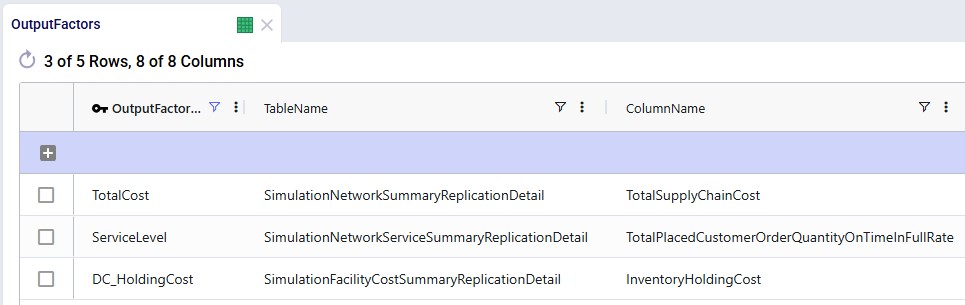

Once Dendro knows what it can vary, it needs to know how to judge success. The Output Factors input table defines the KPIs Dendro will use to evaluate each scenario and how they are scored.

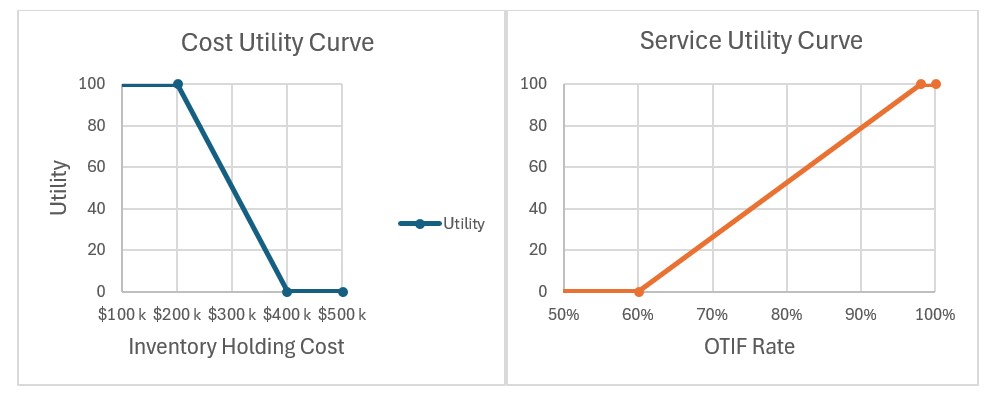

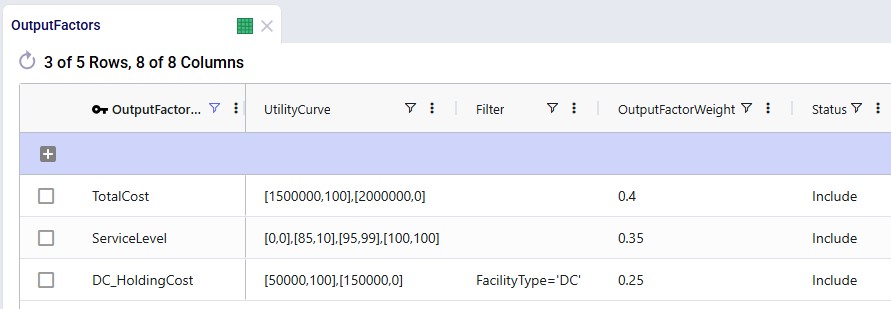

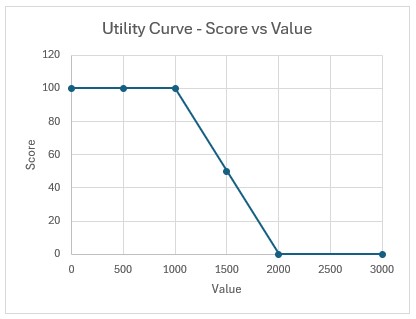

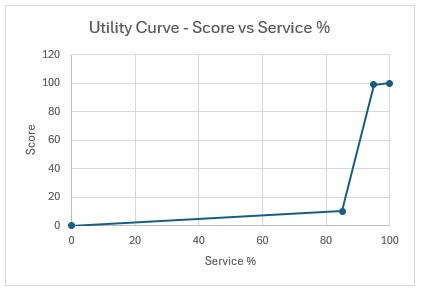

Utility curves express your business preferences:

Examples:

Tip: Curves should span the expected simulation range (e.g., 5th-95th percentile of early results). Dendro will stretch the utility curves if results fall outside the range, which can distort scoring.

Weights dictate how much influence each KPI has in Dendro's overall fitness score calculation.

Learn more about output factors in the "Dendro: Output Factors Guide".

A well-scoped input configuration defines the playing field for Dendro's optimization.

By combining realistic input ranges, balanced KPI scoring, and robust run settings, you enable Dendro to explore intelligently, finding high-performing, data-backed configurations without unnecessary computation.

Dendro is built to explore a wide range of supply chain configurations using your verified and validated Throg simulation scenario(s) as the base. Running a Dendro job is straightforward, but there are a few important steps and best practices to keep in mind to ensure smooth operation.

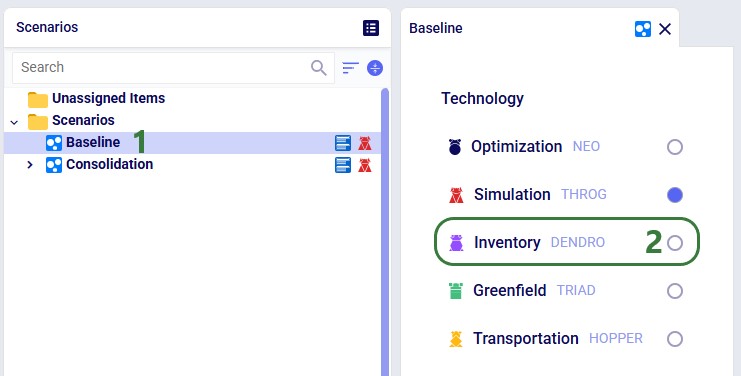

To get started:

    INSERT INTO anura_2_8.modelrunoptions (
        datatype,
        description,
        option,
        status,
        technology,
        uidisplaycategory,
        uidisplayname,
        uidisplayorder,
        uidisplaysubcategory,
        value)
    VALUES (
        'double',
        'Time limit (seconds) on individual Dendro scenarios',
        'DendroTimeout',
        'Include',
        '[DENDRO]',
        'Basic',
        'Dendro Timeout',
        '',
        '',
        '3600');

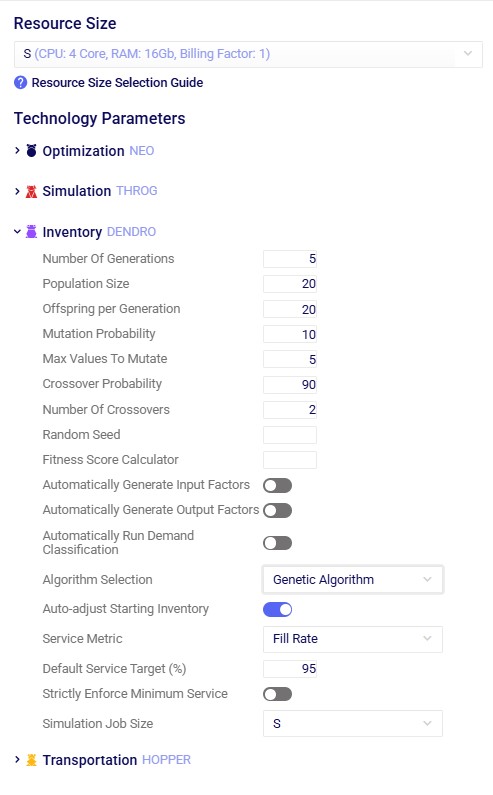

The technology parameters define how Dendro explores the solution space -- including how many scenarios to generate, how they evolve, and how long each simulation may run. As mentioned above, the default values are typically sufficient for a first run. The following are the main parameters that users may want to update in case their defaults are not suitable.

Key Settings

See also more detailed explanations of all Dendro technology parameters here.

Tuning Guidance

Use these principles to fine-tune your technology parameters:

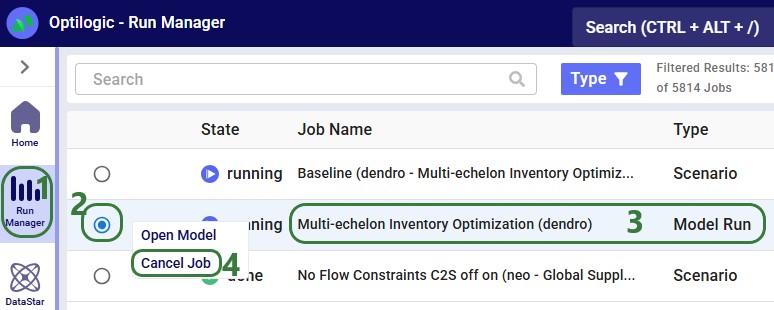

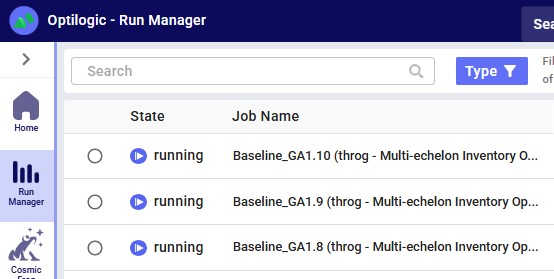

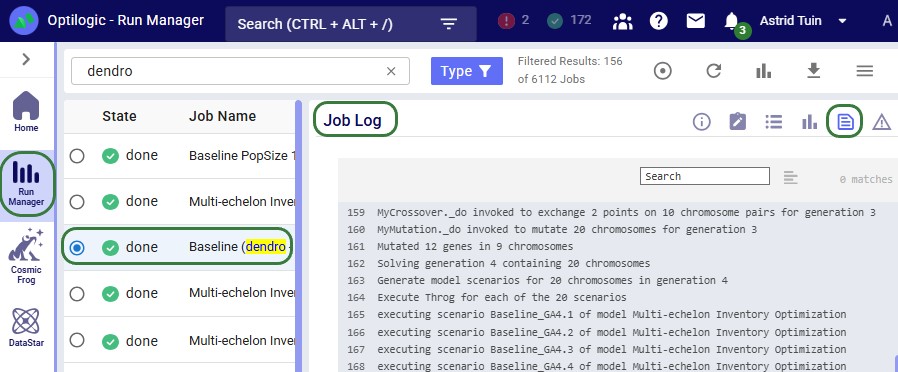

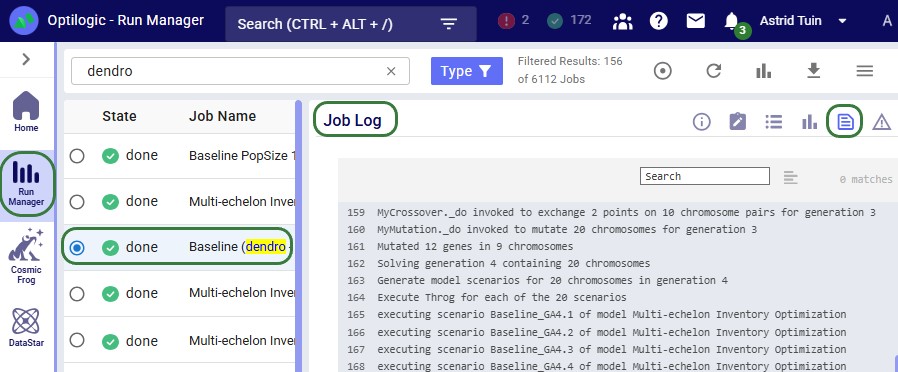

In the course of a Dendro run, the system launches many scenarios for each generation. This is the expected behavior for the genetic algorithm.

Key tips for tracking progress:

Running Dendro is as simple as selecting the engine and starting from a validated Throg model. From there, you can monitor progress, let the algorithm evolve scenarios, and apply best practices for canceling or troubleshooting runs. By following these guidelines, you ensure that Dendro can efficiently search for high-performing supply chain configurations.

To understand how the Dendro genetic algorithm works in detail, please see "Dendro: Genetic Algorithm Guide".

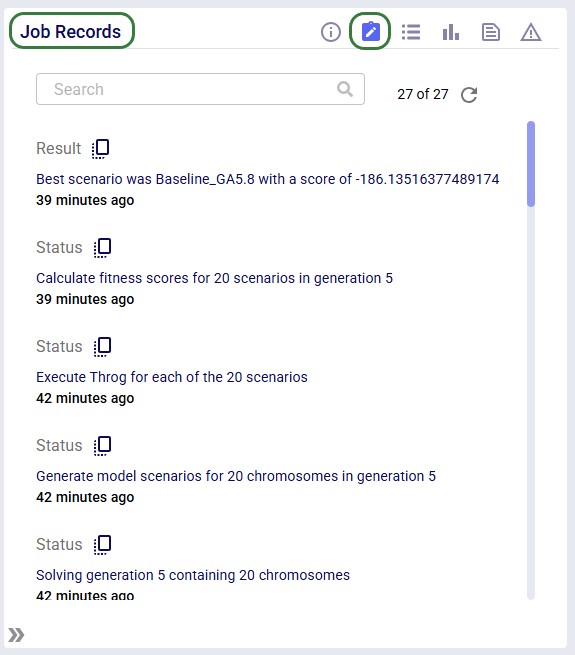

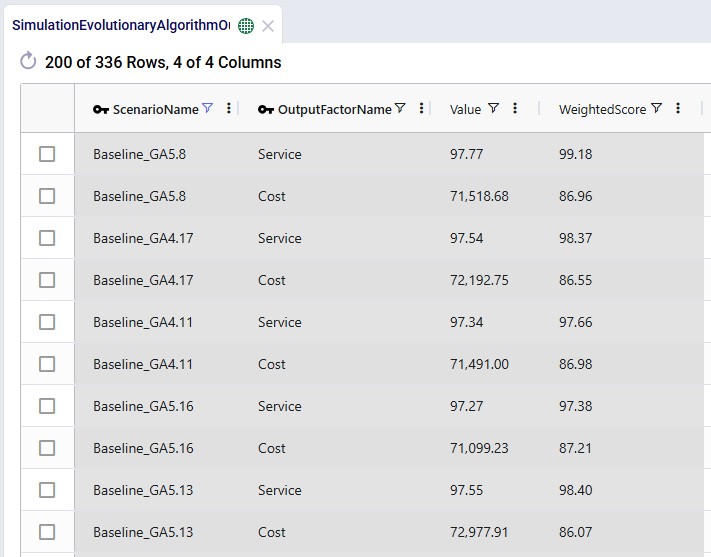

When a Dendro run completes, it produces a rich set of outputs that capture the results of the genetic algorithm's search. These outputs represent the different supply chain configurations that were explored, along with how each one performed according to the objectives you defined (e.g., cost, service level, or a balance of both).

Rather than giving you a single "right" answer, Dendro presents a spectrum of high-performing options. As the modeler, you use these results to evaluate trade-offs and select the scenarios that best align with your business goals.

During a run, Dendro evaluates each candidate scenario by:

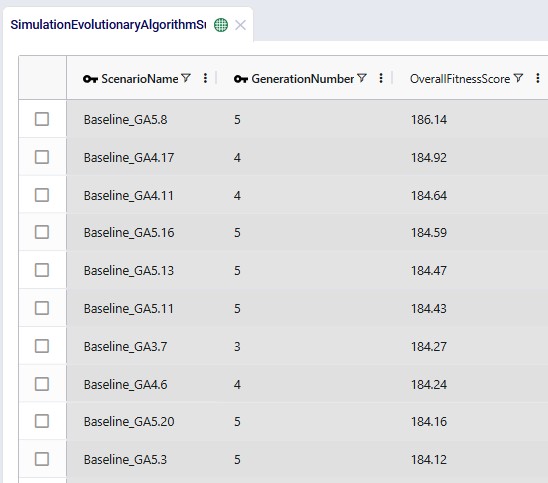

Dendro records the input factors and output factors of every scenario it evaluates. However, only the top 20 highest-performing scenarios are saved as named scenarios in your model, making them easier to access and reuse.

After the run, the top 20 scenarios are automatically saved in your model. These represent the best balances of trade-offs Dendro discovered within your defined search space.

Each scenario has an overall fitness score, calculated as the weighted sum of its output factor utility values. A higher score means the scenario performed better according to the priorities you set (e.g., balancing low cost with high service).

Tip: The "best" scenario numerically may not always be the most practical operationally. Always interpret results in context.

Where to Find Key Results

Tips for Reviewing Results

Dendro generates raw scenario outputs, but actionable recommendations come from your interpretation in the context of business goals.

In summary, Dendro does not hand you a single answer; it gives you a portfolio of high-performing options. By interpreting these scenarios in context, you can make informed decisions, balance trade-offs, and run deeper simulations where needed to guide your supply chain strategy.

You may find these links helpful, some of which have already been mentioned above:

As always, please feel free to reach out to the Optilogic Support team at support@optilogic.com in case of questions or feedback.

Dendro, Optilogic’s simulation-optimization engine, uses a Genetic Algorithm (GA) to optimize, for example, inventory policies across your supply chain network. This guide explains how the algorithm works in business-friendly terms, helping you understand what happens when you run Dendro and how to get the best results.

The Big Picture: Dendro's Genetic Algorithm explores thousands of different inventory policy combinations, simulates each one to see how it performs, and gradually evolves toward the best possible solution - much like natural evolution produces better-adapted organisms over time.

Recommended reading prior to diving into this guide: Getting Started with Dendro, which is a higher-level overview of how Dendro works.

Genetic Algorithms are inspired by biological evolution. Just as species evolve to become better adapted to their environment through:

Dendro evolves inventory policies to become better adapted to your business objectives through:

Traditional optimization methods struggle with inventory networks due to:

Genetic Algorithms excel at this type of problem because they:

Dendro's implementation uses three fundamental elements: chromosomes, genes, and fitness scores.

A chromosome represents one complete set of inventory policies for your entire supply chain.

Example Chromosome:

Each chromosome is essentially a complete "proposal" for how to manage inventory across your network.

Each gene within a chromosome represents the policy for one facility-product combination.

Example Gene:

Genes can mutate (change their values) to explore different policy settings.

The fitness score measures how good a chromosome is - combining costs, service levels, and other objectives.

Higher scores are better - Dendro displays scores where better solutions have higher values.

A fitness score might combine:

Whenever the text below refers to an option, it means a model run option that can be set in the Dendro section of the Technology Parameters. These are found on the right-hand side of the Run Settings screen, which appears after clicking the green Run button in Cosmic Frog.

What happens: Dendro creates the initial population of chromosomes (policy combinations).

Implementation details:

Business perspective: Think of this as Dendro assembling a diverse team of proposals. The first proposal is "keep doing what we are doing", while the others explore variations like "increase safety stock by 10%", "reduce order quantities", etc.
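As a rough illustration of this initialization step, the sketch below builds a population whose first chromosome is the unchanged baseline and whose remaining chromosomes are random variations of it. The function name, the ±20% variation range, and the gene layout are assumptions made for this example; they are not Dendro's actual settings or implementation.

```python
import random


def initial_population(baseline, size, max_change=0.2, seed=0):
    """Return `size` chromosomes: the baseline itself plus random variations of it."""
    rng = random.Random(seed)
    population = [list(baseline)]  # proposal 1: "keep doing what we are doing"
    while len(population) < size:
        # Each variant scales every policy value by a random factor within +/- max_change.
        variant = [
            (
                round(reorder_point * (1 + rng.uniform(-max_change, max_change))),
                round(order_up_to * (1 + rng.uniform(-max_change, max_change))),
            )
            for reorder_point, order_up_to in baseline
        ]
        population.append(variant)
    return population


# Hypothetical baseline: one (reorder point, order-up-to level) gene per facility-product.
baseline = [(500, 1000), (200, 600), (50, 150)]
population = initial_population(baseline, size=5)
print(len(population))  # 5
print(population[0])    # the unchanged baseline policies
```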

Each generation follows the same four-step cycle:

What happens: Each chromosome is evaluated by:

Implementation details:

Business perspective: Dendro tests each proposal by running it through a realistic simulation of your supply chain over time. It is like running a pilot program for each policy combination to see what would actually happen - but virtually, so you can test thousands of options without risk.

Typical duration:

What happens: Dendro ranks all chromosomes by fitness score and selects the best ones to continue to the next generation.

Implementation details:

Business perspective: After testing all proposals, Dendro keeps the most promising ones and discards the poor performers. This is like a review committee keeping the best ideas and dropping the ones that do not work well.
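The ranking idea can be sketched in a few lines. This is a simplified illustration only: the chromosome names and scores are invented, and how many chromosomes Dendro actually keeps, or which selection scheme it applies, is not assumed here.

```python
def select_survivors(scored, keep):
    """Rank (name, fitness) pairs by fitness, highest first, and keep the top `keep`."""
    ranked = sorted(scored, key=lambda pair: pair[1], reverse=True)
    return ranked[:keep]


# Hypothetical fitness scores for four chromosomes of one generation.
scored = [("A", 71.2), ("B", 75.5), ("C", 64.0), ("D", 73.8)]
survivors = select_survivors(scored, keep=2)
print(survivors)  # [('B', 75.5), ('D', 73.8)]
```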

Example:

Generation 5 Results (20 chromosomes evaluated):

What happens: Dendro creates new chromosomes by combining parts of two successful parent chromosomes.

Implementation details:

Business perspective: Dendro creates new proposals by combining the best parts of successful proposals. If one policy set works well for East Coast facilities and another works well for high-volume products, crossover might create a policy that combines both successful approaches.

Example with 1-Point Crossover:

Parent 1: [Gene_A1, Gene_A2, Gene_A3, Gene_A4, Gene_A5]

Parent 2: [Gene_B1, Gene_B2, Gene_B3, Gene_B4, Gene_B5]

Crossover point: after the third gene (between Gene 3 and Gene 4)

Offspring 1: [Gene_A1, Gene_A2, Gene_A3 | Gene_B4, Gene_B5]

Offspring 2: [Gene_B1, Gene_B2, Gene_B3 | Gene_A4, Gene_A5]
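The same 1-point crossover can be written as a short sketch. The function name is hypothetical, and chromosomes are represented as plain lists of gene labels purely for illustration.

```python
def one_point_crossover(parent_1, parent_2, point):
    """Swap the tails of two equal-length chromosomes after position `point`."""
    if len(parent_1) != len(parent_2):
        raise ValueError("parents must have the same number of genes")
    if not 0 < point < len(parent_1):
        raise ValueError("crossover point must fall strictly inside the chromosome")
    offspring_1 = parent_1[:point] + parent_2[point:]
    offspring_2 = parent_2[:point] + parent_1[point:]
    return offspring_1, offspring_2


parent_1 = ["Gene_A1", "Gene_A2", "Gene_A3", "Gene_A4", "Gene_A5"]
parent_2 = ["Gene_B1", "Gene_B2", "Gene_B3", "Gene_B4", "Gene_B5"]

# Crossover after the third gene, as in the example above.
offspring_1, offspring_2 = one_point_crossover(parent_1, parent_2, point=3)
print(offspring_1)  # ['Gene_A1', 'Gene_A2', 'Gene_A3', 'Gene_B4', 'Gene_B5']
print(offspring_2)  # ['Gene_B1', 'Gene_B2', 'Gene_B3', 'Gene_A4', 'Gene_A5']
```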

When crossover is most effective:

What happens: Dendro randomly adjusts policy values in the new chromosomes to explore variations.

Implementation details:

Business perspective: Dendro introduces controlled randomness to explore new options. Even the best current policies might not be optimal, so mutation ensures the algorithm does not get stuck. It is like saying "this policy works well at 500 units, but let's try 525 and 475 too".

Example mutations:

Original Gene: Reorder Point = 500, Order-Up-To = 1000

Mutated Gene: Reorder Point = 525, Order-Up-To = 1050
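A mutation of this kind might look like the following sketch, in which each policy value has some chance of being nudged up or down by a small percentage. The mutation rate, the ±10% bound, and the dictionary layout of a gene are assumptions for illustration and do not describe Dendro's actual mutation logic.

```python
import random


def mutate_gene(gene, mutation_rate=0.5, max_change=0.1, rng=None):
    """Return a copy of `gene` in which each value may be perturbed by up to +/- max_change."""
    rng = rng or random.Random()
    mutated = {}
    for name, value in gene.items():
        if rng.random() < mutation_rate:
            # Scale the value by a random factor between (1 - max_change) and (1 + max_change).
            value = round(value * (1 + rng.uniform(-max_change, max_change)))
        mutated[name] = value
    return mutated


original = {"reorder_point": 500, "order_up_to": 1000}
mutated = mutate_gene(original, mutation_rate=1.0, rng=random.Random(42))
print(original)  # unchanged: the function returns a new gene
print(mutated)   # each value lies within 10% of the original
```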

Mutation strategies:

Input factors define what Dendro can change. They are the "variables" in your optimization problem. They can be specified in the Input Factors input table in your Cosmic Frog model.

Common input factors:

How they work in chromosomes: Each input factor becomes a position in the chromosome. Dendro explores different values for each position.

Example: If you have 50 facility-product combinations to optimize, and each has two policy values (reorder point and order-up-to), your chromosome has 50 genes, each with 2 values = 100 total parameters being optimized.

Input factors are covered in detail in the Dendro: Input Factors Guide.

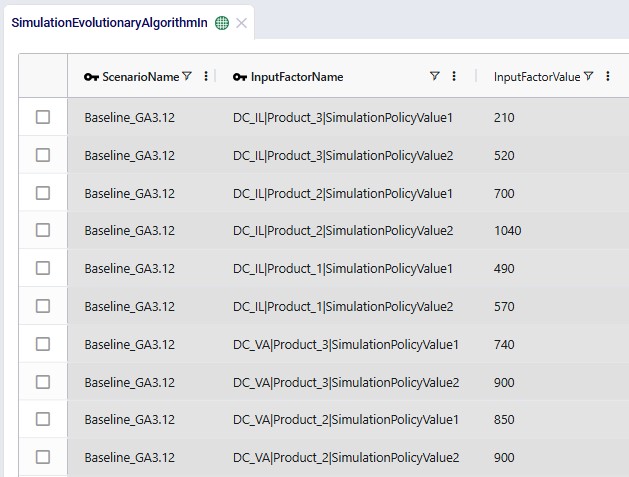

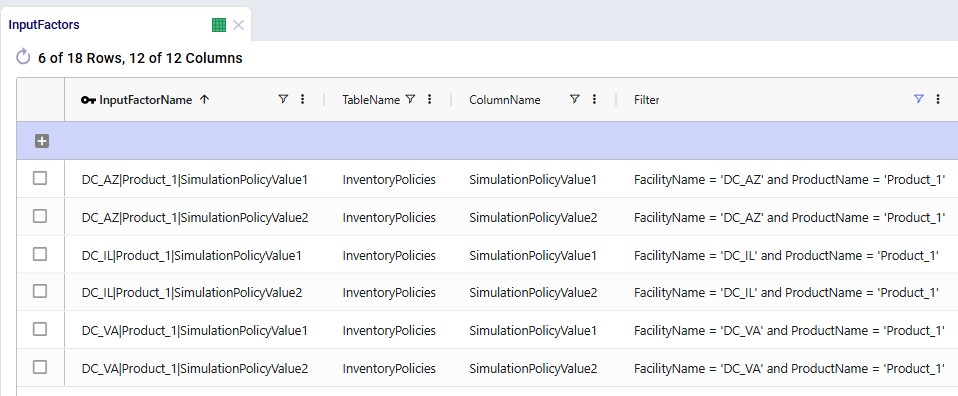

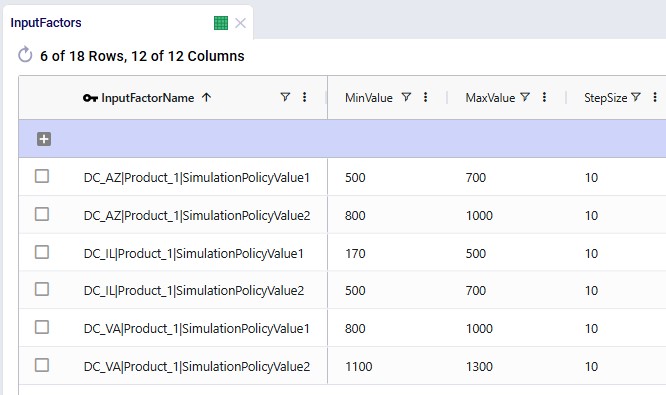

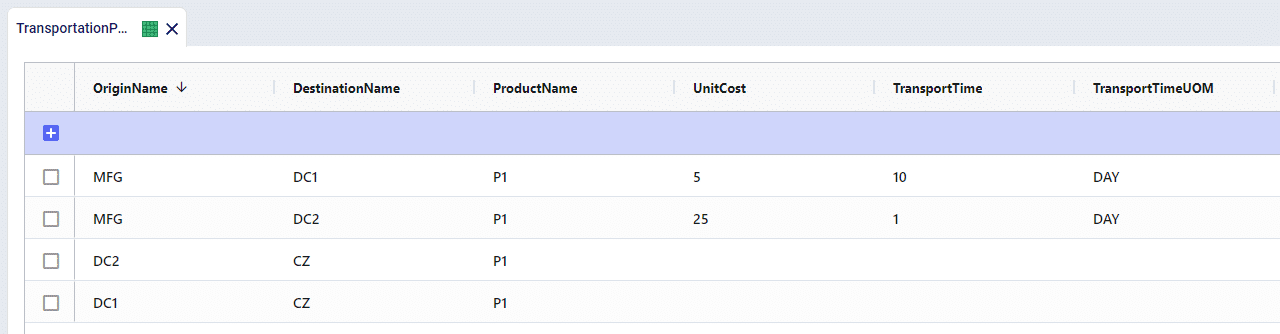

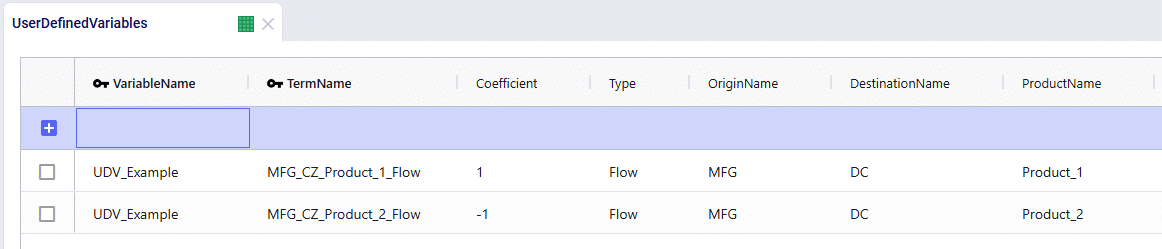

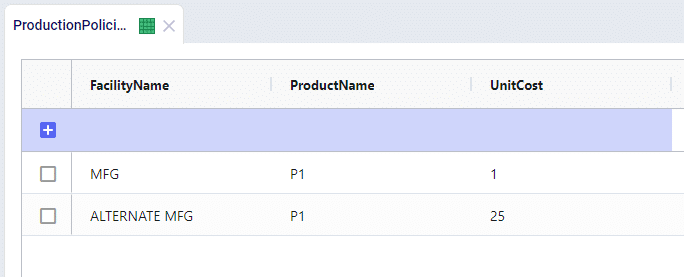

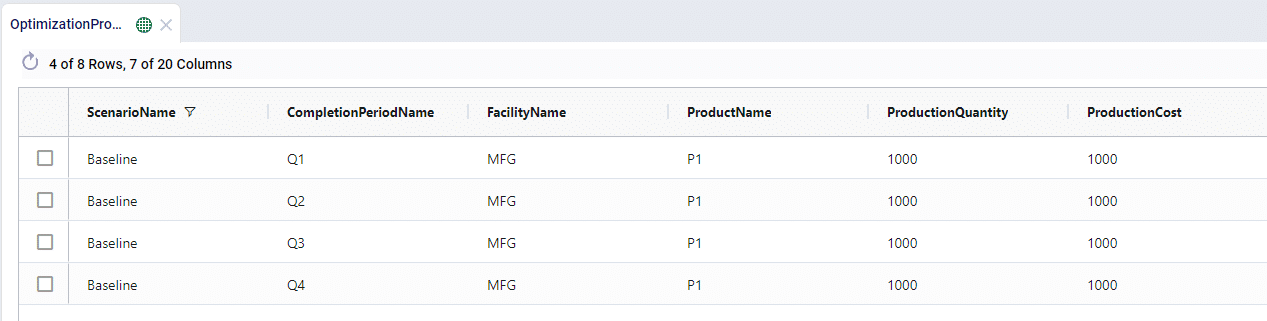

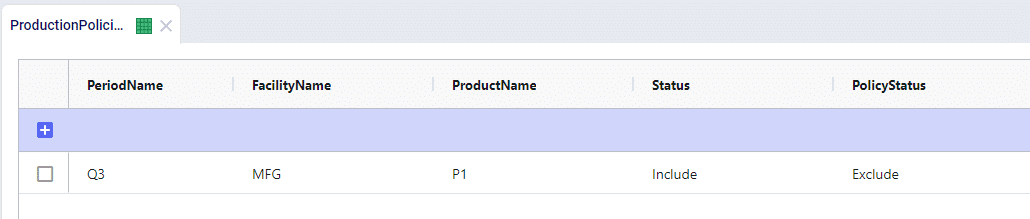

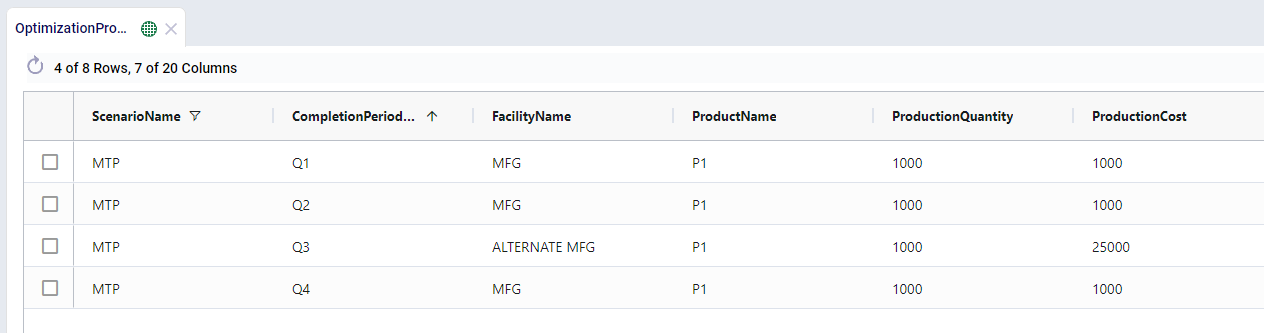

The following 2 screenshots show 6 records in an Input Factors input table in Cosmic Frog. Both simulation policy values for Product_1 at 3 different DCs are specified in these records:

Output factors define what Dendro tries to optimize, for example minimizing cost or maximizing service. They are the "objectives" in your optimization problem. Output factors can be set in the Output Factors input table.

Common output factors:

How they combine - each output factor has a:

The Dendro: Output Factors Guide covers output factors in more detail.

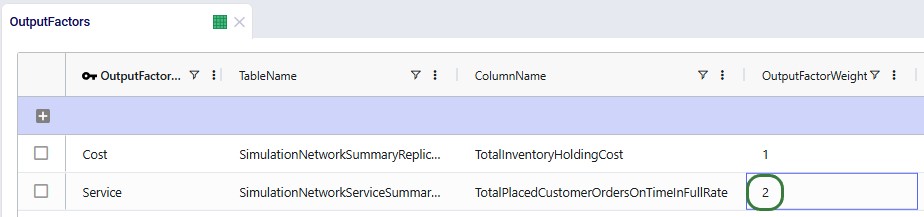

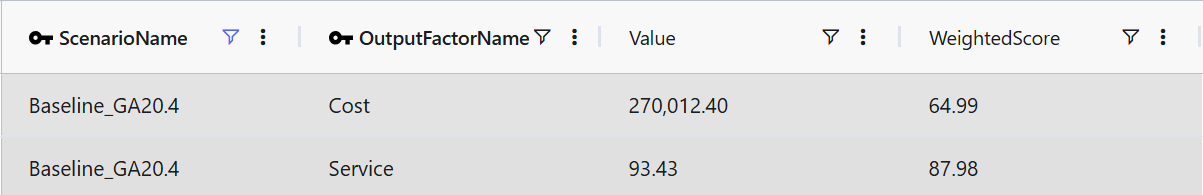

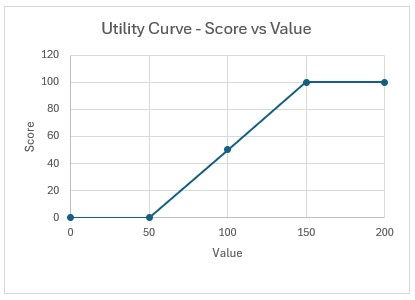

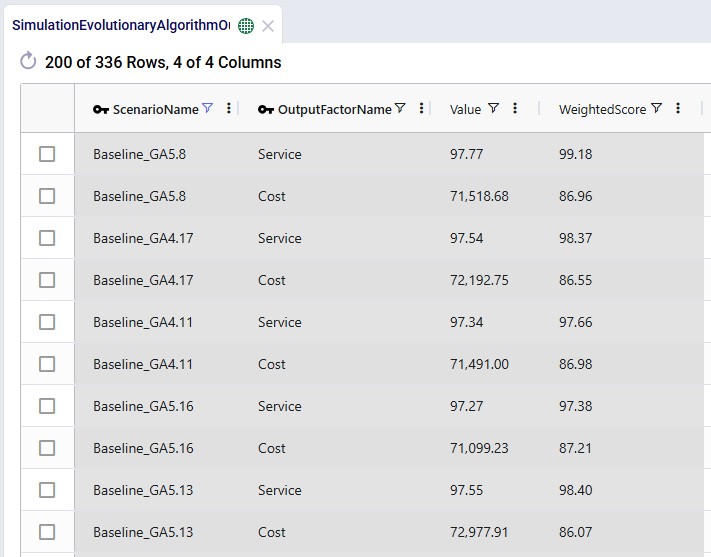

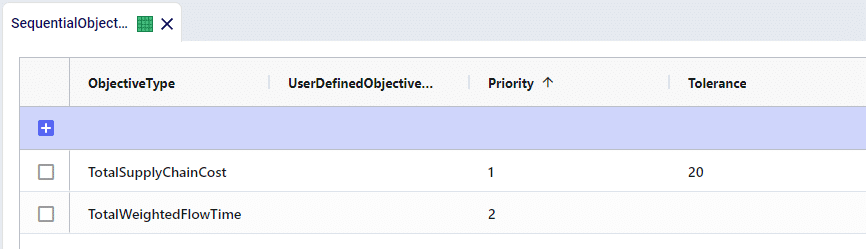

The following screenshot shows an output factors table containing 2 output factors, one measuring service and the other cost:

Utility curves convert simulation outputs into comparable fitness scores.

Why we need them:

How they work:

Example:

Holding Cost Utility Curve:

Business benefit: Utility curves let you define what "good" and "bad" mean for each metric. They translate apples-and-oranges metrics into a single comparable score.
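As a minimal sketch of the idea, a utility curve can be treated as a set of (metric value, score) points with linear interpolation in between. The curve points below are hypothetical, and this sketch simply clamps values that fall outside the curve, which is a simplification and not necessarily how Dendro handles out-of-range results.

```python
def utility_score(value, points):
    """Linearly interpolate a score from (metric value, score) points sorted by metric value."""
    if value <= points[0][0]:
        return points[0][1]   # clamp below the first point (simplifying assumption)
    if value >= points[-1][0]:
        return points[-1][1]  # clamp above the last point (simplifying assumption)
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= value <= x1:
            return y0 + (y1 - y0) * (value - x0) / (x1 - x0)


# Hypothetical holding cost curve: $50,000 or less scores 100, $150,000 or more scores 0.
holding_cost_curve = [(50_000, 100.0), (150_000, 0.0)]
print(utility_score(75_000, holding_cost_curve))  # 75.0
```

Because lower cost is better here, the curve slopes downward; a service metric would use an upward-sloping curve so that both end up on the same comparable scale.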

The overall fitness score is the weighted sum of all output factors.

Formula:

Fitness Score = Σ(Weighted Score for each Output Factor)

Where for each factor:

Weighted Score = (Normalized Score from Utility Curve) × (Factor Weight)

Higher is better - Better solutions receive higher fitness scores.

Example calculation:

Output Factors:

1. Holding Cost: $75,000 → Raw Score 75 → Weighted 22.5 (weight 30%)

2. Transport Cost: $40,000 → Raw Score 60 → Weighted 15.0 (weight 25%)

3. Stockout Penalty: $10,000 → Raw Score 80 → Weighted 20.0 (weight 25%)

4. Service Level: 96% → Raw Score 90 → Weighted 18.0 (weight 20%)

Overall Fitness = 22.5 + 15.0 + 20.0 + 18.0 = 75.5 points
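The same arithmetic can be reproduced with a short sketch. The tuple layout is an illustrative choice; the scores and weights are the ones from the example calculation above.

```python
# (output factor, score from its utility curve, weight in percent)
output_factors = [
    ("Holding Cost", 75, 30),
    ("Transport Cost", 60, 25),
    ("Stockout Penalty", 80, 25),
    ("Service Level", 90, 20),
]

# Weighted score = utility curve score x factor weight.
weighted_scores = {
    name: score * weight_pct / 100 for name, score, weight_pct in output_factors
}

# Overall fitness = sum of the weighted scores.
fitness = sum(weighted_scores.values())

print(weighted_scores["Holding Cost"])  # 22.5
print(fitness)                          # 75.5
```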

Important note: Scores are recalculated when new min/max values are discovered to ensure fair comparison across all generations.

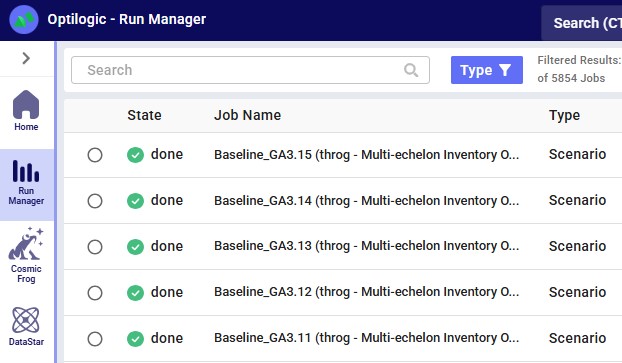

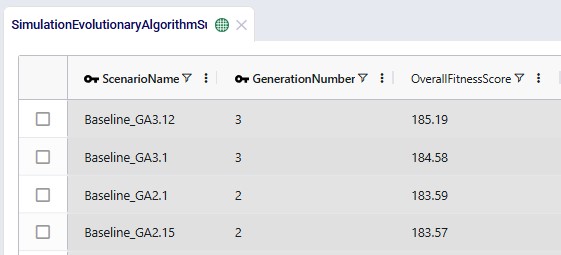

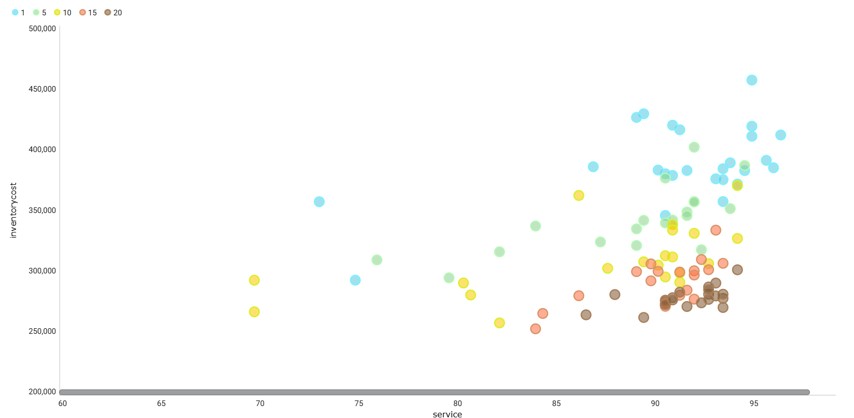

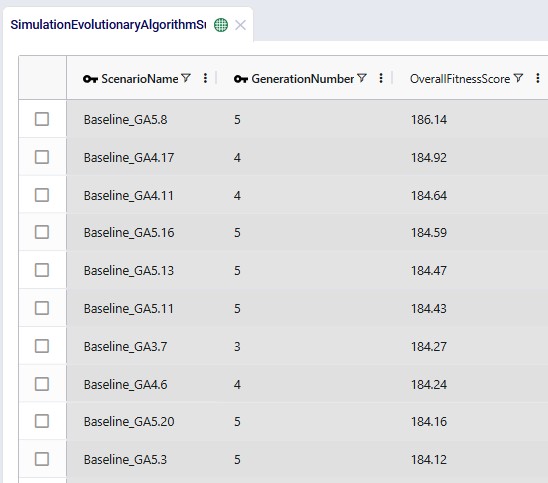

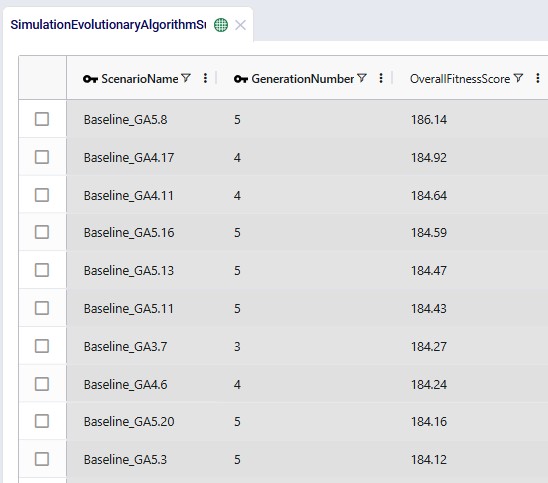

After a Dendro run completes, the fitness scores of all scenarios (= chromosomes) of all generations can be found in the Simulation Evolutionary Algorithm Summary output table. Shown here are the 10 records with the highest overall fitness scores:

The challenge: Early in the optimization, Dendro does not know what the best and worst possible values are for each metric. As it explores, it might find: