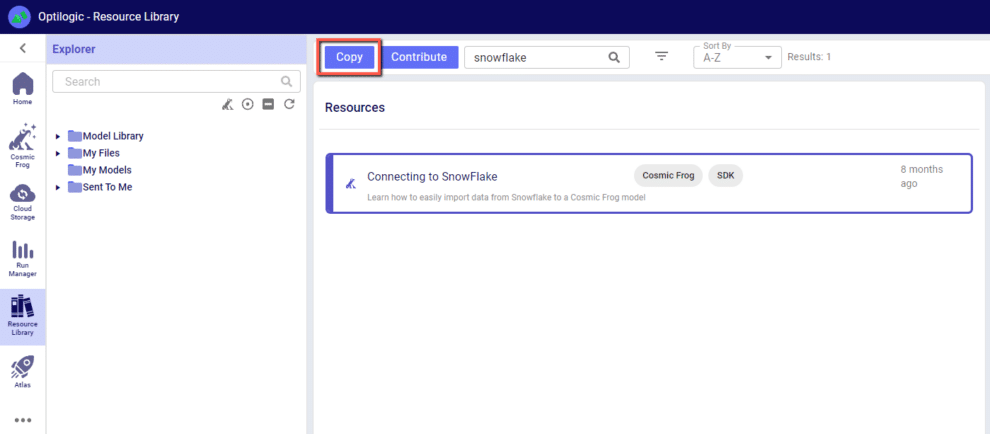

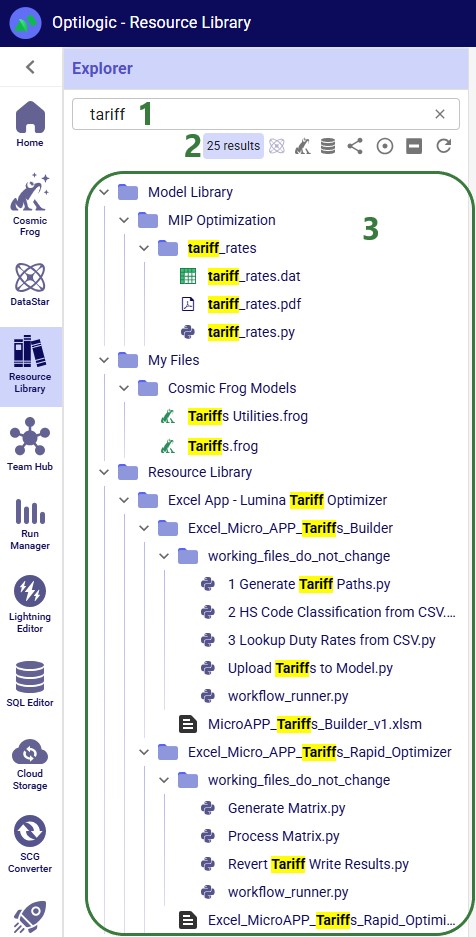

An example of how to connect a Cosmic Frog model to a Snowflake database, along with a video walkthrough, can be found in the Resource Library. To get a copy of this demo into your own Optilogic account, simply navigate to the Resource Library and copy the Snowflake template into your workspace.

The following instructions show how to establish a local connection, using Alteryx, to an Optilogic model that resides within our platform. These instructions will show you how to:

Watch the video for an overview of the connection process:

A step-by-step set of instructions can also be downloaded in the slide deck here: CosmicFrog-Alteryx-Connection-Instructions

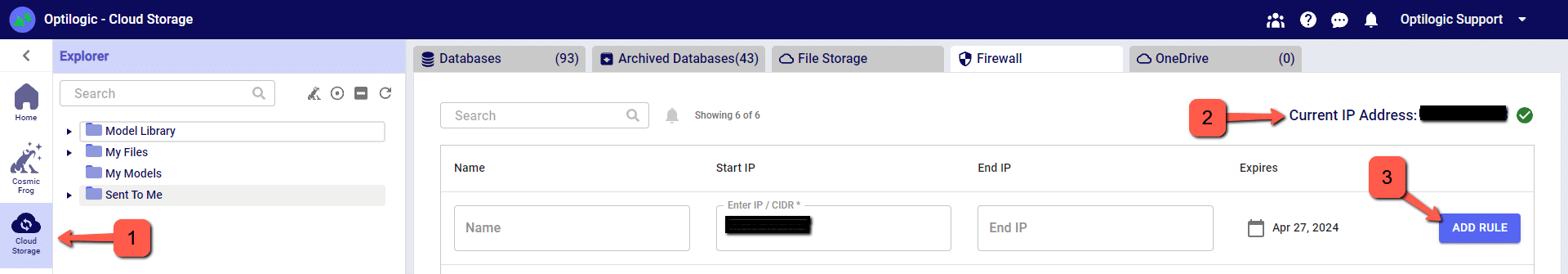

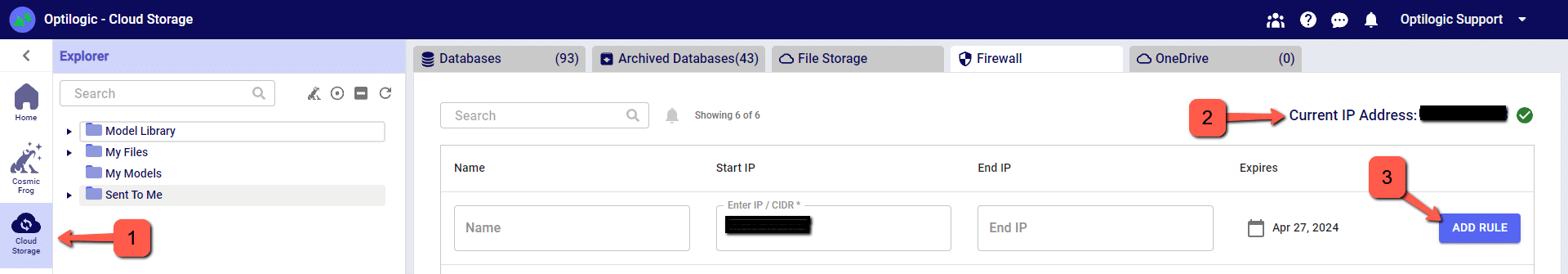

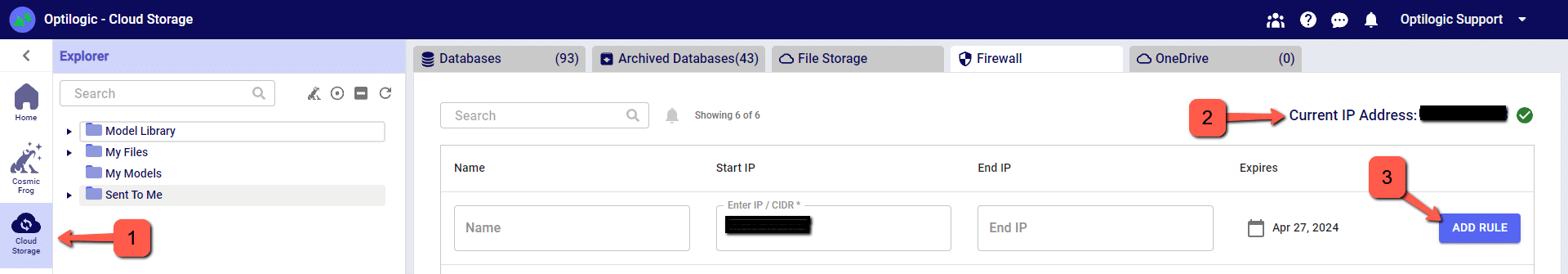

To make a local connection, you must first open a firewall connection between your current IP address and the Optilogic platform. Navigate to the Cloud Storage app (note that the app selection on the left-hand side of the screen might need to be expanded). Check whether your current IP address is authorized and, if not, add a rule to authorize it. You can optionally set an expiration date for this authorization.

If you are working from a new IP address, a banner notification should be displayed to let you know that the new IP address needs to be authorized.

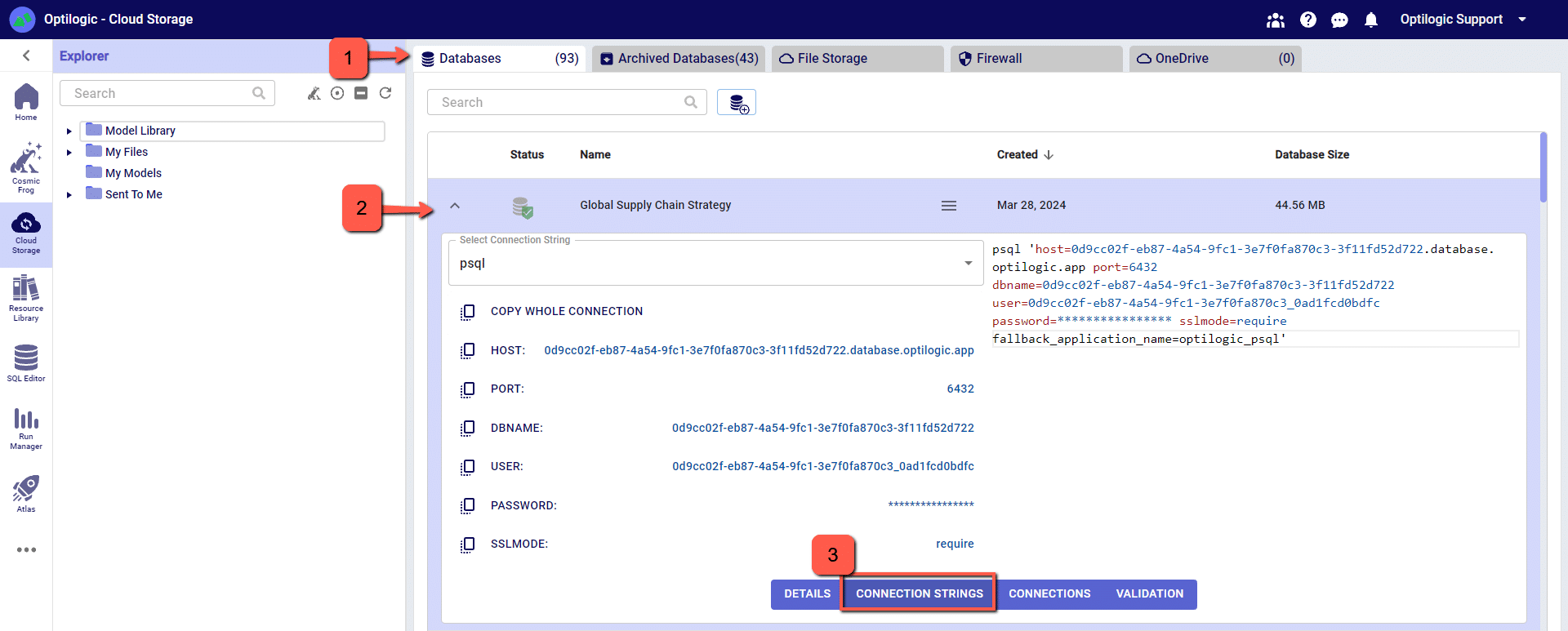

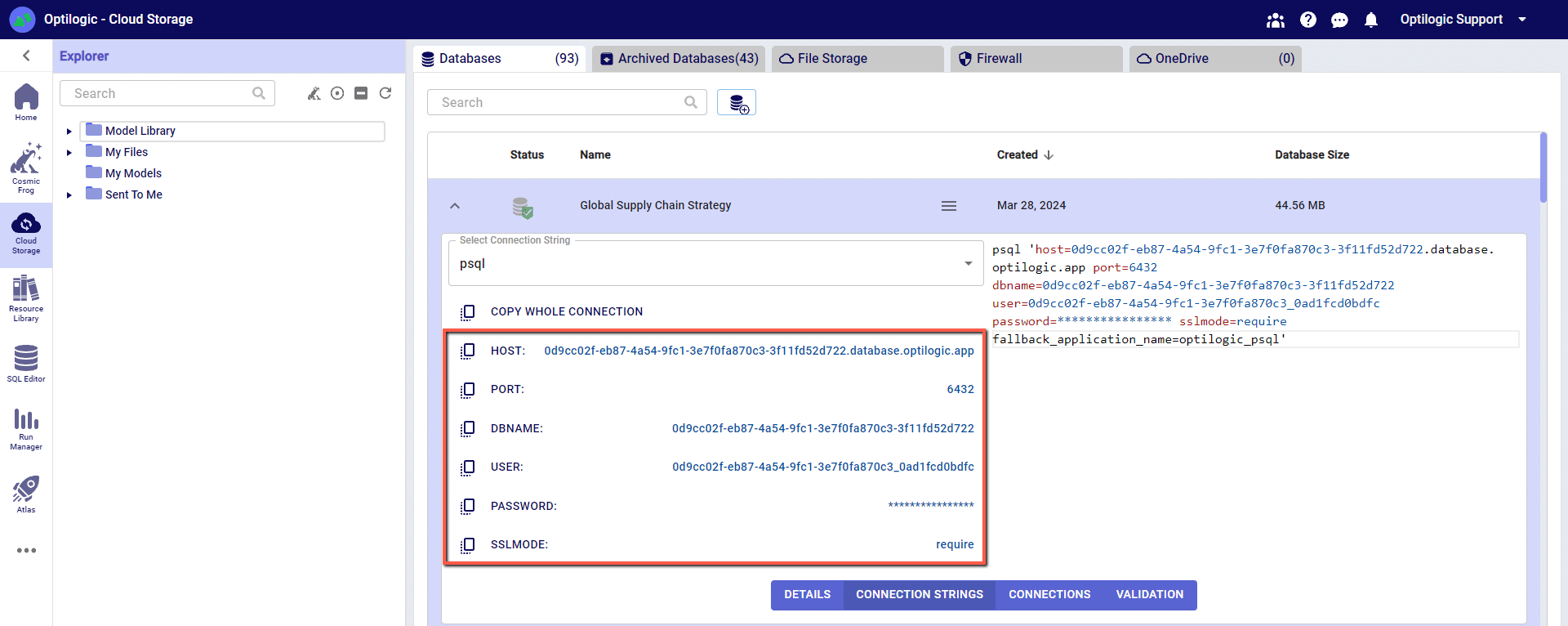

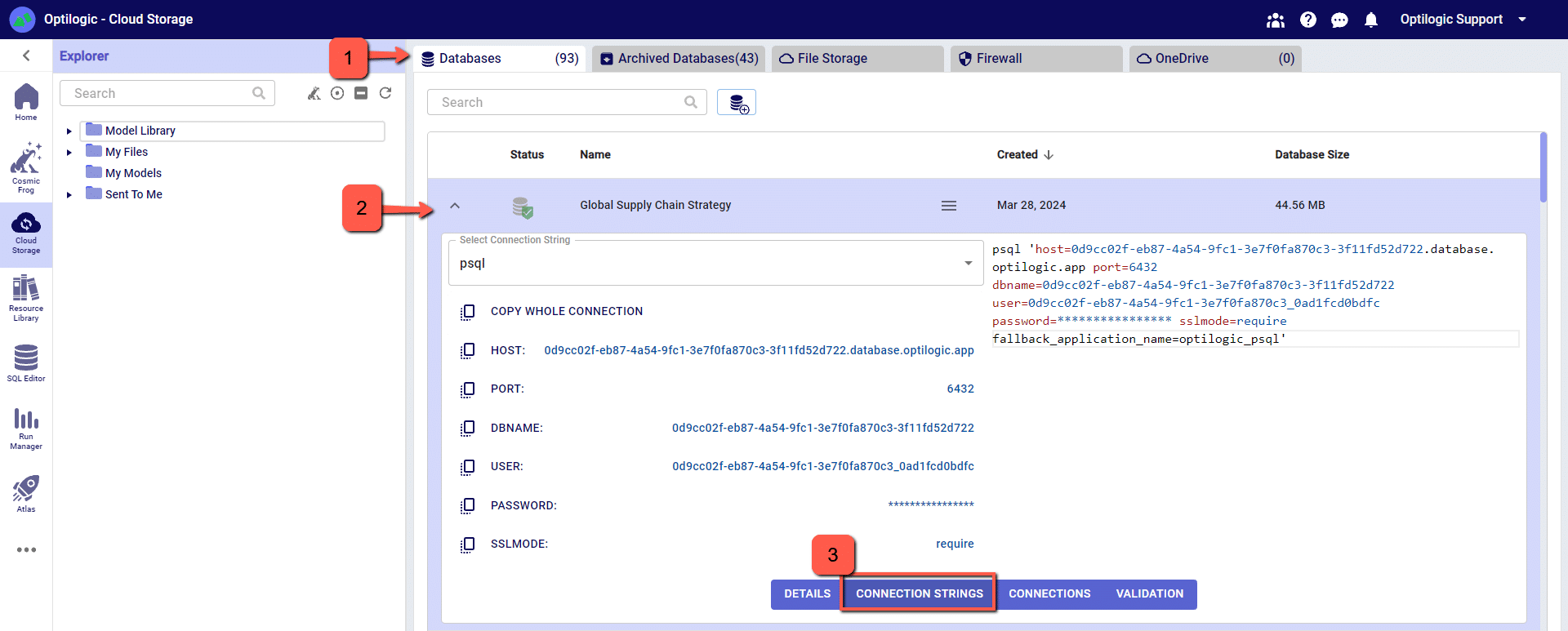

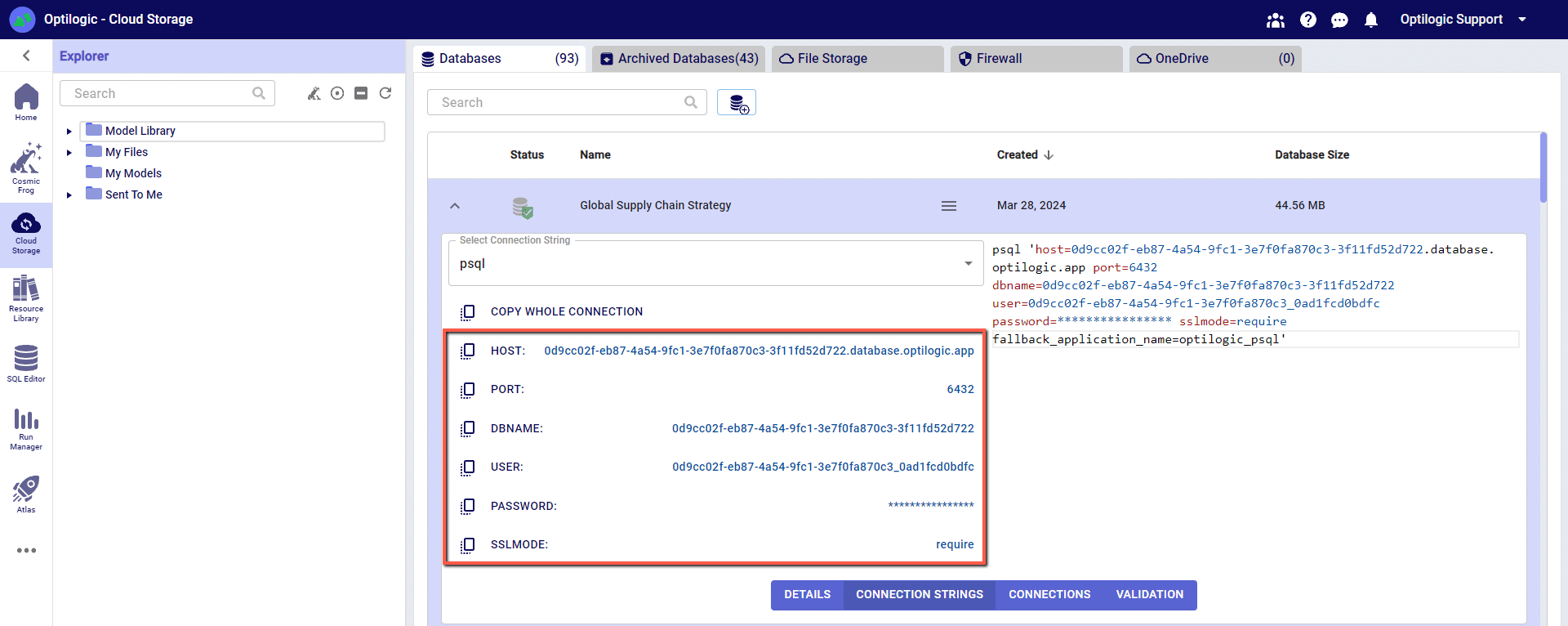

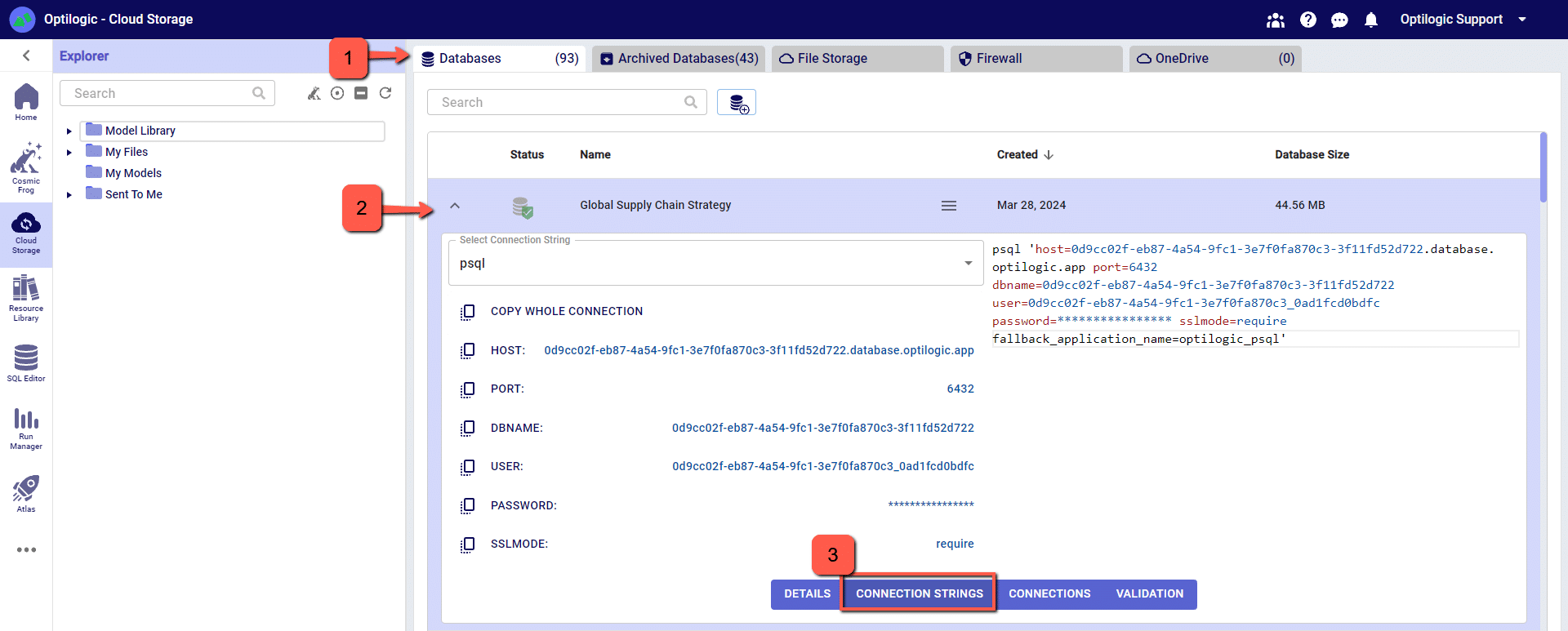

From the Databases section of the Cloud Storage page, click on the database that you want to connect to. Then, click on the Connection Strings button to display all of the required connection information.

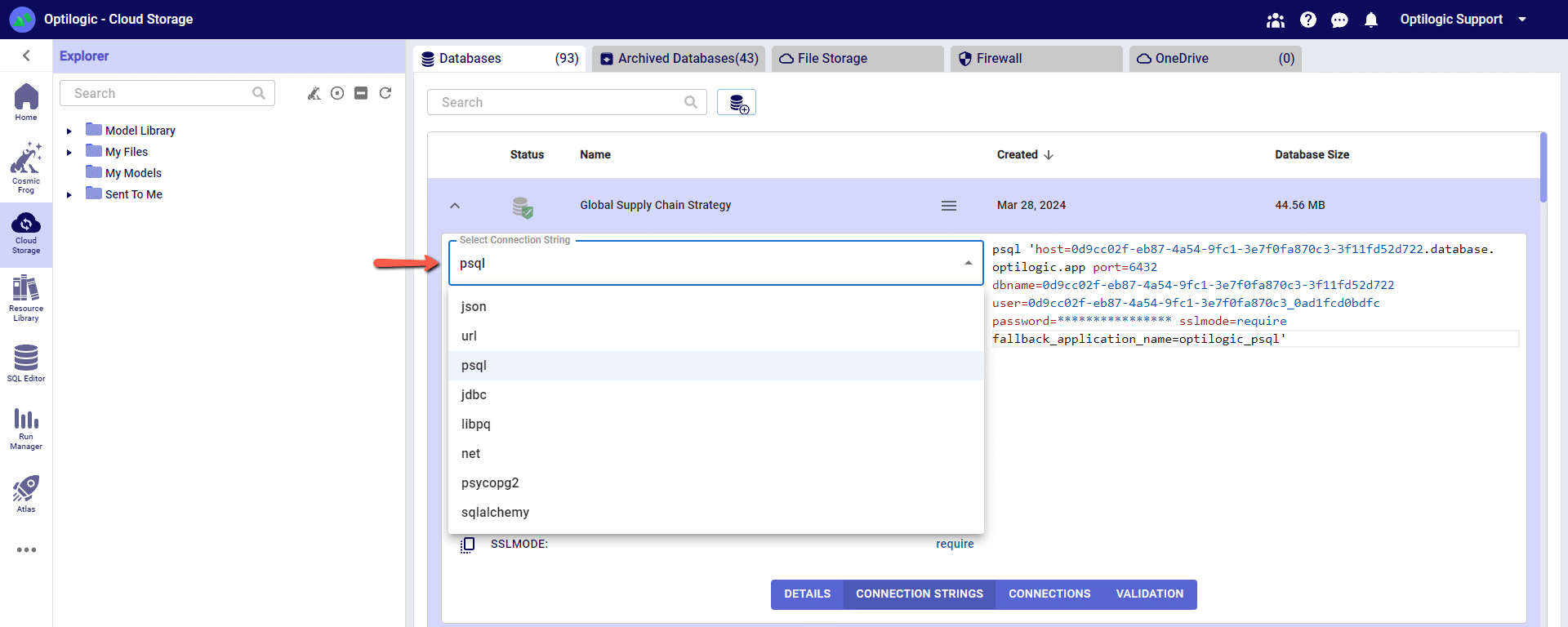

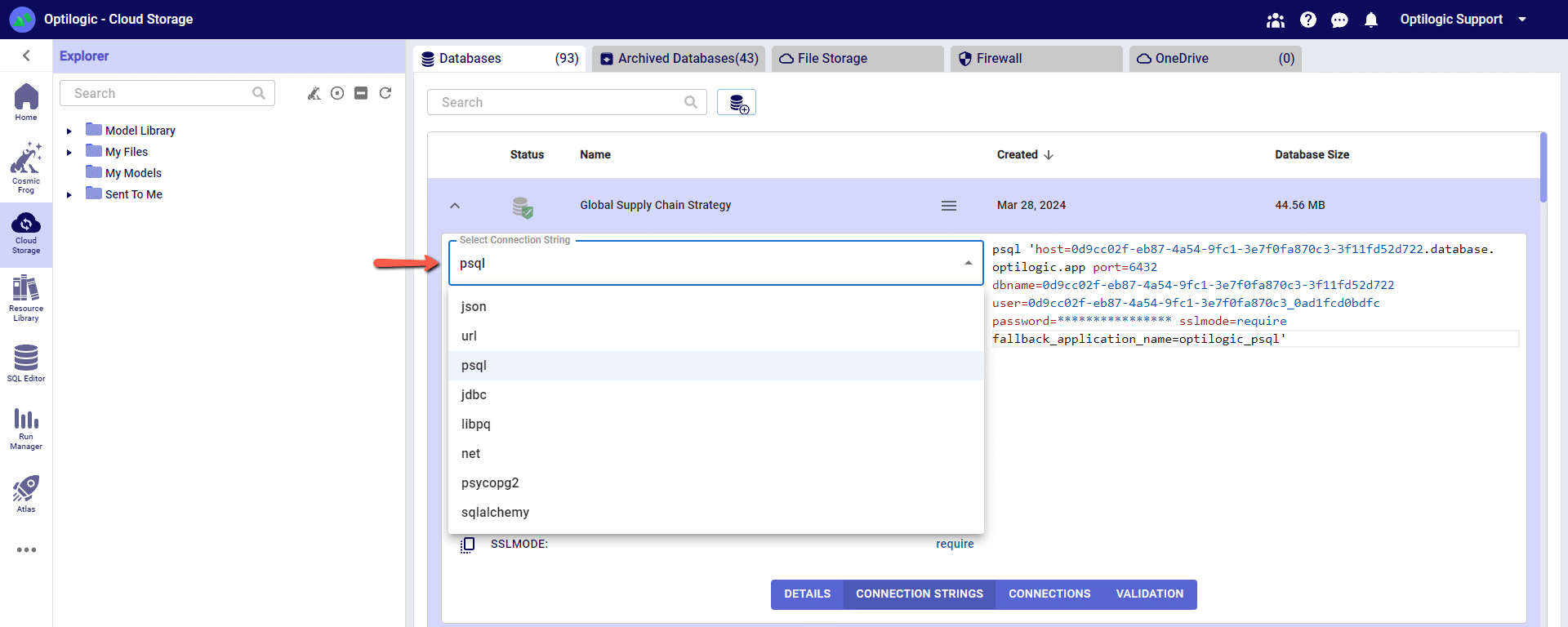

We have connection information for the following formats:

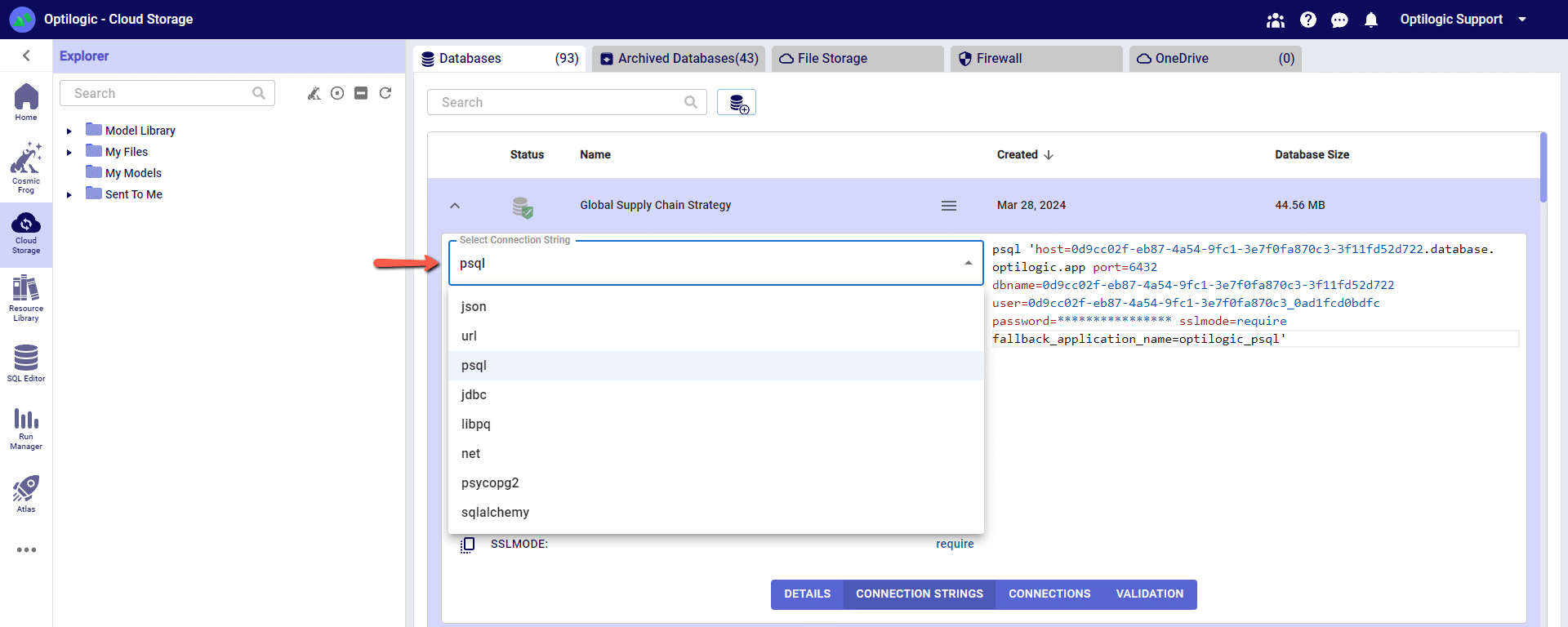

To select the format of your connection information, use the drop-down menu labeled Select Connection String:

For this example, we will copy and paste the strings for the ‘PSQL’ connection. The screen should look something like the following:

You can click on any of the parameters to copy them to your clipboard, and then paste them into the relevant field when establishing the PSQL ODBC connection.
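Outside of a GUI tool, the same PSQL parameters can be used by any libpq-based client, such as the `psql` command-line tool, which accepts them as a single keyword/value connection string. The sketch below assembles one and applies libpq's quoting rules. The host, database, user, and password values are hypothetical placeholders, and the port 6432 is taken from the whitelisting note later in this article. Always copy the real values from your own Connection Strings panel.

```python
from typing import Mapping


def libpq_conninfo(params: Mapping[str, object]) -> str:
    """Build a libpq keyword/value connection string (the format psql accepts)."""
    parts = []
    for key, value in params.items():
        text = str(value)
        # libpq requires single quotes around empty values or values with spaces;
        # inside a value, backslashes and single quotes are escaped with a backslash.
        if text == "" or any(ch in text for ch in " '\\"):
            text = "'" + text.replace("\\", "\\\\").replace("'", "\\'") + "'"
        parts.append(f"{key}={text}")
    return " ".join(parts)


# Hypothetical placeholder values; copy the real ones from Cloud Storage.
conninfo = libpq_conninfo({
    "host": "your-server.example.com",
    "port": 6432,
    "dbname": "your_model_db",
    "user": "your_user",
    "password": "your password",
    "sslmode": "require",
})
print(conninfo)
```

The resulting string can be passed to `psql` as one quoted argument. Keeping the password out of the string, for example in a `.pgpass` file, avoids leaving it in your shell history.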

Many tools, including Alteryx, use Open Database Connectivity (ODBC) to connect to the Cosmic Frog model database. To access the Cosmic Frog model, you will need to download and install the relevant ODBC drivers. The latest versions of the drivers are located here: https://www.postgresql.org/ftp/odbc/releases/

From there, click the latest parent folder, which, as of June 20, 2024, is REL-16_00_0005. Select and download the psqlodbc_x64.msi file.

When installing, use the default settings from the installation wizard.

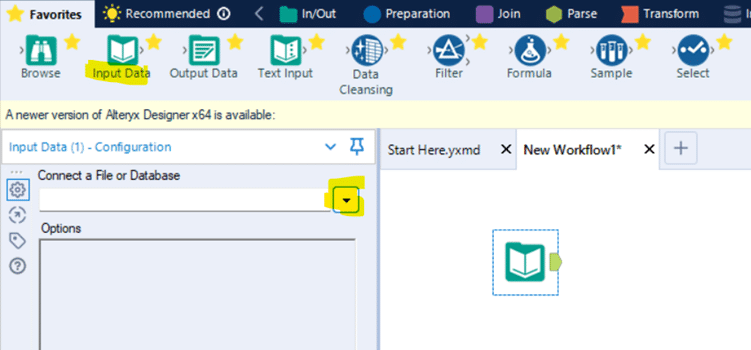

At this point we have all the pieces needed to make a connection in Alteryx. Open Alteryx and start a new workflow. Drag the Input Data tool into the workflow and click “Connect a File or Database.”

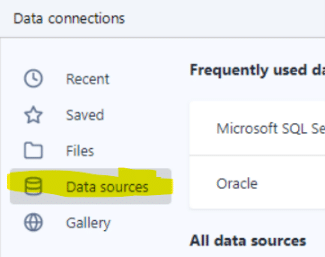

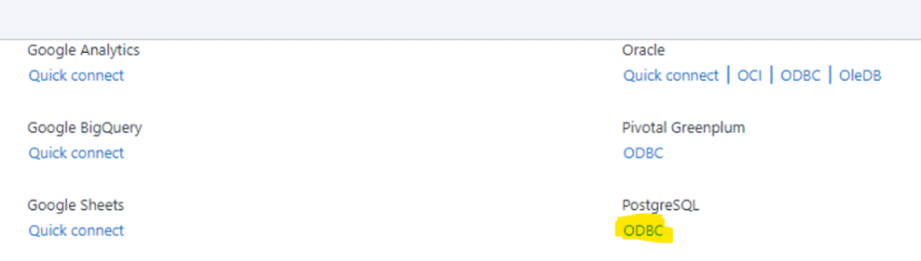

Select “Data sources” and scroll down to select “PostgreSQL ODBC.”

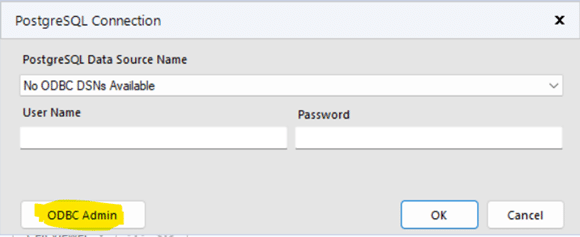

On the next screen, click “ODBC Admin” to set up the connection.

Click “Add” to create a new connection and then select “PostgreSQL ANSI(x64)” then click “Finish.”

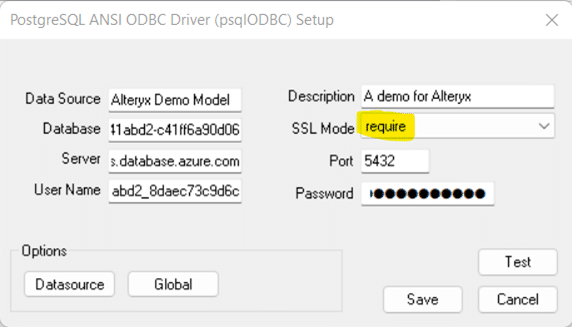

Now we need to configure the connection with the information we gathered from the connection strings.

“Data Source” and “Description” let you name the connection; you can name these whatever you wish.

Copy the values for “Server”, “Database”, “User Name”, “Password” and “Port” from the connection string information copied from Optilogic Cloud Storage (see above).

DON’T FORGET to select “require” for “SSL Mode.”

Optionally, click “Test” to confirm that the connection works, then click “Save.”
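The fields in this dialog (Server, Database, User Name, Password, Port, SSL Mode) can also be supplied as a DSN-less ODBC connection string, which is handy for scripting. A minimal sketch, assuming the “PostgreSQL ANSI(x64)” driver installed above and the keyword names `Server`, `Port`, `Database`, `UID`, `PWD`, and `SSLmode` as commonly used with psqlODBC; verify these against the psqlODBC documentation before relying on them. All values shown are hypothetical placeholders.

```python
from typing import Mapping


def odbc_value(key: str, value: object) -> str:
    """Quote an ODBC attribute value with braces when needed."""
    text = str(value)
    # Driver names are conventionally wrapped in braces; other values need
    # braces only if they contain ';', '{', '}' or leading/trailing spaces.
    # Inside braces, a literal '}' is written as '}}'.
    needs_braces = (
        key.lower() == "driver"
        or any(ch in text for ch in ";{}")
        or text != text.strip()
    )
    return "{" + text.replace("}", "}}") + "}" if needs_braces else text


def odbc_connection_string(attrs: Mapping[str, object]) -> str:
    """Join attributes into a DSN-less ODBC connection string."""
    return "".join(f"{key}={odbc_value(key, value)};" for key, value in attrs.items())


# Hypothetical placeholders; the keyword names follow common psqlODBC usage.
conn_str = odbc_connection_string({
    "Driver": "PostgreSQL ANSI(x64)",
    "Server": "your-server.example.com",
    "Port": 6432,
    "Database": "your_model_db",
    "UID": "your_user",
    "PWD": "pa;ss",
    "SSLmode": "require",
})
print(conn_str)
```

A string like this can be passed to an ODBC client library, for example `pyodbc.connect(conn_str)`. The DSN created through “ODBC Admin” stores the same settings under a name instead.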

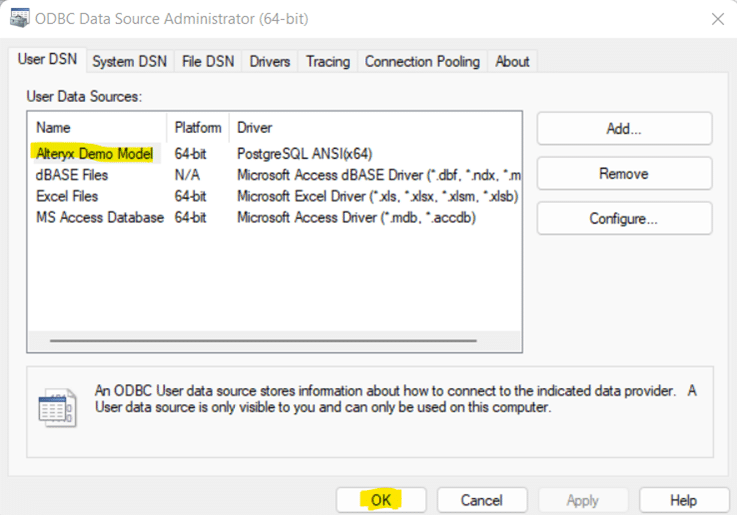

Now select the new connection, in this example “Alteryx Demo Model,” and click “OK.”

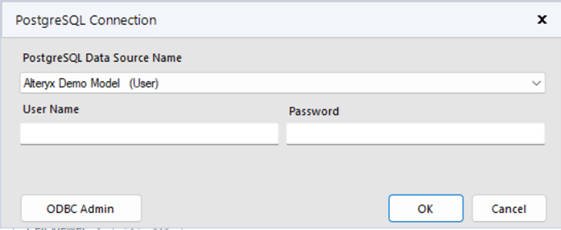

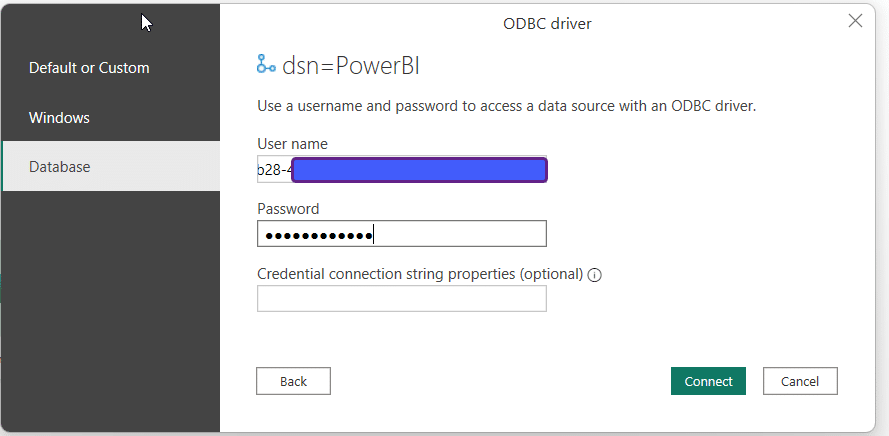

Now select, within Alteryx, the same data source that we just built in ODBC. Enter the username and password for the connection so that Alteryx can authenticate; these are the same credentials used to set up the ODBC connection. Remember to use your specific model’s credentials from the Connection Strings on the Cloud Storage page of the Optilogic platform.

Depending on your organization’s security protocols, one additional step might be needed: whitelisting Optilogic’s PostgreSQL server. This is done by whitelisting the host URL (*.postgres.database.azure.com) and the port (6432). If you are unsure how to whitelist the server or do not have the necessary permissions, please contact your IT department or network administrator for assistance.
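If a connection attempt times out even though your IP address is authorized, one quick check is whether a plain TCP connection to port 6432 can be opened from your machine. A minimal sketch using only Python's standard library; the host name in the comment is a hypothetical placeholder for the server value from your Connection Strings.

```python
import socket


def port_is_reachable(host: str, port: int = 6432, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be opened within timeout."""
    try:
        # create_connection resolves the host name and tries each address in turn.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # DNS failures, refused connections and timeouts are all OSError subclasses.
        return False


# Example (placeholder host; use the server value from your Connection Strings):
# port_is_reachable("your-server.example.com")
```

A `True` result only shows that nothing on the network path is blocking the port. It does not test your credentials or SSL settings, which the ODBC “Test” button covers.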

11/16/2023 – There is an issue connecting through ODBC with the latest version of Alteryx. While we await a fix in an updated version of Alteryx, you can still connect using an older version of Alteryx (2021.4.2.47884).

05/01/2024 – Alteryx has resolved the ODBC connection issue with their latest major version release of 2024.1. If your currently installed Alteryx version is not working as intended, please upgrade to the latest version.

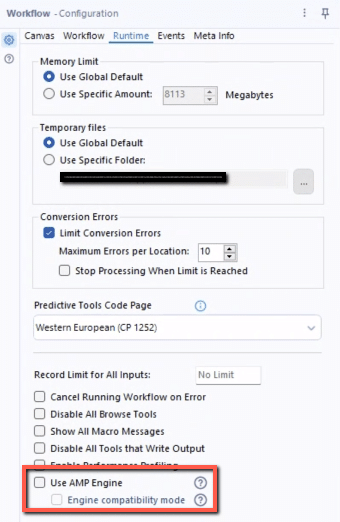

An alternative workaround is to disable the AMP engine on your Alteryx workflow. For any workflow that uses the ODBC connection to a database hosted on the platform, uncheck the ‘Use AMP Engine’ option in the Workflow Configuration. The Workflow Configuration window is displayed on the left side of your screen when you click any empty space in your workflow.

The following instructions show how to establish a local connection, using Azure Data Studio, to an Optilogic model that resides within our platform. These instructions will show you how to:

Watch the video for an overview of the connection process:

To make a local connection, you must first open a firewall connection between your current IP address and the Optilogic platform. Navigate to the Cloud Storage app (note that the app selection on the left-hand side of the screen might need to be expanded). Check whether your current IP address is authorized and, if not, add a rule to authorize it. You can optionally set an expiration date for this authorization.

If you are working from a new IP address, a banner notification should be displayed to let you know that the new IP address needs to be authorized.

From the Databases section of the Cloud Storage page, click on the database that you want to connect to. Then, click on the Connection Strings button to display all of the required connection information.

We have connection information for the following formats:

To select the format of your connection information, use the drop-down menu labeled Select Connection String:

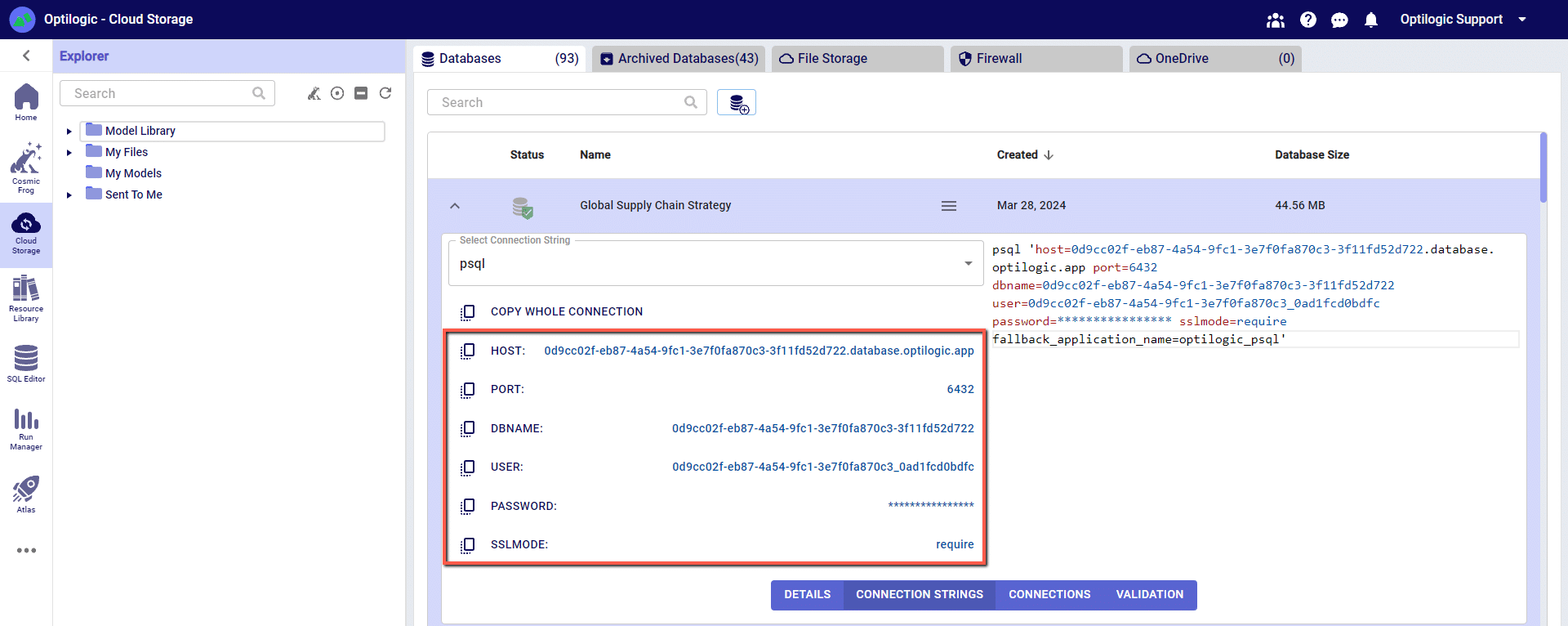

For this example, we will copy and paste the strings for the ‘PSQL’ connection. The screen should look something like the following:

You can click on any of the parameters to copy them to your clipboard, and then paste them into the relevant field in Azure Data Studio when establishing the PSQL connection.

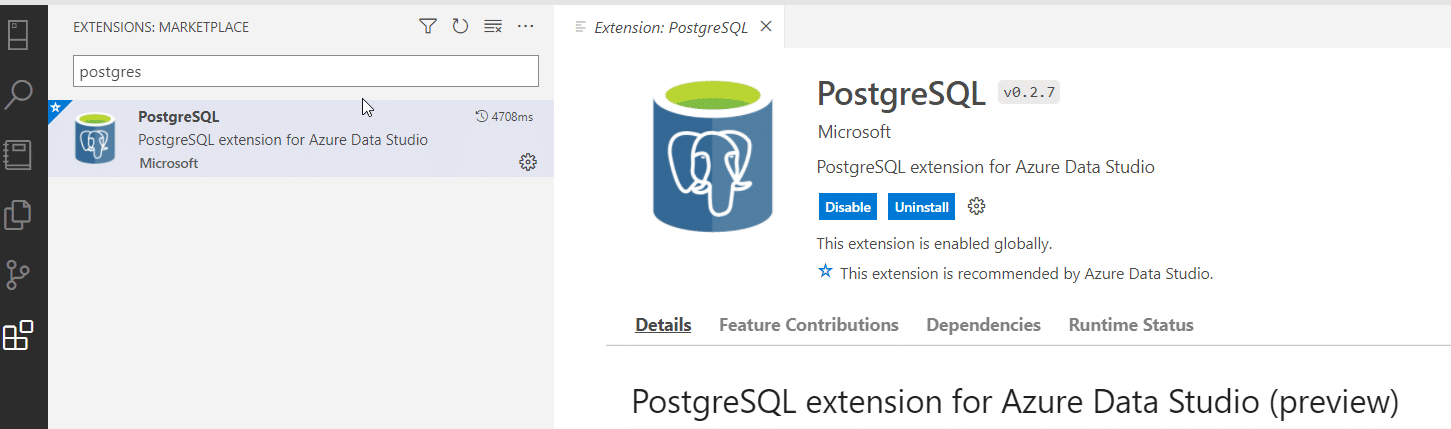

Within Azure Data Studio, click the “Extensions” button and type “postgres” in the search box to find and install the PostgreSQL extension.

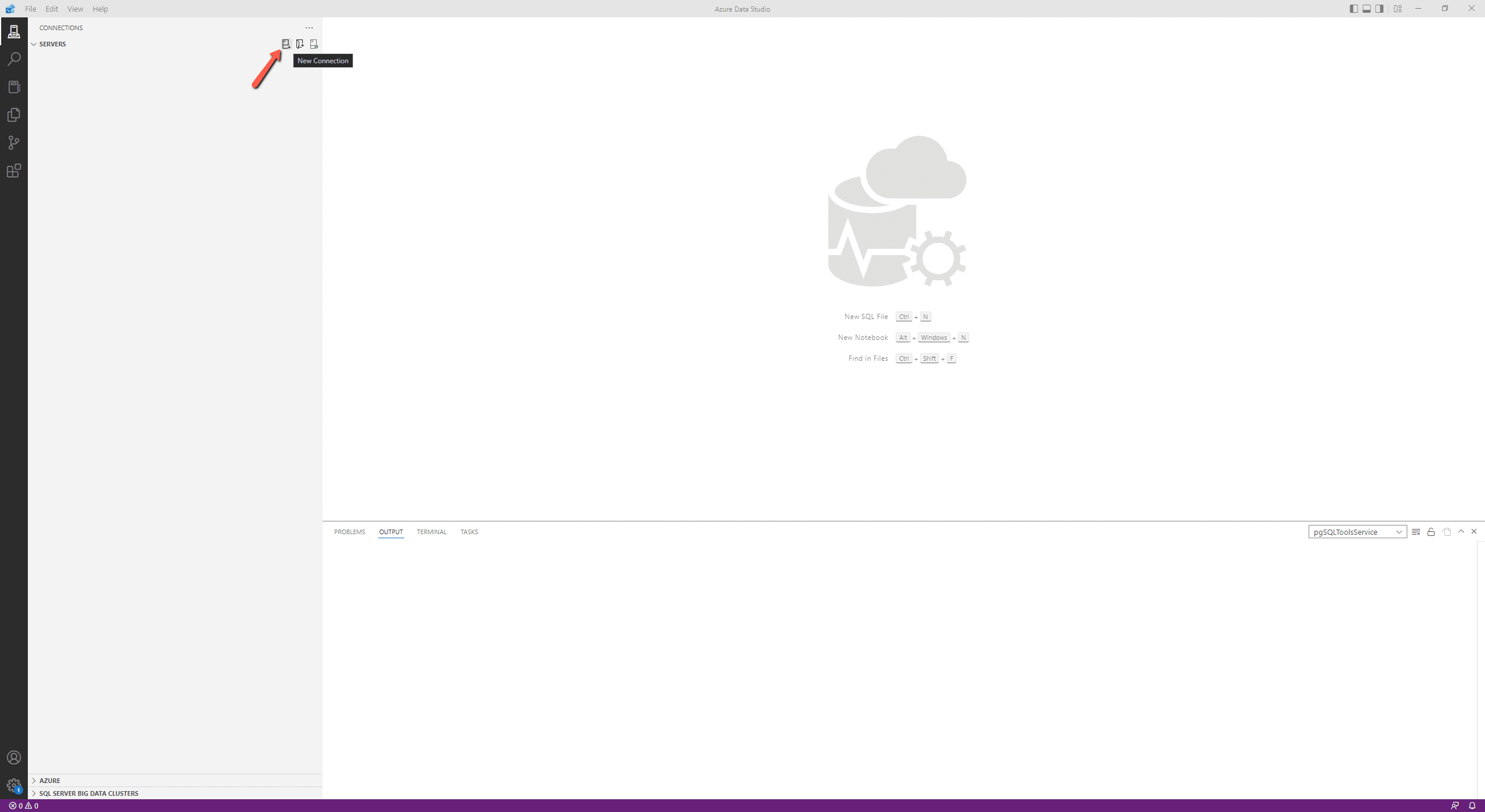

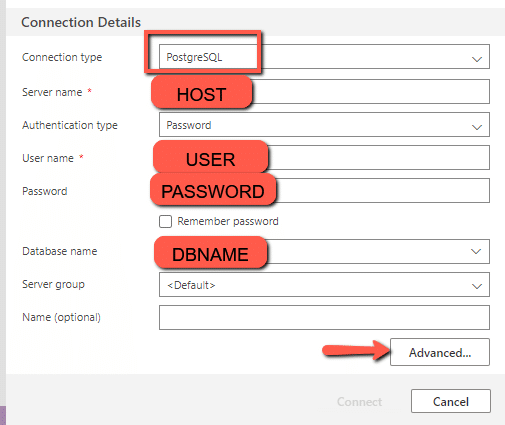

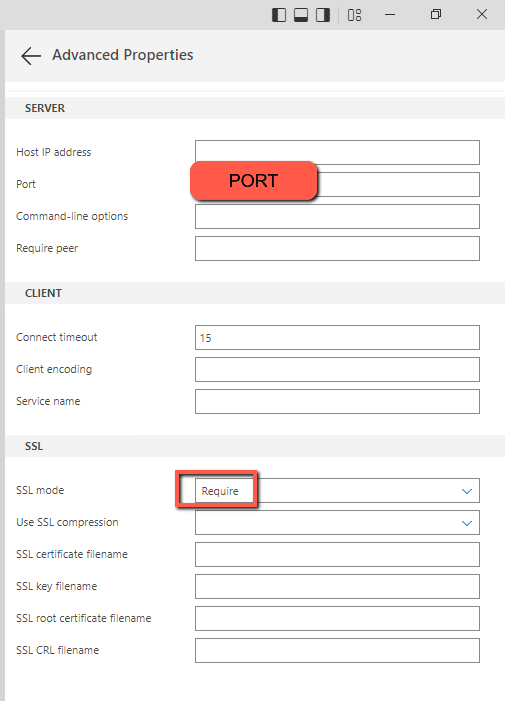

Add a new connection in Azure Data Studio, change the connection type to “PostgreSQL,” and enter the “PSQL” arguments from the Cloud Storage page. NOTE: you will need to click “Advanced” to enter the Port and to change the SSL mode to “require.”

Depending on your organization’s security protocols, one additional step might be needed: whitelisting Optilogic’s PostgreSQL server. This is done by whitelisting the host URL (*.database.optilogic.app) and the port (6432). If you are unsure how to whitelist the server or do not have the necessary permissions, please contact your IT department or network administrator for assistance.

The following instructions show how to establish a local connection, using Power BI, to an Optilogic model that resides within our platform. These instructions will show you how to:

To make a local connection, you must first open a firewall connection between your current IP address and the Optilogic platform. Navigate to the Cloud Storage app (note that the app selection on the left-hand side of the screen might need to be expanded). Check whether your current IP address is authorized and, if not, add a rule to authorize it. You can optionally set an expiration date for this authorization.

If you are working from a new IP address, a banner notification should be displayed to let you know that the new IP address needs to be authorized.

From the Databases section of the Cloud Storage page, click on the database that you want to connect to. Then, click on the Connection Strings button to display all of the required connection information.

We have connection information for the following formats:

To select the format of your connection information, use the drop-down menu labeled Select Connection String:

For this example, we will copy and paste the strings for the ‘PSQL’ connection. The screen should look something like the following:

You can click on any of the parameters to copy them to your clipboard, and then paste them into the relevant field when establishing the PSQL ODBC connection.

Many tools, including Alteryx, use Open Database Connectivity (ODBC) to connect to the Cosmic Frog model database. To access the Cosmic Frog model, you will need to download and install the relevant ODBC drivers. The latest versions of the drivers are located here: https://www.postgresql.org/ftp/odbc/releases/

From there, click the latest parent folder, which, as of June 20, 2024, is REL-16_00_0005. Select and download the psqlodbc_x64.msi file.

When installing, use the default settings from the installation wizard.

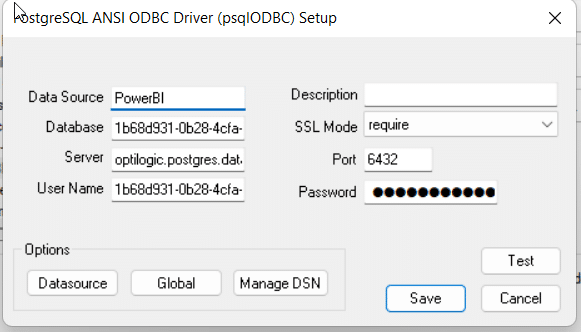

Within Windows, open the ODBC Data Sources app (hint: search for “ODBC” in the Windows search bar).

Click “Add” to create a new connection and then select “PostgreSQL ANSI(x64)” then click “Finish.”

Enter the details from your Cloud Storage connection strings (hint: click a value to copy it, then paste it into the matching field), and select “require” for SSL Mode.

Optionally, click “Test” to confirm that the connection works, then click “Save.”

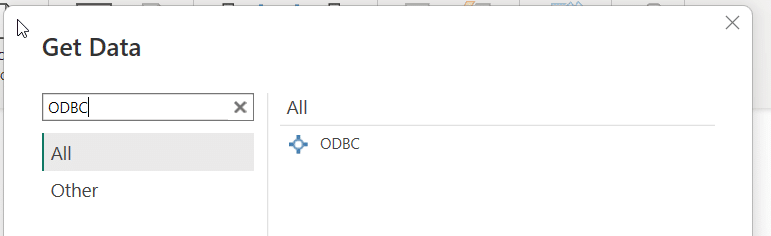

Open Power BI and select “Get data from another source.”

Search for “ODBC” in the Get Data window and click “Connect.”

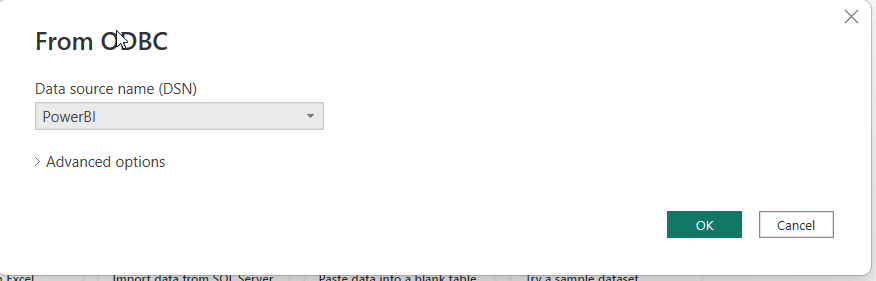

Select your data source from the drop-down and click “OK.”

Enter your username and password from the Cloud Storage page one last time.

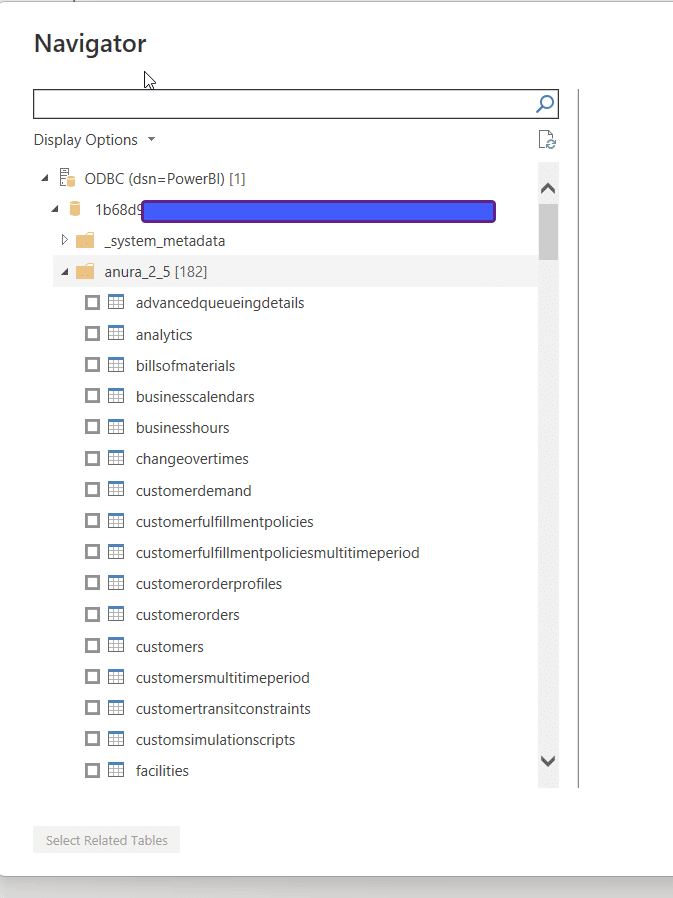

Select the tables you wish to see and use within Power BI.

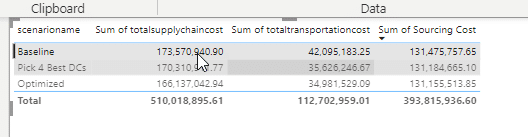

Create dashboards from your Cosmic Frog table data!

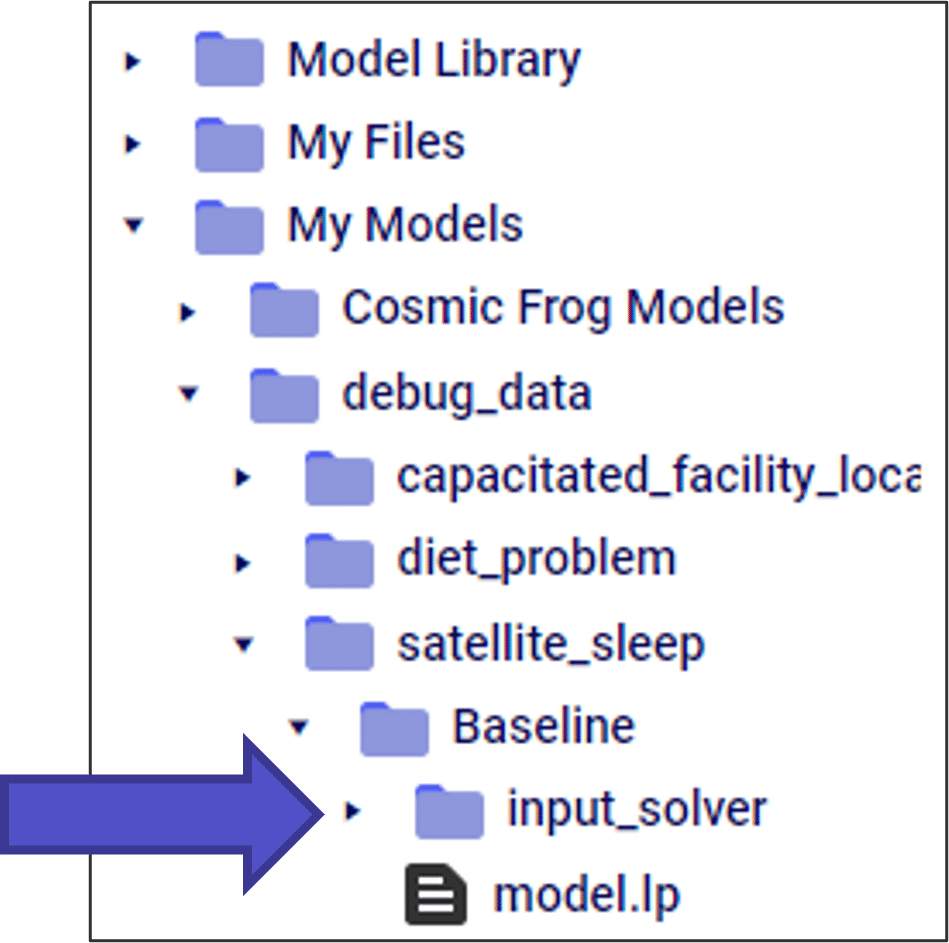

If you are comfortable with traditional linear programming techniques, you can select the “Write Input Solver Files” and “Write LP File” parameters to get useful output files.

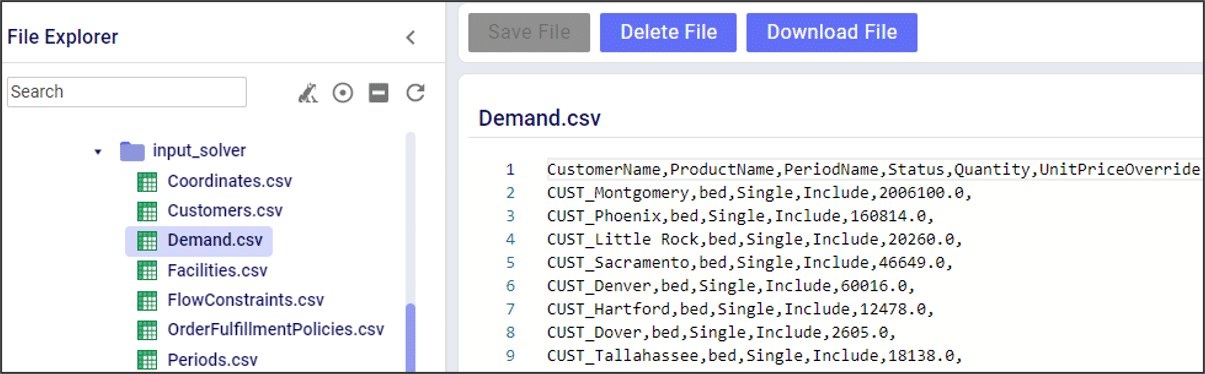

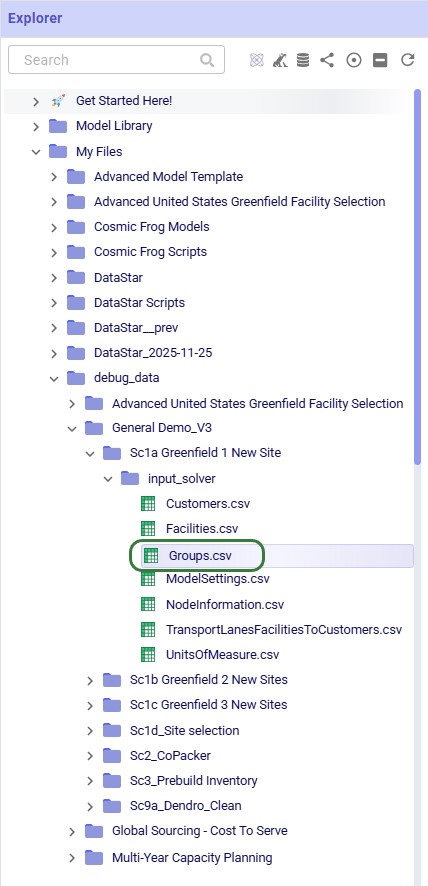

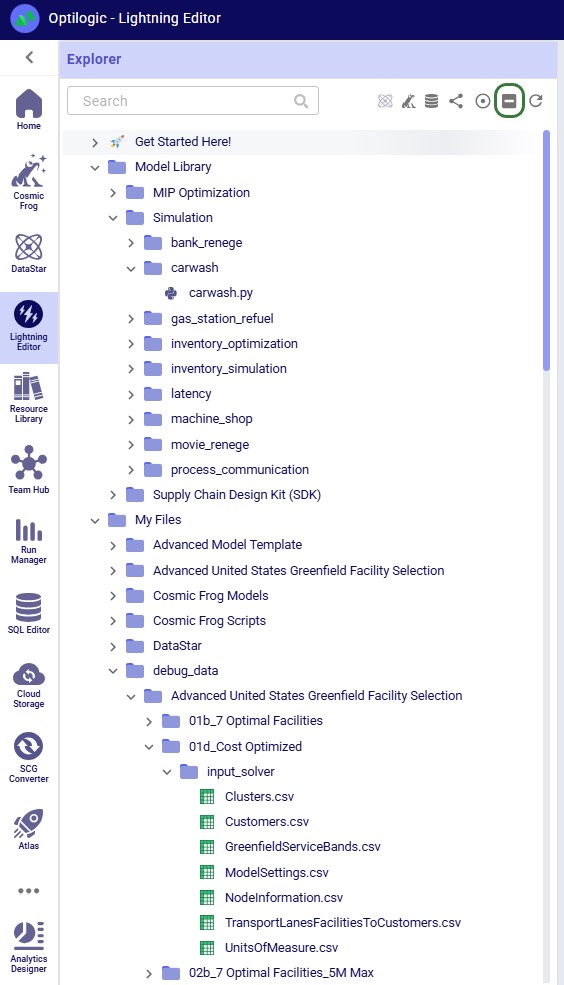

After the run, these files can be found in your file explorer.

The “input_solver” folder contains all the tables that are passed into the optimization solver. This is useful for:

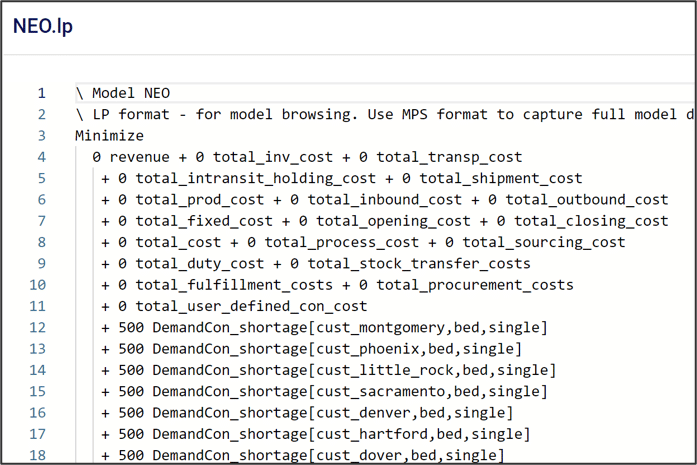

The “model.lp” file shows the model in a more traditional MIP optimization format, including the objective function and model constraints.

When using the Infeasibility Diagnostic engine, the LP file differs from that of a traditional Neo run. In this case, the cost coefficients in the objective function are set to 0. Instead, a positive cost coefficient is added to each slack constraint, and the goal of the model is to minimize the slack values. This allows us to find the “edge” of infeasibility.
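For readers used to LP notation, this change can be pictured as a generic elastic formulation. This is a sketch under stated assumptions, not the exact model Cosmic Frog writes: every constraint is shown as a row $a_i^\top x \ge b_i$ (an equality row would need a slack on each side), each row receives a nonnegative slack $s_i$ with a positive cost $w_i$, and the original cost vector $c$ is replaced by zeros.

$$
\begin{aligned}
\min_{x,\,s}\quad & 0^\top x + \sum_i w_i\, s_i, \qquad w_i > 0 \\
\text{s.t.}\quad & a_i^\top x + s_i \ge b_i \quad \text{for every constraint } i, \\
& x \ge 0, \quad s_i \ge 0 .
\end{aligned}
$$

This problem is always feasible, because $x = 0$ with $s_i = \max(0, b_i)$ satisfies every row. If the optimum has every $s_i = 0$, the original constraints can all be met; otherwise, the rows with positive slack show where, and by how much, the model must be relaxed to become feasible.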

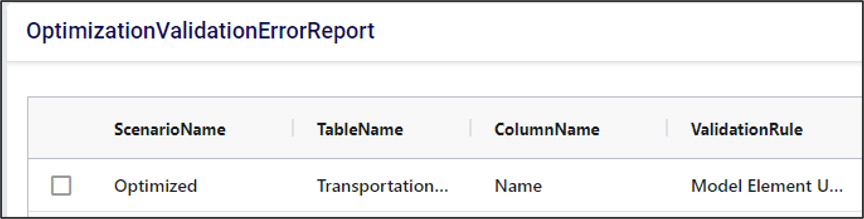

After every Cosmic Frog run, it’s a great habit to check the OptimizationValidationErrorReport table. Even for models that run successfully, this table can have useful information on how the input data is being processed by the solver.

Key columns in the validation error report can tell you:

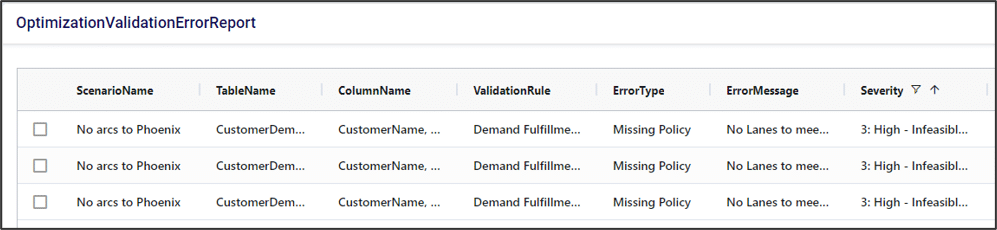

Validation errors that have “high” or “moderate” severity are likely to cause an infeasible model. In fact, Cosmic Frog has an “infeasibility checker” that looks for these kinds of errors and flags them before running the model to save you run time.

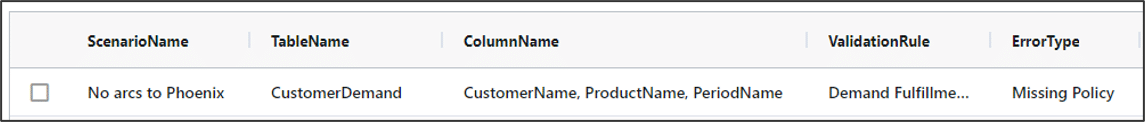

In this example, there are no transportation lanes that can deliver beds to CUST_Phoenix, so demand cannot be fulfilled. The infeasibility checker finds this and stops the model run before it even tries to optimize.

A couple of examples of how we could fix this error:

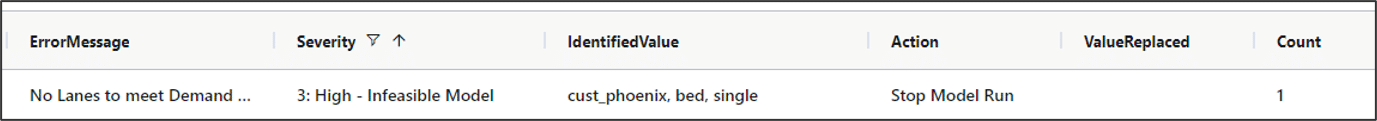

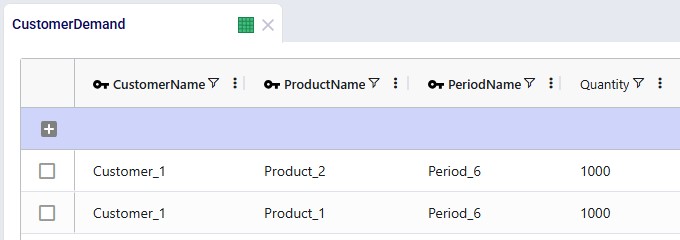

The OptimizationValidationErrorReport table is often very useful, even if a model “successfully” runs. This table catches potential errors that do not cause infeasibility but could change the model results. In this example, there is a typo in the CustomerDemand table for “CUST_Montgomery”, so the model drops that row from the demand table. This does not cause an infeasible model, as it just removes that demand constraint. However, it means that no product will be sent to this customer in the optimized result.
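This kind of mismatch can also be caught before a run by comparing the customer names in the demand data with those in the Customers table. A minimal sketch, assuming both name lists have been exported (for example to CSV) and loaded into Python. `find_unknown_customers` is a hypothetical helper, not part of Cosmic Frog, and the misspelling in the example is made up for illustration.

```python
import difflib
from typing import Iterable


def find_unknown_customers(
    demand_customers: Iterable[str], known_customers: Iterable[str]
) -> dict[str, list[str]]:
    """Map each demand customer name missing from the Customers list to close matches."""
    known_set = set(known_customers)
    known = sorted(known_set)
    report: dict[str, list[str]] = {}
    for name in demand_customers:
        if name in report or name in known_set:
            continue
        # Suggest up to 3 known names with a similarity ratio of at least 0.8.
        report[name] = difflib.get_close_matches(name, known, n=3, cutoff=0.8)
    return report


customers = ["CUST_Montgomery", "CUST_Phoenix"]
demand = ["CUST_Phoenix", "CUST_Montgomry"]  # hypothetical misspelling
print(find_unknown_customers(demand, customers))
```

Every demand row whose customer shows up in the report would otherwise be dropped silently, just like the CUST_Montgomery row above.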

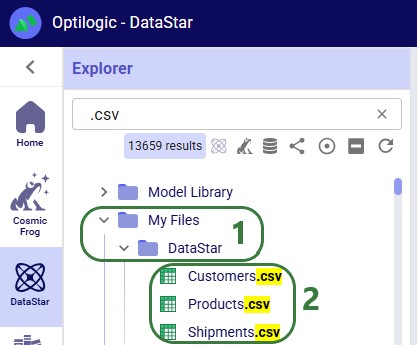

Cosmic Frog supports importing and exporting both CSV and Excel files directly through the application. This enables users to, for example:

In this documentation, we cover how users can import data into and export data out of Cosmic Frog, and illustrate this with multiple examples.

There are 2 methods of importing Excel/CSV data into Cosmic Frog’s input tables available to users:

We first cover how the data to be imported needs to be formatted, including some tips and specifics to keep in mind when using the upsert import method. Next, we walk through the steps to import a CSV/Excel file.

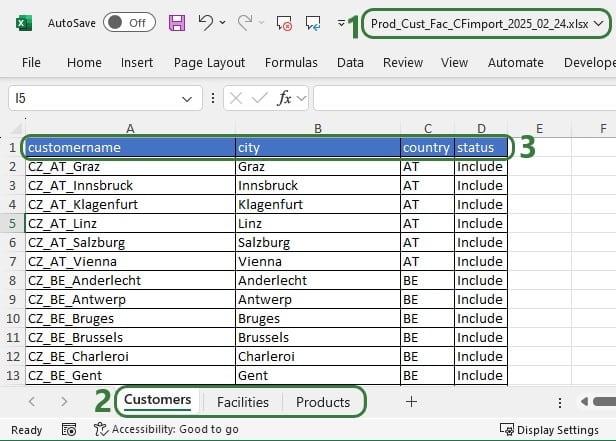

Data from CSV/Excel files is mapped to Cosmic Frog tables by matching column names, and by matching table names to the file name (CSV) or the worksheet name (Excel):

Data preparation tips:

CSV vs Excel: CSV files only have 1 “worksheet”, so it can only contain data to be imported into 1 table, whereas Excel files can have multiple worksheets with data to be imported to different tables in Cosmic Frog.
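Before importing, it can help to check how a CSV file will map onto a table. The sketch below derives the table name from the file name, counts the data rows, and lists header columns that match no known column. It is a hypothetical pre-check, not Cosmic Frog's import logic: `schema` is an assumed dictionary of lowercase table names to column names (for example taken from empty tables exported from a new model), and case-insensitive matching is an assumption.

```python
import csv
from pathlib import Path


def preview_csv_import(path: Path, schema: dict[str, set[str]]) -> dict:
    """Report which table a CSV maps to, its data row count, and unmatched columns."""
    table = path.stem.lower()  # "Customers.csv" -> "customers"
    if table not in schema:
        return {"table": None, "rows": 0, "unmatched": []}
    # utf-8-sig also strips a byte-order mark that Excel may add to CSV exports.
    with path.open(newline="", encoding="utf-8-sig") as f:
        reader = csv.reader(f)
        header = next(reader, [])
        # Count only rows that contain at least one non-blank cell.
        rows = sum(1 for row in reader if any(cell.strip() for cell in row))
    expected = {col.lower() for col in schema[table]}
    unmatched = [col for col in header if col.strip().lower() not in expected]
    return {"table": table, "rows": rows, "unmatched": unmatched}
```

For example, a `Customers.csv` file whose header contains an extra `Colour` column would be reported with `"unmatched": ["Colour"]`, flagging a column that would not map to any field in the table.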

Please take note of how existing records are treated when using the upsert import method to import into a table that already contains data:

We will also illustrate these behaviors through several examples.

Users can import one or multiple CSV or Excel files simultaneously. Please take note of how the import works in the following situations:
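The difference between the two import methods can be modeled in a few lines of Python. This is a simplified sketch, not Cosmic Frog's implementation. Rows are plain dictionaries, and `key` names the primary-key columns. The sketch assumes that upsert leaves records not present in the file untouched and that columns missing from the file keep their existing values. Product names and prices are hypothetical.

```python
Row = dict[str, object]


def upsert(table: list[Row], incoming: list[Row], key: tuple[str, ...]) -> list[Row]:
    """Update rows whose primary key matches an incoming row; insert the rest."""
    by_key = {tuple(row[col] for col in key): dict(row) for row in table}
    for row in incoming:
        k = tuple(row[col] for col in key)
        if k in by_key:
            # Key columns are equal by definition, so only non-key values change.
            # Assumption: columns absent from the file keep their existing values.
            by_key[k].update(row)
        else:
            # A row whose key value was edited does not match, so it is inserted
            # as a new record and the original record stays in place.
            by_key[k] = dict(row)
    return list(by_key.values())


def replace(_existing: list[Row], incoming: list[Row]) -> list[Row]:
    """Discard all existing rows and keep exactly the imported ones."""
    return [dict(row) for row in incoming]


products = [{"ProductName": "FG_Racket_1", "UnitPrice": 50}]
file_rows = [
    {"ProductName": "FG_Racket_1", "UnitPrice": 55},    # same key -> updated
    {"ProductName": "FG_Racket_Pro", "UnitPrice": 80},  # new key -> inserted
]
print(upsert(products, file_rows, key=("ProductName",)))
```

Because rows are matched on the primary key, editing a key value in the file (for instance, renaming a product) inserts a new record instead of renaming the existing one. This is why changes to primary-key columns call for the replace method.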

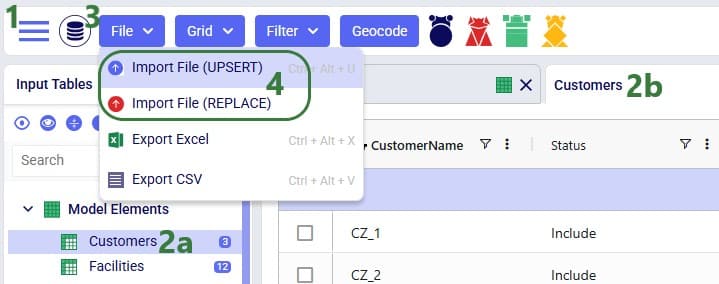

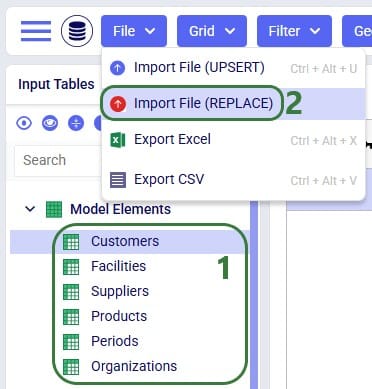

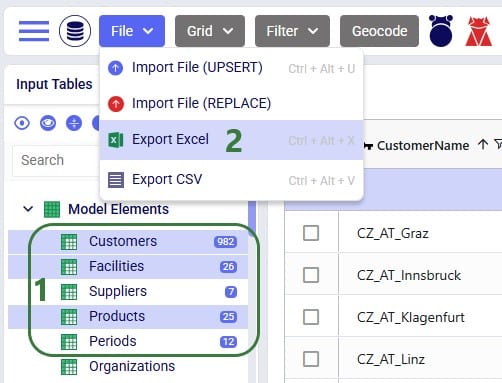

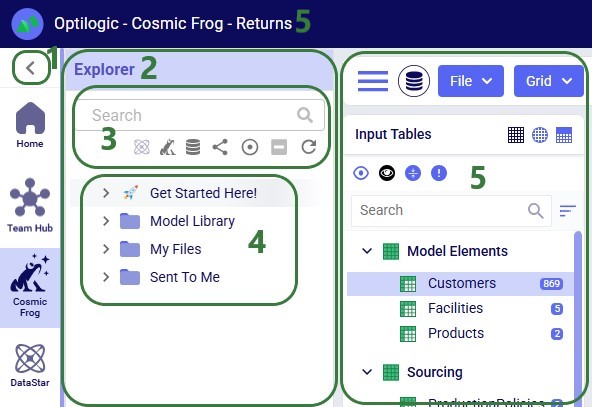

Once the prepared CSV/Excel file(s) are ready to import, the user has 2 ways of accessing the import and export methods: from the File menu in the toolbar and from the right-click context menu of an input table. Importing a file from the File menu looks like this:

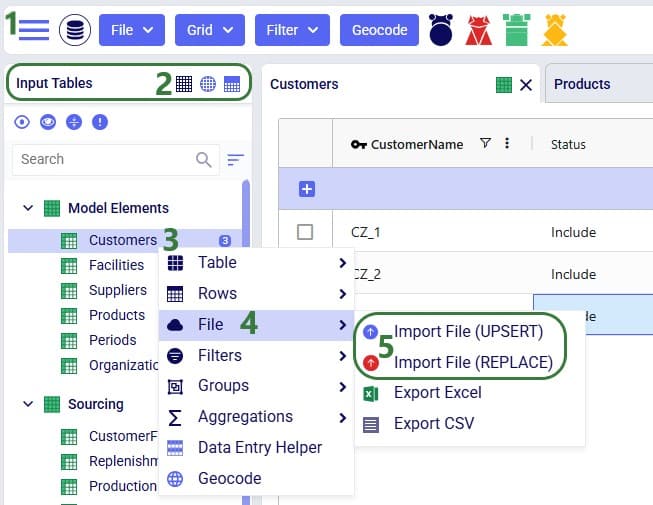

And when using the right-click context menu the steps to import a file are as follows:

When using the replace import method, a confirmation message is then shown, on which the user can click Import to continue the import or Cancel to abort.

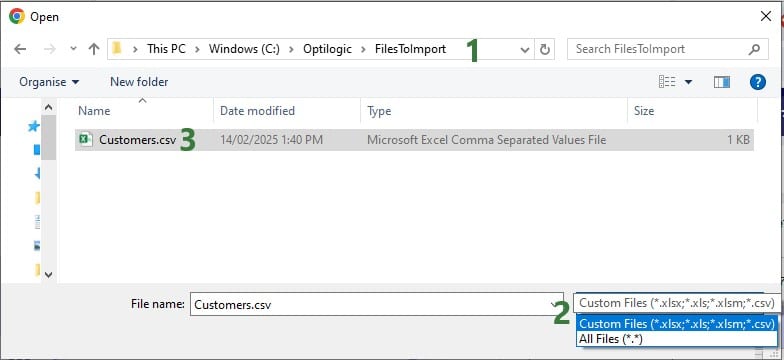

Next, a file explorer window opens in which the user can browse to and select the CSV/Excel file(s) to import:

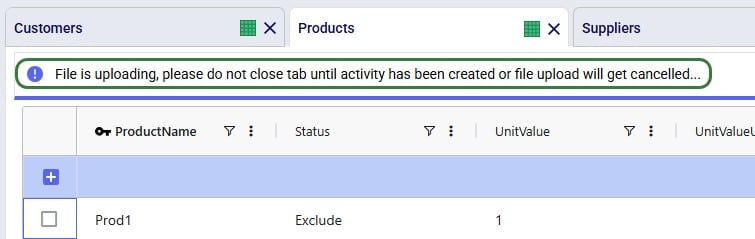

Once the import starts, a status message shows at the top of the active table:

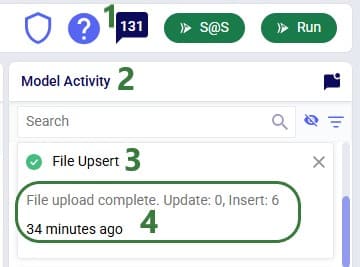

The Model Activity log will also have an entry for each import action:

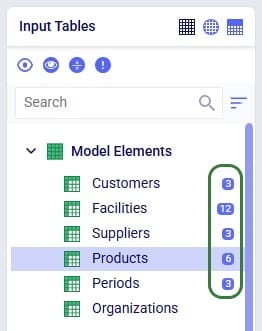

The user can see the results of the import by opening and inspecting the affected input table(s), and by looking at the row counts for the tables in the input tables list, outlined in green in this screenshot:

A common way to start building a new model in Cosmic Frog is to make use of the replace import method to populate multiple tables simultaneously with data from Excel or CSV files. These files have typically been prepared from ERP extracts which have been manipulated to match the Cosmic Frog table and column names. This way, users do not need to enter data manually into the Cosmic Frog input tables, which would be very laborious. Note that it can be helpful to first export empty tables from a new, empty Cosmic Frog model to have a template to start filling out (see the “Exporting to CSV/Excel Files” section further below on how to do this).

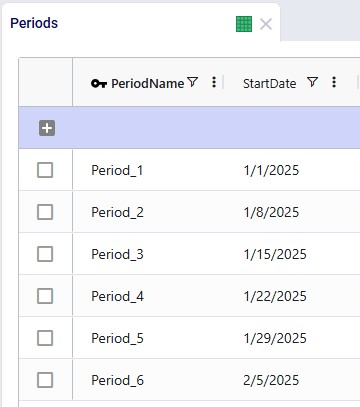

Starting with an empty new model in Cosmic Frog:

The user has prepared the following Excel .xlsx file:

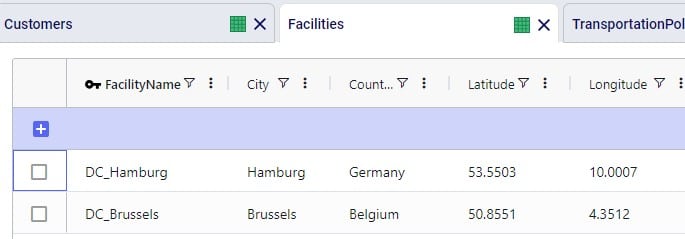

After importing this file into Cosmic Frog, we notice that the Customers, Facilities and Products tables now have row counts that match the number of records we had in the Excel file that was used for the import, and we can open the individual tables to see the imported records:

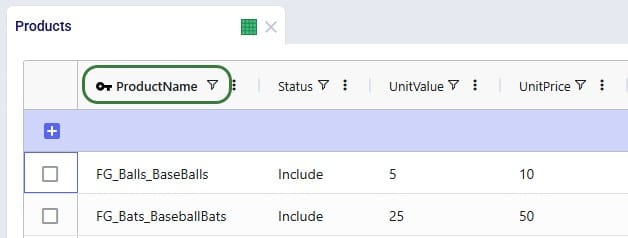

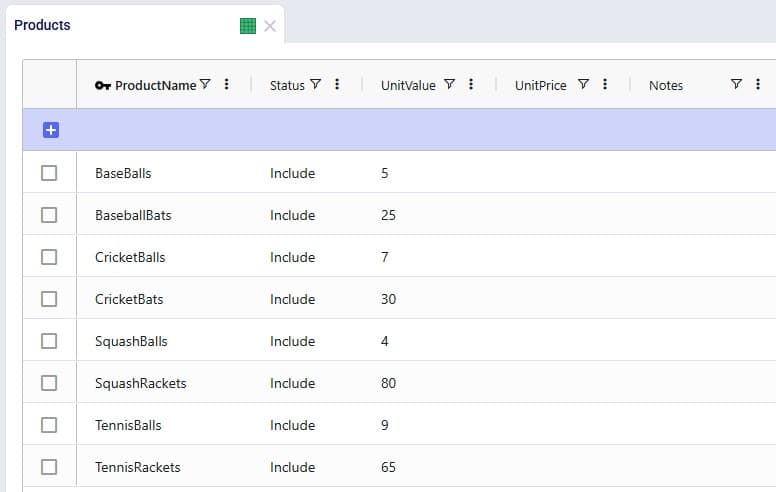

Consider a user who is modeling a sports equipment company and has populated the Products table of a Cosmic Frog model with 8 products as follows:

After working with the model for a while, the user realizes a few things:

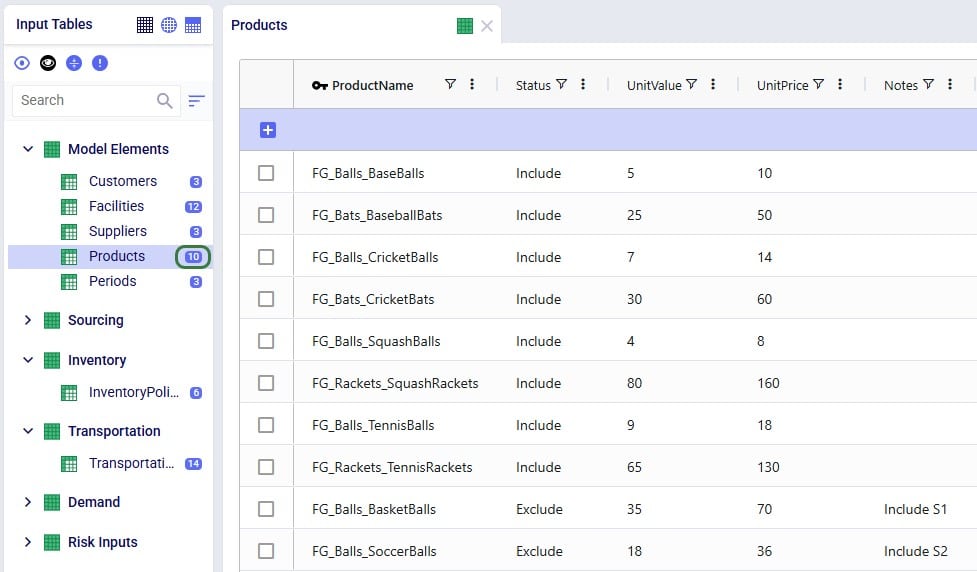

Item 1 changes the product names, and Product Name is part of the primary key of the Products table. The user therefore needs to use the replace import method, because the upsert method does not change the values of columns that are part of the primary key. The following is the .xlsx file the user prepares to replace the data in the Products table:

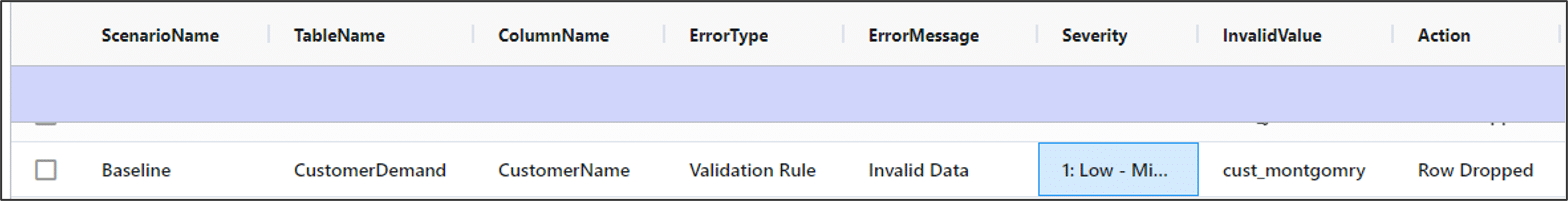

After importing the file using the replace method, the Products table looks like this:

We see the records are the exact same as what was contained in the Products.xlsx file that was imported, and the row count for the Products table has correctly gone up to 10 with the 2 new products added.

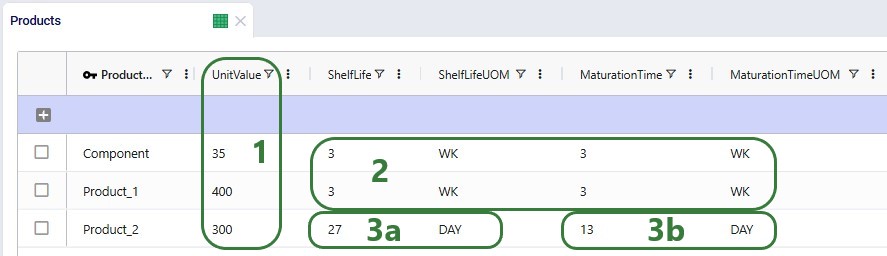

Continuing from the Products table in the last screenshot above, the user now wants to make a few additional changes as follows:

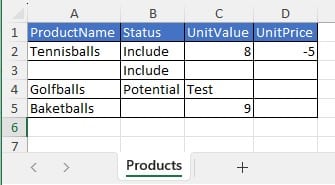

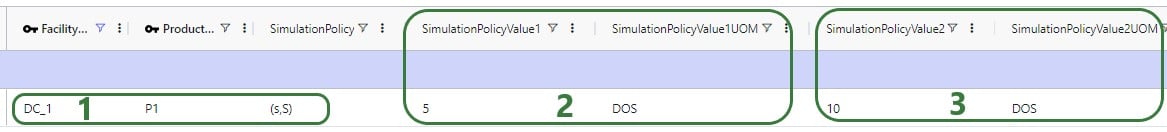

To make these changes to the Products table, the user prepares the following Products file to be upserted to the Products table, where the green numbers in the screenshot below match the items described in the bullet point list directly above:

After this file is imported into the Products table using the upsert method, the table contains the following records; the changed/added ones are listed at the bottom:

In the boxes outlined in green we see that all the expected changes and the insertion of the 1 new record have been made.

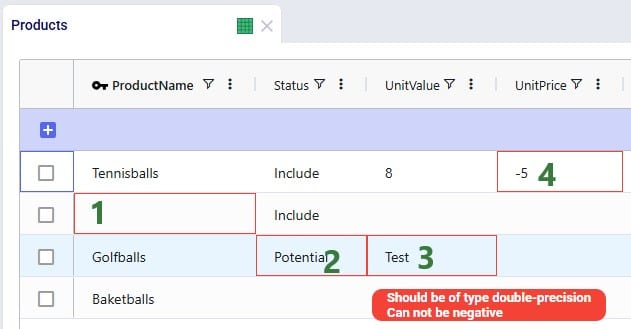

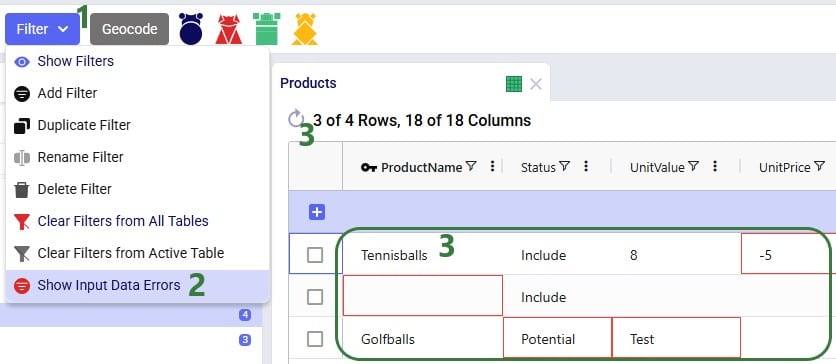

Let us also illustrate what happens when files with invalid/missing data are imported. We will use the replace import method for this example, but similar results will be seen when using the upsert method. The following screenshot shows a Products table that has been prepared in Excel, where we can already see several issues: a blank Product Name, a negative value for Unit Price, etc.

After this file is imported to the Products table using the replace method, the Products table will look as follows:

The cells that are outlined in red contain invalid values. Hovering over each cell will show a tooltip message describing the problem.

For tables with many records, it may be hard to find the fields with a red outline manually. To help with this, there is a standard filter that the user can apply to show all records that have 1 or more input data errors:

In conclusion, Cosmic Frog lets a user import invalid data and then helps the user identify the data issues with red outlines, hover-over tooltips, and the Show Input Data Errors filter.
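When data is prepared outside Cosmic Frog, a similar check can be run on the file before importing it. A minimal sketch covering only the issue types named above (blank Product Name, negative Unit Price), plus non-numeric prices. The column keys `ProductName` and `UnitPrice` are hypothetical, and the sketch does not reproduce Cosmic Frog's own validation rules.

```python
def input_data_errors(row: dict) -> list[str]:
    """Return the problems found in one Products row (hypothetical rules)."""
    errors = []
    if not str(row.get("ProductName") or "").strip():
        errors.append("ProductName is blank")
    price = row.get("UnitPrice")
    if price not in (None, ""):
        try:
            if float(price) < 0:
                errors.append("UnitPrice is negative")
        except (TypeError, ValueError):
            errors.append("UnitPrice is not a number")
    return errors


def rows_with_errors(rows: list[dict]) -> list[tuple[int, list[str]]]:
    """Mimic a 'show input data errors' filter: keep only rows with problems."""
    return [(i, errs) for i, row in enumerate(rows) if (errs := input_data_errors(row))]


rows = [
    {"ProductName": "FG_Ball", "UnitPrice": 10},
    {"ProductName": "", "UnitPrice": -5},
    {"ProductName": "FG_Bat", "UnitPrice": "abc"},
]
print(rows_with_errors(rows))
```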

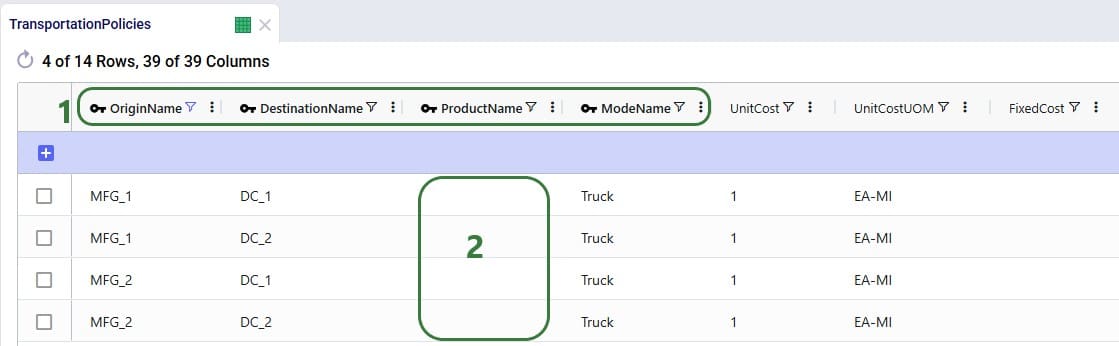

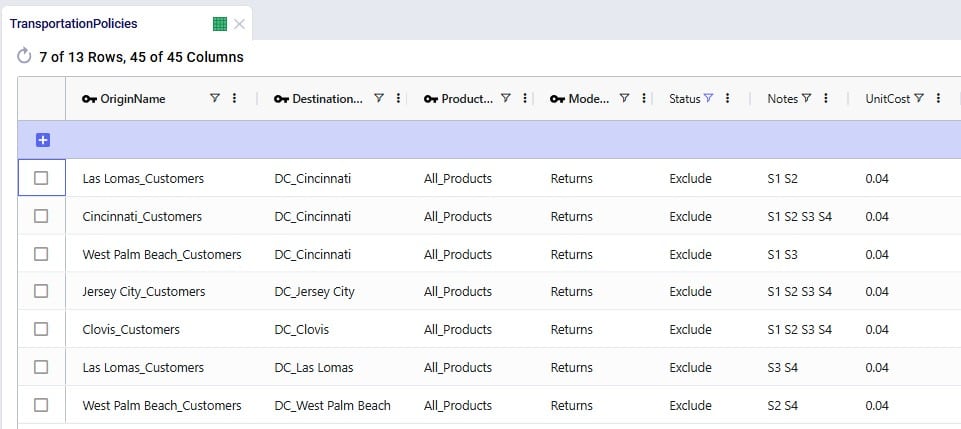

Consider the following Transportation Policies table:

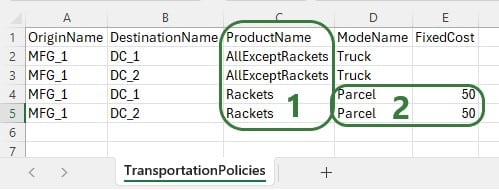

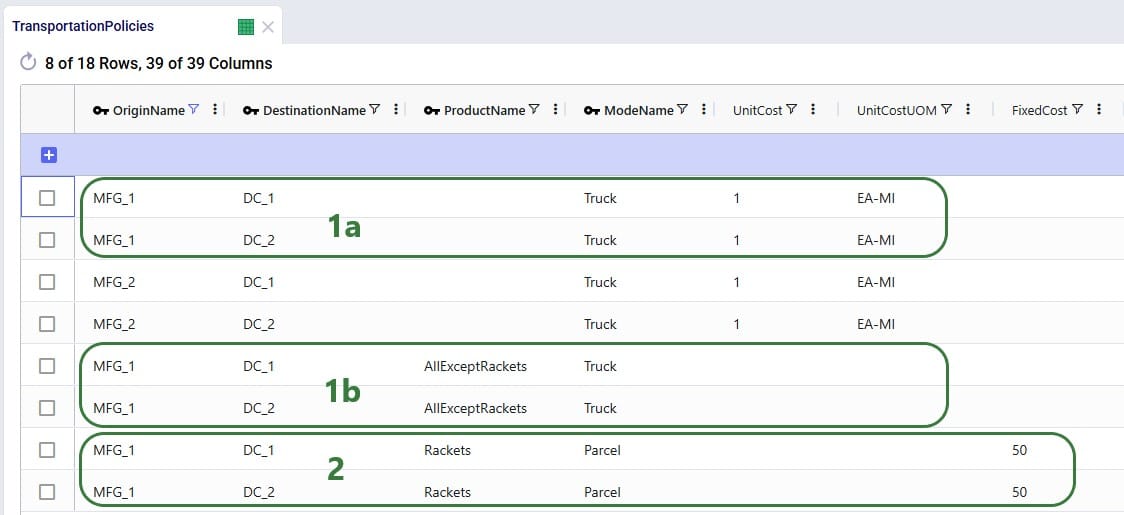

There is now a change: from MFG_1, all racket products need to be shipped by Parcel for a fixed cost of $50. The user creates 2 Named Filters in the Products table (see the Named Filters in Cosmic Frog help center article): one named Rackets that selects all racket products (products whose product name starts with FG_Racket), and one named AllExceptRackets that selects all non-racket products (products whose product name does not contain racket). Next, the user prepares the following TransportationPolicies.csv file to upsert into the Transportation Policies table, with the intention of updating the first 2 records in the existing table to apply specifically to the AllExceptRackets products and adding 2 new records for the Rackets products:

The result of using this file to upsert to the Transportation Policies table is as follows:

This example shows that users need to be mindful of which fields are part of a table’s primary key, and remember that the upsert import method cannot change the values of primary key fields. An example workflow that achieves the desired changes to the Transportation Policies table is as follows:

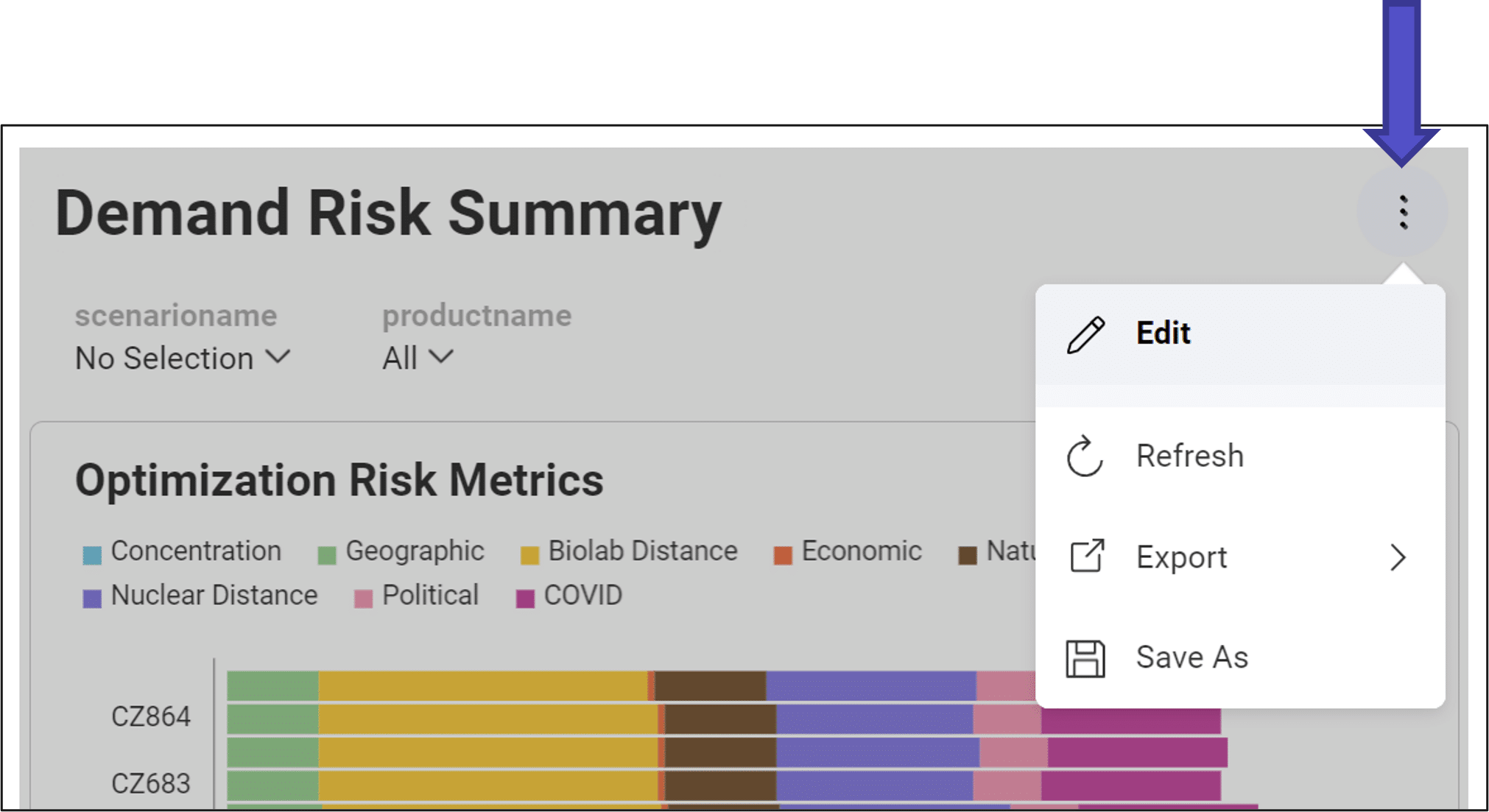

It is possible to export a single table or multiple tables (input and output tables) to CSV or Excel from Cosmic Frog. As with importing data from CSV/Excel, the user can access the export options in 2 ways: from the File menu in the toolbar and from the context menus that come up when right-clicking on tables in the input/output/custom tables lists.

Please note:

The steps to export multiple tables to an Excel file are as follows:

Once the export starts, the following message appears at the top of the active table:

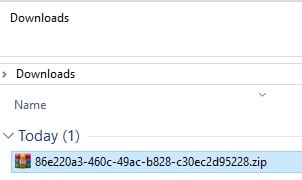

Once the export is complete, the exported file can be found in the folder where the user’s downloaded files are saved:

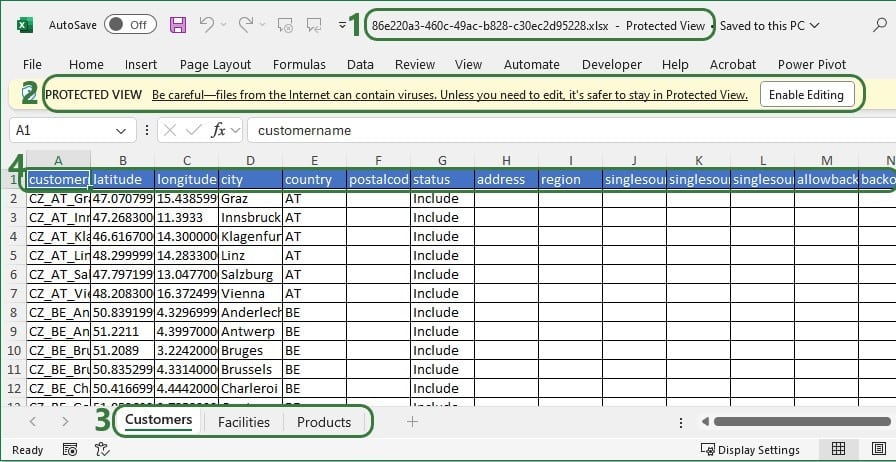

When exporting multiple tables to Excel or CSV, the downloaded file will be a .zip file with an automatically generated name based on the model’s Cosmic Frog ID. Extracting the zip-file will show an .xlsx file of the same name, which can be opened in Excel:

These are the steps to export multiple tables to CSV:

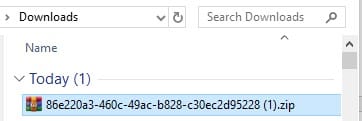

When the export starts, the same “File is exporting…” message as shown in the previous section appears at the top of the active table. Once the export process is finished, the exported file can again be found in the folder where the user’s downloaded files are saved:

The file is again a zip-file, and it has the same name based on the model’s Cosmic Frog ID, just appended with (1), as there is already a zip-file of the same name in the Downloads folder from the previous export to Excel. Unzipping the file creates a new sub-folder of the same name in the Downloads folder:

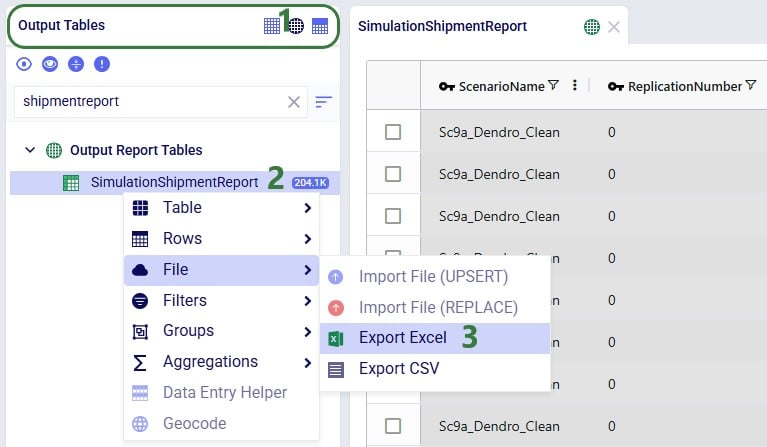

Exporting a single table to Excel can also be done from the File menu, in the same way as multiple tables are exported to Excel, which was shown above in the “Export Multiple Tables to Excel” section. Now, we will show the second way of doing this by using the context menu that comes up when right-clicking on a table:

When the export starts, the same “File is exporting…” message as shown above appears at the top of the active table. Once the export process is finished, the exported file can again be found in the folder where the user’s downloaded files are saved:

The name of the exported Excel file matches that of the table that was exported.

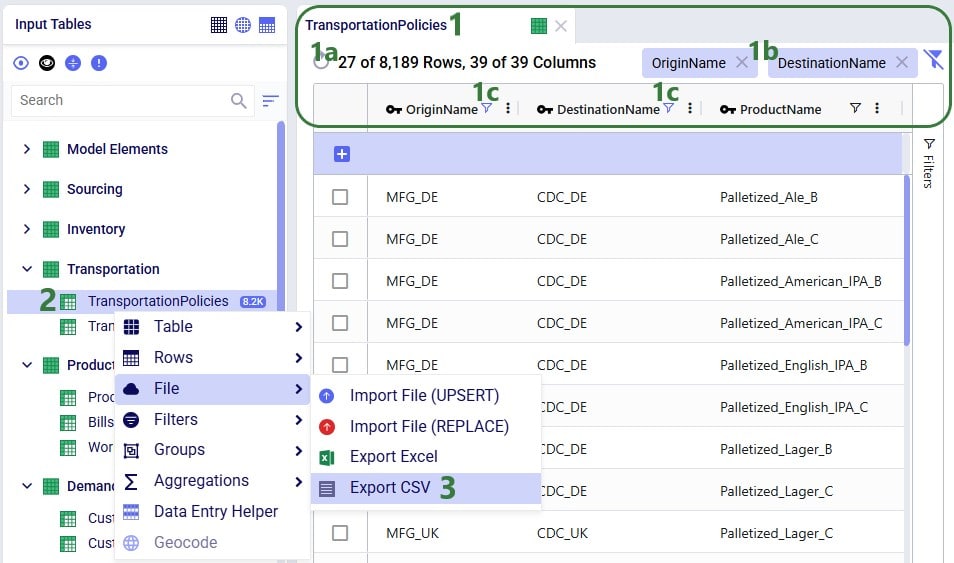

Exporting a single table to CSV can also be done from the File menu, in the same way as multiple tables are exported to CSV, which was shown above in the “Export Multiple Tables to CSV” section. Now, we will show the second way of doing this by using the context menu that comes up when right-clicking on a table:

When the export starts, the same “File is exporting…” message as shown above appears at the top of the active table. Once the export process is finished, the exported file can again be found in the folder where the user’s downloaded files are saved:

For single tables exported to CSV, the name of the file is the same as the name of the exported table. If the Cosmic Frog table was filtered, the file name is appended with “_filtered”, as it is here, to remind the user that only the filtered rows are contained in this exported file.

This video guides you through creating your free account and the features of the Optilogic Cosmic Frog supply chain design platform.

If you are running into issues receiving your account confirmation email, please see the troubleshooting article linked here.

Greenfield analysis (GF) is a method for determining the optimal location of facilities in a supply chain network. The Greenfield engine in Cosmic Frog is called Triad; the name comes from the oldest known species of frog, Triadobatrachus. You can think of it as the starting point for the evolution of all frogs, and it serves as a great starting point for modeling projects too! We can use Triad to identify 3 key parameters:

GF is a great starting point for network design: it solves quickly and can reduce the number of candidate site locations in complicated design problems. However, a standard GF requires some assumptions to solve (e.g. single time period, single product). As a result, the output of a Triad model is best suited as initial information for building a more robust Cosmic Frog optimization (Neo) or simulation (Throg) model.

You can run GF in any Cosmic Frog model. Running a GF model only requires two input tables to be populated:

A third important table for running GF is the Greenfield Settings table in the Functional Tables section of the input tables. We call our GF approach “Intelligent Greenfield” because of the many parameters that can be configured in this settings table. The Greenfield Settings table is always populated with defaults, which users can change as needed. See the Greenfield Setting Explained help article for an explanation of the fields in this table.

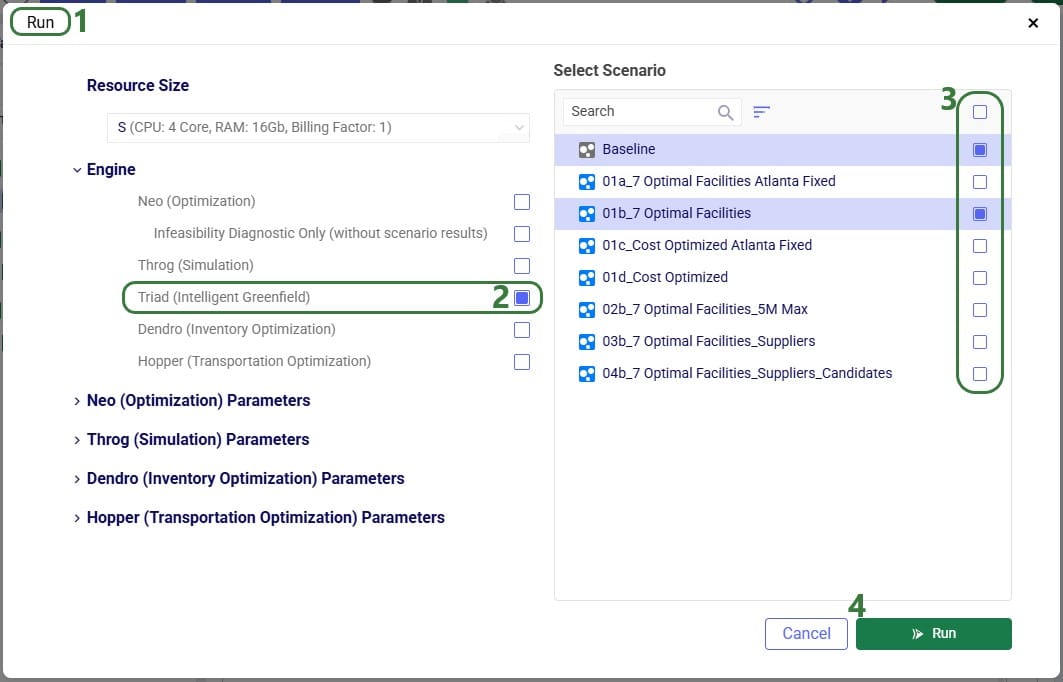

A greenfield analysis starts with clicking the “Run” button at the top right of the Cosmic Frog application, just like a Neo or Throg model.

After clicking on the Run button, the Run screen comes up:

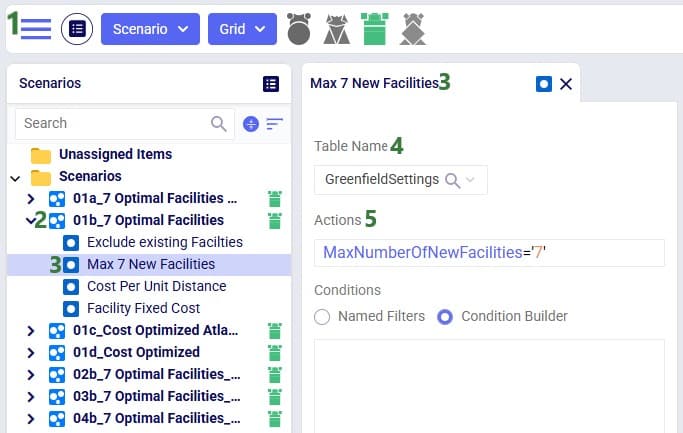

Besides making changes to values in the Customers and/or Customer Demand tables, GF scenarios often make changes to 1 or multiple settings on the Greenfield Settings table. The next screenshot shows an example of this:

To improve the solve speed of a Triad model, we can use customer clustering. Customer clustering reduces the size of the supply chain by grouping customers that lie within a given distance of each other into a single customer. We can set the clustering radius (in miles) in the Customer Cluster Radius column of the Greenfield Settings table.

Clustering is optional, and leaving this column blank is the same as turning off clustering.

While grouping customers can significantly improve the run time of the model, clustering may result in a loss of optimality. However, Greenfield is typically used as a starting point for a future Neo optimization model, so small losses in optimality at this phase are typically manageable.
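To make the clustering idea concrete, here is a minimal greedy radius-clustering sketch. It illustrates the general technique and is not the algorithm Triad uses. Distances are great-circle (haversine) miles, the largest customers seed clusters first, each cluster's demand is summed, and `None` stands in for a blank Customer Cluster Radius. Customer names, coordinates, and demand values in the example are hypothetical.

```python
import math
from typing import Optional

EARTH_RADIUS_MILES = 3958.8


def haversine_miles(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance in miles between two (latitude, longitude) points."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(h))


def cluster_customers(customers, radius_miles: Optional[float]):
    """Greedy radius clustering of (name, (lat, lon), demand) tuples.

    Customers are visited from largest to smallest demand; each joins the first
    cluster whose seed lies within radius_miles, otherwise it seeds a new cluster.
    A blank (None) radius disables clustering.
    """
    clusters = []
    for name, point, demand in sorted(customers, key=lambda c: -c[2]):
        for cluster in clusters:
            if radius_miles is not None and haversine_miles(cluster["seed"], point) <= radius_miles:
                cluster["members"].append(name)
                cluster["demand"] += demand
                break
        else:
            clusters.append({"seed": point, "members": [name], "demand": demand})
    return clusters


customers = [
    ("CUST_Phoenix", (33.45, -112.07), 100),
    ("CUST_Tempe", (33.42, -111.83), 50),    # about 14 miles from Phoenix
    ("CUST_Tucson", (32.22, -110.97), 80),   # about 106 miles from Phoenix
]
for c in cluster_customers(customers, radius_miles=25):
    print(c["members"], c["demand"])
```

Seeding from the largest customers keeps the biggest demand points at their own locations. This is one reasonable choice among several; a cluster could instead be placed at its demand-weighted centroid. The loss of optimality mentioned above comes from treating every member as if it sat at the cluster's location.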

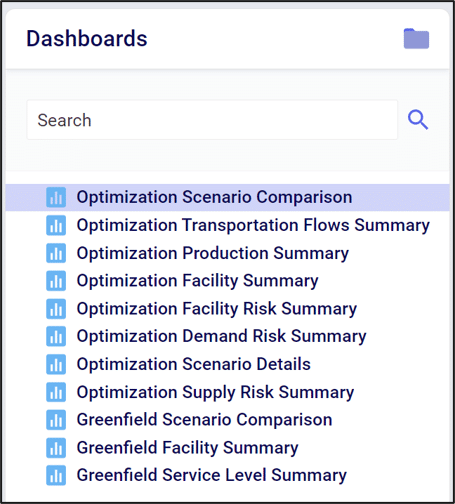

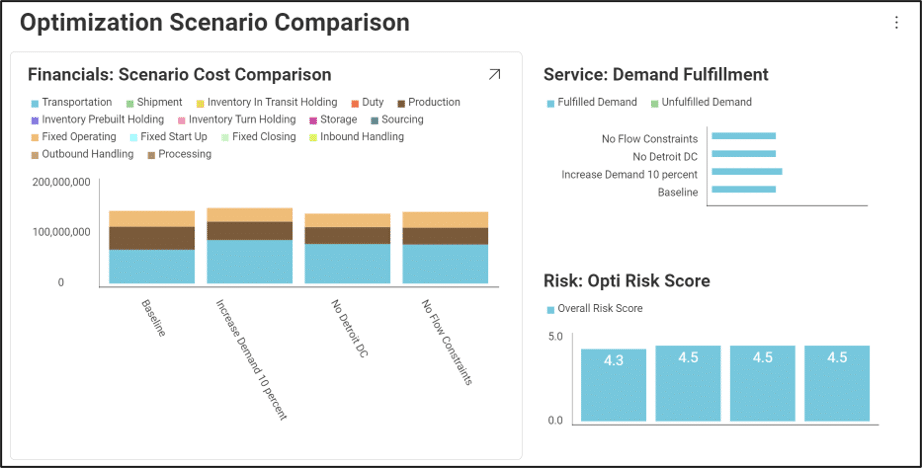

Once you have run a model, you can visualize your results using the Analytics tab.

In Analytics, a dashboard is a collection of visualizations. Visualizations can take on many forms, such as charts, tables or maps.

In Cosmic Frog, there are default dashboards available to help you analyze your model results.

The default dashboards highlight some common analytics and metrics. They are designed to be interacted with through a set of filters.

We can hover over visualization elements to get more information. This floating card of information is called a “Tooltip”.

We can customize existing dashboards to fit our needs. For more information see Editing Dashboards and Visualizations.

The only constant is change. When building our supply chains, the “optimal” design doesn’t only mean lowest cost. What happens if (or perhaps when) a disruption occurs? Fragile, low-cost supply chains can end up costing more in the long run if they aren’t resilient to the dynamic nature of today’s world.

We believe that optimality includes resilience. That’s why every Cosmic Frog run includes a risk rating from our DART risk engine.

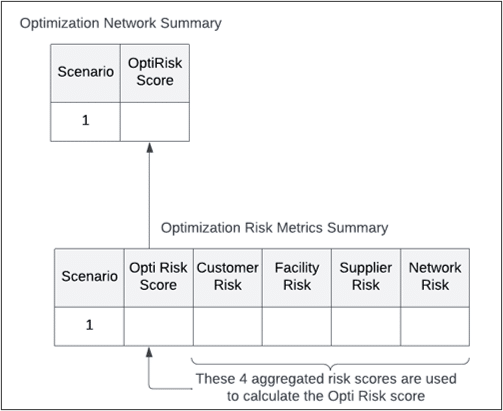

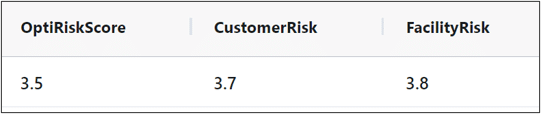

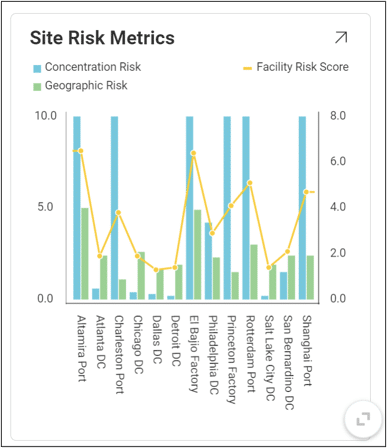

Every Cosmic Frog run outputs an Opti Risk score. The Opti Risk score is an aggregate measure of the overall supply chain risk. It includes the following sub-categories:

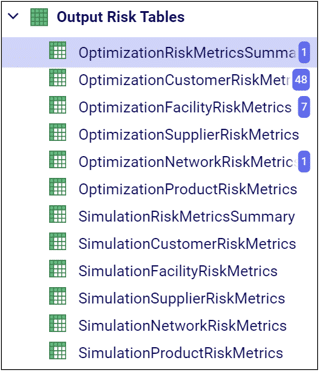

After running a model, you can find the Opti Risk score (as well as the scores for each of the sub-categories) in the output risk tables. The Opti Risk score can also be found in the OptimizationNetworkSummary table.

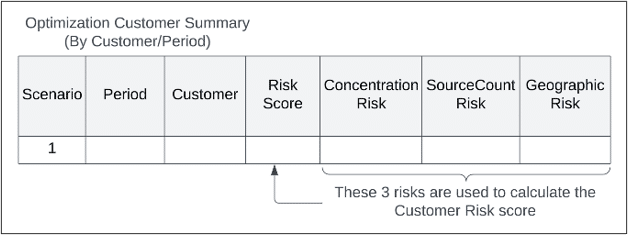

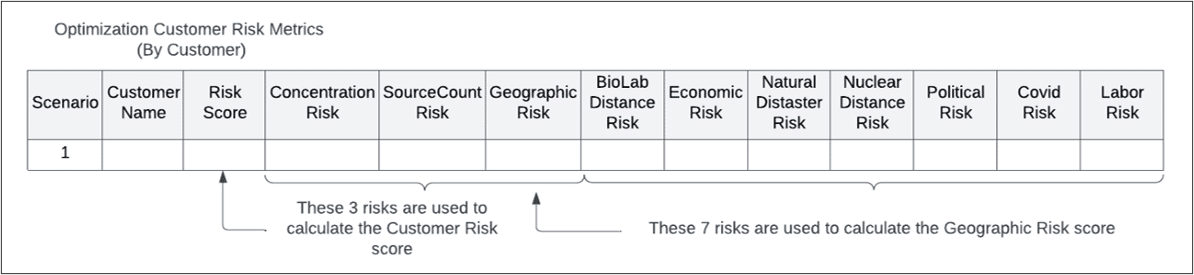

The overall Customer Risk score is an aggregation of each individual customer’s risk described in the OptimizationCustomerRiskMetrics or SimulationCustomerRiskMetrics tables. In each scenario, there is one risk score per customer per period.

Each customer risk score includes:

For each sub-category, the geographic risk score is also an aggregation of several risk factors:

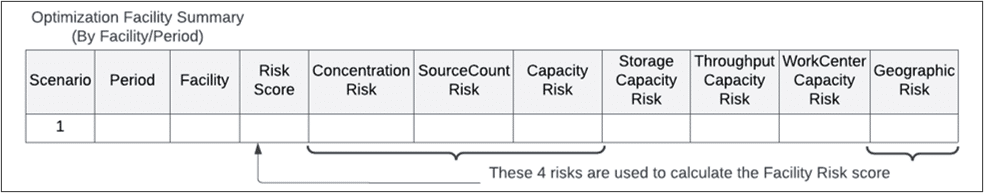

Like the customer risk score, the overall facility risk score is an aggregation of risk across all facilities in your supply chain. In the FacilityRiskMetric tables, there is an individual risk score per facility per period.

The facility risk score includes:

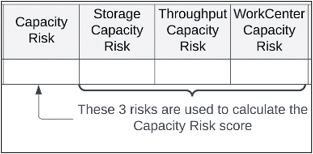

The capacity risk has three sub-components:

The facility geographic risk has the same components as the customer geographic risk.

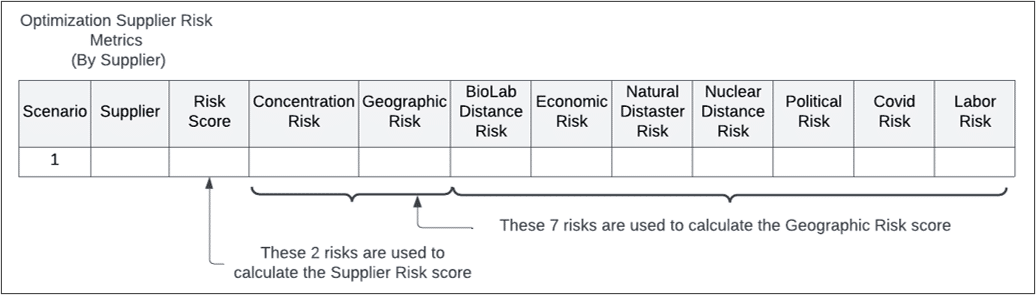

The supplier risk is calculated per supplier per period and includes:

Both the concentration and geographic risks include the same elements as described previously.

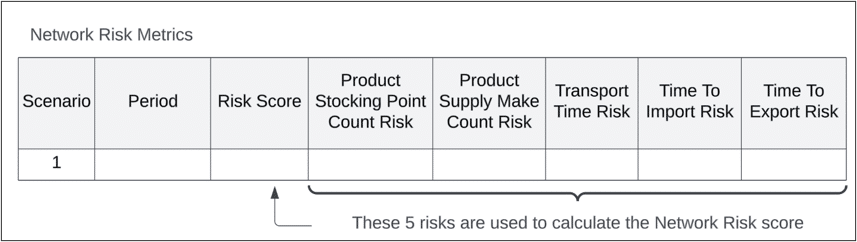

Network risk differs from the other risk scores in that it is not tied to a specific supply chain element. There is only one network risk score per scenario, and it includes:

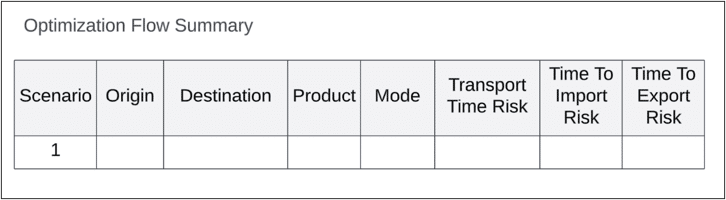

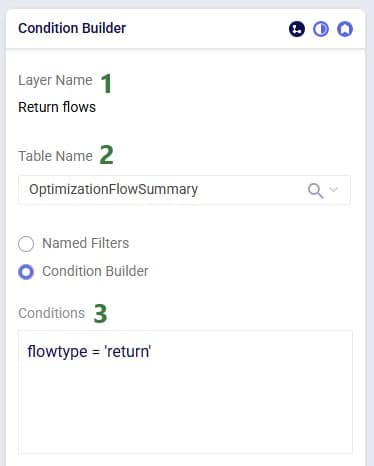

The transport and import/export time risks are aggregated across individual origin/destination pairs for every product and transport mode. The individual risk scores can be found in the OptimizationFlowSummary table.

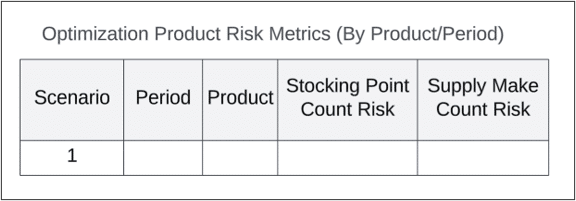

The stocking point count and supply make count risks are aggregations across every product and period. The individual risk scores can be found in the ProductRiskMetrics tables.

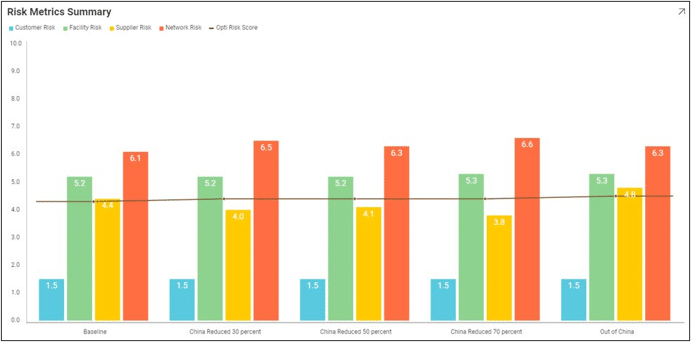

We can use our visualization tools to get a better sense of how risk varies across design scenarios.

Named Filters are an exciting new feature that allows users to create and save specific filters directly on grid views, which can then be used seamlessly across all policy tables, scenario items and map layers. For example, if you create a filter named “DCs” in the Facilities table to capture all entries with “DC” in their name, this Named Filter can then be applied in a policy table, providing a dynamic alternative to the traditional Group function.

Unlike Groups, Named Filters update automatically: adding or removing a DC record in the Facilities table is instantly reflected in the Named Filter, which streamlines the workflow and eliminates the need for manual updates. Additionally, when creating Scenario Items or defining Map Layers, users can select Named Filters to represent specific conditions and easily preview the data, making the process much quicker and simpler.

This help article first covers how Named Filters are created. The following sections discuss how Named Filters can be used on input tables, in scenario items, and on map layers, and the final section contains a few notes on deleting Named Filters.

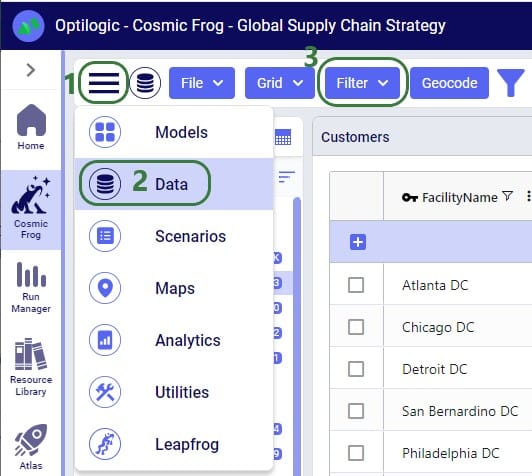

Named Filters can be set up and saved on any Cosmic Frog table: input tables, output tables, and custom tables. These tables are found in the Data module of a Cosmic Frog model:

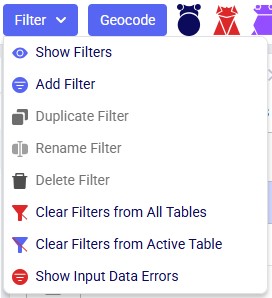

A quick description of each of the options available in the Filter drop-down menu follows here; most of these are covered in more detail in the remainder of this Help Article:

Note that an additional Save Filter option becomes available in this menu once a filter has been added and changes have subsequently been made to the table's filter conditions. The Save Filter option can then be used to update the existing named filter to reflect these changes.

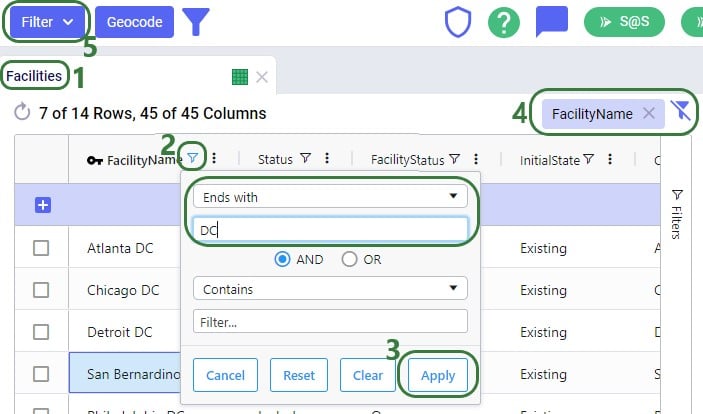

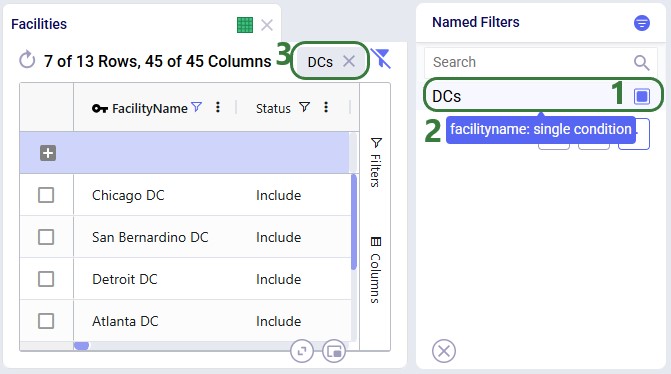

Let’s walk through setting up a filter on the Facilities table that filters out records where the Facility Name ends in “DC” and save it as a named filter called “DCs”:

There are 3 buttons below the list of filters as follows (these were obscured by the hover text in the previous screenshot):

There is a right-click context menu available for filters listed in the Named Filters pane, which allows the user to perform some of the same actions as those in the main Filter menu shown above:

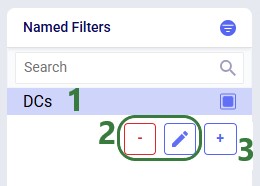

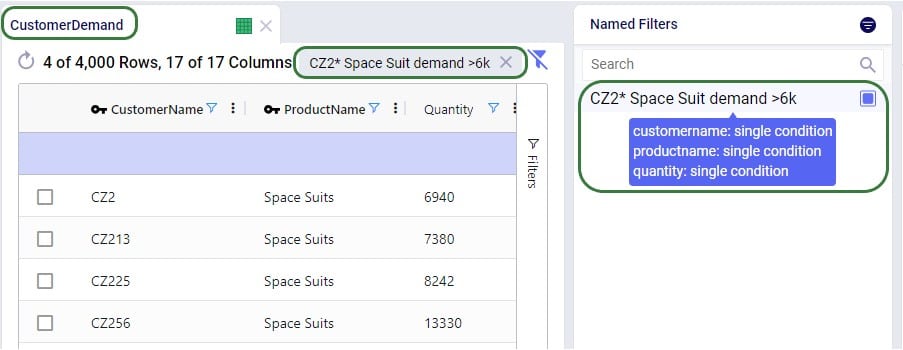

Named Filters can use filtering conditions that are applied to multiple fields in a table. The next example shows a Named Filter called “CZ2* Space Suit demand >6k” on the Customer Demand input table which uses filtering conditions on three fields:

Conditions were applied to 3 fields in the Customer Demand table, as follows: 1) Customer Name Begins With “CZ2”, 2) Product Name Contains “Space”, and 3) Quantity Greater Than “6000”. The resulting filter was saved as a Named Filter with the name “CZ2* Space Suit demand >6k” which is applied in the screenshot above. When hovering over this Named Filter, we indeed see the 3 fields and that they each have a single condition on them.
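The way such a multi-field Named Filter selects records can be sketched in a few lines of Python. This assumes, as the filter's name suggests, that a record must satisfy all three field conditions (a logical AND across fields). The records and the simplified field names are hypothetical.

```python
# Hypothetical Customer Demand records (field names simplified for illustration).
customer_demand = [
    {"customername": "CZ2_001", "productname": "Space Suit", "quantity": 7500},
    {"customername": "CZ2_002", "productname": "Space Suit", "quantity": 4000},
    {"customername": "CZ1_010", "productname": "Space Suit", "quantity": 9000},
    {"customername": "CZ2_003", "productname": "Helmet", "quantity": 8000},
]

# One condition per field, mirroring the three conditions described above.
conditions = {
    "customername": lambda v: v.startswith("CZ2"),  # Begins With "CZ2"
    "productname": lambda v: "Space" in v,          # Contains "Space"
    "quantity": lambda v: v > 6000,                 # Greater Than 6000
}


def matches_all(record: dict, conds: dict) -> bool:
    """Keep a record only if every field condition holds."""
    return all(check(record[field]) for field, check in conds.items())


kept = [r["customername"] for r in customer_demand if matches_all(r, conditions)]
print(kept)  # ['CZ2_001']
```

Each of the other three records fails exactly one condition, which is why only CZ2_001 remains.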

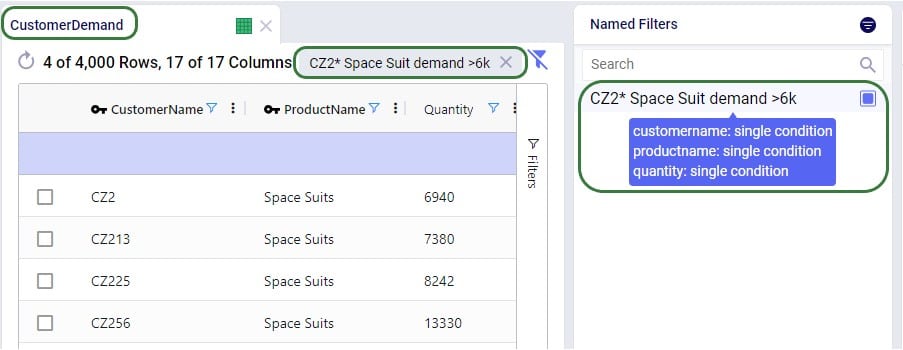

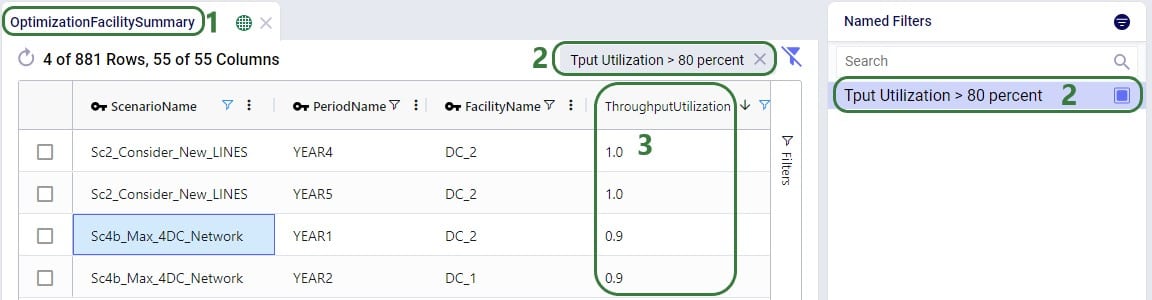

Named Filters can be created not only on input tables but also on output and custom tables. On output tables, this can for example expedite the review of results after running additional scenarios: once the runs are done, a pre-saved set of Named Filters can be applied one after the other, instead of re-typing each filter that shows the outputs of interest every time. This example shows a Named Filter on the Optimization Facility Summary output table that shows records where the Throughput Utilization is greater than 0.8:

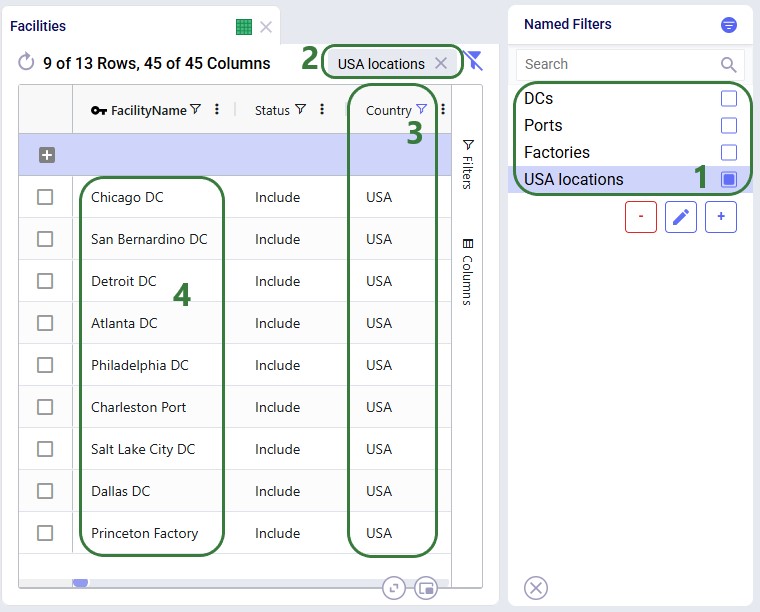

Next, we will see how multiple Named Filters can be applied to a table. In this example, there are 4 Named Filters set up on the Facilities table:

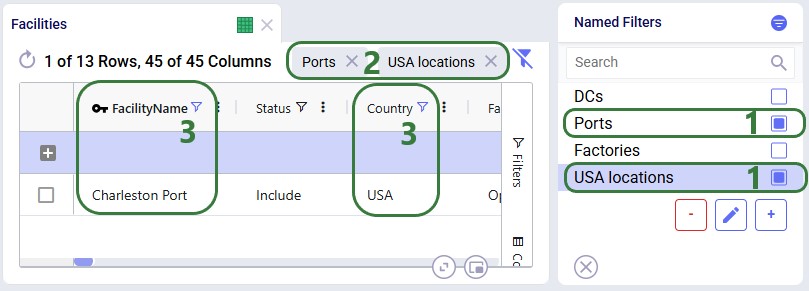

Next, we will apply another Named Filter in addition to this first one ("USA locations"). How the Named Filters work together depends on whether they filter on the same field or on different fields:

Now, if we want to filter out only Ports located in the USA, we can apply 2 of the Named Filters simultaneously:

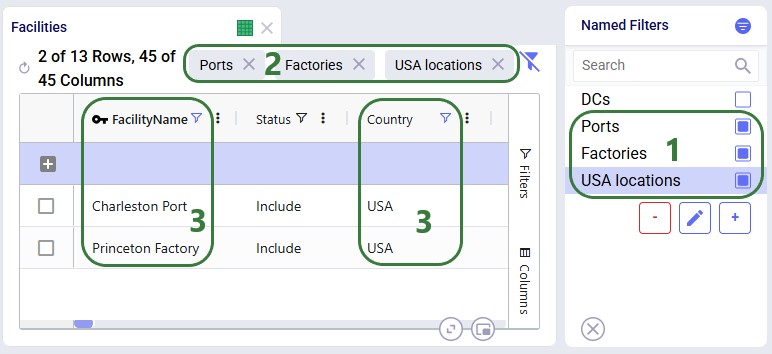

To show an example of how multiple named filters that filter on the same field work, we will add a third Named Filter:

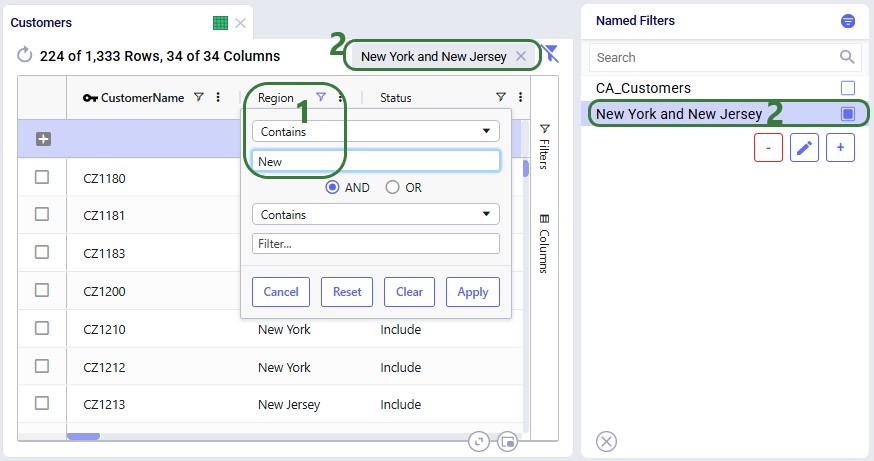

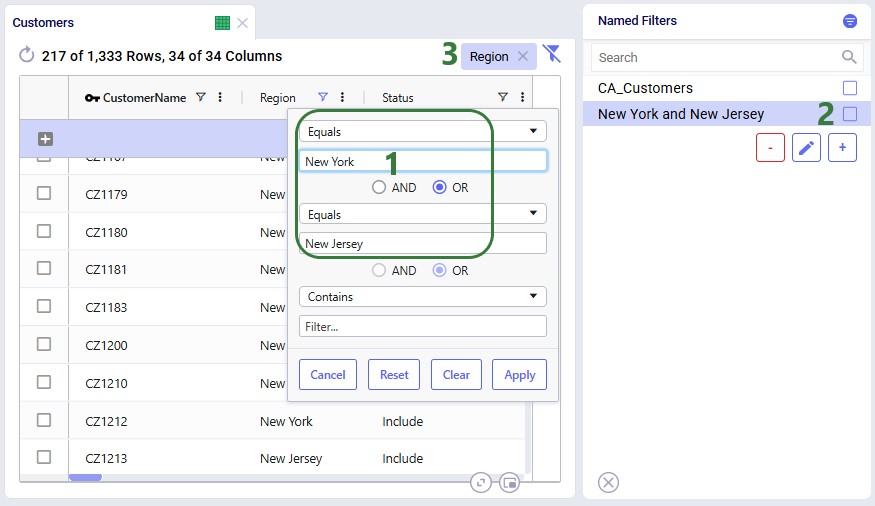

To alter an existing Named Filter, we can change its criteria and then save the resulting filter, replacing the original Named Filter. Let’s illustrate this through an example: in a model with about 1.3k customers in the US, we have created a Named Filter “New York and New Jersey”, but later realize that this filter also includes customers in New Hampshire and New Mexico:

In reality, this filter also filters out customers located in the regions (states) of New Hampshire and New Mexico in addition to those in New York and New Jersey. So, the next step is to update the filter to only filter out the New York and New Jersey customers:

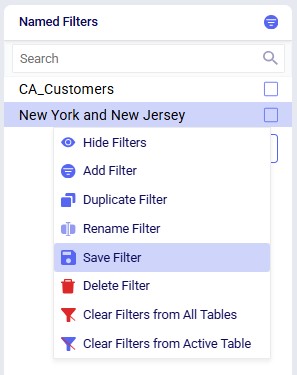
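The original condition of this filter is not shown here. One plausible way to end up with this result is a Begins With “New” condition on the region field; the hypothetical Python sketch below shows how such a prefix condition also catches New Hampshire and New Mexico, and how a condition listing the wanted values explicitly narrows it down.

```python
# Hypothetical customer regions (US states). The original condition is an
# assumption for illustration, not taken from the model.
regions = ["New York", "New Jersey", "New Hampshire", "New Mexico", "Ohio"]

# Assumed original condition: Region Begins With "New" (too broad).
too_broad = [r for r in regions if r.startswith("New")]

# Updated condition: Region equals one of an explicit set of values.
wanted = {"New York", "New Jersey"}
corrected = [r for r in regions if r in wanted]

print(too_broad)  # ['New York', 'New Jersey', 'New Hampshire', 'New Mexico']
print(corrected)  # ['New York', 'New Jersey']
```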

Next, we can use the Save Filter option from either the Filter menu drop-down list or the context menu after right-clicking on the filter in the Named Filters pane to update the existing "New York and New Jersey" named filter to use the updated condition. The following screenshot shows the latter method:

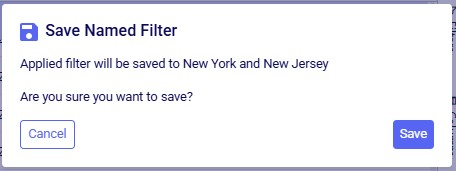

After choosing Save Filter from the context menu, the following message is shown for the user to confirm they want to overwrite the original named filter using the current filter conditions:

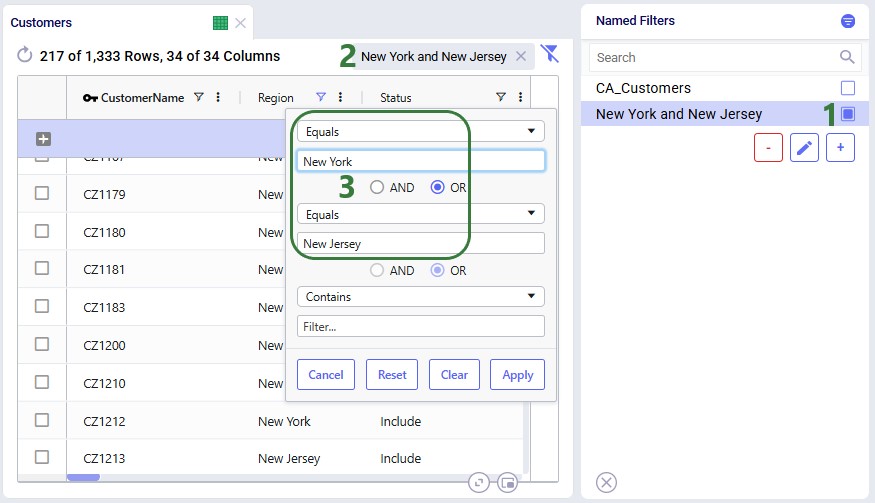

After clicking Save, the existing named filter has been updated:

The last option in the Filter menu, Show Input Data Errors, creates a special filter named ERRORS that filters out the records in the input table it is used on that have errors in their input data. This is very helpful because input errors can occur in different fields and can be of different types, so a user would not be able to create a single filter that captures all of them. When this filter is applied, every record that has one or more fields with a red outline is filtered out and shown. Hovering over such a field gives a short description of the problem with its value.
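Why a single ERRORS filter is more convenient than a hand-built one can be sketched as follows: each field has its own validation rule, and a record is selected as soon as any one of its fields fails. The validation rules, field names and records below are hypothetical stand-ins, not Cosmic Frog's actual input checks.

```python
# Hypothetical validation rules; Cosmic Frog's actual input checks are not shown here.
validators = {
    "facilityname": lambda v: isinstance(v, str) and v.strip() != "",
    "latitude": lambda v: isinstance(v, (int, float)) and -90 <= v <= 90,
    "longitude": lambda v: isinstance(v, (int, float)) and -180 <= v <= 180,
}

facilities = [
    {"facilityname": "Chicago_DC", "latitude": 41.9, "longitude": -87.6},
    {"facilityname": "", "latitude": 32.8, "longitude": -96.8},          # blank name
    {"facilityname": "Reno_DC", "latitude": 139.5, "longitude": -119.8},  # latitude out of range
]


def field_errors(record: dict) -> list[str]:
    """Names of the fields whose value fails validation (the red-outlined fields)."""
    return [name for name, is_valid in validators.items() if not is_valid(record.get(name))]


# ERRORS-style filter: keep every record with at least one error,
# whichever field it is in and whatever kind of error it is.
flagged = {}
for record in facilities:
    bad_fields = field_errors(record)
    if bad_fields:
        flagged[record["facilityname"] or "<blank>"] = bad_fields

print(flagged)  # {'<blank>': ['facilityname'], 'Reno_DC': ['latitude']}
```

The two flagged records fail on different fields for different reasons, which a single ordinary column filter could not express.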

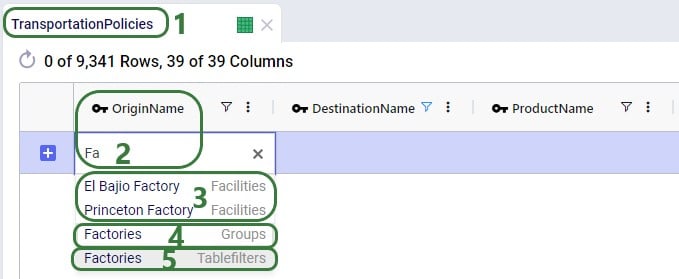

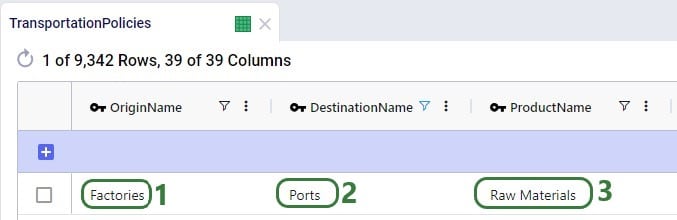

Named Filters for certain model elements (Customers, Facilities, Suppliers, Products, Periods, Modes, Shipments, Transportation Assets, Processes, Bills Of Materials, Work Centers, and Work Resources) can be used in other input tables, very similar to how Groups work in Cosmic Frog. Instead of setting up multiple records for individual elements, for example a transportation policy from A to B for each finished good, a Named Filter that filters out all finished goods on the Products table can be used to set up 1 transportation policy for these finished goods from A to B (which at run-time will be expanded into a policy for each finished good).

The advantage of using Named Filters instead of Groups is that Named Filters are dynamic: if records that match the conditions of a Named Filter are added to a table containing model elements, they are automatically added to that Named Filter. Think for example of Products with the prefix FG_ to indicate they are finished goods, and a Named Filter “Finished Goods” that filters the Product Name on Begins With “FG_”. If a new product whose Product Name starts with FG_ is added, it is automatically included in the Finished Goods Named Filter, and therefore everywhere this filter is used. We will look at 2 examples in the next few screenshots.

The completed transportation policy record uses Named Filters for the Origin Name, Destination Name, and Product Name, making this record flexible as long as the naming conventions of the factories, ports, and raw materials keep following the same rules.
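The run-time expansion of a policy record that references a Named Filter can be sketched as below. This is a simplified illustration, not Cosmic Frog's engine logic: the product names, the policy dictionary and `expand_policy` are hypothetical, and only the “Begins With FG_” convention comes from the text above.

```python
# Hypothetical Products table; the FG_ prefix convention comes from the example above.
products = ["FG_Bike", "FG_Scooter", "RM_Steel"]


def finished_goods(table: list[str]) -> list[str]:
    """Named Filter 'Finished Goods': Product Name Begins With 'FG_'."""
    return [p for p in table if p.startswith("FG_")]


# One policy record that references the Named Filter instead of a single product.
policy = {"origin": "A", "destination": "B", "product": finished_goods}


def expand_policy(record: dict, table: list[str]) -> list[tuple[str, str, str]]:
    """Expand the record into one (origin, destination, product) lane per matching product."""
    return [(record["origin"], record["destination"], p) for p in record["product"](table)]


print(expand_policy(policy, products))
# [('A', 'B', 'FG_Bike'), ('A', 'B', 'FG_Scooter')]

products.append("FG_Skateboard")  # new finished good: the policy record itself is unchanged
print(len(expand_policy(policy, products)))  # 3
```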

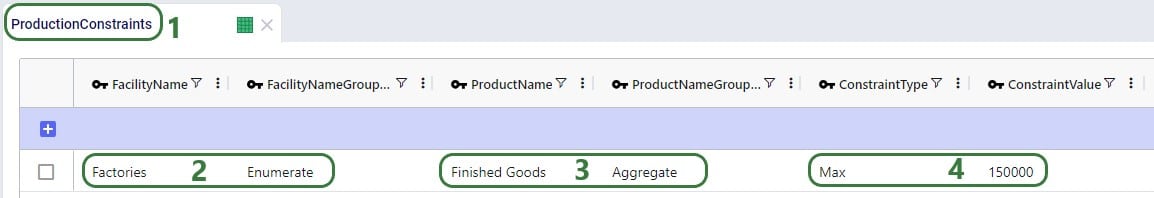

The next example uses one of the Constraints tables, Production Constraints. On the Constraints tables, the Group Behavior fields dictate how an element name that is a Group or a Named Filter should be used. When set to Enumerate, the constraint is applied to each individual member of the group or named filter. When set to Aggregate, the constraint applies to all members of the group or named filter together. This Production Constraint states that at most 150,000 units of all finished goods combined can be produced at each factory:

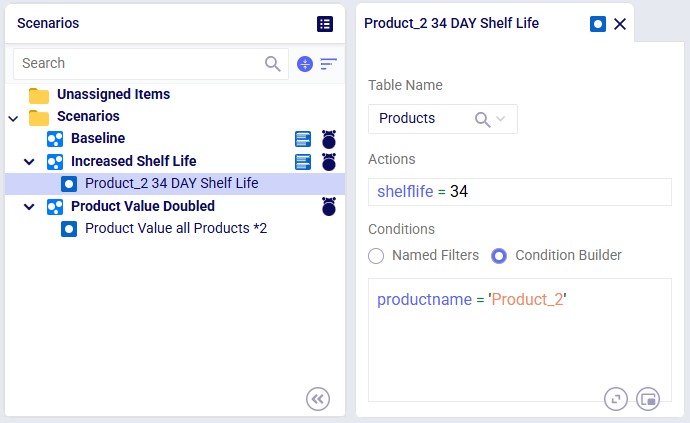

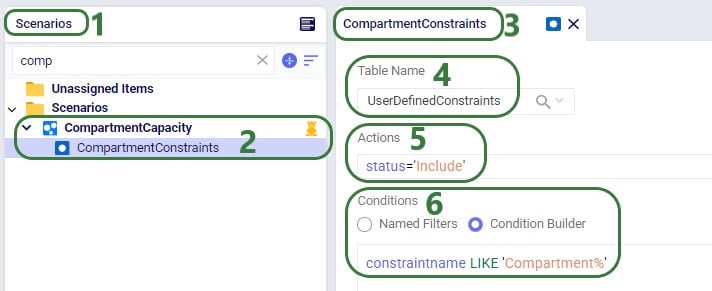
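The difference between Enumerate and Aggregate can be checked against a given production plan with a small Python sketch. It assumes the factory side of the constraint is enumerated (one limit per factory), which is how we read the constraint above, and compares the two settings on the product side. The quantities are hypothetical, and the sketch only checks a plan after the fact, whereas the optimizer uses the constraint to bound its decisions.

```python
# Hypothetical production plan: units per (factory, finished good).
production = {
    ("Factory_1", "FG_Bike"): 90_000,
    ("Factory_1", "FG_Scooter"): 70_000,
    ("Factory_2", "FG_Bike"): 60_000,
    ("Factory_2", "FG_Scooter"): 50_000,
}
factories = ["Factory_1", "Factory_2"]
finished_goods = ["FG_Bike", "FG_Scooter"]
LIMIT = 150_000


def constraint_holds(product_behavior: str) -> bool:
    """Check the 'max 150,000 units' limit with the factory side enumerated.

    "Aggregate": one limit per factory on the sum over all finished goods.
    "Enumerate": one limit per factory and per finished good.
    """
    for f in factories:  # factory side: Enumerate (each factory checked separately)
        if product_behavior == "Aggregate":
            if sum(production[(f, p)] for p in finished_goods) > LIMIT:
                return False
        elif any(production[(f, p)] > LIMIT for p in finished_goods):
            return False
    return True


print(constraint_holds("Aggregate"))  # False: Factory_1 makes 160,000 units in total
print(constraint_holds("Enumerate"))  # True: no single product exceeds 150,000 units
```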

Previously, when setting up a scenario item, the Condition Builder was the way to specify the records that the scenario item’s change should be applied to. Now users have the added option to use a saved Named Filter instead. This is easier, as the user does not need to know the syntax for building a condition, and more flexible, as Named Filters are dynamic, as discussed in the previous section. In addition, users can preview the records that the change will be made to, which reduces the chance of mistakes.

Please note that a maximum of 1 Named Filter can be used on a scenario item.

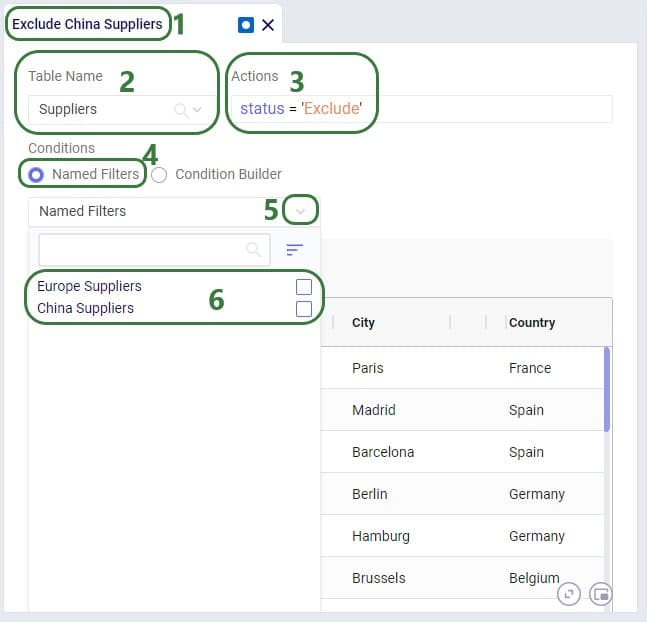

The following example changes the Suppliers table of a model that has around 70 suppliers, about half of which are in Europe and the other half in China:

The Named Filters drop-down collapses after the China Suppliers Named Filter is chosen as the condition, and the Preview of the filtered grid is shown: this is the Suppliers table with the Named Filter China Suppliers applied. At the top right of the grid the name of the applied Named Filter(s) is shown, and the preview indeed only contains Suppliers for which the Country is China. These are the records the change (setting Status = Exclude) will be made to in scenarios that use this scenario item.
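What this scenario item does can be sketched in Python: the Named Filter selects the records (the same records the Filter Grid Preview shows), and the change is applied to those records only, in a scenario version of the table, while the baseline table stays as it is. The supplier records, the `status` values and `apply_scenario_item` are hypothetical simplifications.

```python
# Hypothetical Suppliers records; only the fields needed for this example.
suppliers = [
    {"suppliername": "SUP_EU_01", "country": "Germany", "status": "Include"},
    {"suppliername": "SUP_CN_01", "country": "China", "status": "Include"},
    {"suppliername": "SUP_CN_02", "country": "China", "status": "Include"},
]


def china_suppliers(record: dict) -> bool:
    """Named Filter 'China Suppliers': Country equals China."""
    return record["country"] == "China"


def apply_scenario_item(table: list[dict], condition, field: str, value) -> list[dict]:
    """Return a scenario version of the table; only records matching the condition change."""
    return [{**r, field: value} if condition(r) else dict(r) for r in table]


# Filter Grid Preview: the records the change will be made to.
preview = [r["suppliername"] for r in suppliers if china_suppliers(r)]
scenario = apply_scenario_item(suppliers, china_suppliers, "status", "Exclude")

print(preview)                          # ['SUP_CN_01', 'SUP_CN_02']
print([r["status"] for r in scenario])  # ['Include', 'Exclude', 'Exclude']
print([r["status"] for r in suppliers]) # ['Include', 'Include', 'Include']
```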

A few notes on the Filter Grid Preview:

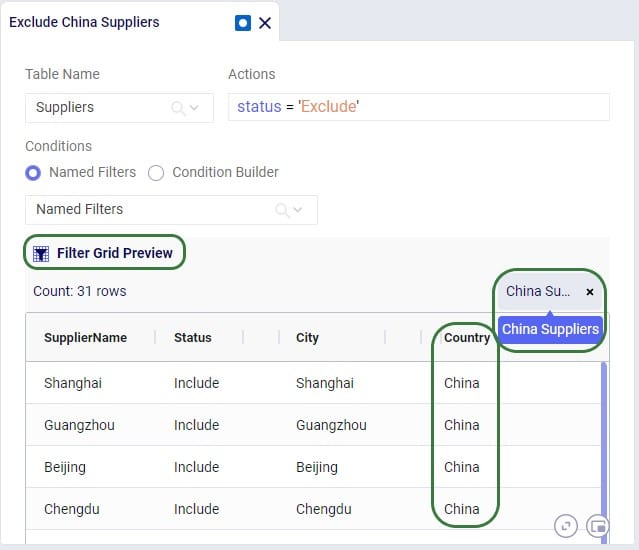

Besides being used on other input tables and as conditions for scenario items, Named Filters can also be used as conditions for Map Layers, which is covered in this section. As for scenario items, there is a Filter Grid Preview for Map Layers to double-check which records will be filtered out when applying the condition(s) of 1 or multiple Named Filters.

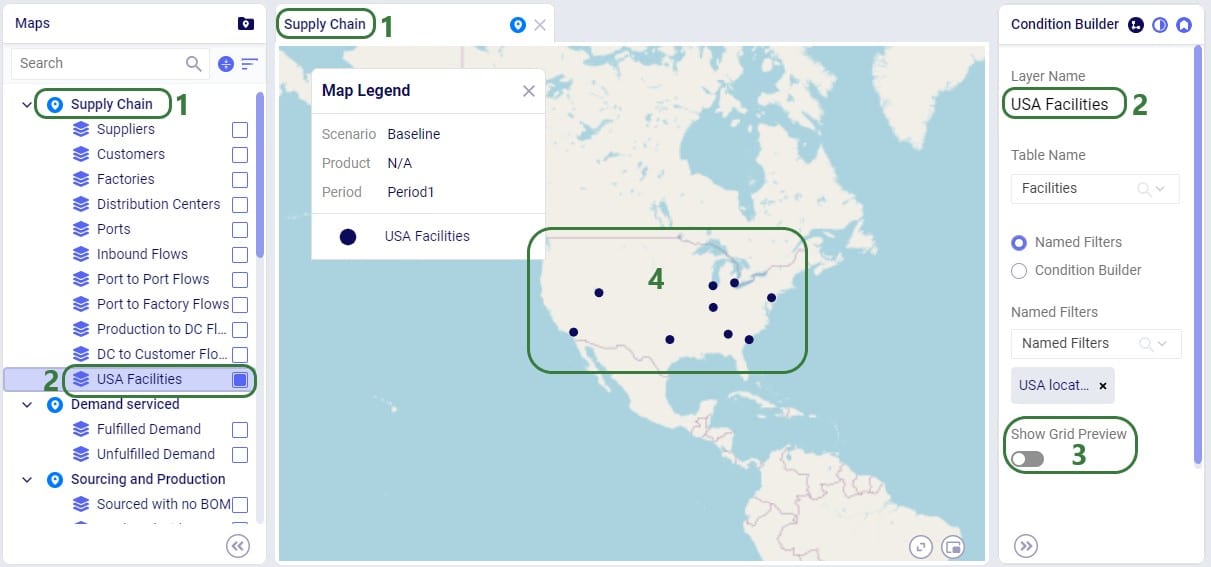

In this first example, a Named Filter on the Facilities table filters out only the Facilities that are located in the USA:

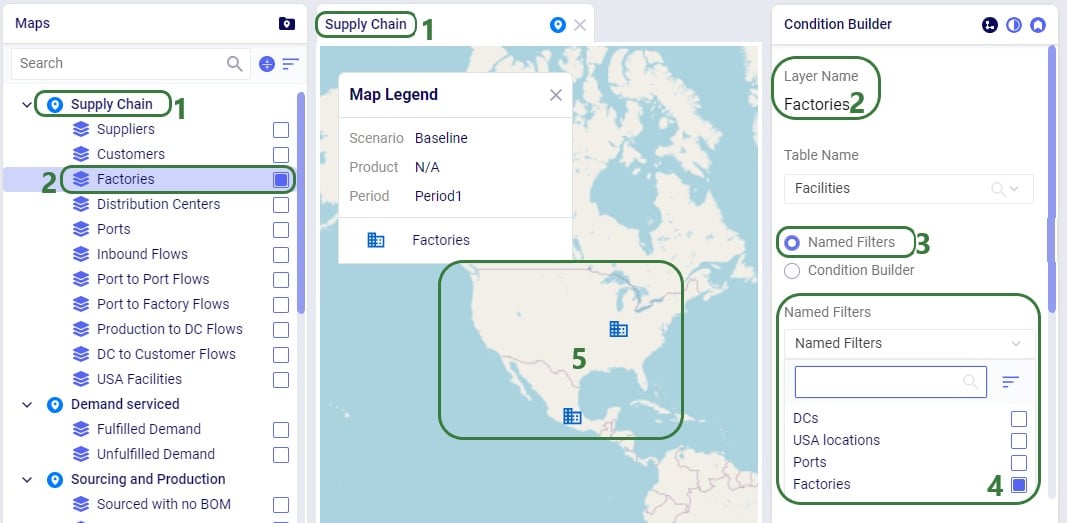

Another example of the same model is to use a different Named Filter from the Facilities table to show only Factories on the map:

If the Factories and the Ports Named Filters had both been enabled, then all factories and ports would be showing on the map. So, like for scenario items, applying multiple Named Filters to a Map Layer is additive (acts like OR statements).
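The additive (OR) behavior of several Named Filters on one Map Layer can be sketched as follows: a record is drawn when at least one of the enabled filters matches it. The facility records, the `type` field and `map_layer_records` are hypothetical.

```python
# Hypothetical Facilities records with a facility type field.
facilities = [
    {"name": "Detroit_Factory", "type": "Factory", "country": "USA"},
    {"name": "Savannah_Port", "type": "Port", "country": "USA"},
    {"name": "Chicago_DC", "type": "DC", "country": "USA"},
    {"name": "Rotterdam_Port", "type": "Port", "country": "Netherlands"},
]

named_filters = {
    "Factories": lambda r: r["type"] == "Factory",
    "Ports": lambda r: r["type"] == "Port",
}


def map_layer_records(records: list[dict], enabled: list[str]) -> list[str]:
    """Show a record on the layer if ANY enabled Named Filter matches it (OR)."""
    return [r["name"] for r in records if any(named_filters[n](r) for n in enabled)]


print(map_layer_records(facilities, ["Factories"]))
# ['Detroit_Factory']
print(map_layer_records(facilities, ["Factories", "Ports"]))
# ['Detroit_Factory', 'Savannah_Port', 'Rotterdam_Port']
```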

The same notes that were listed for the Filter Grid Preview for scenario items apply to the Filter Grid Preview for Map Layers too: columns with conditions have the filter icon on them, users can resize and (multi-)sort the columns, however, re-ordering the columns is not possible.

Named Filters can be deleted, and this affects other input tables, scenario items, and map layers that used the now deleted Named Filter(s). This will be explained further in this final section of the Help Article on Named Filters.

A Named Filter can be deleted by using one of three methods:

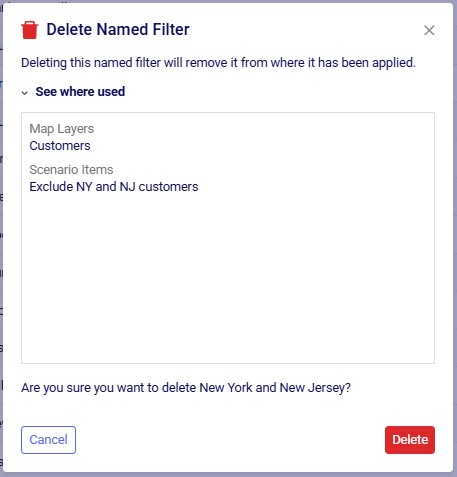

After choosing to delete a named filter, the following message comes up to ask the user for confirmation. In this example we are deleting the filter named "New York and New Jersey" which is a filter on the Customers input table:

The message will let the user know if the named filter that is about to be deleted was used in any Map Layers and/or Scenario Items. If so, it lists the names of these layers/items in the "See where used" section which can be expanded and collapsed by clicking on the caret symbol. Note that currently this message does not indicate if the named filter is used in any input tables.

The results of deleting a Named Filter that was used are as follows:

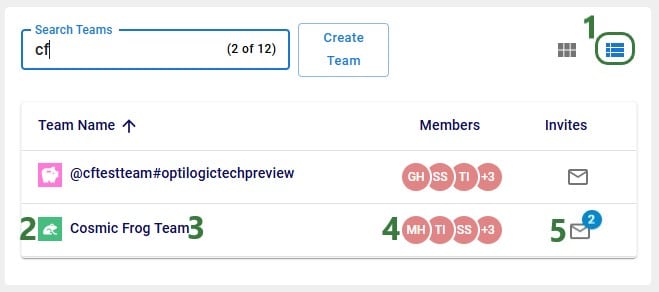

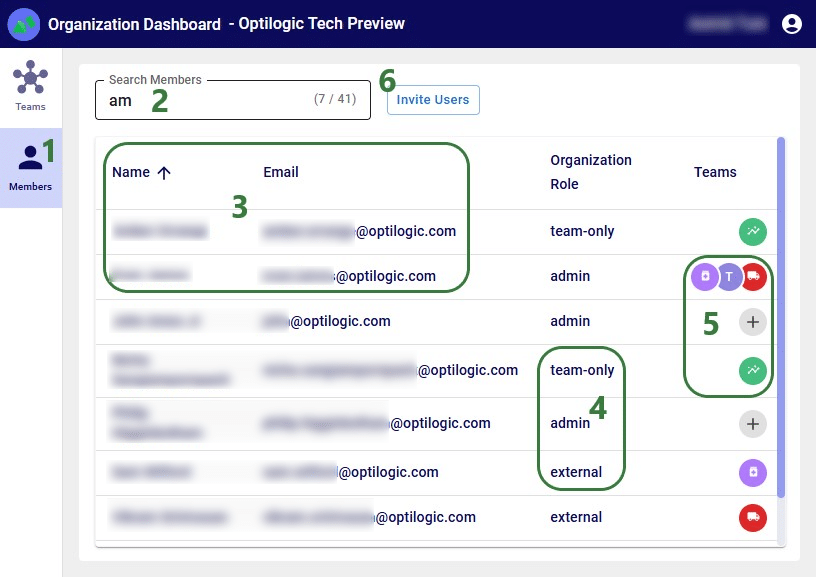

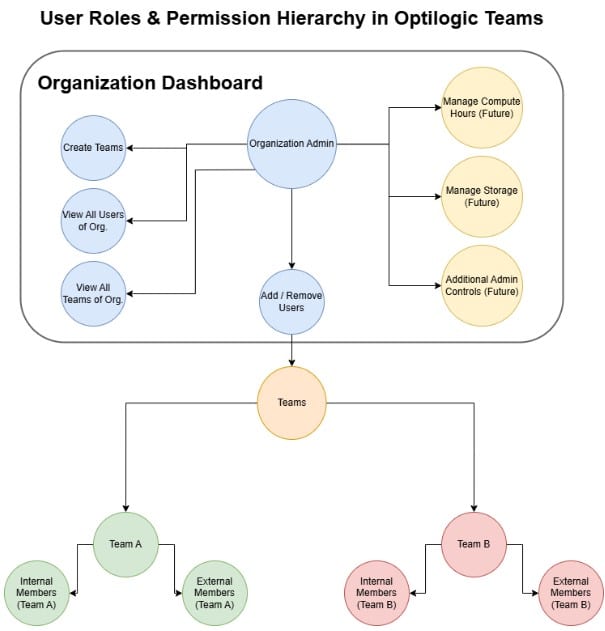

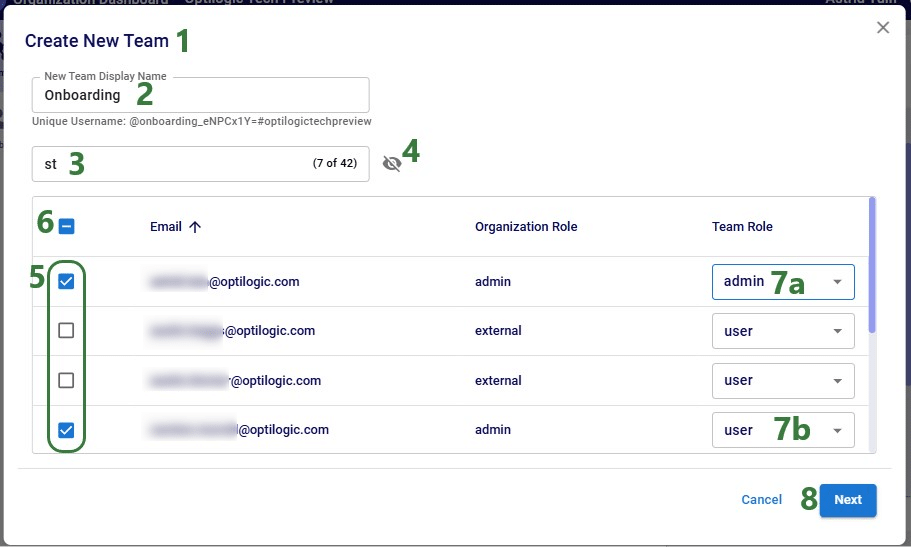

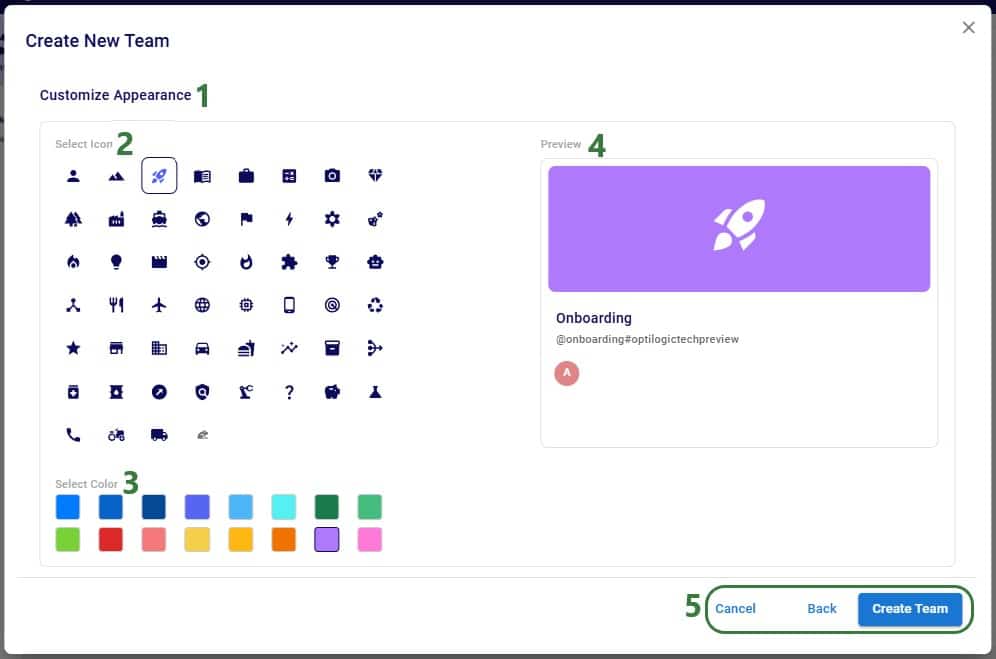

With Optilogic’s new Teams feature set (see the "Getting Started with Optilogic Teams" help center article) working collaboratively on Cosmic Frog models has never been easier: all members of a team have access to all contents added to that team’s workspace. Centralizing data using Teams ensures there is a single source of truth for files/models which prevents version conflicts. It also enables real-time collaboration where files/models are seamlessly shared across all team members, and updates to any files/models are instantaneous for all team members.

However, whether your organization uses Teams or not, there can be a need to share Cosmic Frog models, for example to:

In this documentation we will cover how to share models and the different options for sharing. Models can be shared from an individual user or a team to an individual user or a team. Because the risk of something undesirable happening to a model increases when multiple people work on it, it is important to be able to go back to a previous version of the model. Therefore, it is best practice to make a backup of a model before sharing it, and to continue making backups when important/major changes are going to be made or when you want to try out something new. How to make a backup of a model is also explained in this documentation and is covered first.

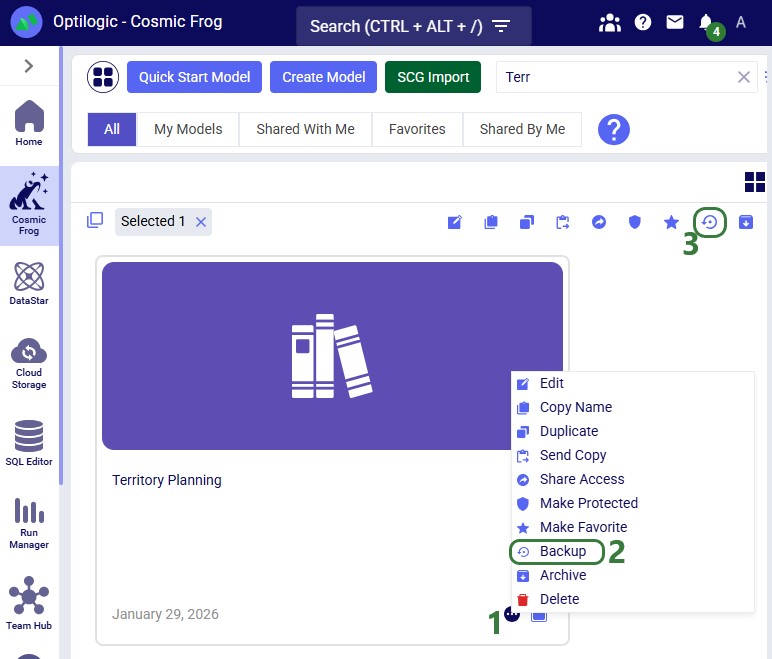

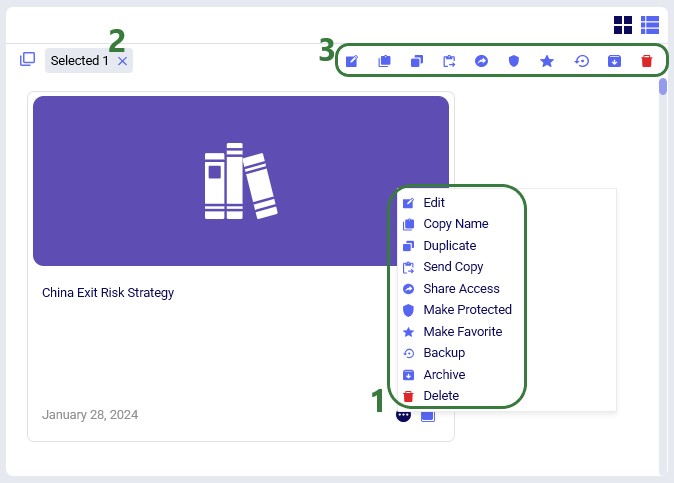

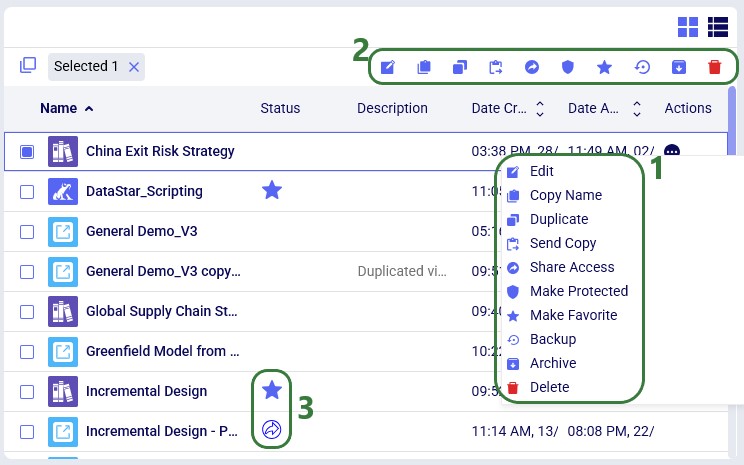

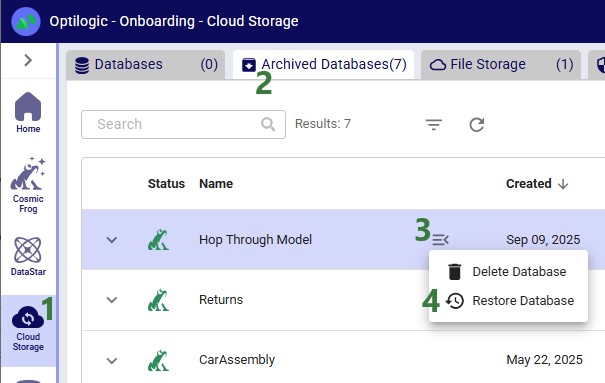

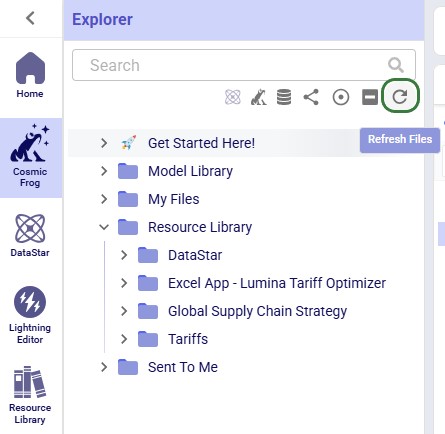

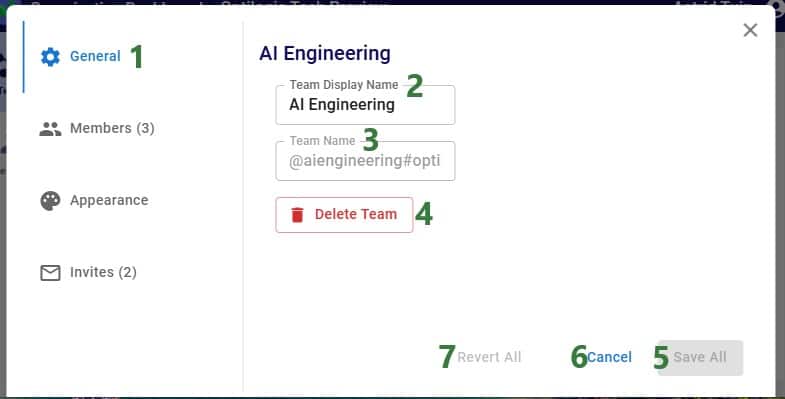

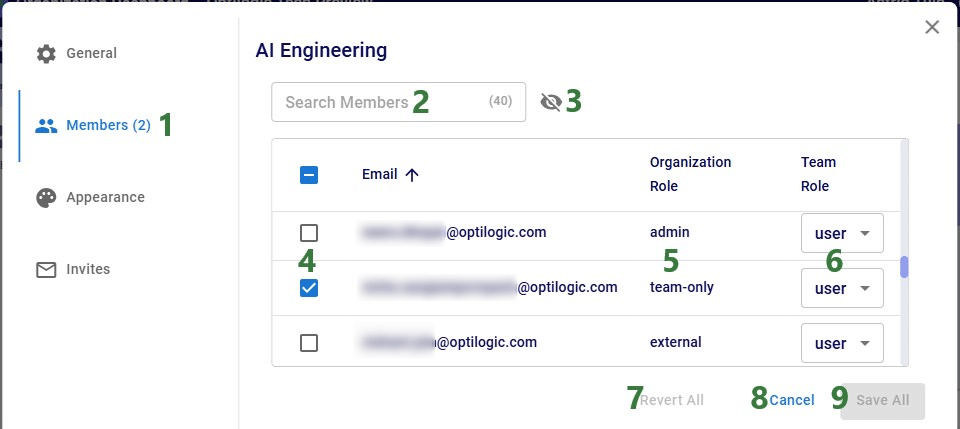

A backup of a model is a snapshot of its exact state at a certain point in time. Once a backup has been made, users can revert to it if needed. The creation of a backup of a Cosmic Frog model can be initiated from 3 locations within the Optilogic platform: 1) from the Models module within Cosmic Frog, 2) through the Explorer, and 3) from within the Cloud Storage application. The option from within Cosmic Frog is covered first:

When in the Models module of Cosmic Frog (aka the Model Manager), hover over the model you want to create a backup for, and click on the icon with 3 horizontal dots that comes up at the bottom right of the model card (1). This brings up the model management options context menu, from which you can choose the Backup option (2). If only 1 model is selected, the Backup option can also be accessed from the toolbar at the top of the model list/grid (3).

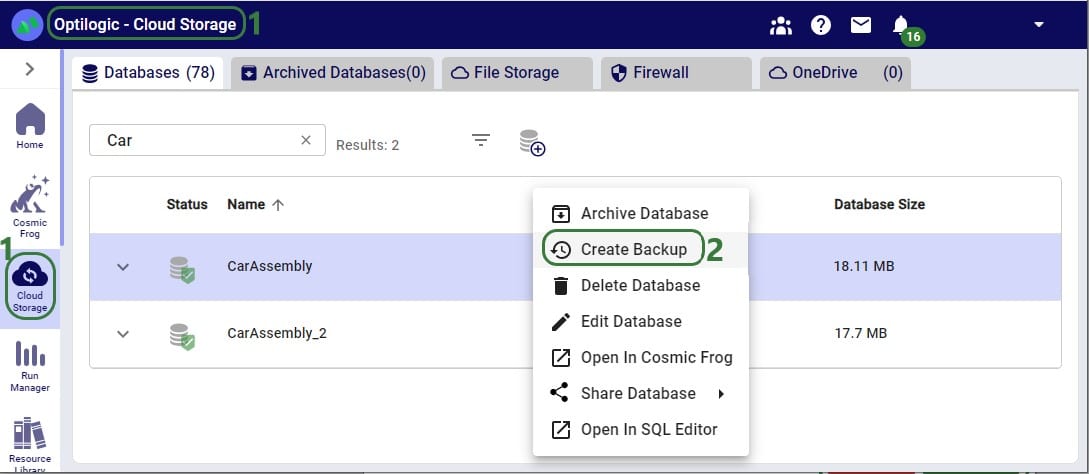

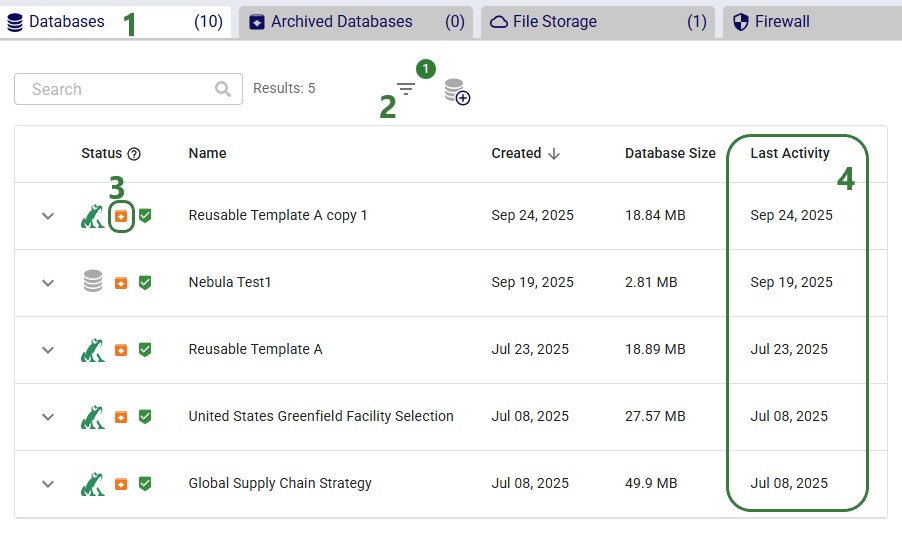

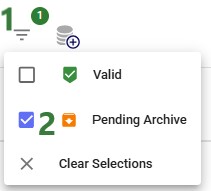

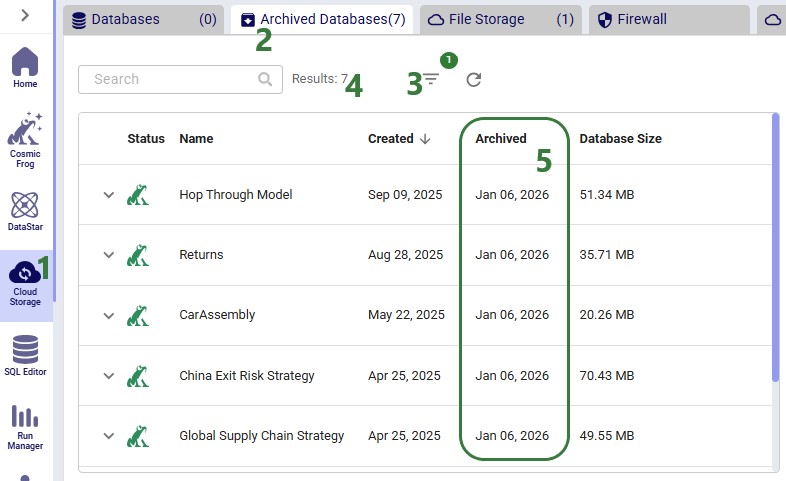

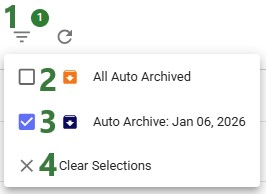

From the Cloud Storage application it works as follows:

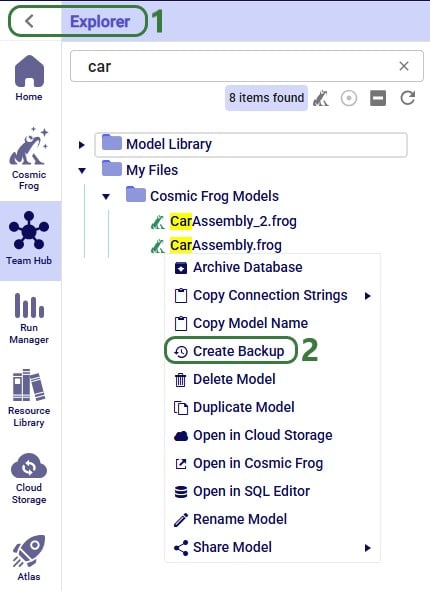

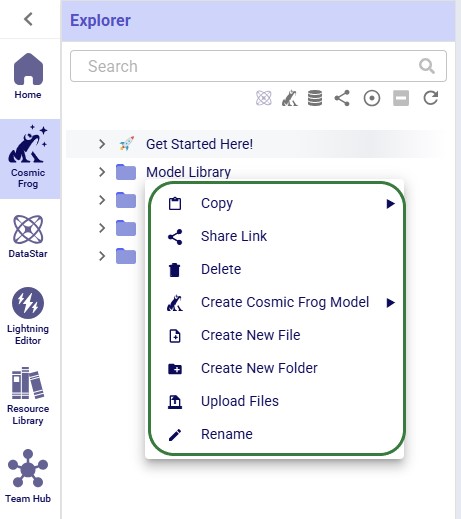

Through the Explorer, the process is similar:

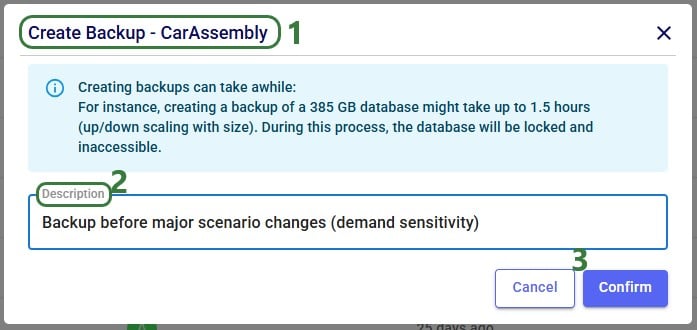

Whether from the Models module within Cosmic Frog, through the Cloud Storage application or via the Explorer, in all 3 cases the Create Backup form comes up:

After clicking on Confirm, a notification at the top of the user’s screen will pop up saying that the creation of a backup has been started:

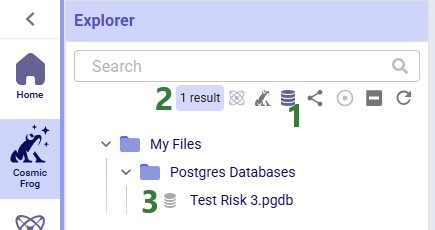

At the same time, a locked database icon with hover over text of “Backup in progress…” appears in the Status field of the model database (this is in the Cloud Storage application’s list of databases):

This locked database icon will disappear again once the backup is complete.

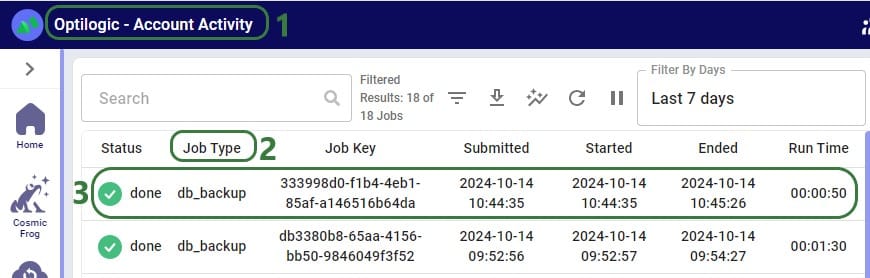

Users can check the progress of the backup by opening their Account menu under their username at the top right of the screen and selecting “Account Activity” from the drop-down menu:

To access any backups, users can expand individual model databases in the Cloud Storage application:

There are 2 more columns in the list of databases that are not shown in the screenshot above:

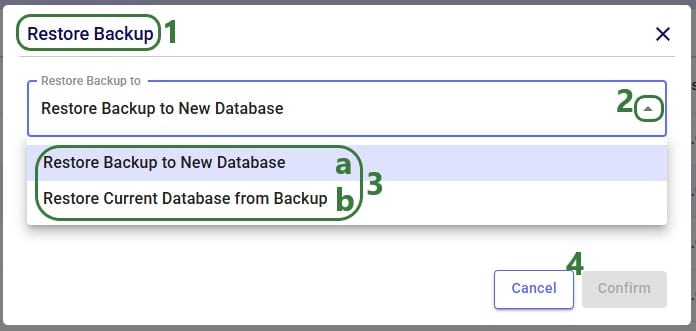

When choosing to restore a backup, the following form comes up:

Now that we have discussed how models can be backed up, we will cover how models can be shared. Note that it is best practice to make a backup of your model before sharing it.

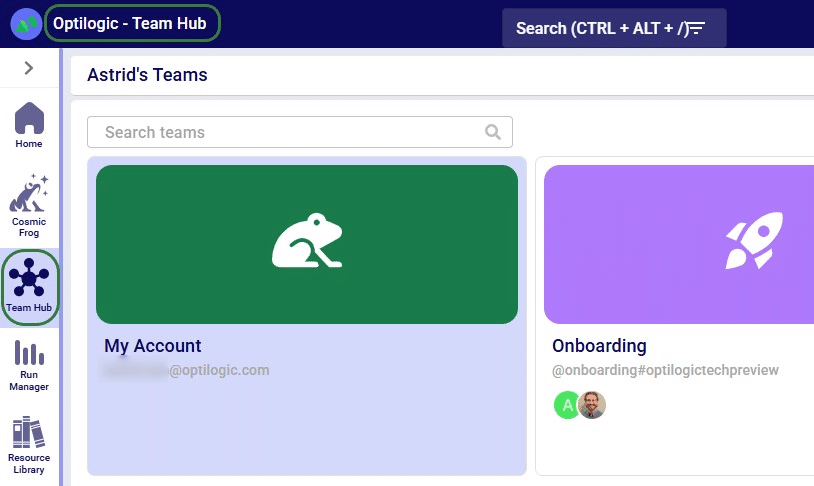

If your organization uses Teams, first make sure you are in the correct workspace, either a Team’s or your personal My Account area, from which you want to share a model. You can switch between workspaces using the Team Hub application, which is explained in this "Optilogic Teams - User Guide" help center article.

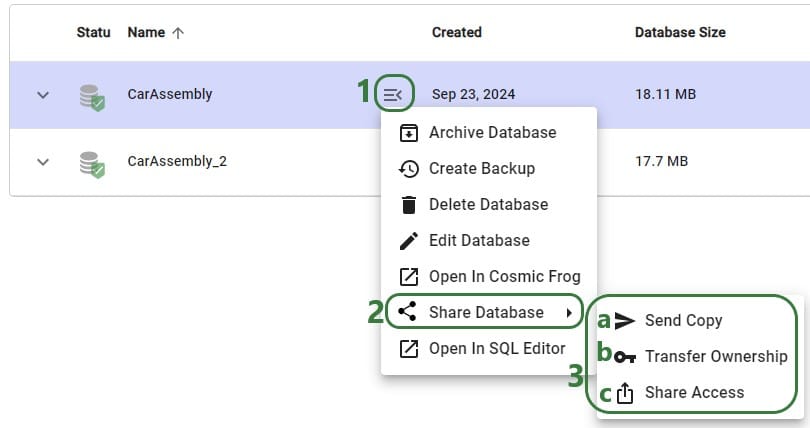

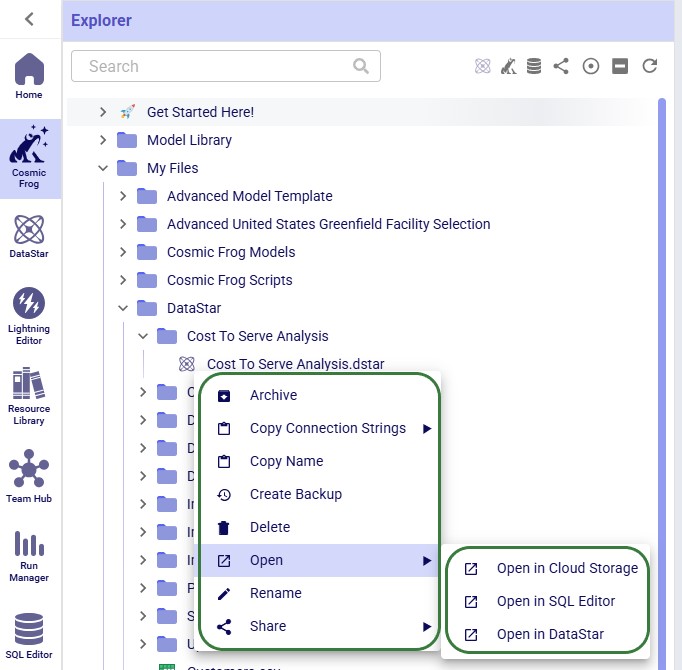

Like making a backup of a model database, sharing a model can also be done through the Cloud Storage application and the Explorer. Starting with the Cloud Storage option:

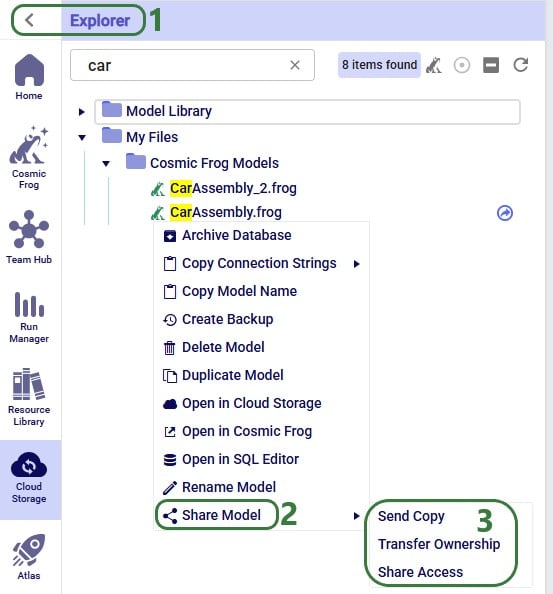

The Share Model options can also be accessed through the Explorer:

Now we will cover the steps of sending a copy of a model to another user or team. The original and the copy are not connected to each other after the model was shared in this way: updates to one are not reflected in the other and vice versa.

After clicking on the Send Model Copy button, a message that says “Model Copy Sent Successfully” is displayed in the Send Model Copy form. Users can go ahead and send copies of other models to other user(s)/team(s) or close the form by clicking on the cross icon at the top right of the form.

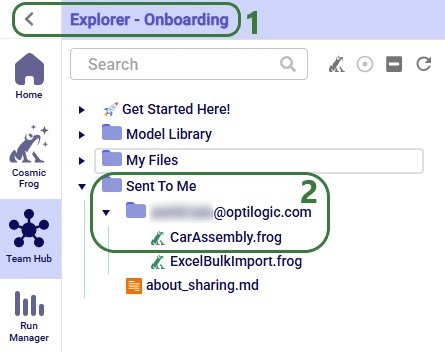

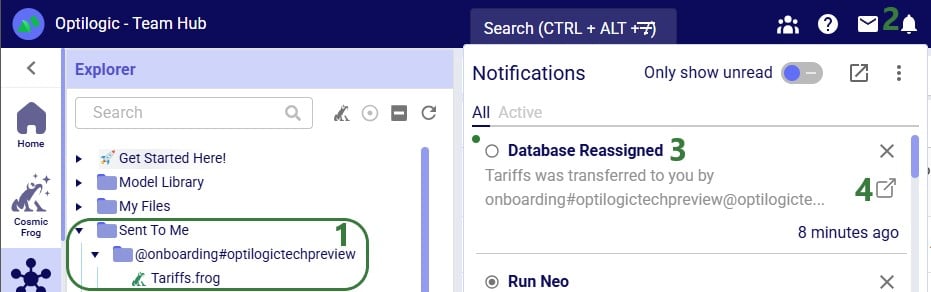

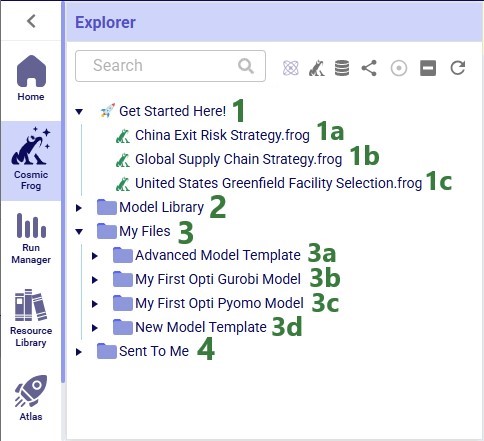

In this example, a copy of the CarAssembly model was sent to the Onboarding team. In the Onboarding team’s workspace this model will then appear in the Explorer:

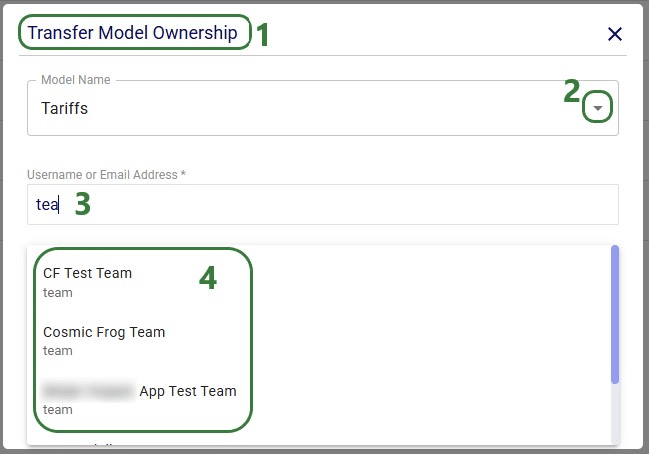

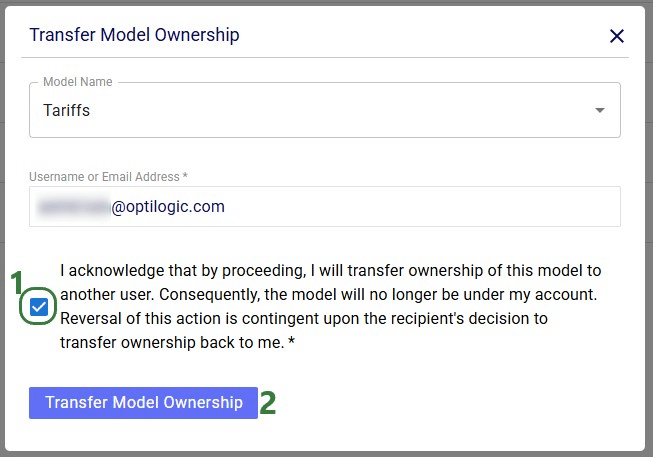

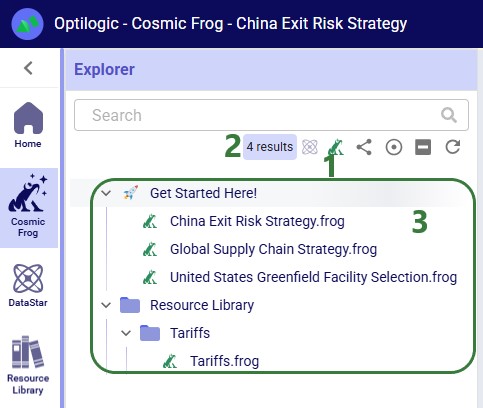

Next, we will step through transferring ownership of a model to another user or team. The original owner will no longer have access to the model after transferring ownership. In the example here, the Onboarding team will transfer ownership of the Tariffs model to an individual user.

After clicking on the Transfer Model Ownership button, a message that says “Transferred Ownership Successfully” is displayed in the Transfer Model Ownership form. Users can go ahead and transfer ownership of other models to other user(s)/team(s) or close the form by clicking on the cross icon at the top right of the form.

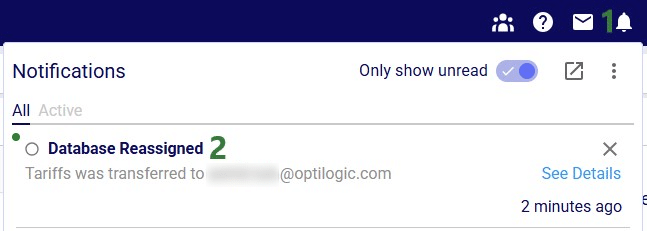

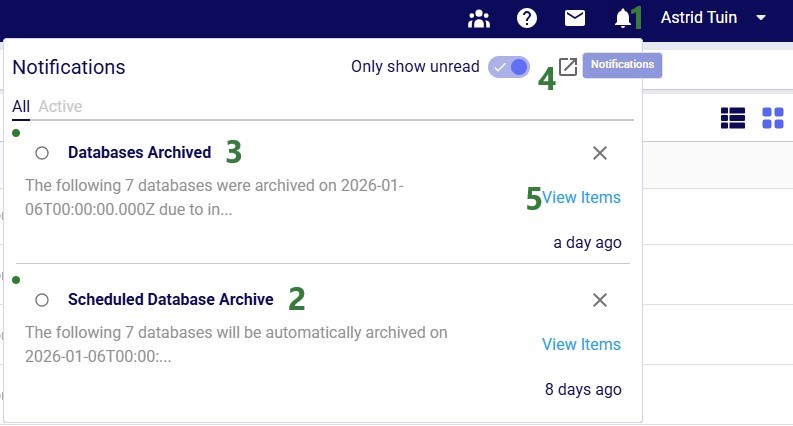

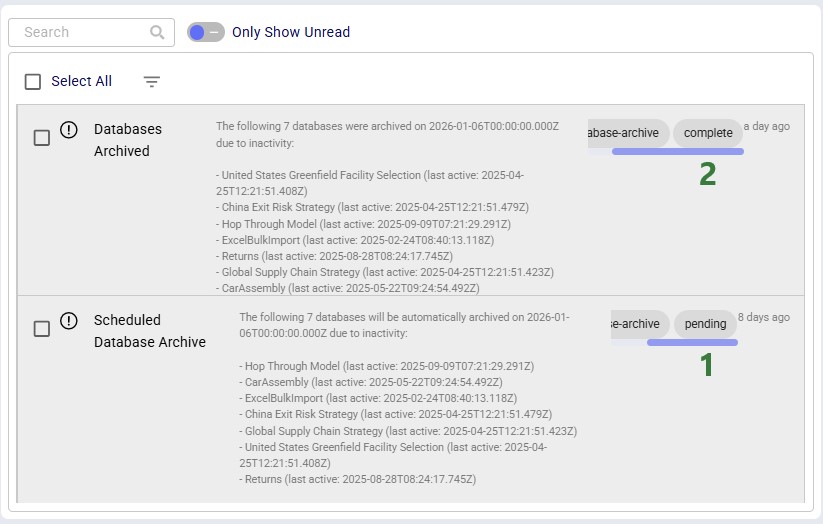

There will be a notification of the model ownership transfer in the workspace of the user/team that performed the transfer:

The model now becomes visible in the My Account workspace of the individual user the ownership of the model was transferred to:

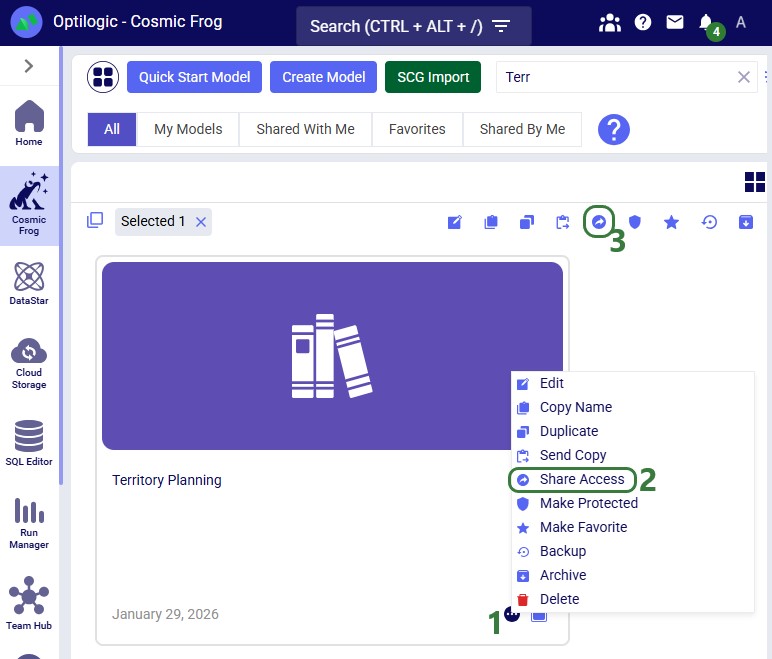

Lastly, we will show the steps of sharing access to a model with a user or team. Note that Sharing Access to a model can be done from Explorer and from the Cloud Storage application (same as for the Send Copy and Transfer Ownership options), but can also be done from the Models module in Cosmic Frog:

In Cosmic Frog's Models module (aka Model Manager), hover over the card of the model you want to share access to and then click on the icon with 3 horizontal dots that comes up at the bottom right of the model card (1). Clicking on this icon brings up the model management actions context menu, from which you can choose the Share Access option (2). If only 1 model is selected, the Share Access option is also available from the model management actions toolbar at the top of the model list/grid (3).

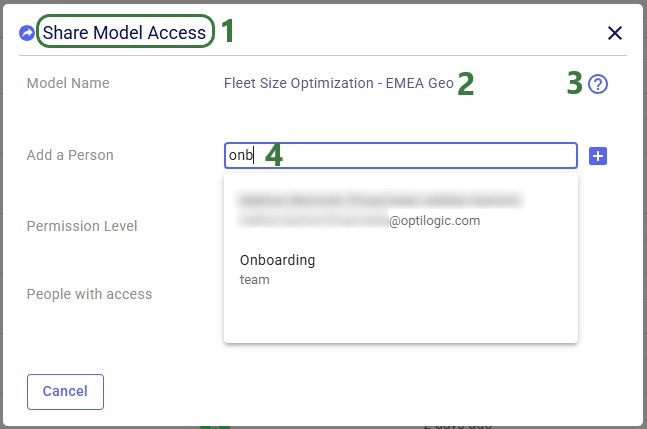

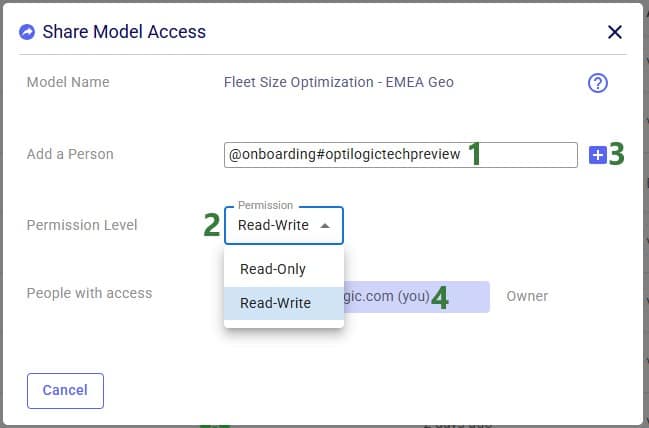

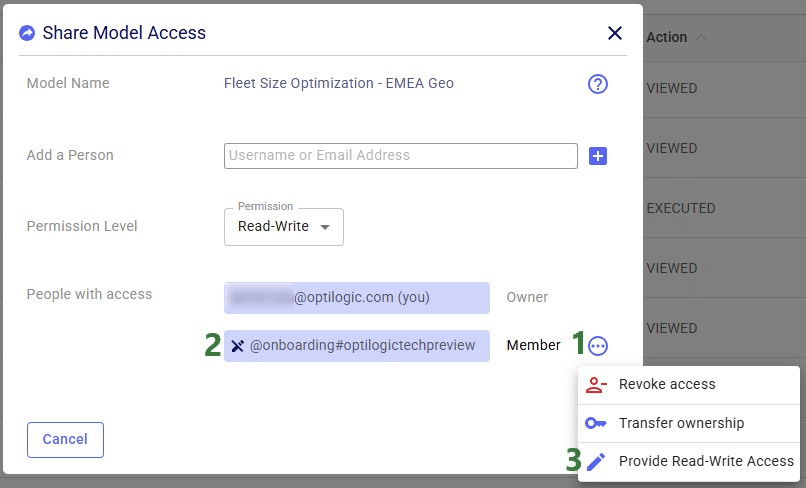

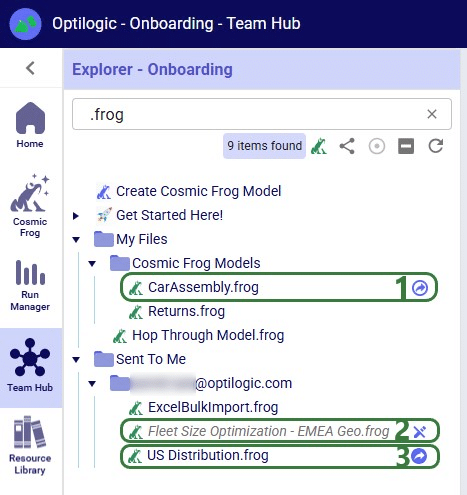

In our walk-through example, an individual user will share access to a model called "Fleet Size Optimization - EMEA Geo" with the Onboarding team.

After the plus button was clicked to share access of the Fleet Size Optimization - EMEA Geo model with the Onboarding team, this team is now listed in the People with access list:

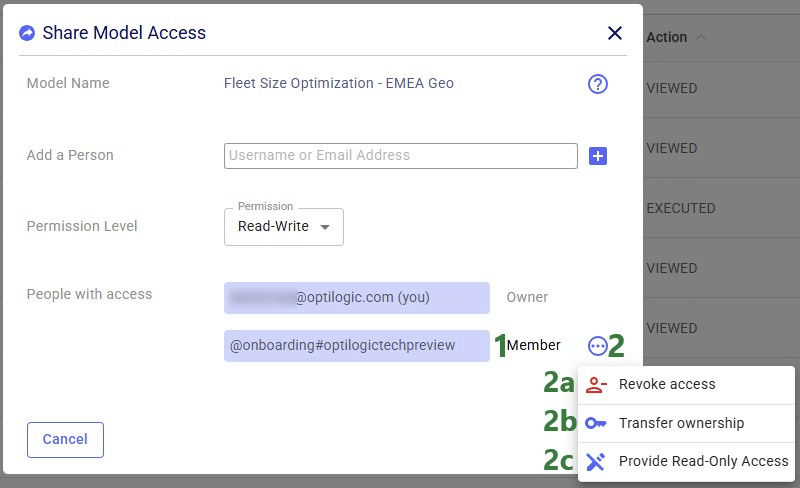

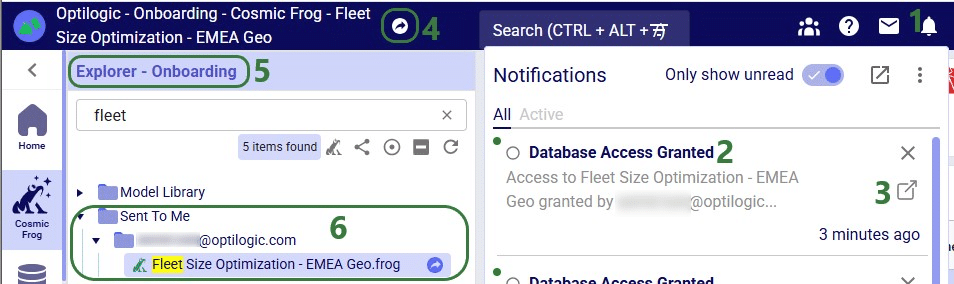

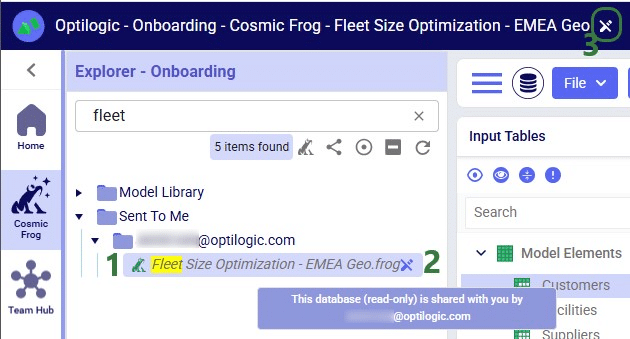

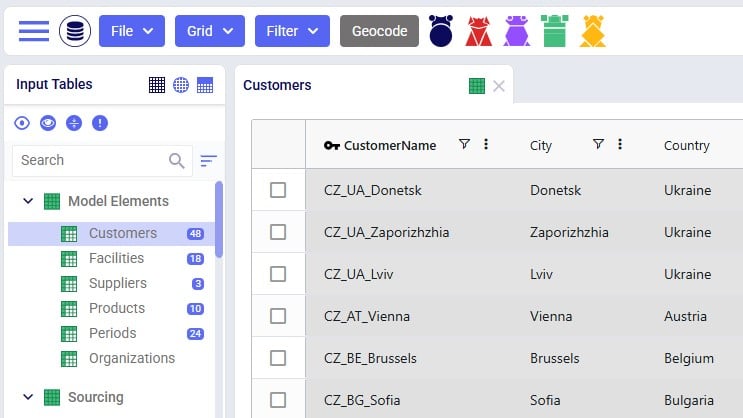

Now the model can be accessed in the Onboarding team’s workspace, and the team also receives a notification about it:

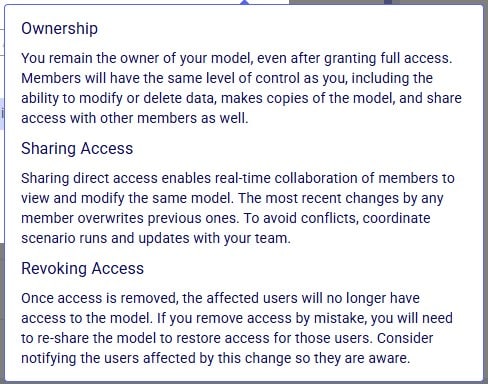

Now that the Onboarding team has access to this model, they can share it with other users/teams too: they can either send a copy of it or share access, but they cannot transfer ownership as they are not the model’s owner.
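The sharing rules described so far can be summarized in a small Python sketch: the owner can send a copy, share access and transfer ownership; a user or team that has been given access can send a copy or share access but cannot transfer ownership; and after a transfer the previous owner no longer has access. `SharedModel`, the action names and the account names are hypothetical, and the sketch ignores permission levels such as Read-Only.

```python
from dataclasses import dataclass, field


@dataclass
class SharedModel:
    """Hypothetical record of a model's owner and who it is shared with."""
    name: str
    owner: str
    shared_with: set[str] = field(default_factory=set)


def allowed_actions(model: SharedModel, account: str) -> set[str]:
    """Sharing actions available to a user or team, per the rules described above."""
    if account == model.owner:
        return {"send_copy", "share_access", "transfer_ownership"}
    if account in model.shared_with:
        return {"send_copy", "share_access"}  # not the owner, so no transfer
    return set()


def transfer_ownership(model: SharedModel, by: str, to: str) -> None:
    """Only the owner may transfer; afterwards the previous owner has no access."""
    if "transfer_ownership" not in allowed_actions(model, by):
        raise PermissionError(f"{by} does not own {model.name}")
    model.owner = to


model = SharedModel("Fleet Size Optimization - EMEA Geo", owner="user@example.com")
model.shared_with.add("Onboarding")
print(sorted(allowed_actions(model, "Onboarding")))  # ['send_copy', 'share_access']
```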

In the Explorer of the workspace of the user/team who shared access to the model, a similar round icon with arrow inside it will be shown next to the model’s name. The icon colors are just inverted (blue arrow in white circle) and here the hover text is “You have shared this database”, see the screenshot below. There will also be a notification about having granted access to this model and to whom (not shown in the screenshot):

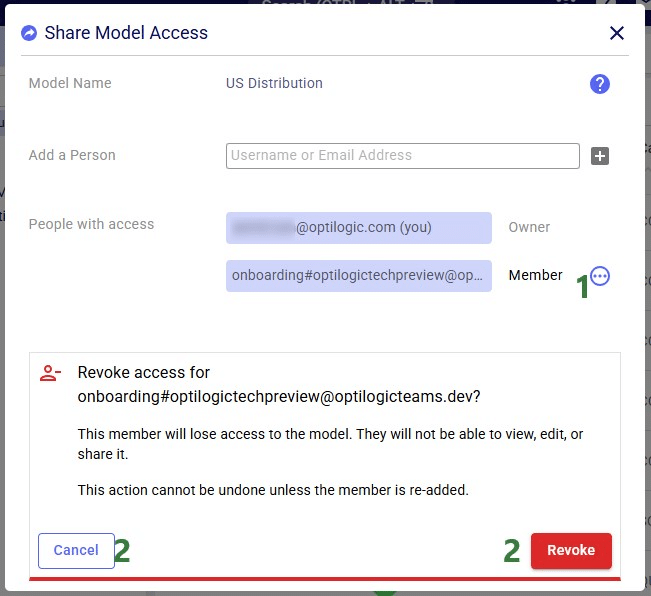

If the model owner decides to revoke access to a shared model or change its permission level, they need to open the Share Model Access form again by choosing Share Access from the Share Model options / clicking on the Share icon when hovering over the model's card on the Cosmic Frog start page:

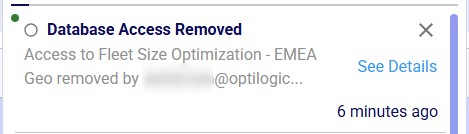

If access to a model is revoked, the team/user that previously had access receives a notification about this:

With Read-Only access, teammates and stakeholders can explore a shared model, view maps, dashboards, and analytics, and provide feedback — all while ensuring that the data remains unchanged and secure.

Read-Only mode is best suited for situations where protecting data integrity is a priority, for example:

See the Appendix for a complete list of actions and whether they are allowed in Read-Only Access mode or not.

Similar to revoking access to a previously shared model, to change the permission level of a shared model the owner opens the Share Model Access form again by choosing Share Access from the Share Model options / clicking on the Share icon when hovering over the model's card on the Cosmic Frog start page:

Models with Read-Only access can be recognized on the Optilogic platform as follows:

Input tables of Read-Only Cosmic Frog models are greyed out (as output tables already are by default), and write actions (insert, delete, modify) are disabled:

Read-Only models can be recognized as follows in other Optilogic applications:

When working with models that have shared access, please keep the following in mind:

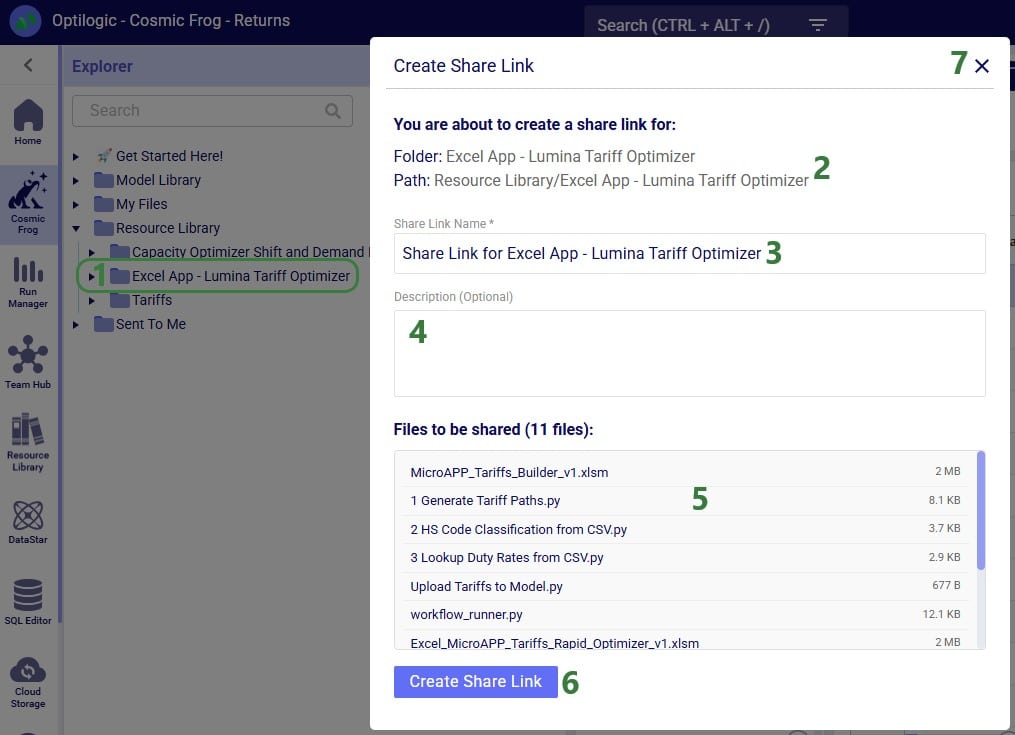

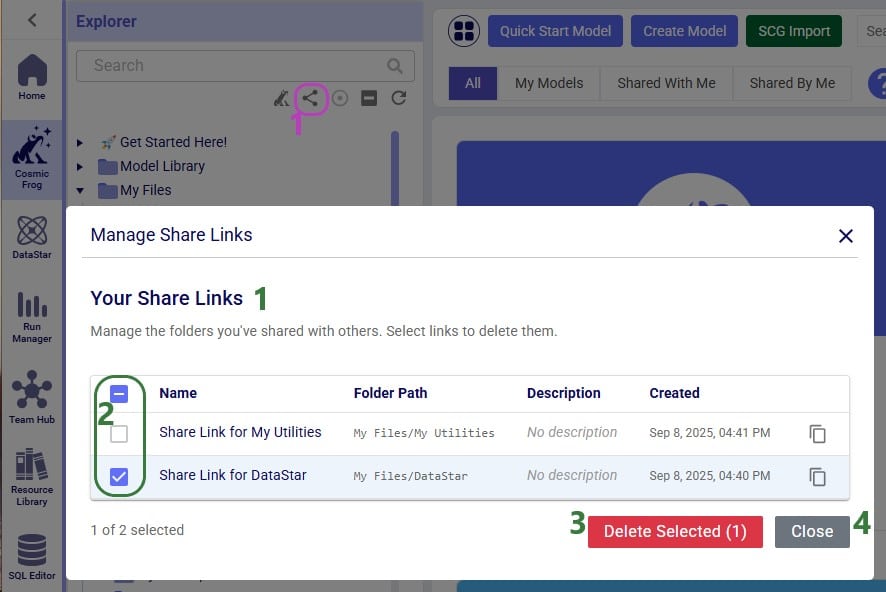

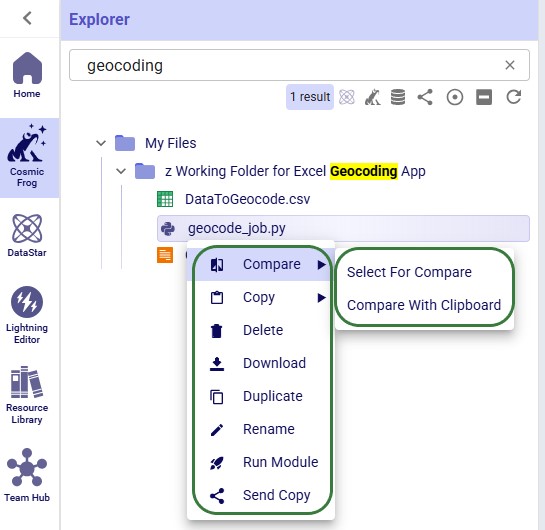
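The Read-Only rule for tables in the Data module (output tables are never editable, and input tables accept insert, delete and modify actions only without Read-Only access) can be sketched as a simple guard. The action names and `is_allowed` are hypothetical; the Appendix remains the reference for which actions are actually allowed.

```python
# Hypothetical action names; see the Appendix for the actual list of actions.
WRITE_ACTIONS = {"insert", "delete", "modify"}


def is_allowed(action: str, table_kind: str, read_only: bool) -> bool:
    """Sketch of the rule for a table in a Cosmic Frog model.

    table_kind is "input" or "output"; read_only is True for Read-Only access.
    """
    if action not in WRITE_ACTIONS:
        return True       # non-write actions, e.g. viewing, are not blocked here
    if table_kind == "output":
        return False      # output tables are read-only by default
    return not read_only  # input tables accept writes only without Read-Only access


print(is_allowed("view", "input", read_only=True))      # True
print(is_allowed("modify", "input", read_only=True))    # False
print(is_allowed("modify", "input", read_only=False))   # True
print(is_allowed("insert", "output", read_only=False))  # False
```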

In addition to the various ways model files can be shared between users, there is a way to share a copy of all contents of a folder with another user/team too:

After clicking on the Create Share Link button, the share link is copied to the clipboard. A toast notification of this is temporarily displayed at the right top in the Optilogic platform. The user can paste the link and send it to the user(s) they want to share the contents of the folder with.

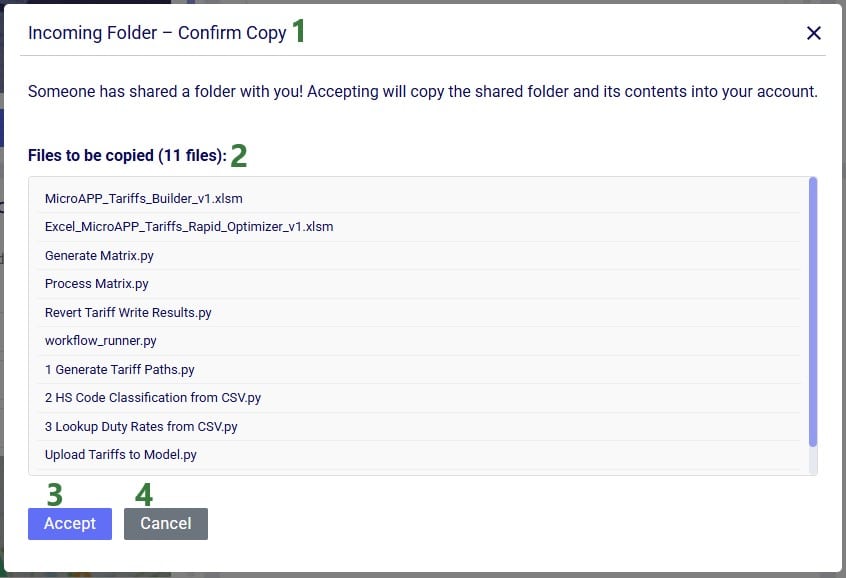

When a user who has received the share link pastes it into their browser while logged into the Optilogic platform, the following form opens:

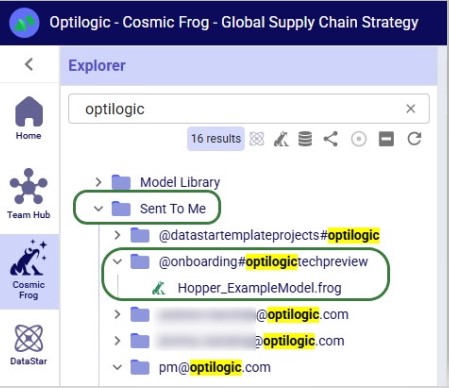

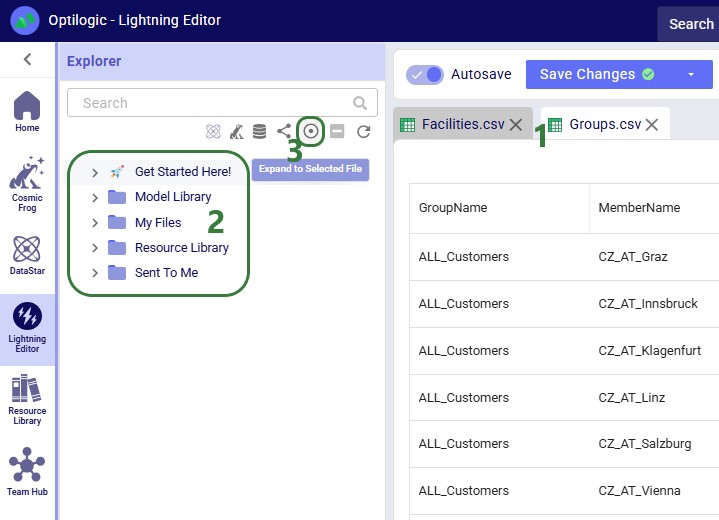

Folders copied using the share link option will be copied into a subfolder of the Sent To Me folder. The name of this subfolder will be the username / email of the user / team that sent the share link. The file structure of the sent folder will be maintained and appear the same as it was in the account of the sender of the share link.
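Where a file from a shared folder ends up in the recipient's workspace can be sketched with Python's `pathlib`. This assumes the layout described above: the shared folder is placed inside `Sent To Me/<sender>/` and keeps its original sub-structure. The folder and file paths and the sender's email address are hypothetical.

```python
from pathlib import PurePosixPath


def shared_destination(sender: str, shared_folder: str, file_path: str) -> PurePosixPath:
    """Recipient-side location of a file from a folder shared via a share link.

    Assumes 'Sent To Me/<sender>/' followed by the shared folder itself and
    its original sub-folders.
    """
    folder = PurePosixPath(shared_folder)
    relative = PurePosixPath(file_path).relative_to(folder.parent)  # keeps the folder name
    return PurePosixPath("Sent To Me") / sender / relative


print(shared_destination(
    "jane@example.com",
    "/My Files/Projects/Q3",
    "/My Files/Projects/Q3/inputs/demand.csv",
))
# Sent To Me/jane@example.com/Q3/inputs/demand.csv
```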

See the View Share Links section in the Getting Started with the Explorer help center article on how to manage your share links.

Action - Allowed? - Notes:

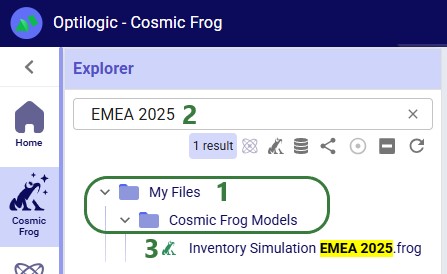

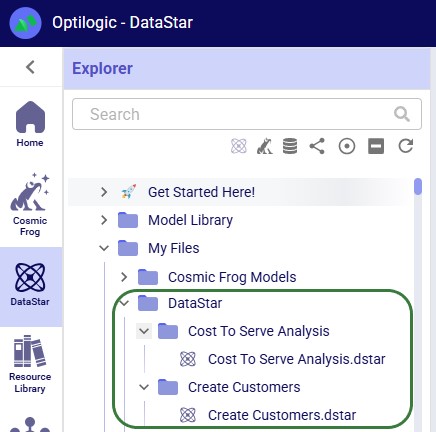

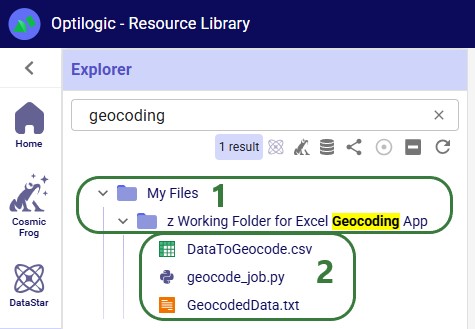

The Model Manager in Cosmic Frog is the central place to create, view, organize, and maintain your supply chain models. It provides tools for quickly finding models, understanding their status, and performing common management actions such as editing, duplicating, and deleting models.

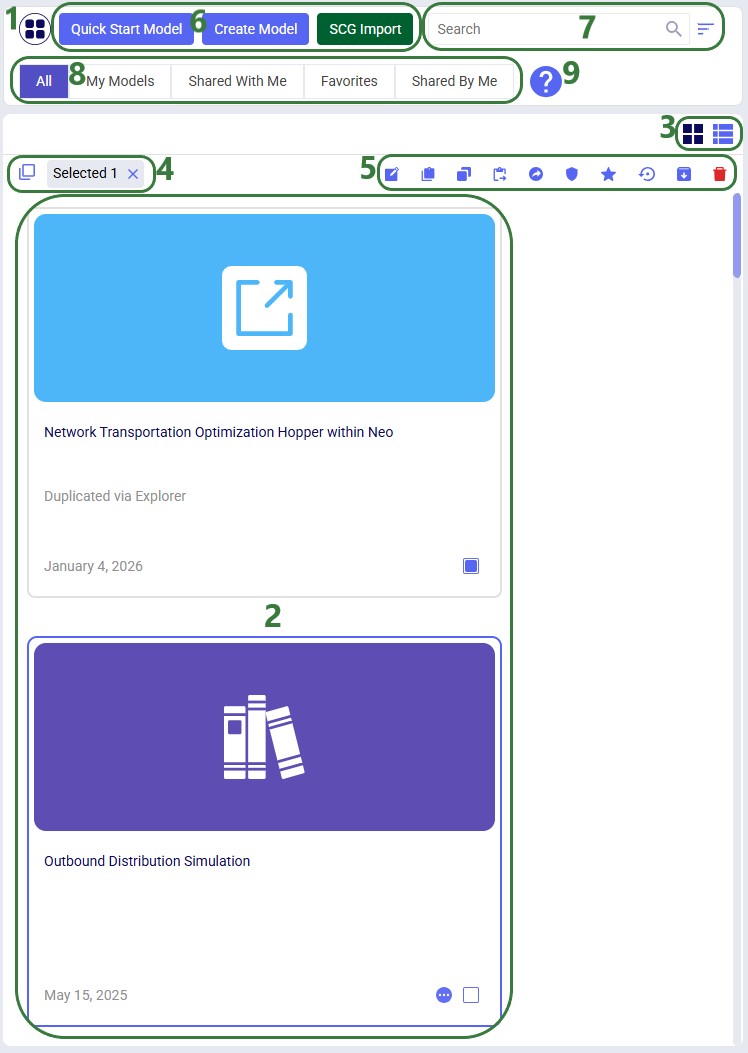

This guide walks you through the Model Manager interface step by step, explaining each major feature and control as it appears on screen. Screenshots are annotated with green outlines to highlight key areas, and numbered callouts are explained in corresponding lists so you can easily follow along.

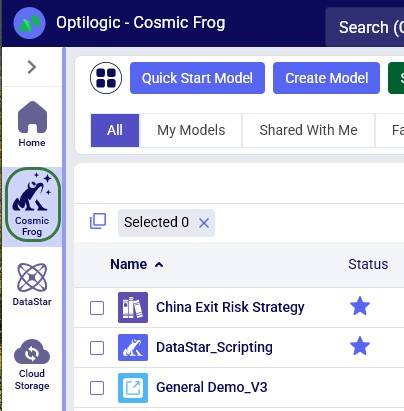

When logged into the Optilogic platform, you can open Cosmic Frog by clicking on its icon in the list of applications on the left. Note that the order of the applications may be different in your list so you may need to scroll down:

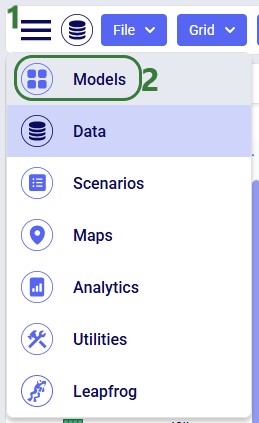

After opening Cosmic Frog, the Model Manager will typically be the active module. However, if you have previously been working in a specific model in Cosmic Frog, that model may open immediately with its Data module active. In that case, you can open the Model Manager from within Cosmic Frog by clicking on the icon with 3 horizontal bars at the top left to open the Module Menu and then selecting Models:

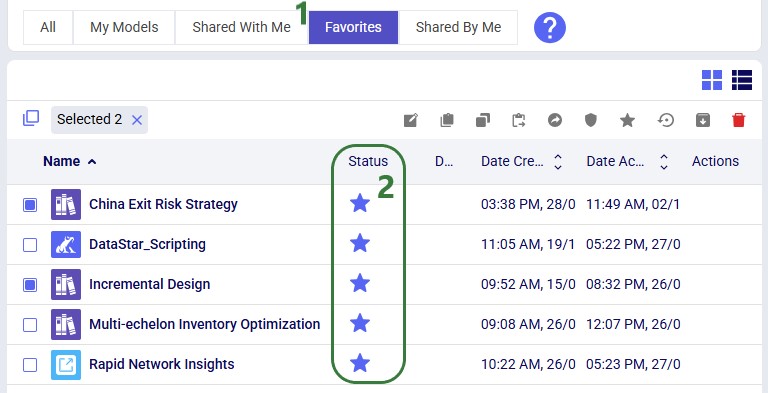

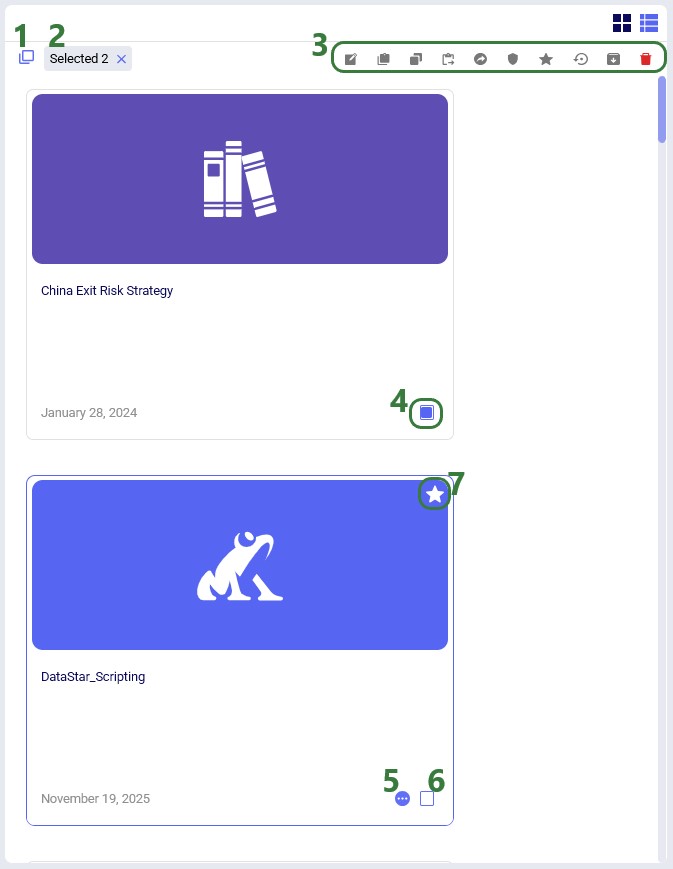

The Model Manager screen displays a table or grid of your existing models along with high level details such as name, status, and last modified date. This view is designed to give you immediate insight into your model library. The following screenshot shows at a high level the different features of the model manager. Each feature will be explained in more detail in subsequent sections of this documentation:

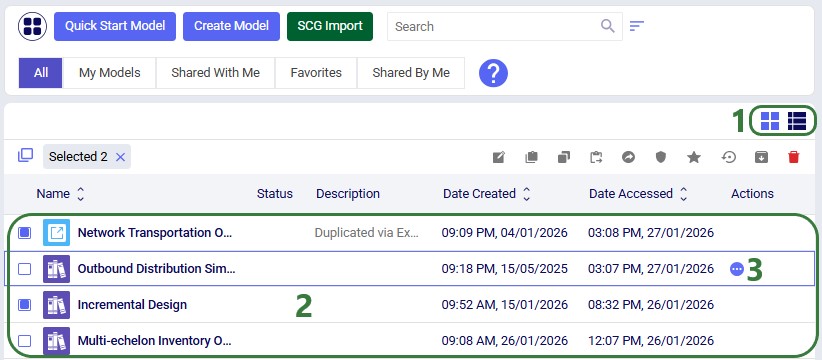

In the following screenshot we have switched from card view to list view:

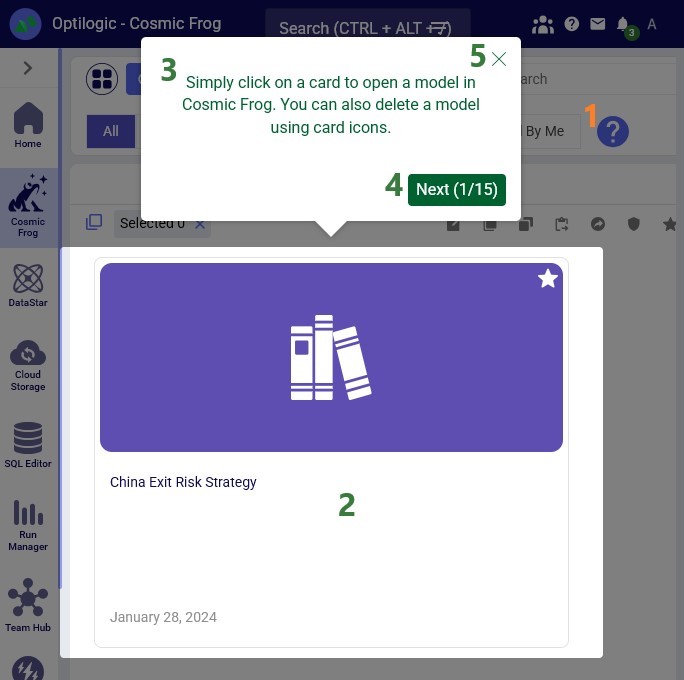

The Help & Hints area provides contextual guidance to help users understand the purpose of the Model Manager and how to use it effectively. These hints are especially useful for new users:

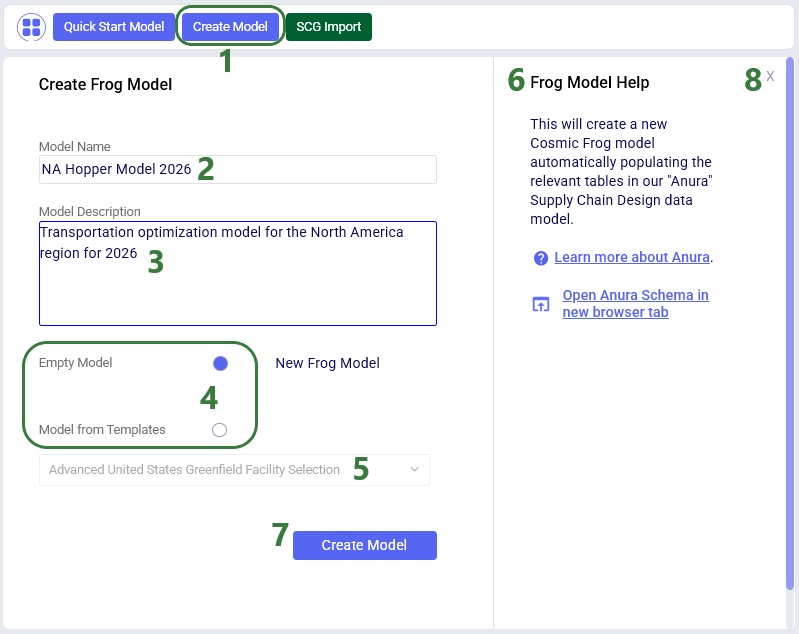

From the Model Manager there are a few options to create a new Cosmic Frog model, which will be covered in this section. We will start with the option from which new empty models as well as copies from Resource Library models can be created:

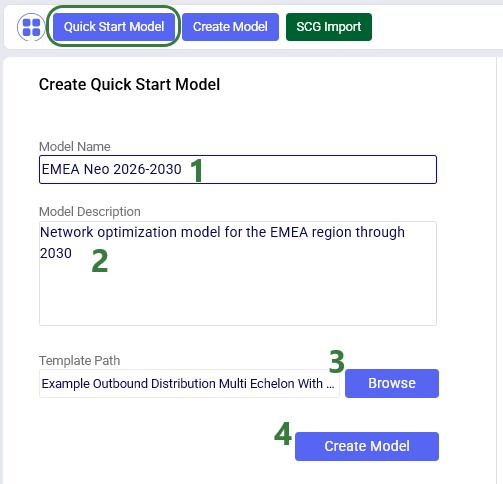

Another option is to create a new model with tables populated from an Excel template: click on the Quick Start Model button to open the Create Quick Start Model form:

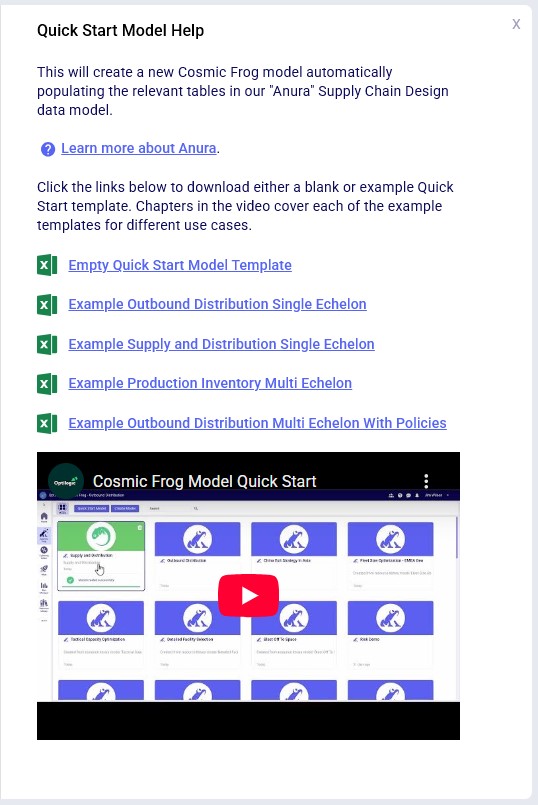

Note that for this option there is help available on the right-hand side of the form too, including example Excel template files, which can be downloaded to function as a starting point to overwrite with your own data. A video on this Quick Start option can be accessed from here too:

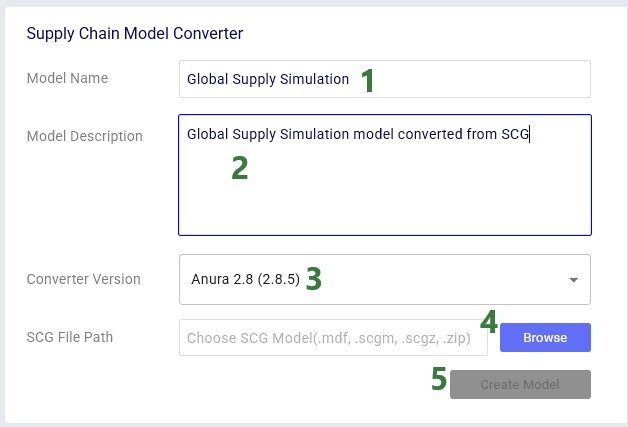

Finally, users also have the option to convert their Supply Chain Guru (SCG) models to a Cosmic Frog model. After clicking on the SCG Import button, the following Supply Chain Model Converter form comes up:

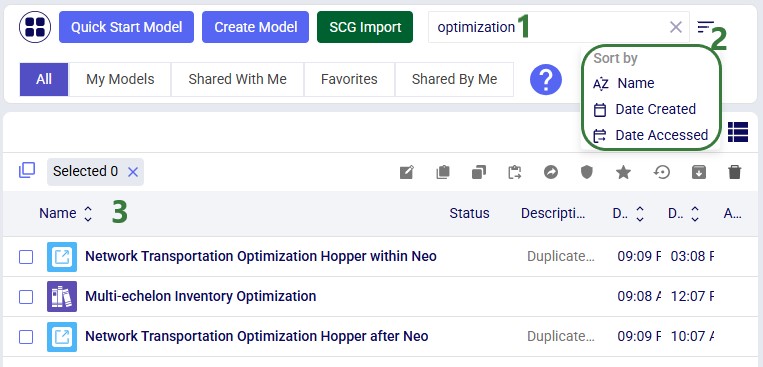

As your model library grows, search, sort, and filter tools help you quickly locate the models you need. The following screenshot shows the use of the search box and the available sort options:

Standard filters to reduce the list of models to those of interest are also available:

To learn more about sharing models, please see this "Model Sharing & Backups for Multi-User Collaboration in Cosmic Frog" help center article.

The Model Manager provides comprehensive model management capabilities through both the action toolbar and context menus. The first 2 screenshots in this section show these in card view, while the last screenshot shows the options while in list view:

The model management actions available from the toolbar and from the context menu of a model in card view are shown in this next screenshot:

Lastly, the next screenshot shows the Model Management Actions while in list view:

Exciting new features that enable users to solve network and transportation optimization in one solve have been added to Cosmic Frog. By including multi-stop route optimization in Network Optimization, users can streamline their analysis and make their Network Optimization more accurate. This is possible because the single optimization solve calculates multi-stop route costs by shipment/customer combination and uses this cost as another possible transportation mode, in addition to OTR, Rail, Air, etc. Including these better transportation costs in a single solve results in more accurate answers.

In addition to this documentation, these 2 videos cover the new Network Transportation Optimization (NTO) features too:

Network Transportation Optimization is particularly useful for two classic network design problems (both will be described in more detail in the next section):

This feature set includes 2 ways of running the Transportation Optimization (Hopper) and Network Optimization (Neo) engines together:

This documentation covers the following for both the Hopper within Neo and Hopper after Neo NTO algorithms: example use cases, model inputs, how to use the new features, and the basics of the model used to show the NTO features. It also walks through 2 example models, including their setup (input tables, scenarios) and the analysis of their outputs using tables and maps.

With this feature, users can consider routes as inputs in a Neo model, meaning that the model will optimize product flow sources based on all costs, including the cost of routes. Costs and asset capacities are taken into account for the routes.

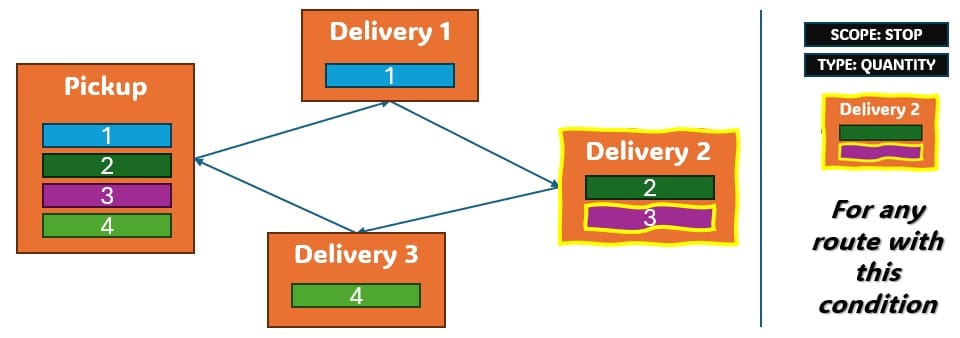

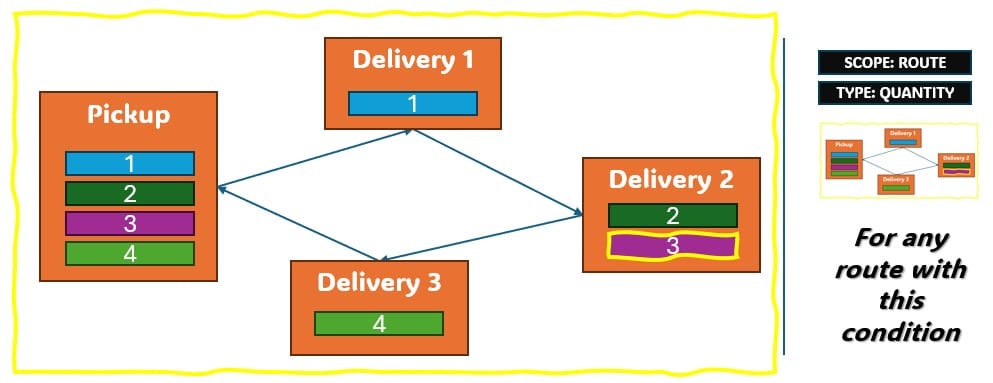

Two example use cases which can be addressed using Hopper within Neo are customer consolidation and hub optimization, which will be illustrated with the images that follow.

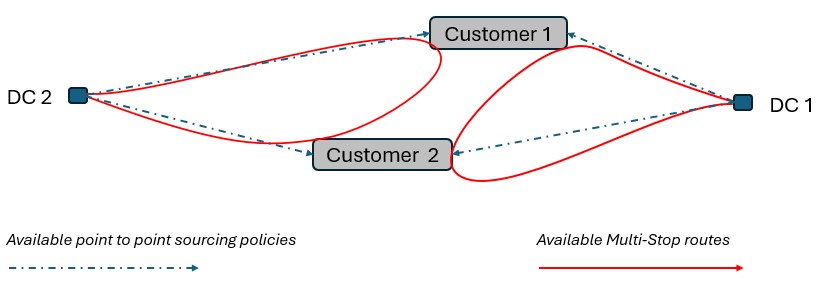

Consider a network where 2 distribution centers (DCs) are available to serve the customers. Two of these customers are in between the DCs and can either be serviced by the DCs directly (the blue dashed point to point lines) or product can be delivered to both of them as part of a multi-stop route from either DC (the red solid lines):

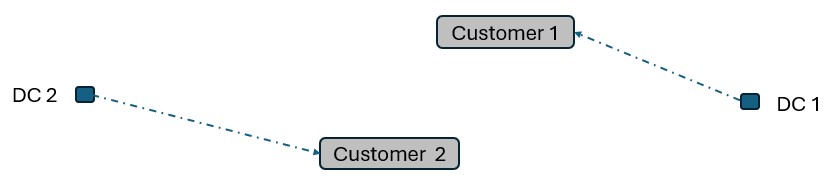

Running Neo without Hopper, not taking any route inputs into account, can lead to a solution where each DC serves 1 customer:

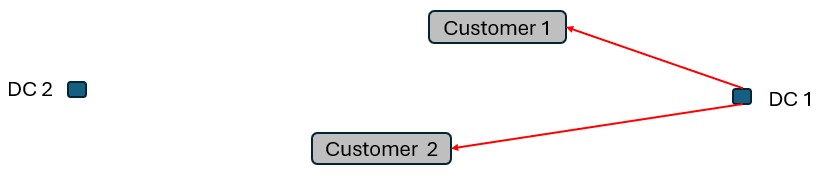

Whereas when route costs and capacities are taken into account during a Hopper within Neo solve, it may be found that the overall solution cost is lower if one of the DCs (DC 1 in this example) serves both customers using a multi-stop route:

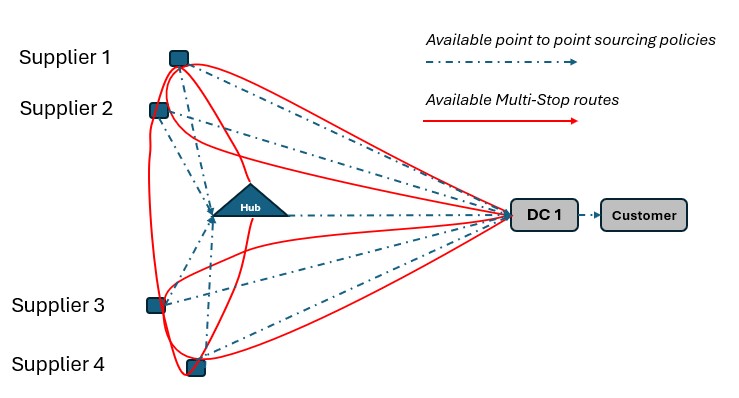
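The reasoning behind this customer consolidation example can be illustrated with hypothetical numbers. The Python sketch below only compares serving each customer from its cheapest direct lane with serving both customers on one multi-stop route from DC 1, and it uses the route only if the combined demand fits on one asset. All costs, demands and the capacity are made up; a real Hopper within Neo solve weighs all flows, costs and capacities in the network together.

```python
# Hypothetical direct (point to point) lane costs and customer demands.
direct_cost = {
    ("DC 1", "Customer A"): 100.0, ("DC 1", "Customer B"): 140.0,
    ("DC 2", "Customer A"): 150.0, ("DC 2", "Customer B"): 100.0,
}
demand = {"Customer A": 8, "Customer B": 10}  # e.g. pallets
route = {"origin": "DC 1", "stops": ["Customer A", "Customer B"], "cost": 170.0}
asset_capacity = 20  # pallets per vehicle

# Without routes: each customer is served by its cheapest direct lane
# (DC 1 serves Customer A, DC 2 serves Customer B).
no_route_total = sum(min(direct_cost[(dc, c)] for dc in ("DC 1", "DC 2")) for c in demand)

# With routes: the multi-stop route is an extra option, usable only if the
# combined demand of its stops fits on one asset.
route_feasible = sum(demand[c] for c in route["stops"]) <= asset_capacity
with_route_total = min(no_route_total, route["cost"]) if route_feasible else no_route_total

print(no_route_total, route_feasible, with_route_total)  # 200.0 True 170.0
```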

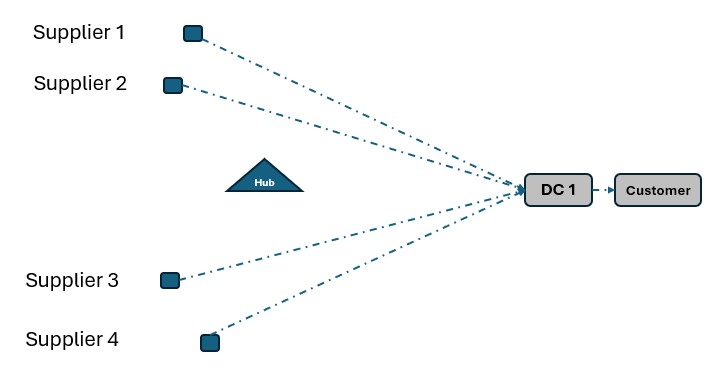

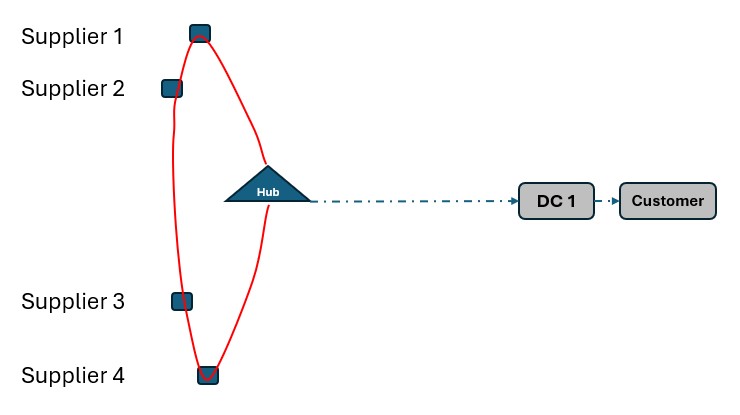

As a second example, consider a network where Suppliers can deliver product either to a Hub or to a Distribution Center, in both cases either directly or on a multi-stop route that picks up from multiple suppliers. Again, blue dashed lines indicate direct point to point deliveries and red lines indicate multi-stop route options:

Not taking route options into account when running Neo without Hopper can lead to a solution where each Supplier delivers to DC 1 directly:

This solution can change to one where the Suppliers deliver to the Hub on a multi-stop route when the route options are taken into account in a Hopper within Neo run, if doing so is overall beneficial (e.g. lower total cost):

The Hopper within Neo algorithm needs inputs in order to consider routes as part of the Neo network optimization run. These include:

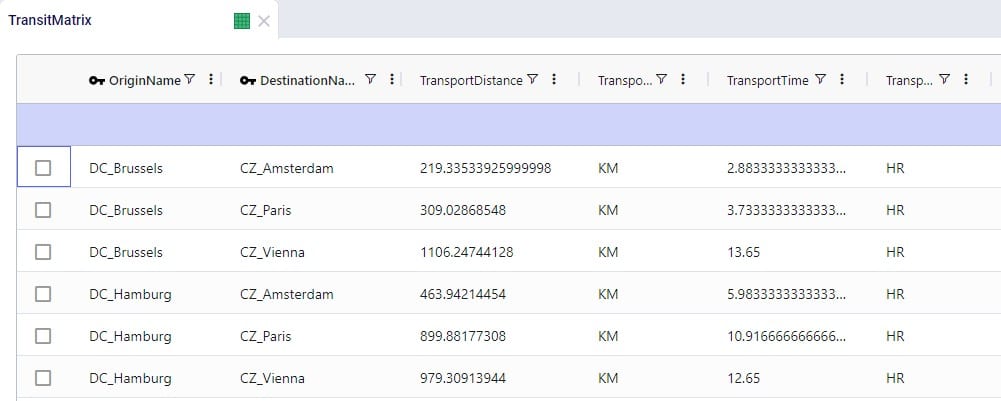

In all cases, additional records need to be added to the Transportation Policies table as well (explained in more detail below), and if the Transit Matrix table is populated, it will also be used for Hopper within Neo runs.

Please note that any other Hopper related tables (e.g. relationship constraints, business hours, transportation stop rates, etc.), whether populated or not, are not used during a Hopper within Neo solve.

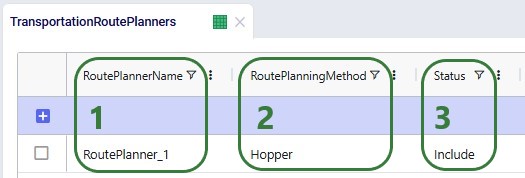

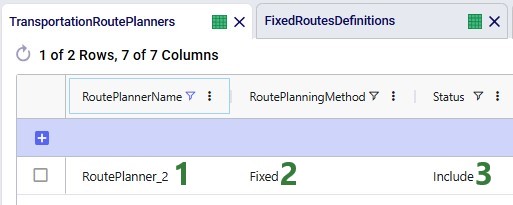

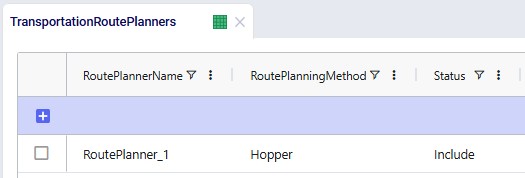

To use the Hopper algorithm for route generation, the Transportation Route Planners and Transportation Policies tables need to be used. Starting with the Transportation Route Planners table:

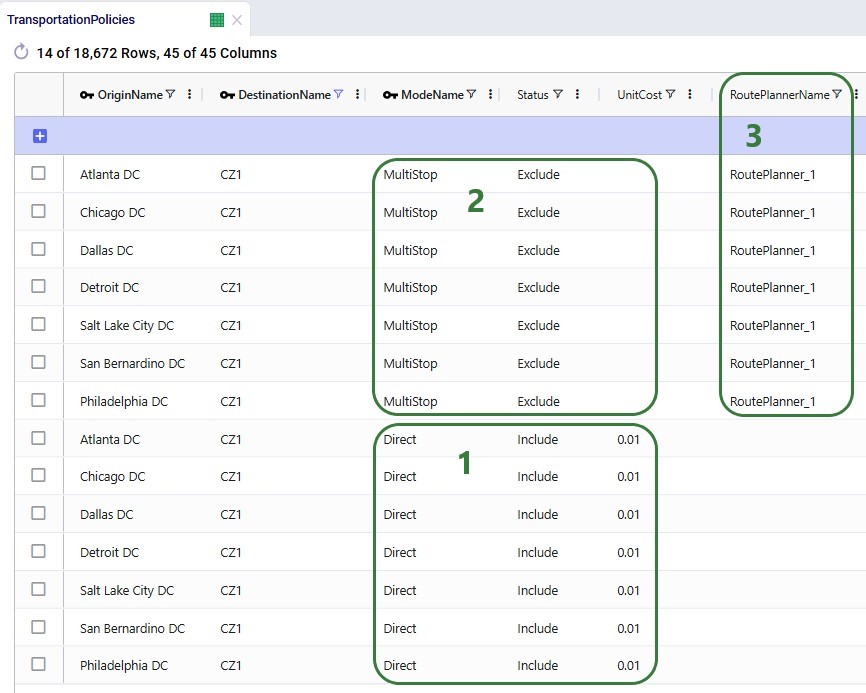

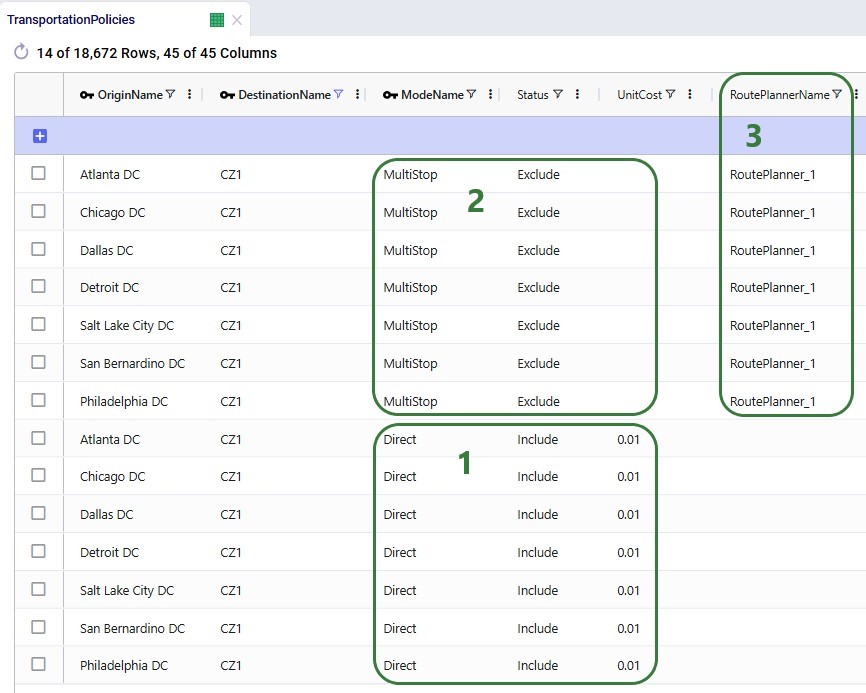

In the Transportation Policies table, records need to be added to indicate which lanes will be considered to be part of a multi-stop route:

Under the hood, potential routes will be calculated based on the inputs provided by the user. For example, for a case where the shipments table is not populated, and the user has specified their own assets:

As a numerical example illustrating how a candidate route is calculated, consider the following:

These costs are then used as inputs into the Network Optimization, together with costs for other transportation modes, and all are taken into account when optimizing the model.
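The cost structure of a candidate route can be illustrated with a minimal sketch. It assumes that a route's cost is the asset's fixed cost plus a distance-based and a time-based charge, and that route time consists of driving time, one fixed pickup time, and one fixed delivery time per stop. This mirrors the asset and rate inputs described in this article, but the `AssetRate` class, the `candidate_route_cost` function, and all numbers in the usage line are hypothetical; this is not Hopper's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetRate:
    """Hypothetical bundle of asset and rate inputs (not a Cosmic Frog API)."""
    fixed_cost: float          # charged once per route for using the asset
    cost_per_mile: float       # distance-based rate
    cost_per_hour: float       # time-based rate, e.g. driver cost
    fixed_pickup_min: float    # applied once per route at the pickup location
    fixed_delivery_min: float  # applied once at every delivery stop


def candidate_route_cost(total_miles: float, drive_hours: float,
                         stops: int, rate: AssetRate) -> float:
    """Fixed cost + distance cost + time cost of one candidate route.

    Assumption: route time = driving time + one fixed pickup time
    + one fixed delivery time per stop.
    """
    service_hours = (rate.fixed_pickup_min + stops * rate.fixed_delivery_min) / 60
    total_hours = drive_hours + service_hours
    return (rate.fixed_cost
            + rate.cost_per_mile * total_miles
            + rate.cost_per_hour * total_hours)


# Hypothetical inputs: a 3-stop route of 120 miles with 3 hours of driving.
rate = AssetRate(fixed_cost=500, cost_per_mile=1.2, cost_per_hour=25,
                 fixed_pickup_min=10, fixed_delivery_min=5)
print(round(candidate_route_cost(120, 3, 3, rate), 2))  # 729.42
```

Keeping the inputs in one small record makes it easy to price the same route with different assets and compare the results.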

To use routes that are defined by the user in the Neo solve, the user also needs to use the Transportation Route Planners table, in combination with the Fixed Routes, Fixed Routes Definitions, and Transportation Policies tables. Starting with the Transportation Route Planners table:

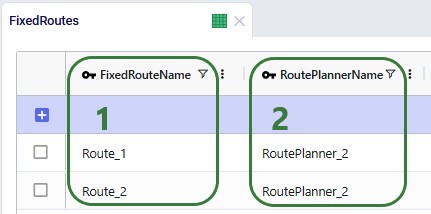

The Fixed Routes table connects the names of the routes the user defines with the route planner name:

There are a few additional columns on the Fixed Routes table which are not shown in the screenshot above. These are:

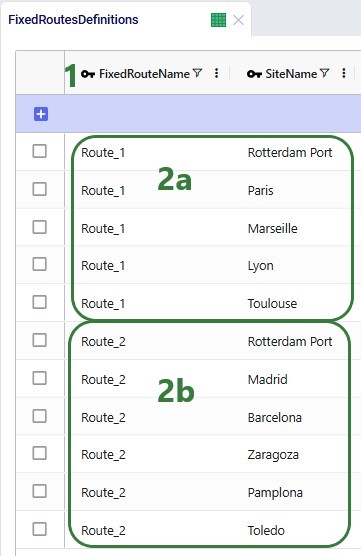

The Fixed Routes Definitions table needs to be used to indicate which stops are on a route together:

Please note that the Stop Number field on this table is currently not used by the Hopper within Neo functionality. The solve will determine the sequence of the stops.

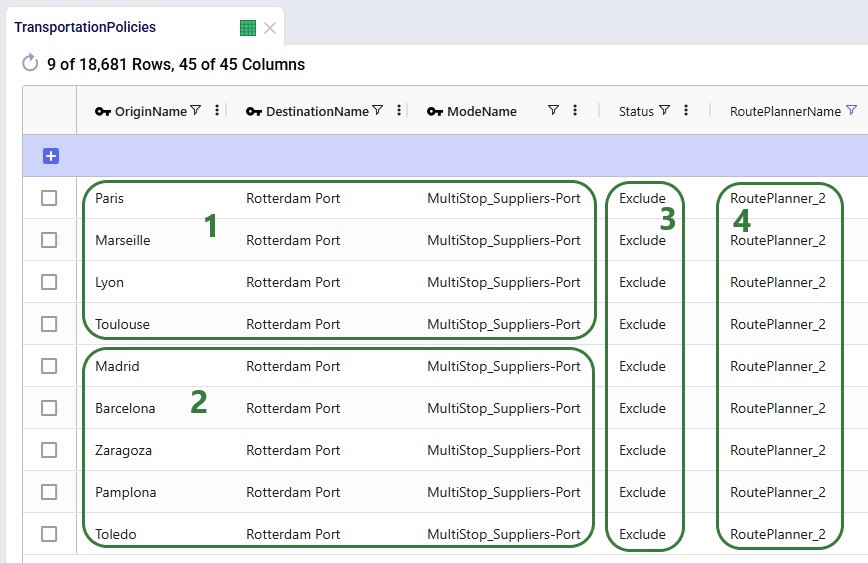

Finally, to specify which locations function as sources (pickups) and which as drop-offs (deliveries) on a route, and to indicate that multi-stop routes are an option for these source-destination combinations, corresponding records need to be added to the Transportation Policies table as well:

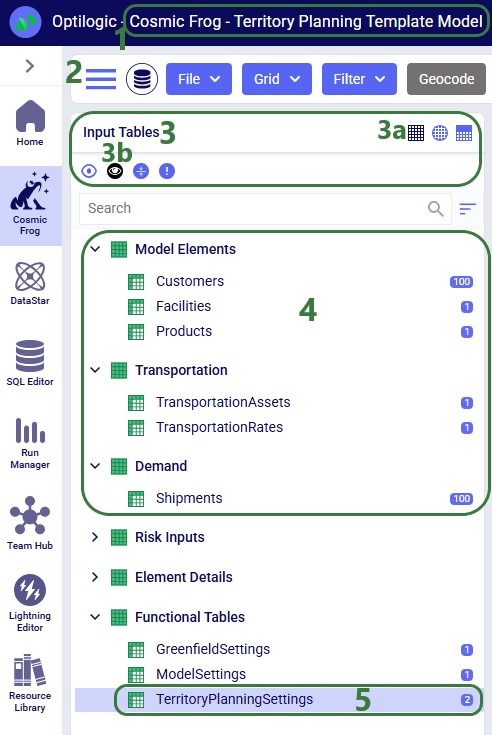

A copy of the Global Supply Chain Strategy model from the Resource Library was used as the starting point for both the Hopper within Neo and Hopper after Neo demo models. The original Global Supply Chain Strategy model can be found here on the Resource Library, and a video describing it can be found in this "Global Supply Chain Strategy Demo Model Review" Help Center article. The modified models showing the Hopper within and after Neo functionality can be found here on the Resource Library:

To learn more about the Resource Library and how to use it, please see the "How to use the Resource Library" Help Center article.

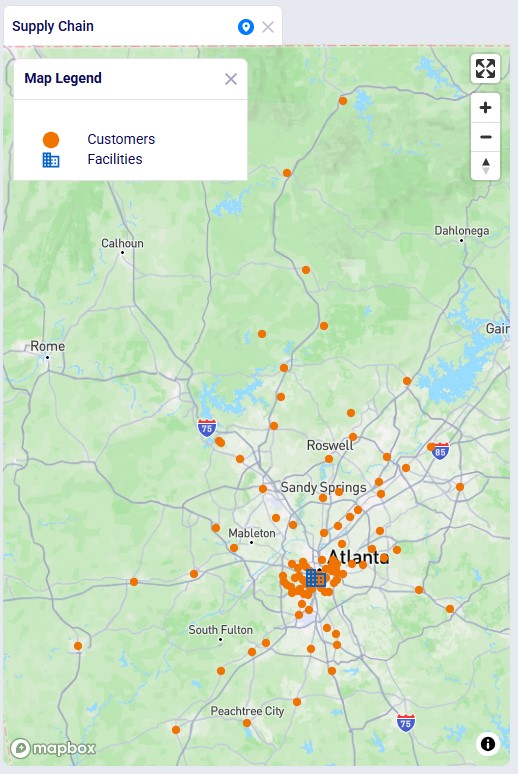

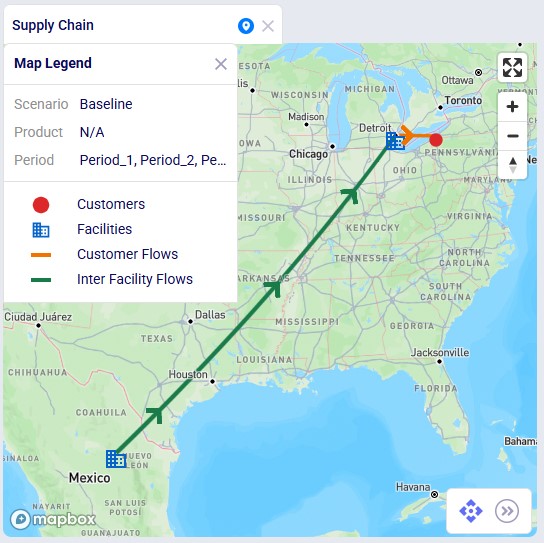

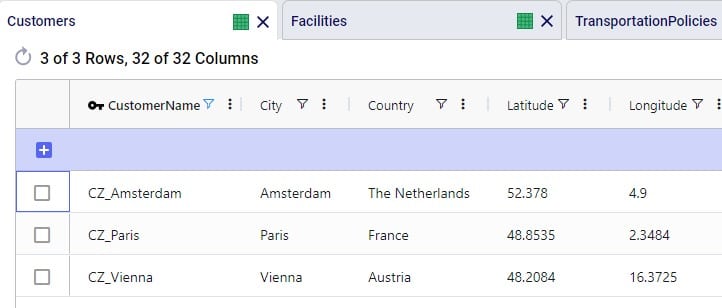

A short description of the main elements in this model is as follows:

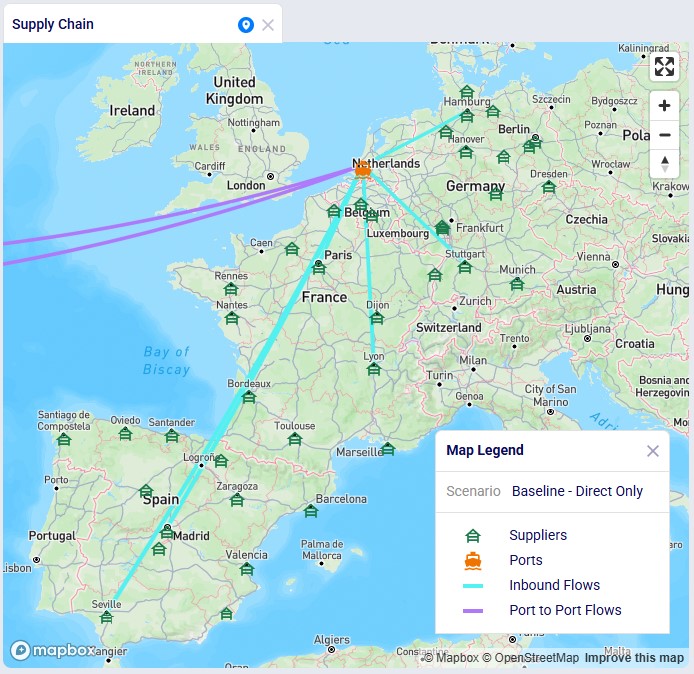

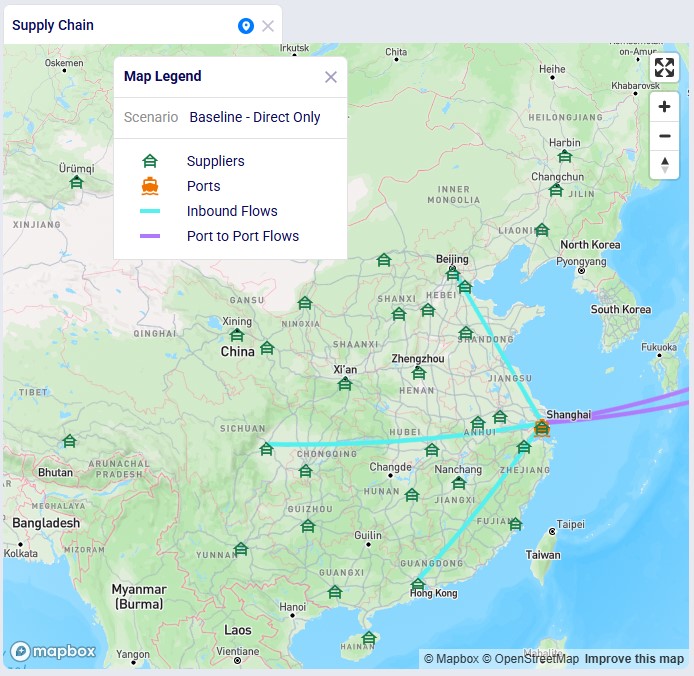

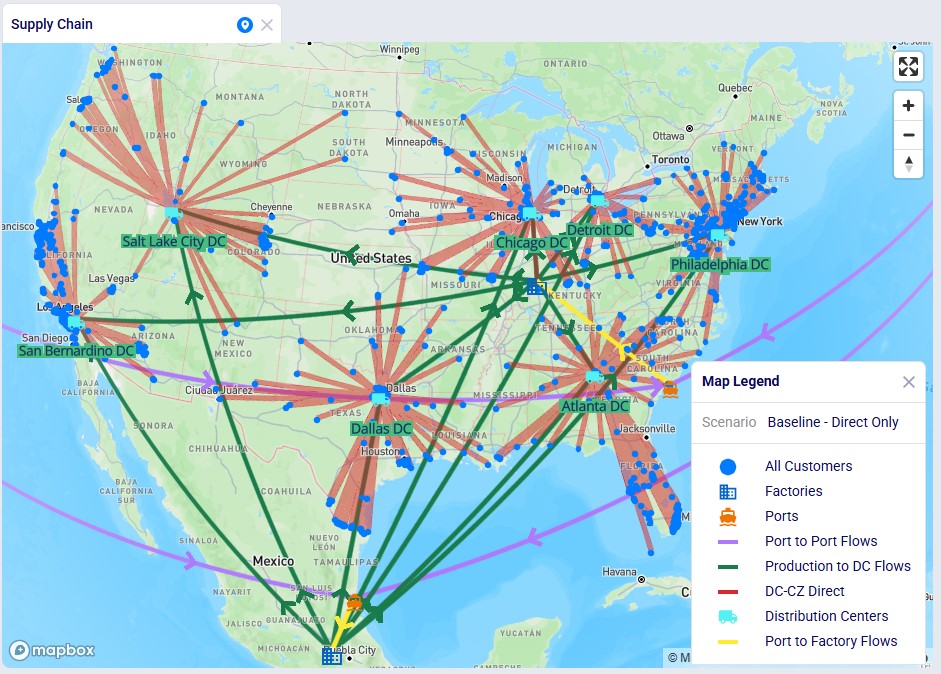

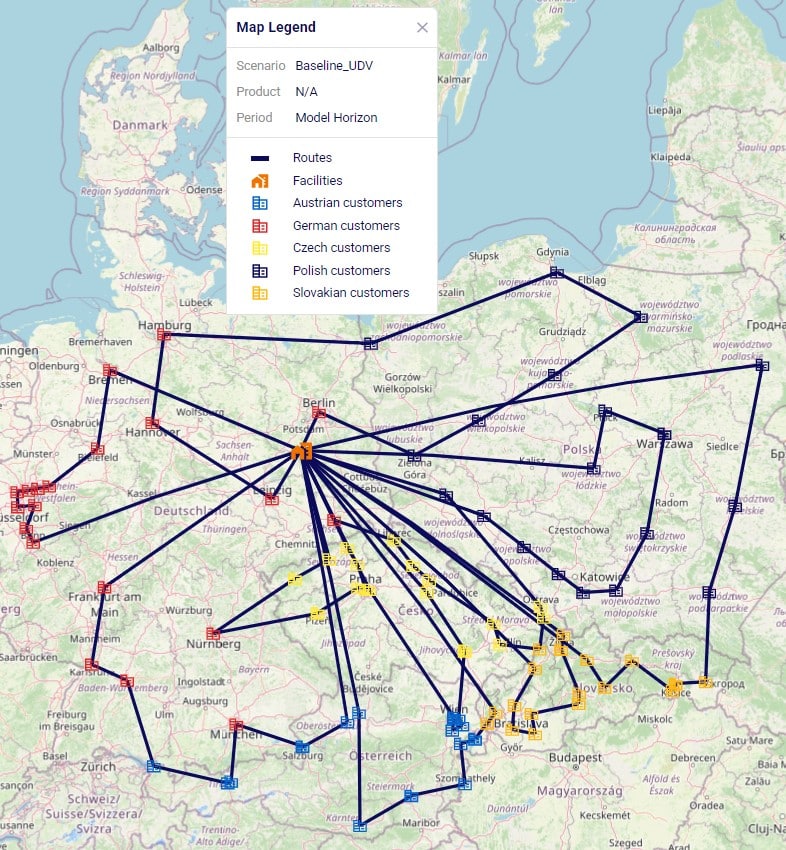

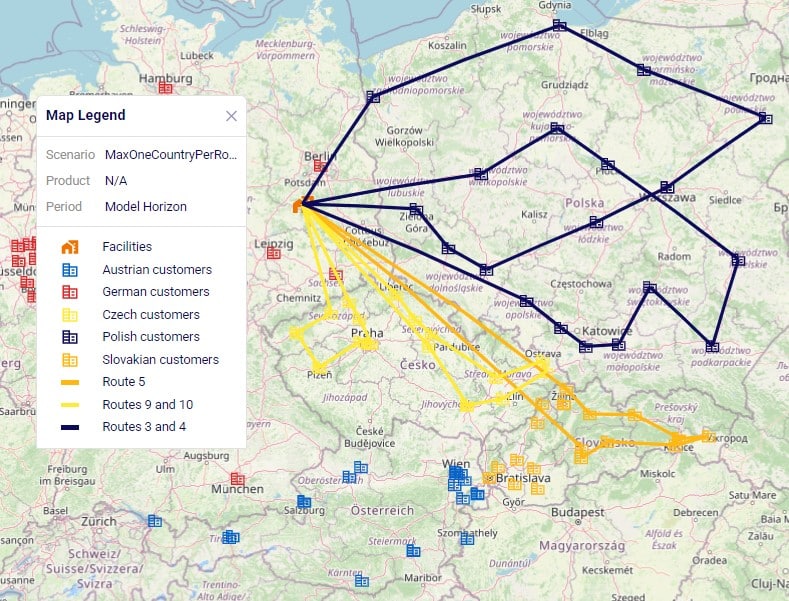

We illustrate the locations and flow of product through the following 3 maps:

Even though around 35 suppliers are set up in the model in the EMEA region, only 6 of them are used based on the records in the Supplier Capabilities input table. We see the light blue lines from these suppliers delivering raw materials to the port in Rotterdam. From there, the 2 purple lines indicate the transport of the raw materials from Rotterdam to the US and Mexican ports.

Similarly, around 35 suppliers are set up in China in the model, but only 3 of them are used per the setup in the Supplier Capabilities input table. The light blue lines are the transport of raw materials from the suppliers to the Shanghai port and the purple lines from the Shanghai port to the ports in Mexico and the US.

This map shows the flows into and within/between the US and Mexico locations:

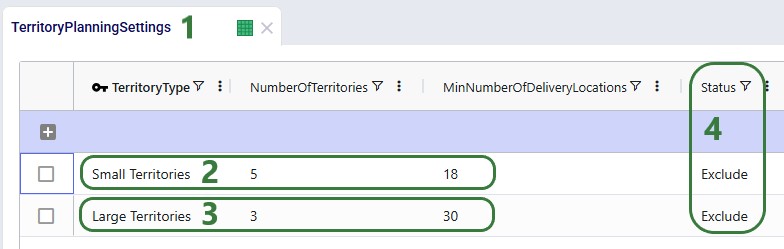

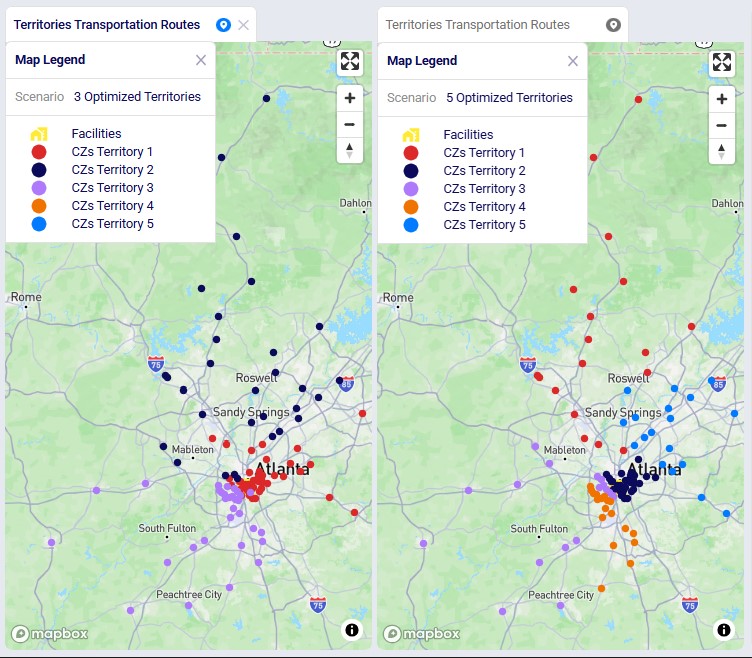

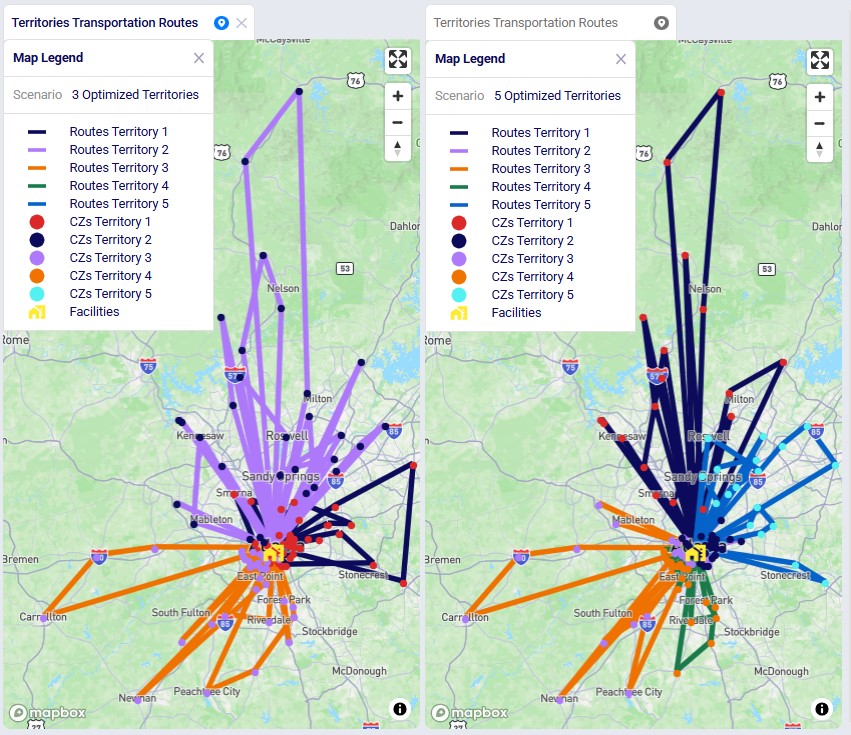

We will show how Hopper routes for the last leg of the network, from DCs to customers, can be taken into account during a Neo solve. Through a series of scenarios, we will explore if using multi-stop routes for this supply chain is beneficial:

After copying the Global Supply Chain Strategy model from the Resource Library, the following changes/additions were made to be able to consider Hopper generated routes for the DC-CZ leg during the Neo solve. After listing these changes here, we will explain each in more detail through screenshots further below. Users can copy this modified model with the scenarios, outputs, and preconfigured maps from the Resource Library.

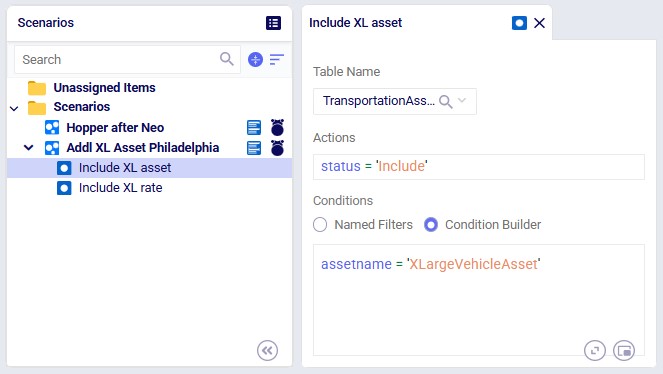

One record is added in this table where it is indicated that the route planner named "RoutePlanner_1" will use Hopper generated routes and is by default included during a Neo solve.

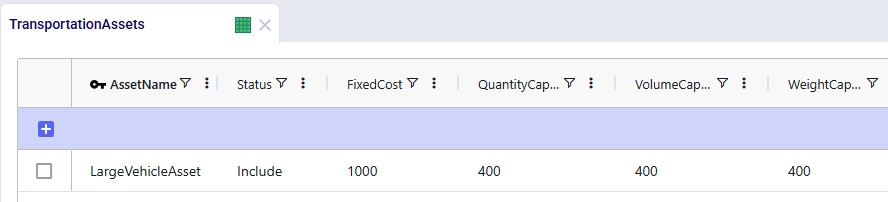

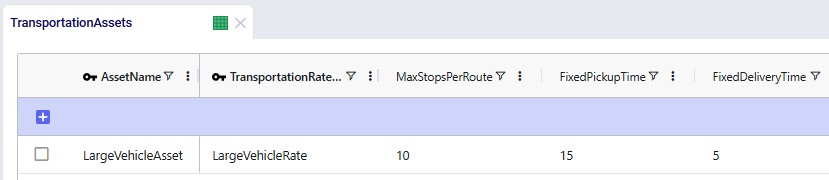

We choose to add our own asset instead of using the 3 default ones that are used if the Transportation Assets table is left blank. The characteristics of our large vehicle are shown in the next 2 screenshots:

This large asset is included in the scenario runs as its Status = Include. The fixed cost of the asset is $1,000, with a capacity of 400 units / 400 cubic feet / 400 pounds.
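Because the capacity is given in three units of measure, a route only fits on this asset if the combined load of its stops stays within every one of them. The sketch below shows that check under the assumption that stop loads simply add up along the route; `fits_on_asset`, the dictionary keys, and the example loads are hypothetical, and only the 400/400/400 capacity comes from the model described above.

```python
from typing import Dict, List

# Capacity of the demo's large asset in each unit of measure (from the text).
LARGE_VEHICLE_CAPACITY: Dict[str, float] = {"units": 400, "cubic_feet": 400, "pounds": 400}


def fits_on_asset(stop_loads: List[Dict[str, float]],
                  capacity: Dict[str, float]) -> bool:
    """True if the summed load of all stops fits in every capacity dimension.

    Assumption: each stop's load is keyed by the same UOM names as `capacity`;
    a missing key counts as zero.
    """
    for uom, limit in capacity.items():
        total = sum(load.get(uom, 0.0) for load in stop_loads)
        if total > limit:
            return False  # one exceeded dimension is enough to reject the route
    return True


# Hypothetical 2-stop route: 350 units and 300 pounds fit, but 420 cubic feet do not.
route = [{"units": 150, "cubic_feet": 120, "pounds": 200},
         {"units": 200, "cubic_feet": 300, "pounds": 100}]
print(fits_on_asset(route, LARGE_VEHICLE_CAPACITY))  # False
```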

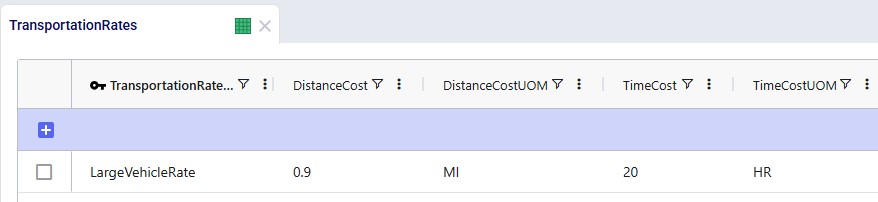

A rate record is set up in the Transportation Rates input table in order to model distance- and time-based costs. This is shown in the next screenshot. To use it, we link it to the asset by using the Transportation Rate Name field on the Transportation Assets table.

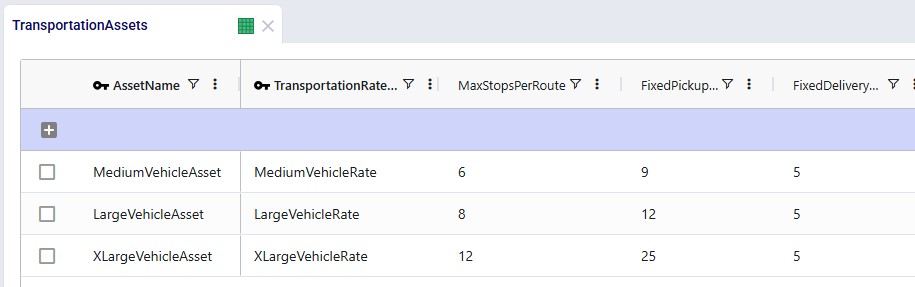

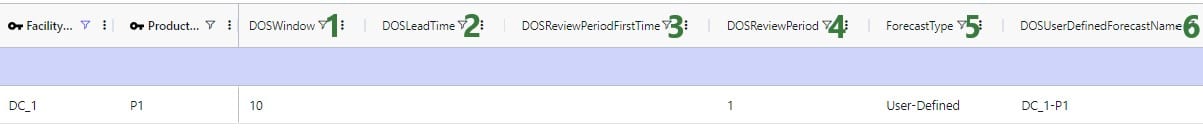

The Max Stops Per Route is set to 10. A Fixed Pickup Time of 15 minutes is entered; it is applied once when picking up product at the DC. A Fixed Delivery Time of 5 minutes is also set; it is applied once at each customer when delivering product (the UOM fields are omitted in the screenshot; they are set to MIN).

Besides the fixed cost for the asset as specified in the Transportation Assets table, we also want to apply both distance- and time-based costs to the routes:

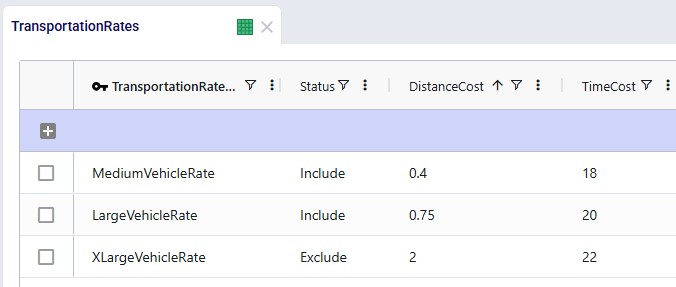

A record is set up in the Transportation Rates table, and its Transportation Rate Name is used in the Transportation Assets table to link the correct rate to the correct asset (e.g. LargeVehicleRate is used for the LargeVehicleAsset). The distance cost is set to 0.9 per mile and the time cost to 20 per hour; the latter mostly reflects the cost of the driver.
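Assuming that the fixed asset cost, the distance and time rates, and the fixed pickup and delivery times combine additively into a single route cost, a route served by the LargeVehicleAsset with $n$ customer stops, a total distance of $d$ miles, and a driving time of $t_{\text{drive}}$ hours would cost approximately:

$$
C_{\text{route}} = 1000 + 0.9\,d + 20\left(t_{\text{drive}} + \frac{15 + 5n}{60}\right)
$$

For a hypothetical 4-stop route of 250 miles with 5 hours of driving, this gives $1000 + 225 + 20\,(5 + 35/60) \approx 1336.67$. The route costs reported by the solver may include components not captured in this simplified formula.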

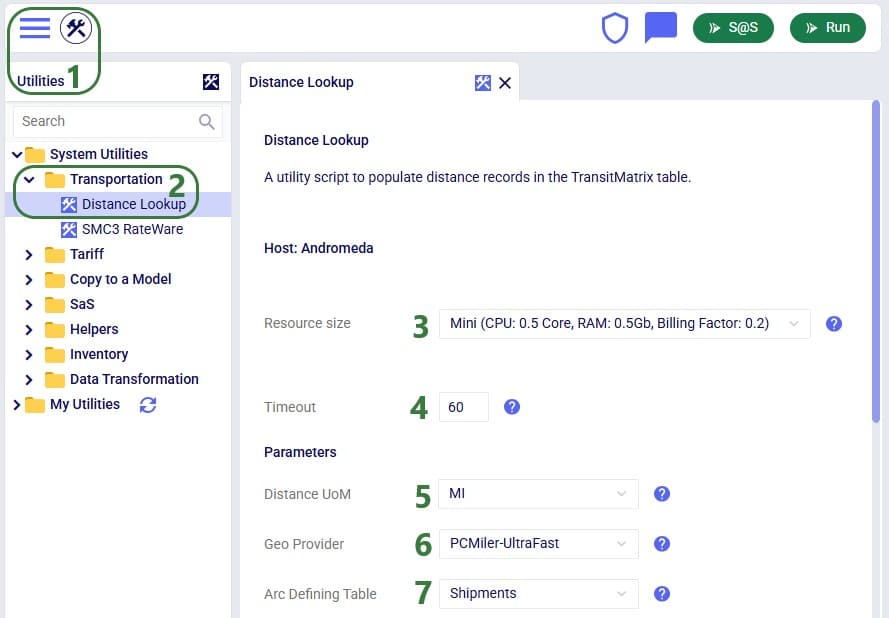

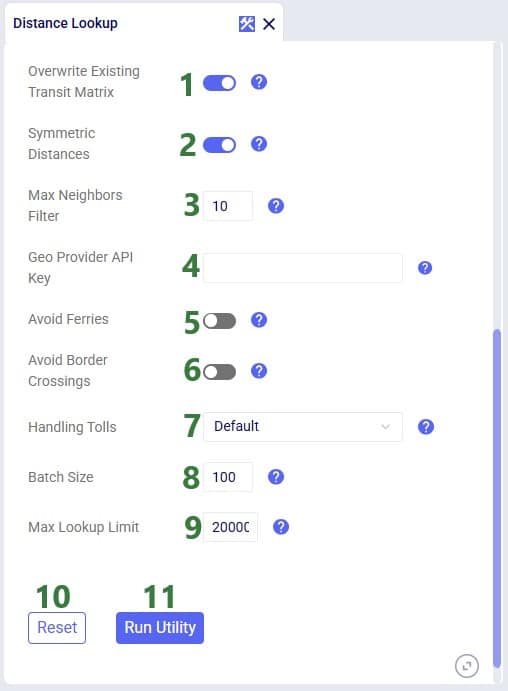

For the scenarios to use actual road distances and transport times, the Distance Lookup Utility in Cosmic Frog was used with the following parameters:

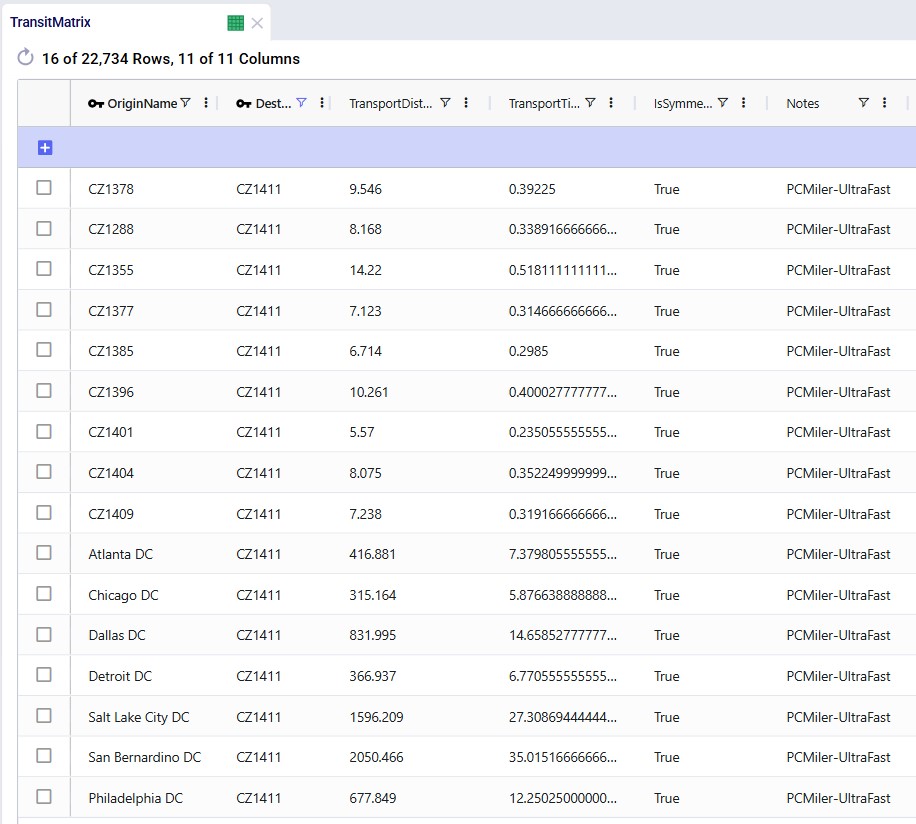

A subset of records filtered for 1 customer destination (CZ1411) is shown in this next screenshot where we see the distance and time from the 10 closest other customers to this customer, and also from all 7 DCs:

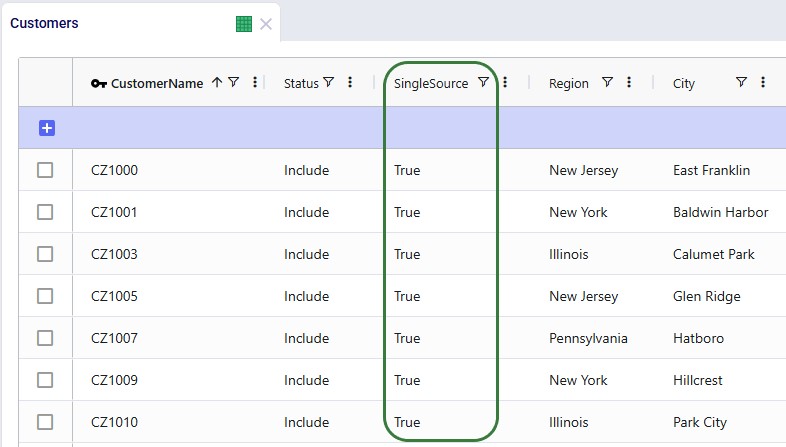
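The set of lanes described above (for each customer, the 10 closest other customers plus all DCs) can be sketched as follows. This only illustrates which origin-destination pairs end up in the transit matrix; it assumes "closest" is ranked by straight-line (haversine) distance, which may differ from how the Distance Lookup Utility selects neighbors, and the function names are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Coord = Tuple[float, float]  # (latitude, longitude) in degrees
EARTH_RADIUS_MILES = 3958.8


def haversine_miles(a: Coord, b: Coord) -> float:
    """Straight-line (great-circle) distance in miles; not a road distance."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(h))


def lanes_to_look_up(customers: Dict[str, Coord], dcs: List[str],
                     k: int = 10) -> List[Tuple[str, str]]:
    """(origin, destination) pairs: for each customer, its k nearest other
    customers plus every DC."""
    lanes: List[Tuple[str, str]] = []
    for dest, dest_xy in customers.items():
        neighbors = sorted((c for c in customers if c != dest),
                           key=lambda c: haversine_miles(customers[c], dest_xy))
        lanes.extend((origin, dest) for origin in neighbors[:k])
        lanes.extend((dc, dest) for dc in dcs)
    return lanes
```

With 7 DCs and `k=10`, each customer destination receives 17 inbound lanes, matching the record count seen for CZ1411 in the screenshot.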

To remove potential dual sourcing, all customers are set to single sourcing (Single Source field is set to True on the Customers table), meaning they need to be served all product they demand from a single DC. In this model, setting customers to single sourcing also helps reduce the runtime of the scenarios:

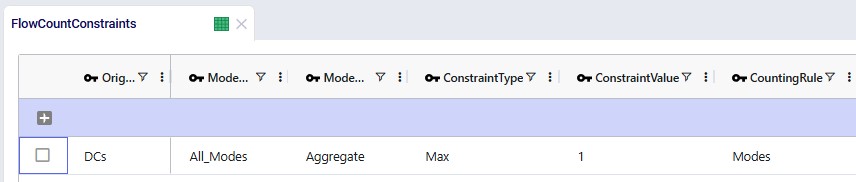

To further reduce solve times, a flow count constraint is added that allows at most 1 mode to be used to serve each customer:

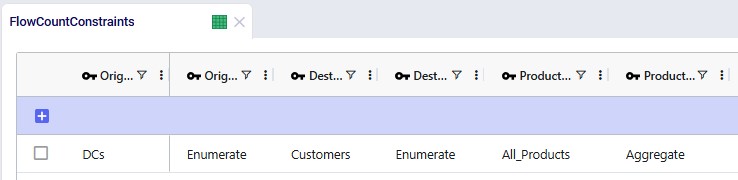

Groups for all DCs, all Customers, all Products, and all Modes (the 2 modes used to serve customers: Direct and MultiStop) are used in the Origin, Destination, Product, and Mode fields of the Flow Count Constraints table. The Group Behavior fields for Origin and Destination are set to Enumerate, meaning that under the hood this constraint is expanded into individual constraints for each DC-Customer combination. The Group Behavior fields for Product and Mode, on the other hand, are set to Aggregate, meaning that the constraint applies to all products and modes together. The Counting Rule indicates which entities are counted, in this case the number of modes, which cannot exceed 1 (Type = Max and Value = 1); i.e. only 1 mode can be selected on each DC-Customer lane.
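The difference between Enumerate and Aggregate can be illustrated with a small checker. Enumerating Origin and Destination yields one constraint per DC-customer lane; aggregating Product and Mode means that all products on a lane share a single count of distinct modes. This is a hypothetical sketch that validates a given flow solution; it is not how the Neo solver formulates the constraint internally, and the function names and the "Widgets" product are made up.

```python
from collections import defaultdict
from itertools import product
from typing import DefaultDict, Dict, List, Set, Tuple

Lane = Tuple[str, str]               # (dc, customer)
FlowKey = Tuple[str, str, str, str]  # (dc, customer, product, mode)


def expand_mode_count_constraint(dcs: List[str], customers: List[str]) -> List[Lane]:
    """Enumerate Origin and Destination: one constraint per DC-customer lane."""
    return list(product(dcs, customers))


def violated_lanes(flows: Dict[FlowKey, float], max_modes: int = 1) -> List[Lane]:
    """Lanes on which more than `max_modes` distinct modes carry flow.

    Products and modes are aggregated: all products on a lane count
    towards one shared set of used modes.
    """
    modes_used: DefaultDict[Lane, Set[str]] = defaultdict(set)
    for (dc, customer, _product, mode), qty in flows.items():
        if qty > 0:
            modes_used[(dc, customer)].add(mode)
    return sorted(lane for lane, modes in modes_used.items() if len(modes) > max_modes)


flows = {("DC1", "CZ1", "Rockets", "Direct"): 100,
         ("DC1", "CZ1", "Widgets", "MultiStop"): 50,   # second mode on the same lane
         ("DC2", "CZ2", "Rockets", "MultiStop"): 80}
print(violated_lanes(flows))  # [('DC1', 'CZ1')]
```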

The Status field of the Flow Count Constraint is set to Exclude (not shown in the screenshots above), and the constraint will be included in 2 of the scenarios by changing the status through a scenario item.

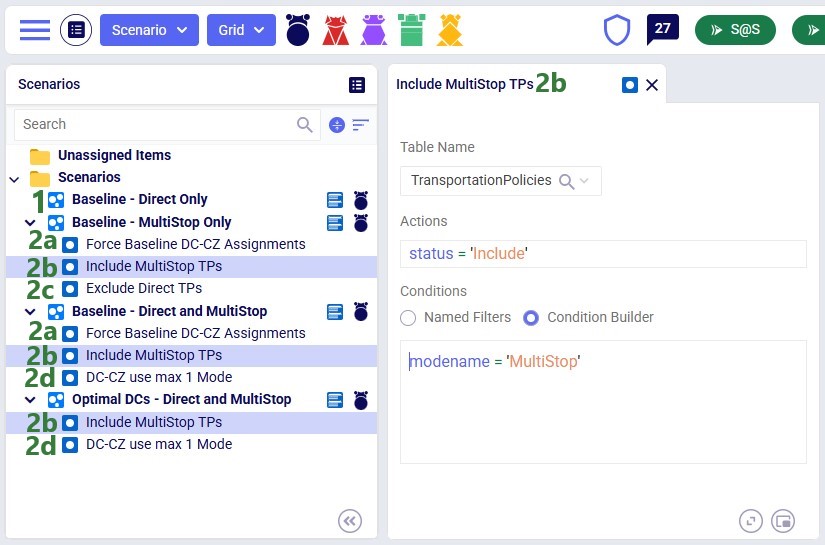

The following screenshot shows the scenarios and which scenario items are associated with each of them. To learn more about creating scenarios and their items, please see these 2 help center articles: "Creating Scenarios in Cosmic Frog" and "Getting Started with Scenarios".

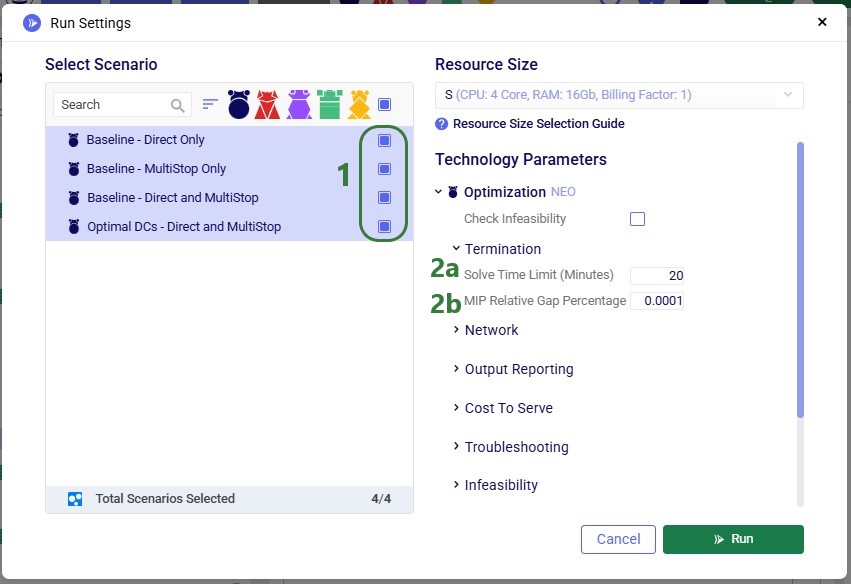

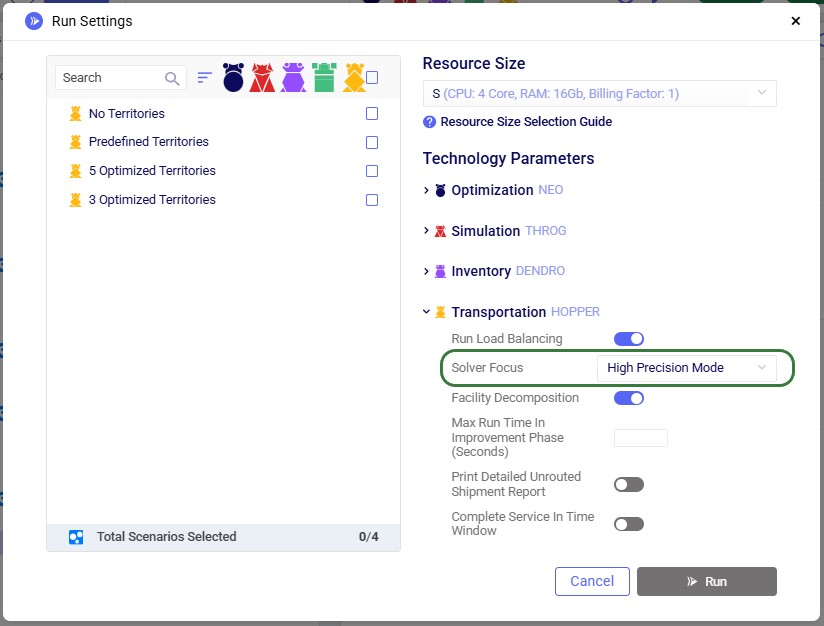

To find a balance between running the model to a low enough gap to reduce any suboptimality in the solution and running for a reasonable amount of time, the following is set on the Run Settings modal which comes up after clicking on the green Run button at the top right in Cosmic Frog:

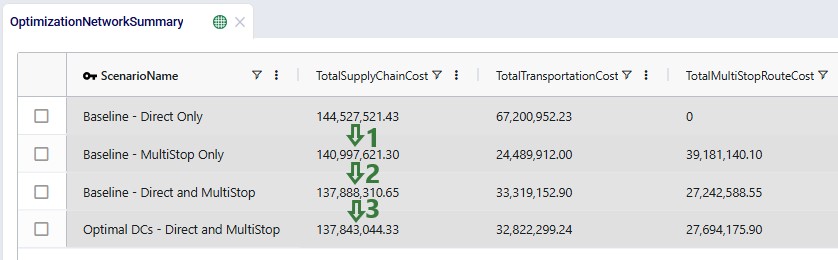

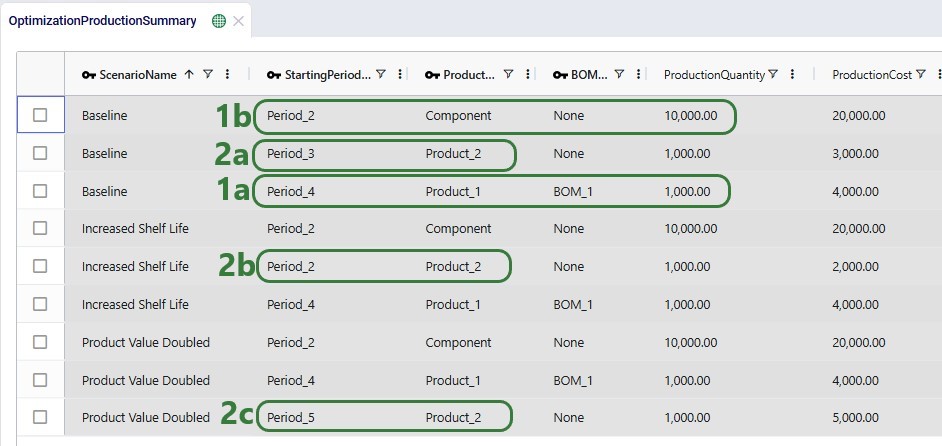

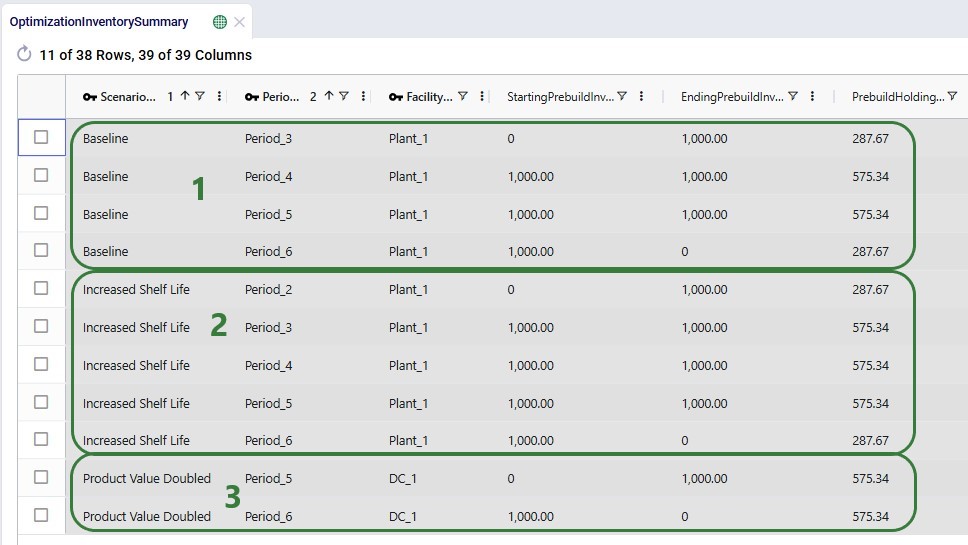

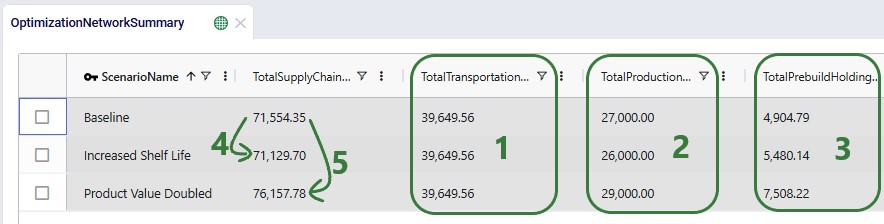

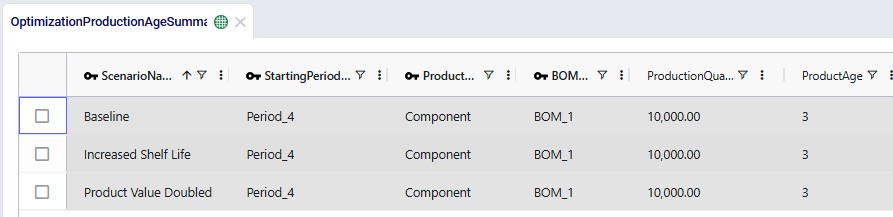

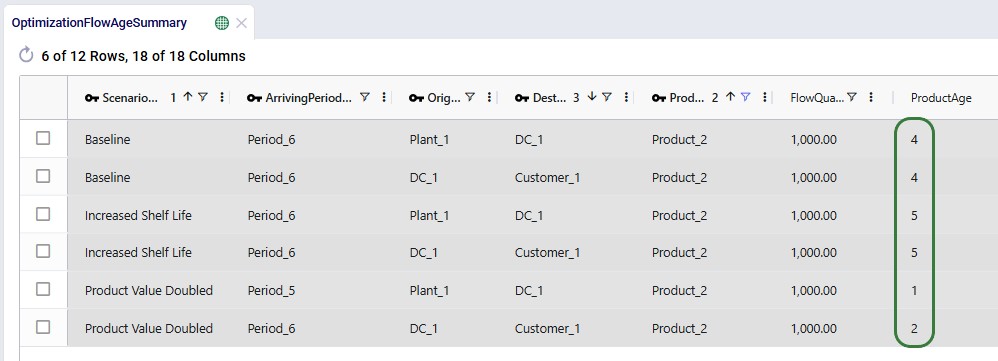

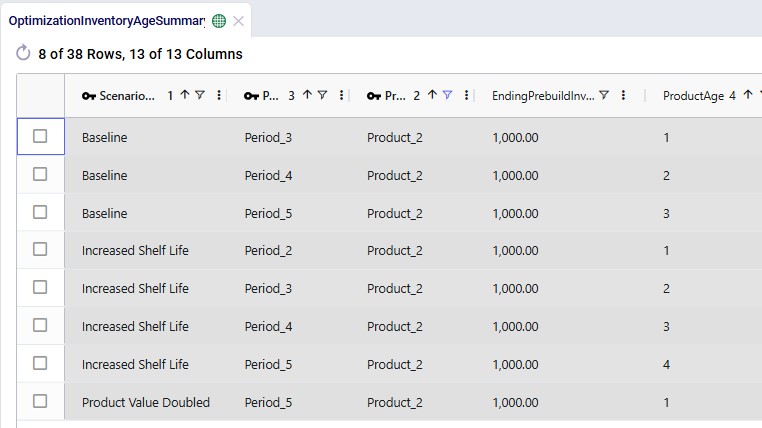

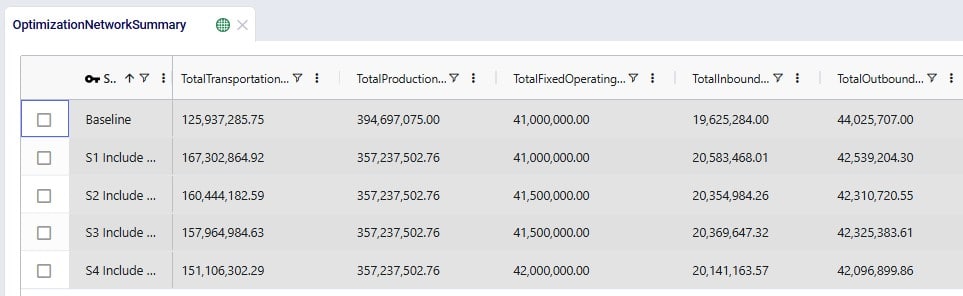

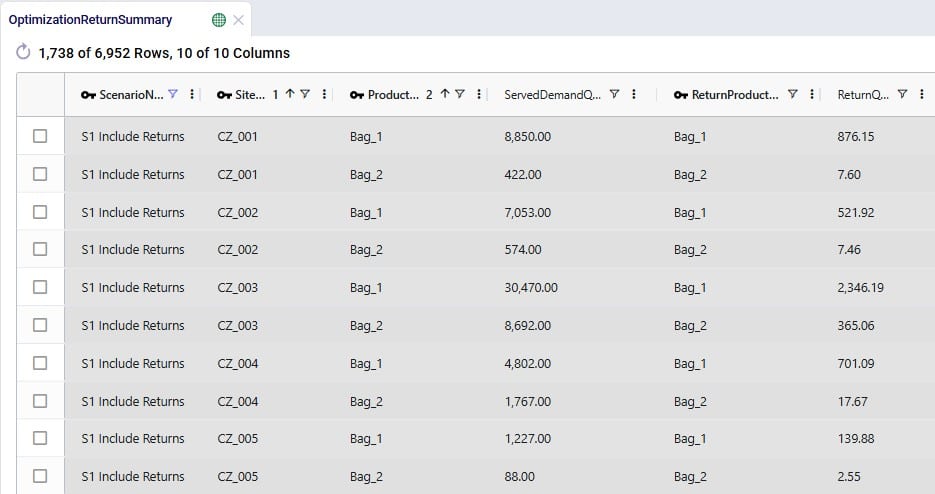

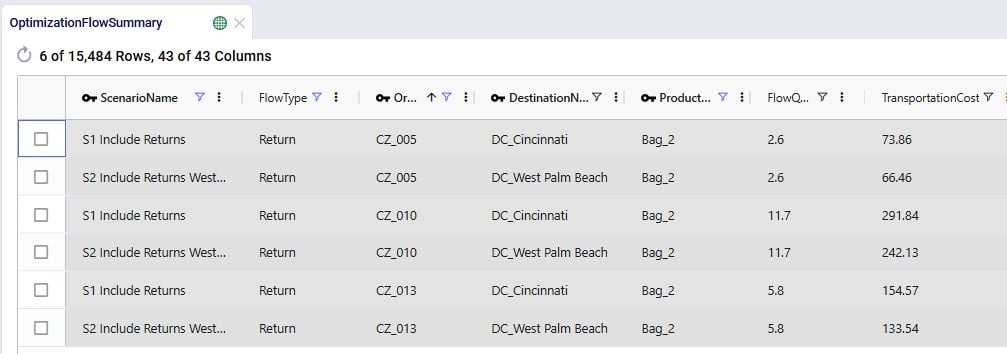

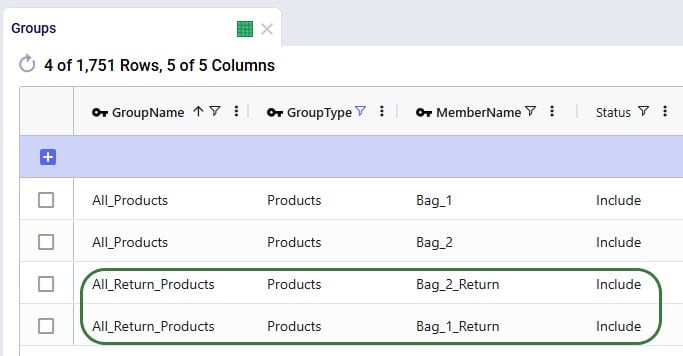

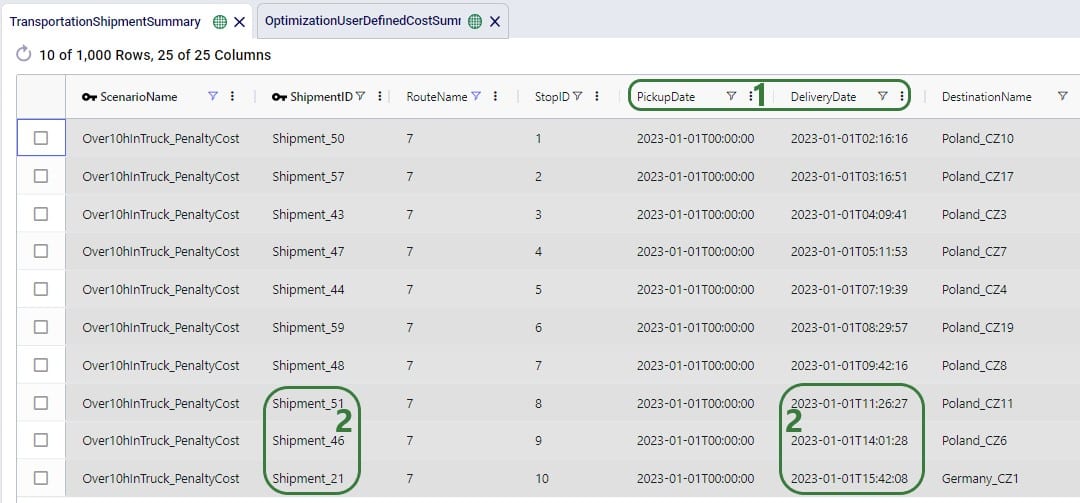

We will first look at the Optimization Network Summary and Optimization Flow Summary output tables, and then at the maps of the last 2 scenarios, starting with the Optimization Network Summary output table. This screenshot does not show the Production and Fixed Operating Costs, as they are the same across all scenarios; we will focus on the transportation-related costs:

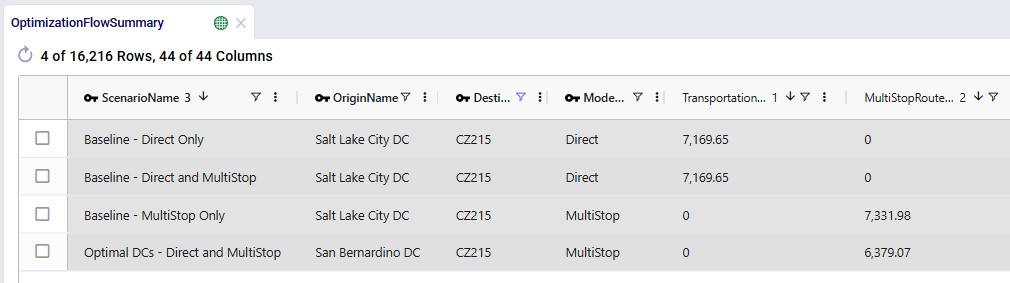

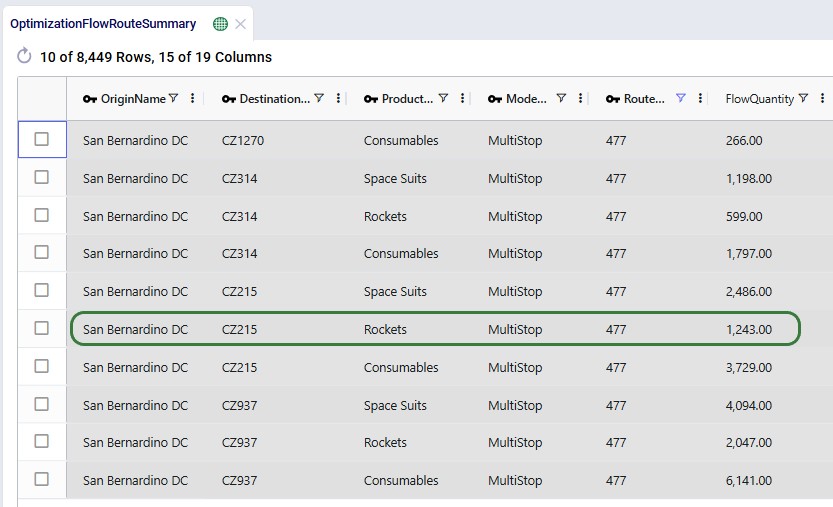

Next, the Optimization Flow Summary output table is filtered for 1 specific customer, CZ215, for which the DC assignment is changed in the final scenario. The table is also filtered for the Rockets product, for which the delivered quantity is the same for these 4 records (1,243 units):

Based on the direct delivery option alone, Salt Lake City is the optimal DC to source from for this customer, with a transportation cost of ~7.2k. The "Baseline - MultiStop Only" scenario shows that using a multi-stop route from Salt Lake City to this customer is slightly more expensive (7.3k), which is why the Direct mode is used in the "Baseline - Direct and MultiStop" scenario. In the "Optimal DCs - Direct and MultiStop" scenario, the customer switches DCs and is served by a multi-stop route from the San Bernardino DC. This results in a transportation cost of 6.4k, a reduction of almost $800 compared to direct delivery from the Salt Lake City DC.

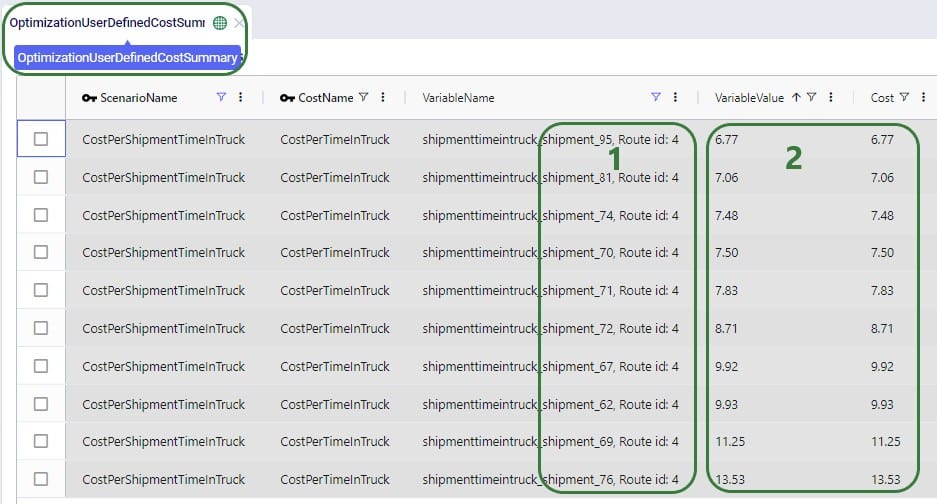

With the newly added Optimization Flow Route Summary output table, we can see which San Bernardino route CZ215 is placed on in this "Optimal" scenario:

We see that CZ215 is a stop on route 477 from San Bernardino. The screenshot also shows the other stops on this route. Now we will retrace how the multi-stop route cost of $6.4k for this flow (see previous screenshot) is calculated:

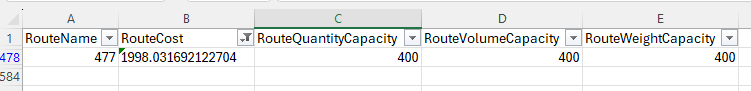

When the possible routes are created during the model run, each customer is placed on up to 3 potential routes from its 2 closest DCs. These possible routes, generated during preprocessing, are listed in the HopperRouteSummary.csv input file, which is generated when the "Write Input Solver" model run option (Troubleshooting section) is turned on. We can look up route 477 in this file to see its cost:

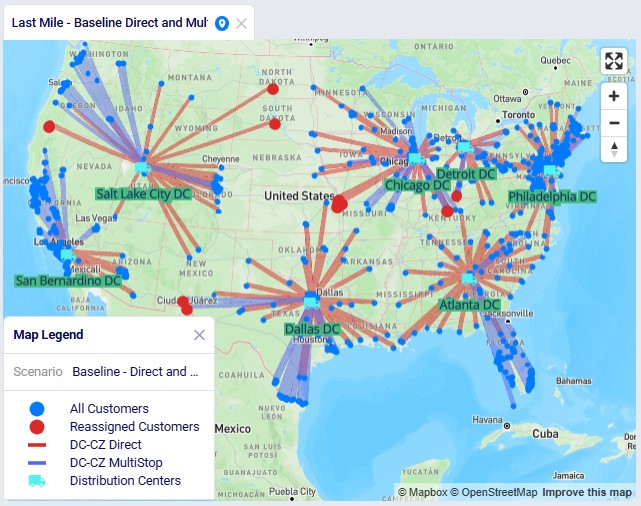
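For larger models it can be quicker to search HopperRouteSummary.csv with a short script than to scroll through it. The sketch below uses Python's standard `csv` module; the default column name `RouteID` is a placeholder assumption, so check the header row of your own file and pass the actual column name.

```python
import csv
from typing import Dict, Iterable, Optional


def lookup_route(lines: Iterable[str], route_id: str,
                 id_column: str = "RouteID") -> Optional[Dict[str, str]]:
    """Return the first CSV row whose `id_column` equals `route_id`, or None.

    `lines` can be an open file or any iterable of CSV text lines.
    The default column name is hypothetical.
    """
    for row in csv.DictReader(lines):
        if (row.get(id_column) or "").strip() == str(route_id):
            return row
    return None


# Usage (file name from the text; column name assumed):
# with open("HopperRouteSummary.csv", newline="") as f:
#     print(lookup_route(f, "477"))
```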

The following 2 maps show the DC-CZ flows for the "Baseline - Direct and MultiStop" and "Optimal DCs - Direct and MultiStop" scenarios. Multi-stop routes are shown with blue lines and direct deliveries with red lines. There are 15 customers that swap DCs and these are shown with larger red dots:

From west to east on the map, the CZs that swap DCs in the "Optimal DCs - Direct and MultiStop" scenario are:

The last 3 swaps cause some flow lines to cross each other on the map. This is because the algorithm considers a finite number of possible routes.

Note that maps for the first 2 scenarios are also set up in this demo model.

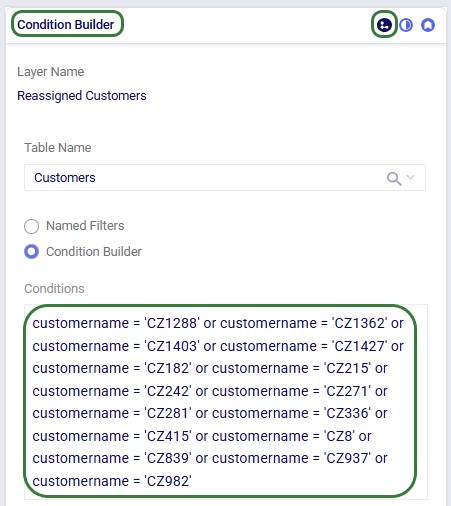

Re-running these scenarios in the future with a newer solver and/or after making (small) changes to the model can change the outputs, and with them the set of reassigned customers shown as larger red dots on the map. To visualize these on the map in the same way as above, the filter in the Condition Builder panel of the "Reassigned Customers" map layer needs to be updated manually. Currently the filter is as follows:

To determine which customers are reassigned and need to be included in this filter, users can take the following steps (which can be automated, for example using DataStar):
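As one possible way to automate the comparison, the following sketch assumes the DC-to-customer flows of both scenarios have been exported (for example from the Optimization Flow Summary output table) as hypothetical `(scenario, dc, customer)` tuples. It returns the customers whose serving DC differs between the two scenarios. The tuple layout, the DC labels, and the function names are assumptions, and the resulting list still has to be entered into the Condition Builder filter by hand.

```python
from typing import Dict, Iterable, List, Tuple

# (scenario, dc, customer) per DC-to-customer flow; hypothetical layout.
FlowRow = Tuple[str, str, str]


def dc_assignments(rows: Iterable[FlowRow], scenario: str) -> Dict[str, str]:
    """Customer -> serving DC in one scenario (assumes single sourcing)."""
    return {customer: dc for scen, dc, customer in rows if scen == scenario}


def reassigned_customers(rows: Iterable[FlowRow], base: str, other: str) -> List[str]:
    """Customers served by a different DC in `other` than in `base`."""
    row_list = list(rows)  # materialize so the rows can be scanned twice
    before = dc_assignments(row_list, base)
    after = dc_assignments(row_list, other)
    return sorted(c for c in before.keys() & after.keys() if before[c] != after[c])


rows = [("Baseline - Direct and MultiStop", "Salt Lake City DC", "CZ215"),
        ("Optimal DCs - Direct and MultiStop", "San Bernardino DC", "CZ215")]
print(reassigned_customers(rows, "Baseline - Direct and MultiStop",
                           "Optimal DCs - Direct and MultiStop"))  # ['CZ215']
```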

With this new functionality, a transportation optimization (Hopper) run is started immediately after a network optimization (Neo) run has completed, and the user sees both as a single run. Under the hood, the assignments on the last leg of the network (e.g. customer-to-DC assignments) that the Neo run determined to be optimal are fixed for the Hopper run, which then generates multi-stop routes for this last leg. All existing Hopper functionality is taken into account while determining the optimal multi-stop routes, and complete sets of outputs for both the network optimization and the transportation optimization are generated at the end of a Hopper after Neo run. This saves users time, as they no longer need to retrieve and manipulate Neo outputs in the model to use them as input shipments for a subsequent Hopper run.
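To make the hand-off concrete, the sketch below shows the kind of data step users previously performed by hand: keeping only Neo's positive flows into customers (the last leg, whose DC assignments are now fixed) and turning them into shipments for a routing run. The `Flow` and `Shipment` records and the function name are hypothetical; this illustrates the idea and is not how Cosmic Frog implements Hopper after Neo internally.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Flow:
    origin: str
    destination: str
    product: str
    quantity: float


@dataclass(frozen=True)
class Shipment:
    origin: str       # DC chosen by the Neo solution (fixed for routing)
    destination: str  # customer
    product: str
    quantity: float


def last_leg_shipments(flows: Iterable[Flow], customers: Set[str]) -> List[Shipment]:
    """Turn Neo's positive DC-to-customer flows into shipments for routing."""
    return [Shipment(f.origin, f.destination, f.product, f.quantity)
            for f in flows
            if f.destination in customers and f.quantity > 0]
```

Flows into ports or DCs are skipped because only the last leg is routed, and zero flows are dropped because they would produce empty shipments.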

Besides needing the usual input for the network optimization (Neo) engine to be able to run, the Hopper after Neo algorithm needs some additional inputs in order to generate optimal multi-stop routes after the Neo run has completed. These include:

In addition, all populated fields on any other Hopper tables will be used during the Hopper part of the solve.

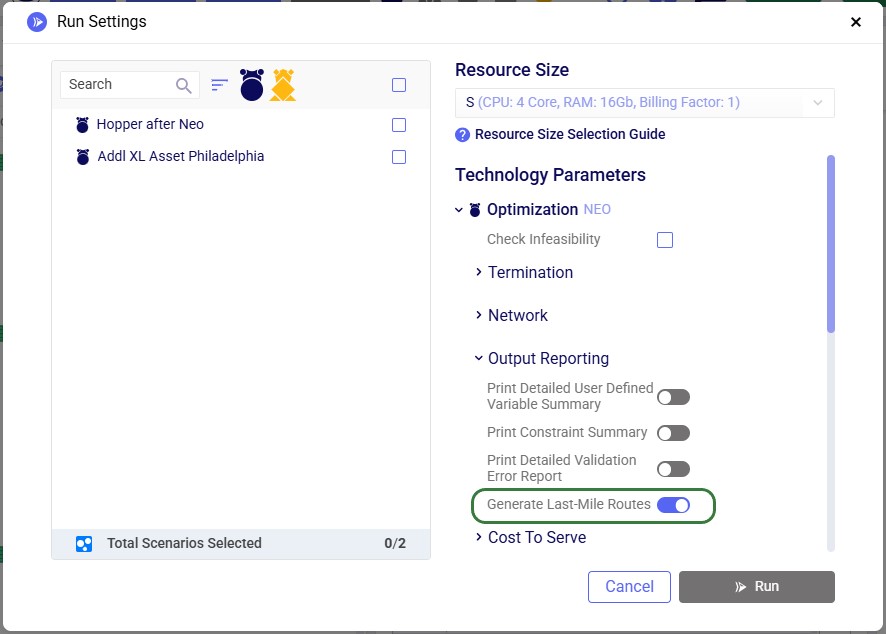

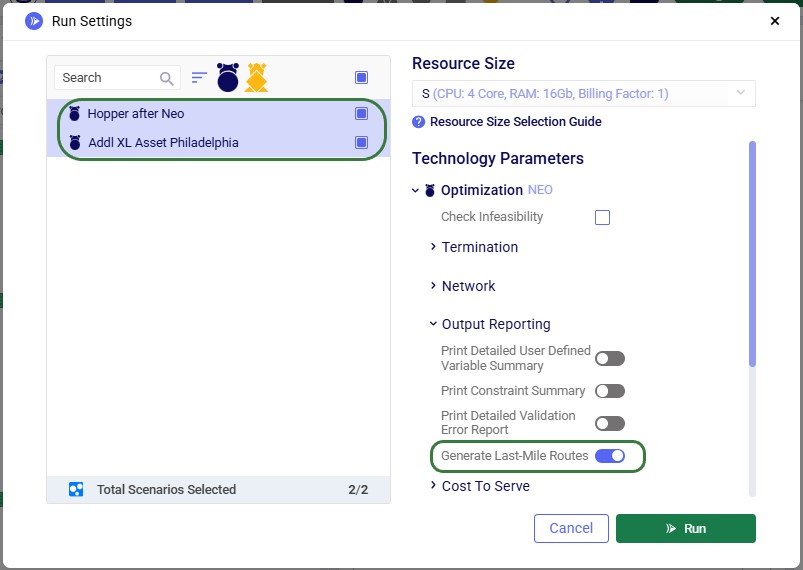

To turn on the Hopper after Neo functionality, enable the "Generate Last-Mile Routes" run setting in the Output Reporting section of the Optimization (NEO) Technology Parameters. These parameters are located on the right-hand side of the Run Settings modal, which comes up after clicking on the green Run button at the top right in Cosmic Frog:

Please see the "Model used to showcase Hopper within/after Neo Features" section further above for the details of the starting point for the demo model for Hopper after Neo, which is the same one that was also the starting point for the Hopper within Neo demo model.

We will show how Hopper routes for the last leg of the network, from DCs to customers, can be created immediately after a Neo solve. Through 2 scenarios, we will explore which asset mix will be optimal for the generated multi-stop routes:

After copying the Global Supply Chain Strategy model from the Resource Library, the following changes/additions were made to be able to use Hopper for multi-stop route generation for the DC-CZ leg after the Neo solve. After listing these changes here, we will explain each in more detail through screenshots further below. Users can copy this modified model with the scenarios and outputs from the Resource Library.

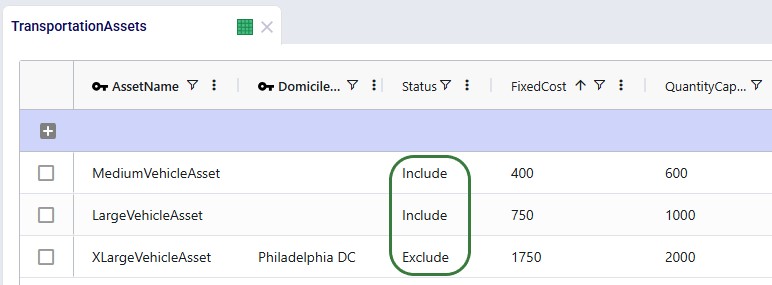

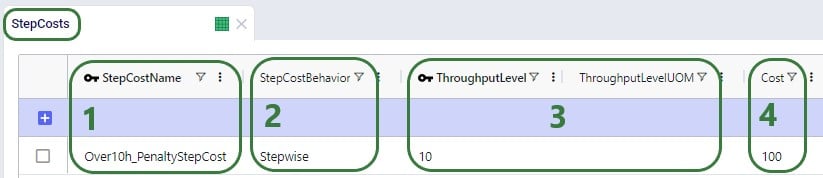

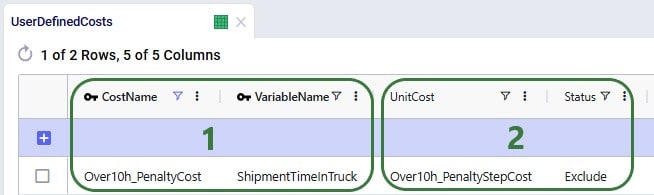

Instead of using the 1 large default vehicle that is used when the Transportation Assets table is left blank, we initially use 2 user-defined assets: a large and a medium one. An extra large asset is added after running the first scenario with the 2 assets, to resolve the unrouted shipments seen in that scenario. The assets and their settings are shown in the following 2 screenshots:

We see that both the Medium and Large vehicles are available at all locations (Domicile Location is left blank) and their Status is set to "Include", whereas the Extra Large vehicle will only be available at the Philadelphia DC in the second scenario, and its initial Status is set to "Exclude". Fixed costs, quantity capacities, max number of stops per route, and fixed pickup times (in minutes) all increase with asset size. The fixed delivery time is the same for all: 5 minutes per stop.

For each asset, a transportation rate is specified in the Transportation Rates input table and referenced in the Transportation Rate Name field of the Transportation Assets table (see screenshot above). The Distance and Time Costs are specified as follows: the distance-based rate increases with vehicle size to account for higher fuel usage, and the Time Cost also increases slightly with vehicle size to reflect the greater driver experience needed for larger vehicles:

Two scenarios will be run in this model:

When running the scenarios, the only additional input required to indicate that the Hopper engine should be run immediately after the Neo run, while taking its customer assignment decisions into account, is to turn on the "Generate Last-Mile Routes" option in the Output Reporting section of the Optimization Technology Parameters:

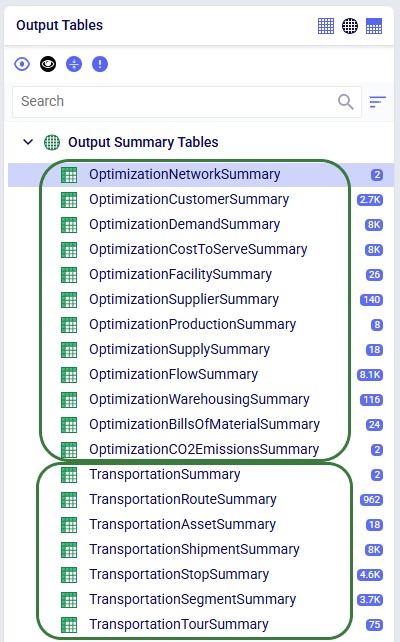

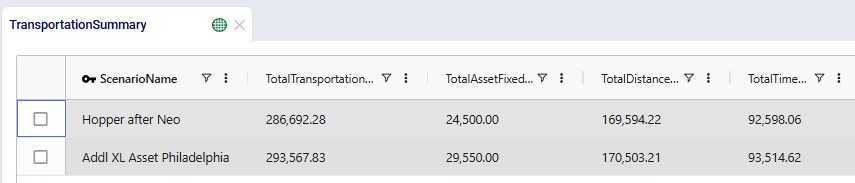

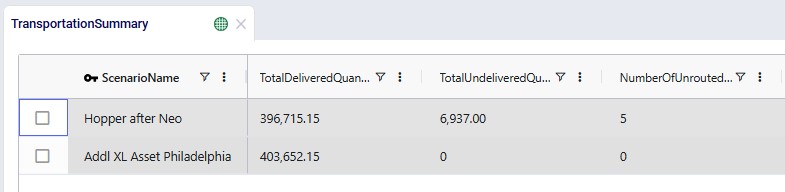

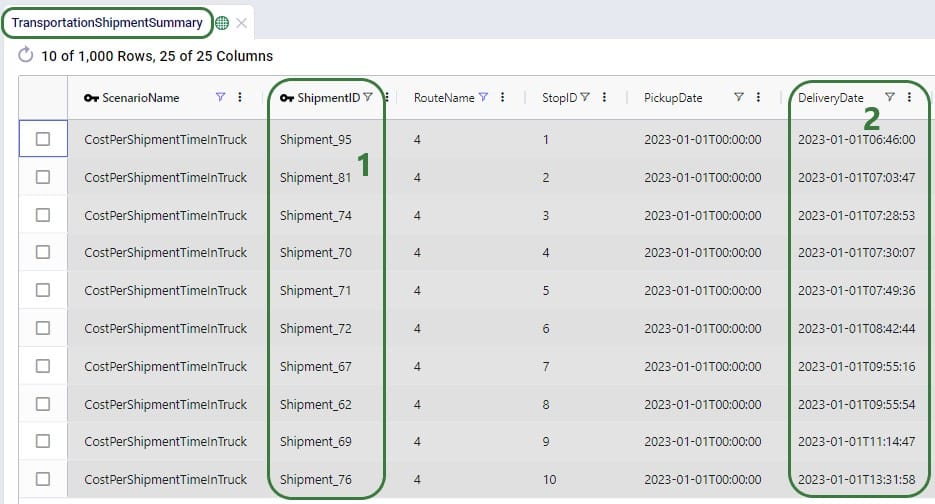

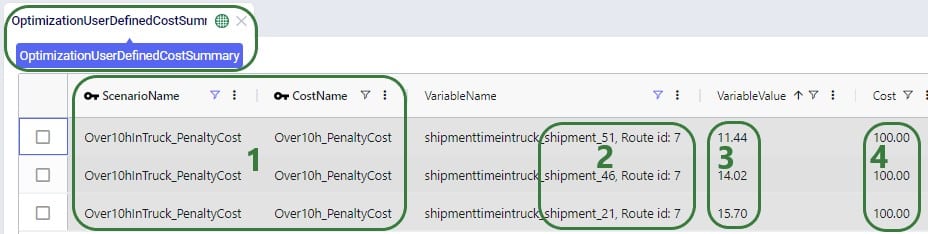

After running both scenarios, we see that full sets of outputs have been generated in the network optimization output tables and in the transportation optimization output tables:

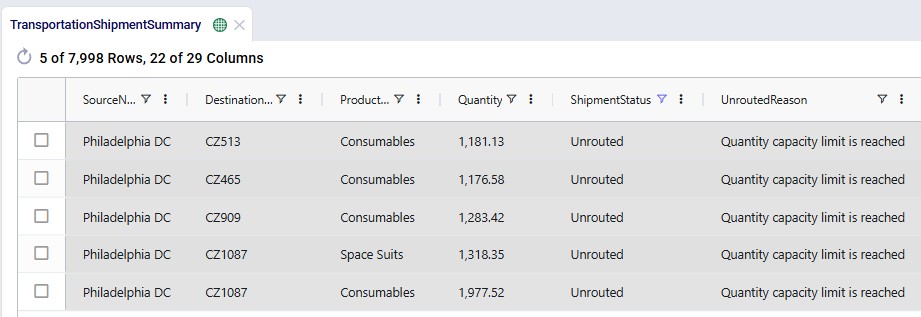

When looking through all outputs, we notice unrouted shipments in the Transportation Shipment Summary output table. Filtering for these, we find that they all ship from the Philadelphia DC, and the Unrouted Reason for all of them is "Quantity capacity limit is reached" (see the next screenshot). The large vehicle asset has a capacity of 1000 units, which is not big enough to fit these shipments. Therefore, the XL asset is added at the Philadelphia DC in the second scenario.
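The reasoning behind adding the XL asset can be sketched as a simple availability check: a shipment is flagged if it is larger than the biggest included asset available at its origin, where a blank Domicile Location means the asset is available everywhere. This assumes a shipment is not split across vehicles, which is consistent with the unrouted reason reported above. The `Asset` class and the function names are hypothetical, as are the Medium and XL capacities (500 and 2000 units); only the Large capacity of 1000 units comes from the text.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Asset:
    name: str
    capacity_units: float
    domicile: Optional[str]  # None = Domicile Location left blank (available everywhere)
    included: bool = True    # Status = Include


def largest_available_capacity(assets: List[Asset], location: str) -> float:
    """Largest quantity capacity among included assets usable at `location`."""
    caps = [a.capacity_units for a in assets
            if a.included and (a.domicile is None or a.domicile == location)]
    return max(caps, default=0.0)


def unroutable(shipment_qty: float, origin: str, assets: List[Asset]) -> bool:
    """True if no included asset at `origin` can carry the whole shipment."""
    return shipment_qty > largest_available_capacity(assets, origin)


# First scenario: XL excluded, so a hypothetical 1,200-unit shipment cannot be routed.
assets = [Asset("Medium", 500, None), Asset("Large", 1000, None),
          Asset("XL", 2000, "Philadelphia DC", included=False)]
print(unroutable(1200, "Philadelphia DC", assets))  # True
```

In the second scenario, setting the XL asset's `included` flag to `True` makes the same shipment routable from the Philadelphia DC only, since the XL vehicle is domiciled there.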

After running the second scenario, there are no more unrouted shipments. The Transportation Summary output table, which summarizes the Hopper part of the run at the scenario level, shows that also delivering these 5 large shipments adds about $6.9k per week to the total transportation cost:

The following screenshot shows fields further to the right in the Transportation Summary output table, which confirm there are no more unrouted shipments in the second scenario, and therefore the total undelivered quantity is also 0 in this scenario:

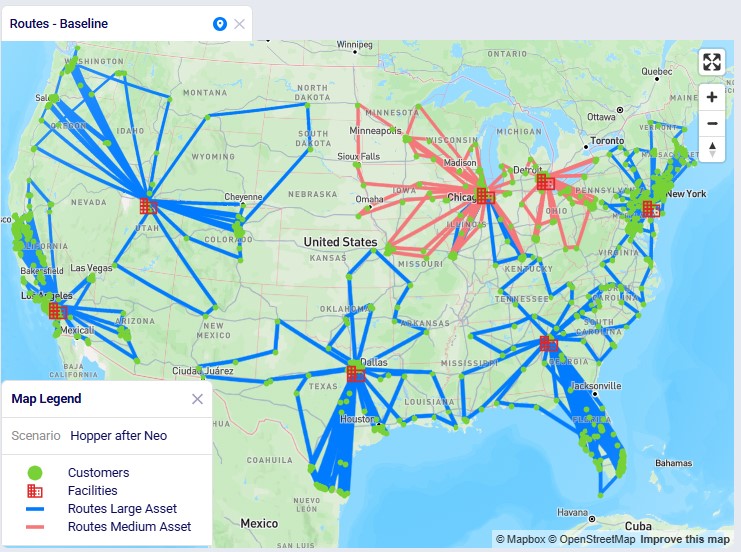

Looking on a map, we can visualize the routes. In this example, they are colored based on which vehicle is used on the route. This is the map for the "Hopper after Neo" scenario. The map is called "Routes - Baseline" and is pre-configured when copying this model from the Resource Library:

We see that the medium asset is used for most routes from the Chicago DC and all routes from the Detroit DC; the large asset is used on all other routes.

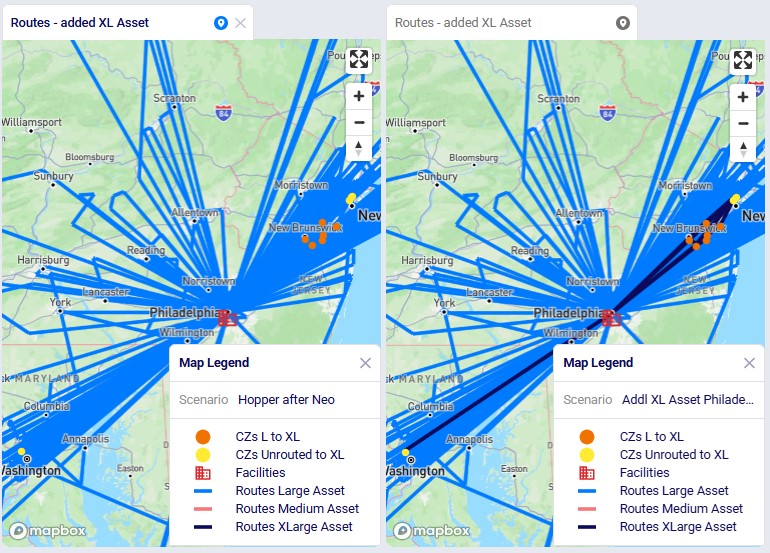

Finally, in the next screenshot we compare the 2 scenarios side-by-side, zoomed in on the Philadelphia DC and its routes. The darker routes are those that use the XL asset. Only the 10 customers which are served by the XL asset in the second scenario are shown on the map. Six of them were served by the large asset in the "Hopper after Neo" scenario; these are shown in orange on the map. The other 4 which are colored yellow had unrouted shipments for 1 or more products in the "Hopper after Neo" scenario; three of these are clustered together northeast of Philadelphia, while one is by itself southwest of Philadelphia:

Please note that the "CZs L to XL" and "CZs Unrouted to XL" map layers contain filters in the Condition Builder pane that were added manually after analyzing and comparing the outputs of the 2 scenarios to determine which customers to include in these layers. If the scenarios are re-run in the future with a newer solver and/or after making (small) changes to the model, the outputs may change, including which customers switch from a large to an extra-large vehicle and which go from being unrouted to using the extra-large vehicle. In that case, the current Condition Builder filters will need to be updated to visualize those changes on the map. The notes at the end of the "Hopper within Neo-Outputs" section apply here in a similar way.