We take data protection seriously. Below is an overview of how backups work within our platform, including what’s included, how often backups occur, and how long they’re kept.

Every backup—whether created automatically or manually—contains a complete snapshot of your database at the time of the backup. This includes everything needed to fully restore your data.

We support two types of backups at the database level:

Often called “snapshots,” “checkpoints,” or “versions” by users:

We use a rolling retention policy that balances data protection with storage efficiency. Here’s how it works:

| Retention Tier | Time Period | What’s Retained |
| --- | --- | --- |
| Short-Term | Days 1–4 | Always keep the 4 most recent backups |
| Weekly | Days 5–7 | Keep 1 additional backup |
| Bi-Weekly | Days 8–14 | Keep the newest and oldest backups |
| Monthly | Days 15–30 | Keep the newest and oldest backups |
| Long-Term | Day 31+ | Keep the newest and oldest backups |

This approach ensures both recent and historical backups are available, while preventing excessive storage use.
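As an illustration only, the retention tiers can be sketched in Python. This is not the platform’s implementation: the function name `select_retained`, the use of backup age in whole days, and the reading that the 4 most recent backups are kept regardless of age while older backups are bucketed by tier (with the weekly tier keeping its newest backup) are all assumptions made for this sketch.

```python
from typing import List

# Age ranges in days for the tiers that keep "the newest and oldest" backups.
# None means no upper bound (Day 31+). Taken from the retention tiers above.
NEWEST_AND_OLDEST_TIERS = [(8, 14), (15, 30), (31, None)]


def select_retained(ages_in_days: List[int]) -> List[int]:
    """Return the ages of the backups to keep, newest (smallest age) first."""
    ordered = sorted(ages_in_days)
    keep = ordered[:4]      # Short-Term: the 4 most recent backups
    older = ordered[4:]     # everything else is bucketed by age

    # Weekly: 1 additional backup from days 5-7 (assumption: the newest one).
    weekly = [age for age in older if 5 <= age <= 7]
    keep.extend(weekly[:1])

    for low, high in NEWEST_AND_OLDEST_TIERS:
        tier = [a for a in older if a >= low and (high is None or a <= high)]
        if tier:
            keep.append(tier[0])         # newest backup in the tier
            if len(tier) > 1:
                keep.append(tier[-1])    # oldest backup in the tier
    return keep
```

With one backup per day over 40 days, `select_retained(list(range(1, 41)))` keeps the backups aged 1, 2, 3, 4, 5, 8, 14, 15, 30, 31, and 40 days under these assumptions.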

In addition to per-database backups, we also perform server-level backups:

These backups are designed for full-server recovery in extreme scenarios, while database-level backups offer more precise restore options.

To help you get the most from your backup options, we recommend the following:

If you have additional questions about backups or retention policies, please contact our support team at support@optilogic.com.

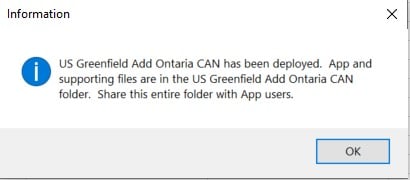

Teams is an exciting new feature set on the Optilogic platform designed to enhance collaboration within Supply Chain Design, enabling companies to foster a more connected and efficient working environment. With Teams, users can join a shared workspace where all team members have seamless access to collective models and files. For a more elaborate introduction to and high-level overview of the Teams feature set, please see this “Getting Started with Teams” help center article.

This guide will cover how to use and take advantage of the Teams functionality on the Optilogic Platform.

For organization administrators (Org Admins), there is an “Optilogic Teams – Administrator Guide” help center article available. The Admin guide details how Org Admins can create new Teams & change existing ones, and how they can add new Members and update existing ones.

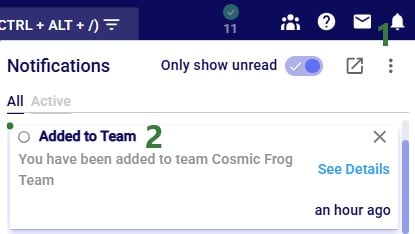

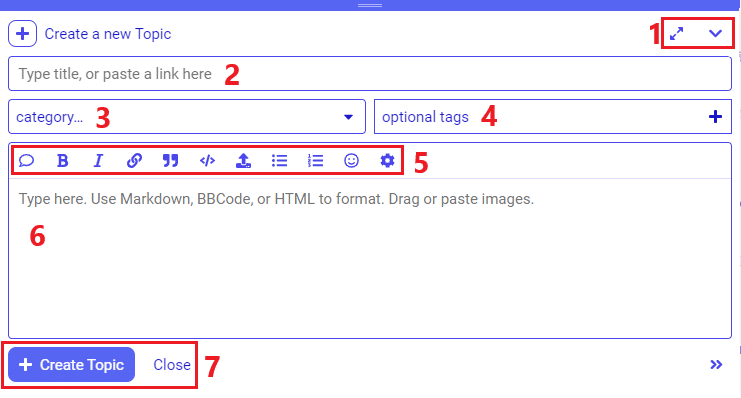

When your organization decides to start using the Teams functionality on the Optilogic platform, it will appoint one or more users as the organization’s administrators (Org Admins), who will create the teams and add members to them. Once an Org Admin has added you to a team, you will see a new application called Team Hub when logged in to the Optilogic platform. You will also receive a notification on the Optilogic platform about having been added to a team:

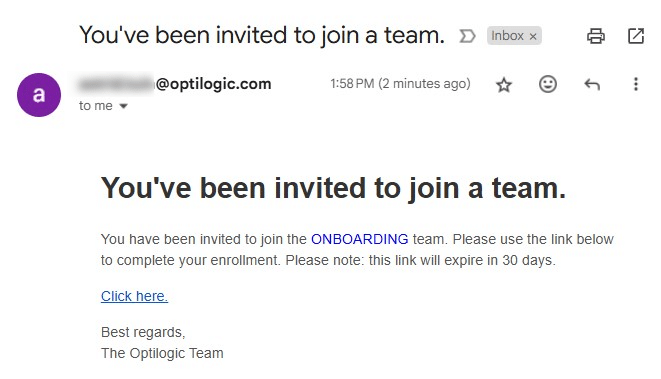

Note that it is possible to invite people from outside an organization to join one of your organization’s teams, for example to grant access to a contractor who is temporarily working on a specific project that involves modelling in Cosmic Frog. An Org Admin can invite this person to a specific team; see the “Optilogic Teams – Administrator Guide” help center article on how to do this. If someone who is not part of the organization is invited to join a team, they will receive an email invitation to the team. The following screenshots show this from the perspective of the user who is being invited to join a team of an organization they are not part of.

The user will receive an email similar to the one shown below. In this case the user is invited to the “Onboarding” team.

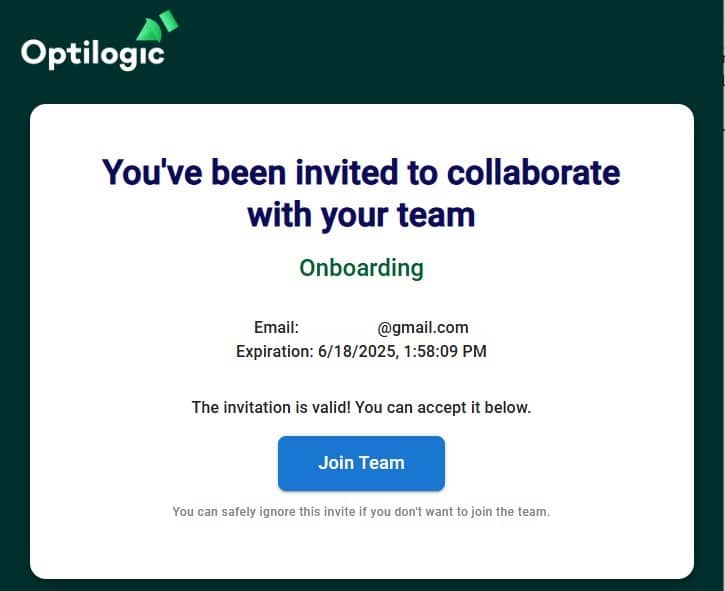

Clicking on the “Click here” link will open a new browser tab where the user can confirm that they want to join the team they are invited to by clicking on the Join Team button:

After clicking on the Join Team button, the user will be prompted to log in to the Optilogic platform or to create an account if they do not have one already. Once logged in, they are part of the team they were invited to and they will see the Team Hub application (see next section).

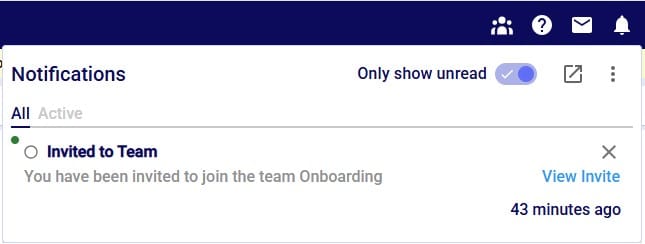

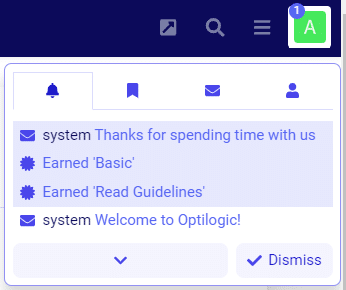

They will also see a notification in their Optilogic account:

Clicking on the notifications bell icon at the top right of the Optilogic platform will open the notifications list. There will be an entry for the invite the user received to join the Onboarding team.

Should an Org Admin have deleted the invitation before the user accepts the invite, they will get the message “Failed to activate the invite” when clicking on the Join Team button:

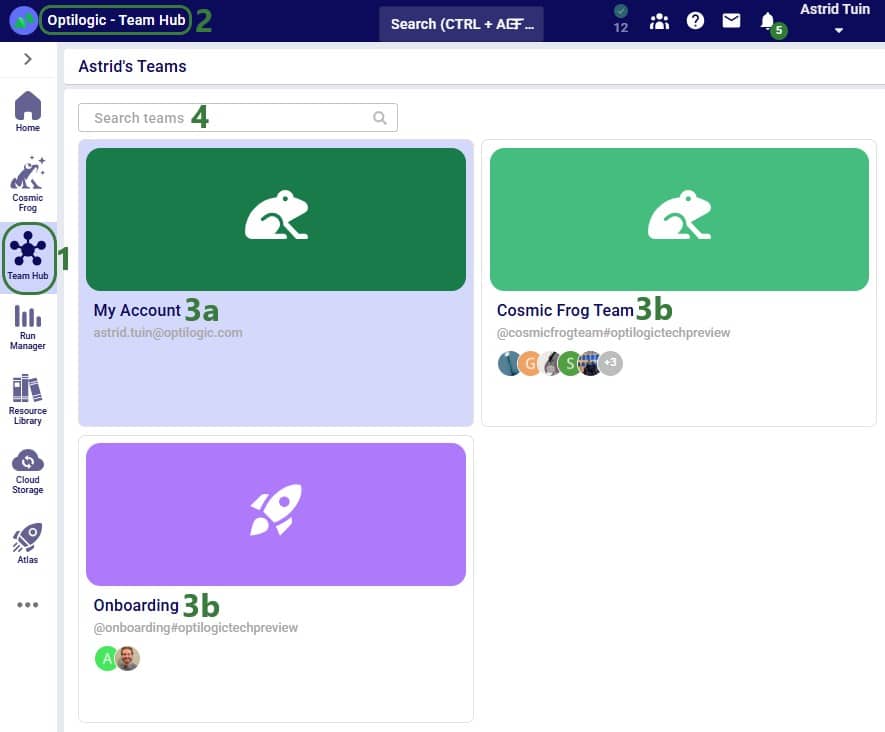

The Team Hub is a centralized workspace where users can view and switch between the teams they belong to. At its core, Team Hub provides team members with a streamlined view of their team’s activity, resources, and members. When first opening the Team Hub application, it may look similar to the following screenshot:

Next, we will have a look at the team card of the Cosmic Frog Team:

Note that changing the appearance of a team changes it not just for you, but for all members of the team.

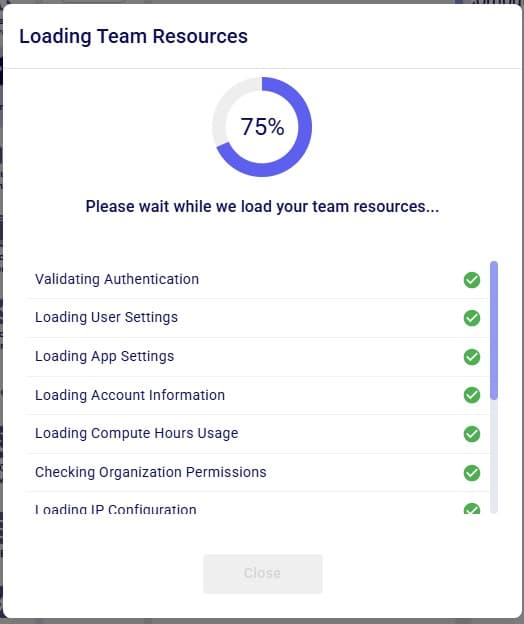

When the user clicks on a team or on My Account in the Team Hub, they switch into that team (or their personal account) and all content shown will be that of the team. See also the next section “Content Switching with Team Hub” where this is explained in more detail. When switching between teams or My Account, the resources of the team you are switching to will be loaded first:

Once all resources are loaded, the user can click on the Close button at the bottom or wait until the window closes automatically after a few seconds. We will first have a look at what the Team Hub looks like for My Account, the user’s personal account, and after that also cover the Team Hub contents of a team.

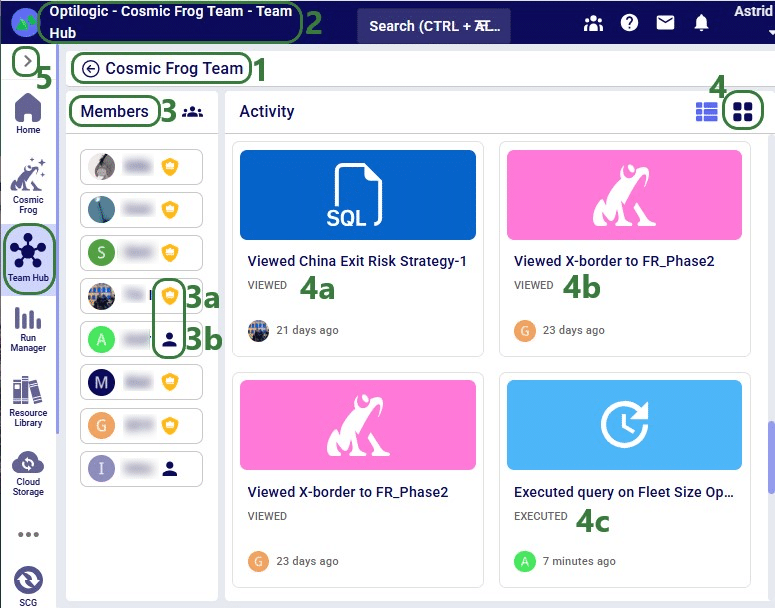

The overview of a team in the Team Hub application can look similar to the following screenshot:

Note that as a best practice, users can start using the team’s activity feed instead of written / verbal updates from team members to understand the details of who worked on what when.

One of the most important features of the Team Hub application is its role as a content switcher. By default, when you log into the Optilogic platform, you’ll see only your personal content (My Account)—similar to a private workspace or OneDrive.

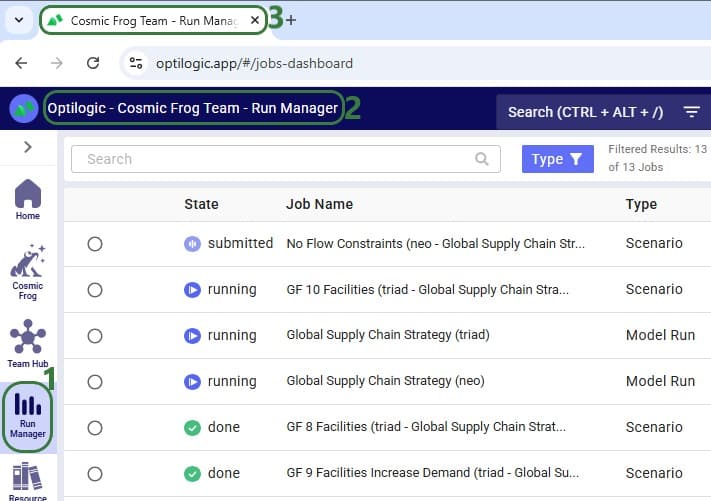

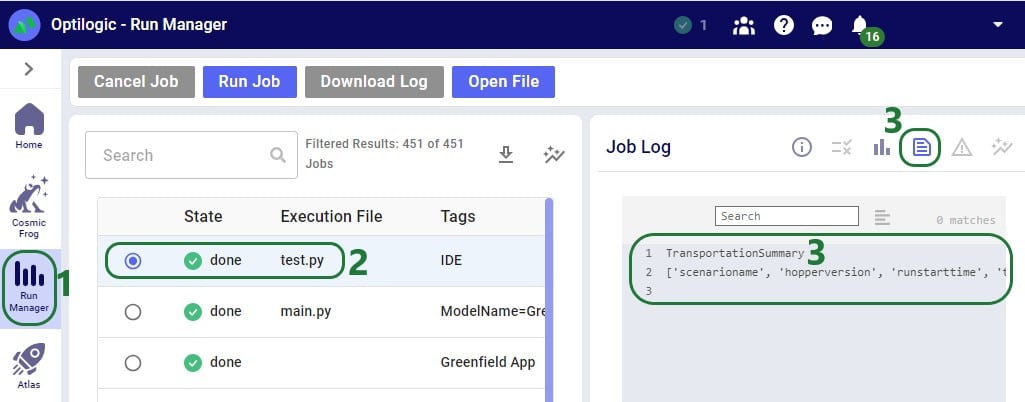

However, once you enter Team Hub and select a specific team, the Explorer automatically updates to display all files and databases associated with that team. This team context extends across the entire Optilogic platform. For example, if you navigate to the Run Manager, you’ll only see job runs associated with the selected team.

By switching into a team, all applications and data within the platform are scoped to that team. We will illustrate this with the following screenshots where the user has switched to the team named “Cosmic Frog Team”.

Besides the “Cosmic Frog Team” team, this user is also part of the Onboarding team, which they have now switched to using the Team Hub application. Next, they open the Resource Library application:

Note that it is best practice to return to your personal space in My Account when finished working in a Team, to ensure workspace content is kept separate and files are not accidentally created in/added to the wrong team.

Once an organization and its teams are set up, the next step is to start populating your teams with content. Besides adding content by copying from the Resource Library as seen in the last screenshot above, there are two primary ways to add models or files to a team.

Navigate to the Team Hub and switch into your team space. From here, you can create new files, upload existing ones, or begin building new models directly within the team. Keep in mind that any files or models created within a team are visible to all team members and can be modified by them. If you have content that you would prefer not to be accessed or edited by others, we recommend either labeling it clearly or creating it within your personal My Account workspace.

When the user is in a specific team (Cosmic Frog Team here), they can add content through the Explorer (expand it by clicking on the greater than icon at the top left of the Optilogic platform). Right-clicking in the Explorer brings up a context menu with options to create new files, folders, and Cosmic Frog models, and to upload files. Anything created or uploaded with these options is added to the active team.

You can also quickly add content to your team by using Enhanced Sharing. This feature allows you to easily select entire teams or individual team members to share content with. When you open the share modal and click into the form, you’ll see intelligent suggestions—teams you belong to and members from your organization—appear automatically. Simply click on the teams or users listed to autofill the form. To learn more about the different ways of sharing content and content ownership, please see the “Model Sharing & Backups for Multi-User Collaboration” help center article.

Please note that, regardless of how a team’s content has been created/added:

Once you have been added to any teams and have added content, you are ready to start collaborating and unlocking the full potential of Teams within Optilogic!

Let us know if you need help along the way—our support team (support@optilogic.com) has your back.

Optilogic introduces the Lumina Tariff Optimizer – a powerful optimization engine that empowers companies to reoptimize supply chains in real-time to reduce the effects of tariffs. It provides instant clarity on today’s evolving tariff landscape, uncovers supply chain impacts, and recommends actions to stay ahead – now and into the future.

Manufacturers, distributors, and retailers around the world are faced with an enormous task trying to keep up with changing tariff policies and their supply chain impact. With Optilogic’s Lumina Tariff Optimizer, companies can illuminate their path forward by proactively designing tariff mitigation strategies that automatically consider the latest tariff rates.

With Lumina Tariff Optimizer, Optilogic users can stay ahead of tariff policy and answer critical questions to take swift action:

The following 7-minute video gives a great overview of the Lumina Tariff Optimizer tools:

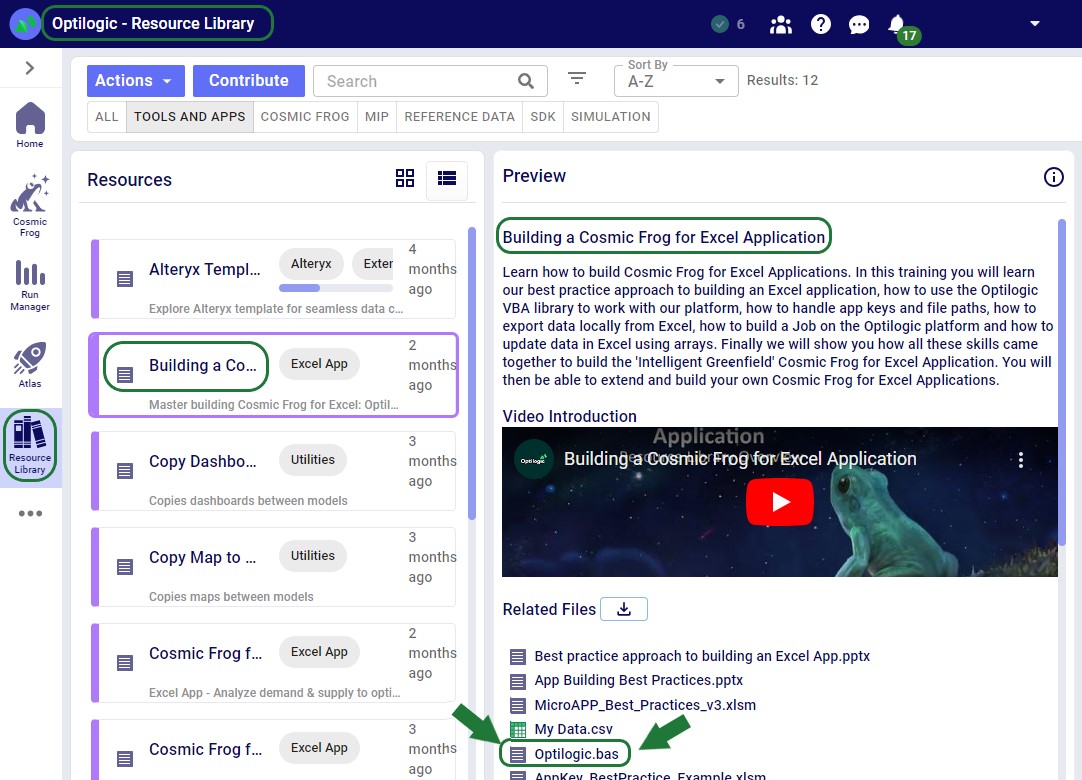

Optilogic’s Lumina Tariff Optimization engine can be used by modelers within Cosmic Frog, or by other stakeholders across the business through a Cosmic Frog for Excel app, to evaluate the impact of tariffs on their end-to-end supply chain. Optilogic enables users to get started quickly with Lumina through several items in the Resource Library, which include:

This documentation will cover each of these Lumina Tariff Optimizer tools, in the same order as listed above.

The first tool in the Lumina Tariff Optimizer toolset is the Tariffs example model which users can copy to their own account from the Resource Library. We will walk through this model, covering inputs and outputs, with emphasis on how to specify tariffs and their impact on the optimal solution when running network optimization (using the Neo engine) on the scenarios in the model.

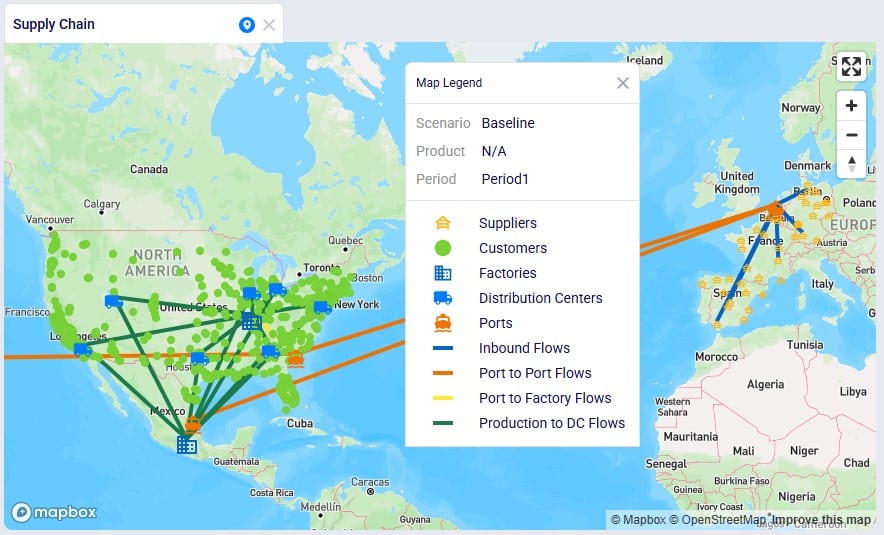

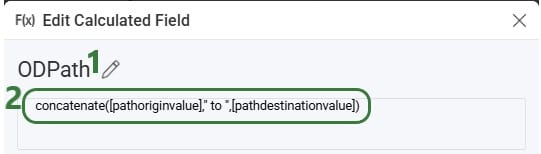

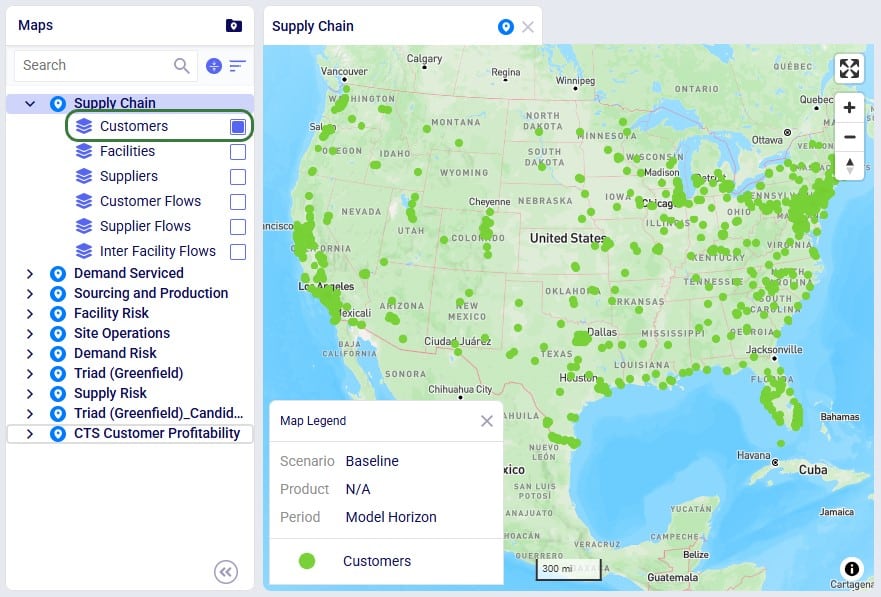

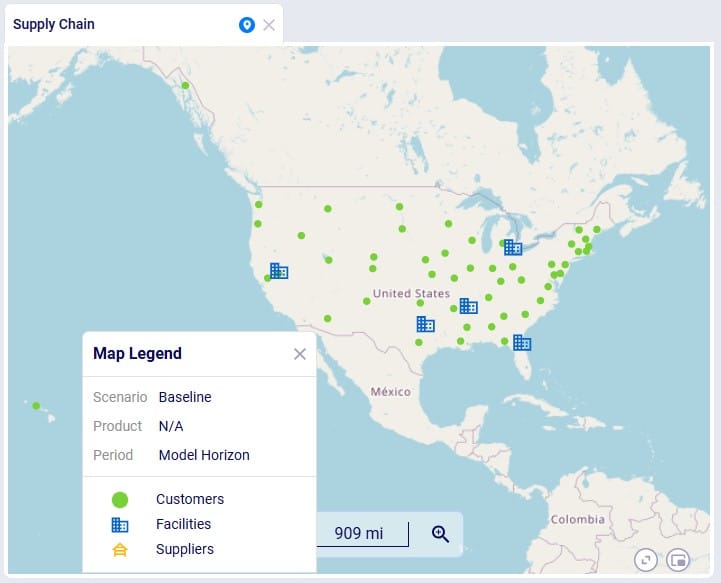

Let us start by looking at the map of the Tariffs model, which is showing the model locations and flows for the Baseline scenario:

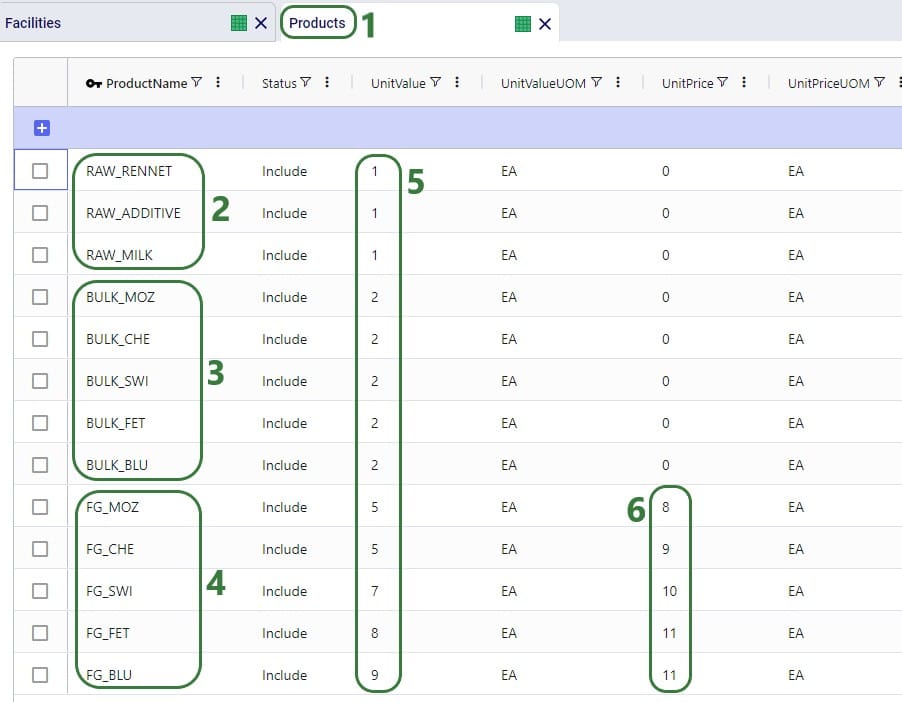

This model consists of the following sites:

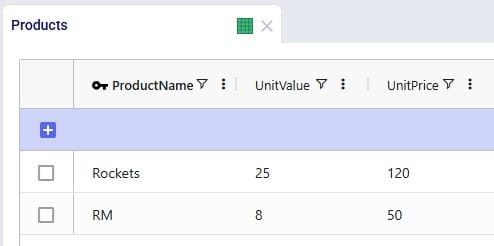

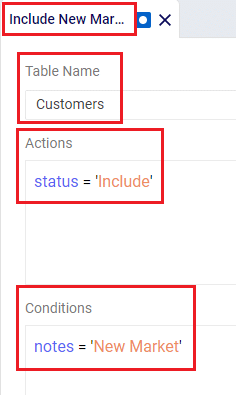

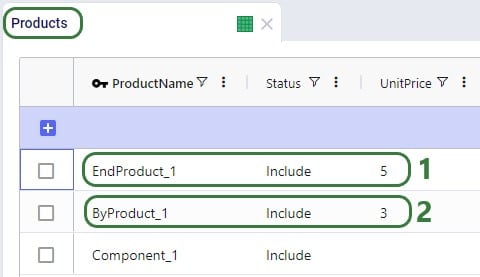

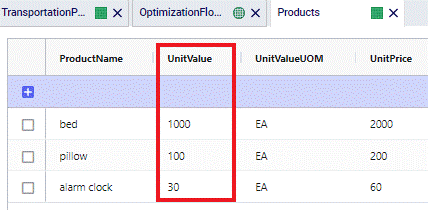

Next, we will have a look at the Products table:

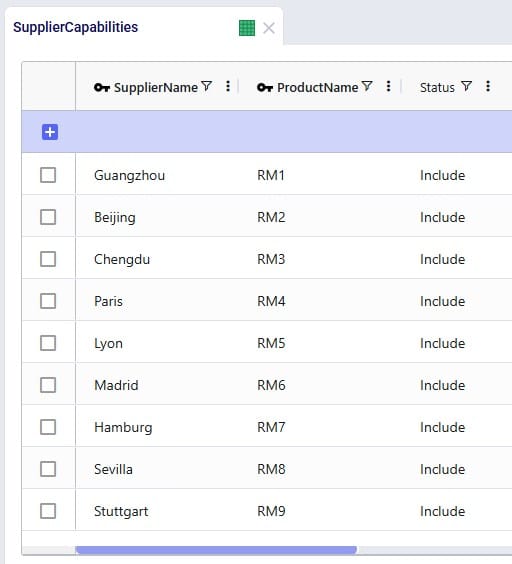

As mentioned above, raw materials RM1, RM2, and RM3 are supplied by Chinese suppliers and the other 6 raw materials by European suppliers, which we can confirm by looking at the Supplier Capabilities input table:

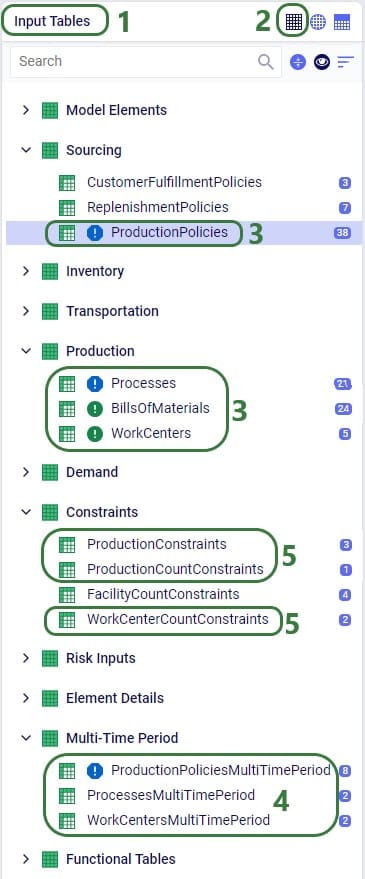

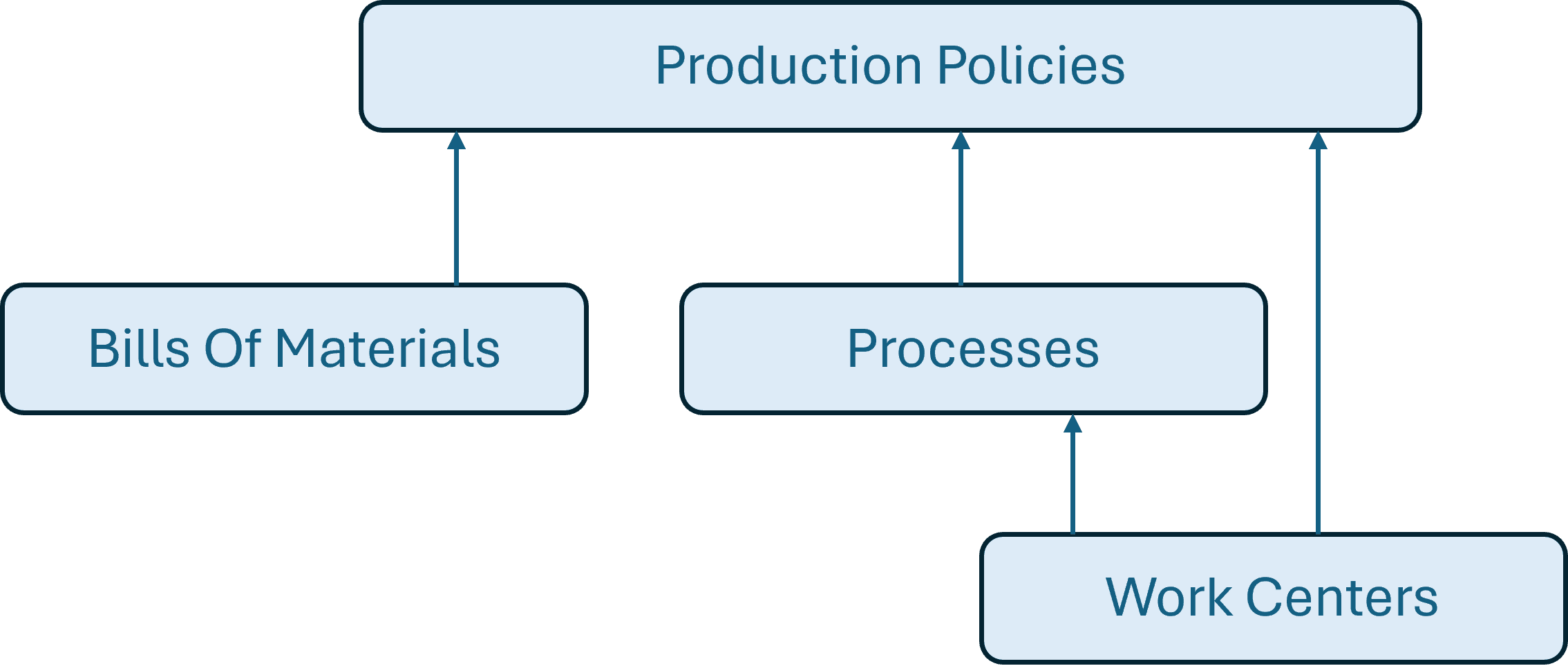

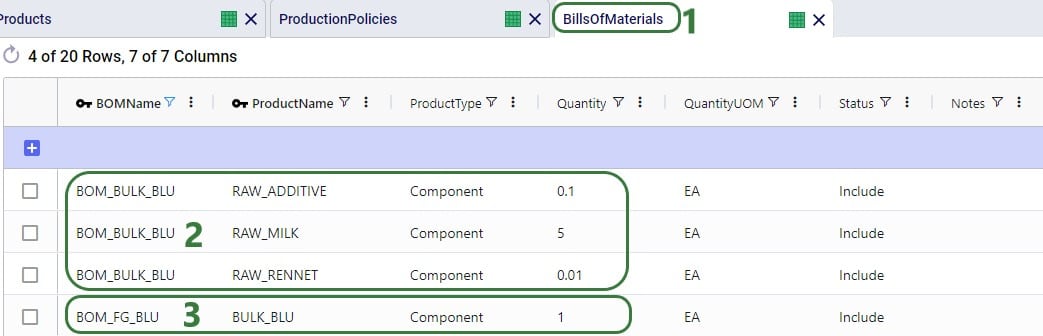

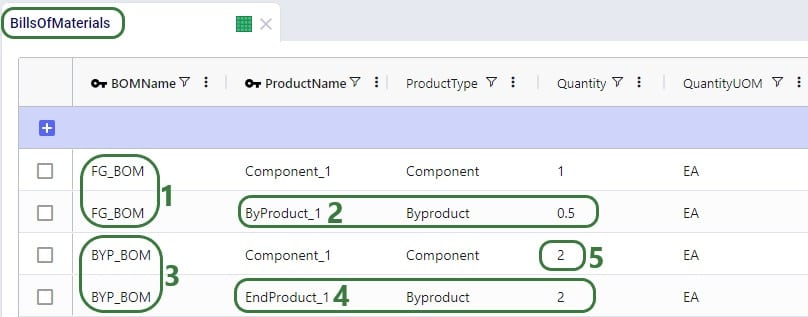

The Bills Of Materials input table shows that each finished good takes 3 of the Raw Materials to be manufactured; the Quantity field indicates how much of each is needed to create 1 unit of finished good:

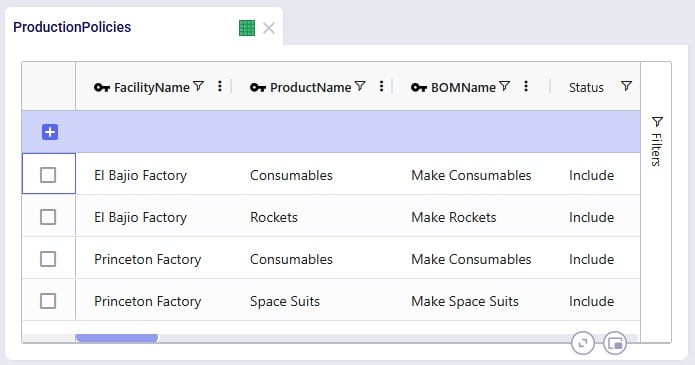

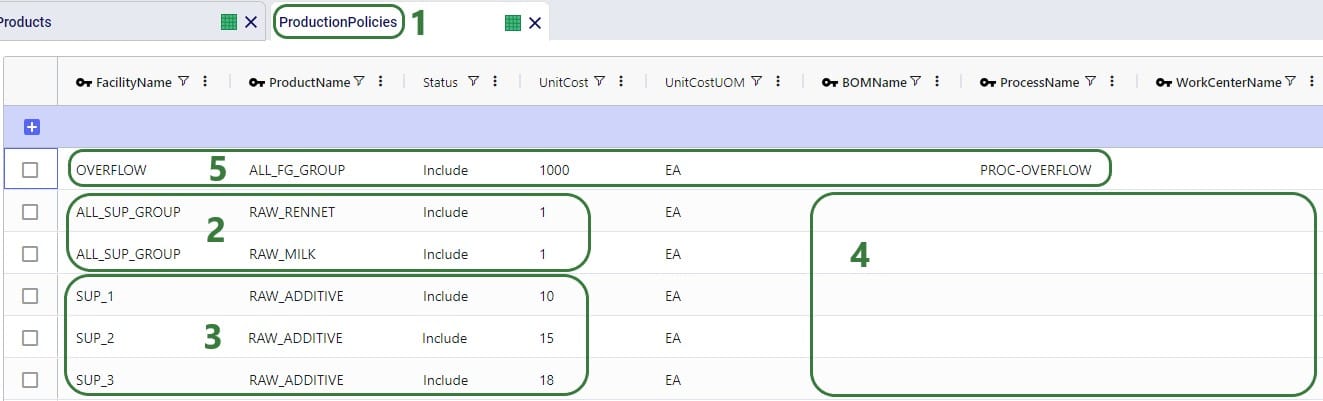

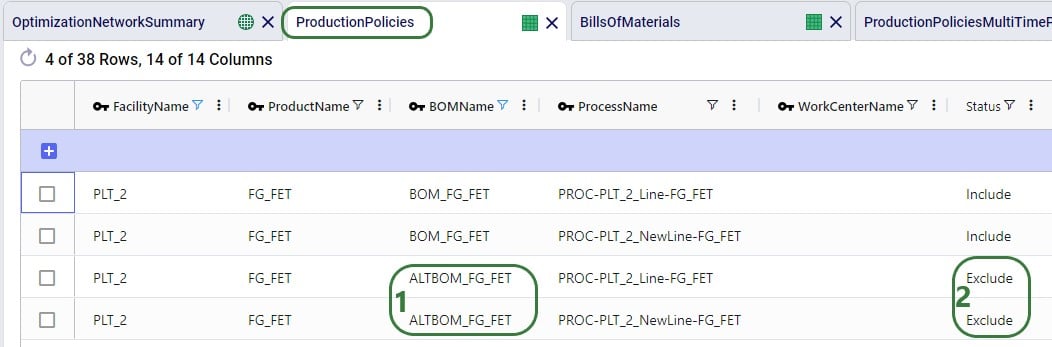

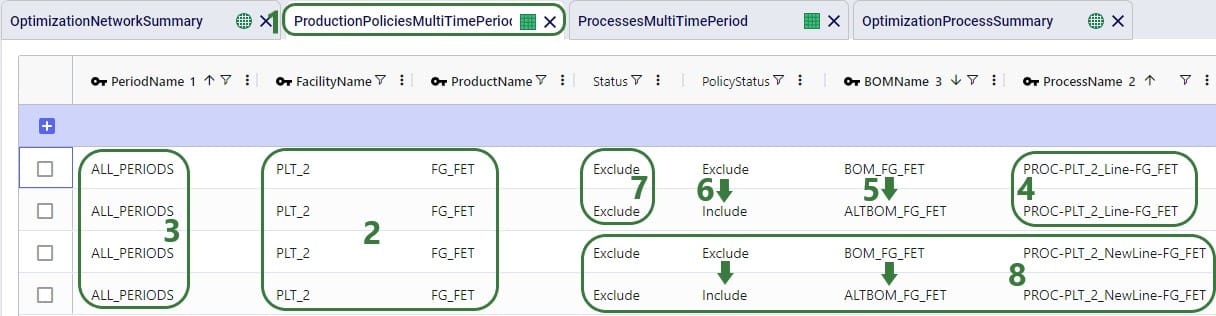

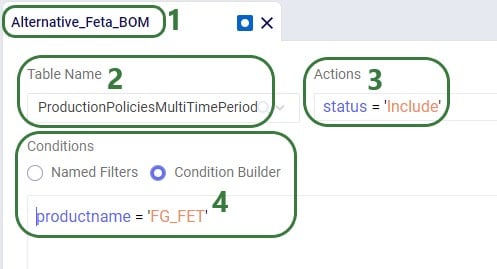

Looking at the Production Policies input table, we see that both the US and Mexico factory can produce Consumables, but Rockets are only manufactured in Mexico and Space Suits only in the US:

To understand the outputs later, we also need to briefly cover the Flow Constraints input table, which shows that the El Bajio Factory in Mexico can at a maximum ship out 3.5M units of finished goods (over all products and the model horizon together):

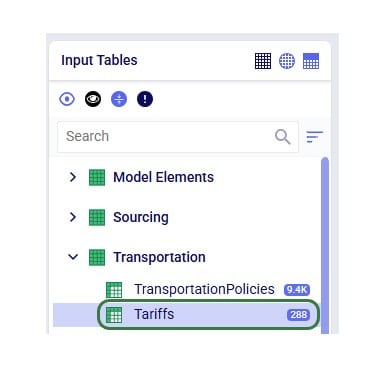

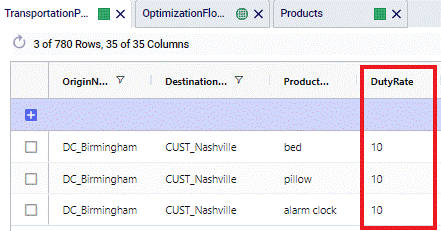

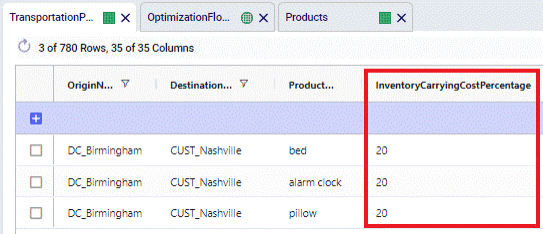

To enter tariffs and take them into account in a network optimization (Neo) run, users need to populate the new Tariffs input table:

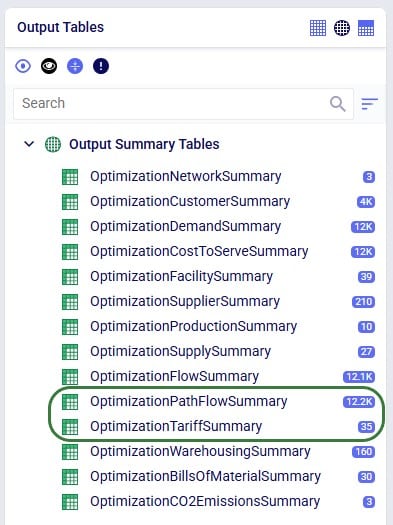

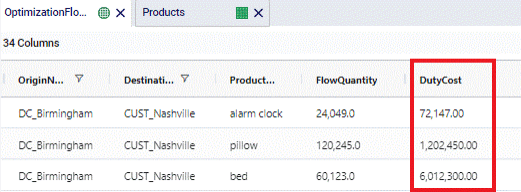

There are also 2 new Neo output tables that will be populated when tariffs are included in the model, the Optimization Path Flow Summary and the Optimization Tariff Summary tables:

Tariffs can be specified at multiple levels in Cosmic Frog, so users can choose the one that fits their modeling needs and available data best:

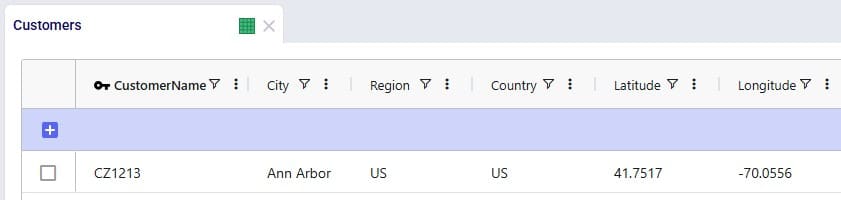

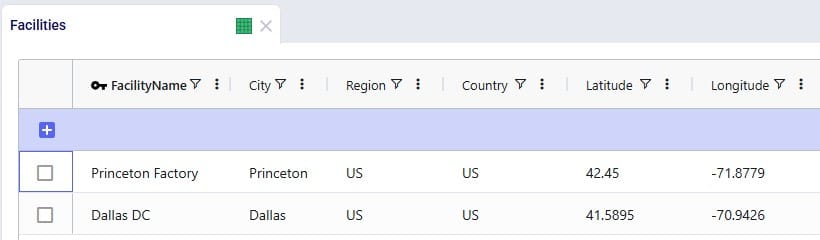

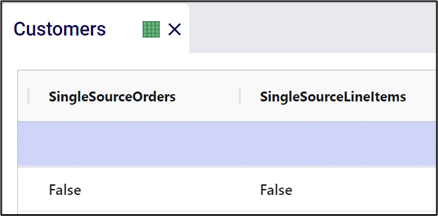

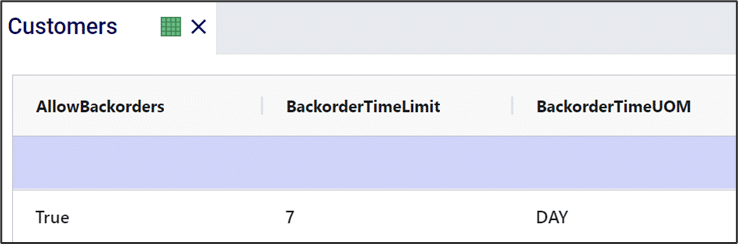

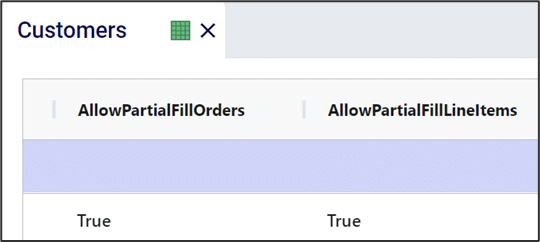

In order to model tariffs from/to a region or country, these fields need to be populated in the Customers, Facilities, and Suppliers tables:

In the Tariffs input table, all path origin location (furthest upstream) – path destination location (furthest downstream) – product combinations to which tariffs need to be applied are captured. There can be any number of echelons in between the path origin location and path destination location where the product flows through. Consider the following path that a raw material takes:

The raw material is manufactured/supplied in China (the path origin). It then flows through a location in Vietnam and a location in Mexico before ending its path in the USA (the path destination, where it is consumed when manufacturing a finished good). In this case, the tariff that is set up for this raw material with path origin = China and path destination = USA will be applied. The tariff is applied to the segment of the path where the product arrives in the region / country of its final destination. In this example, that is the last leg (lane / segment) of the path, i.e. the Mexico to USA lane.

If a raw material takes the same initial path, except that it ends in Mexico to be consumed in a finished good, then the tariff that is set up for this raw material with path origin = China and path destination = Mexico will be applied. Continuing this example: if the finished good manufactured in Mexico is then shipped to the US and sold there, and a tariff is set up for the finished good with path origin = Mexico and path destination = USA, then that tariff will be applied. In this last example, the entire path is just the 1 segment between Mexico and USA.
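The rule described above, that a tariff lands on the segment where the product arrives in the region of its path destination, can be sketched in Python. This is a minimal illustration and not Cosmic Frog’s implementation: the `Stop` type, the function name `tariffed_segment`, and the choice to pick the first segment that crosses into the destination region are assumptions. The example path is the RM1 path from the Tariffs example model (Guangzhou, Shanghai Port, Altamira Port, El Bajio Factory).

```python
from typing import List, Optional, Tuple

Stop = Tuple[str, str]  # (location name, region / country code)


def tariffed_segment(path: List[Stop]) -> Optional[Tuple[str, str]]:
    """Return the (from, to) locations of the segment that enters the
    region of the path destination, or None if the path never crosses
    into that region (e.g. it starts and ends in the same region)."""
    destination_region = path[-1][1]
    for (from_loc, from_region), (to_loc, to_region) in zip(path, path[1:]):
        # Assumption: the first crossing into the destination region counts.
        if from_region != destination_region and to_region == destination_region:
            return (from_loc, to_loc)
    return None


# RM1 path from the example model: China (CN) to Mexico (MX).
RM1_PATH: List[Stop] = [
    ("Guangzhou", "CN"),
    ("Shanghai Port", "CN"),
    ("Altamira Port", "MX"),
    ("El Bajio Factory", "MX"),
]
```

For `RM1_PATH`, the function returns the Shanghai Port to Altamira Port segment. For a China, Vietnam, Mexico, USA path, it returns the final Mexico to USA segment.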

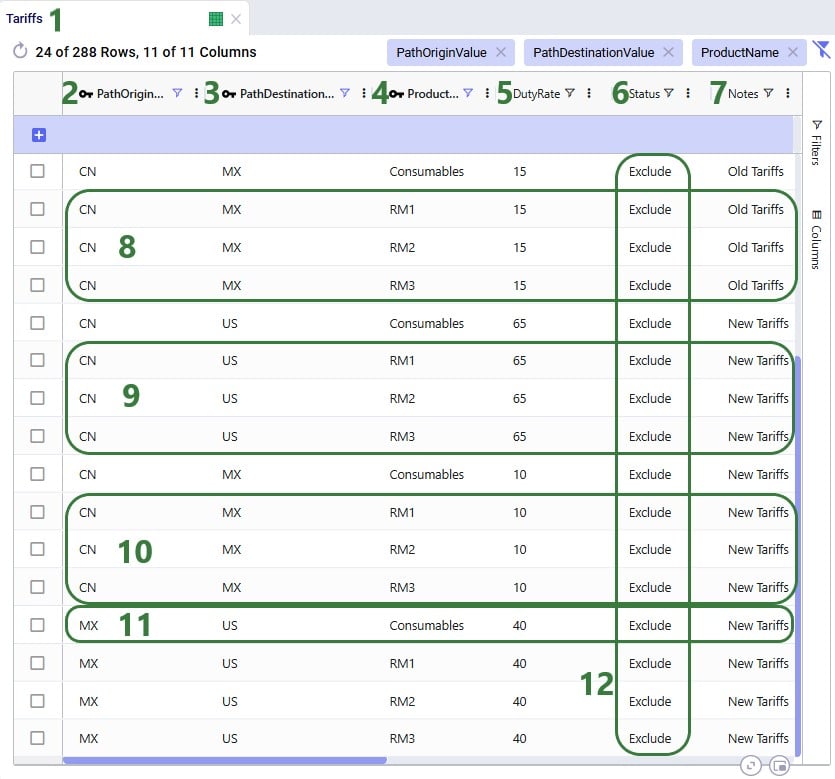

Next, we will look at how this can be set up in the Tariffs input table:

Please note:

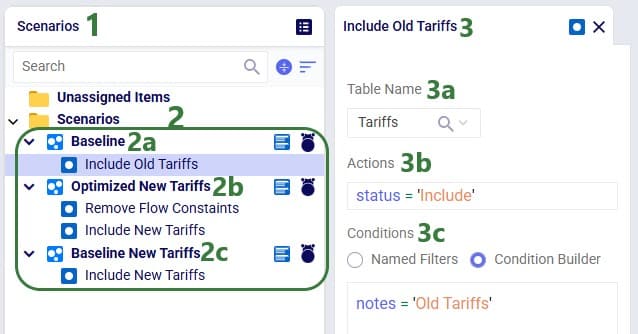

Three scenarios were run in the Tariffs example model:

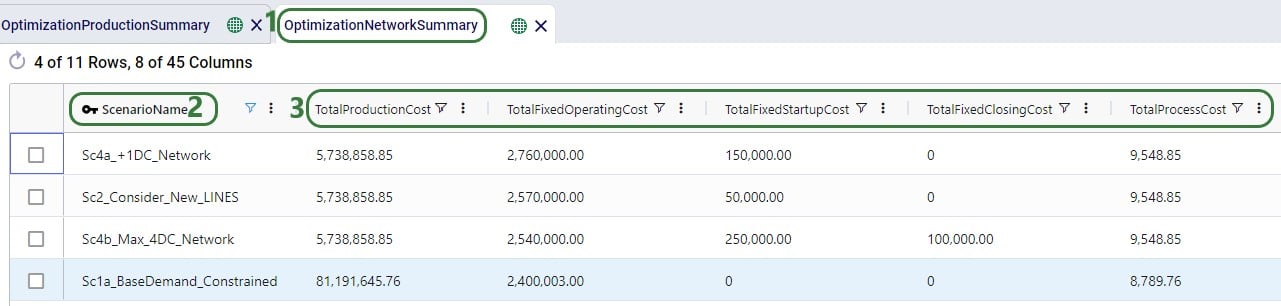

Now, we will look at the outputs for these 3 scenarios, first at a higher level and later on, we will dig into some details of how the tariff costs are calculated as well.

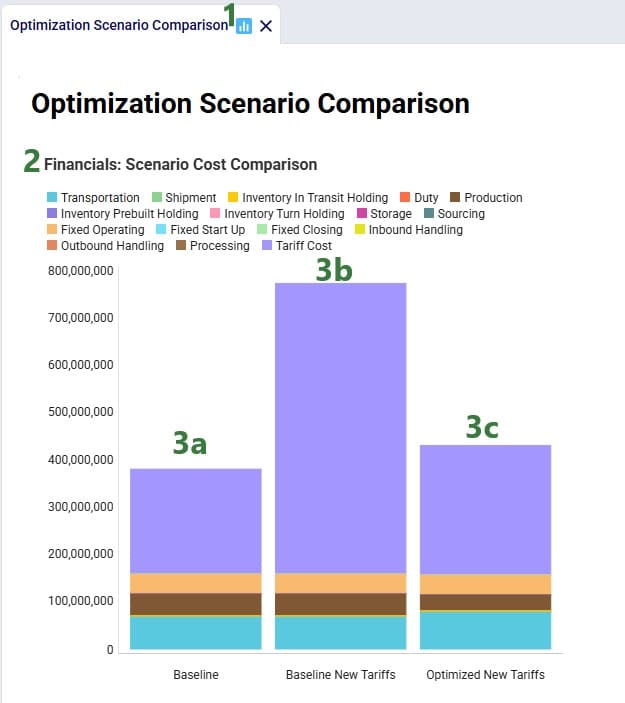

The Financials stacked bar chart in the standard Optimization Scenario Comparison dashboard in the Analytics module of Cosmic Frog can be used to compare all costs for all 3 scenarios in 1 graph:

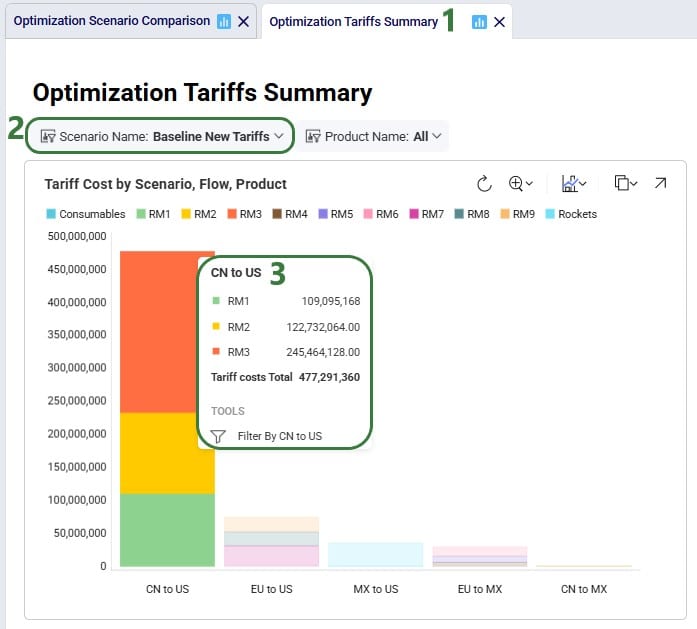

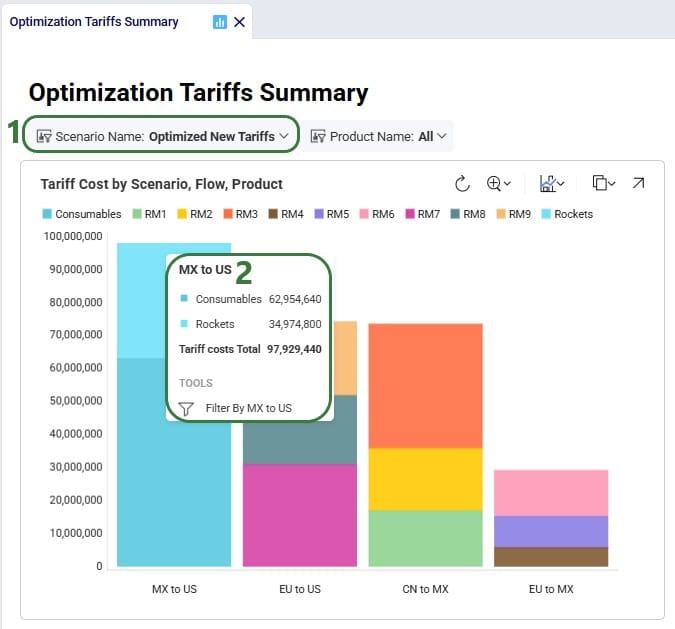

To compare the Tariffs by path origin – path destination and product, a new “Optimization Tariffs Summary” dashboard was created. We will look at the Baseline New Tariffs scenario first, and the Optimized New Tariffs scenario next:

Note that in the Appendix it is explained how this chart can be created.

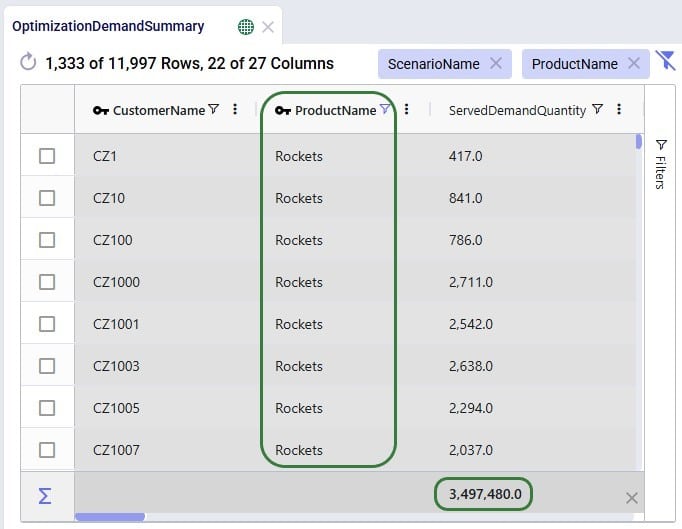

Next, we will take a closer look at some more detailed outputs, starting with how much demand there is in the model for Rockets and Consumables, the 2 finished goods the Mexican factory in El Bajio can manufacture. The next screenshot shows the Optimization Demand Summary network optimization output table, filtered for Rockets and with a summation aggregation applied to it to show the total demand for Rockets at the bottom of the grid:

Next, we change the filter to look at the Consumables product:

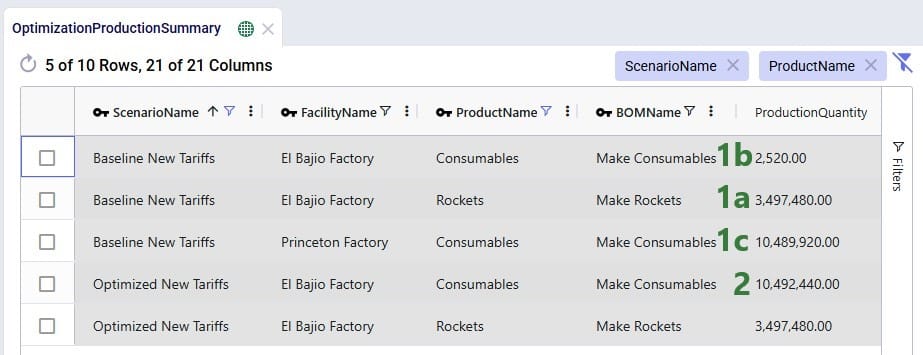

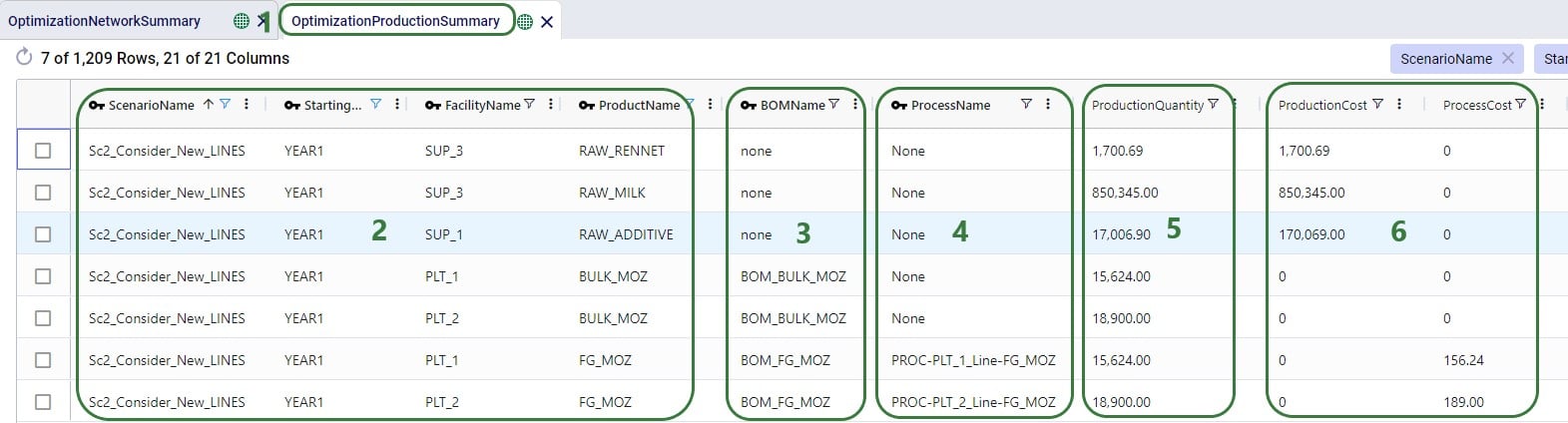

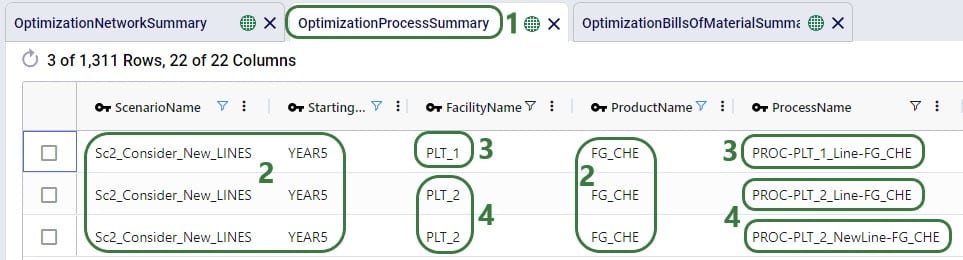

In conclusion: the demand for Rockets is nearly 3.5M units and for Consumables nearly 10.5M. Rockets can only be produced in Mexico whereas Consumables can be produced by both factories. From the charts above we suspected a shift in production from US to Mexico for the Consumables finished good in the Optimized New Tariffs scenario, which we can confirm by looking at the Optimization Production Summary output table:

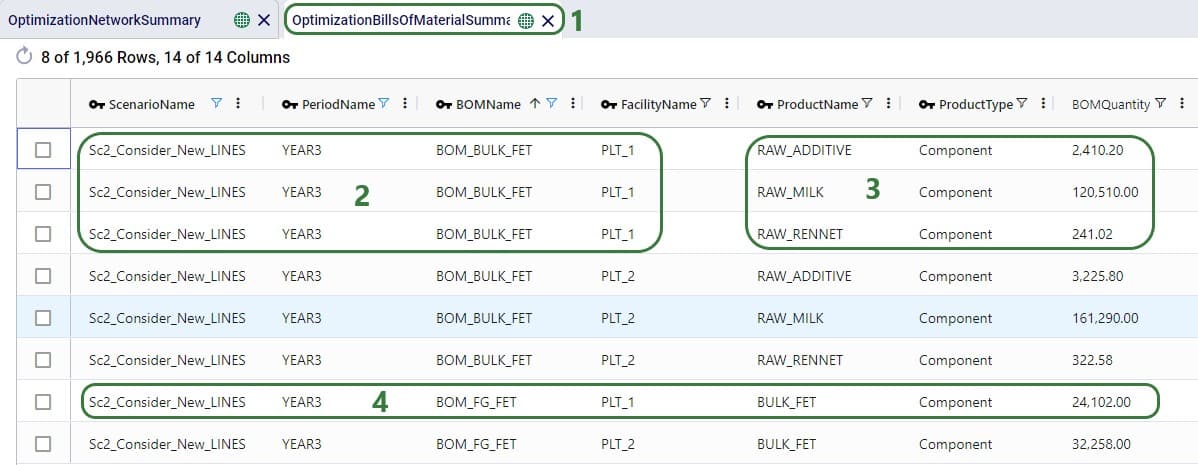

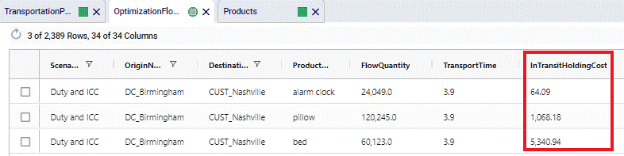

Since the production of Consumables requires raw materials RM1, RM2, and RM3, we expect to see the above production quantities for Consumables to be reflected in the amount of these raw materials that was moved from the suppliers in China to the US vs to Mexico. We can see this in the Optimization Flow Summary network optimization output table, which is filtered for the 2 scenarios with new tariffs, Port to Port lanes, and these 3 raw materials:

The custom Optimization Tariff Summary and Optimization Path Flow Summary output tables are automatically generated after running a network optimization on a model with a populated Tariffs table. The first of these 2 is shown in the next screenshot, where we have again filtered for the raw materials RM1, RM2, and RM3, plus the Consumables finished good, for the 2 scenarios that use the new tariffs:

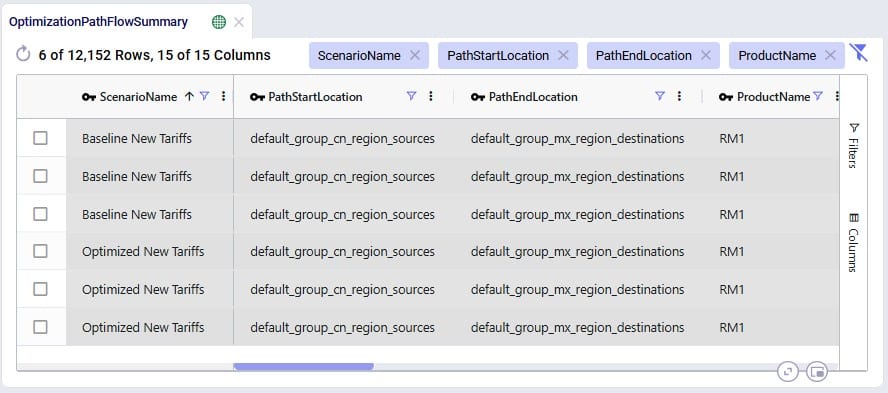

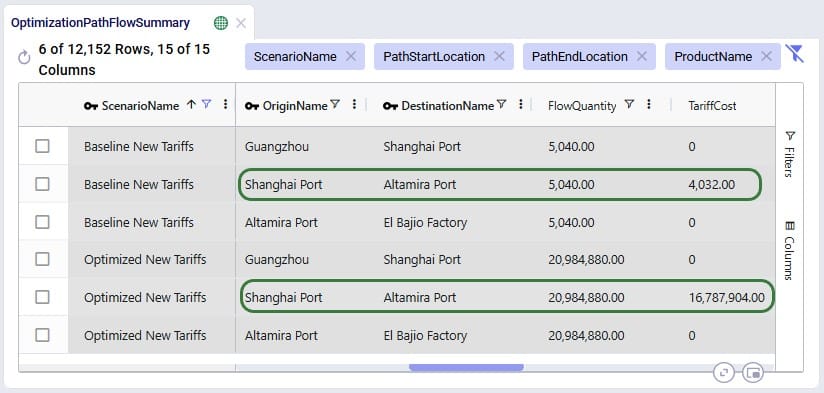

Where the Optimization Tariff Summary output table summarizes the tariffs at the scenario - path origin – path destination – product level, the Optimization Path Flow Summary output table gives some more detail around the whole path, and on which segments the tariffs are applied. The next 2 screenshots show 6 records of this output table for the Tariffs example model:

For the 2 scenarios that use the new tariffs, the table is filtered for the records of raw material RM1 where the Path Start Location represents the CN region and the Path End Location represents the MX region. These Path Start and End Locations are automatically generated based on the Path Origin Property and Value and Destination Property and Value set in the Tariffs input table. Scrolling right for these 6 records:

We see that the path for RM1 is the same in both scenarios: it originates at the Guangzhou location in China, moves to Shanghai Port (CN), then from Shanghai Port to Altamira Port (MX), and from Altamira Port to the El Bajio Factory (MX). The calculations of the Tariff Cost based on the Flow Quantity are the same as explained above, and we see that the tariffs are applied on the second segment, where the product arrives in the region / country of its final destination.

Wondering where to go from here? If you want to start using tariffs in your own models but are not sure where to begin, please see the “Cosmic Frog Utilities to Create the Tariffs Table” section further below, which also includes step-by-step instructions based on what data you have available.

In the next section, we will first discuss how quick sensitivity analyses around tariffs can be run using a Cosmic Frog for Excel App.

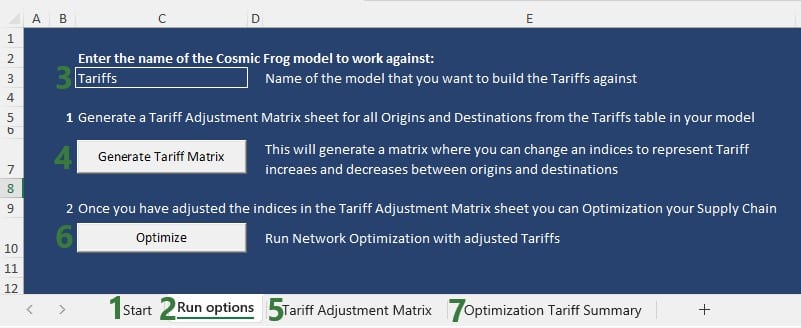

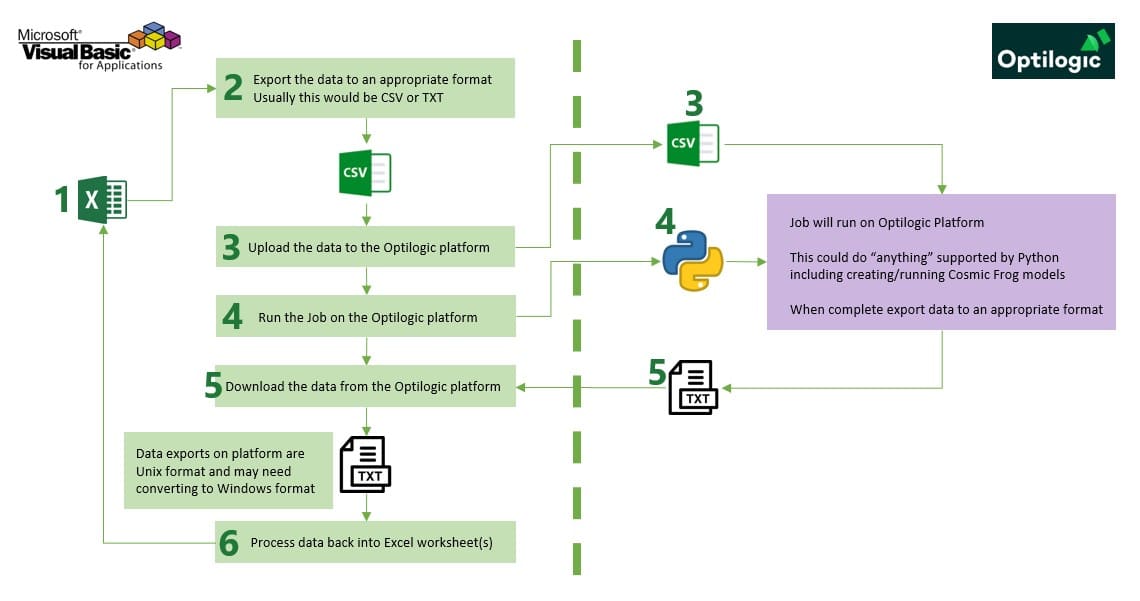

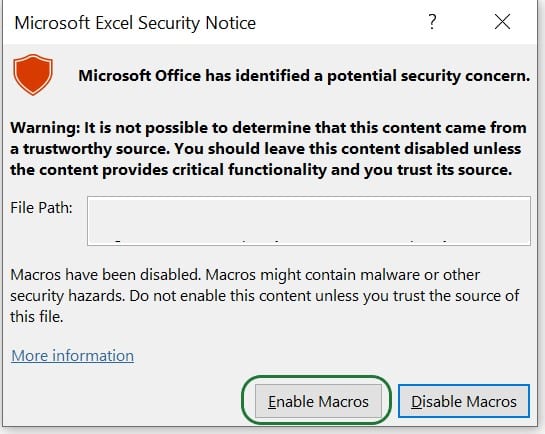

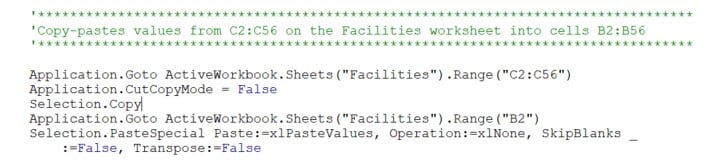

To enable Cosmic Frog users, and also managers and executives with no or limited knowledge of Cosmic Frog, to run quick sensitivity scenarios around changing tariffs, Optilogic has developed an Excel Application for this specific purpose. Users can connect to their Cosmic Frog model that contains a populated Tariffs input table and indicate which tariffs to increase/decrease by how much, run network optimization with these changed tariffs, and review the optimization tariff summary output table, all in 1 Excel workbook. Users can download this application and related files from the Resource Library.

The following represents a typical workflow when using the Tariffs Rapid Optimizer application:

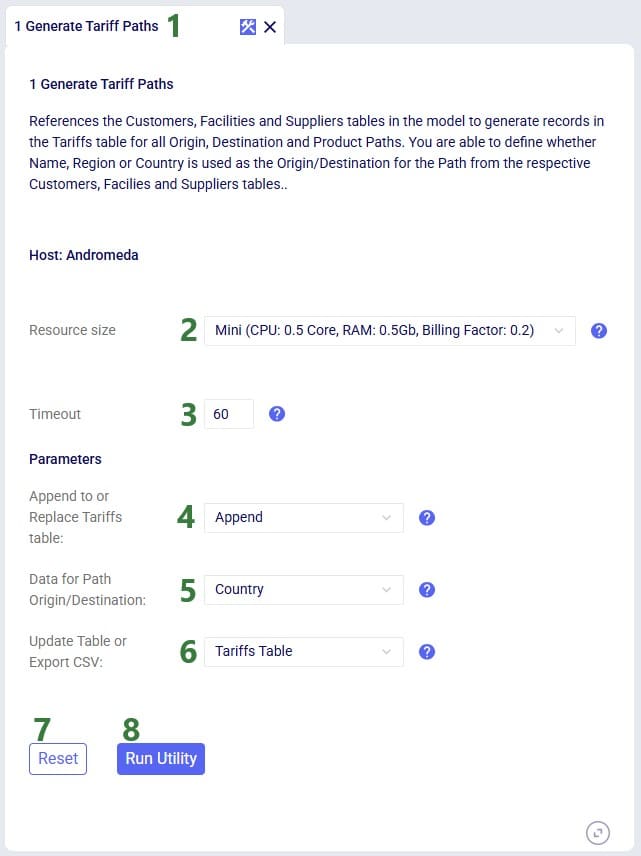

To take advantage of the power of the Lumina Tariff Optimizer, users will want to create their own network optimization model that includes a populated Tariffs input table (see also the “Tariffs Model – Tariffs Table” section earlier in this documentation). Depending on the data available to the user, populating the Tariffs input table can be straightforward, or it can be difficult if little or no tariff data is known/available within the organization. Optilogic has developed 3 utilities to help users with this task. The utilities are available from within Cosmic Frog, which will be covered in this section of the documentation, and they are also available through the Cosmic Frog for Excel Tariffs Builder App, which will be covered in the next section. A short description of each utility follows; each will be covered in more detail later in this section:

In Cosmic Frog, they are accessible from the Utilities module (click on the 3 horizontal bars icon at the top left in Cosmic Frog to open the Module menu drop-down and select Utilities):

The utilities are listed under System Utilities > Tariff.

The latter 2 utilities hook into Avalara APIs, and users need to use / obtain their own Avalara API keys for each to be able to use these utilities from within Cosmic Frog or the Tariffs Builder Excel App.

The following list shows the recommended steps for users with varying levels of Tariffs data available to them from least to most data available (assuming an otherwise complete Cosmic Frog model has been built):

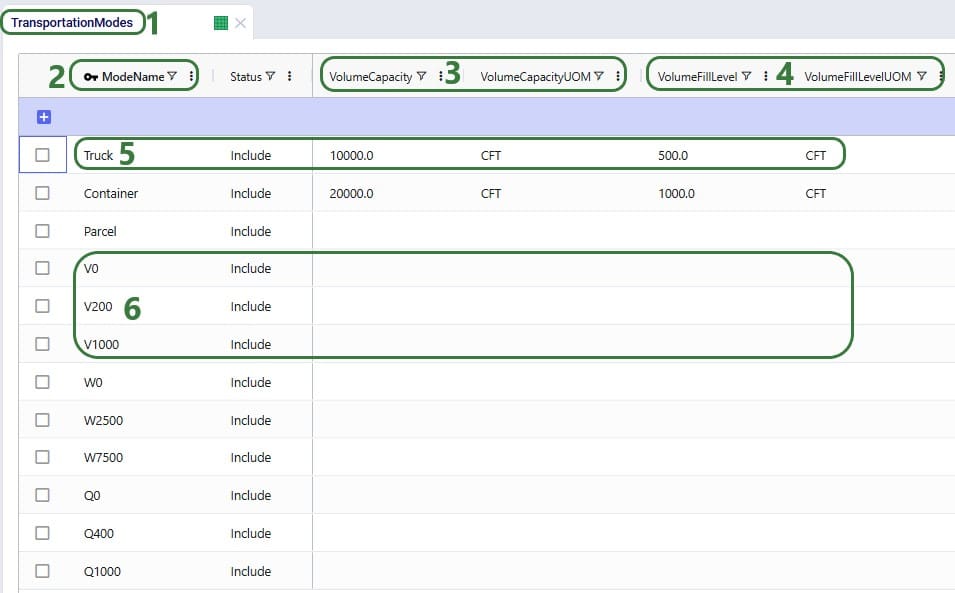

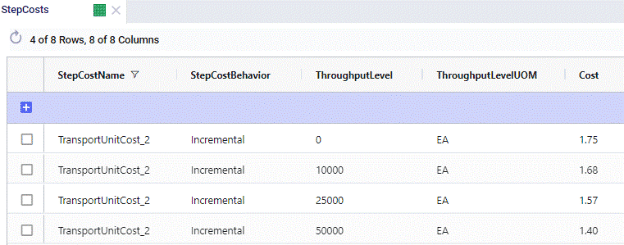

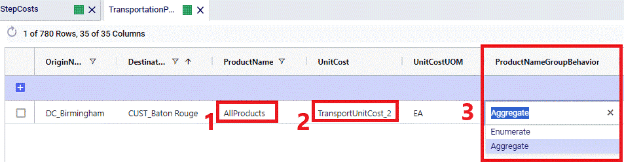

To populate the Tariffs table with all possible path origin – path destination – product combinations, based on the contents of the Transportation Policies input table, use this first utility:

Consider a small model with 1 customer in the US, 2 facilities (1 DC and 1 factory) both in the US, 1 supplier in China, and 2 products (1 finished good and 1 component):

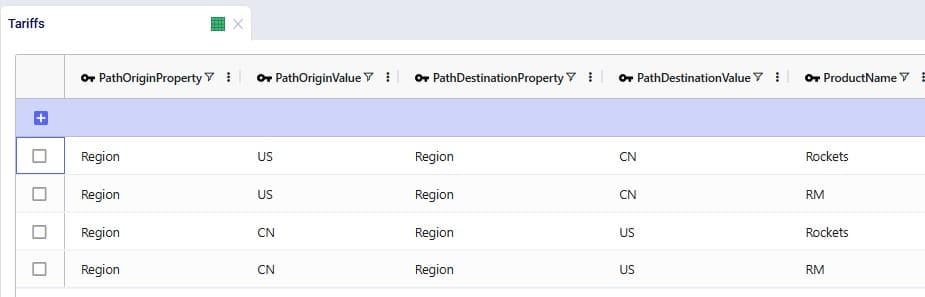

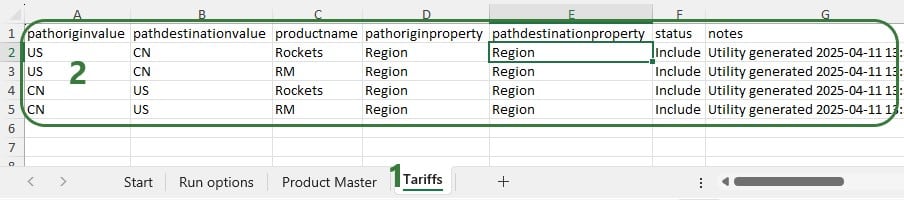

After running the 1 Generate Tariff Paths utility (using Region as the data to use for the path origin and path destination), the Tariffs table is generated and populated as shown in the next 2 screenshots:

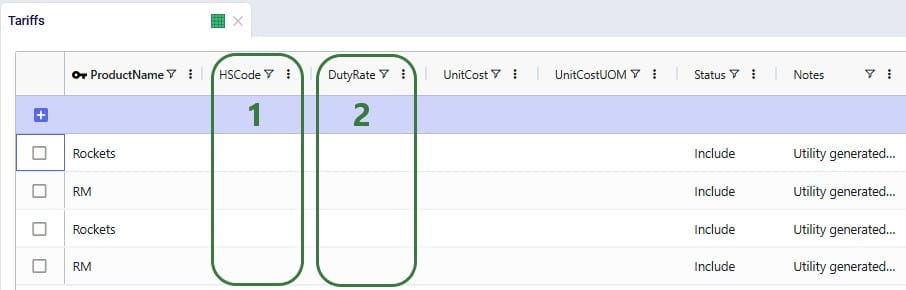

All combinations for path origin region, path destination region, and product have been added to the Tariffs table. Scrolling further right, we see the remaining fields of this table:

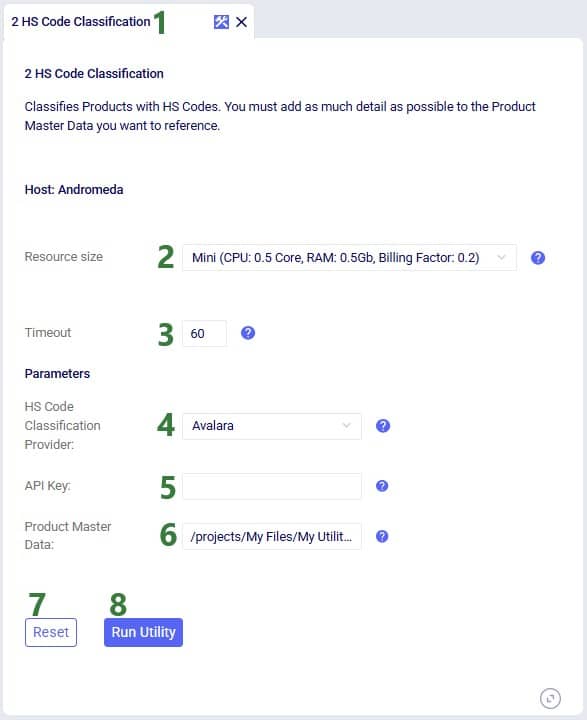
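To illustrate what “all combinations” of path origin region, path destination region, and product means for the small model above, here is a minimal Python sketch. It is not the utility’s actual logic, which works from the Transportation Policies input table. The function name `candidate_tariff_rows`, the dictionary keys, and the choice to skip pairs where origin and destination are the same region are assumptions made for illustration.

```python
from itertools import permutations, product
from typing import Dict, List


def candidate_tariff_rows(regions: List[str], products: List[str]) -> List[Dict[str, str]]:
    """Enumerate every ordered pair of distinct regions for every product."""
    return [
        {"origin_region": origin, "destination_region": destination, "product": prod}
        # permutations(regions, 2) yields ordered pairs of different regions.
        for (origin, destination), prod in product(permutations(regions, 2), products)
    ]


# Small model from the text: locations in the US and China, 2 products.
rows = candidate_tariff_rows(["US", "CN"], ["Rockets", "RM"])
```

For 2 regions and 2 products this yields 4 candidate rows (US to CN and CN to US, each for Rockets and RM), which can then be reviewed and trimmed to the paths that are relevant.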

To update the HS Code field in the Tariffs table, we can use the second utility:

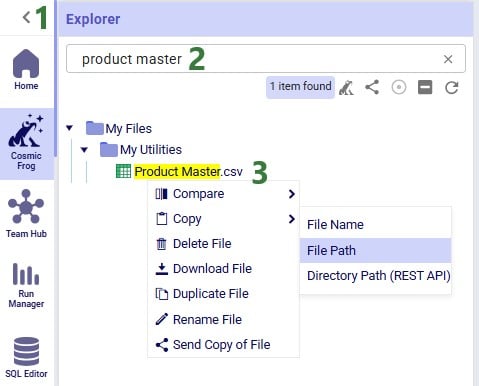

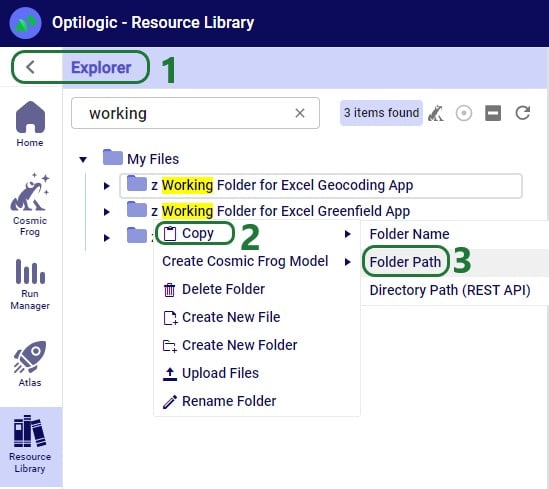

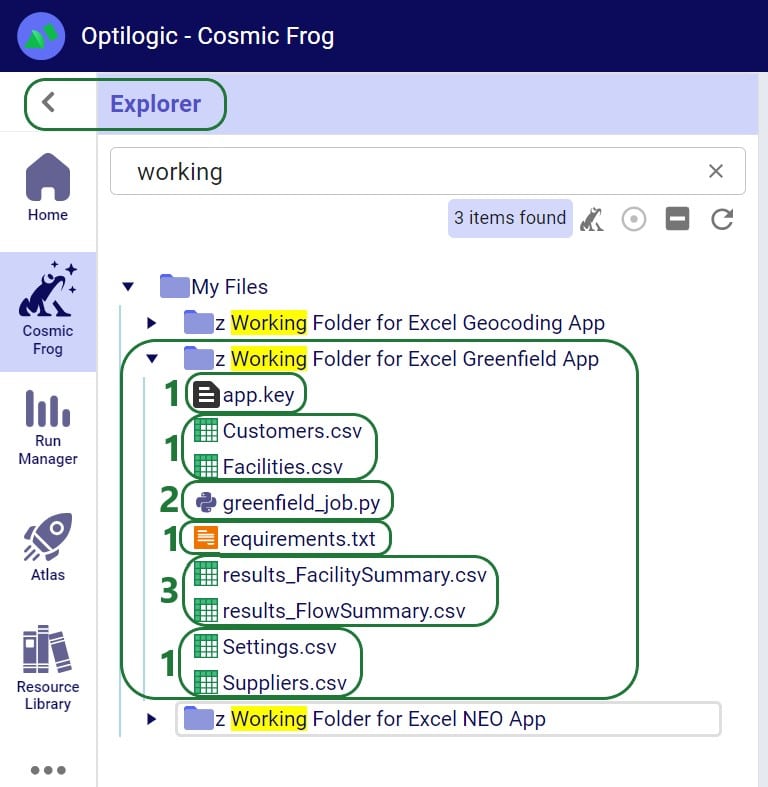

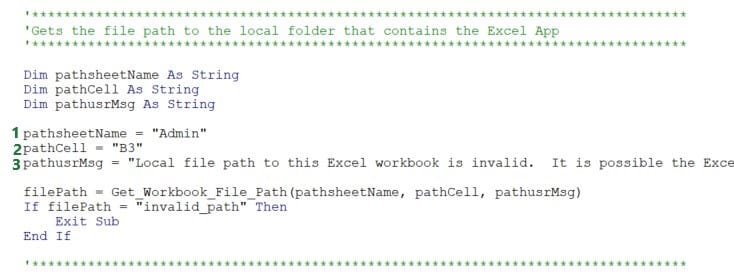

Users can find the full path of a file uploaded to their Optilogic account as follows:

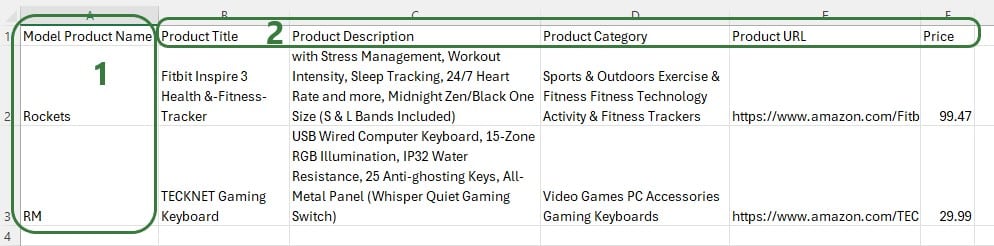

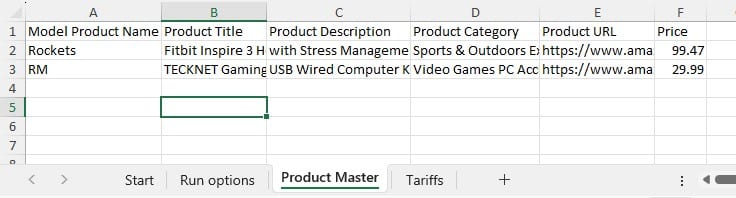

The file containing the product master data needs to have the same columns as shown in the next screenshot:

Note that columns B-F contain information of products that do not match the product name in Cosmic Frog as this is just an example to show how the utility works.

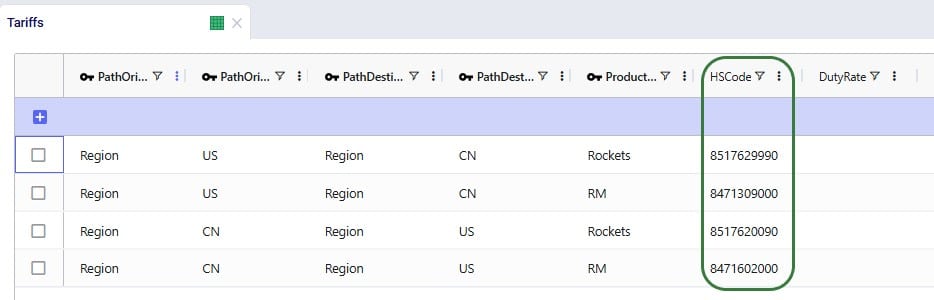

After running the 2 HS Code Classification utility, we see that the HS Code field in the Tariffs table is now populated:

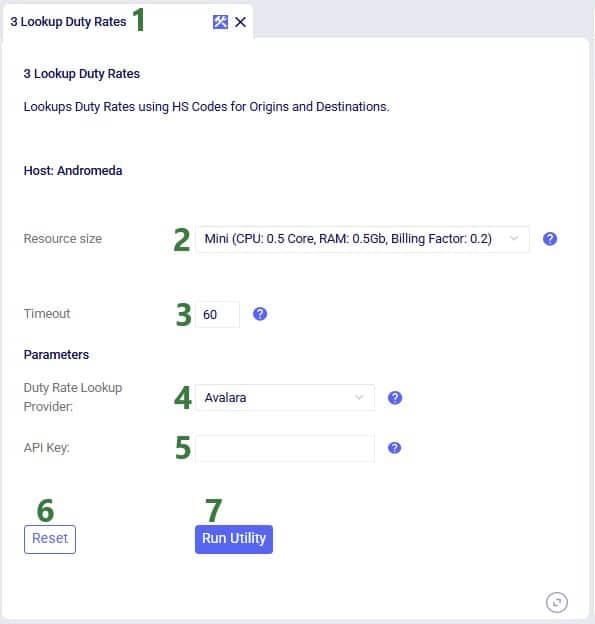

To use the HS Code field to next look up duty rates we can use the third utility:

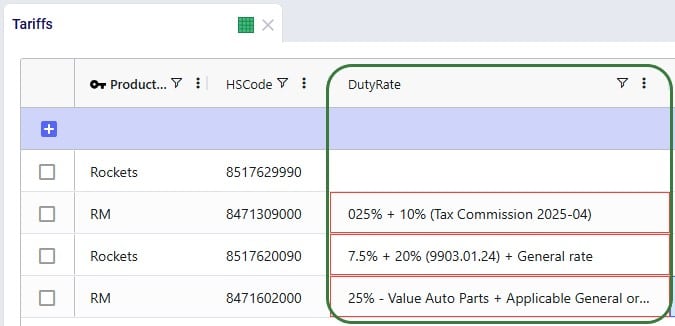

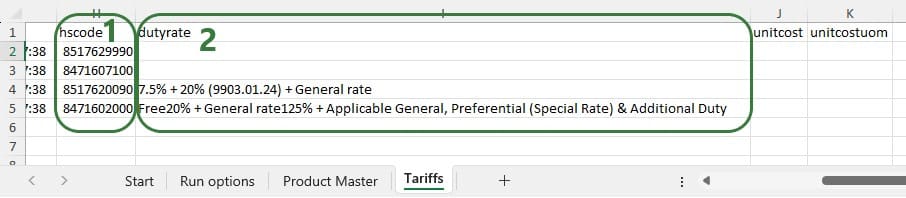

After running the 3 Lookup Duty Rates utility, we see that the Duty Rate field in the Tariffs table is now populated:

The raw output from the API is placed in the Duty Rate field, and the user needs to update this so that the field contains just a number representing the total duty rate. For the second record (US region to China region for product RM), the total duty rate is 35% (25% + 10%), so the user needs to enter 35 in this field. For the third record (China region to US region for product Rockets), the total duty rate is 27.5% (7.5% + 20%), so the user needs to enter 27.5. For the fourth record (China region to US region for product RM), the total duty rate is 25%, so the user needs to enter 25.

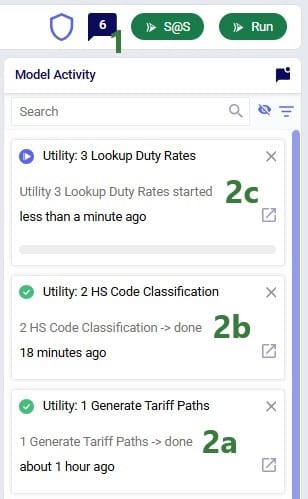
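The manual step of reducing the raw Duty Rate text to a single number amounts to summing its percentage components. The Python sketch below shows that arithmetic only. The exact format of the raw API output is not documented here, so the input strings (such as "25% + 10%") and the function name `total_duty_rate` are hypothetical; real output should be checked by eye before any value is entered.

```python
import re


def total_duty_rate(raw: str) -> float:
    """Sum every percentage found in the text, e.g. '25% + 10%' gives 35.0.

    Assumption: each duty component appears as a number followed by '%'.
    """
    components = re.findall(r"(\d+(?:\.\d+)?)\s*%", raw)
    return sum(float(value) for value in components)
```

With the rates from the records above, `total_duty_rate("25% + 10%")` gives 35.0 and `total_duty_rate("7.5% + 20%")` gives 27.5, which are the numbers to enter in the Duty Rate field.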

When running a utility in Cosmic Frog, the user can track its progress in the Model Activity window:

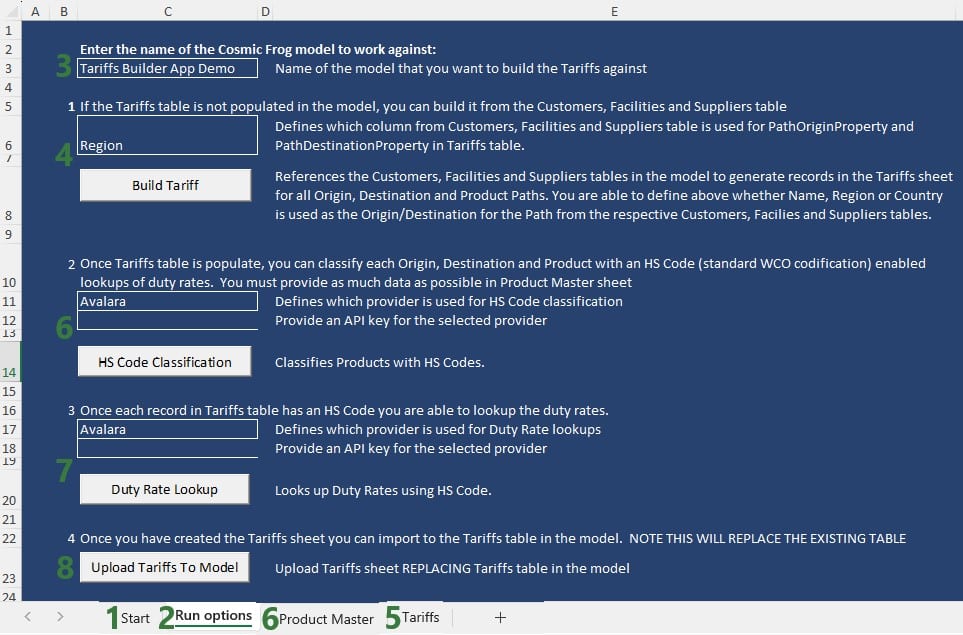

The 3 utilities covered in the previous section to generate and populate the Tariffs input table are also made available in the Cosmic Frog for Excel Tariffs Builder App, which we will cover in this section. Users can download this application and related files from the Resource Library.

The following represents a typical workflow when using this Tariffs Builder application:

The next screenshot shows the Tariffs table after just running the Build Tariff workflow (bullet 4 in the list above):

The next screenshot shows the Product Master worksheet, which contains the product information to be used by the HS Code Classification workflow. It needs to be in this format, and users should enter as much product information here as possible:

After also running the HS Code Classification and the Duty Rate Lookup workflows (bullets 6 and 7 in the list further above), we see that these fields are now also populated on the Tariffs worksheet:

We hope users feel empowered to take on the challenging task of incorporating tariffs into their optimization workflows. For any questions, please do not hesitate to contact Optilogic support at support@optilogic.com.

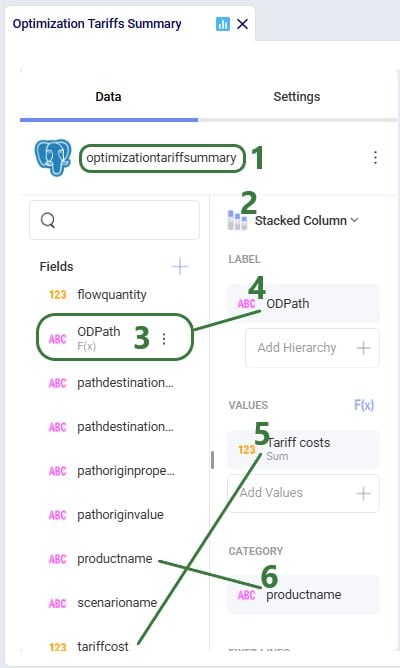

In this appendix we will show users how to create a stacked bar chart for each path origin – path destination pair, showing the tariff costs by product.

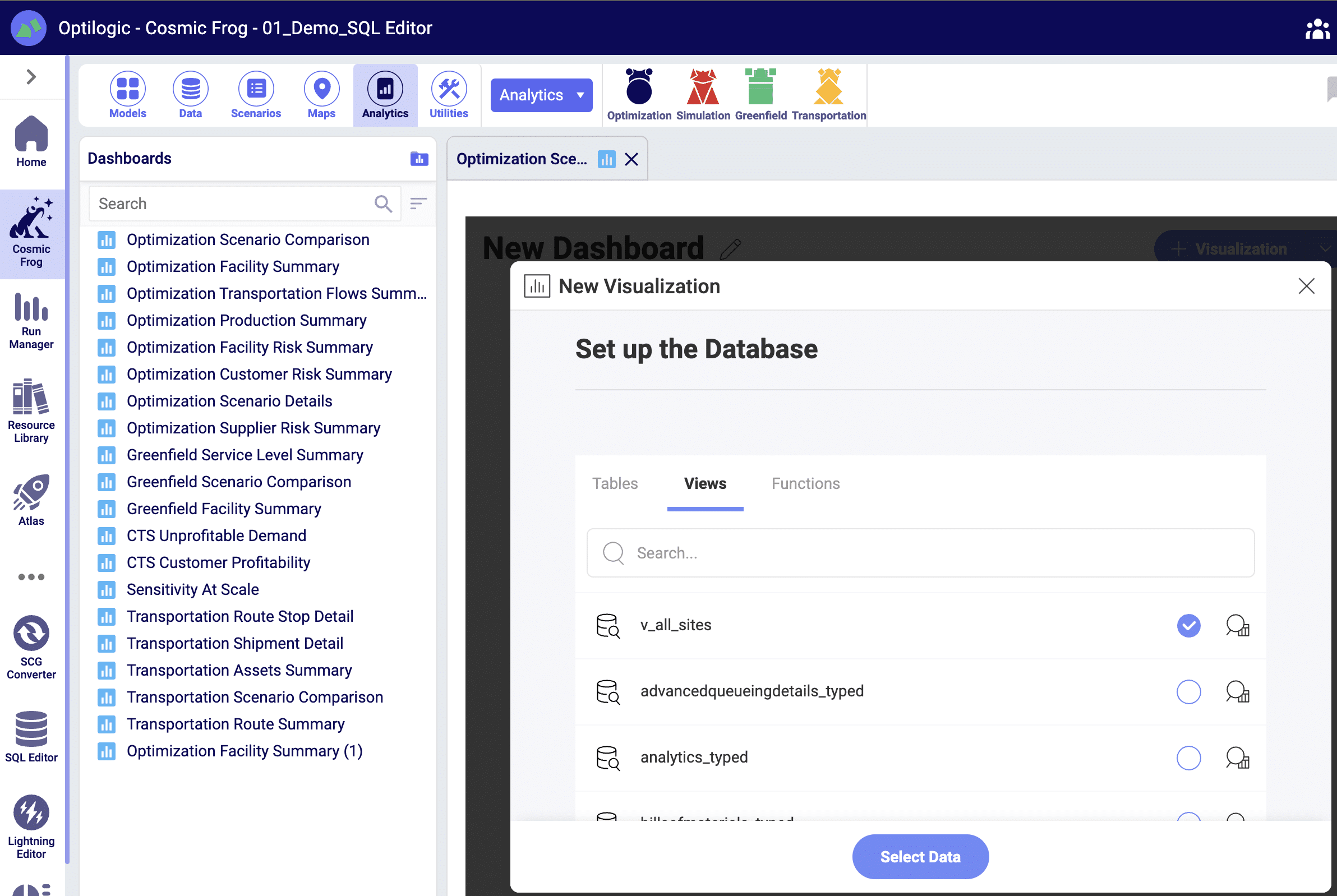

In the Analytics drop-down menu in the toolbar while in the Analytics module of Cosmic Frog, select New Dashboard, give it a name (e.g. Optimization Tariff Summary), then click on the blue Visualization button on the top right to create a new chart for the dashboard. In the New Visualization configuration form that comes up, type “tariff” in the Tables Search box, then check the box for the Optimization Tariff Summary table in the list, and click on Select Data.

To create the OD Path calculated field, click on the plus icon at the top right of the Fields list and select Calculated Field which brings up the Edit Calculated Field configuration window:

Tax systems can be complex; those in Greece, Colombia, Italy, Turkey, and Brazil, for example, are considered to be among the most complex. It can nevertheless be important to include taxes, whether as a cost, a benefit, or both, in supply chain modeling, as they can have a big impact on sourcing decisions and therefore on overall costs. Here we will showcase an example of how Cosmic Frog’s User Defined Variables and User Defined Costs can be used to model Brazilian ICMS tax benefits and take these into account when optimizing a supply chain.

The model that is covered in this documentation is the “Brazil Tax Model Example”, which was put together by Optilogic’s partner 7D Analytics. It can be downloaded from the Resource Library. Besides the Cosmic Frog model, the Resource Library content also links to this “Cosmic Frog – BR Tax Model Video”, which was also put together by 7D Analytics.

A helpful additional resource for those unfamiliar with Cosmic Frog’s user defined variables, costs, and constraints is this “How to use user defined variables” help article.

In this documentation the setup of the example model will first be briefly explained. Next, the ICMS tax in Brazil will be discussed at a high level, including a simplified example calculation. In the third section, we will cover how ICMS tax benefits can be modelled in Cosmic Frog. And finally, we will look at the impact of including these ICMS tax benefits on the flows and overall network costs.

One quick note upfront: the screenshots of Cosmic Frog tables used throughout this help article may look different from the same model in the user’s account after copying it from the Resource Library. This is because columns have been moved or hidden and grids have been filtered/sorted in specific ways to show only the most relevant information in these screenshots.

In this example model, 2 products are included: Prod_National to represent products that are made within Brazil at the MK_PousoAlegre_MG factory and Prod_Imported to represent products that are imported, which is supplied from SUP_Itajai_SC within the model, representing the seaport where imported products would arrive. There are 6 customer locations which are in the biggest cities in Brazil; their names start with CLI_. There are also 3 distribution centers (DCs): DC_Barueri_SP, DC_Contagem_MG, and DC_FeiraDeSantana_BA. Note that the 2 letter postfixes in the location names are the abbreviations of the states these locations are in. Please see the next screenshot where all model locations are shown on a map of Brazil:

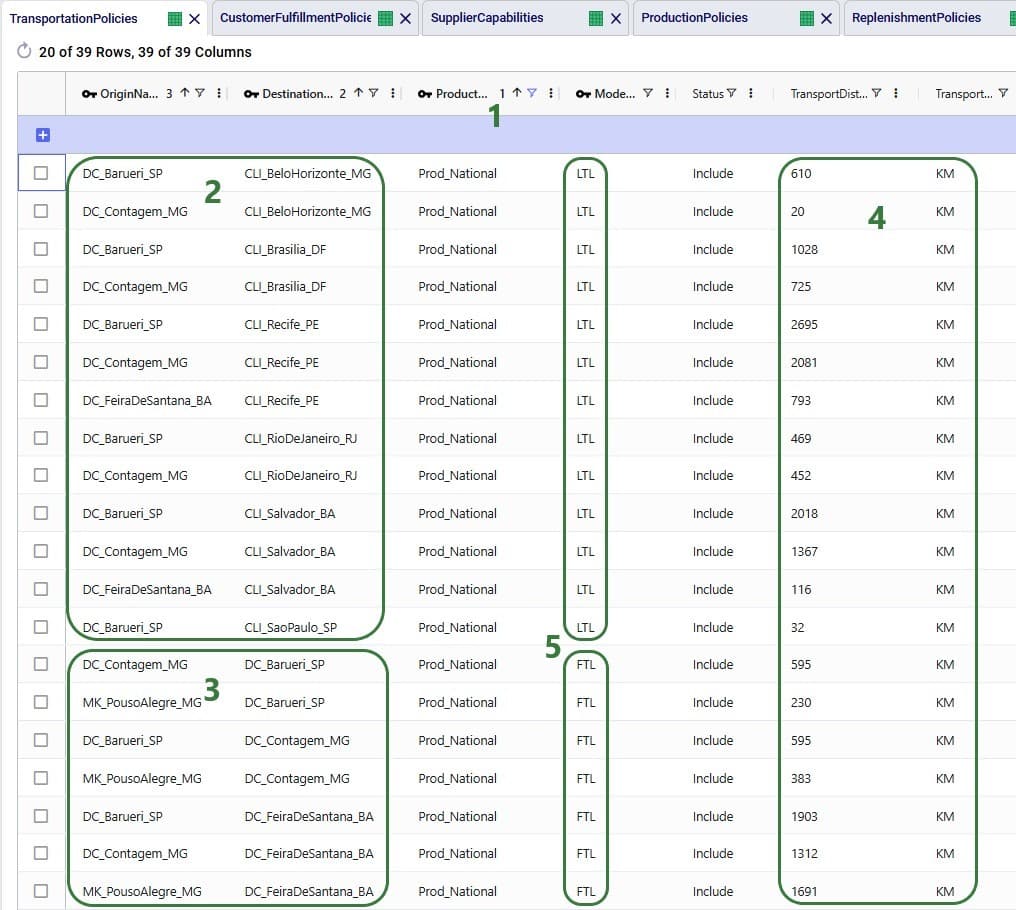

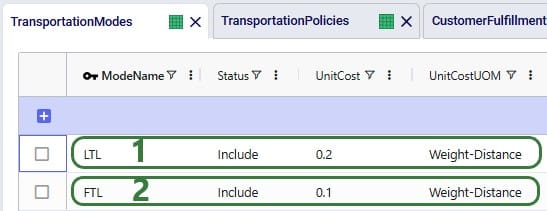

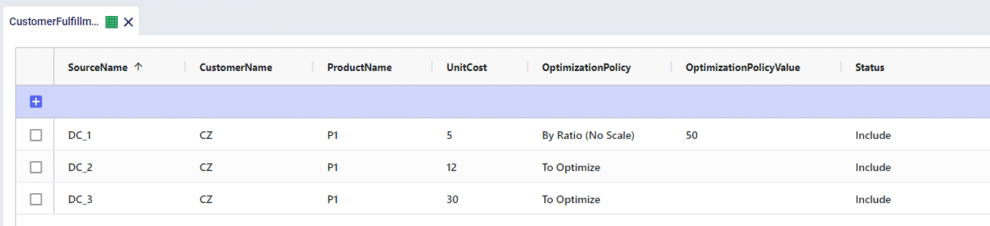

The model’s horizon is all of 2024 and the 6 customers each have demand for both products, ranging from 100 to 600 units. The SUP_ location (for Prod_Imported) and MK_ location (for Prod_National) replenish the DCs with the products. Between the DCs, some transfers are allowed too. The demand at the customer locations can be fulfilled by 1, 2 or all 3 DCs, depending on the customer. The next screenshot of the Transportation Policies table (filtered for Prod_National) shows which procurement, replenishment, and customer fulfillment flows are allowed:

For the other product modelled, Prod_Imported, the same customer fulfillment, DC-DC transfer, and supply options are available, except:

In Brazil, the ICMS tax (Imposto sobre Circulação de Mercadorias e Serviços, or Tax on Commerce and Services) is levied by the states. It applies to the movement of goods, transportation services between states or municipalities, and telecommunication services. The rate varies by state and product.

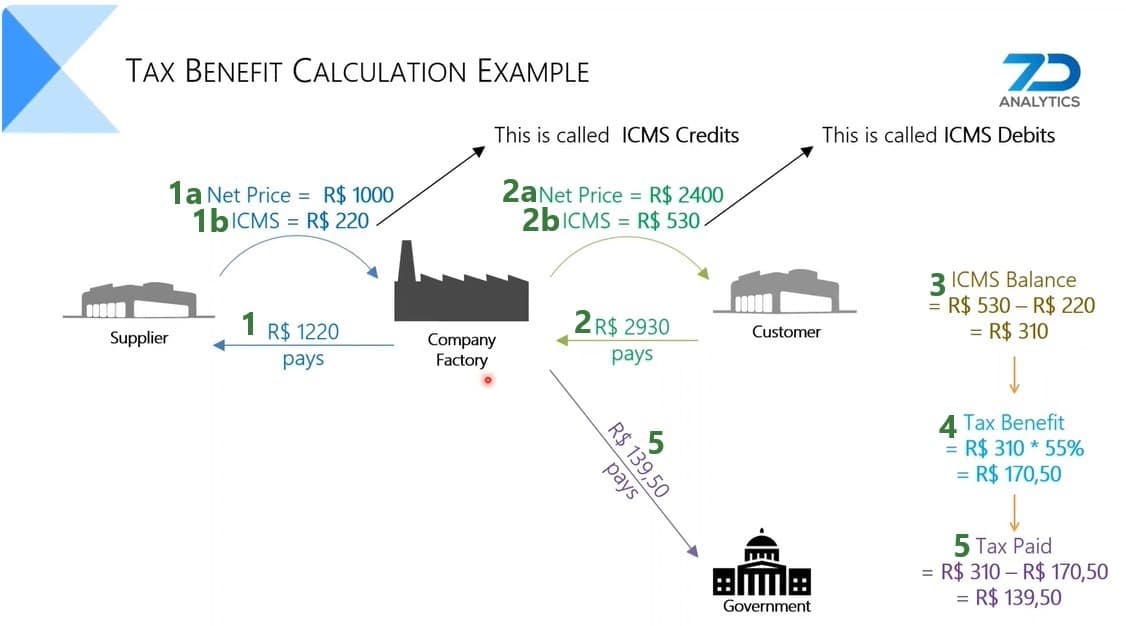

When a company sells a product, the sales price includes ICMS, which results in an ICMS debit for the company (the company owes this to the state). Likewise, when purchasing or transferring product, the ICMS is included in what the company pays the supplier. This creates ICMS credit for the company. The difference between the ICMS debits and credits is what the company will pay as ICMS tax.

The next diagram shows an ICMS tax calculation example, where the company also has a 55% tax benefit, which is a discount on the ICMS it needs to pay.

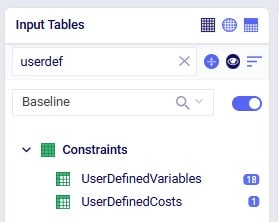
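The debit/credit logic described above can also be written out as a short Python sketch. The 55% benefit comes from the example; the taxable values and the 18% and 12% rates used in the usage note are hypothetical and are not taken from the diagram or the model. The function names and the `Flow` type are likewise assumptions, and the sketch assumes the balance (debits minus credits) is positive.

```python
from typing import List, Tuple

Flow = Tuple[float, float]  # (taxable value of the flow, ICMS rate as a fraction)


def icms_total(flows: List[Flow]) -> float:
    """ICMS contained in a set of flows: value x rate, summed over all flows."""
    return sum(value * rate for value, rate in flows)


def icms_payable(sales: List[Flow], purchases: List[Flow], benefit: float = 0.55) -> float:
    """ICMS due after the tax benefit.

    Debits come from sales (outbound flows), credits from purchases and
    transfers (inbound flows); the benefit is a discount on the balance.
    """
    balance = icms_total(sales) - icms_total(purchases)
    return balance * (1.0 - benefit)
```

For example, with hypothetical sales of 1,000 at an 18% rate and purchases of 1,000 at a 12% rate, the debit is 180, the credit is 120, and the balance is 60. With the 55% benefit, `icms_payable([(1000.0, 0.18)], [(1000.0, 0.12)])` comes to roughly 27.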

In order to include ICMS tax benefits in a model, we need to be able to calculate ICMS debits and credits based on the amount of flow between locations in different states for both national and imported products. As different states and different products can have different ICMS rates, we need to define these individual flow lanes as variables and apply the appropriate rate to each. This can be done by utilizing the User Defined Variables and User Defined Costs input tables, which can be found in the “Constraints” section of the Cosmic Frog input tables, shown in the screenshot below (here the user entered the search term “userdef” to show only these 2 tables):

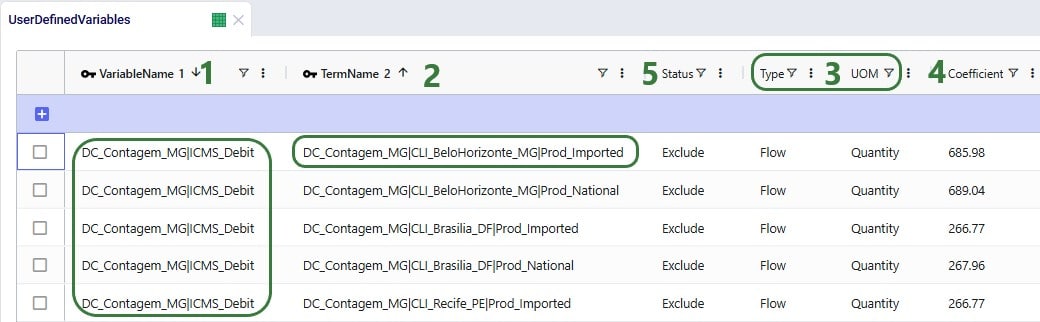

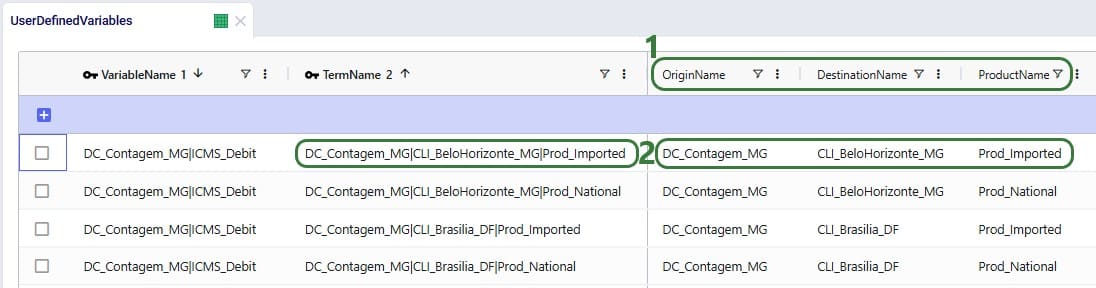

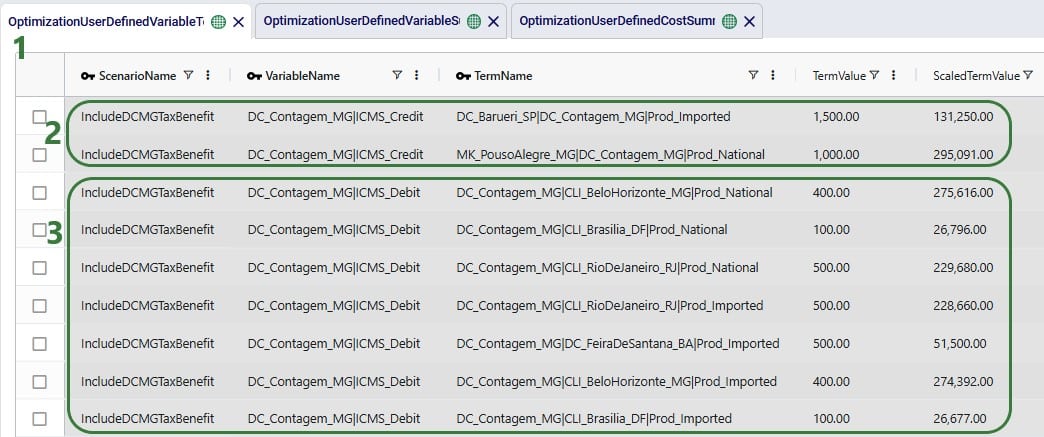

In the User Defined Variables table, we will define 3 variables related to DC_Contagem_MG: one that represents the ICMS Debits, one that represents the ICMS Credits, and one that represents the ICMS Balance (= ICMS Debits – ICMS Credits) for this DC. The ICMS Debits and ICMS Credits variables have multiple terms that each represents a flow out of or a flow into the Contagem DC, respectively. Let us first look at the ICMS Debits variable:

Still looking at the same top records that define the DC_Contagem_MG|ICMS_Debit variable, but freezing the Variable Name and Term Name columns and scrolling right, we can see more of the columns in the User Defined Variables table:

Note that there are quite a few custom columns in this table (not shown in the screenshots; can be added through Grid > Table > Create Custom Column), which were used to calculate the ICMS rates outside of the model. These are helpful to keep in the model, should changes need to be made to the calculations.
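Conceptually, each of the ICMS variables described in this section is a sum of terms, where every term applies a rate to the flow on one lane. The Python sketch below mimics that structure outside of Cosmic Frog. The `Term` class, the lane names, and the coefficients are all hypothetical; the actual table columns and rates are those shown in the screenshots.

```python
from dataclasses import dataclass

@dataclass
class Term:
    name: str           # label for one flow lane (hypothetical)
    coefficient: float  # assumed ICMS amount per unit of flow on that lane, in R$
    flow_units: float   # flow quantity on that lane

def variable_value(terms):
    """A variable's value is the sum of coefficient * flow over its terms."""
    return sum(t.coefficient * t.flow_units for t in terms)

# Hypothetical terms: debits come from flows out of the DC, credits from flows into it.
debit_terms = [Term("DC_to_Customer_A", 12.0, 500), Term("DC_to_Customer_B", 18.0, 300)]
credit_terms = [Term("Supplier_to_DC", 4.0, 600), Term("Factory_to_DC", 7.0, 200)]

icms_debit = variable_value(debit_terms)
icms_credit = variable_value(credit_terms)
icms_balance = icms_debit - icms_credit  # balance = debits - credits
print(icms_debit, icms_credit, icms_balance)
```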

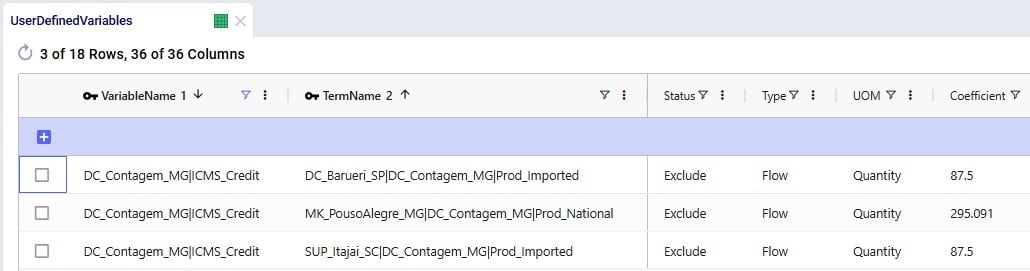

Next, we will have a look at the ICMS Credit variable, which is made up of 3 terms, where each term represents a possible supply/replenishment flow into the Contagem DC:

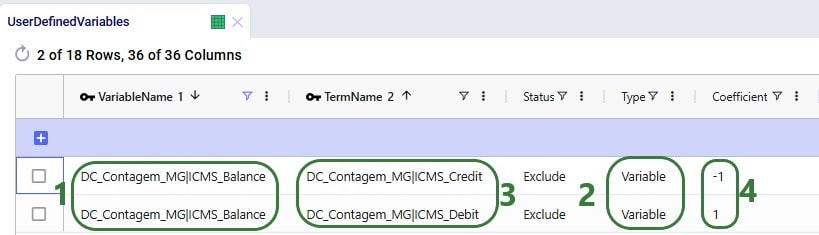

The last step on the User Defined Variables table is to combine the ICMS Credit and ICMS Debit variables to calculate the ICMS balance:

To finalize the setup, we need to add 1 record to the User Defined Costs table, where we will specify that the company has a 55% discount (tax incentive) for the ICMS it pays relating to the Contagem DC:

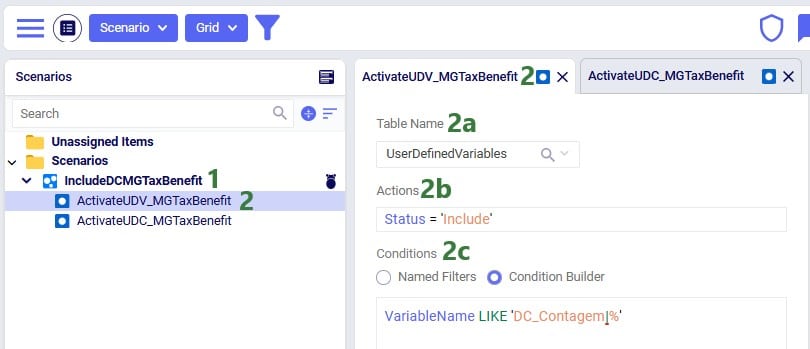

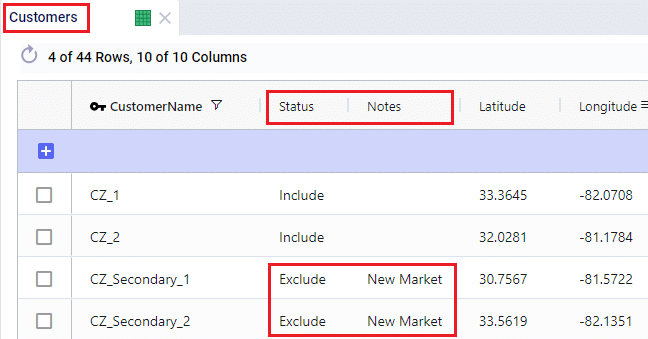

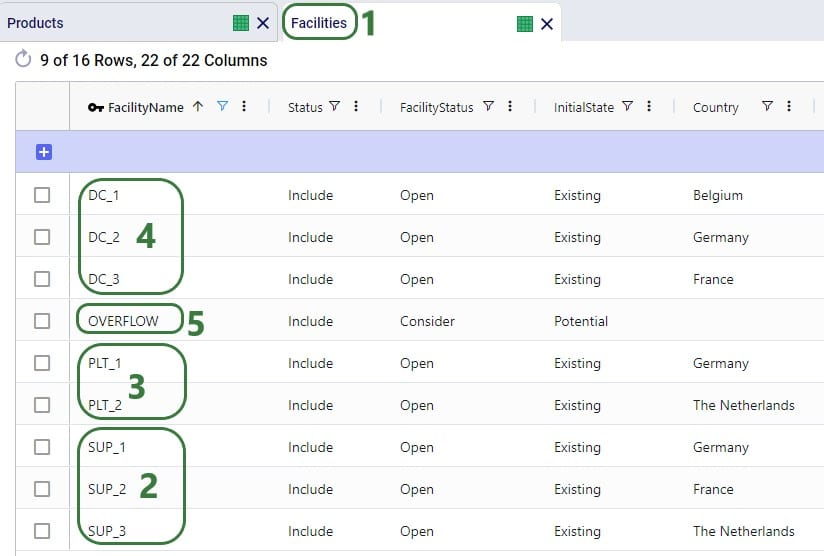

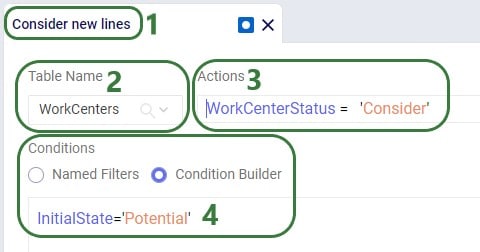

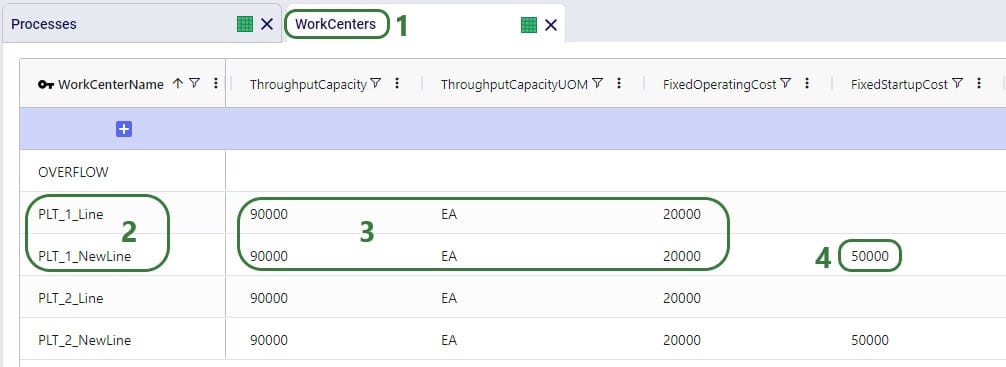

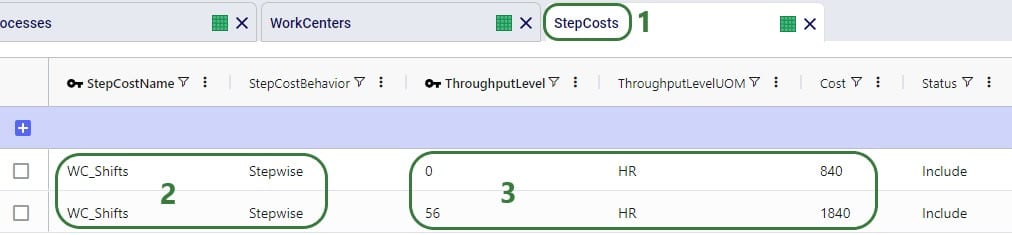

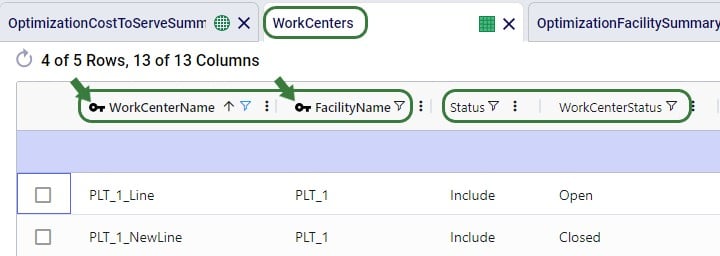

As mentioned in the previous section, all records in the User Defined Variables and User Defined Costs tables have their Status set to Exclude. This way, when the Baseline scenario is run, the ICMS tax incentive is not included, and the network will be optimized just based on the costs included in the model (in this case only transportation costs). We want to include the ICMS tax incentive in a scenario and then compare the outputs with the Baseline scenario. This “IncludeDCMGTaxBenefit” scenario is set up as follows:

Next, we have a look at the second scenario item that is part of this scenario:

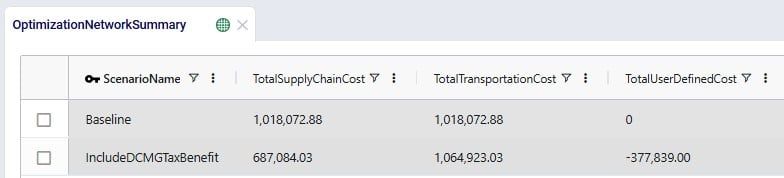

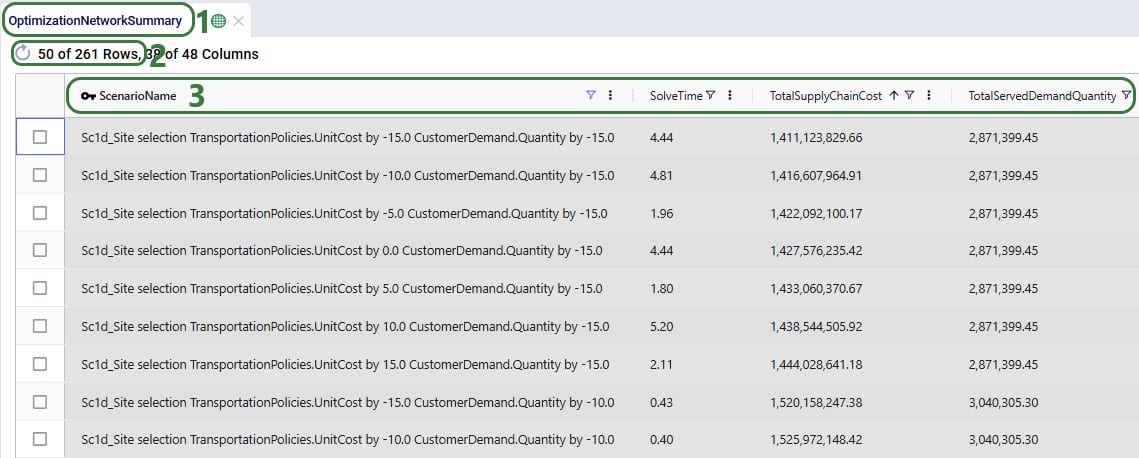

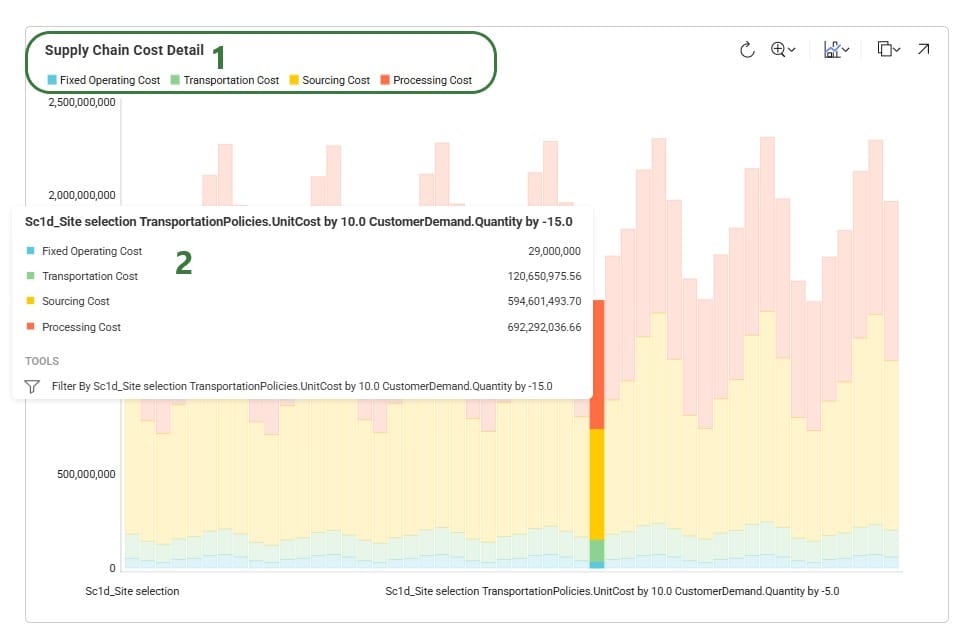

With the scenario set up, we run a network optimization (using the Neo engine) on both scenarios and then first look in the Optimization Network Summary output table:

Notice that the Baseline scenario, as expected, only contains transportation costs, while the IncludeDCMGTaxBenefit scenario also contains user defined costs, which represent the calculated ICMS tax benefit and have a negative value. So, overall, the IncludeDCMGTaxBenefit scenario has about R$ 331k lower total cost as compared to the Baseline scenario, even though the transportation costs are close to R$ 47k higher. Since the transportation costs are different between the 2 scenarios, we expect that some of the flows have changed.

There are 3 network optimization output tables that contain the outputs related to User Defined Variables and Costs:

We will first discuss the Optimization User Defined Variable Term Summary output table:

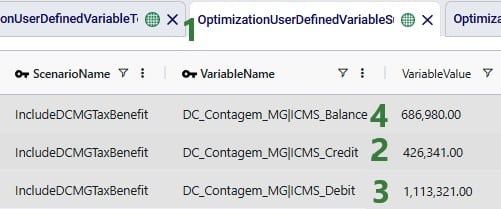

The Optimization User Defined Variable Summary output table contains the outputs at the variable level (i.e. the individual terms of the variables have been aggregated):

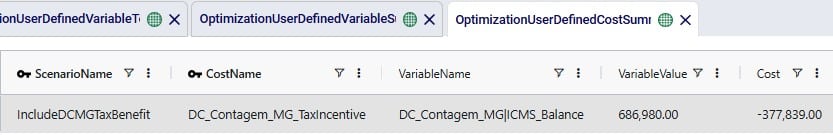

Finally, the Optimization User Defined Cost Summary output table shows the cost based on the 55% benefit that was set:

The DC_Contagem_MG_TaxIncentive benefit is calculated from the DC_Contagem_MG|ICMS_Balance variable, where the Variable Value of R$ 686,980 is multiplied by -0.55 to arrive at the Cost value of R$ -377,839.
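The arithmetic behind this cost can be checked with a few lines of Python. The variable names are ours, and the transportation cost increase is the rounded figure of about R$ 47k mentioned earlier, so the net change is only approximate.

```python
BENEFIT_RATE = 0.55  # 55% tax incentive set in the User Defined Costs table

icms_balance = 686_980  # Variable Value of DC_Contagem_MG|ICMS_Balance, in R$
tax_incentive_cost = round(icms_balance * -BENEFIT_RATE)
print(f"Tax incentive cost: R$ {tax_incentive_cost:,}")

# Approximate only: the text states transportation costs are "close to R$ 47k" higher.
approx_transport_increase = 47_000
net_change = tax_incentive_cost + approx_transport_increase
print(f"Approximate net change in total cost: R$ {net_change:,}")
```

The approximate net change of roughly R$ -331k matches the difference in total cost seen in the Optimization Network Summary output table.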

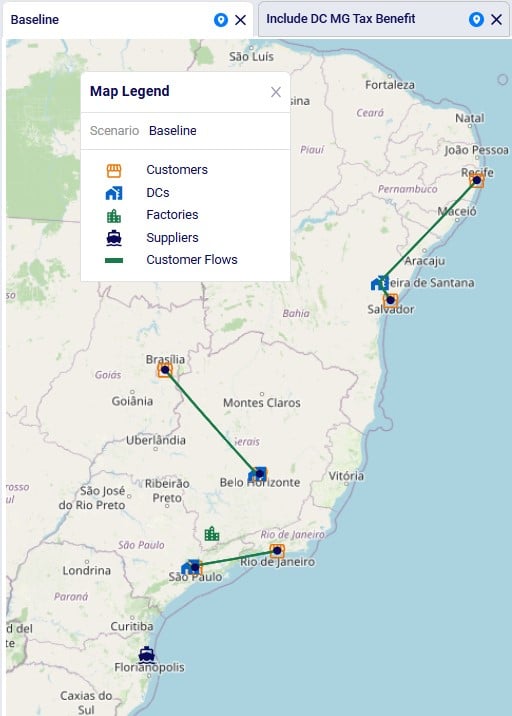

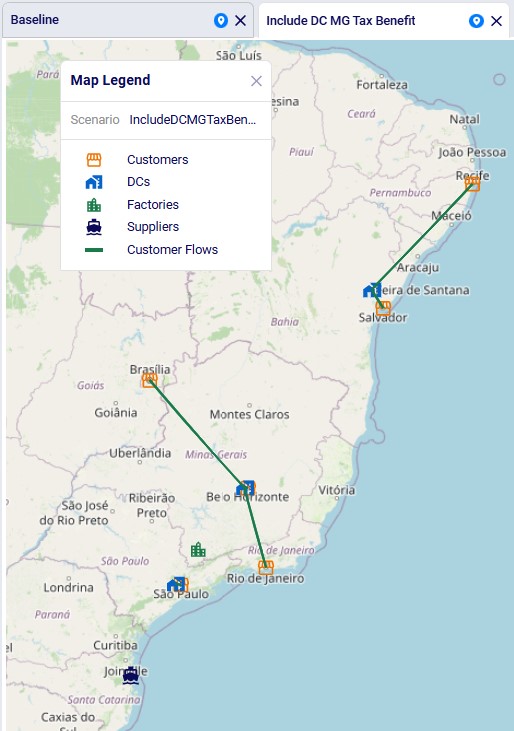

Now that we understand at a high level the cost impact of the ICMS tax incentive and the details of how this was calculated, let us look at more granular outputs, starting with looking at the flows between locations. Navigate to the Maps module within Cosmic Frog and open the maps named Baseline and Include DC MG Tax Benefit, which show outputs from the Baseline and IncludeDCMGTaxBenefit scenarios, respectively. The next 2 screenshots show the flows from DCs to customer locations: Baseline flows in the top screenshot and scenario “Include DC MG Tax Benefit” flows in the bottom screenshot:

We see that in the Baseline the customer in Rio de Janeiro is served by the DC in Sao Paulo. This changes in the scenario where the tax benefit is included: now the Rio de Janeiro customer is served by the Contagem DC (located close to Belo Horizonte). The other customer fulfillment flows are the same between the 2 scenarios.

This model also has 2 custom dashboards set up in the Analytics module; the 1. Scenarios Overview dashboard contains 2 graphs:

This Summary graph shows the cost buckets for each scenario as a bar chart. As discussed when looking at the Optimization Network Summary output table, the IncludeDCMGTaxBenefit scenario has an overall lower cost due to the tax benefit, which offsets the increased transportation costs as compared to the Baseline scenario.

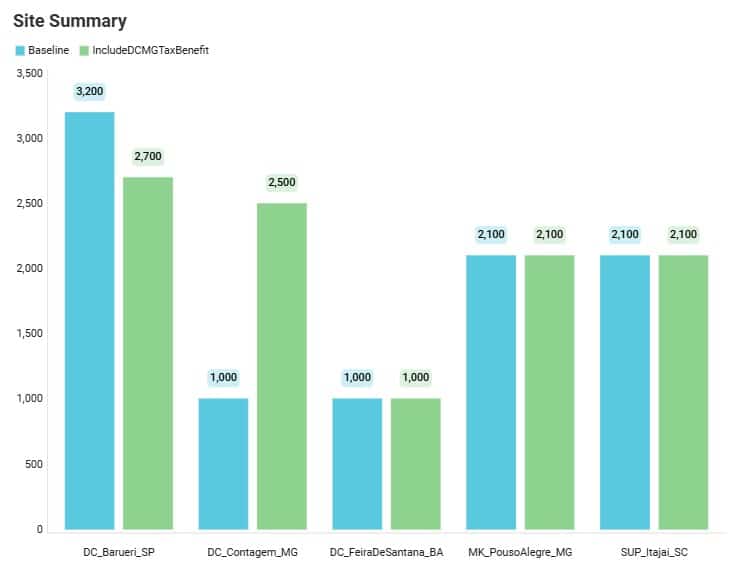

This Site Summary bar chart shows the total outbound quantity for each DC / Factory / Supplier by scenario. We see that the outbound flow for the DC in Barueri is reduced by 500 units in the IncludeDCMGTaxBenefit scenario as compared to the Baseline scenario, whereas the Contagem DC has an increased outbound flow, from 1,000 to 2,500 units. We can examine these shifts in further detail in the second custom dashboard named 2. Outbound Flows by Site, as shown in the next 2 screenshots:

This first screenshot of the dashboard shows the amount of flow from the 3 DCs and the factory to the 6 customer locations. As we already noticed on the map, the only shift here is that the Rio de Janeiro customer is served by the Barueri DC in the Baseline scenario and this changes to it being served by the Contagem DC in the IncludeDCMGTaxBenefit scenario.

Scrolling further right in this table, we see the replenishment flows from the 3 DCs and the Factory to the 3 DCs. There are some more changes here where we see that the flow from the factory to the Barueri DC is reduced by 500 units in the scenario, whereas the flow from the factory to the Contagem DC is increased by 500 units. In the Baseline, the Barueri DC transferred a total of 1,000 units to the other 2 DCs (500 each to the Contagem and Feira de Santana DCs), and the other 2 DCs did not make DC transfers. In the Tax Benefit scenario, the Barueri DC only transfers to the Contagem DC, but now for 1,500 units. We also see that the Contagem DC now transfers 500 units to the Feira de Santana DC, whereas it did not make any transfers in the Baseline scenario.
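To make the DC-to-DC transfer shifts described above easier to see, the following Python sketch lists the change per lane. The quantities are the ones stated in the text; the dictionary layout and the `flow_changes` helper are just an illustration and not part of Cosmic Frog.

```python
# DC-to-DC transfer quantities (units) as described in the text above.
baseline = {("Barueri", "Contagem"): 500, ("Barueri", "Feira de Santana"): 500}
tax_benefit = {("Barueri", "Contagem"): 1500, ("Contagem", "Feira de Santana"): 500}

def flow_changes(before, after):
    """Return {lane: after - before} for every lane whose quantity changed."""
    lanes = sorted(set(before) | set(after))
    return {lane: after.get(lane, 0) - before.get(lane, 0)
            for lane in lanes
            if after.get(lane, 0) != before.get(lane, 0)}

changes = flow_changes(baseline, tax_benefit)
for (origin, destination), delta in changes.items():
    print(f"{origin} -> {destination}: {delta:+d}")
```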

We hope this gives you a good idea of how taxes and tax incentives can be considered in Cosmic Frog models. Give it a go and let us know of any feedback and/or questions!

Leapfrog helps Cosmic Frog users explore and use their model data via natural language. View data, make changes, create & run scenarios, analyze outputs, learn all about the Anura schema that underlies Cosmic Frog models, and a whole lot more!

Leapfrog combines an extensive knowledge of PostgreSQL with the complete knowledge of Optilogic’s Anura data schema, and all the natural language capabilities of today’s advanced general purpose LLMs.

For a high-level overview and short video introducing Leapfrog, please see the Leapfrog landing page on Optilogic’s website.

In this documentation, we will first get users oriented on where to find Leapfrog and how to interact with it. In the section after, Leapfrog’s capabilities will be listed out with examples of each. Next, the Tips & Tricks section will give users helpful pointers so they can get the most out of Leapfrog. Finally, we will step through the process of building, running, and analyzing a Cosmic Frog model start to finish by only using Leapfrog!

Dive in if you’re ready to take the leap!

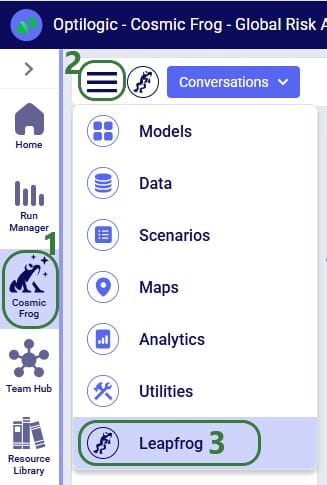

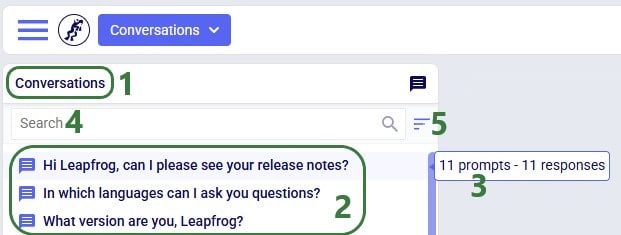

Start using Leapfrog by opening the module within Cosmic Frog:

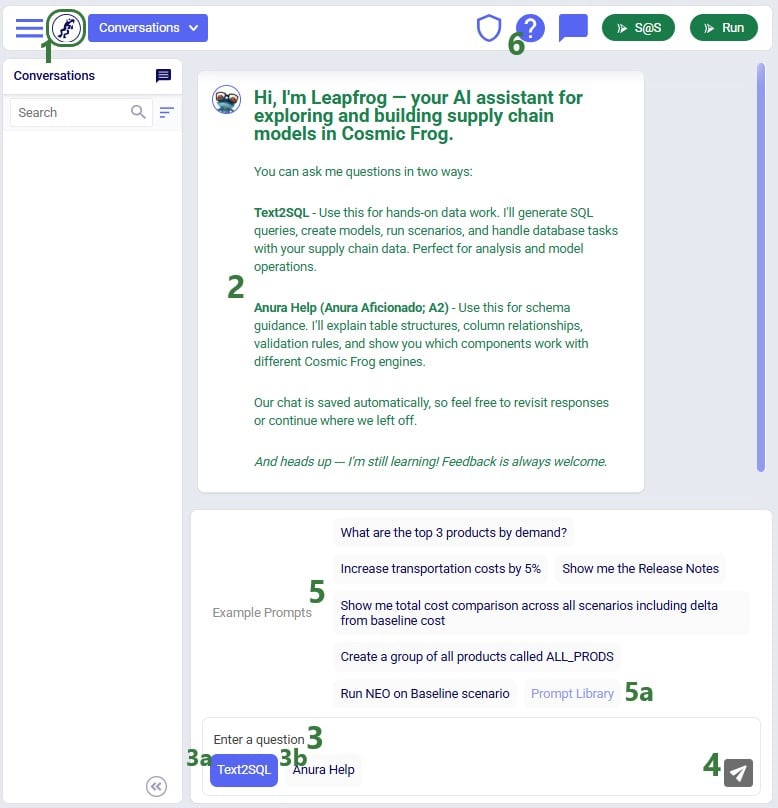

Once the Leapfrog module is open, users’ screens will look similar to the following screenshot:

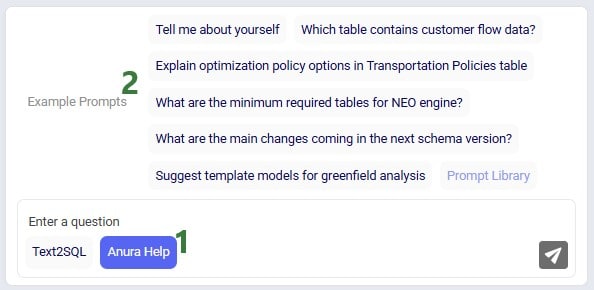

The example prompts when using the Anura Help LLM are shown here:

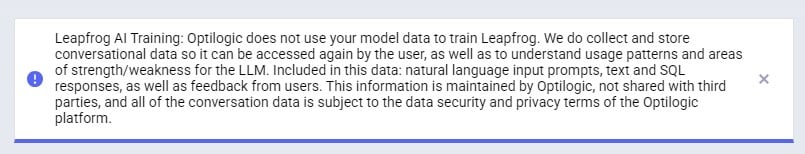

When first starting to use Leapfrog, users will also see the Privacy and Data Security statement, which reads as follows:

“Leapfrog AI Training: Optilogic does not use your model data to train Leapfrog. We do collect and store conversational data so it can be accessed again by the user, as well as to understand usage patterns and areas of strength/weakness for the LLM. Included in this data: natural language input prompts, text and SQL responses, as well as feedback from users. This information is maintained by Optilogic, not shared with third parties, and all of the conversation data is subject to the data security and privacy terms of the Optilogic platform.”

This message will stay visible within Leapfrog whenever it is being used, unless the user clicks on the grey cross button on the right to close the message. Once closed, the message will not be shown again while using Leapfrog.

Conversation history is stored on the platform at the user level - not in the model database - so it does not get shared when a model is shared. Note that if you are working in a Team rather than in your My Account (see documentation on Teams on the Optilogic platform here), the Leapfrog conversations you are creating will be available to the other team members when they are working with the same model.

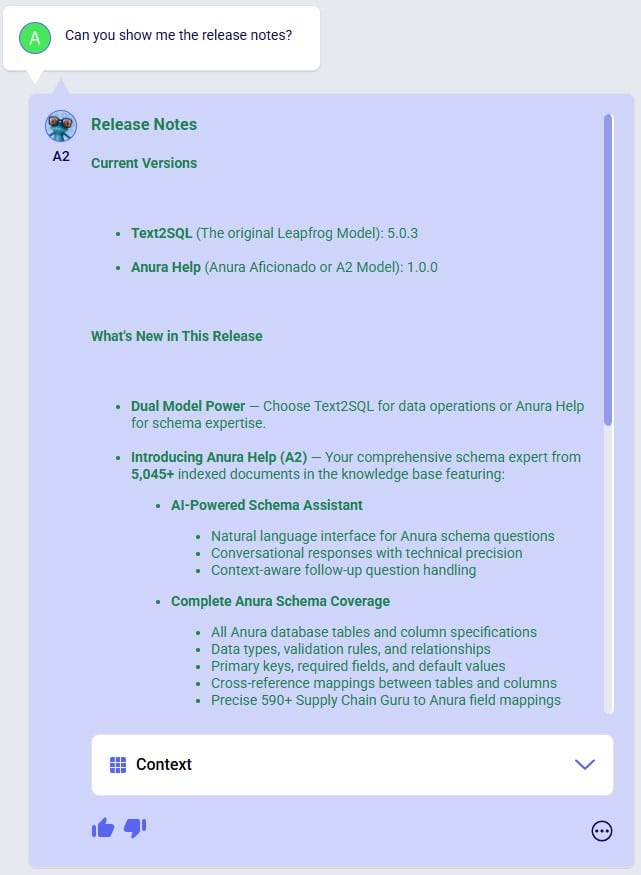

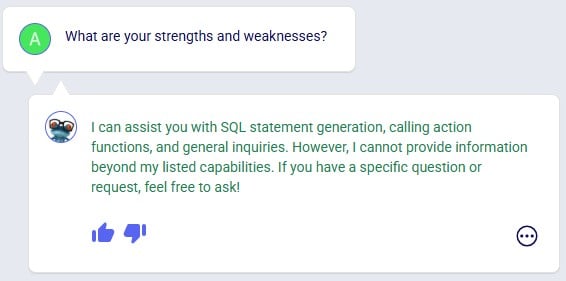

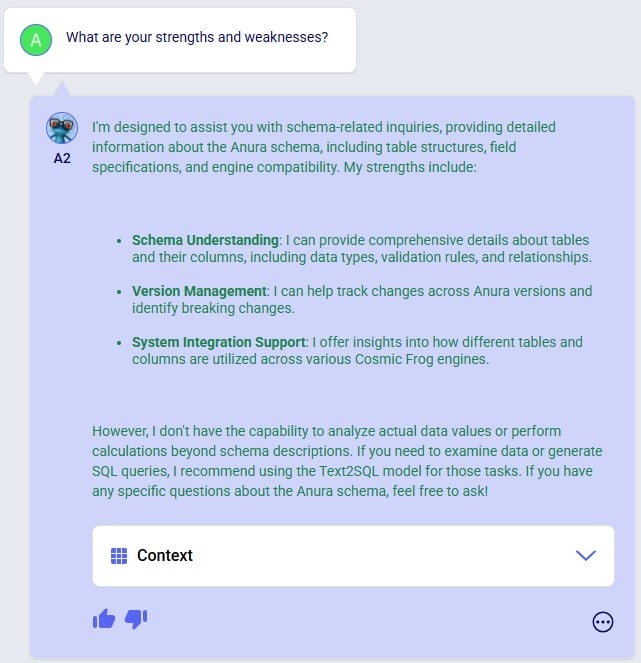

As mentioned in the previous section, Leapfrog currently makes use of 2 large language models (LLMs): Text2SQL and Anura Help (also referred to as Anura Aficionado or A2). They will be explained in some more detail here. There is also an appendix to this documentation where for a few example personas Leapfrog questions and responses are listed, which showcases how some users may predominantly use one model, while others may switch back and forth between them. Of course, when unsure, users can try a specific prompt using both LLMs to see which provides the most helpful response.

Please note that in the future, users will not need to indicate which LLM they want to run a prompt against, as Leapfrog will recognize which one is most suitable based on the prompt.

The Text2SQL LLM combines extensive knowledge of PostgreSQL with Optilogic’s Anura data schema, and all the natural language capabilities of today’s advanced general purpose LLMs. It has been further fine-tuned on a large set of prompt-response pairs hand-crafted by supply chain modeling experts. This allows the Text2SQL model to generate SQL queries from natural language prompts.

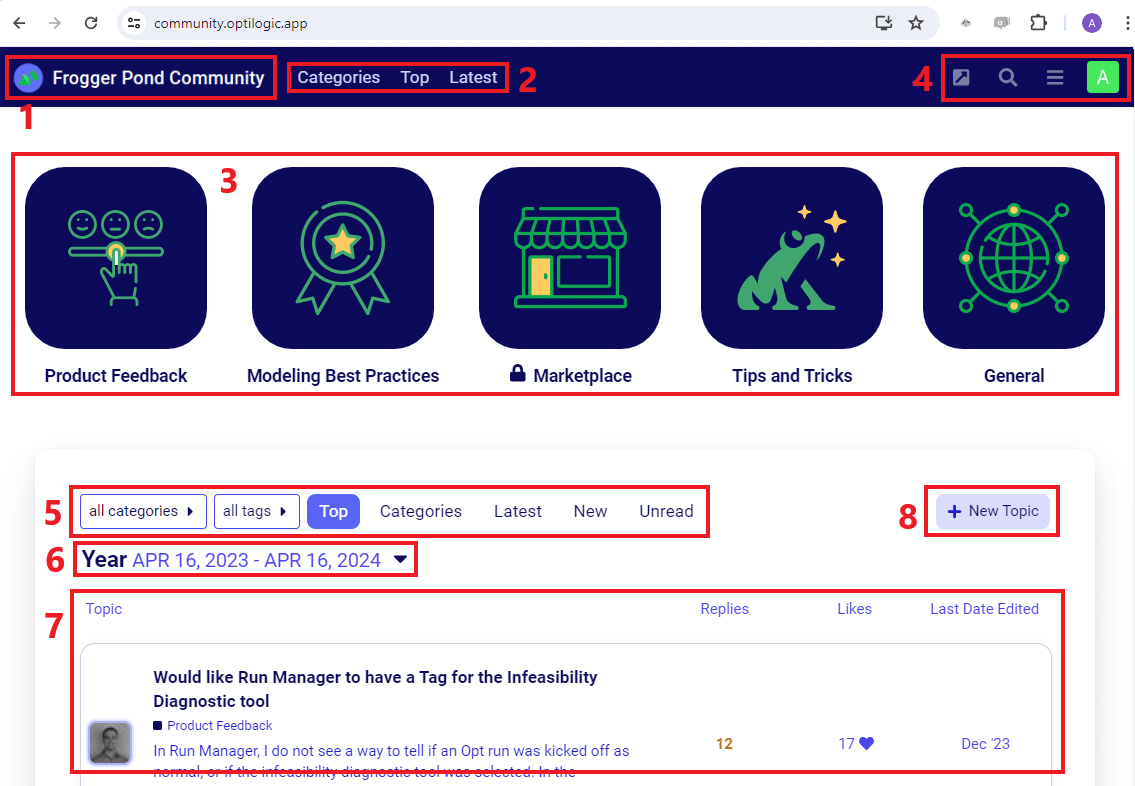

Prompts for which it is best to use the Text2SQL model often imply an action: “Show me X”, “Add Y”, “Delete Z”, “Run scenario A”, “Create B”, etc. See also the example prompts listed when starting a new conversation and those in the Prompt Library on the Frogger Pond community.

Leapfrog responses using this model are usually actionable: run the returned SQL query to add / edit / delete data, create a scenario or model, run a scenario, geocode locations, etc.

A full list of the capabilities of both LLMs is covered in the section “Leapfrog Capabilities” further below.

Anura Help (also referred to as Anura Aficionado or A2) is a specialized assistant that leverages advanced natural language processing to help users navigate and understand the Anura schema within Optilogic's Cosmic Frog application. The Anura schema is the foundational framework powering Cosmic Frog's optimization, simulation, and risk assessment capabilities. Anura Help eliminates traditional barriers to schema understanding by providing immediate, authoritative guidance for supply chain modelers, developers, and analysts.

Anura Help’s architecture uses the Retrieval Augmented Generation (RAG) approach: based on the natural language prompt, first the most relevant documents of those in its knowledge base are retrieved (e.g. schema details or engine awareness details). Next, it uses them to generate a natural language response.
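The retrieve-then-generate flow can be illustrated with a toy Python sketch. This is not how Anura Help is implemented: the knowledge base entries are invented placeholders, simple keyword overlap stands in for the real retrieval step, and the `generate` function only stitches text together where an LLM would normally be called.

```python
# Hypothetical mini knowledge base; the real documents are not described in this article.
KNOWLEDGE_BASE = {
    "customerdemand.quantity": "Quantity is the amount of product demanded by the customer.",
    "facilities.status": "Status controls whether a facility is included in the model run.",
    "engines.neo": "Neo is the network optimization engine.",
}
STOPWORDS = {"a", "the", "is", "of", "by", "for", "in", "what", "does"}

def keywords(text):
    """Lowercase the text, drop periods, and remove stopwords."""
    return set(text.lower().replace(".", "").split()) - STOPWORDS

def retrieve(prompt, top_k=2):
    """Step 1: rank documents by the number of keywords they share with the prompt."""
    scored = [(len(keywords(prompt) & keywords(text)), doc_id)
              for doc_id, text in KNOWLEDGE_BASE.items()]
    scored.sort(reverse=True)
    return [doc_id for overlap, doc_id in scored[:top_k] if overlap > 0]

def generate(prompt, context_ids):
    """Step 2: stand-in for the LLM call, answering from the retrieved context only."""
    context = " ".join(KNOWLEDGE_BASE[doc_id] for doc_id in context_ids)
    return f"Based on {len(context_ids)} retrieved document(s): {context}"

question = "what does the quantity field mean for a customer"
context_ids = retrieve(question)
print(generate(question, context_ids))
```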

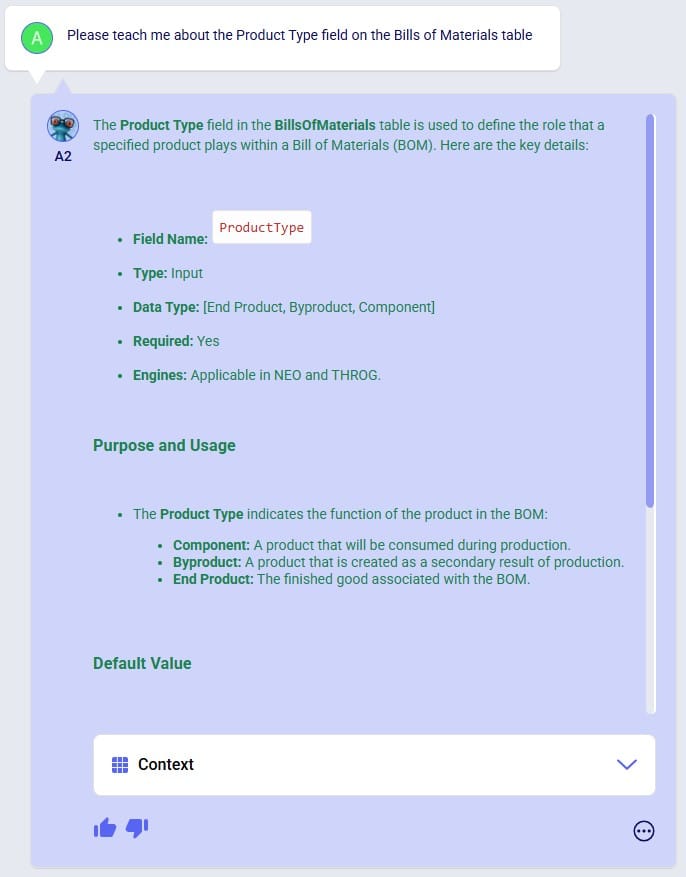

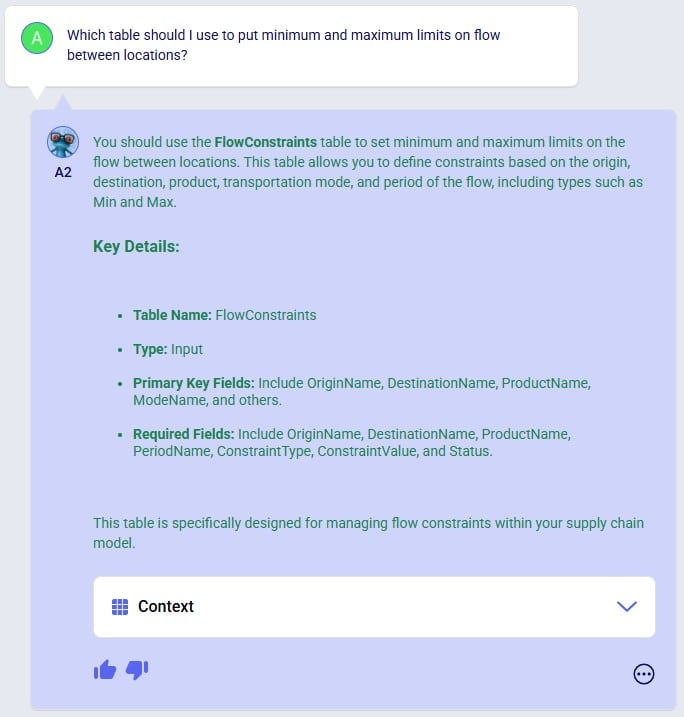

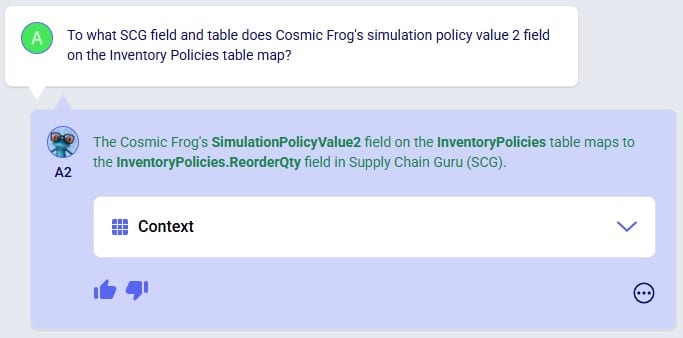

Use the Anura Help model when wanting to learn about specific fields, tables or engines in Cosmic Frog. Its core capabilities include:

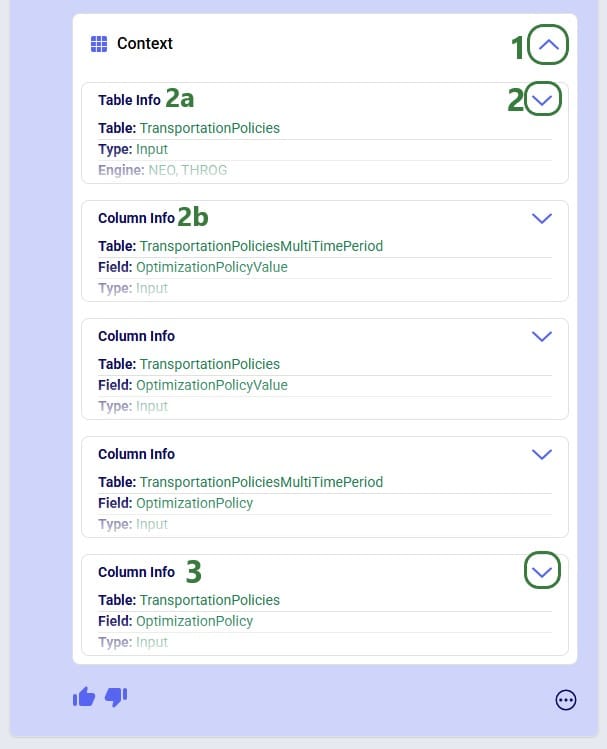

Responses from Leapfrog when using the Anura Help model are text-based and generated from retrieved documents shown in the context section. This context can for example be of the category “column info” where all details for a specific field are listed.

A full list of the capabilities of both LLMs is covered in the section “Leapfrog Capabilities” further below.

The following list compares the 2 LLMs available in Leapfrog today:

Depending on the type of question, Leapfrog’s response to it can take different forms: text, links, SQL queries, data grids, and options to create models, scenarios, scenario items, groups, run scenarios, or geocode locations. We will look at several examples of questions that result in these different types of responses in this section. This is not an exhaustive list; the next section “Leapfrog Capabilities” will go through the types of prompt-response pairs Leapfrog is capable of today.

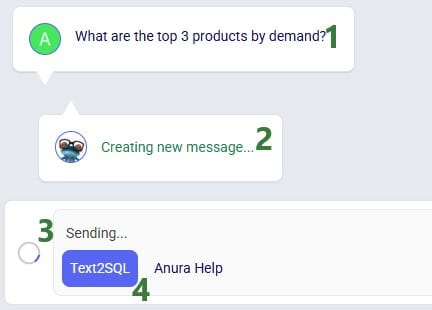

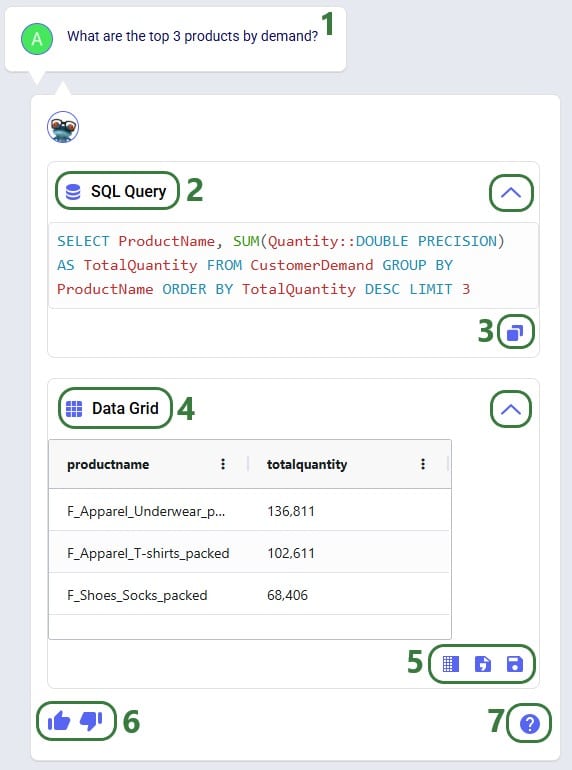

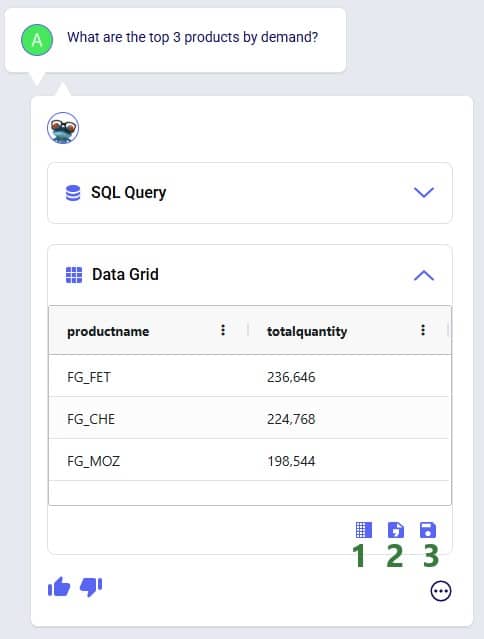

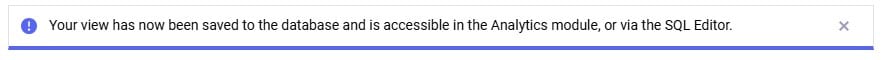

For our first question, we used the first Text2SQL example prompt “What are the top 3 products by demand?” by clicking on it. After submitting the prompt, we see that Leapfrog is busy formulating a response:

And Leapfrog’s response to the prompt is as follows:

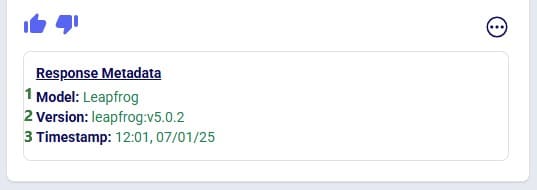

The metadata included here are:

Clicking on the icon with 3 dots again will collapse the response metadata.

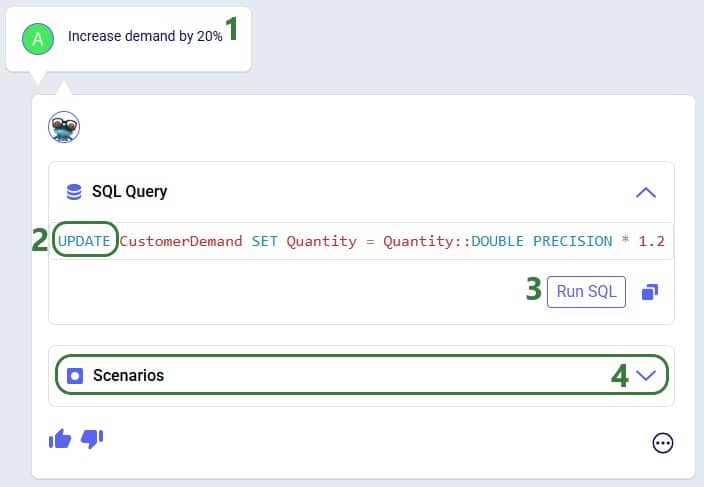

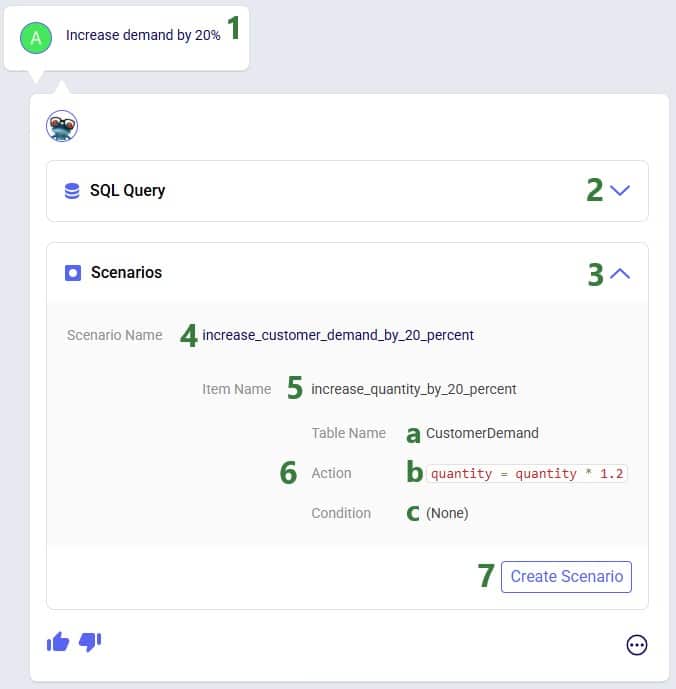

This first prompt asked a question about the input data contained in the Cosmic Frog model. Let us now look at a slightly different type of prompt, which asks to change model input:

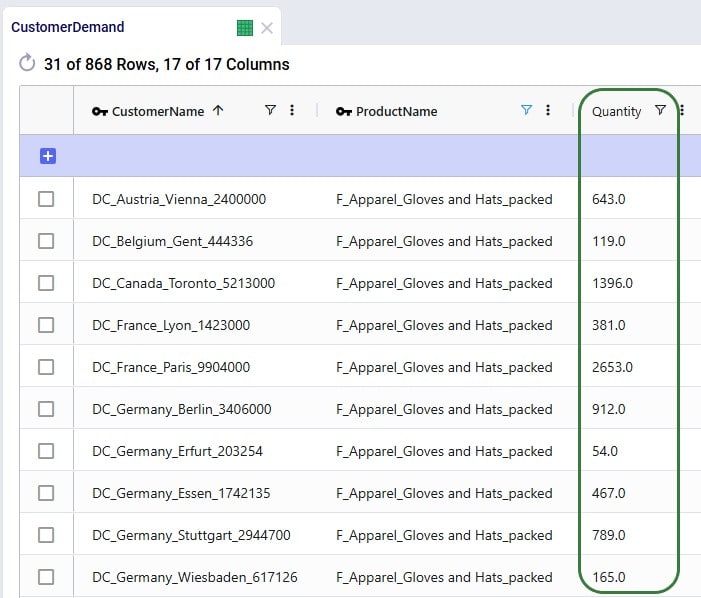

We are going to run the SQL query of the above response to our “Increase demand by 20%” prompt. Before doing so, let’s review a subset of 10 records of the Customer Demand input table (under the Data Module, in the Input Tables section):

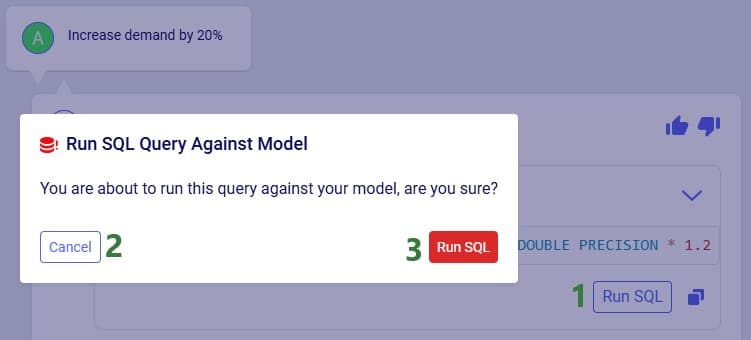

Next, we will run the SQL query:

After clicking the Run SQL button at the bottom of the SQL Query section in Leapfrog’s response, it becomes greyed out so it will not accidentally be run again. Hovering over the button also shows text indicating the query was already run:

Note that closing and reopening the model or refreshing the browser will revert the Run SQL button’s state so it is clickable again.

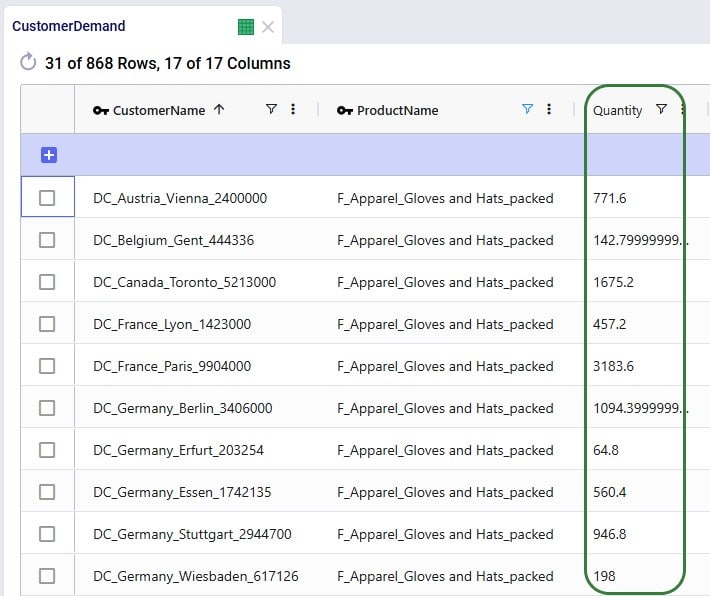

Opening the Customer Demand table again and looking at the same 10 records, we see that the Quantity field has indeed been changed to its previous value multiplied by 1.2 (the first record’s value was 643, and 643 * 1.2 = 771.6, etc.):

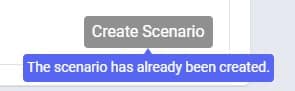
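To reproduce this kind of change outside of Cosmic Frog, the sketch below runs a comparable UPDATE statement against an in-memory SQLite database using Python. Model databases use PostgreSQL, and the exact SQL query Leapfrog returned is only shown in the screenshot, so the table layout, column names, and customer names here are assumptions for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical stand-in for the Customer Demand input table.
conn.execute("CREATE TABLE customerdemand (customername TEXT, productname TEXT, quantity REAL)")
conn.executemany(
    "INSERT INTO customerdemand VALUES (?, ?, ?)",
    [("CZ_001", "Product_1", 643), ("CZ_002", "Product_1", 100)],
)

# The kind of UPDATE a prompt like "Increase demand by 20%" could translate to.
conn.execute("UPDATE customerdemand SET quantity = quantity * 1.2")

quantities = [row[0] for row in conn.execute(
    "SELECT quantity FROM customerdemand ORDER BY customername")]
print([round(q, 1) for q in quantities])
```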

Running the SQL query to increase the demand by 20% directly in the master data worked fine as we just saw. However, if we do not want to change the master data, but rather want to increase the demand quantity as part of a scenario, this is possible too:

After navigating to the Scenarios module within our Cosmic Frog model, we can see the scenario and its item have been created:

Note that if desired, the scenario and scenario item names auto-generated by Leapfrog can be changed in the Scenarios module of Cosmic Frog: just select the scenario or item and then choose “Rename” from the Scenario drop-down list at the top.

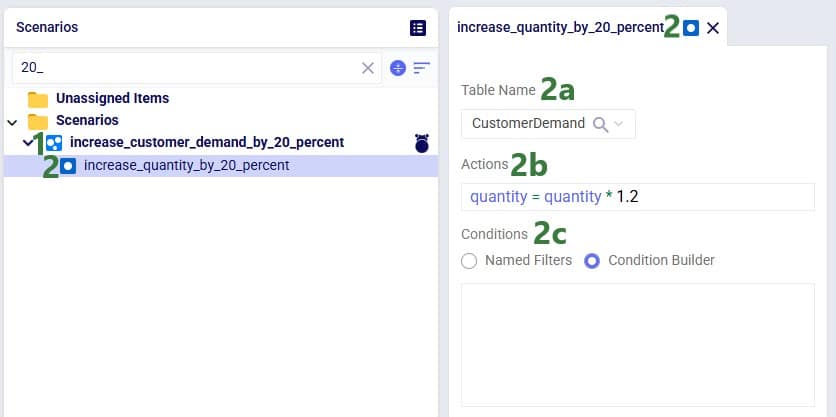

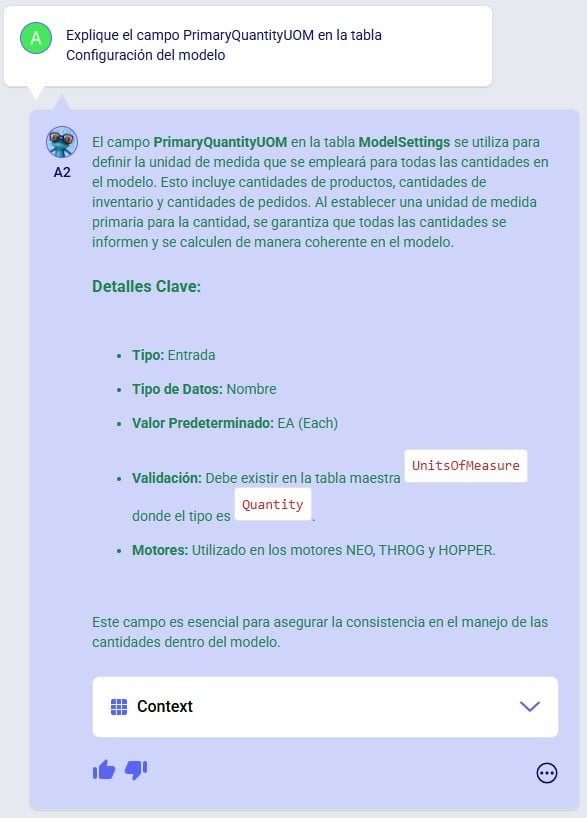

As a final example of a question & answer pair in this section, let us look at one where we use the Anura Help LLM, and Leapfrog responds with text plus context:

There is a lot of information listed here; we will explain the most commonly used information:

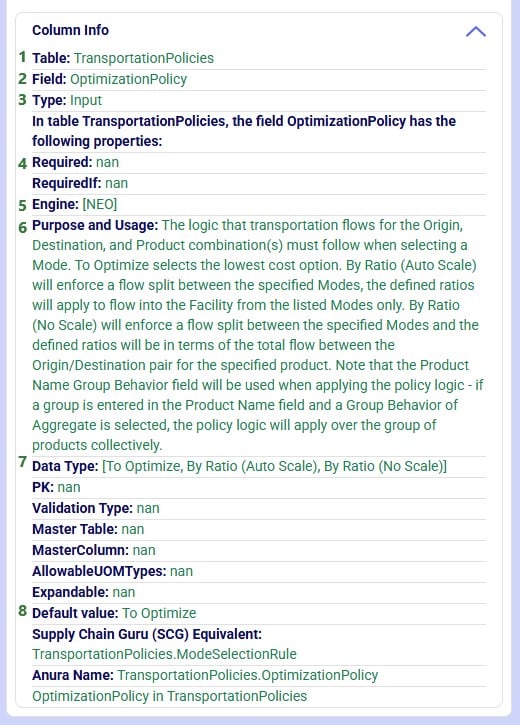

Prompts and their responses are organized into conversations in the Leapfrog module:

Users can organize their conversations with Leapfrog by using the options from the Conversations drop-down at the top of the Leapfrog module:

Users can rate Leapfrog responses by clicking on the thumbs up (like) and thumbs down (dislike) buttons and, optionally, providing additional feedback. This feedback is used to continuously improve Leapfrog. Giving a thumbs up to indicate the response is what you expected helps reinforce correct answers from Leapfrog. When a response is not what was expected or wrong, users can help improve Leapfrog’s underlying LLMs by giving the response a thumbs down. Especially thumbs down ratings & additional feedback will be reviewed so Leapfrog can learn and become more useful all the time.

When a response is not as expected, as was the case in the following screenshot, the user is encouraged to click the thumbs down button:

After clicking on the Send button, the detailed feedback is automatically added to the conversation:

The next screenshot shows an example where user gave Leapfrog’s response a thumbs up as it was what user expected. This feedback can then be used by Leapfrog to reinforce correct answers. User also had the option to provide detailed feedback again, using any of the following 4 optional tags: Showcase Example, Surprising, Fun, and Repeatable Use Case. In this example, user decided not to give detailed feedback and clicked on the Close button after the detailed feedback form came up:

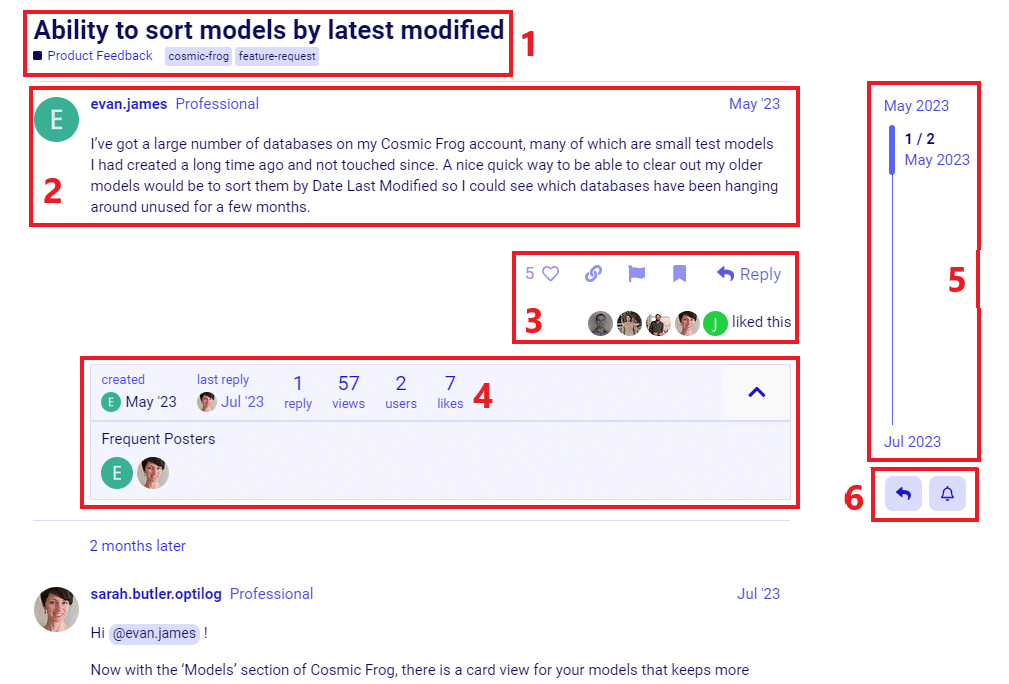

If you have any additional Leapfrog feedback (or questions) beyond what can be captured here, feel free to send an email to Leapfrog@Optilogic.com. You are also very welcome to ask questions, share your experiences, and provide feedback on Leapfrog in the Frogger Pond Community.

We will now go back to our first prompt “What are the top 3 products by demand” to explore some of the options users have when Data Grids are included in a Leapfrog response, which is the case when Leapfrog’s SQL Query response is a SELECT statement.

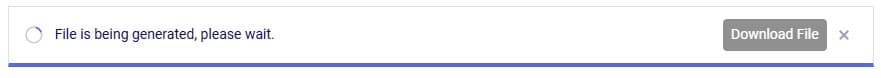

When clicking on the Download File button, a zip file with the name of the active Cosmic Frog model appended with an ID, is downloaded to the user’s Downloads folder. The zip contains:

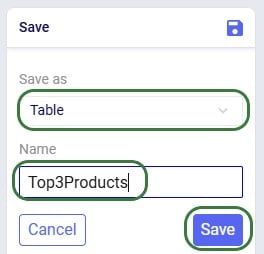

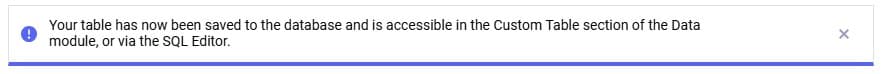

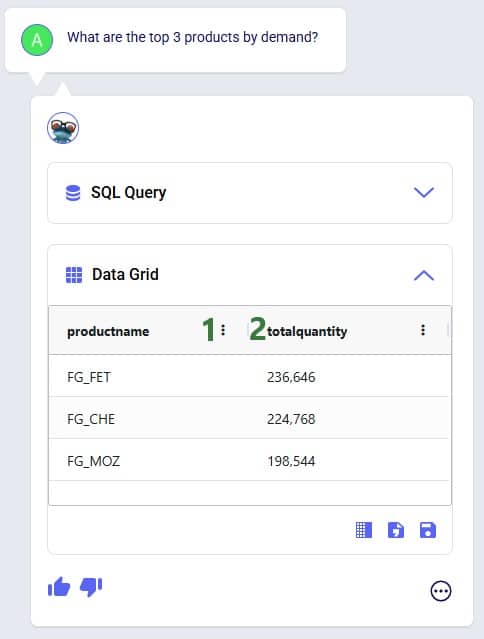

After clicking on Save, the following message appears beneath the Data Grid in Leapfrog’s response:

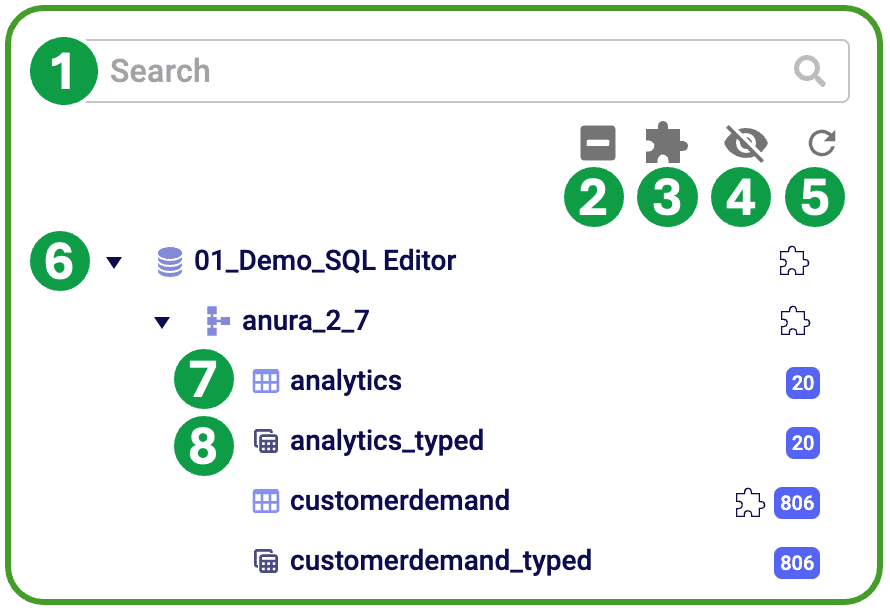

Looking in the Custom Tables section (#2 in screenshot below) of the Data module (#1 in screenshot below), we indeed see this newly created table named top3products (#3 in screenshot below) with the same contents as the Data Grid of the Leapfrog response:

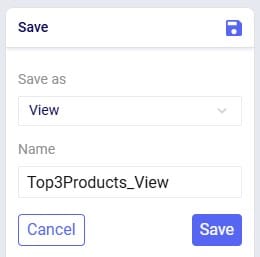

If we choose to save the Data Grid as a view instead of a table, it goes as follows:

We choose Save as View and give it the name of Top3Products_View. The message that comes up once the view is created reads as follows:

Going to the Analytics module in Cosmic Frog, choosing to add a new dashboard and in this new dashboard a new visualization, we can find the top3products_view in the Views section:

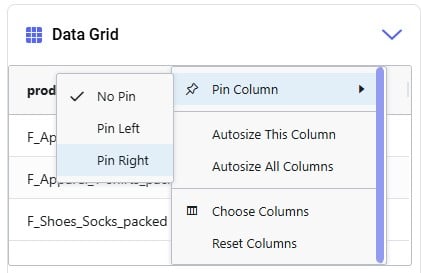
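In general SQL terms, a table stores a copy of the rows at the time it is created, whereas a view stores only the query and is re-evaluated each time it is read. The Python sketch below shows this difference with an in-memory SQLite database. The demand data is invented, and we assume, without having confirmed it, that Save as Table and Save as View follow these standard semantics.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customerdemand (productname TEXT, quantity REAL)")
conn.executemany("INSERT INTO customerdemand VALUES (?, ?)",
                 [("P1", 100), ("P2", 300), ("P3", 200), ("P4", 50)])

TOP3 = ("SELECT productname, SUM(quantity) AS total_demand FROM customerdemand "
        "GROUP BY productname ORDER BY total_demand DESC LIMIT 3")

conn.execute(f"CREATE TABLE top3products AS {TOP3}")      # stores a copy of the rows
conn.execute(f"CREATE VIEW top3products_view AS {TOP3}")  # stores only the query

# Change the underlying data after saving.
conn.execute("UPDATE customerdemand SET quantity = 1000 WHERE productname = 'P4'")

table_products = sorted(r[0] for r in conn.execute("SELECT productname FROM top3products"))
view_products = sorted(r[0] for r in conn.execute("SELECT productname FROM top3products_view"))
print("table:", table_products)  # unchanged copy
print("view: ", view_products)   # reflects the updated demand
```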

We will go back to the original Data Grid in Leapfrog’s response to explore a few more options the user has here:

Please note:

In this section we will list out what Leapfrog is capable of and give examples of each capability. These capabilities include (the LLM each capability applies to is listed in parentheses):

Each of these capabilities will be discussed in the following sections, where a brief description of each capability is given, several example prompts illustrating the capability are listed, and a few screenshots showing the capability are included as well. Please remember that many more example prompts can be found in the Prompt Library on the Frogger Pond community.

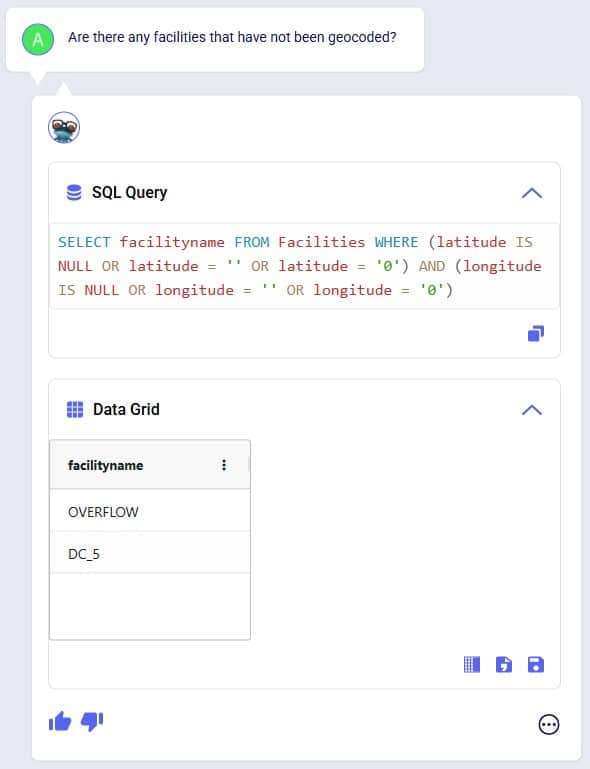

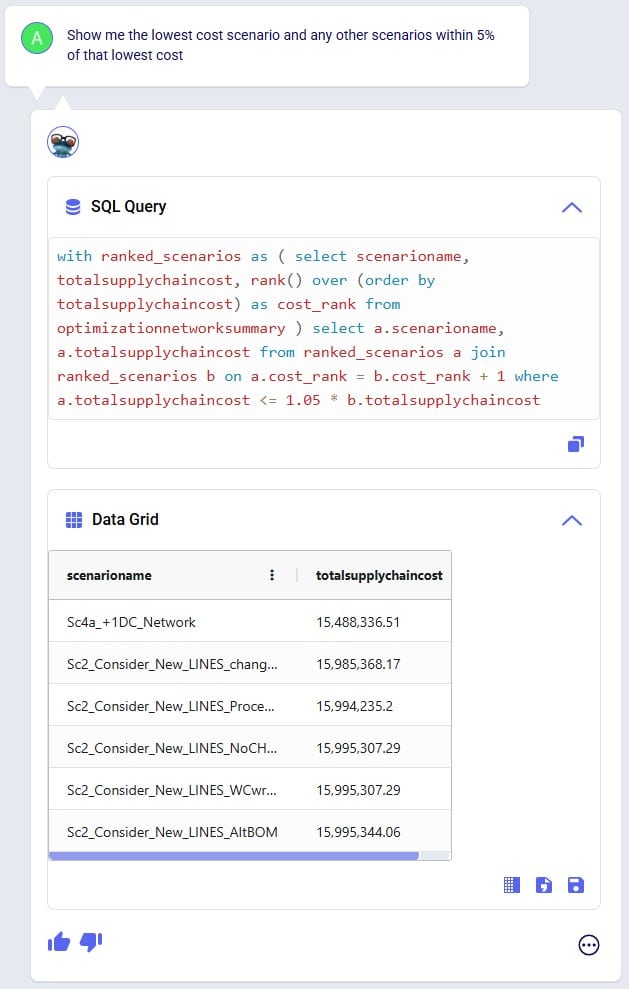

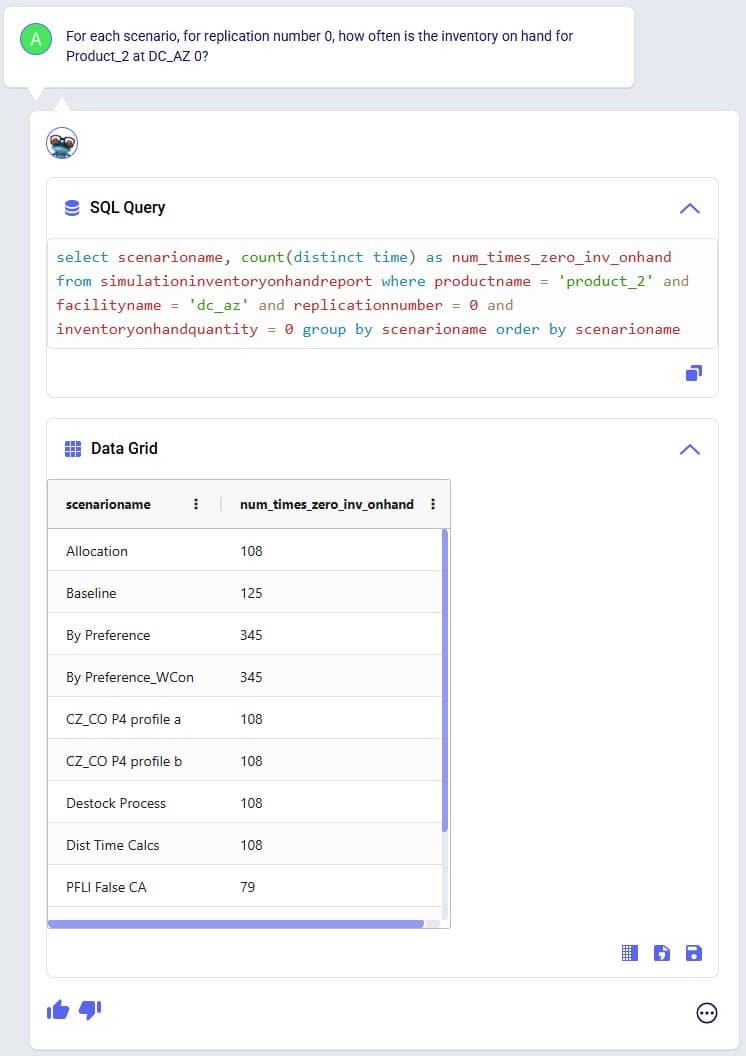

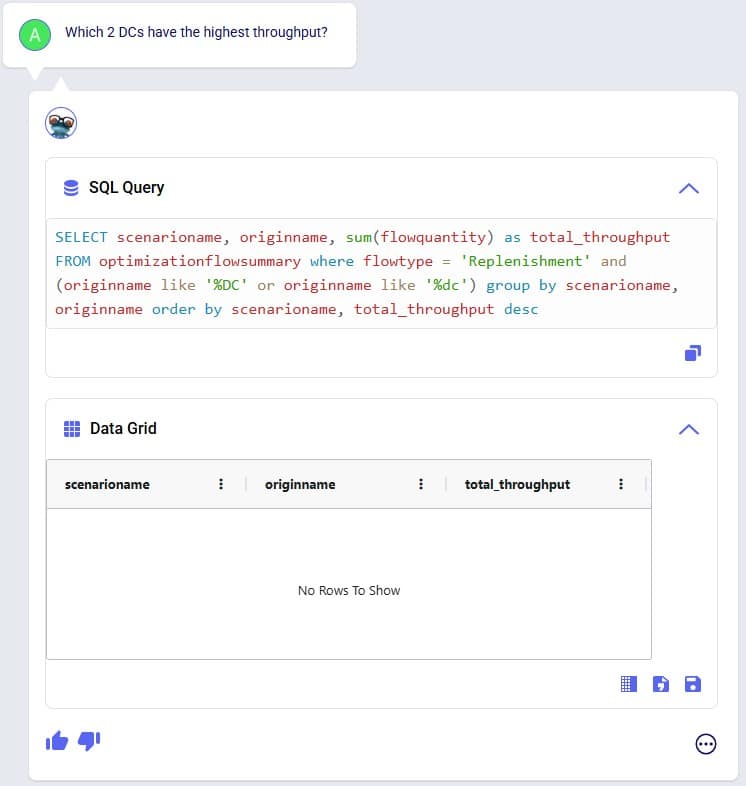

Interrogate input and output data using natural language. Use it to check completeness of input data, and to summarize input and/or output data. Leapfrog responds with SELECT Statements and shows a Data Grid preview as we have seen above. Export the data grid or save it as a table or view for further use, which has been covered above already too.
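As an example of what a completeness check can look like in SQL, the following Python sketch finds customers that have no demand records. It uses an in-memory SQLite database rather than a real model, and the table and column names are simplified assumptions, so the query Leapfrog generates for a similar prompt may look different.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customername TEXT);
    CREATE TABLE customerdemand (customername TEXT, productname TEXT, quantity REAL);
    INSERT INTO customers VALUES ('CZ_001'), ('CZ_002'), ('CZ_003');
    INSERT INTO customerdemand VALUES ('CZ_001', 'P1', 100), ('CZ_003', 'P1', 250);
""")

# Completeness check: which customers have no demand records?
missing = conn.execute("""
    SELECT c.customername
    FROM customers AS c
    LEFT JOIN customerdemand AS d ON d.customername = c.customername
    WHERE d.customername IS NULL
    ORDER BY c.customername
""").fetchall()
print(missing)
```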

Example prompts:

The following 3 screenshots show examples of checking input data (first screenshot), and interrogating output data (second and third screenshot):

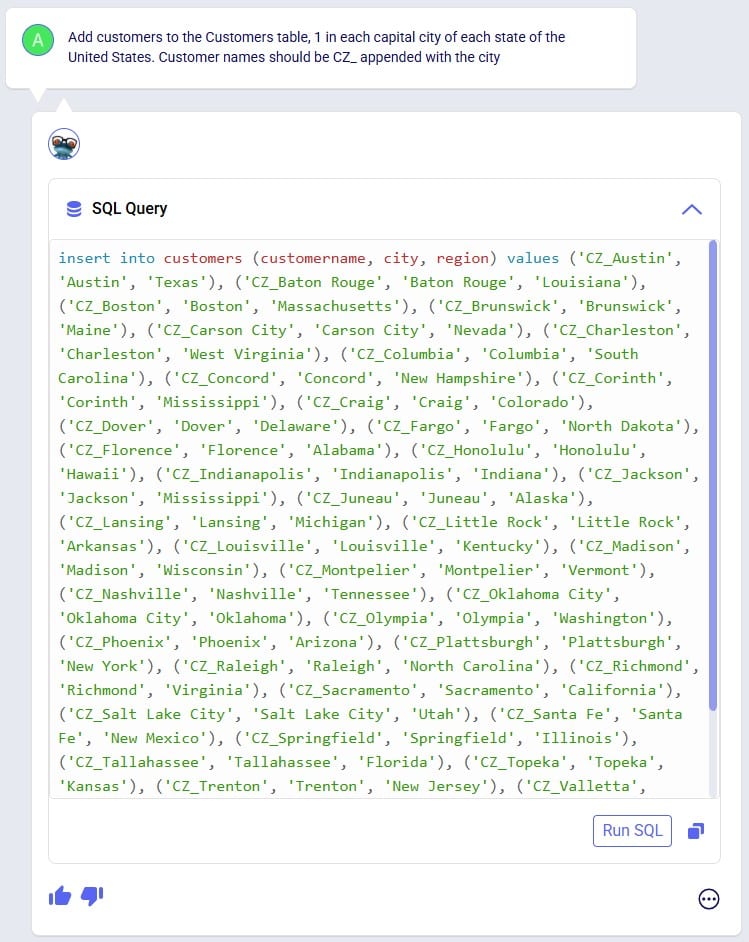

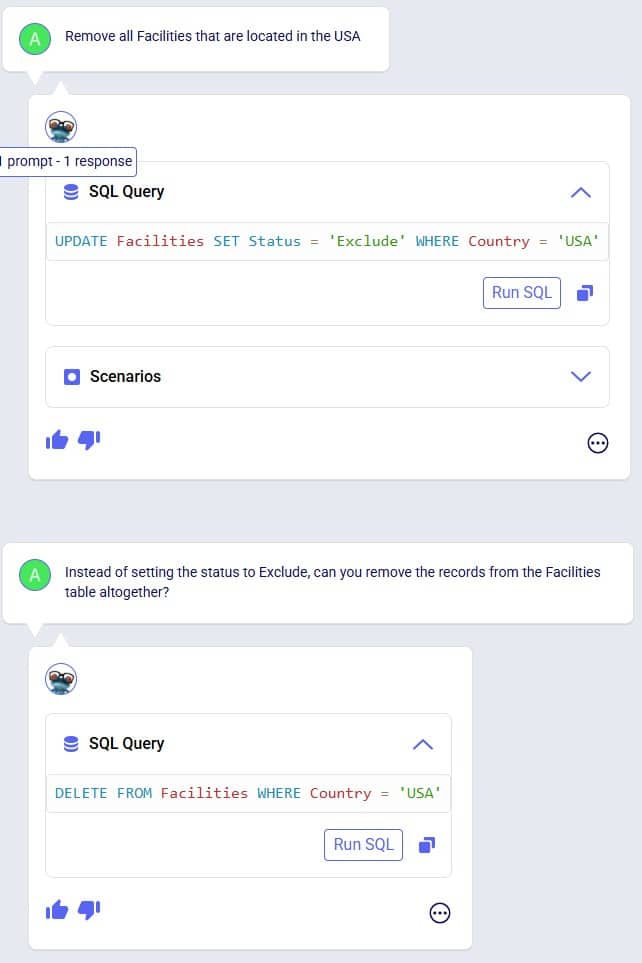

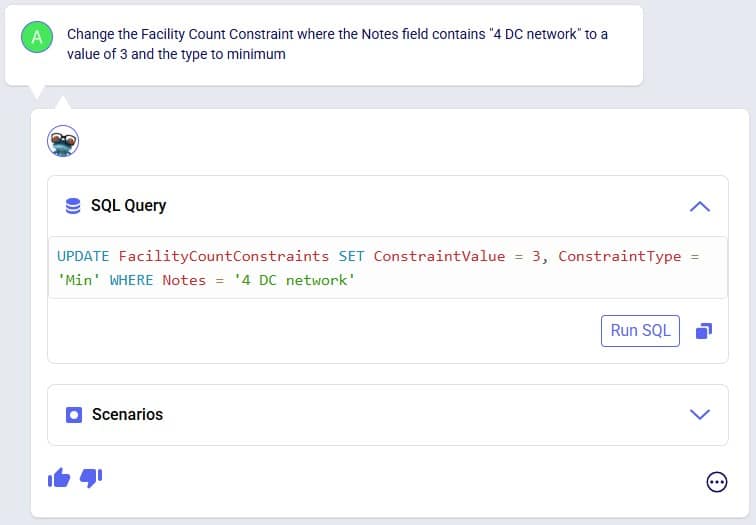

Tell Leapfrog what you want to edit in the input data of your Cosmic Frog model, and it will respond with UPDATE, INSERT, and DELETE SQL Statements. Users can opt to run these SQL Queries to permanently make the change in the master input data. For UPDATE SQL Queries, Leapfrog’s response will also include the option to create a scenario and scenario item that will make the change, which we will focus on in the next section.

Example prompts:

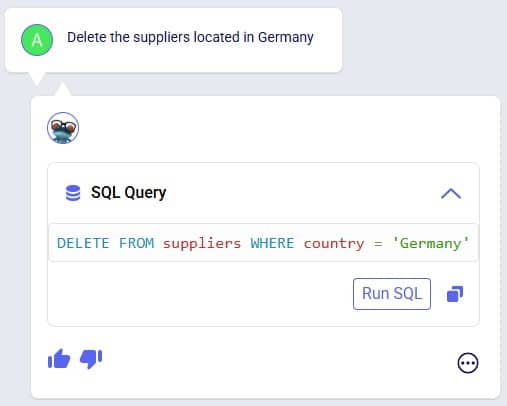

The following 3 screenshots show examples changing values in the input data (first screenshot), adding records to an input table (second screenshot), and deleting records from an input table (third screenshot):

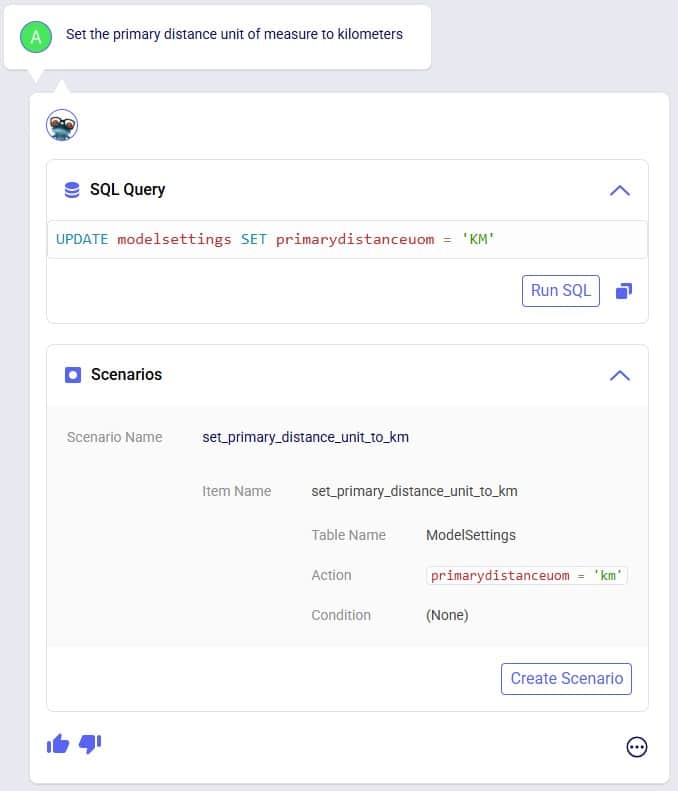

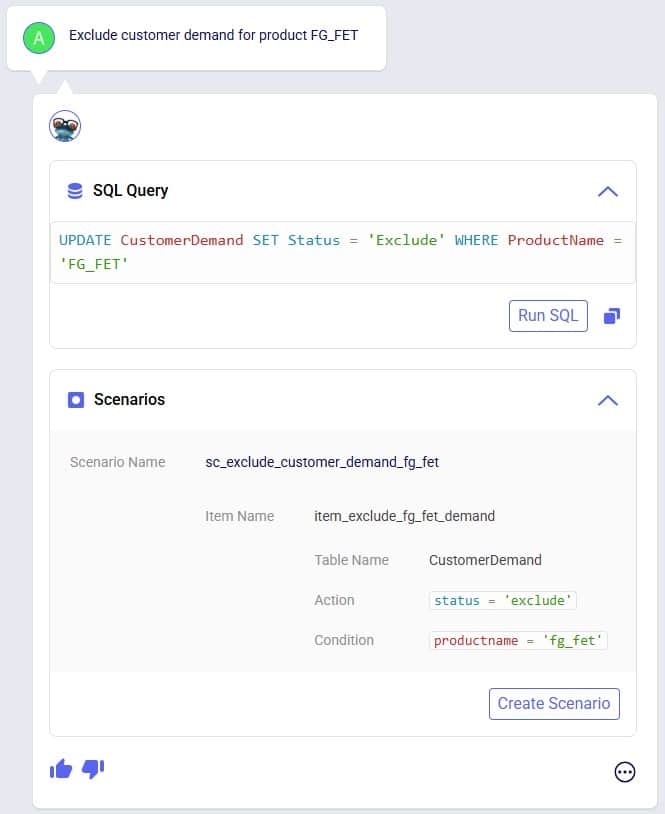

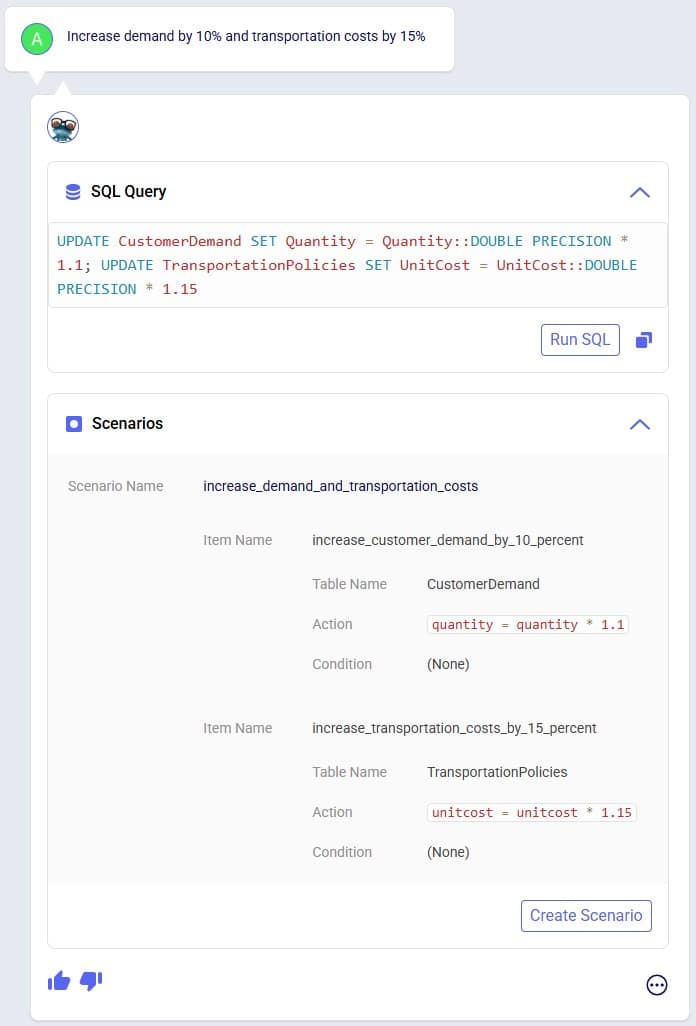

Make changes to input data, but through scenarios rather than updating the master tables directly. Prompts that result in UPDATE SQL Queries will have a Scenarios part in their responses and users can easily create a new scenario that will make the input data change by one click of a button.

Example prompts:

The following 3 screenshots show example prompts with responses from which scenarios can be created: create a scenario which makes a change to all records in 1 input table (first screenshot), create a scenario which makes a change to records in 1 input table that match a condition (second screenshot), and create a scenario that makes changes in 2 input tables (third screenshot):

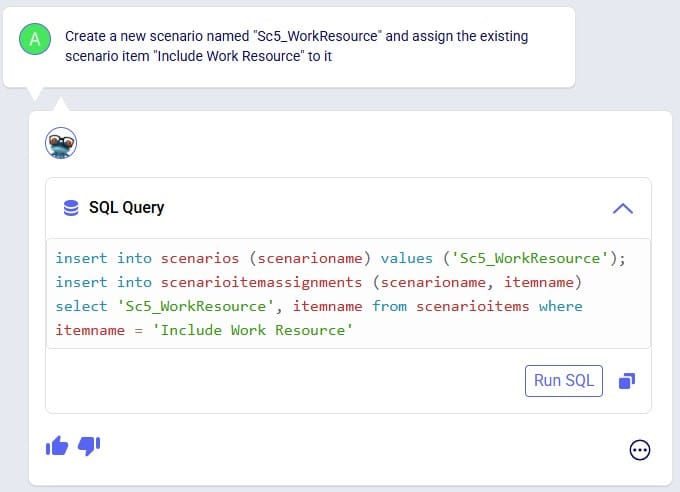

The above screenshots show examples of Leapfrog responses that contain a Scenarios section and from which new scenarios and scenario items can be created by clicking on the Create Scenario button. In addition to the above, users can also use Leapfrog to manage scenarios by using prompts that specifically create scenarios and/or items and assigning specific scenario items to specific scenarios. These result in INSERT INTO SQL Statements which can then be implemented by using the Run SQL button. See the following 2 screenshots for examples of this, where 1) a new scenario is created and an existing scenario item is then assigned to it, and 2) a new scenario item is created which is then assigned to an already existing scenario:

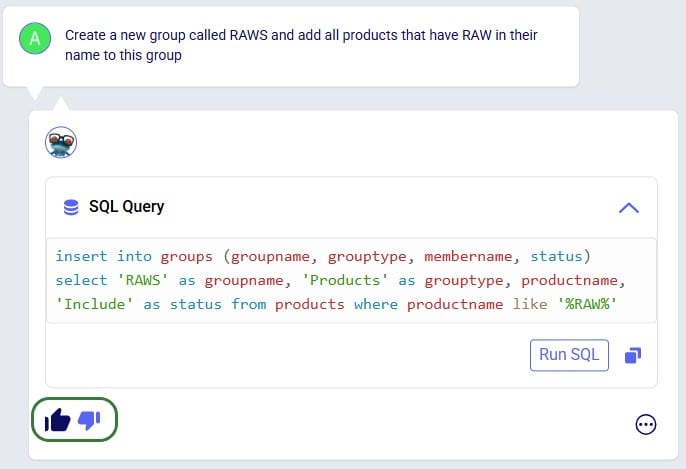

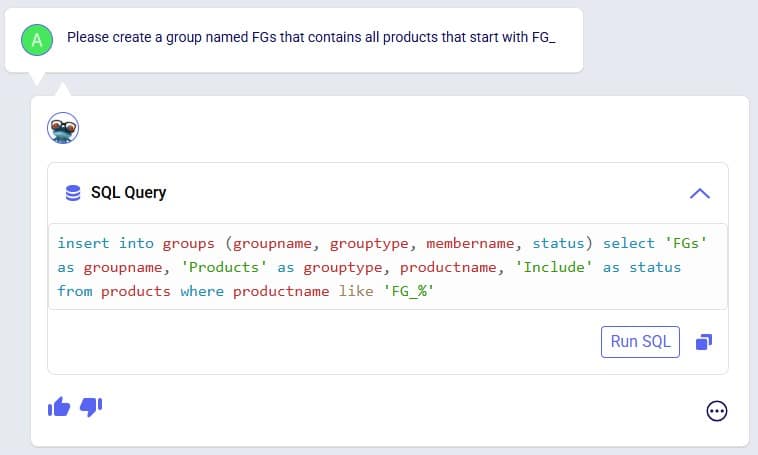

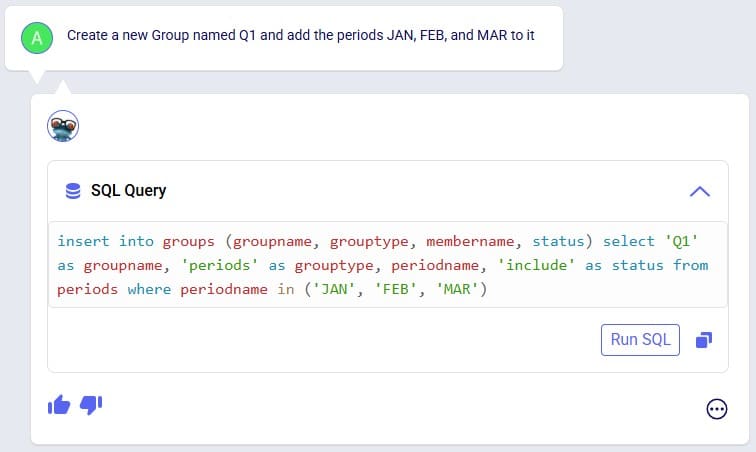

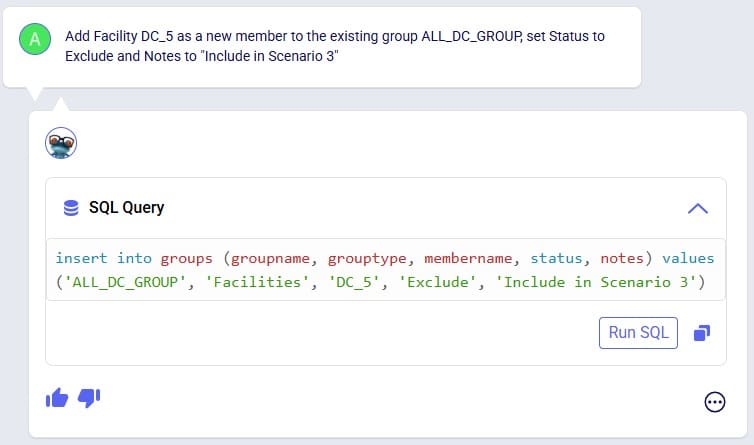

Leapfrog can create new groups and add group members to new and existing groups. Just specify the group name and which members it needs to have in the prompt and Leapfrog’s response will be one or multiple INSERT INTO SQL Statements.
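The sketch below illustrates the shape such an INSERT INTO statement can take, here for adding all products with a given name prefix to a new group. It runs against an in-memory SQLite database from Python. The `group_members` table is a hypothetical stand-in for the Groups table, and its column names, the group name, and the product names are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE products (productname TEXT);
    CREATE TABLE group_members (groupname TEXT, grouptype TEXT, membername TEXT);
    INSERT INTO products VALUES ('FG_Chair'), ('FG_Table'), ('RM_Wood');
""")

# Add every product whose name starts with FG_ to a new group.
# The backslash escapes "_" so LIKE treats it as a literal underscore, not a wildcard.
conn.execute(r"""
    INSERT INTO group_members (groupname, grouptype, membername)
    SELECT 'FinishedGoods', 'Products', productname
    FROM products
    WHERE productname LIKE 'FG\_%' ESCAPE '\'
""")

members = [row[0] for row in conn.execute(
    "SELECT membername FROM group_members WHERE groupname = 'FinishedGoods' ORDER BY membername")]
print(members)
```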

Example prompts:

The following 4 screenshots show example prompts of creating groups and group members: 1) creates a new products group and adds products that have names with a certain prefix (FG_) to it, 2) creates a new periods group and adds 3 specific periods to it, 3) creates a new suppliers group and adds all suppliers that are located in China to it, and 4) adds a new member to an existing facilities group, and in addition explicitly sets the Status and Notes field of this new record in the Groups table:

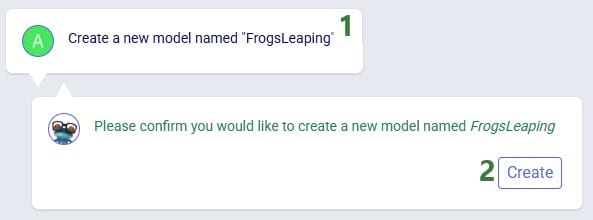

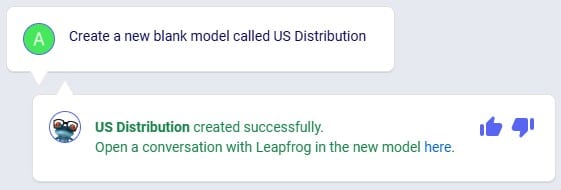

Leapfrog can create a new, blank model. Leapfrog's response will ask user to confirm if they want to create the new model before creating it. If confirmed, the response will update to contain a link which takes user to the Leapfrog module in this newly created model in a new tab of the browser.

Example prompts:

The following 2 screenshots show an example where a new model named “FrogsLeaping” is created:

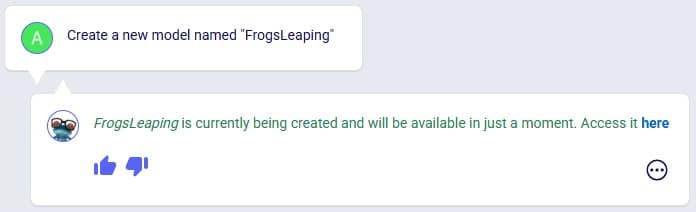

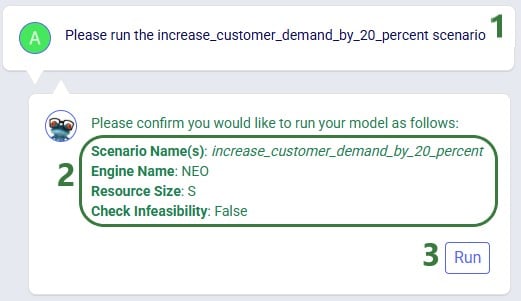

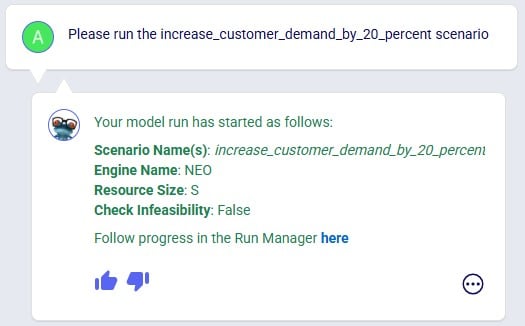

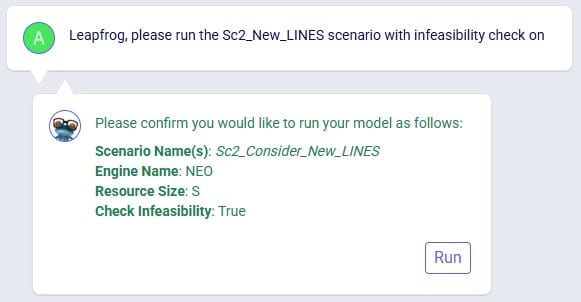

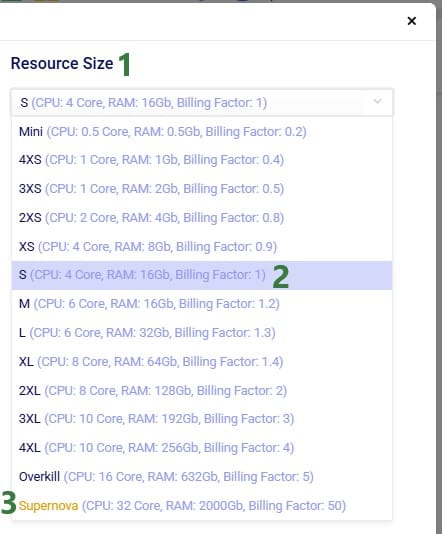

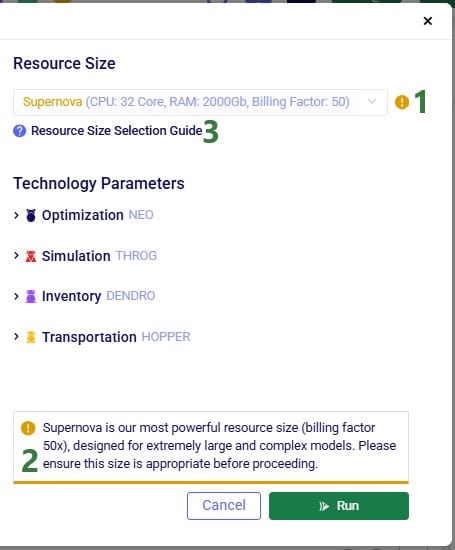

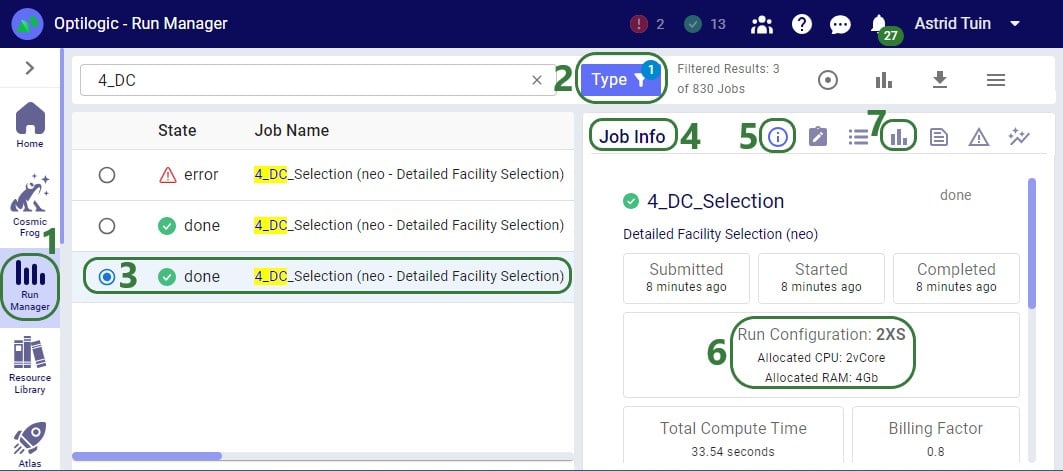

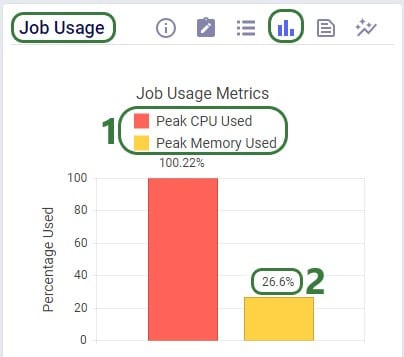

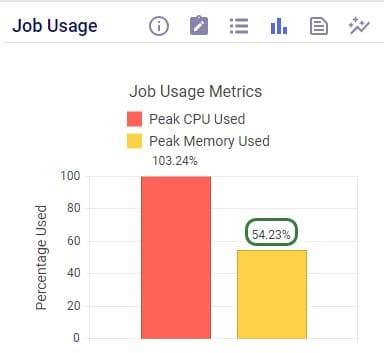

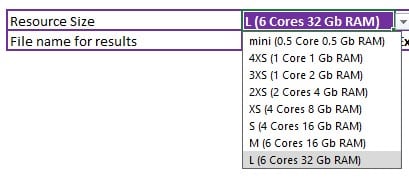

You can ask Leapfrog to kick off any model runs for you. Optionally, you can specify the scenario(s) you want to be run, which engine to use, and what resource size to use. For Neo (network optimization) runs, users can additionally indicate if the infeasibility check should be turned on. If no scenario(s) are specified, all scenarios present in the model will be run. If no engine is specified, the Neo engine (network optimization) will be used. If no resource size is specified, S will be used. If it is not specified for Neo runs whether the infeasibility check should be on or off, it will be off by default.

Leapfrog’s response will summarize the scenario(s) that are to be run, the engine that will be used, the resource size that will be used, and for Neo runs if the infeasibility check will be on or off. If user indeed wants to run the scenario(s) with these settings, they can confirm by clicking on the Run button. If so, the response will change to contain a link to the Run Manager application on Optilogic’s platform, which will be opened in a new tab of the browser when clicked. In the Run Manager, users can monitor the progress of any model runs.

The engines available in Cosmic Frog are:

The resource sizes available are as follows, from smallest to largest: Mini, 4XS, 3XS, 2XS, XS, S, M, L, XL, 2XL, 3XL, 4XL, Overkill. Guidance on choosing a resource size can be found here.
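The defaults described above can be summarized in a small Python sketch. The `RunRequest` class is purely illustrative and not part of any Optilogic API; it only encodes the default engine, resource size, and infeasibility check setting, together with the list of resource sizes.

```python
from dataclasses import dataclass, field

RESOURCE_SIZES = ["Mini", "4XS", "3XS", "2XS", "XS", "S", "M", "L",
                  "XL", "2XL", "3XL", "4XL", "Overkill"]

@dataclass
class RunRequest:
    scenarios: list = field(default_factory=list)  # empty list = run all scenarios
    engine: str = "Neo"                # default engine: network optimization
    resource_size: str = "S"           # default resource size
    check_infeasibility: bool = False  # Neo only; off unless requested

    def __post_init__(self):
        if self.resource_size not in RESOURCE_SIZES:
            raise ValueError(f"Unknown resource size: {self.resource_size}")

    def summary(self):
        scope = ", ".join(self.scenarios) if self.scenarios else "all scenarios"
        text = f"Run {scope} with {self.engine} on resource size {self.resource_size}"
        if self.engine == "Neo":
            text += f" (infeasibility check: {self.check_infeasibility})"
        return text

print(RunRequest(scenarios=["Baseline"]).summary())
print(RunRequest(engine="Triad", resource_size="M").summary())
```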

Example prompts:

The following 2 screenshots show an example prompt where the response is to run the model: only the scenario name is specified in which case a network optimization (Neo) is run using resource size S with the infeasibility check turned off (False):

Leapfrog's response now indicates the run has been kicked off and provides a link (click on the word "here") to check the progress of the scenario run(s) in the Run Manager.

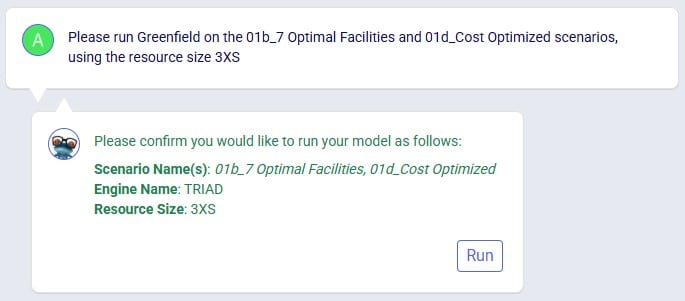

The next screenshot shows a prompt asking to specifically run Greenfield (Triad) on 2 scenarios, where the resource size to be used is specified in the prompt too:

The last screenshot in this section shows a prompt to run a specific scenario with the infeasibility check turned on:

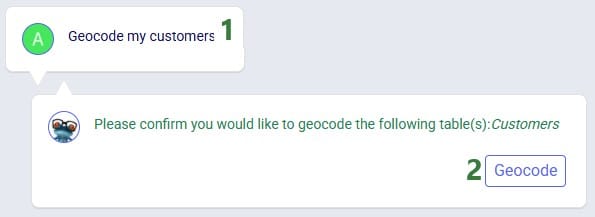

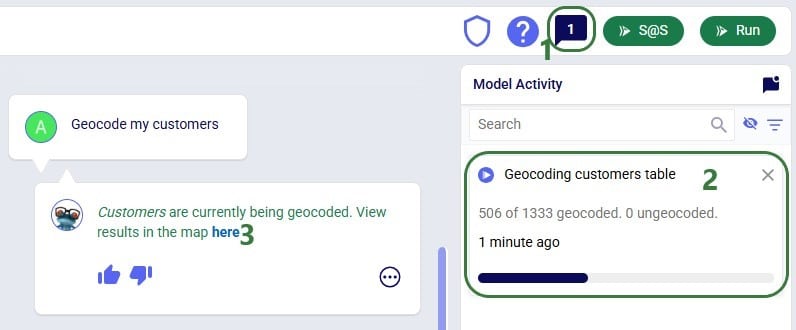

Leapfrog can find latitude & longitude pairs for locations (customers, facilities, and suppliers) based on the location information specified in these input tables (e.g. Address, City, Region, Country). Leapfrog’s response will ask user to confirm they want to geocode the specified table(s). If so, the response will change to contain a link which will open a Cosmic Frog map showing the locations that have been geocoded in a new tab of the browser.

Example prompts:

Notes on using Leapfrog for geocoding locations:

In the following screenshot, user asks Leapfrog to geocode customers:

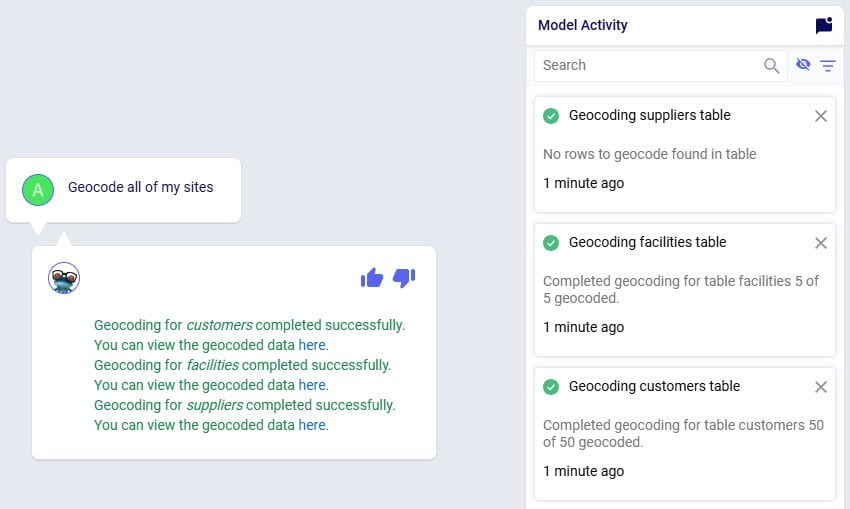

As geocoding a larger set of locations can take some time, it may look like the geocoding was not done or done incompletely if looking at the map or in the Customers / Facilities / Suppliers input tables shortly after kicking off the geocoding. A helpful tool which shows the progress of the geocoding (and other tools / utilities within Cosmic Frog) is the Model Activity list:

Leapfrog can teach users all about the Anura schema that underlies Cosmic Frog models, including:

Example prompts:

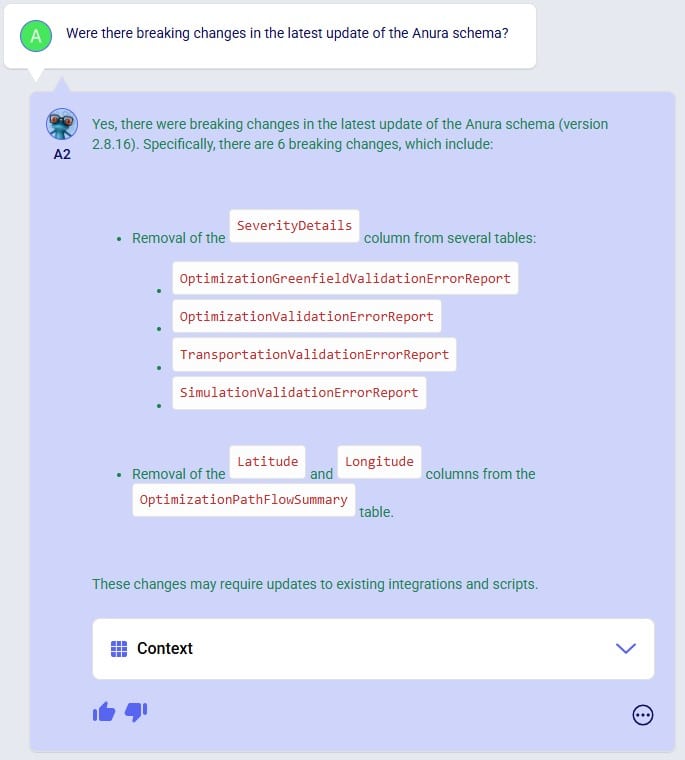

The following 4 screenshots show examples of these types of prompts & Leapfrog’s responses: 1) ask Leapfrog to teach us about a specific field on a specific table, 2) find out which table to use for a specific modelling construct, 3) understand the SCG to Cosmic Frog’s Anura mapping for a specific field on a specific table, and 4) ask about breaking changes in the latest Anura schema update:

Anura Help provides information around system integration, which includes:

Example prompts:

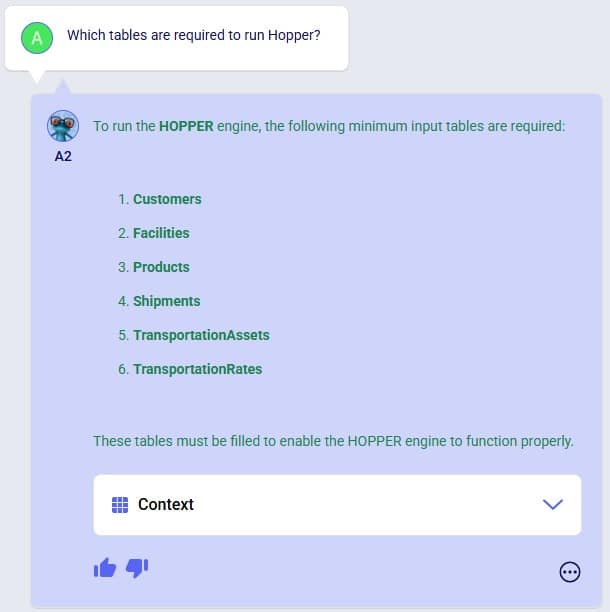

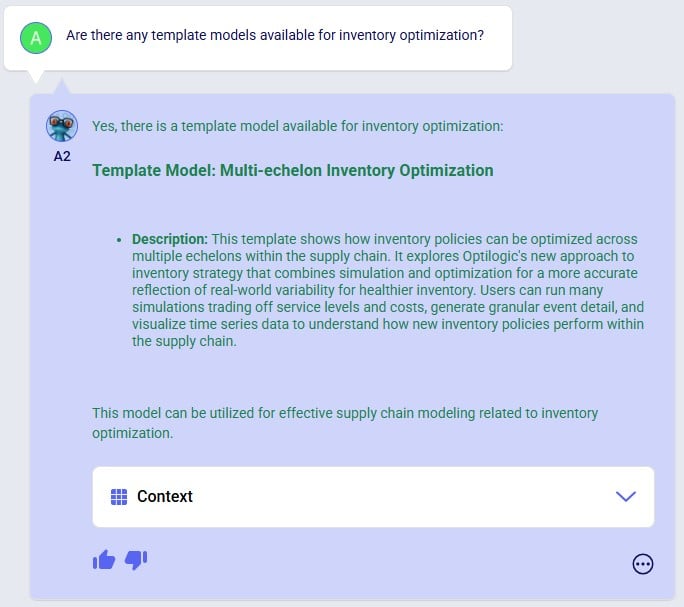

The following 4 screenshots show examples of these types of prompts & Leapfrog’s responses: 1) ask which tables are required to run a specific engine, 2) find out which engines use a specific table, 3) learn which table contains a certain type of outputs, and 4) ask about availability of template models for a specific purpose:

Leapfrog knows about itself, Optilogic, Cosmic Frog, the Anura database schema, LLMs, and more. Ask Leapfrog questions so it can share its knowledge with you. For most general questions both LLMs will generate the same or a very similar answer, whereas for questions that are around capabilities, each may only answer what is relevant to it.

Example prompts:

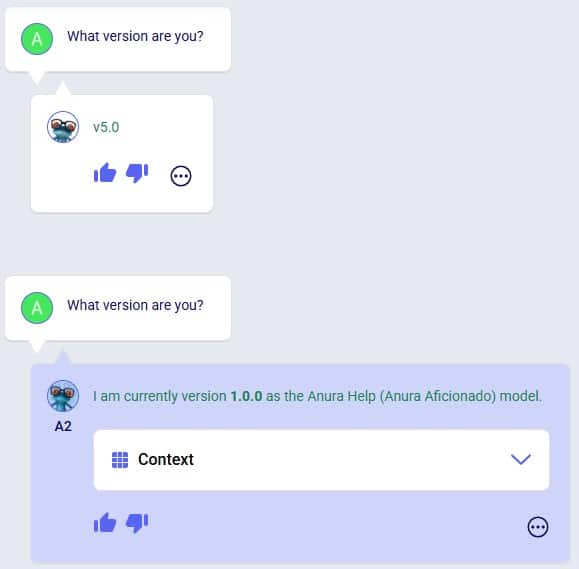

The following 5 screenshots show examples of these types of prompts & Leapfrog’s responses: 1) ask both LLMs about their version, 2) ask a general question about how to do something in Cosmic Frog (Text2SQL), 3) ask Anura Help for the Release Notes, and 4 & 5) ask both LLMs about what they are good at and what they are not good at:

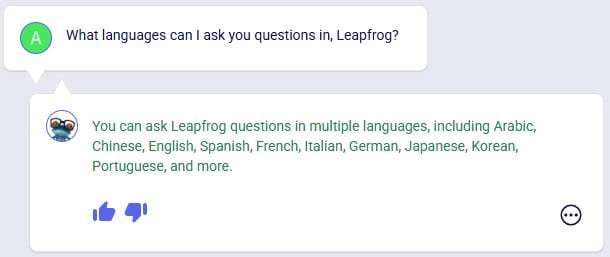

Even though this documentation and Leapfrog example prompts are predominantly in English, Leapfrog supports many languages so users can ask questions in their most natural language. Where the Leapfrog response is in text form, it can respond in the language the question was asked in. Other response types like a standard message with a link or names of scenarios and scenario items will be in English.

The following 3 screenshots show: 1) a list of languages Leapfrog supports, 2) a French prompt to increase demand by 20%, and 3) a Spanish prompt asking Leapfrog to explain the Primary Quantity UOM field on the Model Settings table:

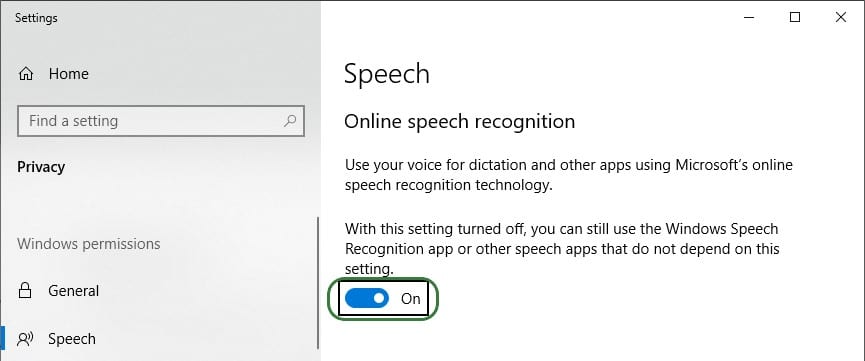

To get the most out of Leapfrog, please take note of these tips & tricks:

After this is turned on, you can start using it by pressing the keyboard’s Windows key + H. A bar with a microphone which shows messages like “initializing”, “listening”, “thinking” will show up at the top of your active monitor:

Now you can speak into your computer’s microphone, and your spoken words will be turned into text. If you put your cursor in Leapfrog’s question / prompt area, click on the microphone in the bar at the top so your computer starts listening, and then say what you want to ask Leapfrog, it will appear in the prompt area. You can then click on the send icon to submit your prompt / question to Leapfrog.

The following screenshots show several examples of how one can build on previous prompts and responses and try to re-direct Leapfrog as described in bullets 6 and 7 of the Tips & Tricks above. In the first example user wants to delete records from an input table whereas Leapfrog’s initial response is to change the Status of these records to Exclude. The follow-up prompt clarifies that user wants to remove them. Note that it is not needed to repeat that it is about facilities based in the USA, which Leapfrog still knows from the previous prompt:

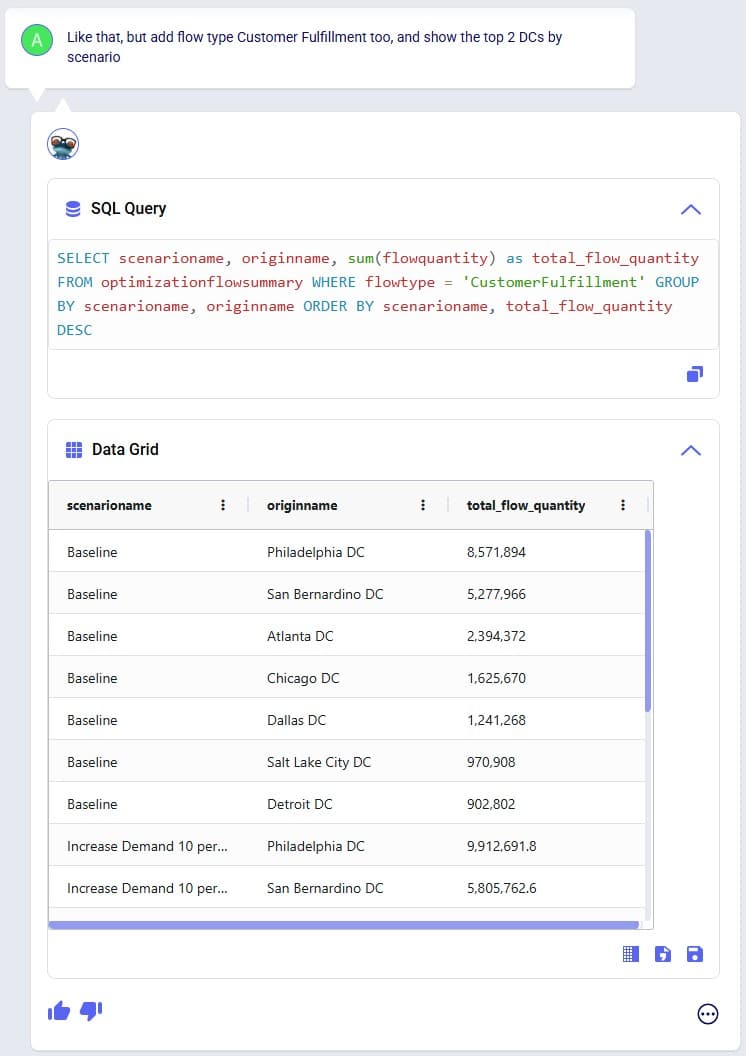

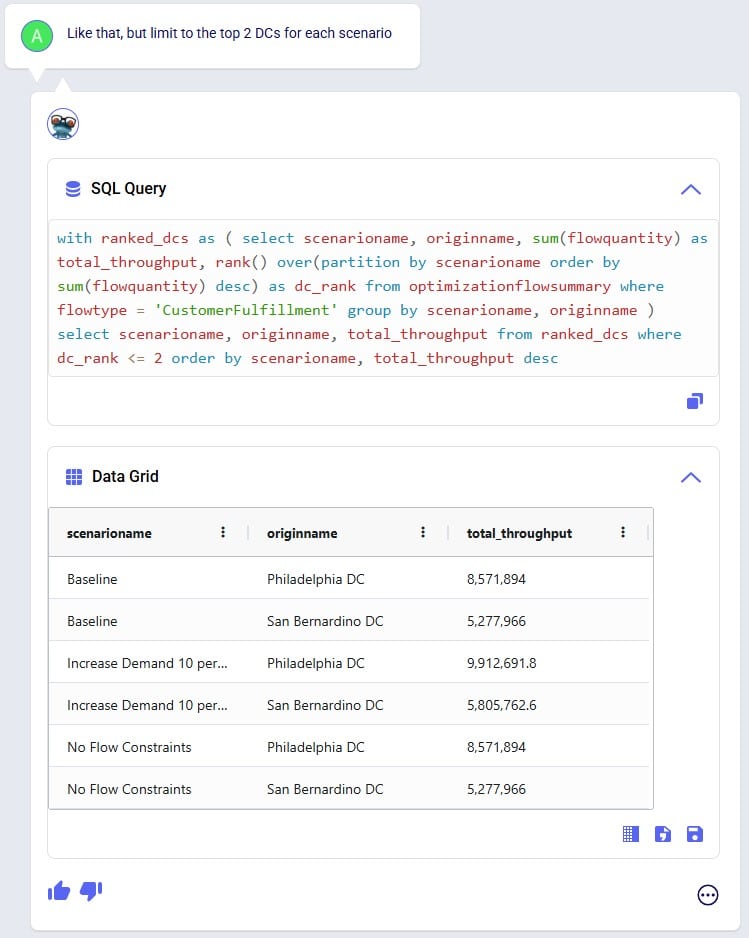

In the following example shown in the next 3 screenshots, user starts by asking Leapfrog to show the 2 DCs with the highest throughput. The SQL query response only looks at Replenishment flows, but user wants to include Customer Fulfillment flows too. Also, the SQL Query does not limit the list to the top 2 DCs. In the follow-up prompt the user clarifies this (“Like that, but…”) without needing to repeat the whole question. However, Leapfrog only picks up on the first request (adding the Customer Fulfillment flows), so in the third prompt user clarifies further (again: “Like that, but…”), and achieves what they set out to do:

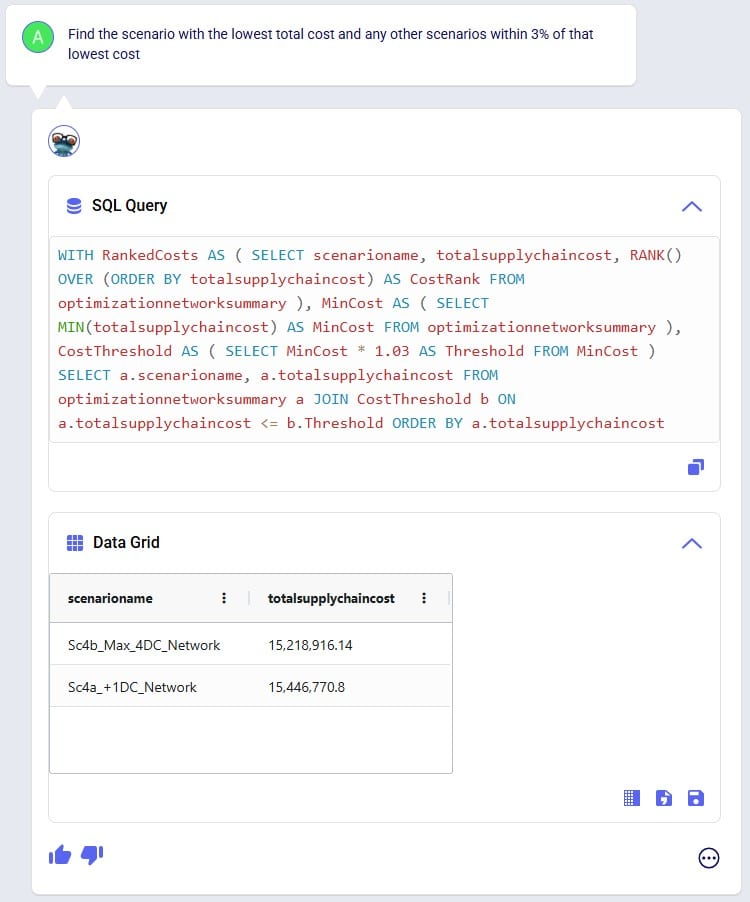

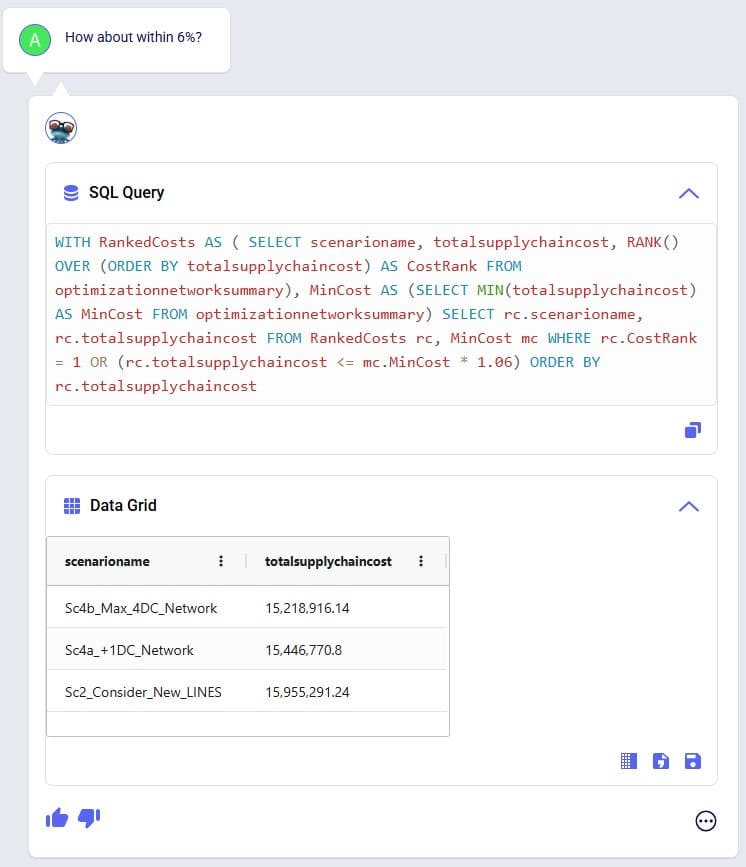

In the next 2 screenshots we see an example of first asking Leapfrog to show outputs that meet certain criteria (within 3%), and then essentially wanting to ask the same question but with the criteria changed (within 6%). There is no need to repeat the first prompt; it suffices to say something like “How about with [changed criteria]?”:

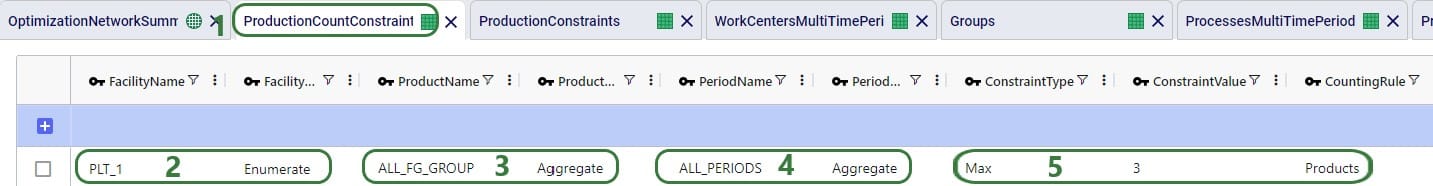

When Leapfrog only does part of what a user intends to do, it can often still be achieved in multiple steps. See the following screenshots where user intended to change 2 fields on the Production Count Constraints table and initially Leapfrog only changes one. The follow-up prompt simply consists of “And [change 2]”, building on the previous prompt. In the third prompt user was more explicit in describing the 2 changes and then Leapfrog’s response is what user intended to achieve:

Here we will step through the process of building a complete Cosmic Frog demo model, creating an additional scenario, running this new scenario and the Baseline scenario, and interrogating some of the scenarios’ outputs, all by using only Leapfrog.

Please note that if you are trying the same steps using Leapfrog in your Cosmic Frog:

We will first list the prompts that were used to build, run, and analyze the model, and then review the whole process step-by-step through (lots of!) screenshots. Here is the list of prompts that were submitted to Leapfrog (all of them used the Text2SQL LLM):

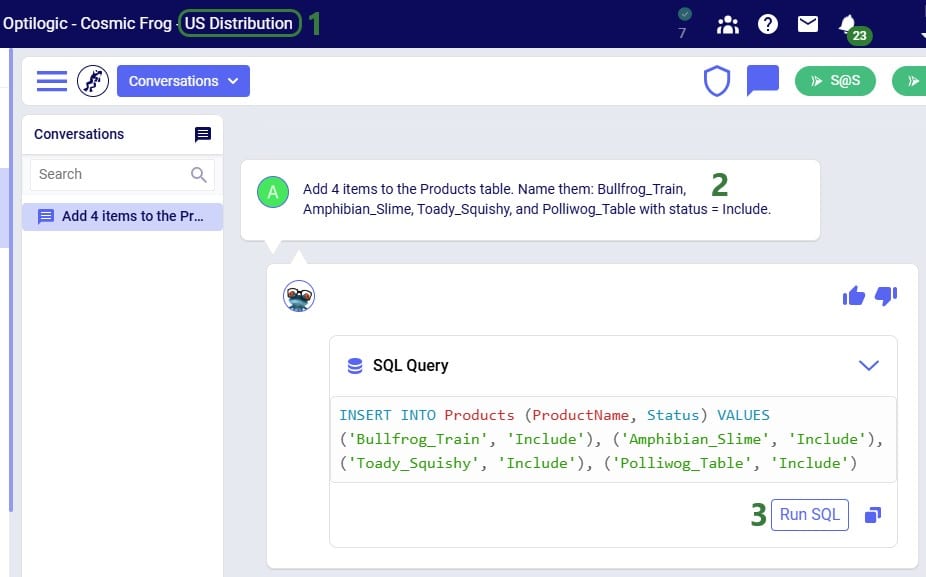

And here is the step-by-step process shown through screenshots, starting with the first prompt given to Leapfrog to create a new empty Cosmic Frog model with the name “US Distribution”:

Clicking on the link in Leapfrog’s response will take user to the Leapfrog module in this newly created US Distribution model:

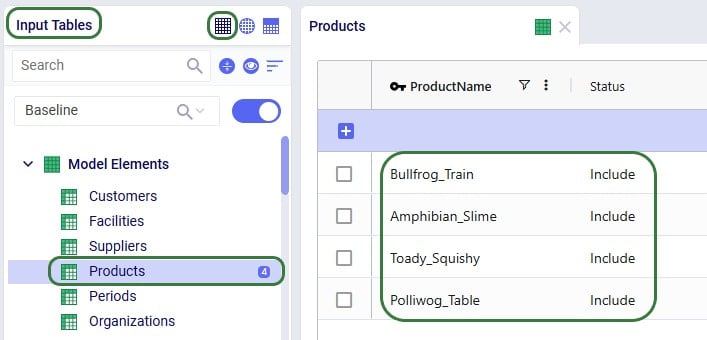

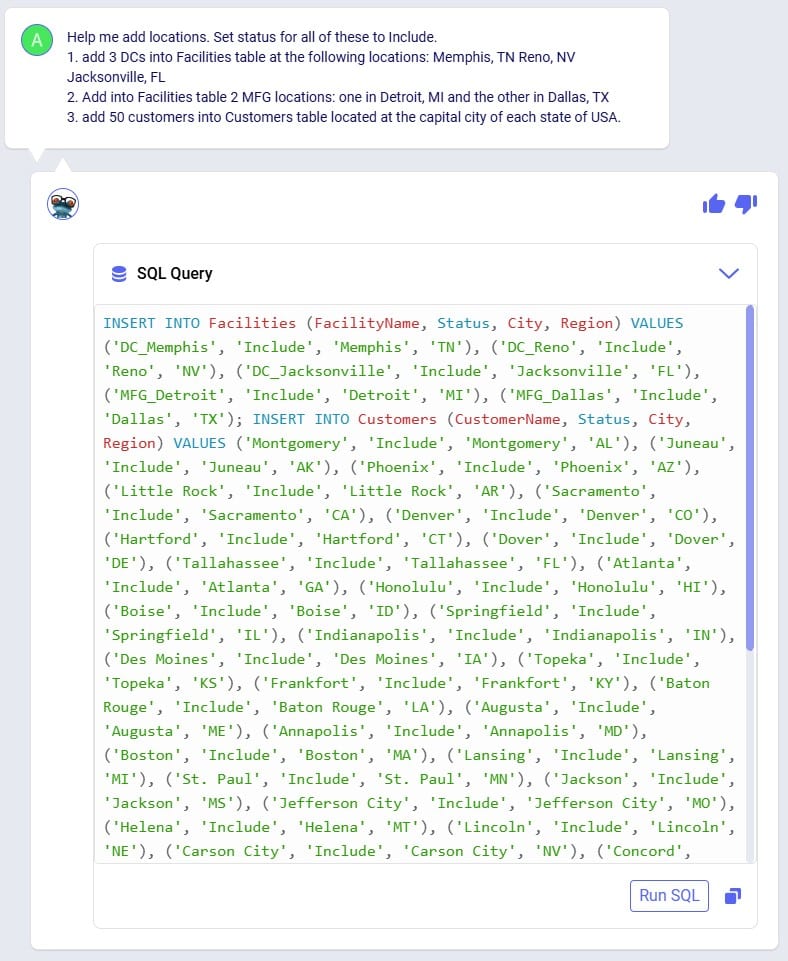

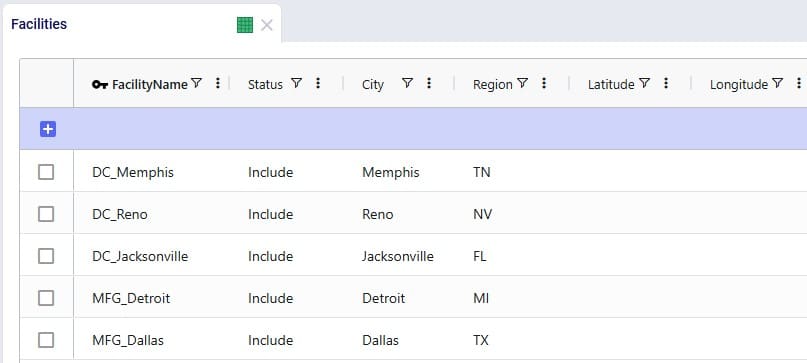

In the next prompt (the third one from the list), distribution center (DC) and manufacturing (MFG) locations are added to the Facilities table, and customer locations to the Customers table. Note the use of a numbered list to help Leapfrog break the response up into multiple INSERT INTO statements:

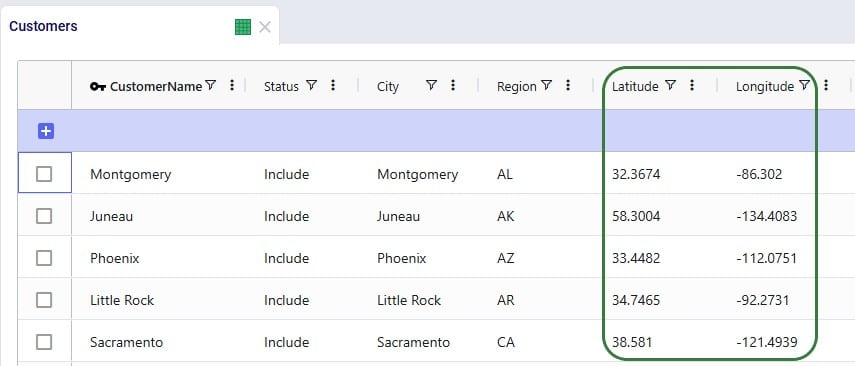
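To illustrate why a numbered list helps, the sketch below shows the general shape such a response could take: one INSERT INTO statement per numbered item. It is a minimal Python example against an in-memory SQLite database, not Leapfrog’s actual output. The simplified table layouts, the MFG and customer names, and the `DC_Jacksonville` spelling are assumptions made for illustration only.

```python
import sqlite3

# Hypothetical, simplified stand-ins for the Facilities and Customers tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE facilities (facilityname TEXT, latitude REAL, longitude REAL);
    CREATE TABLE customers (customername TEXT, latitude REAL, longitude REAL);
""")

# One entry per numbered item in the prompt; each becomes its own INSERT INTO.
numbered_items = [
    ("facilities", "facilityname", ["DC_Reno", "DC_Memphis", "DC_Jacksonville"]),
    ("facilities", "facilityname", ["MFG_A", "MFG_B"]),  # placeholder MFG names
    ("customers", "customername", ["CUST_01", "CUST_02", "CUST_03"]),  # placeholders
]

for table, column, names in numbered_items:
    placeholders = ", ".join("(?)" for _ in names)
    conn.execute(f"INSERT INTO {table} ({column}) VALUES {placeholders}", names)

facility_count = conn.execute("SELECT COUNT(*) FROM facilities").fetchone()[0]
customer_count = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
blank_coordinates = conn.execute(
    "SELECT COUNT(*) FROM facilities WHERE latitude IS NULL AND longitude IS NULL"
).fetchone()[0]
print(facility_count, customer_count, blank_coordinates)
```

Because no coordinates are supplied in the statements, the latitude and longitude columns stay blank until the sites are geocoded.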

After running the SQL of that Leapfrog response, user has a look in the Facilities and Customers tables and notices that as expected all Latitude and Longitude values are blank:

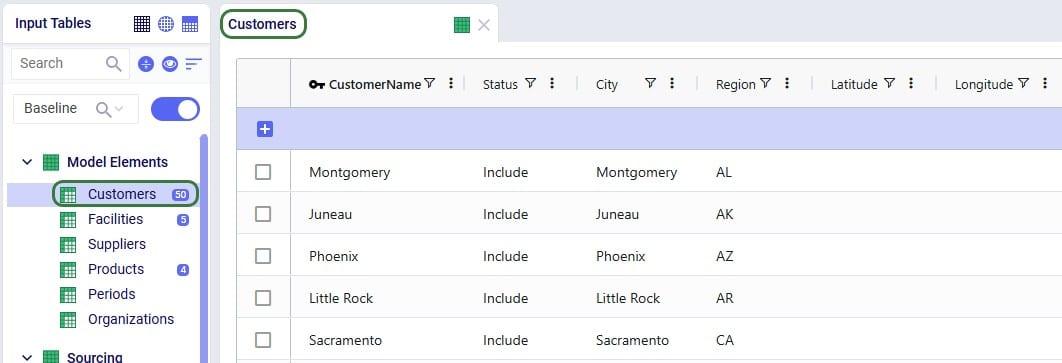

Since all Facilities and Customers have blank Latitudes and Longitudes, our next (fourth) prompt is to geocode all sites:

Once the geocoding completes (which can be checked in the Model Activity list), user clicks on one of the links in the Leapfrog response. This opens the Supply Chain map of the model in a new tab in the browser, showing Facilities and Customers, which all look to be geocoded correctly:

We can also double-check this in the Customers and Facilities tables, see for example next screenshot of a subset of 5 customers which now have values in their Latitude and Longitude fields:

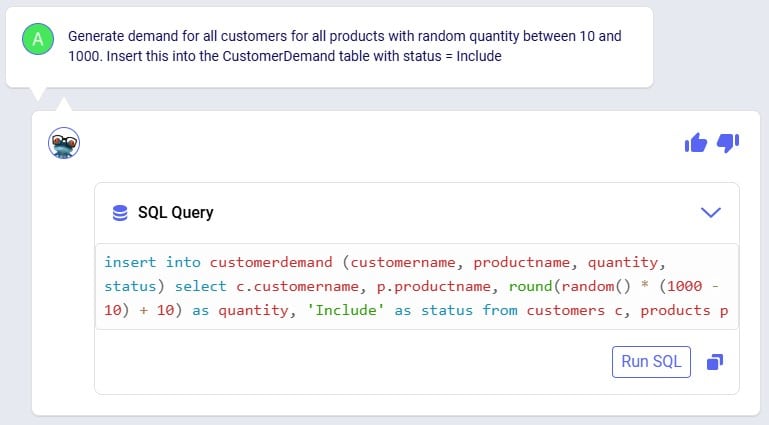

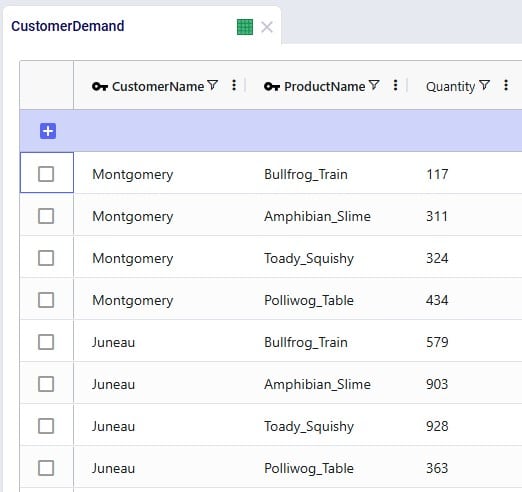

For a (network optimization - Neo) model to work, we will also need to add demand. As this is an example/demo model, we can use Leapfrog to generate random demand quantities for us, see this next (fifth) prompt and response:

After clicking the Run SQL button, we can have a look in the Customer Demand input table, where we find the expected 200 records (50 customers which each have demand for 4 products) and eyeballing the values in the Quantity field we see the numbers are as expected between 10 and 1000:

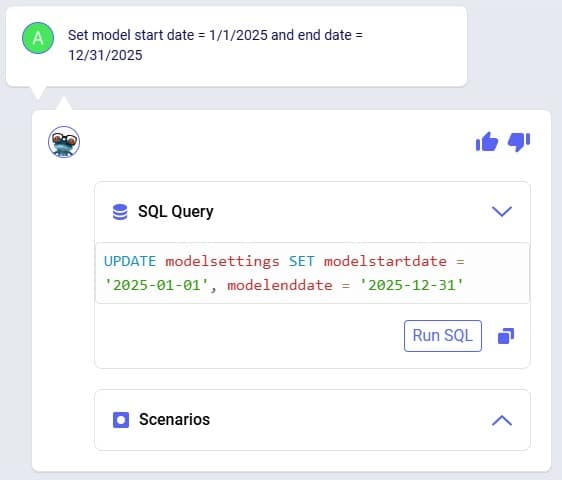
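The record count and value range checked above can be reproduced with a small sketch. This is a plain Python illustration of generating one random quantity per customer-product combination, not the SQL that Leapfrog produces; the customer and product names are placeholders.

```python
import random
from itertools import product

random.seed(42)  # fixed seed only so the sketch is repeatable

# Placeholder names; the demo model has 50 customers and 4 products.
customers = [f"CUST_{i:02d}" for i in range(1, 51)]
products = [f"Product_{i}" for i in range(1, 5)]

# One demand record per customer-product combination, quantity in [10, 1000].
demand_records = [
    (customer, item, random.randint(10, 1000))
    for customer, item in product(customers, products)
]

quantities = [quantity for _, _, quantity in demand_records]
print(len(demand_records), min(quantities), max(quantities))
```

With 50 customers and 4 products the list holds 200 records, which is the same check we performed by eye in the Customer Demand table.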

Our sixth prompt sets the model end and start dates, so the model horizon is all of 2025:

Again, we can double-check this after running the SQL response by having a look in the Model Settings input table:

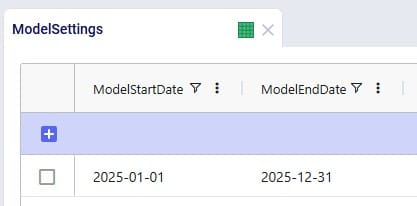

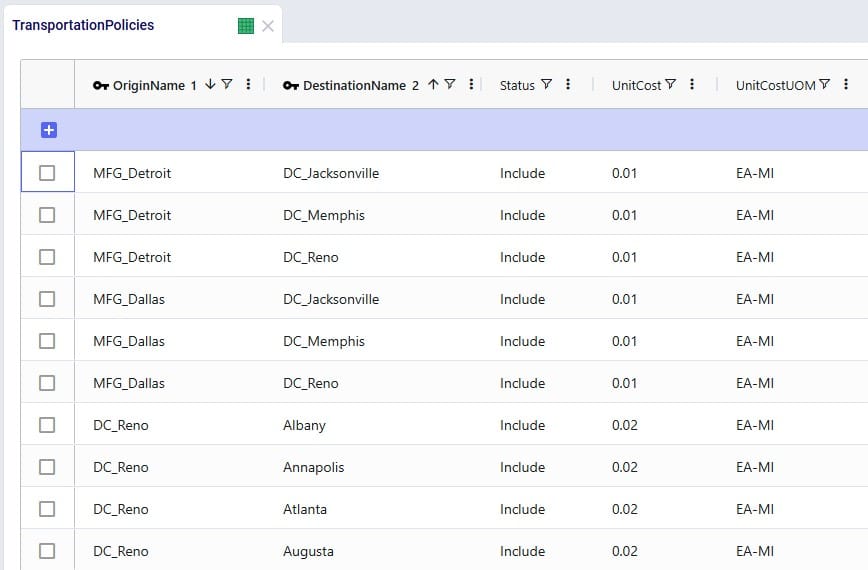

We also need Transportation Policies, the following prompt (the seventh from our list) takes care of this and creates lanes from all MFGs to all DCs and from all DCs to all customers:

We see the 6 enumerated MFG (2 locations) to DC (3 locations) lanes when opening and sorting the Transportation Policies table, plus the first few records of the 150 enumerated DC to customer lanes. No Unit Costs are set so far (blank values):

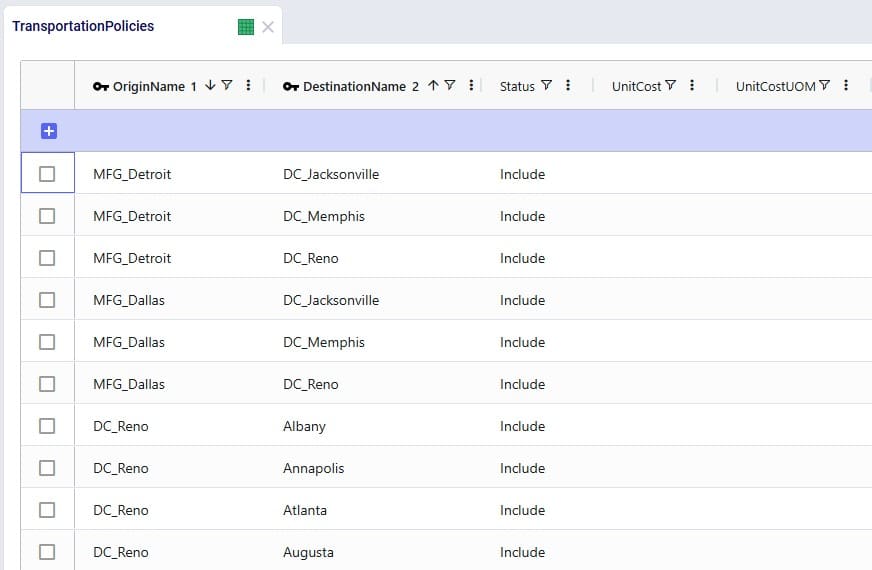

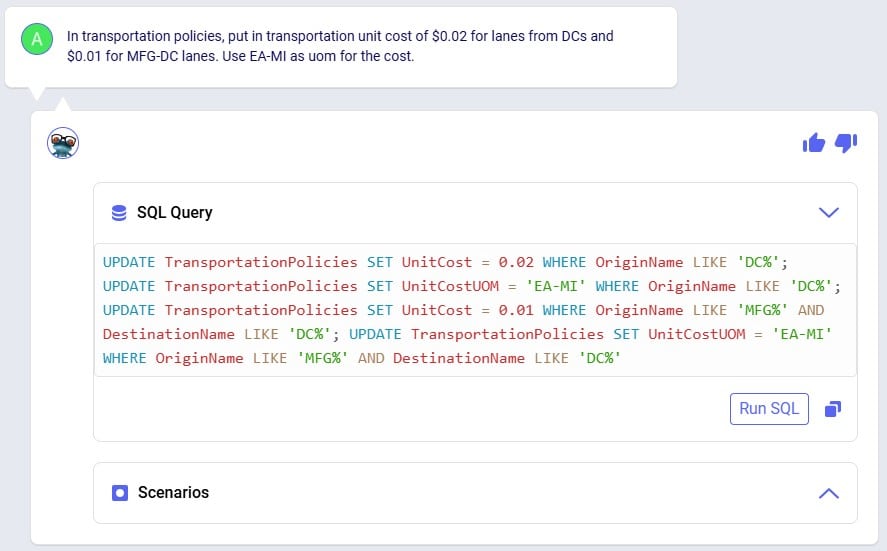
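The lane counts follow from pairing every origin with every destination. The sketch below enumerates them in Python, assuming placeholder names for the MFG locations and customers; it only illustrates the counting and is not how Cosmic Frog stores the records.

```python
from itertools import product

mfgs = ["MFG_A", "MFG_B"]  # placeholder names for the 2 MFG locations
dcs = ["DC_Reno", "DC_Memphis", "DC_Jacksonville"]
customers = [f"CUST_{i:02d}" for i in range(1, 51)]  # placeholder customer names

# Every origin paired with every destination, one record per lane.
# The unit cost is None here, mirroring the blank Unit Cost values.
mfg_to_dc = [(origin, dest, None) for origin, dest in product(mfgs, dcs)]
dc_to_customer = [(origin, dest, None) for origin, dest in product(dcs, customers)]

print(len(mfg_to_dc), len(dc_to_customer))
```

This gives 2 x 3 = 6 MFG to DC lanes and 3 x 50 = 150 DC to customer lanes, matching the table.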

Our eighth prompt sets the transportation unit costs on the transportation policies created in the previous step. All use a unit of measure of EA-MI which means the costs entered are per unit per mile, and the cost itself is 1 cent on MFG to DC lanes and 2 cents on DC to customer lanes:

Clicking the Run SQL button will run the 4 UPDATE statements, and we can see the changes in the Transportation Policies input table:

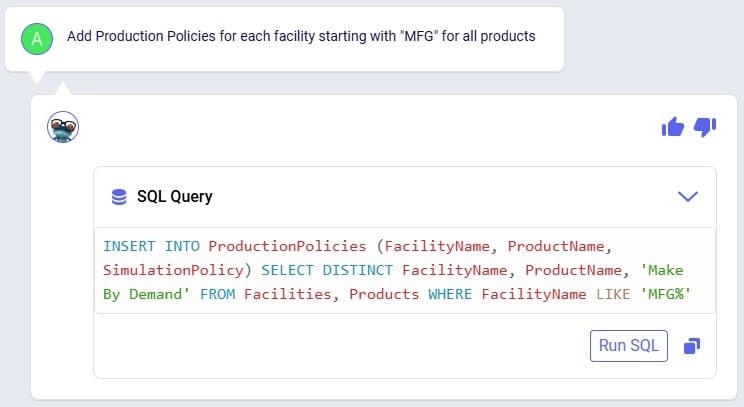

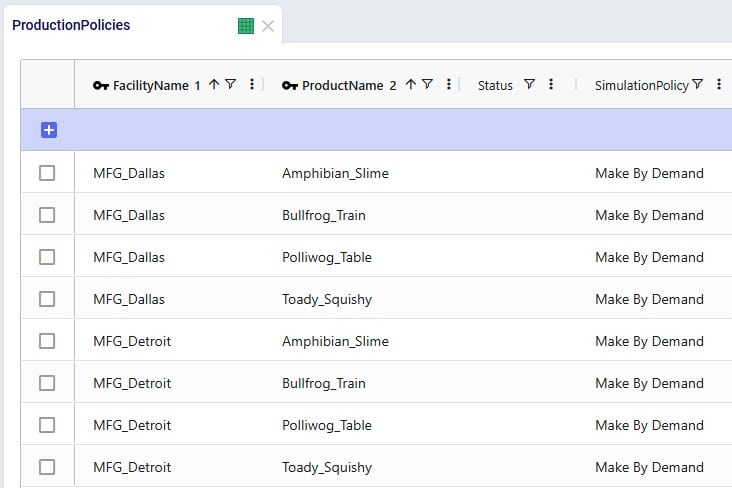
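To make the EA-MI unit of measure concrete, the following sketch works through the arithmetic for a single flow. The two rates come from the prompt described above; the flow quantity and distance are hypothetical, and the function only illustrates the per unit per mile calculation, ignoring any other cost components a model may include.

```python
from decimal import Decimal

# Rates from the walkthrough: cost per unit per mile (EA-MI).
RATE_MFG_TO_DC = Decimal("0.01")
RATE_DC_TO_CUSTOMER = Decimal("0.02")


def lane_cost(units: int, miles: int, rate: Decimal) -> Decimal:
    """Transportation cost for a flow when the rate is per unit per mile."""
    return units * miles * rate


# Hypothetical flow: 1,000 units moved over 500 miles.
inbound = lane_cost(1000, 500, RATE_MFG_TO_DC)
outbound = lane_cost(1000, 500, RATE_DC_TO_CUSTOMER)
print(inbound, outbound)
```

For the same quantity and distance, the DC to customer lane costs twice as much as the MFG to DC lane, since its rate is 2 cents instead of 1 cent.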

In order to run, the model also needs Production Policies, which the next (ninth) prompt takes care of: both MFG locations can produce all 4 products:

Again, double-checking after running the SQL from the response, we see the 8 expected records in the Production Policies input table:

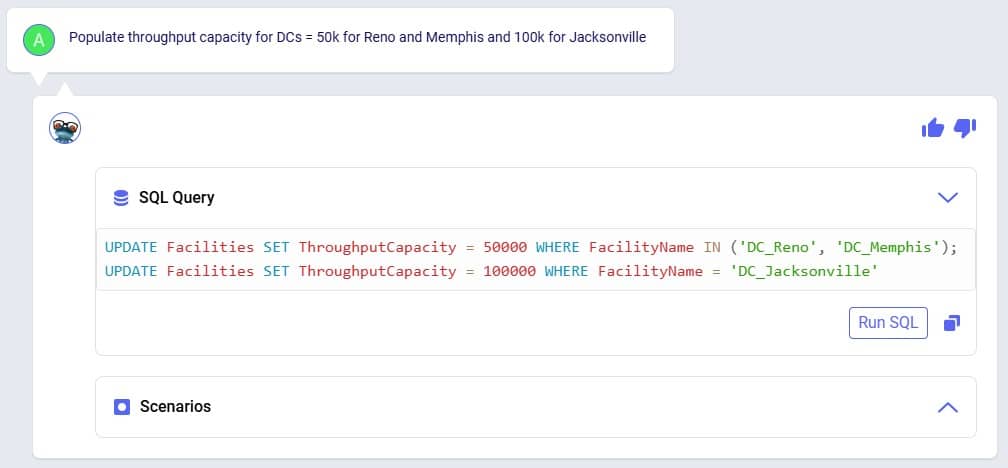

Our 3 DCs each have an upper limit on how much throughput they can handle over the year: 50,000 for the DCs in Reno and Memphis and 100,000 for the DC in Jacksonville. Prompt number 10 sets these:

We can see these numbers appear in the Throughput Capacity field on the Facilities input table after running the SQL of Leapfrog’s response:

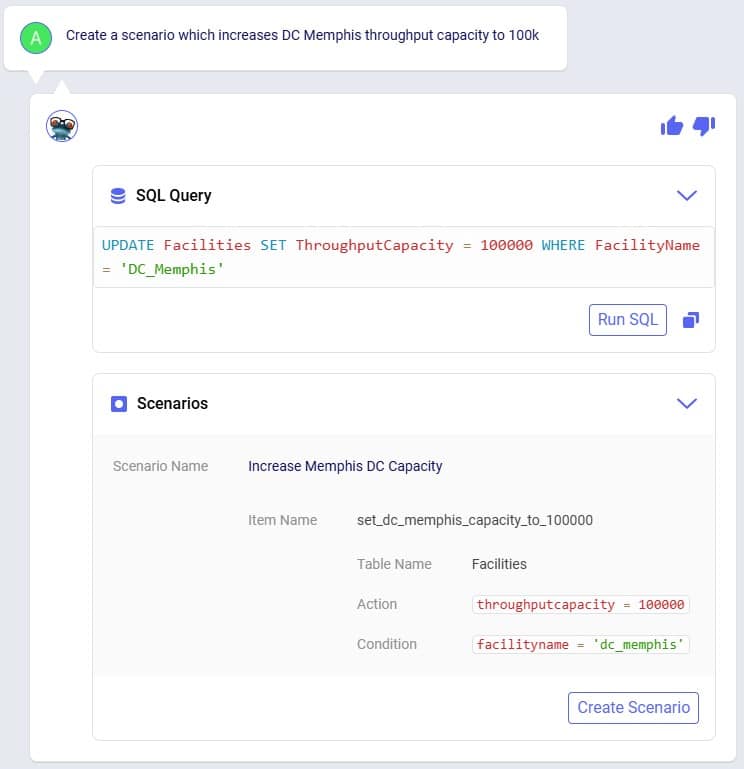

We want to explore what happens if the maximum throughput of the DC in Memphis is increased to 100,000; this is what the eleventh prompt asks to do:

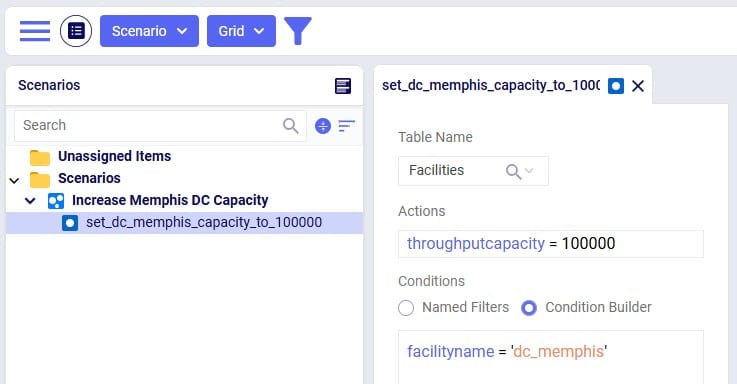

Leapfrog’s response has both a SQL UPDATE query, which would change the throughput at DC_Memphis in the Facilities input table, and a Scenarios section. We choose to click on the Create Scenario button so a new scenario is created (Increase Memphis DC Capacity) which will contain 1 scenario item (set_dc_memphis_capacity_to_100000) that sets the throughput capacity at DC_Memphis to 100,000:

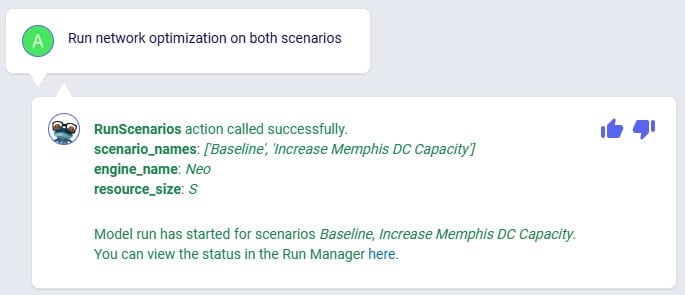
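The difference between running the UPDATE query and creating a scenario can be sketched as follows. This is a conceptual Python illustration only: representing a scenario item as a dictionary of overrides is an assumption made for the example, not how Cosmic Frog implements scenarios. The capacity values are the ones used in this walkthrough.

```python
# Throughput capacities set by prompt number 10 (the base input data).
base_capacity = {
    "DC_Reno": 50_000,
    "DC_Memphis": 50_000,
    "DC_Jacksonville": 100_000,
}

# A scenario item modeled as a set of overrides (hypothetical representation).
scenario_items = {
    "Increase Memphis DC Capacity": {"DC_Memphis": 100_000},
}


def capacities_for(scenario: str) -> dict[str, int]:
    """Return the capacities a scenario sees: base data plus its overrides."""
    return {**base_capacity, **scenario_items.get(scenario, {})}


baseline = capacities_for("Baseline")
increased = capacities_for("Increase Memphis DC Capacity")
print(baseline["DC_Memphis"], increased["DC_Memphis"])
```

In this sketch the base data is left untouched, so the Baseline scenario still sees 50,000 at DC_Memphis while the new scenario sees 100,000, which is what allows the two to be compared.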

Our small demo model is now complete, and we will use Leapfrog (using our twelfth prompt) to run network optimization (using the Neo engine) on the Baseline and Increase Memphis DC Capacity scenarios:

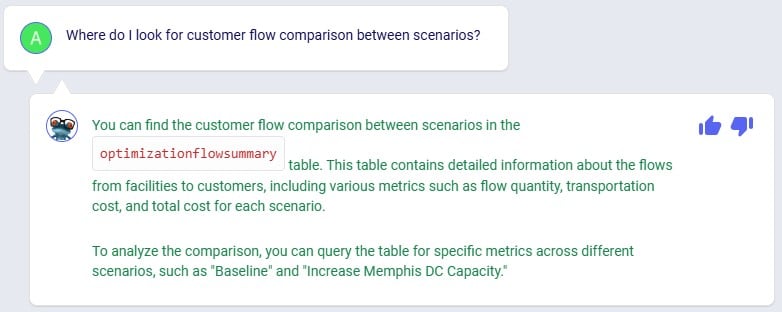

While the scenarios are running, we think about which outputs will be interesting to review, and ask Leapfrog how one can compare customer flows between scenarios (prompt number 13):

This information can come in handy in one of the next prompts to direct Leapfrog on where to look.

Using the link from the previous Leapfrog response where we started the optimization runs for both scenarios, we open the Run Manager in a new tab of the browser. Both scenarios have completed successfully as their State is set to Done:

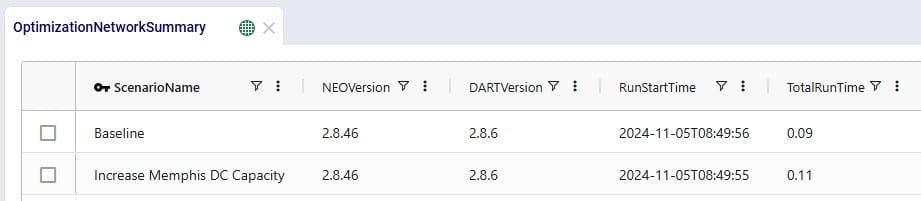

Looking in the Optimization Network Summary output table, we also see there are results for both scenarios:

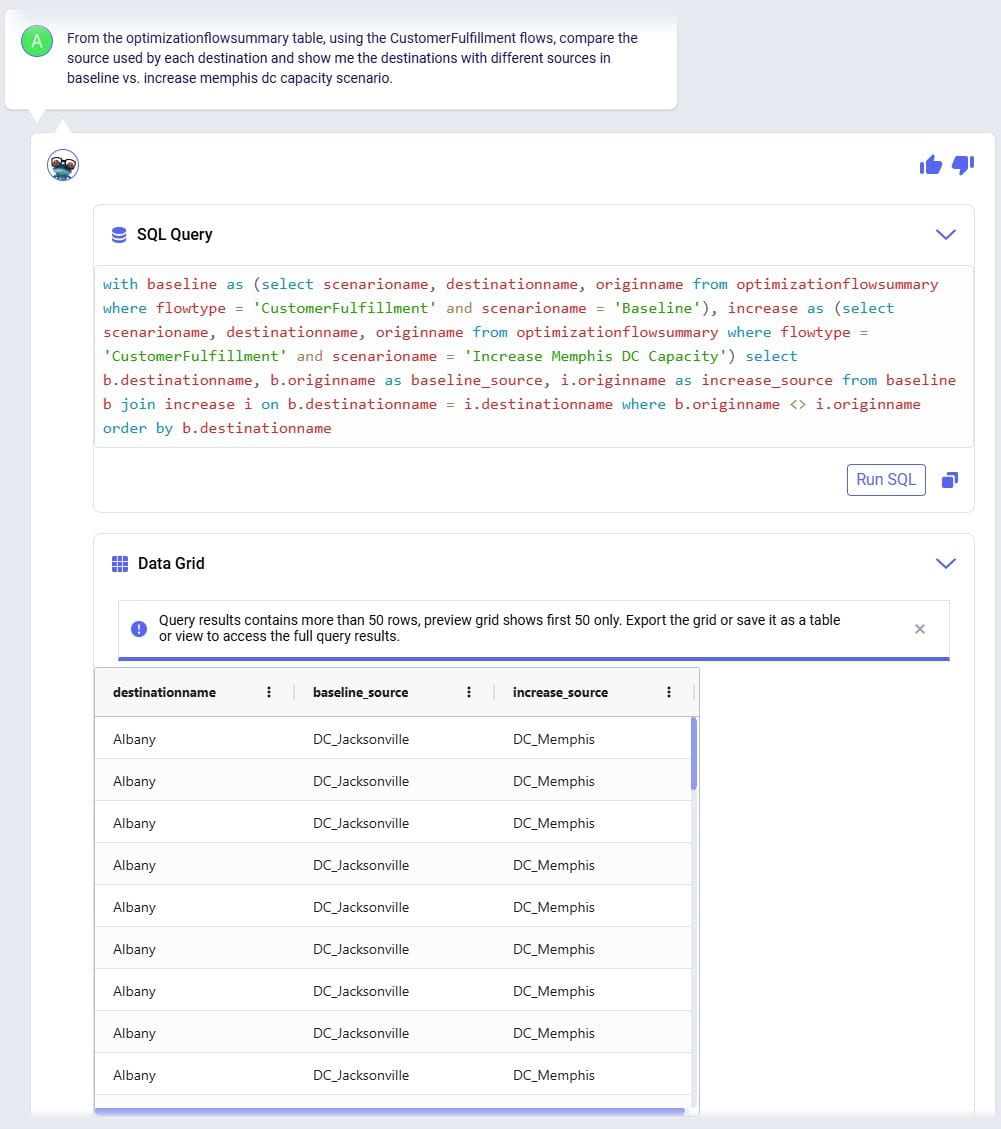

In the next few prompts Leapfrog is used to look at outputs of the 2 scenarios that have been run. The prompt (number 14 from our list) in the next screenshot aims to get Leapfrog to show us which customers have a different source in the Increase Memphis DC Capacity scenario as compared to the Baseline scenario:

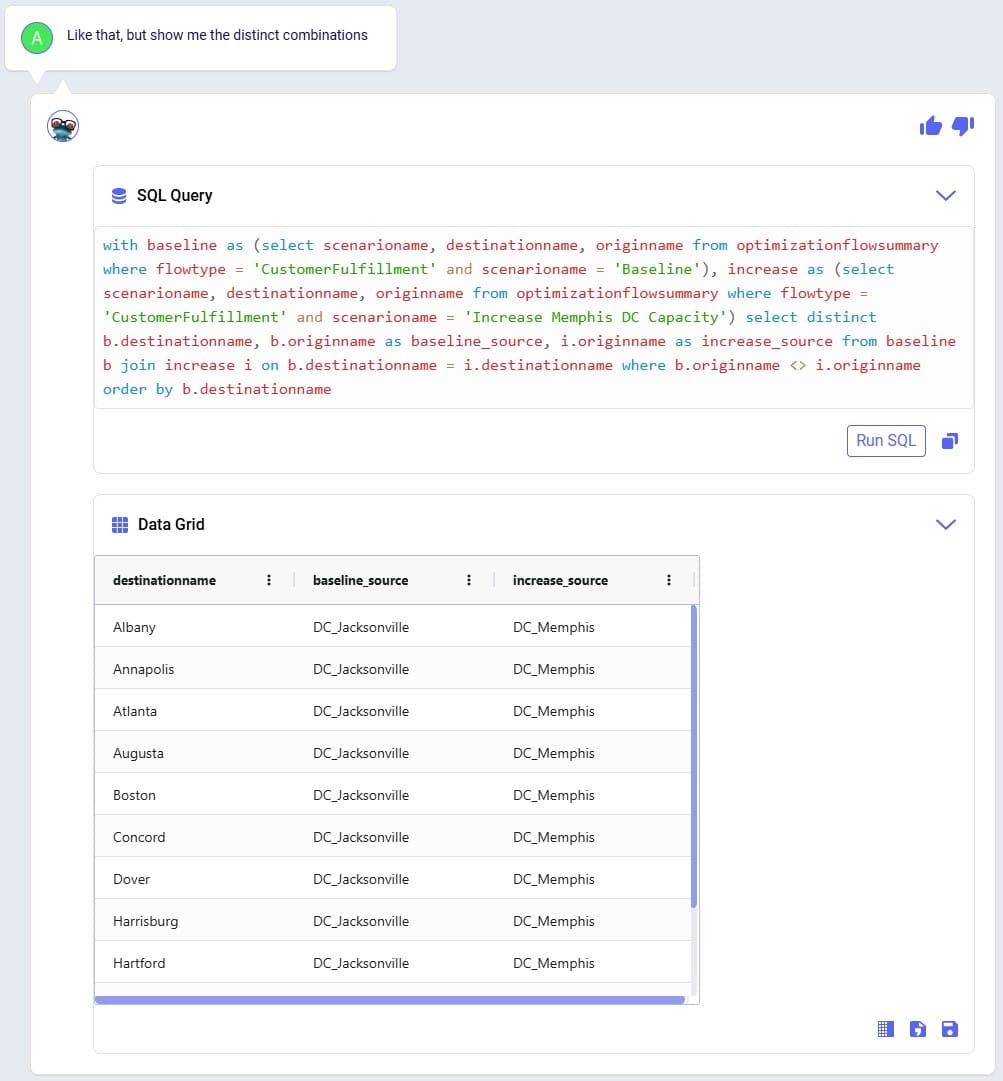

Leapfrog’s response is almost what we want it to be, however it has duplicates in the Data Grid. Therefore, we follow our previous prompt up with the next one (number 15), where we ask to see only distinct combinations. Instead of “distinct” we could have also used the word “unique” in our prompt:

We see that the source for around 11-12 customers changed from the DC in Jacksonville in the Baseline to the DC in Memphis in the Increase Memphis DC Capacity scenario.
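The effect of asking for distinct combinations can be reproduced with a small sketch. It uses Python with an in-memory SQLite table; the table name, column names, and rows are hypothetical and much simpler than the actual output tables. Duplicates arise here because a customer receives several products, so the same customer and source pair appears once per product combination.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical, simplified flow output table.
conn.execute(
    "CREATE TABLE flows (scenarioname TEXT, customername TEXT, "
    "productname TEXT, sourcename TEXT)"
)
rows = [
    ("Baseline", "CUST_01", "P1", "DC_Jacksonville"),
    ("Baseline", "CUST_01", "P2", "DC_Jacksonville"),
    ("Baseline", "CUST_02", "P1", "DC_Reno"),
    ("Increase Memphis DC Capacity", "CUST_01", "P1", "DC_Memphis"),
    ("Increase Memphis DC Capacity", "CUST_01", "P2", "DC_Memphis"),
    ("Increase Memphis DC Capacity", "CUST_02", "P1", "DC_Reno"),
]
conn.executemany("INSERT INTO flows VALUES (?, ?, ?, ?)", rows)

# Customers whose source differs between the two scenarios.
query = """
SELECT {distinct} b.customername,
       b.sourcename AS baseline_source,
       s.sourcename AS scenario_source
FROM flows AS b
JOIN flows AS s ON s.customername = b.customername
WHERE b.scenarioname = 'Baseline'
  AND s.scenarioname = 'Increase Memphis DC Capacity'
  AND b.sourcename <> s.sourcename
"""
with_duplicates = conn.execute(query.format(distinct="")).fetchall()
distinct_rows = conn.execute(query.format(distinct="DISTINCT")).fetchall()
print(len(with_duplicates), distinct_rows)
```

Without DISTINCT the single changed customer is listed several times; with it, each customer and source combination appears once.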

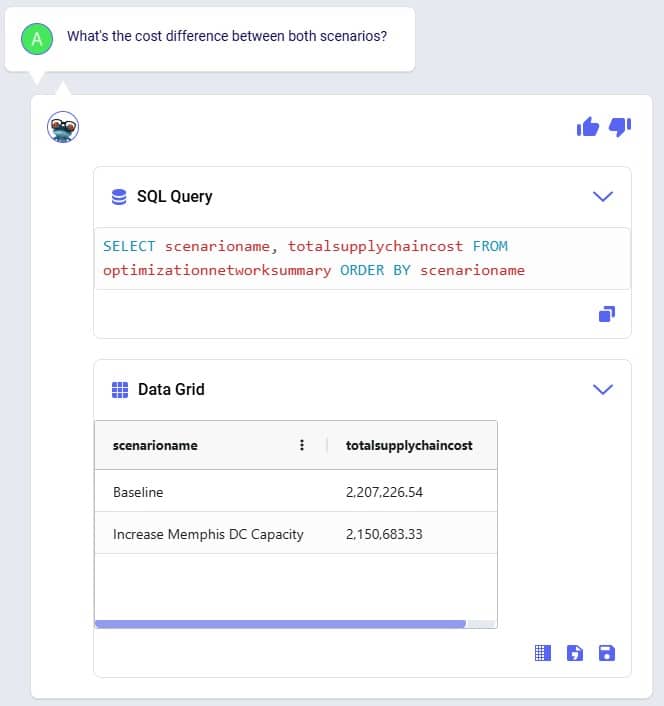

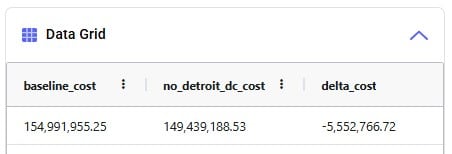

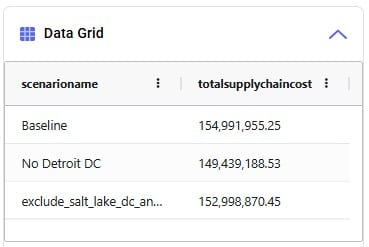

Cost comparisons between scenarios are usually interesting too, so that is what prompt number 16 asks about:

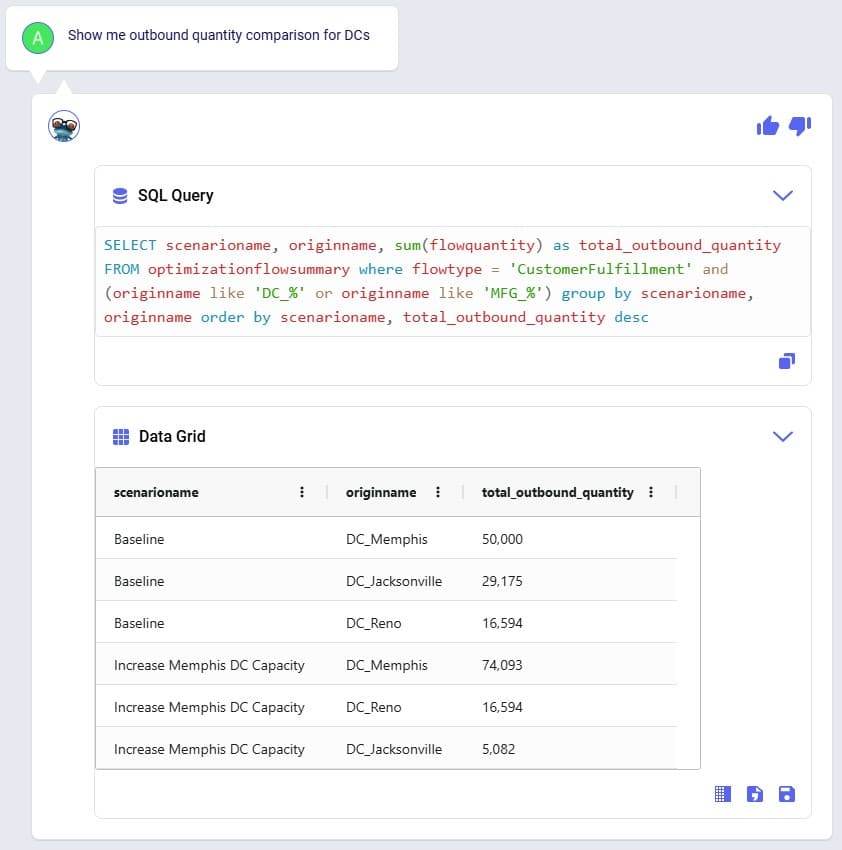

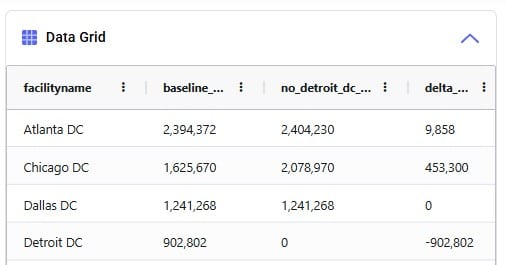

We notice that increasing the throughput capacity at DC_Memphis leads to a lower total supply chain cost by about 56.5k USD. Next, we want to see how much flow has shifted between the DCs in the Baseline scenario compared to the Increase Memphis DC Capacity scenario, which is what the last prompt (number 17) asks about:

This tells us that the throughput at DC_Reno is the same in both scenarios, but that increasing the DC_Memphis throughput capacity allows a shift of about 24k units from the DC in Jacksonville to the DC in Memphis (which was at its maximum 50k throughput in the Baseline scenario). This volume shift is what leads to the reduction in total supply chain cost.

We hope this gives you a good idea of what Leapfrog is capable of today. Stay tuned for more exciting features to be added in future releases!

Do you have any Leapfrog questions or feedback? Feel free to use the Frogger Pond Community to ask questions, share your experiences, and provide feedback. Or, shoot us an email at Leapfrog@Optilogic.com.

Happy Leapfrogging!

PERSONA: Alex is an experienced supply chain modeler who knows exactly what to analyze but often spends too much time pulling and formatting outputs. They are looking to be more efficient in summarizing results and identifying key drivers across scenarios. While confident in their domain expertise, they want help extracting insights faster without losing control or accuracy. They see AI as a time-saving partner that helps them focus on decision-making, not data wrangling.

USE CASE: After running multiple scenarios in Cosmic Frog, Alex wants to quickly understand the key differences in cost and service across designs. Instead of manually exporting data or writing SQL queries, Alex uses Leapfrog to ask natural-language questions, which saves Alex hours and lets them focus on insight generation and strategic decision-making.

Model to use: Global Supply Chain Strategy (available under Get Started Here in the Explorer).

Prompt #1

Prompt #2

Prompt #3

Prompt #4

Prompt #5

PERSONA: Chris is an experienced supply chain modeler with a well-established, repeatable workflow that pulls data from internal systems to rebuild models every quarter. He relies on consistency in the model schema to keep his automation running smoothly.

USE CASE: With an upcoming schema change in Cosmic Frog, Chris is concerned about disruptions or errors in his process and wants Leapfrog to help provide info on the changes that may require him to update his workflow.

Model to use: any.

Prompt #1

Prompt #2

Prompt #3

PERSONA: Larry Loves LLMs – he wants to use an LLM to find answers.

USE CASE: I need to understand the outputs of this model someone else built. I want to know how many products come from each supplier for each network configuration. Can Leapfrog help with that?

Yes, Leapfrog can help with that! Let's use Anura Help to better understand which tables have that data and then ask Text2SQL to pull the data.

Model to use: any; Global Supply Chain Strategy (available under Get Started Here in the Explorer).

Prompt #1

Prompt #2

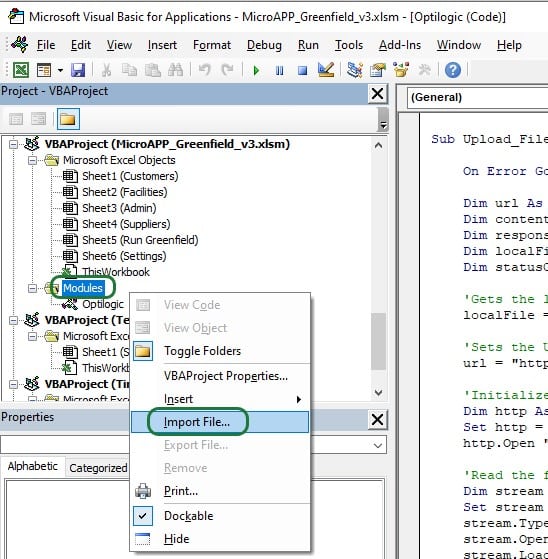

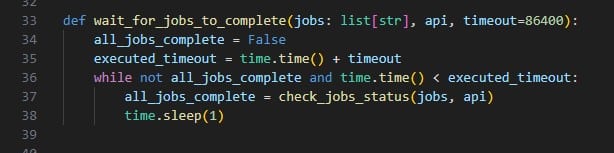

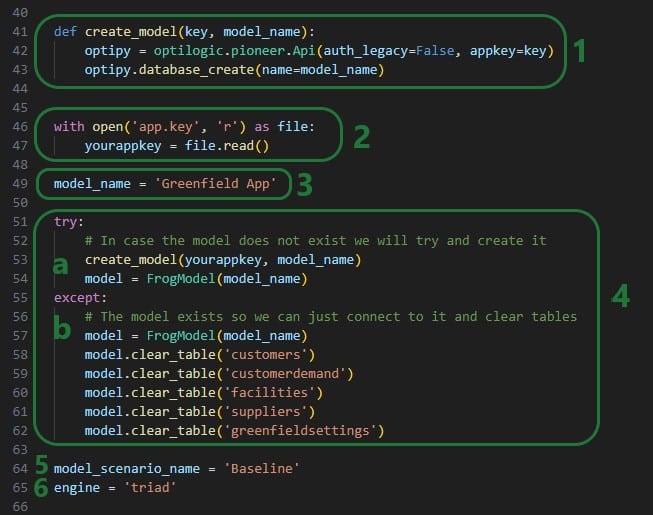

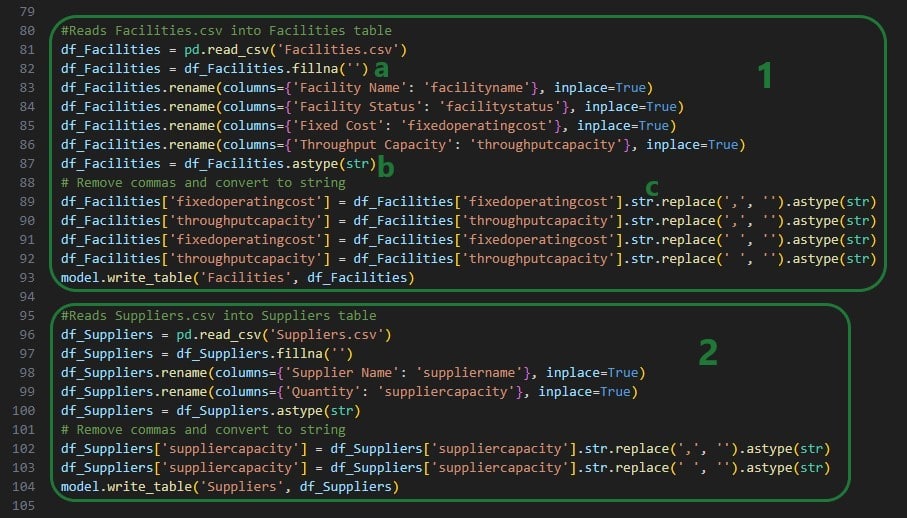

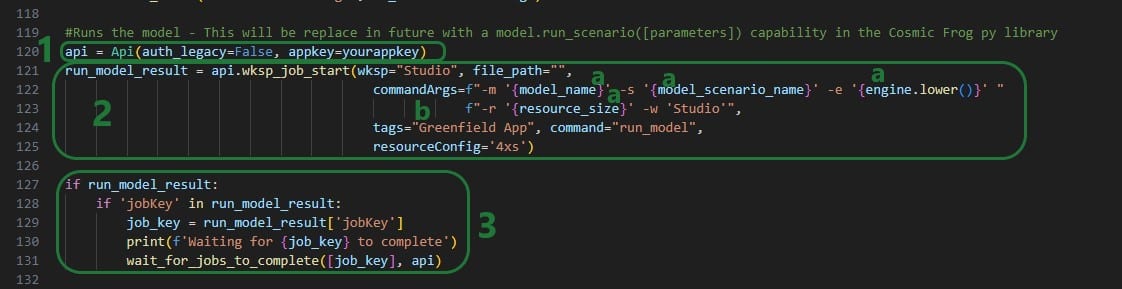

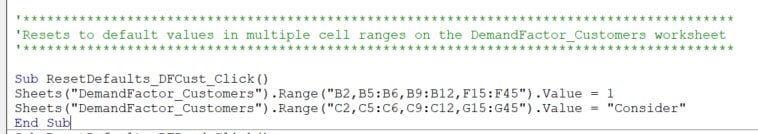

Optilogic has developed the Cosmic Frog for Excel Application Builder (also referred to as App Builder in this documentation) so that users can build basic Cosmic Frog for Excel Applications, which interact directly with Cosmic Frog from within Excel, without needing to write any code. In this App Builder, users can build their own workflows using common actions like creating a new model, connecting to an existing model, importing & exporting data, creating & running scenarios, and reviewing outputs. Once a workflow has been established, the App can be deployed so it can be shared with other users. These other users do not need to build the workflow of the App again; they can just use the App as is. In this documentation we will take a user through the steps of a complete workflow build, including App deployment.

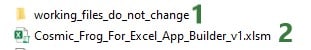

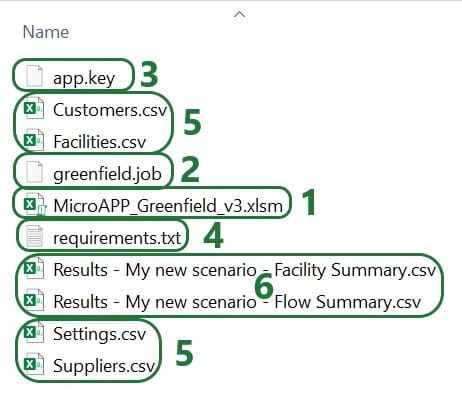

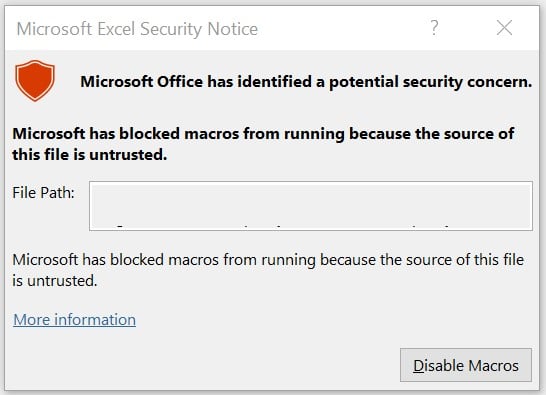

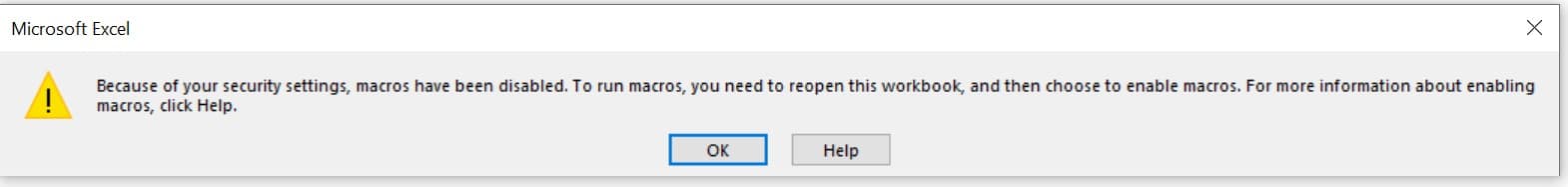

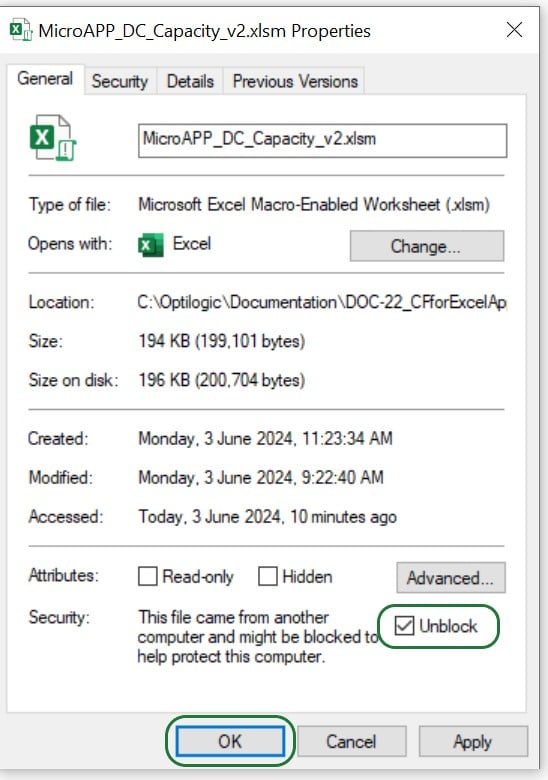

You can download the Cosmic Frog for Excel – App Builder from the Resource Library. A video showing how the App Builder is used in a nutshell is included; this video is recommended viewing before reading further. After downloading the .zip file from the Resource Library and unzipping it on your local computer, you will find there are 2 folders included: 1) Cosmic_Frog_For_Excel_App_Builder, which contains the App Builder itself and is the focus of this documentation, and 2) Cosmic_Frog_For_Excel_Examples, which contains 3 examples of how the App Builder can be used. This documentation will not discuss these examples in detail; users are however encouraged to browse through them to get an idea of the types of workflows one can build with the App Builder.

The Cosmic_Frog_For_Excel_App_Builder folder contains 1 subfolder and 1 Macro-enabled Excel file (.xlsm):

When ready to start building your own first basic App, open the Cosmic_Frog_For_Excel_App_Builder_v1.xlsm file; the next section will describe the steps a user needs to take to start building.

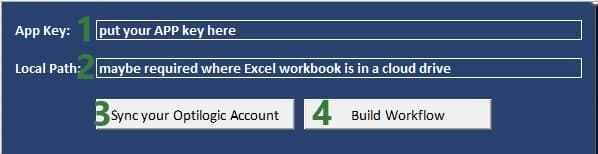

When you open the Cosmic_Frog_For_Excel_App_Builder_v1.xlsm file in Excel, you will find there are 2 worksheets present in the workbook, Start and Workflow. The top of the Start worksheet looks like this:

Going to the Workflow worksheet and clicking on the Cosmic Frog tab in the ribbon, we can see the actions that are available to us to create our basic Cosmic Frog for Excel Applications:

We will now walk through building and deploying a simple App to illustrate the different Actions and their configurations. This workflow will: connect to a Greenfield model in my Optilogic account, add records to the Customer and CustomerDemand tables, create a new scenario with 2 new scenario items in it, run this new scenario, and then export the Greenfield Facility Summary output table from the Cosmic Frog model into a worksheet of the App. As a last step we will also deploy the App.

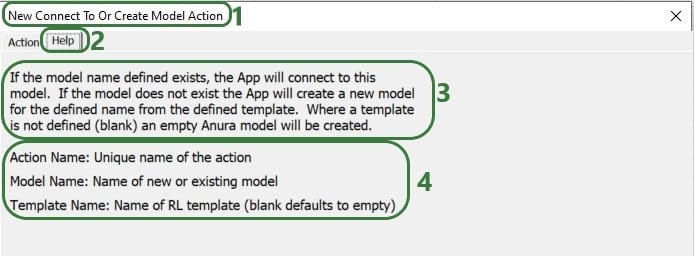

On the Workflow worksheet, we will start building the workflow by first connecting to an existing model in my Optilogic account:

The following screenshot shows the Help tab of the “Connect To Or Create Model Action”:

In the remainder of the documentation, we will not show the Help tab of each action. Users are however encouraged to use these to understand what the action does and how to configure it.

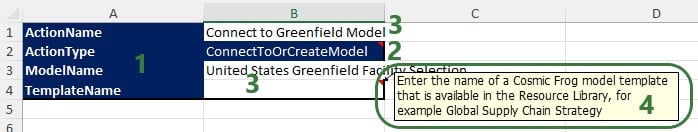

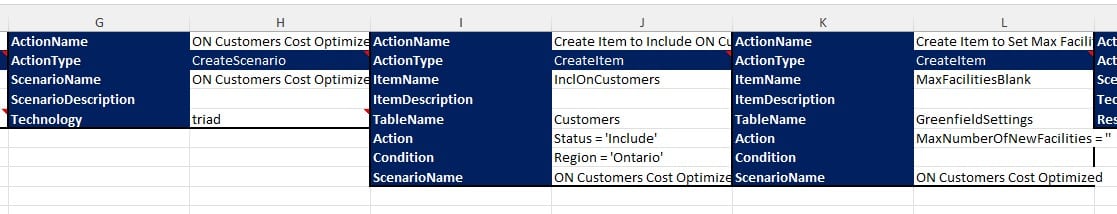

After creating an action, its details will be added to 2 columns in the Workflow worksheet, see screenshot below. The first action of the workflow will use columns A & B, the next action C & D, etc. When adding actions, the placement on the Workflow worksheet is automatic and user does not need to do or change anything. Blue fields contain data that cannot be changed; white fields are user inputs set when configuring the action and can also be changed in the worksheet itself.

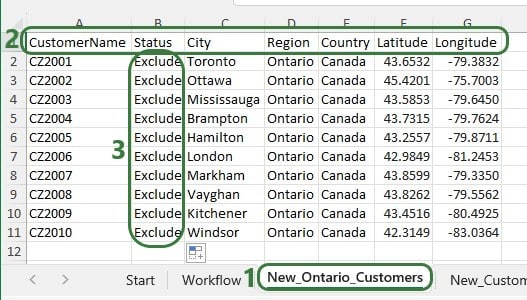

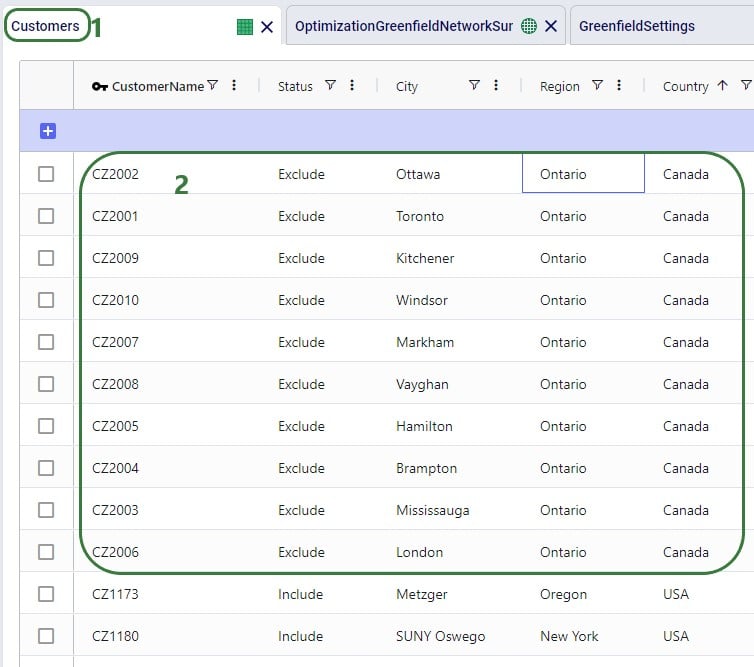
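The two-columns-per-action layout described above can be expressed as a small calculation, which may help when locating a given action on the Workflow worksheet. The Python helper names below are hypothetical and are not part of the App Builder.

```python
def column_letter(index: int) -> str:
    """Convert a 1-based column index to an Excel column letter (1 -> A, 27 -> AA)."""
    letters = ""
    while index > 0:
        index, remainder = divmod(index - 1, 26)
        letters = chr(ord("A") + remainder) + letters
    return letters


def action_columns(action_number: int) -> tuple[str, str]:
    """Return the pair of columns used by the n-th action (1-based) of a workflow."""
    first = 2 * action_number - 1
    return column_letter(first), column_letter(first + 1)


for n in (1, 2, 4, 8):
    print(n, action_columns(n))
```

For example, the first action maps to columns A & B and the second to C & D, as stated above.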

The United States Greenfield Facility Selection model we are connecting to contains about 1.3k customer locations in the US which have demand for 3 products: Rockets, Space Suits, and Consumables. As part of this workflow, we will add 10 customers located in the province of Ontario in Canada to the Customers table and add demand for each of these customers for each product to the CustomerDemand table. The next 2 screenshots show the customer and customer demand data that will be added to this existing model.

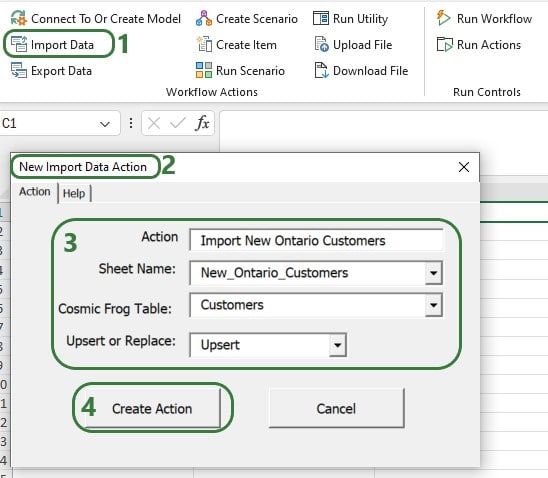

First, we will use an Import Data action to append the new customers to the Customers table in the model we are connecting to:

Next, use the Import Data Action again to upsert the data contained in the New_CustomerDemand worksheet to the CustomerDemand table in the Cosmic Frog model, which will be added to columns E & F. After these 2 Import Data actions have been added, our workflow now looks like this:

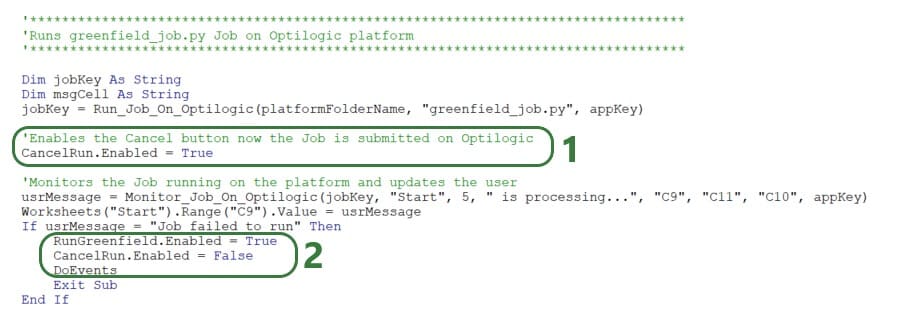
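The 2 Import Data actions use different import methods: append and upsert. As a general illustration of the difference, the sketch below uses Python with an in-memory SQLite table: an append always adds rows, whereas an upsert updates a row when a matching key already exists and inserts it otherwise. The customer names, quantities, and the choice of customer plus product as the matching key are assumptions for this example; how the App Builder determines a match is not covered here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical, simplified demand table keyed on customer and product.
conn.execute(
    "CREATE TABLE customerdemand (customername TEXT, productname TEXT, "
    "quantity REAL, PRIMARY KEY (customername, productname))"
)
conn.execute("INSERT INTO customerdemand VALUES ('CZ_Toronto', 'Rockets', 100)")

new_rows = [
    ("CZ_Toronto", "Rockets", 250),  # key exists: quantity is updated
    ("CZ_Ottawa", "Rockets", 80),    # key is new: row is inserted
]
conn.executemany(
    """
    INSERT INTO customerdemand (customername, productname, quantity)
    VALUES (?, ?, ?)
    ON CONFLICT (customername, productname)
    DO UPDATE SET quantity = excluded.quantity
    """,
    new_rows,
)

result = conn.execute(
    "SELECT customername, productname, quantity FROM customerdemand "
    "ORDER BY customername"
).fetchall()
print(result)
```

After the upsert the table holds 2 rows rather than 3, because the existing record was updated in place instead of being duplicated.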

Now that the new customers and their demand have been imported into the model, we will add several actions to create a new scenario where the new customers will be included. In this scenario, we will also remove the Max Number of New Facilities value, so the Greenfield algorithm can optimize the number of new facilities just based on the costs specified in the model. After setting up the scenario, an action will be added to run it.

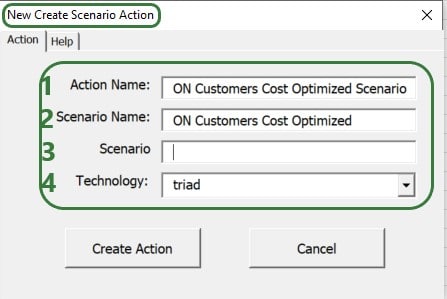

Use the Create Scenario action to add a new scenario to the model:

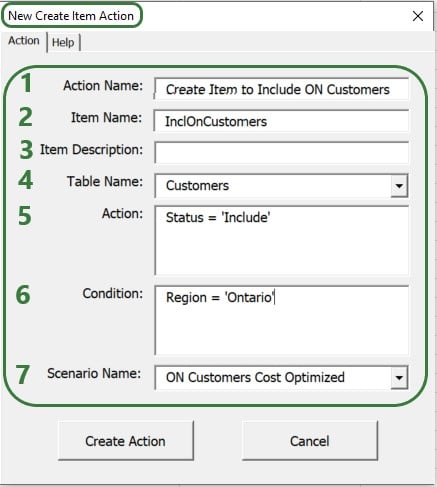

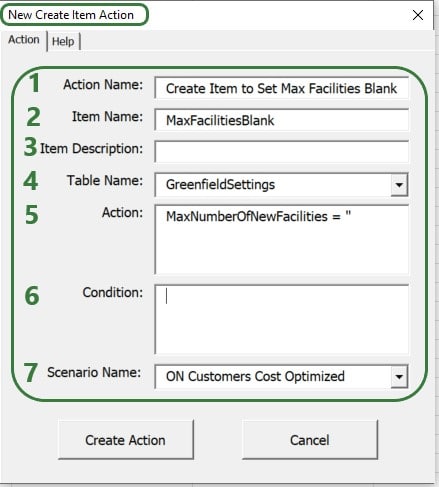

Then, use 2 Create Item Actions to 1) include the Ontario customers and 2) remove the Max Number Of New Facilities value:

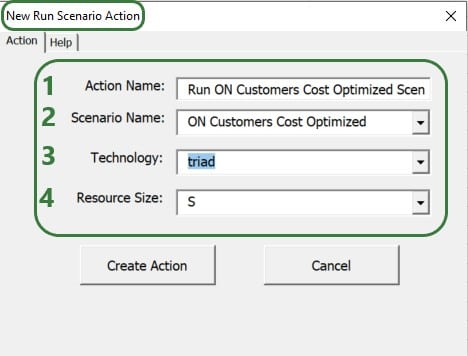

After setting up the scenario and its 2 items, the next step of the workflow will be to run it. We add a Run Scenario action to the workflow to do so:

The configuration of this action takes the following inputs:

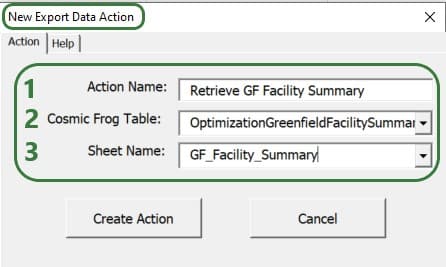

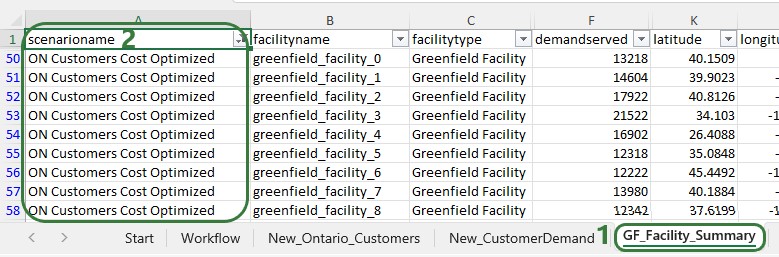

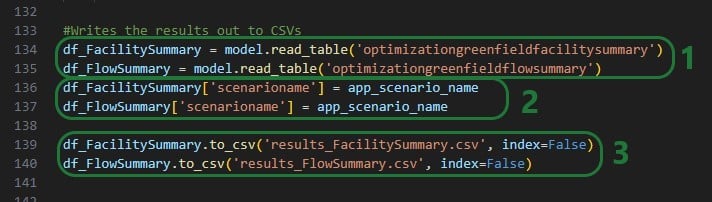

We now have a workflow that connects to an existing US Greenfield model, adds Ontario customers and their demand to this model, then creates and runs a new scenario with 2 items in this Cosmic Frog model. After running the scenario, we want to export the Optimization Greenfield Facility Summary output table from the Cosmic Frog model and load it into a new worksheet in the App. We do so by adding an Export Data Action to the workflow:

After adding the above actions to the workflow, the workflow worksheet now looks like the following 2 screenshots from column G onwards (columns A-F contain the first 3 actions as shown in a screenshot further above):

Columns G-H contain the details of the action that created the new ON Customers Cost Optimized scenario, and columns I-J & K-L contain the details of the actions that added the 2 scenario items to this scenario.

Columns M-N contain the details of the action that will run the scenario that was added and columns O-P those of the action that will export the selected output table (Optimization Greenfield Facility Summary) into the GF_Facility_Summary worksheet of the App.

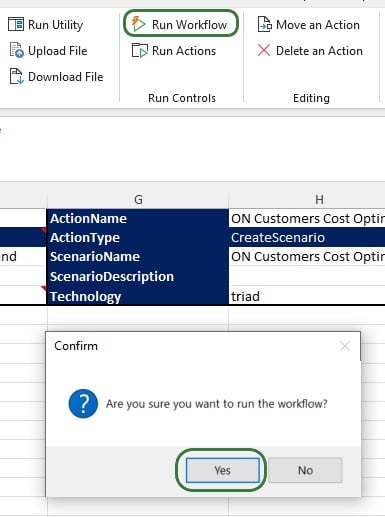

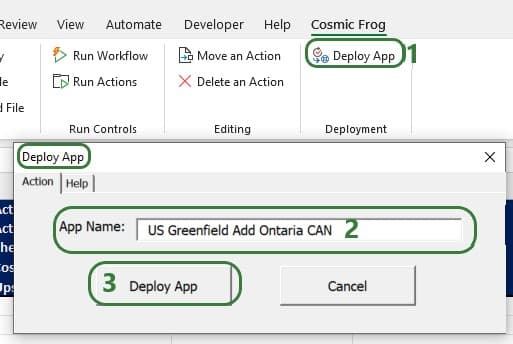

To run the completed Workflow, all we need to do is click on the Run Workflow action and confirm we want to run it:

After kicking off the workflow, if we switch to the Start worksheet, details of the run and its progress are shown in rows 9-11:

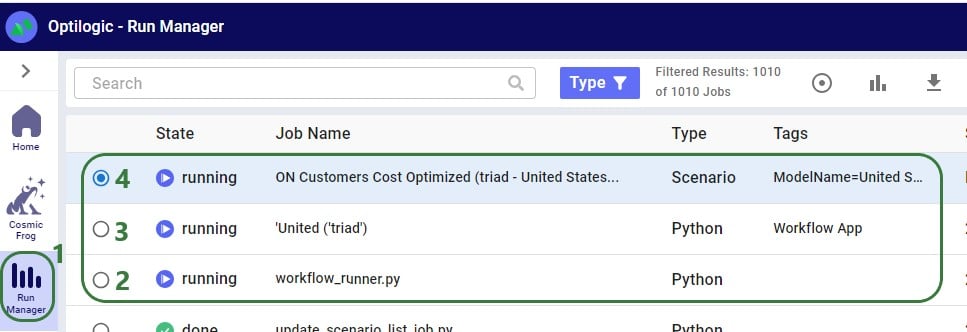

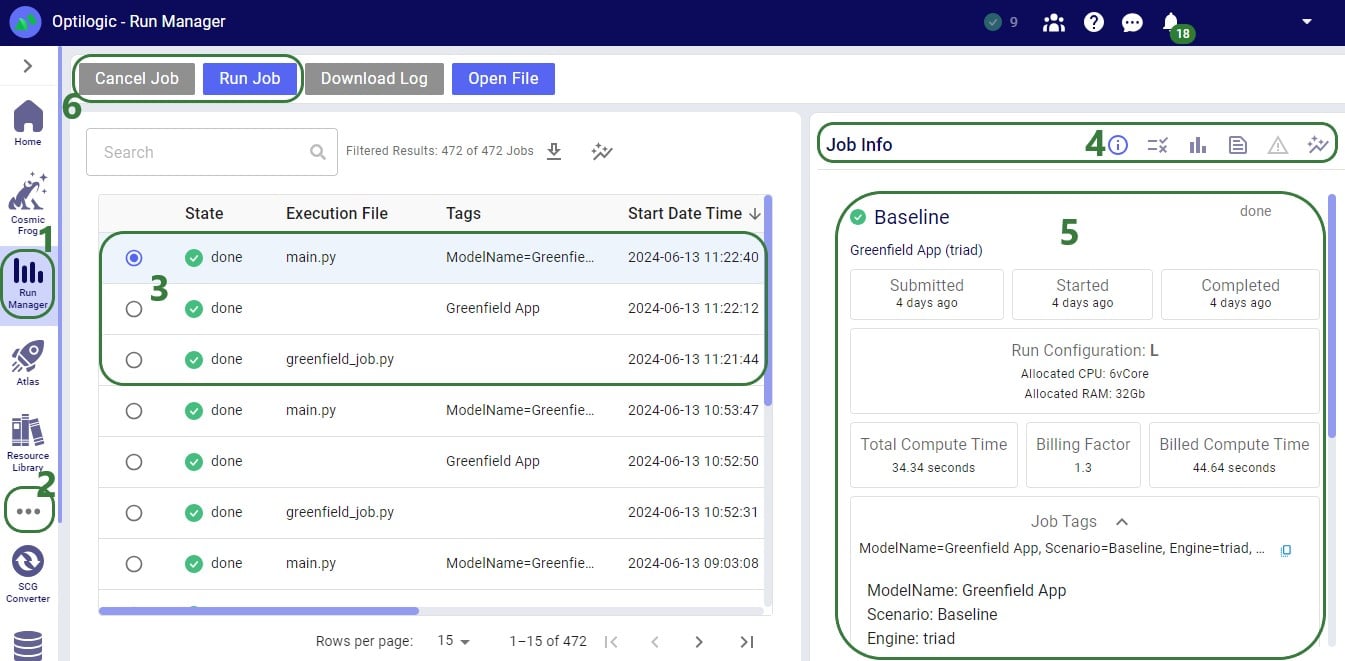

Looking on the Optilogic Platform, we can also check the progress of the App run and the Cosmic Frog model changes:

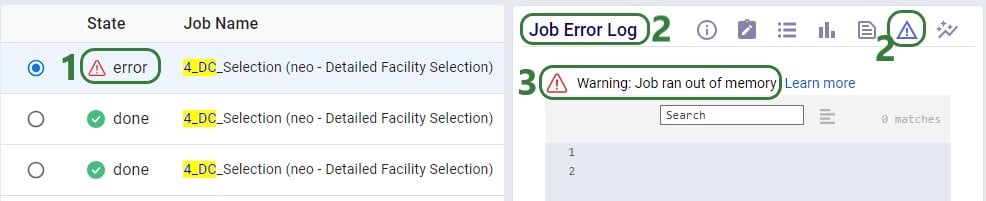

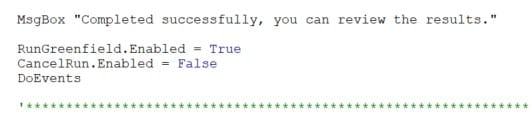

Once the run is done, all 3 jobs will have their State changed to Done, unless an error occurred, in which case the State will say Error.

Checking the United States Greenfield Facility Selection model itself in the Cosmic Frog application on cosmicfrog.com:

Once the App is finished running, we see that a worksheet named GF_Facility_Summary was added to the App Builder:

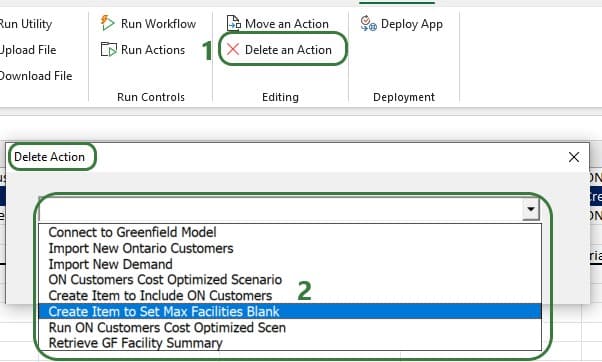

There are several other actions that users of the App Builder can incorporate into a workflow or use to facilitate workflow building. We will cover these now. Feel free to skip ahead to the “Deploying the App” section if your workflow is complete at this stage.

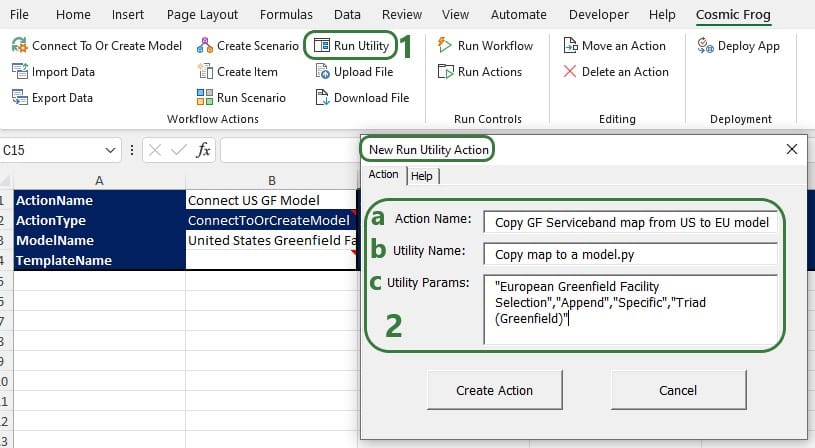

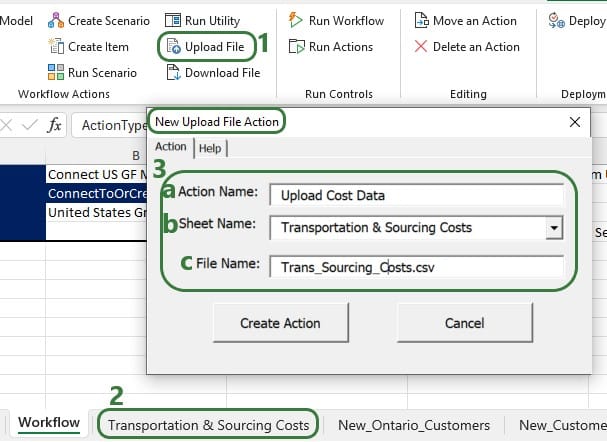

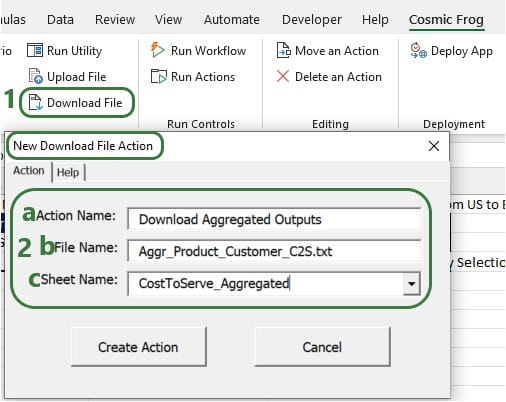

Additional actions that can be incorporated into workflows are the Run Utility, Upload File, and Download File actions. The Run Utility action can be used to run a Cosmic Frog Utility (a Python script), which currently can be a Utility downloaded from the Resource Library or a Utility specifically built for the App.

There are currently 4 Utilities available in the Resource Library: