When running geocoding through the default Mapbox provider, all of the available location data from the Customers, Facilities and Suppliers tables is used to try to determine the latitude and longitude coordinates. Mapbox uses all of these components to find the best possible match and returns a latitude / longitude coordinate along with a confidence score. By default, Cosmic Frog only accepts results with a confidence score of 100. You can optionally turn this option off, in which case the result with the highest confidence score returned by Mapbox is used.
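To make the effect of the confidence setting concrete, here is a minimal Python sketch of the selection rule described above. It assumes each geocoding candidate is a dict with hypothetical `lat`, `lon` and `confidence` keys. It illustrates the rule only and is not Cosmic Frog's or Mapbox's actual code or response format.

```python
from typing import Optional


def pick_geocode(candidates: list, require_full_confidence: bool = True) -> Optional[dict]:
    """Select a geocoding result according to the confidence rule.

    candidates: hypothetical dicts with 'lat', 'lon' and 'confidence' (0-100) keys.
    """
    if not candidates:
        return None
    if require_full_confidence:
        # Default behavior: only a result with a confidence score of 100 is accepted.
        full = [c for c in candidates if c["confidence"] == 100]
        return full[0] if full else None
    # Option turned off: use the highest-confidence result instead.
    return max(candidates, key=lambda c: c["confidence"])


results = [
    {"lat": 40.71, "lon": -74.01, "confidence": 85},
    {"lat": 40.73, "lon": -73.99, "confidence": 92},
]
print(pick_geocode(results))                                 # None
print(pick_geocode(results, require_full_confidence=False))  # the candidate with confidence 92
```

With the default setting, a location whose best match scores below 100 is left without coordinates; turning the option off trades that strictness for coverage.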

More information on how Mapbox calculates latitude and longitude coordinates can be found here: Mapbox Geocoding Documentation.

If you’d like to use an alternate provider instead of Mapbox, setup instructions can be found here: Using Alternate Geocoding Providers.

Every account holder can create the Global Supply Chain Strategy demo model. The following is an overview of the features of the model (and of Cosmic Frog).

If you wish to build the model instead, please follow the instructions located here: Build Your First Cosmic Frog Model

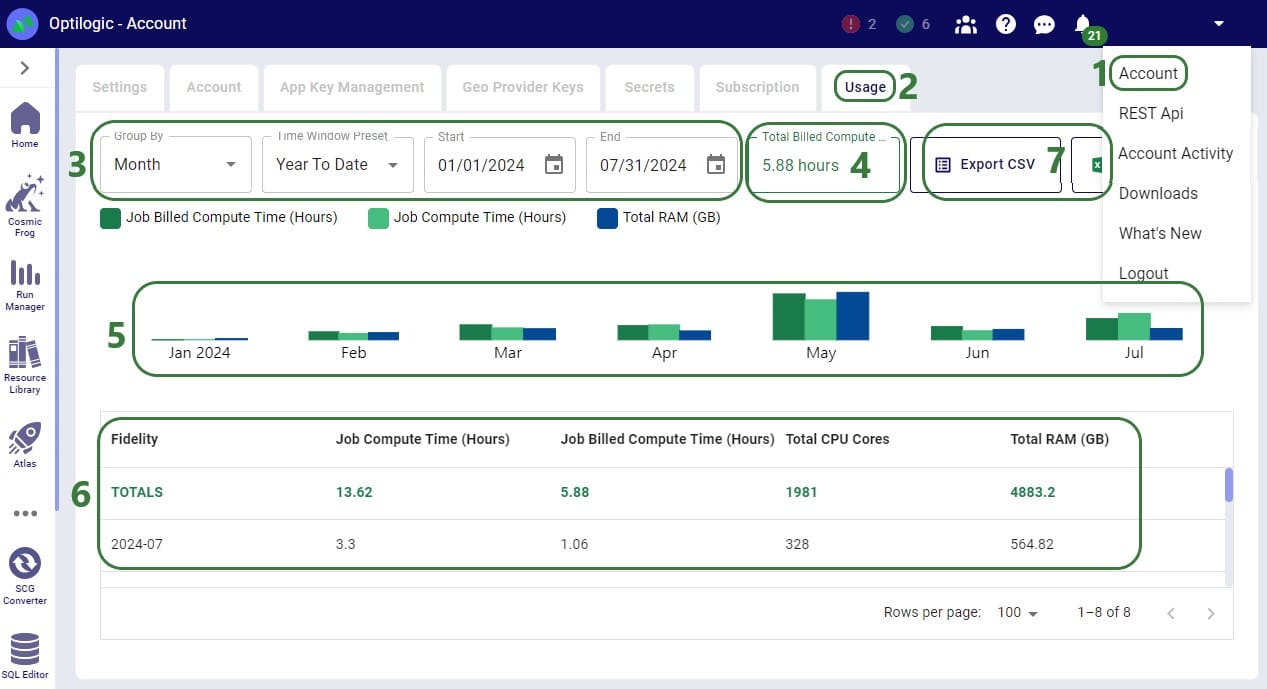

When running Cosmic Frog models and other jobs on the Optilogic platform, cloud resources are used. Usage of these resources is billed based on the billing factor of the resource used for the job. Each Optilogic customer has an amount of cloud compute hours included in their Master License Agreement (MLA). Users may want to check how many of these hours have been used, and this documentation covers two ways to do so. In the last section, we touch on how best to track hours at the team/company level.

The first option for hours tracking that will be covered is through the Usage tab in the user’s Account:

If a user is asked by their manager to report the hours they have used on the Optilogic platform, they can go here and use the Custom Time Window Preset option to align the start and end date of the reporting period with the dates of the MLA. They can then report back the number shown as the Total Billed Compute Time (box 4 in the above screenshot).

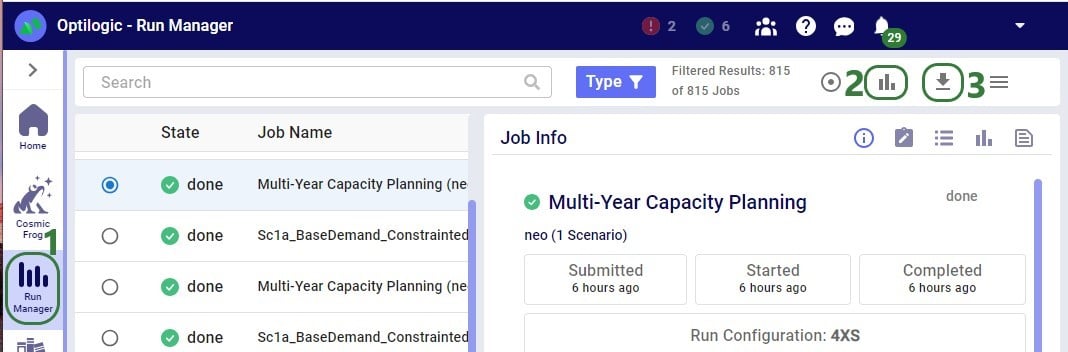

Through the Run Manager application on the Optilogic platform, users can also analyze the jobs they have run, including retrieving the Total Billed Compute Time:

After clicking on the View Charts icon, a screen similar to the following screenshot will be shown:

If a user needs to report their hours used on the Optilogic platform, they can download this jobs.csv file and:

Currently, usage hours can only be tracked at the individual user level, as described above. To get total team or company usage, a manager can ask their users to report their Total Billed Compute Time using one of the two methods above, and then add these up to get the total hours used so far. Tracking at the team/company level is planned to be made available on the Optilogic platform later in 2024.
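If users report their hours by sending the jobs.csv files downloaded from Run Manager, the filtering and adding up can be scripted. The following is a minimal Python sketch, not an Optilogic tool: the column names `start_date` and `billed_compute_hours` are hypothetical placeholders (check the header row of your own export), and it assumes dates are ISO 8601 strings and billed time is in decimal hours. It restricts jobs to the MLA date window and then totals the per-user results.

```python
import csv
from datetime import date

# Hypothetical column names: replace with the headers in your own jobs.csv export.
TIME_COLUMN = "billed_compute_hours"
DATE_COLUMN = "start_date"


def billed_hours(csv_path, start: date, end: date,
                 time_column: str = TIME_COLUMN, date_column: str = DATE_COLUMN) -> float:
    """Sum billed compute hours for jobs whose date falls within [start, end].

    Assumes dates begin with YYYY-MM-DD and times are decimal hours.
    """
    total = 0.0
    with open(csv_path, newline="", encoding="utf-8") as f:
        for row in csv.DictReader(f):
            job_day = date.fromisoformat(row[date_column][:10])
            if start <= job_day <= end:
                # Treat an empty cell as zero hours.
                total += float(row[time_column] or 0)
    return total


def team_total(per_user_csvs: dict, start: date, end: date) -> dict:
    """Return each user's billed hours plus a 'TOTAL' entry for the team."""
    totals = {user: billed_hours(path, start, end) for user, path in per_user_csvs.items()}
    totals["TOTAL"] = sum(totals.values())
    return totals


# Example (file names are placeholders):
# team_total({"alice": "alice_jobs.csv", "bob": "bob_jobs.csv"},
#            date(2024, 1, 1), date(2024, 12, 31))
```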

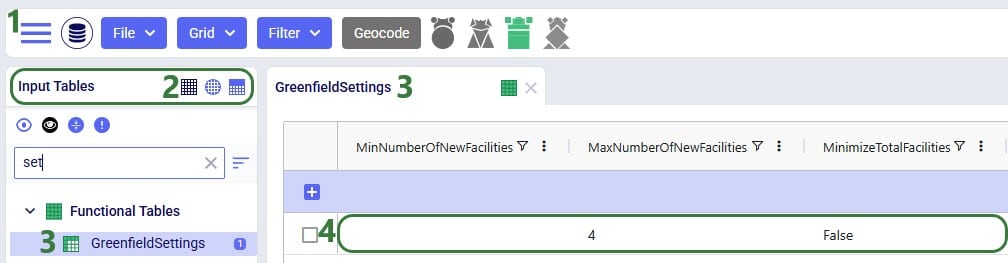

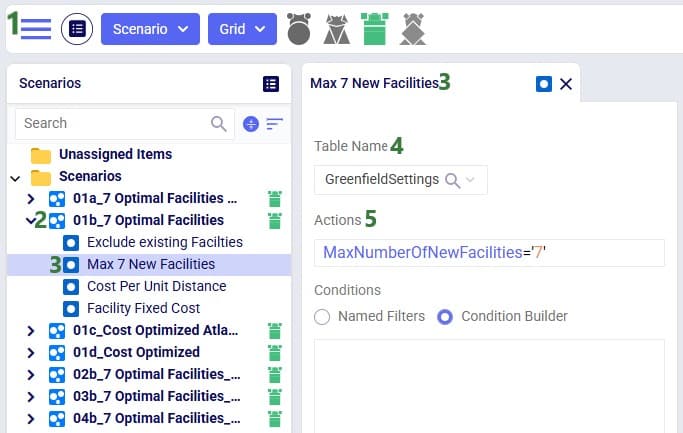

With Intelligent Greenfield Analysis (the Triad engine in Cosmic Frog), you have control over several different solve settings. For ease of use with scenario modeling, these have been placed in a dedicated table called Greenfield Settings. This allows for quick scenario building that leverages the column names. We will cover the settings which can be configured on the Greenfield Settings table and show an example of how scenarios can be used to change these settings.

The following screenshot shows the Greenfield Settings table:

An explanation of each setting is as follows:

Note that the “Getting Started with Intelligent Greenfield Analysis” help article contains a visual explanation of customer clustering too.

Finally, we will look at an example where a scenario item changes a Greenfield setting:

In this section, we outline some techniques for debugging models in Cosmic Frog. In general, there’s no one “right” approach to debugging, but knowing where you can get information on what went wrong can be helpful.

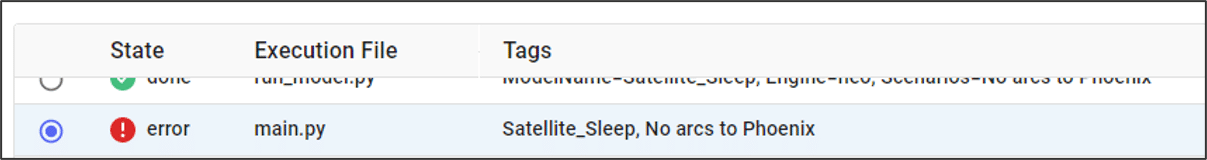

An error state in the Run Manager is the most obvious sign that something is wrong with our model setup.

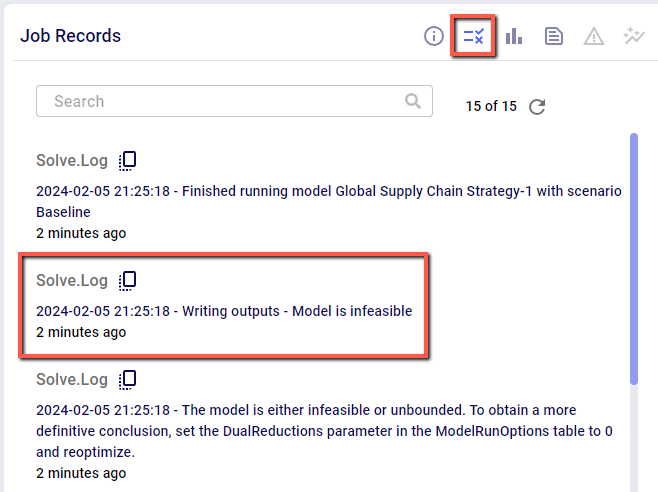

After reaching an error state, the first place to check is the Job Records section of the Run Manager. Here you will find a summary of events from the model solve, and if an error is thrown you can potentially see the cause directly from here:

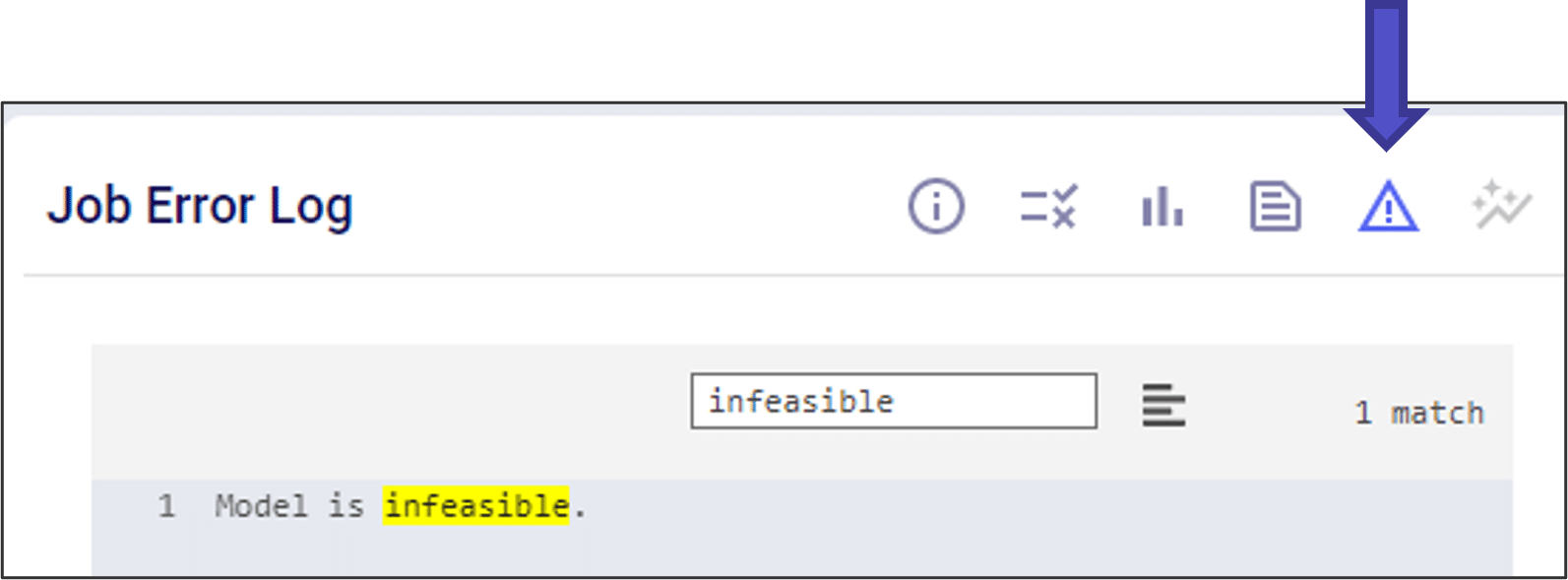

Next, you can check the Job Error Log. The Job Error Log will contain more detailed messaging on errors that are thrown during a model solve. While there are a number of possible errors, the most common cause of an error state is an infeasible model. You can check if your model is infeasible by scrolling down to the bottom of the Job Error Log, or by searching for “infeasible” in the search bar.
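If you have copied the Job Error Log text out of Run Manager, the same keyword search can also be done in a script. This is a minimal Python sketch; the function name is hypothetical and not part of Cosmic Frog. It matches case-insensitively and returns line numbers, so the last hit tells you how close to the bottom of the log the message appears.

```python
def find_keyword_lines(log_text: str, keyword: str = "infeasible") -> list:
    """Return (line_number, line) pairs whose text contains keyword, case-insensitively."""
    needle = keyword.lower()
    return [
        (i, line.strip())
        for i, line in enumerate(log_text.splitlines(), start=1)
        if needle in line.lower()
    ]


# Example (the file name is a placeholder for wherever you saved the log text):
# with open("job_error_log.txt", encoding="utf-8") as f:
#     hits = find_keyword_lines(f.read())
# print(hits[-1] if hits else "No 'infeasible' message found")
```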

If your model is infeasible, you can use the following toolkit to help understand why:

Sometimes even a model that finishes running can give results that we do not expect. It is a good habit to check your Output Summary Tables after each run to make sure the results look as you expect.

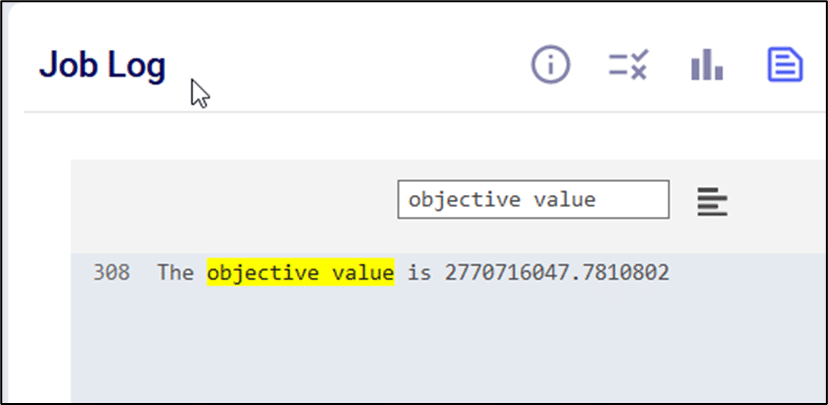

In some cases, the output tables might not populate even if the model runs successfully. Even then, you can find the Gurobi optimization results in the job log. One useful tip is to search for “objective value” in the job log and make sure the value is in the range you expect.
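The “objective value” check can be scripted as well. The following minimal Python sketch assumes the number appears on the same line, after the words “objective value”. That line format is an assumption about the log, not a documented Gurobi or Cosmic Frog format. The expected range is whatever you consider plausible for your model.

```python
import re

# Assumption: a log line reads like "... objective value ... <number>" on one line.
_OBJ = re.compile(
    r"objective value[^\d\n]*?(-?\d[\d,]*\.?\d*(?:[eE][+-]?\d+)?)",
    re.IGNORECASE,
)


def objective_values(log_text: str) -> list:
    """Extract every number that follows the phrase 'objective value' on the same line."""
    return [float(m.group(1).replace(",", "")) for m in _OBJ.finditer(log_text)]


def check_objective(log_text: str, low: float, high: float) -> bool:
    """True if the last reported objective value lies within [low, high]."""
    values = objective_values(log_text)
    return bool(values) and low <= values[-1] <= high
```

Using the last match is a deliberate choice: solver logs typically report intermediate values before the final one.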

If your model is running, but seems incorrect, you can use the following toolkit to help understand why:

If you are still having trouble, you can also reach out to us directly at support@optilogic.com.

Watch the video for an introduction to the features and functions of the Optilogic Intelligent Greenfield (Triad) engine.

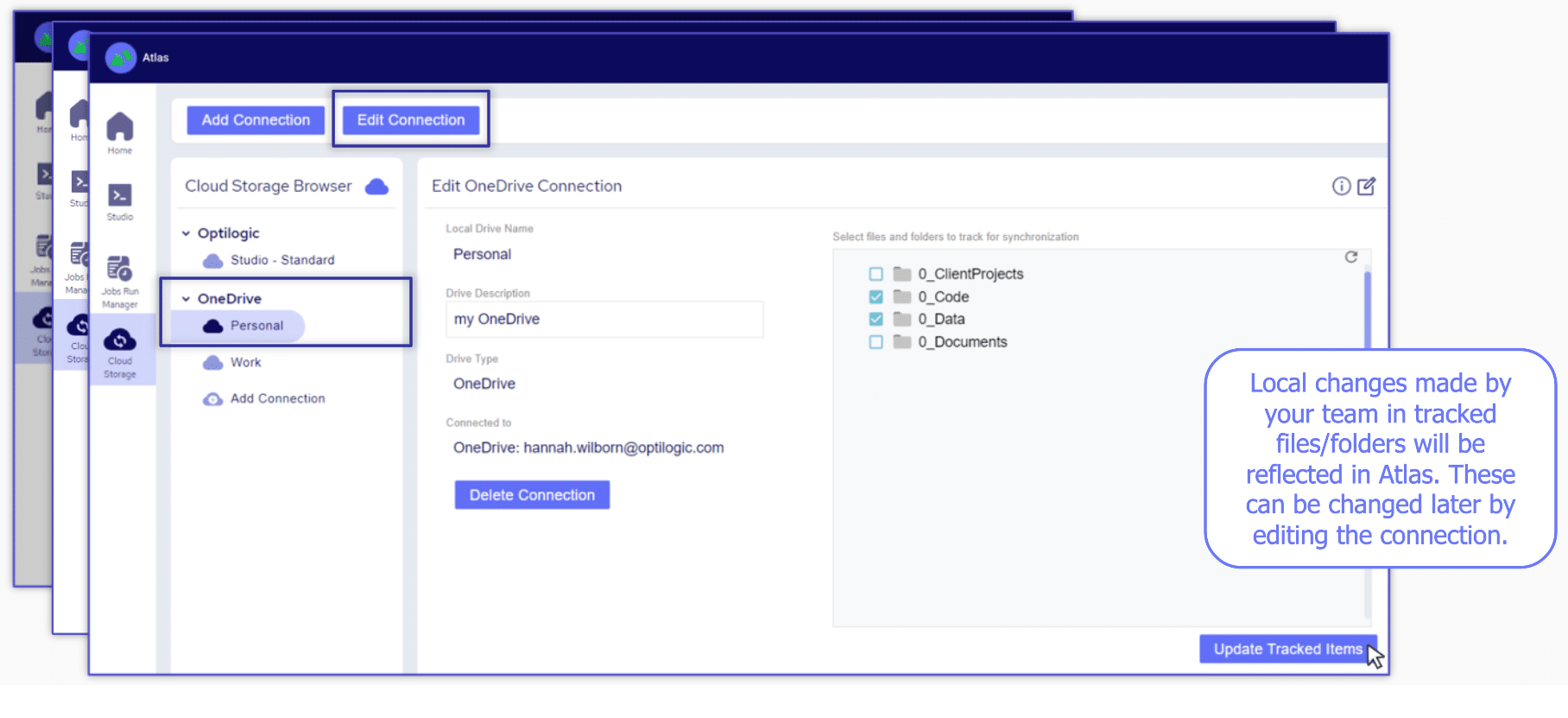

There are four main methods for adding data to Atlas:

1. Drag/Drop

2. Upload tool

3. OneDrive

4. Leverage API code

Before you get started in Cosmic Frog, watch this short video to learn how to navigate the software.

To help familiarize you with our product we have created the following video.

Thank you for using the most powerful supply chain design software in the galaxy (I mean, as far as we know).

To see the highlights of the software please watch the following video.

As you continue to work with the tool you might find yourself asking, what do these engine names mean and how the heck did they come up with them?

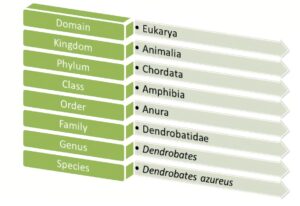

Anura, the name of our data schema, has its roots in biology: Anura is the order to which all frogs and toads belong.

NEO, our optimization engine, takes its name from a suborder of Anura: Neobatrachia. Frogs in this suborder are known to be the most advanced of all the suborders.

THROG, our simulation engine, takes its name from a superhero hybrid that crosses Thor with a frog (yes, this actually exists).

Triad, our greenfield engine, takes its name from the oldest known species of frogs – Triadobatrachus. You can think of it as the starting point for the evolution of all frogs, and it serves as a great starting point for modeling projects too!

Dart, our risk engine, takes its name from the infamous poison dart frog. Just as you would want to be aware of a poisonous frog’s presence, you’ll also want to be sure to evaluate where risks could present themselves.

Dendro, our simulation evolutionary algorithm, takes its name from what is known to be the smartest species of frog – Dendrobates auratus. These frogs have the ability to create complex mental maps to evaluate and better navigate their surroundings.

Hopper, our transportation routing engine, doesn’t have a name rooted in frog-related biology but rather a visual of a frog hopping along from stop to stop just as you might see with a multi-drop transportation route.

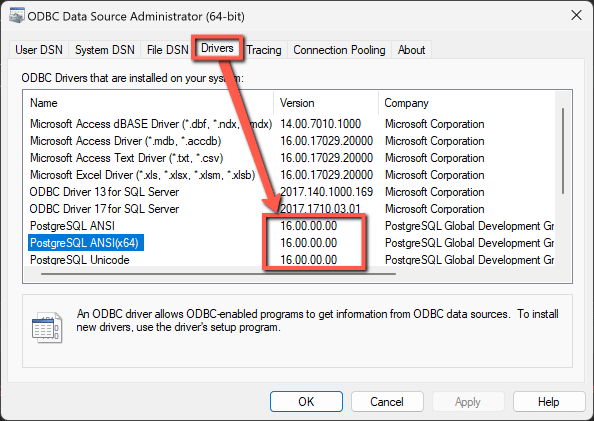

There can be many different causes for an ODBC connection error. This article covers a couple of specific error messages along with their resolution steps.

Resolution: Please make sure that the updated PSQL driver has been installed. This can be checked through your ODBC Data Sources Administrator.

The latest versions of the drivers are located at https://www.postgresql.org/ftp/odbc/releases/. From there, click the latest parent folder, which as of June 20, 2024 is REL-16_00_0005. Select and download the psqlodbc_x64.msi file. When installing, use the default settings in the installation wizard.
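To check from a script which PostgreSQL ODBC drivers are installed, you can use the third-party `pyodbc` package. Its `pyodbc.drivers()` function returns the names of the installed ODBC drivers. The psqlODBC installer typically registers names containing “PostgreSQL” (for example “PostgreSQL Unicode(x64)”), and this sketch relies on that naming convention as an assumption. The sketch only confirms that a driver is present. The driver version is still best checked in the ODBC Data Sources Administrator.

```python
def postgres_odbc_drivers(drivers=None) -> list:
    """Return installed ODBC driver names that look like PostgreSQL (psqlODBC) drivers.

    drivers: optional list of driver names; if omitted, pyodbc.drivers() is queried.
    """
    if drivers is None:
        import pyodbc  # third-party package: pip install pyodbc

        drivers = pyodbc.drivers()
    return [name for name in drivers if "postgresql" in name.lower()]


# Example:
# found = postgres_odbc_drivers()
# print(found or "No PostgreSQL ODBC driver found; install psqlodbc_x64.msi")
```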

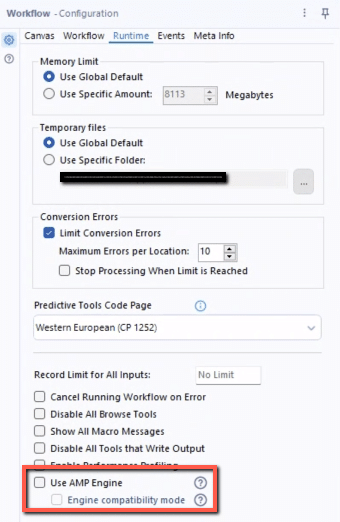

Resolution: Please confirm the PSQL drivers are updated as shown in the previous resolution. If this error is being thrown while running an Alteryx workflow specifically, please disable the AMP Engine for the Alteryx workflow.

Running a model is simple and easy. Just click the run button and watch your Python model execute. Watch the video to learn more.

To leverage the power of hyperscaling, click the “Run as Job” button.

Running a model from Atlas via the SDK requires the following inputs:

Model data can be stored in two common locations:

1. Cosmic Frog model (denoted by a .frog suffix) stored within a PostgreSQL database on the platform

2. .CSV files stored within Atlas

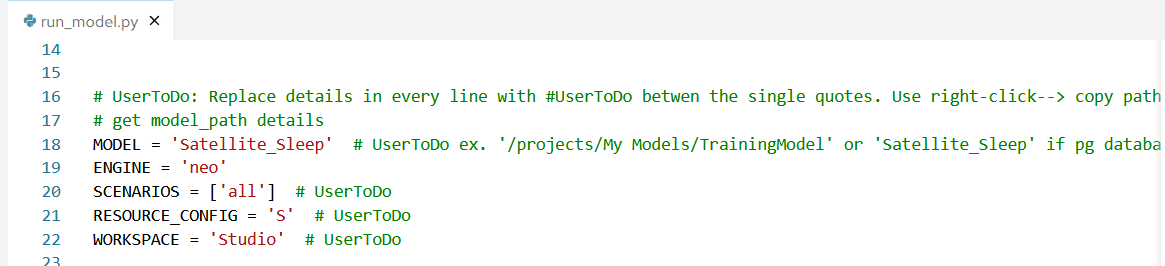

For #1, simply enter the database name within single quotes. For example, to run a model called Satellite_Sleep, enter the following in the run_model.py file:

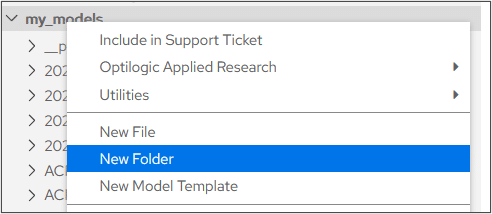

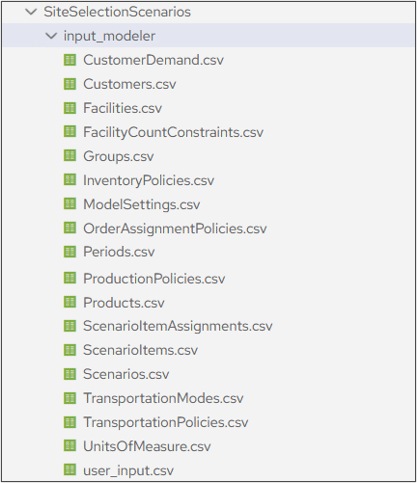

For #2 you will need to create a folder within Atlas to store your .CSV modeling files:

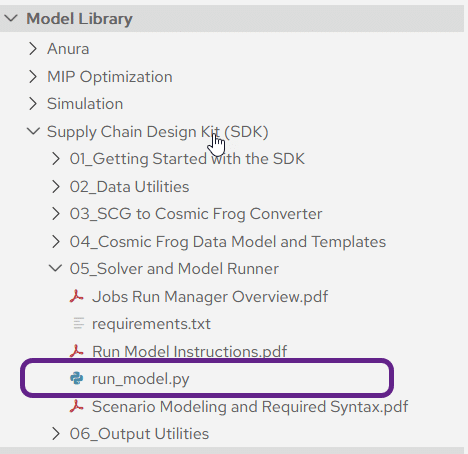

Each Atlas account is loaded with a run_model.py file located within the SDK folder in your Model Library.

Double click the file to open it within Atlas and enter the following data:

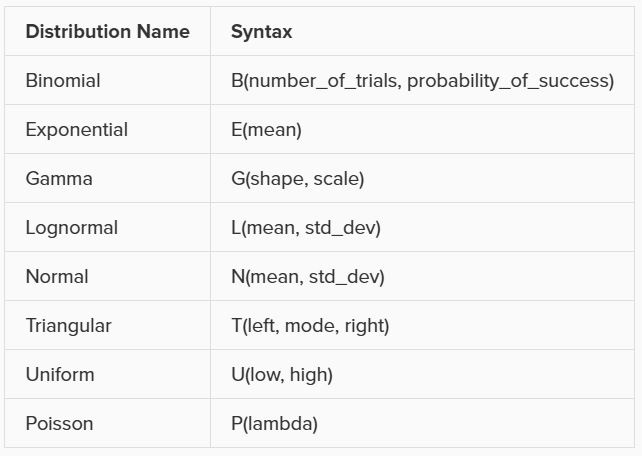

Several input columns used by Throg (Simulation) will accept distributions as inputs. The following distributions, along with their syntax, are currently supported by Throg:

A user-defined Histogram is also supported as an input. Histograms are defined in the Histograms input table; to reference a histogram in an allowed input column, simply enter the Histogram Name.
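As an illustration of what a histogram input means, the following minimal Python sketch draws random values from a discrete histogram of values and probabilities. The value/probability layout and the discrete sampling are assumptions. This section does not describe the columns of the Histograms table or whether Throg interpolates within bins, so treat this as a conceptual sketch, not Throg's implementation.

```python
import random


def sample_histogram(values, probabilities, k=1, rng=None):
    """Draw k samples from a discrete histogram of (value, probability) pairs."""
    if len(values) != len(probabilities):
        raise ValueError("values and probabilities must have the same length")
    if any(p < 0 for p in probabilities) or sum(probabilities) <= 0:
        raise ValueError("probabilities must be non-negative and not all zero")
    rng = rng or random.Random()
    # random.choices normalizes the weights, so they need not sum exactly to 1.
    return rng.choices(values, weights=probabilities, k=k)


# Example: a hypothetical lead-time histogram of 2, 3 or 5 days.
# sample_histogram([2, 3, 5], [0.2, 0.5, 0.3], k=10, rng=random.Random(42))
```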

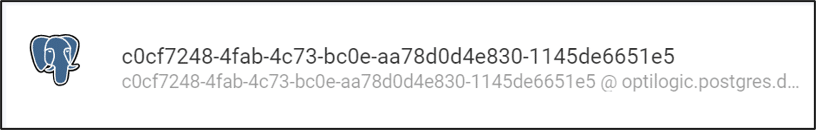

The data for our visualizations comes from our Cosmic Frog model database. This is often stored in the cloud using a unique identifier.

We can decide if we want to use Optilogic servers to do our data computations, or if we want the computations to be performed locally.

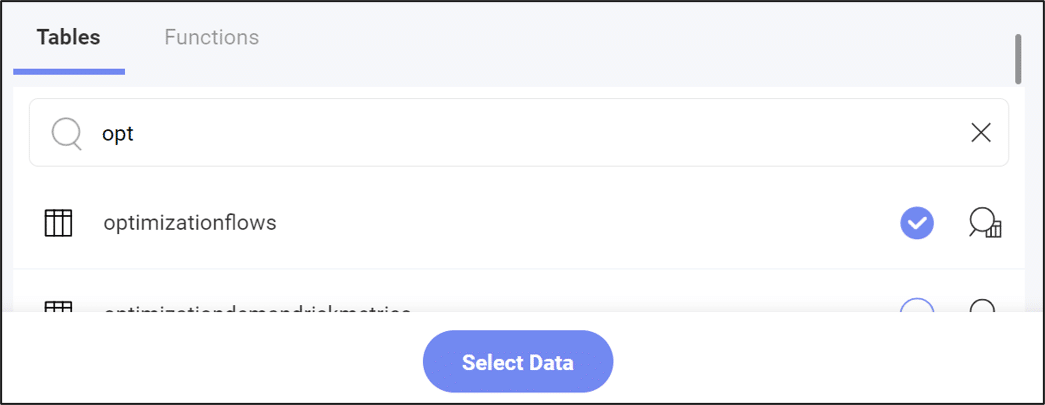

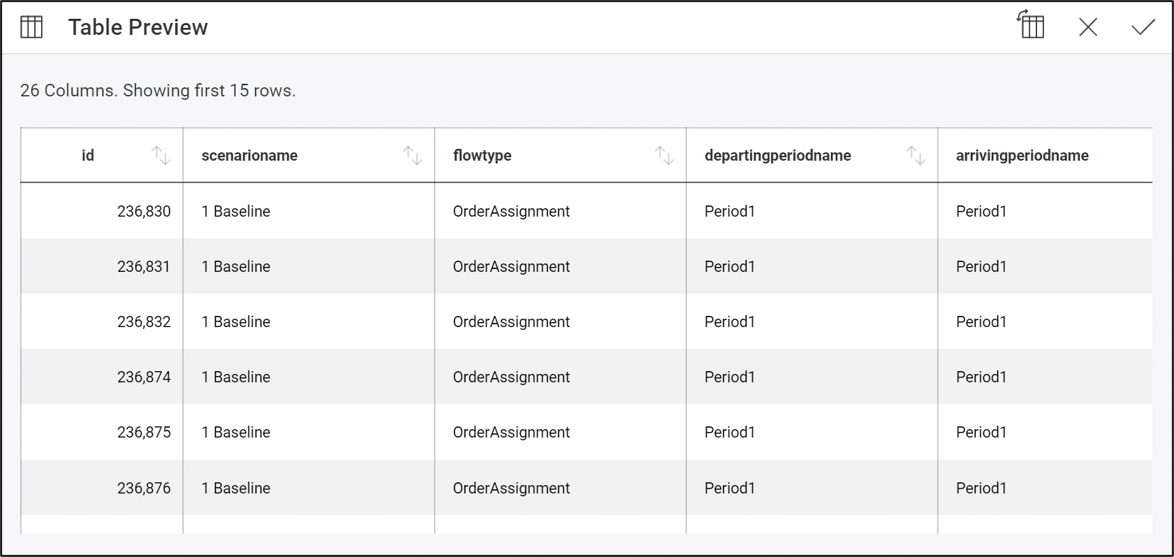

Next, we must decide which table we want to use for our visualization. Generally, the results of running our model are stored in the “optimization” tables, so it can be helpful to search for this term to find output data. However, we can also use tables containing input data if desired.

We can also preview the data in a table using the magnifying glass button:

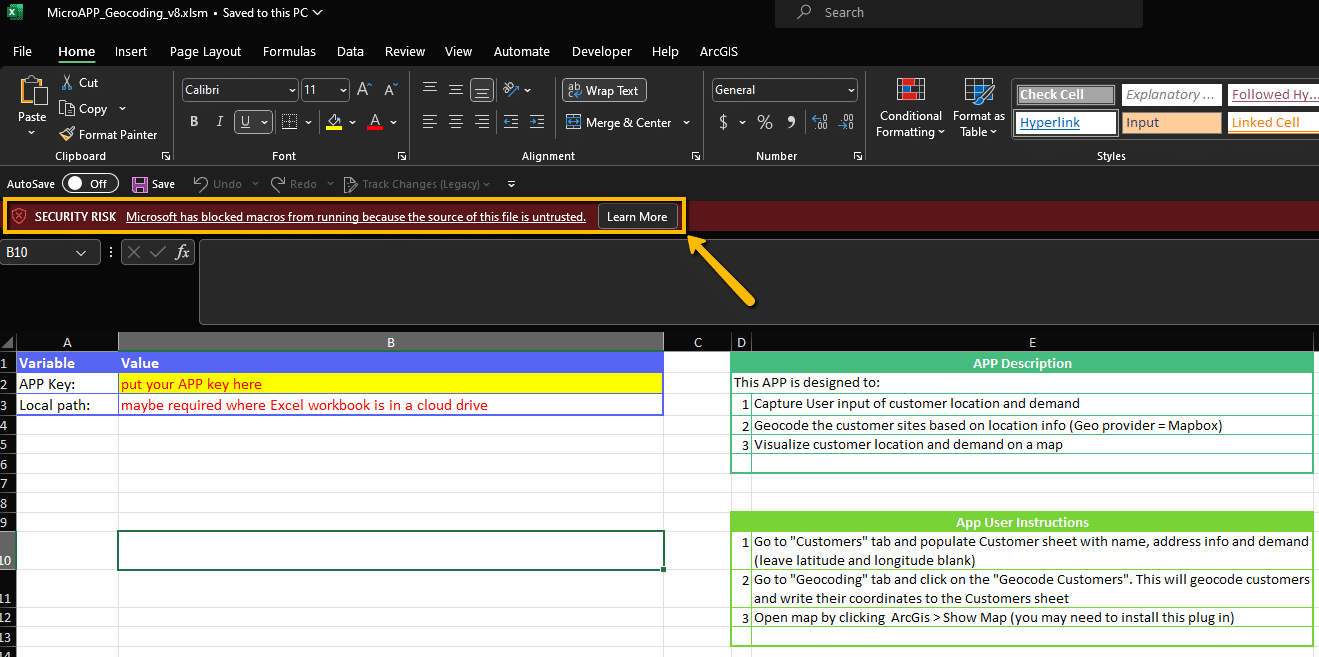

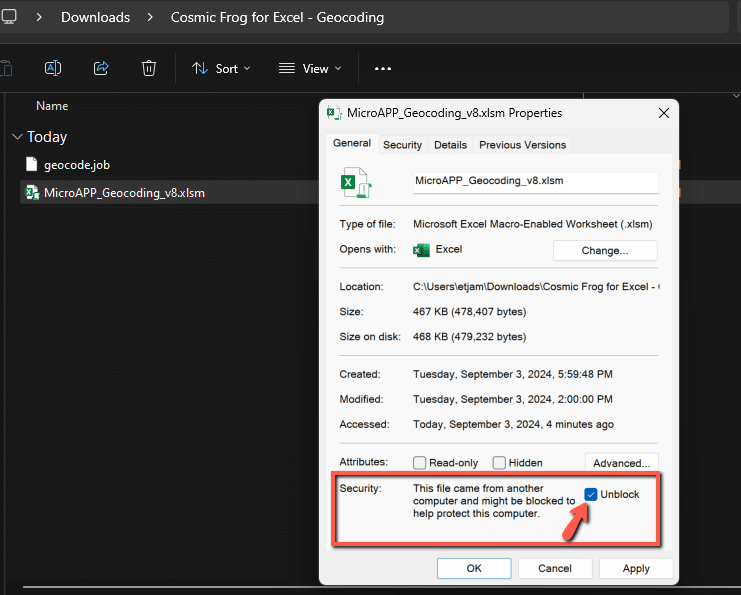

When downloading all of the related files for any of the sample Excel Apps that are posted to our Resource Library, you will likely encounter macro-enabled Excel workbooks (.xlsm) which might have their macros disabled following download.

To enable the macros, right-click the Excel file in File Explorer and select Properties. In the Properties menu, check the Unblock box in the Security section and then click Apply.

You can then re-open the document and should see the macros are now enabled.

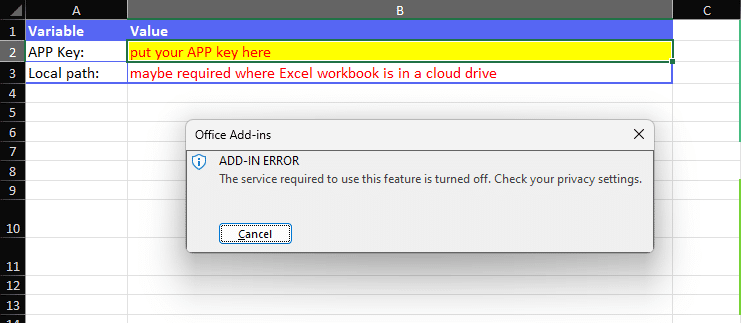

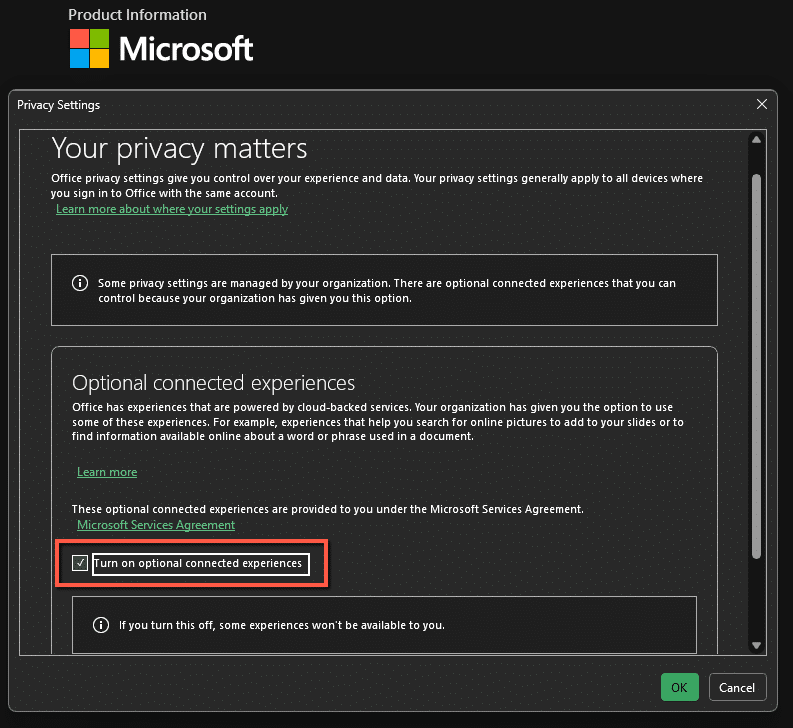

Some of the Excel templates also make use of add-ins to expand the workbook’s capabilities. An example of this is the ArcGIS add-in, which allows map visuals to be created directly in the workbook. These add-ins might be disabled by default in the Microsoft Office settings; if that is the case, you should see the following error message:

This can be resolved by updating your Privacy Settings under your Microsoft Office Account page. To access this menu, click into File > Account > Account Privacy > Manage Settings. You will then want to make sure that in the Optional Connected Experiences, the option to Turn on optional connected experiences is enabled.

Updating this value will require a restart of Microsoft Office. Once the restart is completed, you should see that the add-ins are now enabled and working as expected.

If you run into other issues with any Resource Library template, please do not hesitate to reach out to support@optilogic.com.

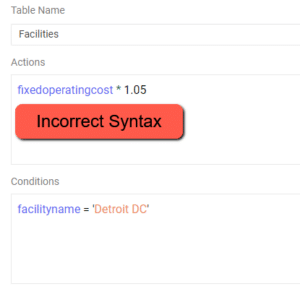

Below is a list of model errors that might appear in the Job Error Log, along with their resolutions.

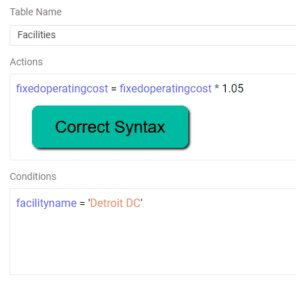

Resolution – Please check whether a scenario item was built with an action that does not reference a column name. Scenario Actions should be of the form ColumnName = …
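When reviewing many scenario items, a quick pattern check can flag actions that do not start with a column name. The following minimal Python sketch is a hypothetical validator, not Cosmic Frog's own parser. It assumes column names are simple identifiers (letters, digits, underscores) and that an action is a single `=` followed by a non-empty expression. The column names in the examples are also hypothetical.

```python
import re

# Hypothetical check: a column name, optional spaces, then a single "=" (not "==").
_ACTION = re.compile(r"^\s*[A-Za-z_][A-Za-z0-9_]*\s*=(?!=)")


def is_valid_action(action: str) -> bool:
    """Return True if the action looks like 'ColumnName = <expression>'."""
    if not _ACTION.match(action):
        return False
    # Require something on the right-hand side of the "=".
    return action.split("=", 1)[1].strip() != ""


# Examples with hypothetical column names:
# is_valid_action("capacity = 500")  -> True
# is_valid_action("= 500")           -> False (no column name)
```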

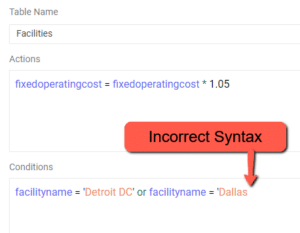

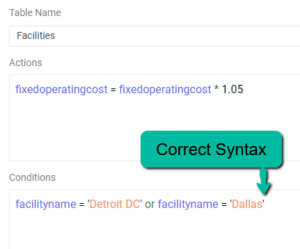

Resolution – Please check whether a string in the scenario condition is missing the apostrophe (single quote) that opens or closes it.
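A missing apostrophe usually leaves an odd number of single quotes in the condition. The following minimal Python sketch flags that case. It assumes standard SQL string literals, in which an apostrophe inside a string is written as two single quotes (`''`), so a well-formed condition always contains an even number of them. The column names in the tests are hypothetical.

```python
def has_unbalanced_quotes(condition: str) -> bool:
    """Return True if the condition appears to contain an unclosed single-quoted string.

    A doubled quote ('') inside a string is an escaped apostrophe, so a
    well-formed condition always contains an even number of quote characters.
    """
    return condition.count("'") % 2 == 1
```

This is a heuristic: an even count does not prove that the quotes are in the right places, but an odd count reliably signals a missing opening or closing quote.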

Resolution – This indicates an intermittent drop in connection when reading / writing to the database. Re-running the scenario should resolve this error. If the error persists, please reach out to support@optilogic.com.

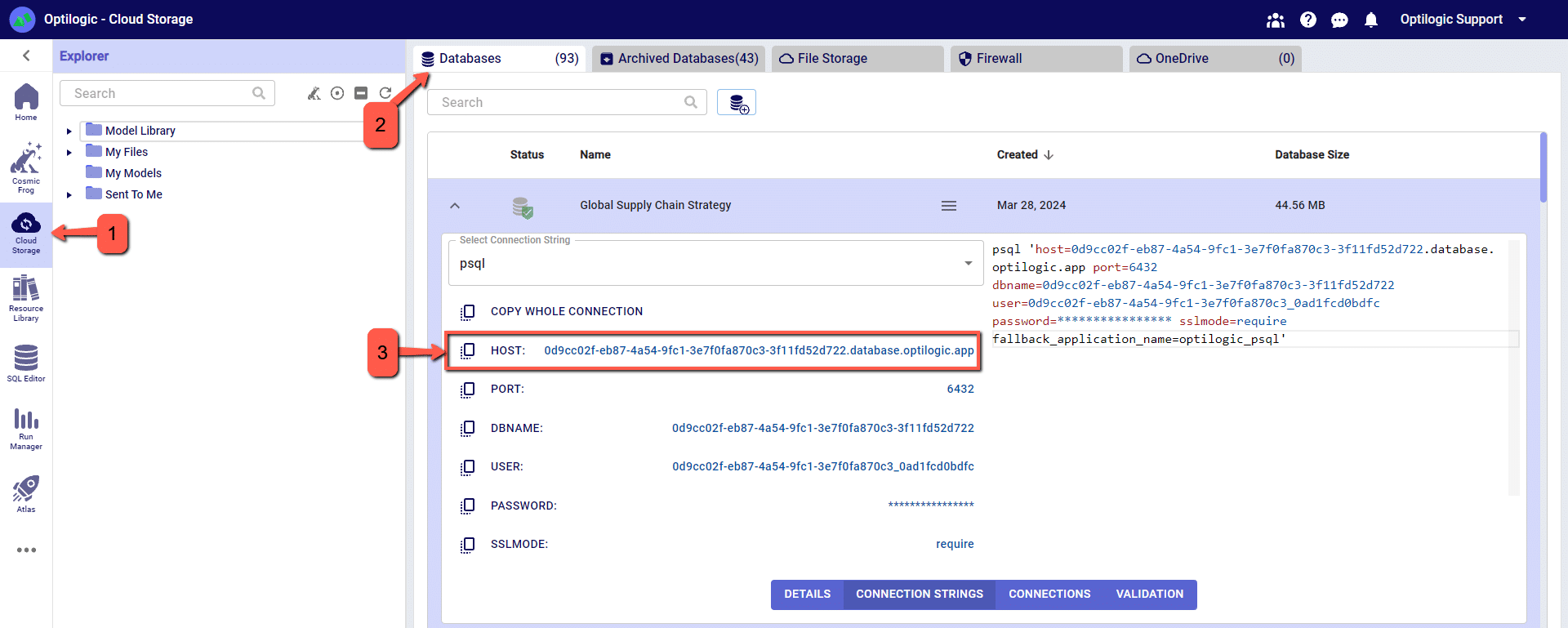

‘Connection Info’ is required when connecting 3rd party tools such as Alteryx, Tableau and Power BI to Cosmic Frog.

Anura version 2.7 makes use of Next-Gen database infrastructure. As a result, connection strings must be updated to maintain connectivity.

Severed connections will produce an error when connecting your 3rd party tool to Cosmic Frog.

Steps to restore connection:

The Cosmic Frog ‘HOST’ value can be copied from the Cloud Storage browser.
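Because Cosmic Frog models are stored in PostgreSQL databases, the same Connection Info values can also be used from a Python script. The following minimal sketch uses the third-party `psycopg2` package and a libpq keyword/value connection string. All values shown are placeholders to copy from your model's Connection Info. The `public` schema is an assumption about where the model tables live.

```python
def build_dsn(**params) -> str:
    """Build a libpq keyword/value connection string, quoting values where needed."""
    parts = []
    for key, value in params.items():
        text = str(value)
        if text == "" or any(ch in text for ch in " '\\"):
            # libpq rule: wrap in single quotes and backslash-escape ' and \.
            text = "'" + text.replace("\\", "\\\\").replace("'", "\\'") + "'"
        parts.append(f"{key}={text}")
    return " ".join(parts)


def list_tables(dsn: str, contains: str = "") -> list:
    """Connect with psycopg2 and return table names containing the given text."""
    import psycopg2  # third-party package: pip install psycopg2-binary

    query = (
        "SELECT table_name FROM information_schema.tables "
        "WHERE table_schema = 'public' AND table_name LIKE %s ORDER BY table_name"
    )
    conn = psycopg2.connect(dsn)
    try:
        with conn.cursor() as cur:
            cur.execute(query, (f"%{contains}%",))
            return [row[0] for row in cur.fetchall()]
    finally:
        conn.close()


# Example (placeholders: copy the real values from Connection Info):
# dsn = build_dsn(host="<HOST>", port="<PORT>", dbname="<DATABASE>",
#                 user="<USER>", password="<PASSWORD>")
# print(list_tables(dsn, contains="optimization"))
```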

Watch the video to learn how to build dashboards to analyze scenarios and tell the stories behind your Cosmic Frog models:

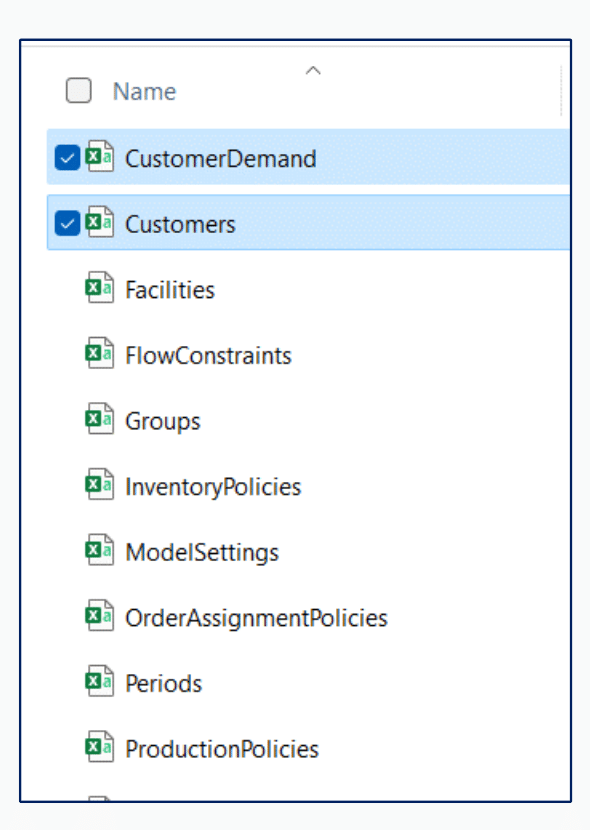

The following instructions begin with the assumption that you have data that you wish to upload to your Cosmic Frog model.

The instructions below assume the user is adding information to the Suppliers table in the model database. This will use the same connection that was previously configured to download data from the Customers table.

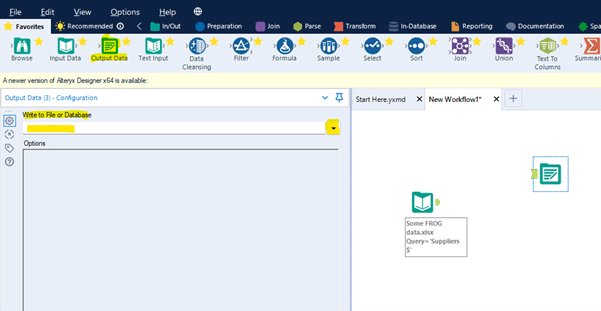

Drag the “Output Data” action into the Workflow and click to select “Write to File or Database”.

Select the relevant ODBC connection established earlier. In this example, we called the connection “Alteryx Demo Model.”

You will be prompted to enter a valid table name to which the data will be written in the model database. In this example, enter suppliers (all lowercase, to match the table name in PostgreSQL, which is case sensitive).

Click OK

Within the Options menu, set “Output Options” to Append Existing in the drop-down list.

Within the Options menu, edit “Append Field Map” by clicking the three dots to see more options.

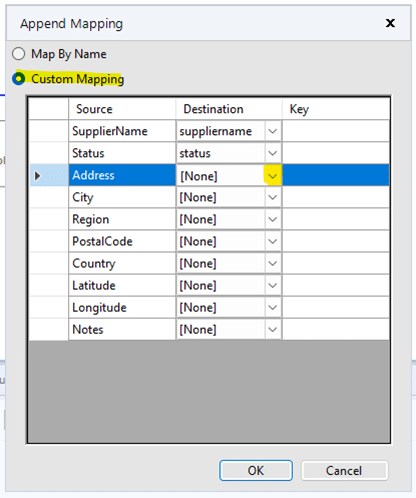

Select the “Custom Mapping” option and then use the drop-down lists to map each field in the Destination column to the Source column. Map fields with the same name to each other, keeping in mind that names are case sensitive because the database is PostgreSQL.

Click OK

Now you can Run the Workflow to upload the data to your model. Once it has completed check the Suppliers table in Cosmic Frog to see the data.
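The case-sensitive mapping step can also be checked outside Alteryx. The following minimal Python sketch matches source fields to destination columns by exact name and builds a parameterized append (INSERT) statement with double-quoted identifiers, which preserve case in PostgreSQL. The `%s` placeholders follow the `psycopg2` parameter style. The column names in the tests are hypothetical, so use the actual columns of your Suppliers table.

```python
def map_fields(source_cols, dest_cols):
    """Map source fields to destination columns by exact, case-sensitive name.

    Returns (mapping, unmapped_source, unfilled_dest).
    """
    dest_set = set(dest_cols)
    mapping = {c: c for c in source_cols if c in dest_set}
    unmapped = [c for c in source_cols if c not in dest_set]
    unfilled = [c for c in dest_cols if c not in mapping]
    return mapping, unmapped, unfilled


def quote_ident(name):
    """Double-quote a PostgreSQL identifier so its exact case is preserved."""
    return '"' + name.replace('"', '""') + '"'


def insert_sql(table, mapping):
    """Build a parameterized INSERT (append) statement for the mapped columns."""
    cols = ", ".join(quote_ident(d) for d in mapping.values())
    placeholders = ", ".join(["%s"] * len(mapping))
    return f"INSERT INTO {quote_ident(table)} ({cols}) VALUES ({placeholders})"
```

Reporting the unmapped source fields is the useful part: a field such as `City` that silently fails to match `city` is exactly the case-sensitivity problem described above.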

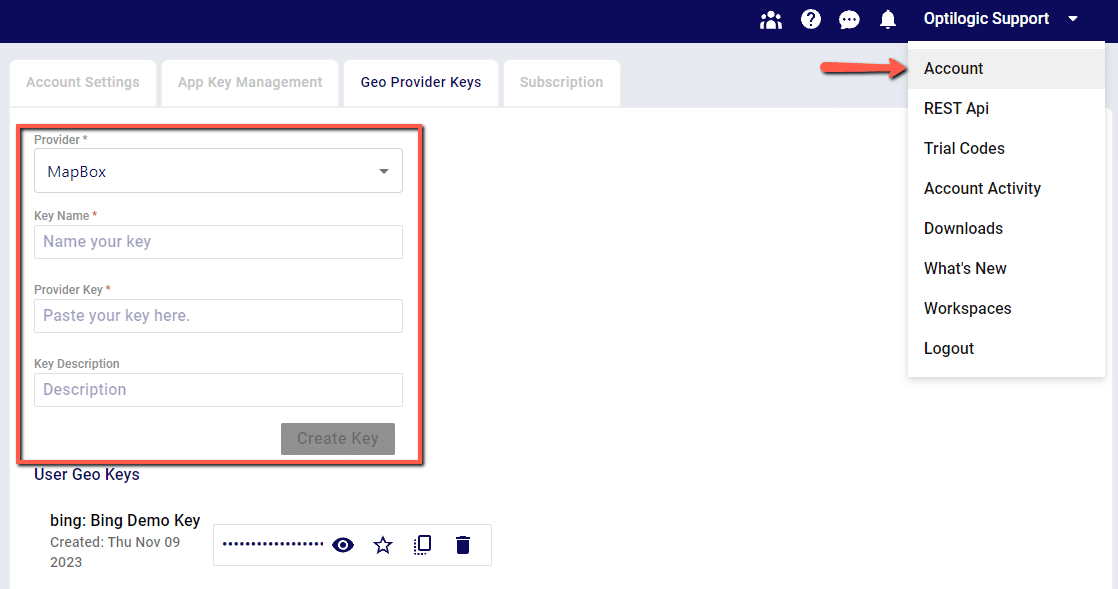

By default, the Geocoding option in Cosmic Frog will use Mapbox as the geodata provider. Other supported providers will be Bing, Google, PTV and PC Miler. If you have an access key for an alternate provider and would like to configure this provider as the default option, follow these two steps:

1. Set up your geocoding key under your Account > Geoprovider Keys page

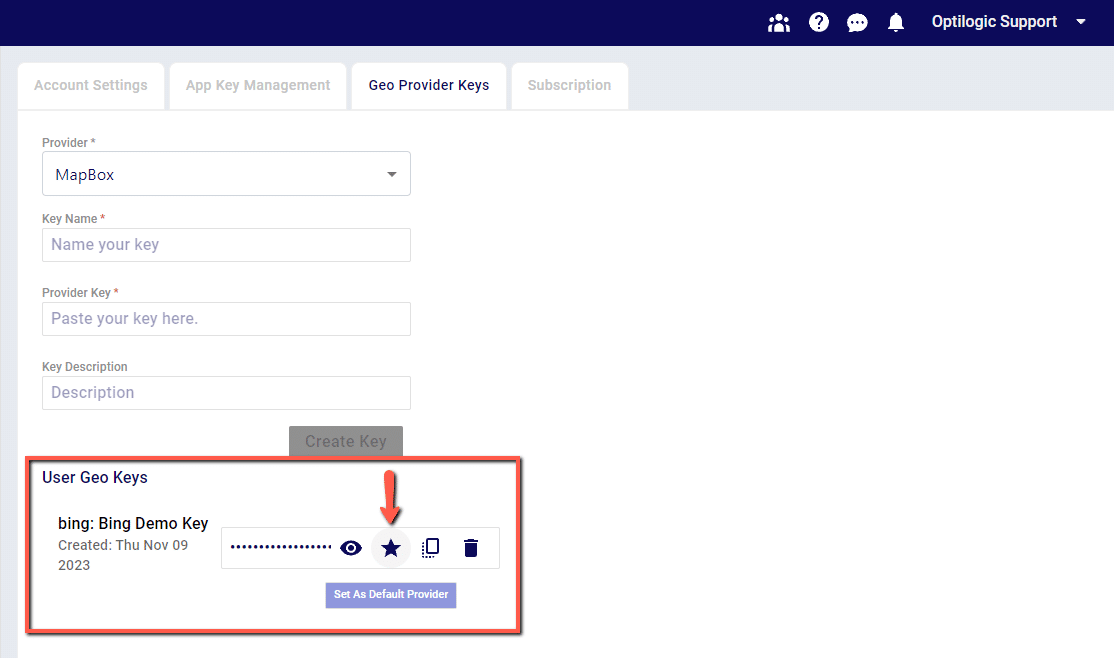

2. Once a new key has been created, set the key as the Default Provider by clicking the Star icon next to the key. This key will now be used for Geocoding in Cosmic Frog.

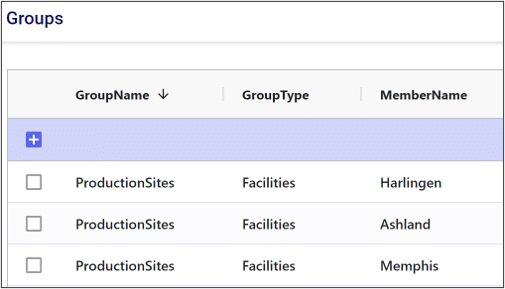

Adding model constraints often requires us to take advantage of Cosmic Frog’s Groups feature. Using the Groups table, we can define groups of model elements. This allows us to reference several individual elements using just the group name.

In the example below, we have added the Harlingen, Ashland, and Memphis production sites to a group named “ProductionSites”.

Now, if we want to write a constraint that applies to all production sites, we only need to write a single constraint referencing the group.

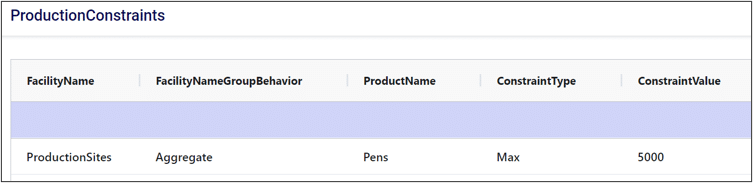

When we reference a group in a constraint, we can choose to either aggregate or enumerate the constraint across the group.

If we aggregate the constraint, that means we want the constraint to apply to some aggregate statistic representing the entire group. For example, if we wanted the total number of pens produced across all production sites to be less than a certain value, we would aggregate this constraint across the group.

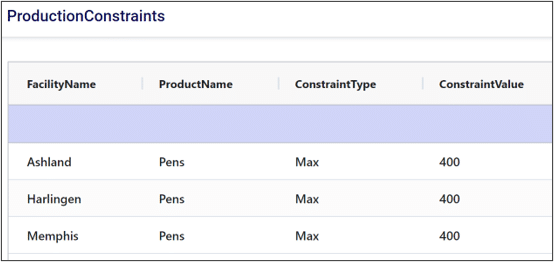

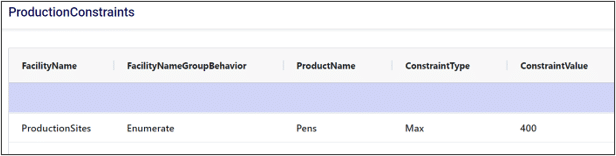

Enumerating a constraint across a group applies the constraint to each individual member of the group. This is useful if we want to avoid writing repetitive constraints. For example, if each production site had a maximum production limit of 400 pens, we could either write a constraint for each site or write a single constraint for the group. Compare the two images below to see how enumeration can help simplify our constraint tables.

Without enumeration:

With enumeration:
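The difference between aggregating and enumerating can be sketched in a few lines of Python. The following function checks a maximum-production constraint against some production quantities. It illustrates the semantics only and is not how NEO builds constraints. The ProductionSites group and the 400-pen limit come from the example above. The production quantities and the aggregate limit are hypothetical.

```python
def check_group_constraint(production, group, limit, mode):
    """Evaluate a 'max production' constraint over a group.

    mode='aggregate': the group's total must not exceed limit (one constraint).
    mode='enumerate': each member must not exceed limit (one constraint per member).
    Returns the violating members, or the group name in aggregate mode.
    """
    if mode == "aggregate":
        total = sum(production.get(site, 0) for site in group["members"])
        return [group["name"]] if total > limit else []
    if mode == "enumerate":
        return [site for site in group["members"] if production.get(site, 0) > limit]
    raise ValueError("mode must be 'aggregate' or 'enumerate'")


group = {"name": "ProductionSites", "members": ["Harlingen", "Ashland", "Memphis"]}
production = {"Harlingen": 350, "Ashland": 420, "Memphis": 300}  # hypothetical quantities
print(check_group_constraint(production, group, 400, "enumerate"))   # ['Ashland']
print(check_group_constraint(production, group, 1000, "aggregate"))  # ['ProductionSites']
```

The same group yields one constraint in aggregate mode (total of 1070 against 1000) but three separate constraints in enumerate mode. That is why enumeration replaces one constraint row per site with a single row.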
